Self-Learning Series Part 4: Explore the Open Design and Platform Architecture

Sun, 19 Nov 2023 14:53:00 -0000

Edge computing presents a unique set of challenges that call for a new architectural approach. Edge computing is a distributed computing paradigm where data processing is performed closer to the data source or "edge" of the network, rather than relying solely on centralized cloud servers.

An open design fosters a culture of innovation and collaboration. It promotes flexibility and a more future-proof approach to edge computing. However, it's essential to carefully evaluate the specific requirements of the edge computing environment and choose the approach that best aligns with the organization's goals and constraints.

In this blog, we will help you understand how to get the most out of edge investments using an open design that works with the software applications, IoT frameworks, multi-vendor operations technology (OT) solutions, and multicloud environments of your choice. This allows you to consolidate technology silos and deliver a consistent management experience across devices, with connectivity out of the box.

A Unique Set of Challenges

When edge computing lacks an open design, it can face several challenges, including:

- Vendor Lock-In—Without open standards and interoperability, organizations may become locked into a specific vendor's proprietary solutions. This limits flexibility, hinders innovation, and leads to higher costs.

- Lack of Ecosystem—A closed system can stifle competition, reducing options and potentially raising prices.

- Security Concerns—Closed, proprietary systems may lack transparency, making it more difficult to assess and improve security.

- Scalability—Scalability is critical for edge computing, as the number of edge devices and their diversity can vary widely. Closed systems are more rigid and make it difficult to scale.

As a result, closed systems may limit the ability of developers and organizations to innovate and create customized edge computing solutions.

What Is Multicloud by Design?

Multicloud by design, also known as a multi-cloud strategy or multi-cloud architecture, is an intentional approach to utilizing multiple cloud service providers for various aspects of an organization's computing needs. In this strategy, a company deliberately chooses to use two or more cloud platforms, such as Amazon Web Services (AWS), Microsoft Azure, or Google Cloud Platform (GCP), to meet specific business requirements.

While multicloud offers numerous benefits, it also introduces complexities in terms of management, orchestration, and security. Organizations need to plan their multi-cloud strategy carefully, including workload placement, data synchronization, network configurations, and security measures, to ensure a successful and efficient implementation. Specialized tools and services designed for managing multi-cloud environments can assist in these efforts.
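Workload placement is one of the planning decisions mentioned above. As an illustration only, the Python sketch below picks the cheapest cloud offering that satisfies a workload's region and GPU constraints. The provider names, regions, and relative costs are hypothetical placeholders, and real placement would also weigh data synchronization, networking, and security.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class CloudOffering:
    """Hypothetical summary of what one provider offers this organization."""
    provider: str
    regions: FrozenSet[str]
    has_gpu: bool
    cost_per_hour: float  # illustrative relative cost, not real pricing


@dataclass(frozen=True)
class Workload:
    name: str
    allowed_regions: FrozenSet[str]  # e.g. a data-residency constraint
    needs_gpu: bool = False


def place(workload: Workload, offerings: List[CloudOffering]) -> Optional[str]:
    """Return the cheapest provider meeting the workload's constraints, or None."""
    candidates = [
        o for o in offerings
        if o.regions & workload.allowed_regions      # at least one permitted region
        and (o.has_gpu or not workload.needs_gpu)    # GPU only matters when required
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda o: o.cost_per_hour).provider


offerings = [
    CloudOffering("cloud-a", frozenset({"eu-west"}), has_gpu=False, cost_per_hour=1.0),
    CloudOffering("cloud-b", frozenset({"eu-west", "us-east"}), has_gpu=True, cost_per_hour=3.0),
    CloudOffering("cloud-c", frozenset({"us-east"}), has_gpu=True, cost_per_hour=2.0),
]
print(place(Workload("analytics", frozenset({"eu-west"})), offerings))                  # cloud-a
print(place(Workload("inference", frozenset({"eu-west"}), needs_gpu=True), offerings))  # cloud-b
```

Returning `None` when nothing fits makes an unplaceable workload an explicit decision point rather than a silent default.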

A New Way of Architecting

Built on an open design, Dell NativeEdge offers the flexibility to choose the ISV applications and cloud environments for your edge application workloads. You can centrally and consistently deploy containerized and virtual applications using blueprints that work with your choice of IoT frameworks and OT vendors. Like everything else from Dell, NativeEdge is multicloud by design, enabling you to deploy applications across any new or existing environment.

Here are a few advantages of using an open design system:

- Flexibility—Open architectures allow organizations to choose from a variety of hardware, software, and services. This flexibility is particularly important in the dynamic edge computing environment, where the diversity of devices and use cases can vary.

- Avoiding Vendor Lock-In—With open designs, organizations are less likely to become locked into a single vendor's proprietary solutions. This reduces the risks associated with vendor dependency and enables businesses to switch or integrate different technologies more easily.

- Cost-Effectiveness—Open design often leads to cost-effective solutions. Open-source software and standards can reduce licensing fees and minimize the need for expensive proprietary hardware, helping organizations optimize their budgets.

- Scalability—Open architectures are typically designed with scalability in mind, making it easier to expand edge computing solutions as requirements grow or change.

- Security and Transparency—Open-source projects are transparent, allowing users to inspect the source code for security vulnerabilities. Community review and contributions help identify and address security issues promptly.

- Ecosystem Growth—An open design fosters a broader ecosystem of complementary software and hardware solutions, enhancing the availability of tools and services that can be integrated into the edge computing environment.

Edge Partner Ecosystem

We are working with partners to co-engineer and develop solutions that include software, partner intellectual property, products, and services. Dell also has some of the biggest, longest-standing partnerships in the industry with companies like Microsoft, Intel, and VMware.

When market-leading companies team up to create and offer validated, proven reference architectures, we can help you mitigate risk and accelerate your time to revenue.

For example, with NativeEdge, the Dell Validated Design for Manufacturing Edge using Telit Cinterion can be implemented and brought to market more quickly, allowing for faster and more secure deployment, lower costs, and more reliable, repeatable outcomes based on the blueprints implemented. This allows for:

- Quicker data collection and analysis when deployed on-premises

- Increased integration of information from existing assets across all NativeEdge-enabled Devices

- Simpler configuration

- Simplified connection of devices

By removing the complexity of deployment and adding the element of application-level lifecycle management, NativeEdge reduces the amount of physical touch required and creates a repeatable deployment process at scale.

Dell Technologies will continue to foster partnerships to develop open software that enables interoperability and ease of operations while avoiding being locked into expensive, proprietary technologies that limit your ability to innovate and create. For more information, visit our Edge Ecosystem.

Watch the following video: Power management company optimizes edge investments for success

Conclusion

Make the most of edge investments by using an open design that works with software applications, IoT frameworks, multi-vendor operations technology solutions, and multicloud environments. This enables you to deploy applications across new or existing environments. NativeEdge will support each edge use case with an open design that works with your choice of software applications, IoT frameworks, OT vendor solutions, and multicloud environments.

Dell Technologies is going to enable its existing strong edge ecosystem of partners to leverage the open, vendor-agnostic design, allowing customers to optimize their edge investment. This way, we can put the customer in the driver’s seat to control their edge.

To learn more about how to simplify edge operations at scale, click here to see an interactive flip-book.

Additional Resources

To learn more about NativeEdge Application Orchestration, click on the following links:

This blog is a part of a self-learning series. For more information on NativeEdge, go to:

Related Blog Posts

Unlocking the Power of AI-Assisted DevEdgeOps Automation

Wed, 27 Mar 2024 18:13:00 -0000

In today's digital landscape, the expansion of edge computing has transformed how data is processed and managed. However, this evolution brings the challenge of managing and maintaining numerous edge deployments efficiently. DevEdgeOps is a shift-left approach that moves operational tasks to an earlier stage of the development cycle. This approach facilitates collaboration between IT and OT, streamlining edge operations. By integrating AI-assisted techniques into DevEdgeOps practices, organizations can unlock a range of benefits, from increased productivity to improved operational efficiency.

“The automation process using infrastructure as code (IaC) is complex, especially when it comes to highly distributed edge environments. It must be balanced with the requirements of edge operating environments which are different than IT. Gen AI and Copilot-based edge automation development tools can reduce the development process of that automation code and help to meet the requirements of edge operations workloads,” says Nati Shalom, Fellow at Dell NativeEdge, introducing the topic in his blog Edge-AI trends in 2024.

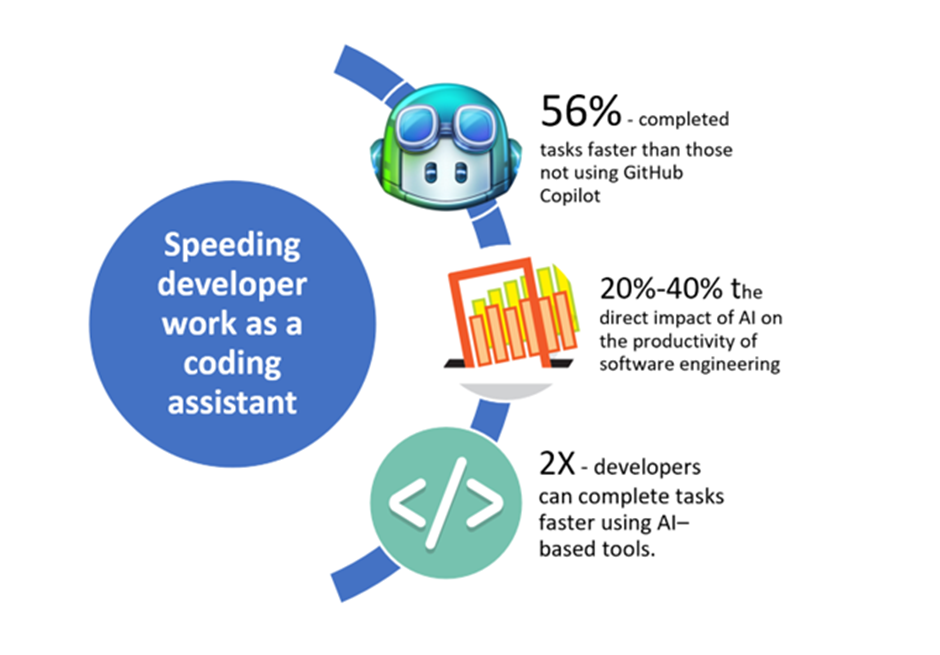

A recent McKinsey study indicates a potential productivity improvement of up to 56 percent (Figure 1). DevEdgeOps advocates reducing that complexity with a shift-left approach, in which production issues are identified earlier, during the development phase.

Figure 1. The benefits of using Gen AI as a coding assistant (Copilot) (Source: McKinsey)
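Shift-left in practice often means running checks that would otherwise fail in production as part of the development pipeline. The Python sketch below is a hypothetical example: it validates a deployment configuration against the memory, CPU, and architecture of a target edge device before anything ships. The config layout and the `DeviceProfile` fields are assumptions for illustration, not a NativeEdge format.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical capacity of one class of edge device."""
    name: str
    memory_mb: int
    cpu_cores: float
    arch: str


def validate(config: dict, device: DeviceProfile) -> List[str]:
    """Return a list of problems; an empty list means the config passes."""
    containers = config.get("containers", [])
    problems = []
    total_mem = sum(c.get("memory_mb", 0) for c in containers)
    total_cpu = sum(c.get("cpu_cores", 0) for c in containers)
    if total_mem > device.memory_mb:
        problems.append(f"memory {total_mem} MB exceeds {device.name} limit of {device.memory_mb} MB")
    if total_cpu > device.cpu_cores:
        problems.append(f"CPU {total_cpu} cores exceeds {device.name} limit of {device.cpu_cores}")
    for c in containers:
        # An image built for the wrong architecture would otherwise fail only after deployment.
        if c.get("arch", device.arch) != device.arch:
            problems.append(f"{c['name']}: image built for {c['arch']}, device is {device.arch}")
    return problems


gateway = DeviceProfile("gateway", memory_mb=1024, cpu_cores=2, arch="arm64")
config = {
    "containers": [
        {"name": "collector", "memory_mb": 512, "cpu_cores": 1, "arch": "arm64"},
        {"name": "analytics", "memory_mb": 1024, "cpu_cores": 1, "arch": "amd64"},
    ]
}
for problem in validate(config, gateway):
    print(problem)
```

In a CI job, a non-empty result would fail the build, so the overcommitted memory and the mismatched image surface during development rather than on a remote device.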

Simplifying Complex Tasks with Automation

One of the primary advantages of leveraging AI in DevEdgeOps is the ability to automate and optimize complex tasks associated with edge operations. Traditional methods of managing edge environments often involve manual interventions, which are time-consuming and error-prone. AI-powered automation tools can streamline these processes by intelligently analyzing data patterns, predicting potential issues, and automating corrective actions. This reduces the burden on IT teams, minimizes the risk of downtime, and improves system reliability.
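To make the pattern of detecting an issue and triggering a corrective action concrete, here is a deliberately simple Python sketch. It uses a rolling z-score, a basic statistical test rather than a machine-learning model, to flag a sensor reading that deviates sharply from recent history and then calls a corrective-action hook. The window size, threshold, and the hook itself are hypothetical choices for illustration.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable


class ZScoreMonitor:
    """Flags readings far from a rolling baseline and triggers a corrective action."""

    def __init__(self, window: int, threshold: float, on_anomaly: Callable[[float], None]):
        self.readings = deque(maxlen=window)  # rolling baseline of normal readings
        self.threshold = threshold            # standard deviations that count as anomalous
        self.on_anomaly = on_anomaly          # hypothetical hook, e.g. restart a service

    def observe(self, value: float) -> bool:
        anomalous = False
        if len(self.readings) == self.readings.maxlen:  # wait until the baseline is full
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
                self.on_anomaly(value)
        if not anomalous:
            self.readings.append(value)  # keep anomalies out of the baseline
        return anomalous


actions = []
monitor = ZScoreMonitor(window=5, threshold=3.0, on_anomaly=actions.append)
for temperature in [50, 51, 49, 50, 50, 50.5, 58]:
    monitor.observe(temperature)
print(actions)
```

Only the jump to 58 is flagged; the small drift to 50.5 stays within the baseline. Production systems would typically replace the z-score with a trained model, but the detect-then-act loop is the same.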

A case in point is Rachel Shalom from the NativeEdge team, a recent winner of the Dell Hackathon. In her article DevOps Made Easy with Gen AI, she explores the integration of Gen AI into DevOps practices to simplify and optimize the development process. By leveraging Gen AI's capabilities, developers can automate tasks such as code generation, testing, and deployment, reducing time-to-market and enhancing efficiency. Through a real-world example, Rachel illustrates how Gen AI streamlines DevOps workflows, empowers teams to focus on innovation, and fosters collaboration between development and operations teams.

Regarding data and modeling insights, Rachel writes “You might be wondering, why not use Copilot or a commercial GPT for queries right off the bat? We gave that a shot, but it fell short of our specific need to generate configuration files. This was mainly because our proprietary internal data was not familiar to the model, leading us to the necessity of fine-tuning with a private GPT-3.5.”
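The quote does not describe how the training data was prepared. As a hedged sketch, assuming the fine-tuning used OpenAI's chat-format JSONL (one JSON object with a `messages` list per line, the format OpenAI documents for fine-tuning GPT-3.5 Turbo), internal request and configuration pairs might be turned into training examples as below. The system prompt and the sample pair are hypothetical; uploading the file and starting the job are not shown.

```python
import json
from typing import Iterable, Tuple

# Hypothetical instruction; a real one would describe the internal config schema.
SYSTEM_PROMPT = "You write deployment configuration files for our internal edge platform."


def to_chat_example(request: str, config_text: str) -> dict:
    """One training example: the user's request and the config we want back."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": request},
            {"role": "assistant", "content": config_text},
        ]
    }


def to_jsonl(pairs: Iterable[Tuple[str, str]]) -> str:
    """Serialize (request, config) pairs as JSON Lines, one example per line."""
    return "".join(json.dumps(to_chat_example(req, cfg)) + "\n" for req, cfg in pairs)


pairs = [
    ("Deploy the sensor collector on all line-3 gateways",
     "app: sensor-collector\ntargets:\n  site: line-3\n  role: gateway\n"),
]
print(to_jsonl(pairs), end="")
```

The assistant turn carries the exact configuration text the model should learn to produce, which is where proprietary internal data enters the model.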

A Proactive Approach to Edge Management

AI-assisted DevEdgeOps enables organizations to adopt a proactive approach to edge management. By leveraging predictive analytics and machine learning algorithms, businesses can anticipate and prevent potential issues before they escalate into critical failures. This proactive approach enhances system resilience and enables organizations to allocate resources more effectively, which optimizes operational costs.

Rapid Development and Deployment

AI-driven DevEdgeOps facilitates rapid development and deployment of edge applications. Traditional development processes often struggle to keep pace with the dynamic nature of edge computing, resulting in delays and inefficiencies. By harnessing Gen AI capabilities such as Copilot-based development tools, organizations can accelerate the development life cycle, reduce time-to-market, and stay ahead of the competition. This enhances agility and allows businesses to capitalize on emerging opportunities more effectively.

Reducing Costs with Automation

In addition to operational benefits, AI-assisted DevEdgeOps can also drive significant cost savings for organizations. The McKinsey study referenced earlier highlights the potential for up to a 56 percent improvement in productivity through the adoption of AI-driven automation. By automating repetitive tasks, optimizing resource utilization, and minimizing downtime, businesses can achieve substantial cost reductions while maximizing the return on investment (ROI) of their edge investments.

Furthermore, AI-assisted DevEdgeOps fosters innovation by empowering organizations to focus on value-added activities rather than repetitive, mundane operational tasks. By automating routine maintenance, troubleshooting, and provisioning activities, IT teams can devote more time and resources to innovation-driven initiatives that drive business growth and competitive advantage. This enhances organizational agility and fosters a culture of continuous improvement and innovation.

Conclusion

The benefits of automating edge operations with AI-assisted DevEdgeOps are undeniable. By leveraging AI capabilities to streamline processes, enhance proactive management, accelerate development, and drive cost savings, organizations can unlock the full potential of their edge deployments. As the digital landscape continues to evolve, embracing AI-driven automation in DevEdgeOps will be essential for organizations looking to stay competitive, agile, and resilient in the face of ever-changing demands.

Self-Learning Series Part 3: Using Automation to Scale and Streamline Operations

Sun, 05 Nov 2023 12:54:00 -0000

Edge devices offer businesses across industries the opportunity to elevate their operations in an unprecedented way. Each edge device that is added to operations comes with multiple management challenges.

The two main challenges businesses constantly need to address are:

- The resources needed to deploy edge devices are not always readily available

- The time needed to manage edge devices is not always feasible

If the purpose of these edge devices is to deliver data and improve efficiency, the platform that manages them should match these goals.

Additionally, the struggle to keep IT and OT functioning seamlessly is compounded by the need for edge devices to be deployed, monitored, and updated without creating bottlenecks or unnecessary repetitive tasks.

Managing these distributed systems, especially in locations that don’t have technical personnel, must be simple, scalable, and easily repeatable. Systems must be fundamentally zero-touch once plugged in and powered on.

Therefore, eliminating operational complexity at scale requires a centralized management platform that provides zero-touch deployment and onboarding, along with automated operations for infrastructure and applications from edge to multicloud.

Dell NativeEdge is the edge operations software platform that will help enterprises simplify their edge environments by automating edge operations and enforcing zero trust security.

This blog explores how automation with NativeEdge helps simplify the operational processes, allowing for OT and IT to streamline tasks and increase edge device efficiency.

Imagine these possibilities with automation:

- What if you could consolidate all siloed solutions and make it easier to manage and scale them using consistent, repeatable processes?

- What if you could set up security controls across the edge one time, then enforce them automatically without IT intervention whenever you deploy more applications and devices?

- What if you could orchestrate all your applications, third-party or home-grown, from a single catalog, across any number of devices or locations, using blueprint templates?

- As your edge infrastructure expands, what if you could deploy and provision new devices automatically with all the required workloads?

- What if you could also push out patches and upgrades consistently and at scale?

Dell NativeEdge makes all these possible.
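The onboarding scenario in the list above, where security controls are defined once and then applied automatically to every new device along with its required workloads, can be sketched in a few lines of Python. This is a hypothetical model, not NativeEdge's implementation: `Controller`, `SiteProfile`, and the serial-number ownership check are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class SiteProfile:
    """Defined once by IT, then applied to every device that joins the site."""
    security_policy: Dict[str, str]
    workloads: List[str]


@dataclass
class Controller:
    owned_serials: Set[str]           # hypothetical record of devices the organization owns
    profiles: Dict[str, SiteProfile]  # site name -> profile
    onboarded: Dict[str, str] = field(default_factory=dict)  # serial -> site

    def onboard(self, serial: str, site: str) -> SiteProfile:
        """Called when a device powers on and contacts the controller; no on-site IT needed."""
        if serial not in self.owned_serials:
            raise PermissionError(f"unknown device {serial}; refusing to onboard")
        if site not in self.profiles:
            raise KeyError(f"no profile defined for site {site}")
        self.onboarded[serial] = site
        return self.profiles[site]  # the device applies this policy and pulls these workloads


controller = Controller(
    owned_serials={"SN-1001", "SN-1002"},
    profiles={"store-12": SiteProfile({"firewall": "deny-inbound", "disk": "encrypted"},
                                      ["pos-gateway", "inventory-sync"])},
)
profile = controller.onboard("SN-1001", "store-12")
print(profile.workloads)
```

Adding a device then means adding its serial to the ownership record; the policy and workloads come from the site profile rather than from a technician.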

By automating routine and repetitive tasks like onboarding devices, orchestrating application workloads, and managing them at scale, the NativeEdge platform can make application lifecycle management at the edge 22 times faster than current processes, recent analysis suggests.¹ This means a large-scale edge implementation that may take 100 or more hours to deploy could be completed in under five hours with Dell NativeEdge.
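The arithmetic behind the five-hour figure, taking a 100-hour deployment and applying the stated 22x speedup:

```latex
t_{\text{NativeEdge}} = \frac{t_{\text{manual}}}{22} = \frac{100\ \text{h}}{22} \approx 4.5\ \text{h} < 5\ \text{h}
```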

Automation’s Impact on Lifecycle Management

Reduce Human Intervention

NativeEdge dramatically simplifies operations through deeply integrated automation processes to streamline edge deployment and operations at scale without relying on IT expertise in the field. NativeEdge does so with centralized management, zero-touch deployment and onboarding, and automated operations.

Self-Service Provisioning

Automating the provisioning and deployment processes enables developers to request and access the necessary resources and environments without relying on manual intervention from IT operations. This self-service approach accelerates development cycles and reduces the time required to set up and configure new environments.

Achieve Faster and More Reliable Software Delivery

Creating tools and workflows that automate tasks like deployment, testing, and monitoring helps reduce human intervention and ensures consistent and error-free processes. This aligns closely with the principles of DevOps implementation, where development and operations teams collaborate closely to achieve faster and more reliable software or hardware delivery.

Simplified Operations

Through the Dell NativeEdge platform, automation simplifies edge operations by providing centralized control and management of distributed edge devices and infrastructure. This simplification leads to increased operational efficiency, reduced manual intervention, improved reliability, and better utilization of edge resources. These advantages are particularly crucial in edge computing scenarios, where resources are distributed across various remote locations and need to function reliably and with minimal human intervention.

Leveraging Infrastructure as Code (IaC)

Imagine you have edge devices on a fleet of boats. Without automation, updating the application version would mean sending a DevOps specialist to each boat, which would take ages and drive costs up dramatically. Taking a step back, if something is wrong with an edge device, how long would it take to diagnose the problem, and how long would it take to repair it so the device is up and running properly?

NativeEdge leverages IaC to automate application provisioning, deployment, and lifecycle management on NativeEdge-enabled Devices as well as on other infrastructure with virtualized or containerized environments.

To understand how we can leverage IaC, let’s make sure we understand some basic terminology:

- Infrastructure as code (IaC): The managing and provisioning of infrastructure through code instead of through a manual process. With IaC, you create configuration files that contain your infrastructure specifications, which makes configurations easier to edit and distribute.

- Blueprint: A set of documented best practices, guidelines, and processes for implementing DevOps principles within an organization. In the context of automation, a blueprint can be a valuable tool to facilitate the design, implementation, and management of automated processes.
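At its core, IaC works by declaring the desired state and letting tooling compute the changes needed to reach it, similar in spirit to the plan-then-apply workflow of many IaC tools. The Python sketch below illustrates that idea for the applications on a single edge device; the app names and versions are hypothetical, and real tools also track infrastructure resources, dependencies, and ordering.

```python
from typing import Dict, List, Optional, Tuple

Action = Tuple[str, str, Optional[str]]  # (verb, app name, target version)


def plan(desired: Dict[str, str], actual: Dict[str, str]) -> List[Action]:
    """Compute the changes that turn `actual` (app -> version) into `desired`."""
    actions: List[Action] = []
    for app in sorted(desired):
        if app not in actual:
            actions.append(("install", app, desired[app]))
        elif actual[app] != desired[app]:
            actions.append(("upgrade", app, desired[app]))
    for app in sorted(actual):
        if app not in desired:
            actions.append(("remove", app, None))
    return actions


desired = {"collector": "2.1", "dashboard": "1.0"}    # declared in a config file
actual = {"collector": "2.0", "legacy-agent": "0.9"}  # reported by the device
for action in plan(desired, actual):
    print(action)
```

Because the plan is derived from the declaration, running it against a device that already matches returns an empty list, which is what makes repeated IaC runs safe.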

Using blueprints is a powerful way to streamline infrastructure and application deployment, ensure consistency, and reduce the risk of errors in your software development and deployment process.

A common approach for creating and managing blueprints is IaC. For example, you could use Ansible for infrastructure provisioning and configuration management tools like Puppet or Chef for application configuration.

Returning to our example of updating an application on devices installed across a fleet of boats: you can leverage automation with Dell NativeEdge, and blueprints can facilitate the process. There are two routes to create blueprints:

- Internally write code or configuration files that define your blueprint. This code should specify how to set up and configure infrastructure components (servers, databases, load balancers) and application components (web servers, microservices, databases), and then upload it to NativeEdge.

- Alternatively, you can use the NativeEdge catalog, which includes ready-to-use blueprints provided by Dell or written by independent software vendors (ISVs).

Note: Components of a blueprint can often be reused in various contexts. For example, you can use the same blueprint to deploy similar microservices in different parts of your application.
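The reuse described in the note can be pictured as a template with named inputs. The Python sketch below is a hypothetical stand-in for a blueprint, not NativeEdge's actual blueprint format: one `Blueprint` is rendered twice with different inputs to produce deployment specs for two microservices. It uses the standard library's `string.Template`, so a missing input raises a `KeyError` instead of producing a half-filled spec.

```python
from dataclasses import dataclass
from string import Template
from typing import Any, Dict


@dataclass(frozen=True)
class Blueprint:
    """Hypothetical reusable template: fixed structure plus $placeholders for inputs."""
    name: str
    template: Dict[str, Any]

    def render(self, **inputs: str) -> Dict[str, Any]:
        def fill(value: Any) -> Any:
            if isinstance(value, str):
                return Template(value).substitute(inputs)  # KeyError if an input is missing
            if isinstance(value, dict):
                return {k: fill(v) for k, v in value.items()}
            if isinstance(value, list):
                return [fill(v) for v in value]
            return value  # numbers, booleans, etc. pass through unchanged
        return fill(self.template)


microservice = Blueprint(
    name="microservice",
    template={
        "image": "registry.example.com/$service:$version",
        "replicas": 1,
        "env": {"SERVICE_NAME": "$service"},
    },
)

orders = microservice.render(service="orders", version="1.4")
payments = microservice.render(service="payments", version="2.0")
print(orders["image"], payments["image"])
```

The structure is written once; only the inputs differ per service, which is what keeps deployments consistent.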

Once you choose the blueprint you would like to use, NativeEdge lets you deploy the updated application to all the devices running the old version across the entire fleet of boats with just a few clicks. You don’t need to know how to create the VM recipe, how to run a playbook, or how to install the application. All you need to know is how to click install, and the rest is automated.
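Behind the few clicks, a fleet-wide update is essentially a staged rollout. The Python sketch below illustrates one common pattern under stated assumptions: it selects boats still running an old version, upgrades them in small batches, and rolls back and halts if a batch fails its health check. `deploy` and `healthy` are hypothetical stand-ins for whatever the platform actually does; this is not NativeEdge's API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Device:
    name: str
    app_version: str


def rollout(devices: List[Device], target: str, batch_size: int,
            deploy: Callable[[Device, str], None],
            healthy: Callable[[Device], bool]) -> List[str]:
    """Upgrade outdated devices batch by batch; roll back and stop on a failed batch.

    Returns the names of devices that were upgraded and passed health checks.
    """
    outdated = [d for d in devices if d.app_version != target]
    upgraded: List[str] = []
    for start in range(0, len(outdated), batch_size):
        batch = outdated[start:start + batch_size]
        previous = {d.name: d.app_version for d in batch}
        for d in batch:
            deploy(d, target)
        if not all(healthy(d) for d in batch):
            for d in batch:
                deploy(d, previous[d.name])  # restore the version that was running before
            break  # stop so a bad release does not reach the rest of the fleet
        upgraded.extend(d.name for d in batch)
    return upgraded


def simulated_deploy(device: Device, version: str) -> None:
    device.app_version = version  # stand-in for pushing a blueprint to the device


fleet = [Device(f"boat-{i}", "1.0") for i in range(1, 6)]
fleet[1].app_version = "1.1"  # boat-2 is already current
done = rollout(fleet, "1.1", batch_size=2, deploy=simulated_deploy,
               healthy=lambda d: d.name != "boat-5")  # pretend boat-5 fails its check
print(done)
```

Here boat-1 and boat-3 are upgraded, while boat-4 and boat-5 are returned to version 1.0 because their batch failed. Small batches limit how many boats a faulty release can affect.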

All these features in NativeEdge allow for a simplified operational process to update the edge device’s application on the fleet of boats in less time and with less technical expertise on hand. Similarly, we can apply these benefits to retail stores, manufacturing factories, or smart cities.

Conclusion

NativeEdge can manage your entire application lifecycle through automation tools. It helps deploy apps on any infrastructure, including public and private clouds. It is a reliable DevOps tool to speed up the building, deployment, and management of software, apps, and microservices without sacrificing operational efficiency or security.

¹ Estimated: Based on a 2023 study of edge operations by GLG Research on behalf of Dell Technologies and estimates from a test deployment of NativeEdge (avg. of 100 responses from IT practitioners).

Additional Resources

To learn more about NativeEdge Application Orchestration, click on the following link:

This blog is a part of a self-learning series. For more information on NativeEdge, go to: