Running Llama 3.1 405B models on Dell PowerEdge XE9680

Tue, 01 Oct 2024 18:31:21 -0000

Introduction

Meta recently introduced Llama 3.1 405B, the largest and most capable open-source language model, which enables the AI community to explore new frontiers in synthetic data generation, model distillation, and building agent applications. Llama 3.1 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF) to align with human preferences for helpfulness and safety. This openly available model sets a new standard for general knowledge, steerability, math, tool use, and multilingual translation, with support for eight languages (English, German, French, Italian, Portuguese, Hindi, Spanish, and Thai). The Dell Technologies PowerEdge XE9680 server is well suited to hosting these powerful new models.

The Llama 3.1 405B release includes two versions: a pre-trained general LLM and an instruction fine-tuned LLM. The general version was trained on about 15 trillion tokens of publicly available data. Each version has open model weights released in BF16 precision and supports a model parallelism of 16, designed to run on two nodes with 8 GPUs each. Both the general and the instruction-tuned releases also include a version with a model parallelism of 8 that uses FP8 dynamic quantization so that it can run on a single 8-GPU node, as well as a third, compact version of each model in FP8. All model versions use Grouped-Query Attention (GQA) for improved inferencing scalability. Table 1 lists the models that can be downloaded after completing the required Meta AI license agreement to obtain access to Llama 3.1 405B. The models are also available on Dell Enterprise Hub.

Table 1: Llama 3.1 405B Available Versions [1]

Model Versions | Model Names | Context Length | Training Tokens | Model Parallelism |

Pre-trained | | 128 K | ~15 T | 16 and 8 |

Instruct | | 128 K | ~15 T | 16 and 8 |

In this blog, we demonstrate the inference flow of the Llama 3.1 405B models on Dell PowerEdge XE9680 servers [2]. With 8x NVIDIA H100 GPUs connected through a 900 GB/s NVLink internal fabric, as well as support for 400 Gb/s InfiniBand and Spectrum-X cross-node communication, the XE9680 is an ideal platform for both single-node and multi-node LLM inferencing with models of this size.

Experimental setup

In our experiments, two Dell PowerEdge XE9680 servers are configured identically and are connected through an InfiniBand (IB) fabric to perform the multi-node inferencing. The flexibility of the XE9680 enables us to run two experiments: one using the FP8 instruct version running on a single PowerEdge server and another setup using the BF16 instruct model running on two nodes. Table 2 details each system’s configuration.

Table 2: Experimental Configuration for one Dell PowerEdge XE9680

Component | Details |

Hardware | |

Compute server for inferencing | PowerEdge XE9680 |

GPUs | 8x NVIDIA H100 80GB 700W SXM5 |

Host Processor Model Name | Intel(R) Xeon 8468 (TDP 350W) |

Host Processors per Node | 2 |

Host Processor Core Count | 48 |

Host Memory Capacity | 16x 64GB 4800 MT/s RDIMMs |

Host Storage Type | SSD |

Host Storage Capacity | 4x 1.92TB Samsung U.2 NVMe |

Software | |

Operating System | Ubuntu 22.04.3 LTS |

CUDA | 12.1 |

CUDNN | 9.1.0.70 |

NVIDIA Driver | 550.90.07 |

Framework | PyTorch 2.5.0 |

Single Node Inferencing on PowerEdge XE9680

We ran torchrun on one XE9680 using the FP8 quantized version of Llama 3.1 405B. Details on the FP8 version can be found in the official release paper from Meta [1]. The following steps describe how to run inference on this single system.

Step 1: Complete the download form for the Llama 3.1 405B Instruct FP8 model; a unique URL will be provided. Clone the llama-models Git repository and run download.sh under the models/llama3_1 folder to start downloading the models.

Step 2: Create and activate a conda environment.

- conda create --name myenv python=3.10 (replace "myenv" with your desired environment name)

- Activate the environment: conda activate myenv

Step 3: With the conda environment active, navigate to the model folder. Ensure that the CUDA, cuDNN, and NVIDIA driver versions match those listed in Table 2, then install the required libraries from requirements.txt.

- cd path/to/model/folder (replace "path/to/model/folder" with the actual path)

- pip install -r requirements.txt
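As a rough aid for the version check in Step 3, the sketch below compares the PyTorch and CUDA versions reported by the active environment with the values from Table 2. The function names and the `EXPECTED` dictionary are illustrative and not part of any Llama or Dell tooling. The sketch does not cover cuDNN or the NVIDIA driver; the driver version can be read from `nvidia-smi`.

```python
# Versions taken from Table 2; the dictionary keys are labels chosen for this sketch.
EXPECTED = {"pytorch": "2.5.0", "cuda": "12.1"}


def find_mismatches(found: dict, expected: dict = EXPECTED) -> list:
    """Return (name, found, expected) for every component whose version differs."""
    mismatches = []
    for name, want in expected.items():
        have = found.get(name)
        # Prefix match so that build tags such as "2.5.0+cu121" are accepted.
        if have is None or not str(have).startswith(want):
            mismatches.append((name, have, want))
    return mismatches


def installed_versions() -> dict:
    """Read versions from the active environment; empty if PyTorch is missing."""
    try:
        import torch
    except ImportError:
        return {}
    return {"pytorch": torch.__version__, "cuda": torch.version.cuda}


if __name__ == "__main__":
    problems = find_mismatches(installed_versions())
    for name, have, want in problems:
        print(f"{name}: found {have}, expected {want}")
    if not problems:
        print("Environment matches Table 2")
```

Running the script inside the conda environment before Step 4 gives an early warning if a different PyTorch or CUDA build was pulled in by requirements.txt.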

Step 4: To verify the deployment with torchrun, create run_chat_completion.sh. The script below uses example_chat_completion.py from the llama Git repository as a test example. For FP8 inference, we import the FBGEMM library into the script for efficient execution [3]. We also use environment variables and settings as detailed in the PyTorch distributed communication package documentation [4].

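run_chat_completion.sh

    #!/bin/bash
    # Stop on errors and unset variables; echo each command as it runs.
    set -eu
    set -x

    # Run from the top level of the Git repository.
    cd "$(git rev-parse --show-toplevel)"

    MASTER_ADDR=$1      # IP address of the rank-0 node
    NODE_RANK=$2        # 0 on the master node, 1 on the second node
    CKPT_DIR=$3         # model checkpoint directory
    TOKENIZER_PATH=$4   # tokenizer location
    NNODES=$5           # number of nodes
    NPROC=$6            # processes (GPUs) per node
    RUN_ID=${7:-}       # optional; "fp8" enables FP8 inference

    if [ "$RUN_ID" = "fp8" ]; then
        ENABLE_FP8="--enable_fp8"
    else
        ENABLE_FP8=""
    fi

    NCCL_NET=Socket NCCL_SOCKET_IFNAME=eno8303 TIKTOKEN_CACHE_DIR="" \
    torchrun \
        --nnodes=$NNODES --nproc_per_node=$NPROC \
        --node_rank=$NODE_RANK \
        --master_addr=$MASTER_ADDR \
        --master_port=29501 \
        --run_id=$RUN_ID \
        example_chat_completion.py $CKPT_DIR $TOKENIZER_PATH $ENABLE_FP8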
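To make the torchrun arguments in the script above more concrete, the following sketch shows how the number of nodes, the processes per node, and the node rank translate into the global ranks that the worker processes receive. It assumes a static launch with the same process count on every node, as in this blog, where the world size is nodes × processes per node and a global rank is node rank × processes per node + local rank. The function names are illustrative and not part of PyTorch.

```python
def world_size(nnodes: int, nproc_per_node: int) -> int:
    """Total number of worker processes across all nodes."""
    return nnodes * nproc_per_node


def global_ranks(nnodes: int, nproc_per_node: int, node_rank: int) -> list[int]:
    """Global ranks of the processes started on one node."""
    if not 0 <= node_rank < nnodes:
        raise ValueError(f"node_rank must be in [0, {nnodes - 1}]")
    first = node_rank * nproc_per_node
    return list(range(first, first + nproc_per_node))


if __name__ == "__main__":
    # Single node with 8 GPUs (FP8 model), then two nodes with 8 GPUs each (BF16 model).
    print(world_size(1, 8), global_ranks(1, 8, 0))
    for rank in (0, 1):
        print(world_size(2, 8), global_ranks(2, 8, rank))
```

In the two-node case, the first node hosts ranks 0 to 7 and the second node hosts ranks 8 to 15, which lines up with the model parallelism of 16 of the BF16 checkpoint.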

Step 5: Execute the bash file run_chat_completion.sh using the required arguments. Example values are shown.

    sh run_chat_completion.sh $MASTER_HOST $NODE_RANK $LOCAL_CKPT_DIR $TOKENIZER_PATH $NNODES $NPROCS $RUN_ID

Example usage:

    $MASTER_HOST = 192.x.x.x
    $NODE_RANK = 0
    $LOCAL_CKPT_DIR = <model_checkpoint_dir_location>
    $TOKENIZER_PATH = <model_tokenizer_dir_location>
    $NNODES = 1
    $NPROCS = 8
    $RUN_ID = fp8

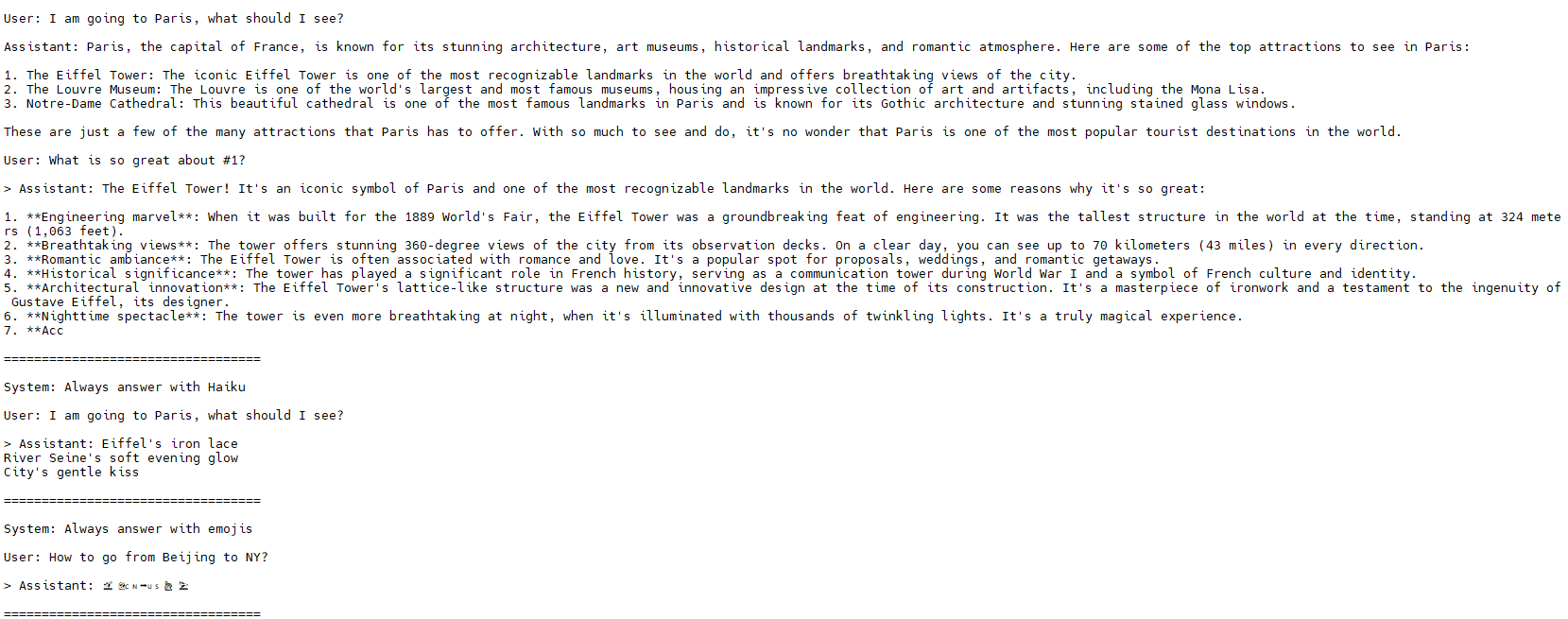

Figure 1 shows sample output after running the inference on XE9680.

Figure 1: XE9680 display of inference output.

Multi-Node Inferencing on 2 PowerEdge XE9680

Running the Llama 3.1 405B Instruct model with BF16 precision requires more GPU memory than the 640 GB available on a single XE9680 with NVIDIA H100 GPUs. The XE9680 supports deploying such large-scale models by distributing them across multiple GPUs and nodes to meet these memory requirements. We recommend a multi-node inferencing setup with two XE9680 nodes, for a total of 16 GPUs, connected through a 400 Gb/s InfiniBand (IB) network for high-throughput cross-node communication. Within each PowerEdge XE9680, the high NVLink bandwidth enables tensor parallelism. In our experiment, the model consumed about 1 TB of memory across the two XE9680 servers during inferencing.
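The capacity argument can be checked with a back-of-the-envelope calculation. The sketch below counts only the model weights (a nominal 405 billion parameters at 2 bytes each for BF16 and 1 byte each for FP8) and compares them with the aggregate GPU memory per node from Table 2. It deliberately ignores the KV cache, activations, and framework overhead, so actual consumption during inferencing is higher than the weights-only figure. The names used are illustrative.

```python
# Rough weights-only estimate; ignores KV cache, activations, and overhead.
PARAMS = 405e9                  # nominal parameter count of Llama 3.1 405B
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}
GPU_MEMORY_GB = 80              # NVIDIA H100 80GB (Table 2)
GPUS_PER_NODE = 8


def weights_gb(precision: str) -> float:
    """Size of the model weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS * BYTES_PER_PARAM[precision] / 1e9


def min_nodes(precision: str) -> int:
    """Smallest number of 8-GPU nodes whose GPU memory can hold the weights."""
    node_capacity_gb = GPU_MEMORY_GB * GPUS_PER_NODE  # 640 GB per XE9680
    nodes = 1
    while nodes * node_capacity_gb < weights_gb(precision):
        nodes += 1
    return nodes


if __name__ == "__main__":
    for precision in ("bf16", "fp8"):
        print(f"{precision}: {weights_gb(precision):.0f} GB of weights, "
              f"at least {min_nodes(precision)} node(s)")
```

The BF16 weights alone come to about 810 GB, which exceeds the 640 GB of a single node, while the FP8 weights come to about 405 GB and fit on one node.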

Multi-node inferencing follows steps similar to those of single-node inferencing.

Step 1: Download the Llama3.1-405B-instruct-MP16 model.

Step 2: Follow steps 2 to 4 from the single-node inferencing section above to create a conda environment on each XE9680 node. Use example_chat_completion.py to execute a test example within run_chat_completion.sh.

Step 3: We execute torchrun separately on both nodes. Assign one node as $MASTER_HOST and set its $NODE_RANK to 0. Include the $MASTER_HOST IP address in the settings for the second node and set its $NODE_RANK to 1, as outlined below.

    sh run_chat_completion.sh $MASTER_HOST $NODE_RANK $LOCAL_CKPT_DIR $TOKENIZER_PATH $NNODES $NPROCS

Example usage on Node 1:

    $MASTER_HOST = 192.x.x.x
    $NODE_RANK = 0
    $LOCAL_CKPT_DIR = <model_checkpoint_dir_location>
    $TOKENIZER_PATH = <model_tokenizer_dir_location>
    $NNODES = 2
    $NPROCS = 8

Example usage on Node 2:

    $MASTER_HOST = 192.x.x.x
    $NODE_RANK = 1
    $LOCAL_CKPT_DIR = <model_checkpoint_dir_location>
    $TOKENIZER_PATH = <model_tokenizer_dir_location>
    $NNODES = 2
    $NPROCS = 8
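Because the two invocations differ only in the node rank, it can help to generate both command lines from one set of values. The following is a minimal sketch under that assumption; the helper names are hypothetical, and the placeholder values are the same ones used in the example usage above.

```python
import shlex


def launch_args(master_host, node_rank, ckpt_dir, tokenizer_path, nnodes, nprocs):
    """Command line for run_chat_completion.sh, in the order the script reads its arguments."""
    return ["sh", "run_chat_completion.sh", master_host, str(node_rank),
            ckpt_dir, tokenizer_path, str(nnodes), str(nprocs)]


def two_node_commands(master_host, ckpt_dir, tokenizer_path, nprocs=8):
    """One command per node; both point at the same master host."""
    return [launch_args(master_host, rank, ckpt_dir, tokenizer_path, 2, nprocs)
            for rank in (0, 1)]


if __name__ == "__main__":
    commands = two_node_commands("192.x.x.x",
                                 "<model_checkpoint_dir_location>",
                                 "<model_tokenizer_dir_location>")
    for rank, command in enumerate(commands):
        # shlex.join quotes the placeholder values so the line is safe to paste.
        print(f"node rank {rank}: {shlex.join(command)}")
```

Each printed command still has to be run on its own node; the sketch only builds the strings and does not start any processes.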

Conclusion

Dell’s PowerEdge XE9680 server is purpose-built to excel at the most demanding AI workloads, including the latest releases of Llama 3.1 405B. With the best available GPUs and high-speed in-node and cross-node network fabric, the XE9680 is an ideal platform, flexible enough for both single-node and multi-node inferencing. In this blog, we demonstrated both capabilities by deploying the best-in-class open-source Llama 3.1 405B models, with step-by-step instructions for replication. Stay tuned for our next blog posts, where we dive into use cases such as synthetic data generation and LLM distillation and share performance metrics of these models on the XE9680 platform.

References

[1] The Llama 3 Herd of Models: https://arxiv.org/pdf/2407.21783

[2] https://www.dell.com/en-us/shop/ipovw/poweredge-xe9680

[3] https://github.com/meta-llama/llama-stack/blob/main/llama_toolchain/inference/quantization

[4] Distributed communication package - torch.distributed — PyTorch 2.4 documentation

Authors:

Khushboo Rathi (khushboo.rathi@dell.com) ;

Tao Zhang (tao.zhang9@dell.com) ;

Sarah Griffin (sarah_g@dell.com)