PowerEdge “xs” vs. “Standard” vs. “xa” vs. “xd2”

Wed, 26 Apr 2023 22:34:11 -0000

Summary

With the recent announcement of 4th Gen Intel® Xeon® Scalable processors, Dell has announced two different models of the R660 and four different models of the R760 to meet emerging customer demands. This paper highlights the engineering elements of each design and explains why we expanded the portfolio.

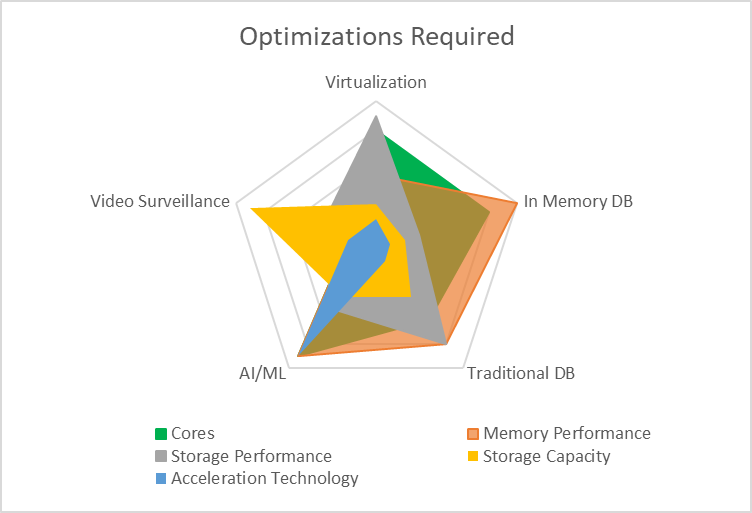

Balancing system cost, performance, scalability, and power consumption is difficult when designing a server. The evolution of workloads places additional demands on the design, with environments such as virtualization, artificial intelligence (AI), machine learning (ML), video surveillance, and object-based storage all centering on different optimization parameters.

The challenge for server design teams is to strike an effective balance that delivers maximum performance for each workload/environment but does not overly burden the customer with unnecessary cost for features they might not use. To illustrate this, consider that a server designed for maximum performance with an in-memory database might require higher memory density, while a server designed for AI/ML might benefit from enhanced GPU support. Similarly, a server designed for virtualization with software-defined storage might benefit from increased core counts and faster storage, while the massive amount of data generated by video surveillance workloads or object-based storage environments would benefit from larger storage capacities. Each of these environments requires different optimizations, as shown in the following figure.

While it might be technically possible to build a single system that could achieve all this, the result would be much more expensive to purchase and could be physically larger. For example, a system capable of powering and cooling multiple 350 W GPUs needs bigger power supplies, stronger fans, additional space (particularly for double-width GPUs), and high core count CPUs. Conversely, a system designed for video surveillance might require none of these optimizations and instead require a large number of high-capacity hard drives. Trying to optimize for all workloads/environments often results in unacceptable trade-offs for each.

To achieve truly optimized systems, Dell Technologies has launched four classes of its industry-leading PowerEdge rack servers: the “xa” model, the “standard” models, the “xs” models, and the “xd2” model.

- The “xa” model is designed for optimization in AI/ML environments. It delivers larger power supplies, high-performance cooling, and support for a large number of GPUs to deliver the highest levels of performance.

- The “standard” models are flexible enough to deliver enhanced virtualization support (with software-defined storage) or database performance (“in memory” or traditional database) with the addition of high storage performance, large memory expansion, and increased core counts.

- The “xs” models deliver right-sized configurations for the most popular workloads, providing a balance of lower power consumption, a range of upgrade options, memory capacity, and performance as well as high-performance NVMe storage for demanding virtualization environments.

- The “xd2” model is designed for maximum storage capacity using large-form-factor spinning hard drives to deliver critical storage capacity for demanding environments such as video surveillance and object-based storage.

Design optimizations

As noted, the “xa” model is optimized for GPU density, the “standard” models are optimized for high performance compute, the “xs” models are optimized for virtualized environments, and the “xd2” model is optimized for storage density. Here is an overview of the key feature differences:

While key specifications are different between models, much remains the same. All models support key features such as:

- iDRAC9 and OpenManage

- OCP3.0 networking options

- PCIe 4.0/5.0 slots (PCIe 4.0 only on the R760xd2)

- PERC 11/PERC 12 RAID, including optional support for NVMe RAID on some models

- 4,800 MT/s memory

“xa” design

The R760xa is optimized for enhanced GPU support. This support is accomplished by moving two of the PCIe cages from the back to the front, as indicated in the figure. Each of these cages can support up to two double-width PCIe x16 Gen 5 GPUs, and, in the case of the NVIDIA A100, each pair can be linked together with NVLink bridges. The R760xa can also support up to eight of the latest-generation NVIDIA L4 GPUs. These cards are a low-profile, single-width design that operates at PCIe Gen 4 speeds using x16 slots. Additional PCIe slots are available in the back of the system. With this change, internal storage has been designed to fit in the middle of the front of the server and provide up to eight SAS/SATA or NVMe drives or a mix of drive types. All these configurations are available with optional support for RAID, using the new PERC 11 based H755 (SAS/SATA) or H755n (NVMe). This model supports up to 32 DDR5 DIMMs, allowing a maximum capacity of 8 TB using 256 GB DIMMs.

“Standard” design

The R660/R760 “standard” models have been designed to accommodate the flexibility necessary to address a wide variety of workloads. With support for large numbers of hard drives (12 in the R660 and 26 in the R760), these models also offer optional performance and reliability features with the new PERC 11 and PERC 12 RAID controllers. These RAID controllers are located directly behind the drive cage to save space and are connected directly to the system motherboard to ensure PCIe 4.0 speeds. To ensure the highest levels of performance, these models support up to 32 DIMMs, allowing up to 8 TB of memory using 256 GB DIMMs, and processors with up to 56 cores. In addition, both models support GPUs, but to a lesser extent than the “xa” series.

“xs” design

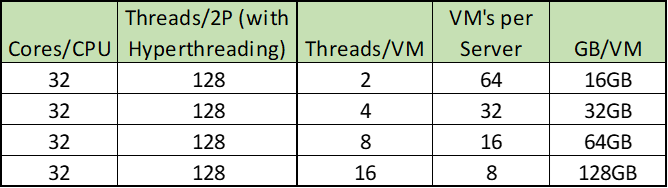

When designing for virtualization, a number of key factors emerge. For example, storage requirements often serve software-defined storage schemas (such as vSAN), while the ability of a hypervisor to segment memory and cores creates a need to balance between the two. To meet these demands, the new “xs” designs include support for up to 16 DIMMs (1 TB of DRAM when using 64 GB DIMMs), CPUs with up to 32 cores, and internal storage of up to 24 drives (2U) or 10 drives (1U).

Not that many years ago, the cost per GB of memory made it difficult to design systems that could accommodate the required “memory/VM” ratios necessary for a balanced hypervisor. However, recent pricing trends have created an opportunity to achieve excellent performance, scalability, and balance with fewer DIMMs. Specifically, the cost/GB ratio of a 64 GB DIMM is evolving to be similar to the ratio of a 32 GB DIMM. This means that customers can achieve the same balance that was achieved with previous generations of servers with fewer DIMM sockets. As the following chart shows, an “xs” system with only 16 DIMM sockets populated with 64 GB DIMMs (1 TB total) can deliver compelling GB/VM.
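The GB/VM balance described above is simple division. The following minimal sketch assumes a fully populated “xs” system and ignores hypervisor overhead; the function name and the VM counts are hypothetical and are not taken from the chart.

```python
def gb_per_vm(dimm_count: int, dimm_size_gb: int, vm_count: int) -> float:
    """Return memory per VM in GB, ignoring hypervisor overhead."""
    if vm_count <= 0:
        raise ValueError("vm_count must be positive")
    return dimm_count * dimm_size_gb / vm_count


# 16 sockets populated with 64 GB DIMMs, as in the "xs" example (1 TB total).
total_gb = 16 * 64
print(f"Total memory: {total_gb} GB")

# Hypothetical VM counts chosen only for illustration.
for vms in (32, 64, 128):
    print(f"{vms:>3} VMs -> {gb_per_vm(16, 64, vms):.0f} GB/VM")
```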

There are significant impacts to reducing the number of DIMM sockets. The most obvious is power and cooling. Any design needs to reserve enough “headroom” for a full configuration. For example, assuming a power requirement of 5 W per DIMM socket, cutting the number of DIMM sockets in half (from 32 to 16) reduces an “xs” power budget by up to 80 W. This in turn reduces the amount of cooling required, which allows the use of more cost-effective fans and potentially reduces cost by limiting baffles and other hardware used to direct air flow. This also helps explain why an “xs” system can operate on a power supply as small as 600 W (R660xs), while a “standard” system requires power supplies of at least 800 W (R660) to operate.
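The 80 W figure follows directly from the stated assumption of 5 W per DIMM socket. A short sketch of that arithmetic (the function name is illustrative only):

```python
def dimm_power_budget_w(sockets: int, watts_per_socket: float = 5.0) -> float:
    """Power headroom reserved for memory, assuming a flat per-socket figure."""
    return sockets * watts_per_socket


standard_w = dimm_power_budget_w(32)  # 32-socket design
xs_w = dimm_power_budget_w(16)        # 16-socket "xs" design
print(f"Memory headroom saved: {standard_w - xs_w:.0f} W")
```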

“xd2” design

To deliver maximum storage capacity, the R760xd2 uses two rows of 3.5-inch drives in the front, each of which supports up to 12 drives for a total of 24 x 3.5-inch front-mounted drives. The chassis is designed to extend from the front, allowing for the hot-plug replacement of failed drives. This model also supports up to four E3.S NVMe-based drives in the back to allow customers to configure a PERC 11 or PERC 12 controller to natively tier 3.5-inch spinning disks with solid-state NVMe drives. This model supports up to two processors, each with up to 32 cores using the 185 W Intel® Xeon® Gold 6428N. Support for up to 16 DDR5 DIMM sockets allows for up to 1 TB of memory for demanding video surveillance and object storage environments.

Additional considerations for memory

It is important to note that each CPU has eight memory channels. When the processor is populated with one DIMM per channel (1DPC), the memory operates at 4,800 MT/s; however, when populated with two DIMMs per channel (2DPC, 32 DIMMs total in a two-socket system), the speed drops to 4,400 MT/s. In this context, models with only 16 DIMM sockets will operate at the fastest rated memory speed of the processor.
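The relationship between DIMM population, capacity, and speed can be sketched as follows. The channel count and the two speeds come from the text; the 8 bytes per transfer used for the theoretical peak bandwidth is an assumption (a 64-bit data path per channel), and the function and constant names are hypothetical.

```python
CHANNELS_PER_CPU = 8                    # from the text
SPEED_BY_DPC_MTS = {1: 4800, 2: 4400}   # DIMMs per channel -> MT/s, from the text
BYTES_PER_TRANSFER = 8                  # assumption: 64-bit data path per channel


def memory_config(cpus: int, dimms: int, dimm_size_gb: int) -> dict:
    """Summarize capacity, speed, and theoretical peak bandwidth."""
    channels = cpus * CHANNELS_PER_CPU
    dpc, remainder = divmod(dimms, channels)
    if remainder or dpc not in SPEED_BY_DPC_MTS:
        raise ValueError("expected a balanced 1DPC or 2DPC population")
    speed = SPEED_BY_DPC_MTS[dpc]
    return {
        "dimms_per_channel": dpc,
        "capacity_gb": dimms * dimm_size_gb,
        "speed_mts": speed,
        # MT/s times bytes per transfer gives MB/s per channel; /1000 gives GB/s.
        "peak_gb_per_s": channels * speed * BYTES_PER_TRANSFER / 1000,
    }


print(memory_config(cpus=2, dimms=16, dimm_size_gb=64))    # 16-socket models
print(memory_config(cpus=2, dimms=32, dimm_size_gb=256))   # 32-socket models
```

The peak figure is a theoretical ceiling, not a measured result; it only illustrates that a 1DPC population trades capacity for a higher per-channel speed.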

Another impact is cost. Increasing the number of DIMM sockets in a system increases the complexity of the design. The R660xs, R760xs, and R760xd2 all support 16 DIMMs. For every DIMM socket installed, space must be reserved in the motherboard design to accommodate the addition of electrical traces. In the case of DDR5, each DIMM has 288 pins. By reducing the number of supported DIMMs from 32 to 16, Dell engineers eliminated 4,608 electrical traces from these designs. A motherboard design with fewer traces often requires fewer “layers,” which translates directly into a lower cost for the motherboard.
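The trace count quoted above can be reproduced with one multiplication, assuming one trace per DIMM pin as the paragraph does (the function name is illustrative):

```python
DDR5_PINS_PER_DIMM = 288  # from the text


def traces_eliminated(sockets_before: int, sockets_after: int) -> int:
    """Traces removed when the number of DIMM sockets is reduced."""
    return (sockets_before - sockets_after) * DDR5_PINS_PER_DIMM


print(traces_eliminated(32, 16))
```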

Conclusion

With the launch of the new 4th Gen Intel® Xeon® Scalable processors, Dell Technologies can deliver a range of new technologies to meet customer requirements. With the “xa” model for high GPU density, “standard” models for a wide range of workloads, “xs” series for compelling price/performance, and the “xd2” model for maximum storage capacity, customers can now achieve a level of optimization not previously available.

Related Documents

Unlocking Machine Learning with Dell PowerEdge XE9680: Insights into MLPerf 2.1 Training Performance

Tue, 28 Mar 2023 23:05:15 -0000

Executive Summary

The Dell PowerEdge XE9680 is a high-performance server designed and optimized to enable uncompromising performance for artificial intelligence, machine learning, and high-performance computing workloads. Dell PowerEdge is launching our innovative 8-way GPU platform with advanced features and capabilities.

- 8x NVIDIA H100 80GB 700W SXM GPUs or 8x NVIDIA A100 80GB 500W SXM GPUs

- 2x Fourth Generation Intel® Xeon® Scalable Processors

- 32x DDR5 DIMMs at 4800MT/s

- 10x PCIe Gen 5 x16 FH Slots

- 8x SAS/NVMe SSD Slots (U.2) and BOSS-N1 with NVMe RAID

This tech note, Direct from Development (DfD), offers valuable insights into the performance of the PowerEdge XE9680 using MLPerf 2.1 benchmarks from MLCommons.

Testing

MLPerf is a suite of benchmarks that assess the performance of machine learning (ML) workloads, with a focus on two crucial aspects of the ML life cycle: training and inference. This tech note specifically delves into the training aspect of MLPerf.

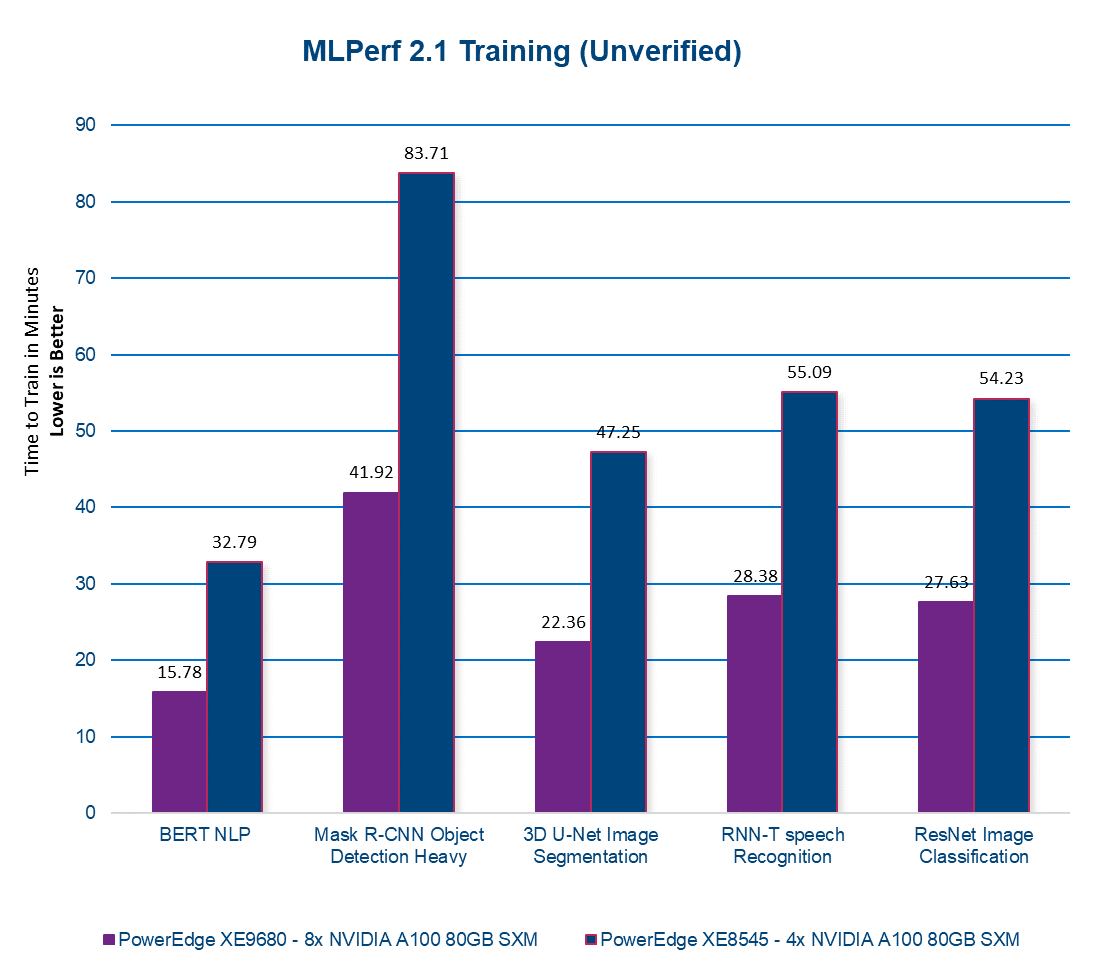

The Dell CET AI Performance and the Dell HPC & AI Innovation Lab conducted MLPerf 2.1 Training benchmarks using the latest PowerEdge XE9680 equipped with 8x NVIDIA A100 80GB SXM GPUs. For comparison, we also ran these tests on the previous generation PowerEdge XE8545, equipped with 4x NVIDIA A100 80GB SXM GPUs. The following section presents the results of our tests. Please note that in the figure below, a lower number indicates better performance and the results have not been verified by MLCommons.

Performance

Figure 1. MLPerf 2.1 Training

Our latest server, the PowerEdge XE9680 with 8x NVIDIA A100 80GB SXM GPUs, delivers on average twice the performance of our previous-generation server. This translates to faster AI model training, enabling models to be trained in half the time! With the PowerEdge XE9680, you can accelerate your AI workloads and achieve better results, faster than ever before. Contact your account executive or visit www.dell.com to learn more.
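Because MLPerf Training reports time to train, where lower is better, a speedup is the baseline time divided by the new time. The sketch below shows that conversion and averages the ratios with a geometric mean, which is one common way to average ratios and not necessarily the method used for the figure. The benchmark names and minutes are placeholders, not measured results.

```python
from statistics import geometric_mean


def speedup(baseline_minutes: float, new_minutes: float) -> float:
    """Speedup of the new system; values above 1.0 mean faster training."""
    return baseline_minutes / new_minutes


# PLACEHOLDER values in minutes (baseline, new). These are not measurements.
placeholder_times = {
    "benchmark_a": (100.0, 50.0),
    "benchmark_b": (80.0, 40.0),
}

ratios = [speedup(old, new) for old, new in placeholder_times.values()]
print(f"Average speedup: {geometric_mean(ratios):.2f}x")
```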

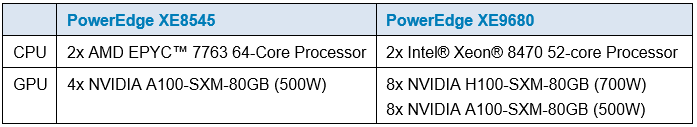

Table 1. Server configuration

(1) Testing conducted by Dell in March of 2023. Performed on PowerEdge XE9680 with 8x NVIDIA A100 SXM4-80GB and PowerEdge XE8545 with 4x NVIDIA A100-SXM-80GB. Unverified MLPerf v2.1 BERT NLP v2.1, Mask R-CNN object detection, heavy-weight v2.1 COCO 2017, 3D U-Net image segmentation v2.1 KiTS19, RNN-T speech recognition v2.1 rnnt Training. Result not verified by MLCommons Association. The MLPerf name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use is strictly prohibited. See www.mlcommons.org for more information. Actual results will vary.

BIOS Settings for Optimized Performance on Next-Generation Dell PowerEdge Servers

Thu, 02 Nov 2023 17:45:05 -0000

Summary

Dell PowerEdge servers provide a wide range of tunable parameters to allow customers to achieve top performance. The information in this paper outlines the tunable parameters available in the latest generation of PowerEdge servers (for example, R660, R760, MX760, and C6620) and provides recommended settings for different workloads.

Figure 1. PowerEdge R660

Figure 2. PowerEdge R760

The following tables provide the BIOS setting recommendations for the latest generation of PowerEdge servers.

Table 1. BIOS setting recommendations—System profile settings

| System setup screen | Setting | Default | Recommended setting for performance | Recommended setting for low latency, Stream, and MLC environments | Recommended |
| --- | --- | --- | --- | --- | --- |
| System profile settings | System Profile | Performance Per Watt [1] | Performance Optimized | First select Performance Optimized and then select Custom [1] | Custom |
| System profile settings | CPU Power Management | System DBPM | Maximum Performance | Maximum Performance | Maximum Performance |
| System profile settings | Memory Frequency | Maximum Performance | Maximum Performance | Maximum Performance | Maximum Performance |
| System profile settings | Turbo Boost [2] | Enabled | Enabled | Enabled | Enabled |
| System profile settings | C1E | Enabled | Disabled | Disabled | Disabled |
| System profile settings | C States | Enabled | Disabled | Disabled | Autonomous or Disabled [6] |
| System profile settings | Monitor/Mwait | Enabled | Enabled | Disabled [3] | Enabled |
| System profile settings | Memory Patrol Scrub | Standard | Standard [4] | Standard/Disabled [4] | Disabled |
| System profile settings | Memory Refresh Rate | 1x | 1x | 1x | 1x |
| System profile settings | Uncore Frequency | Dynamic | Maximum [5] | Maximum [5] | Dynamic |
| System profile settings | Energy Efficient Policy | Balanced Performance | Performance | Performance | Performance |
| System profile settings | CPU Interconnect Bus Link Power Management | Enabled | Disabled | Disabled | Disabled |
| System profile settings | PCI ASPM L1 Link Power Management | Enabled | Disabled | Disabled | Disabled |

[1] Depends on how the system was ordered. Other System Profile defaults are driven by this choice and may differ from the examples listed. Select the Performance profile first, and then select Custom to load optimal profile defaults for further modification.

[2] SST Turbo Boost Technology is substantially better than previous generations for latency-sensitive environments, but specific Turbo residency cannot be guaranteed under all workload conditions. Evaluate Turbo Boost Technology in your own environment to choose which setting is most appropriate for your workload, and consider the Dell Controlled Turbo option in parallel.

[3] Monitor/Mwait should only be disabled in parallel with disabling Logical Processor. This will prevent the Linux intel_idle driver from enforcing C-states.

[4] You can test your own environment to determine whether disabling Memory Patrol Scrub is helpful.

[5] Dynamic selection can provide more TDP headroom at the expense of dynamic uncore frequency. Optimal setting is workload dependent.

[6] Autonomous on air-cooled systems or Disabled on liquid-cooled systems.
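One way to use Table 1 is to compare a system's current values against a recommendation column and list only what needs to change. The sketch below transcribes the Default and "Recommended setting for performance" columns (footnote markers dropped) into a plain dictionary. The dictionary layout and function name are hypothetical, and no Dell tool or API is involved.

```python
# setting -> (default, recommended for performance), transcribed from Table 1.
TABLE_1 = {
    "System Profile": ("Performance Per Watt", "Performance Optimized"),
    "CPU Power Management": ("System DBPM", "Maximum Performance"),
    "Memory Frequency": ("Maximum Performance", "Maximum Performance"),
    "Turbo Boost": ("Enabled", "Enabled"),
    "C1E": ("Enabled", "Disabled"),
    "C States": ("Enabled", "Disabled"),
    "Monitor/Mwait": ("Enabled", "Enabled"),
    "Memory Patrol Scrub": ("Standard", "Standard"),
    "Memory Refresh Rate": ("1x", "1x"),
    "Uncore Frequency": ("Dynamic", "Maximum"),
    "Energy Efficient Policy": ("Balanced Performance", "Performance"),
    "CPU Interconnect Bus Link Power Management": ("Enabled", "Disabled"),
    "PCI ASPM L1 Link Power Management": ("Enabled", "Disabled"),
}


def pending_changes(current: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return {setting: (current, recommended)} for settings that differ."""
    return {
        name: (current.get(name, "unknown"), recommended)
        for name, (_, recommended) in TABLE_1.items()
        if current.get(name) != recommended
    }


# Start from the table defaults and list what the performance column changes.
defaults = {name: default for name, (default, _) in TABLE_1.items()}
for name, (cur, rec) in pending_changes(defaults).items():
    print(f"{name}: {cur} -> {rec}")
```

Because actual defaults depend on how the system was ordered (footnote 1), the `current` dictionary would normally be filled from the system itself rather than from the table.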

Table 2. BIOS setting recommendations—Memory, processor, and iDRAC settings

| System setup screen | Setting | Default | Recommended setting for performance | Recommended setting for low latency, Stream, and MLC environments | Recommended |
| --- | --- | --- | --- | --- | --- |
| Memory settings | Memory Operating Mode | Optimizer | Optimizer [1] | Optimizer [1] | Optimizer [1] |
| Memory settings | Memory Node Interleave | Disabled | Disabled | Disabled | Disabled |
| Memory settings | DIMM Self Healing | Enabled | Disabled | Disabled | Disabled |
| Memory settings | ADDDC setting | Disabled [2] | Disabled [2] | Disabled [2] | Disabled [2] |
| Memory settings | Memory Training | Fast | Fast | Fast | Fast |
| Memory settings | Correctable Error Logging | Enabled | Disabled | Disabled | Disabled |
| Processor settings | Logical Processor | Enabled | Disabled [3] | Disabled [3] | Enabled |
| Processor settings | Virtualization Technology | Enabled | Disabled | Disabled | Disabled |
| Processor settings | CPU Interconnect Speed | Maximum Data Rate | Maximum Data Rate | Maximum Data Rate | Maximum Data Rate |
| Processor settings | Adjacent Cache Line Prefetch | Enabled | Enabled | Enabled | Enabled |
| Processor settings | Hardware Prefetcher | Enabled | Enabled | Enabled | Enabled |
| Processor settings | DCU Streamer Prefetcher | Enabled | Enabled | Disabled | Disabled |
| Processor settings | DCU IP Prefetcher | Enabled | Enabled | Enabled | Enabled |
| Processor settings | Sub NUMA Cluster | Disabled | SNC 2 | SNC 4 on XCC; SNC 2 on MCC | SNC 4 on XCC; SNC 2 on MCC |
| Processor settings | Dell Controlled Turbo | Disabled | Disabled | Enabled [4] | Disabled |
| Processor settings | Dell Controlled Turbo Optimizer mode | Disabled | Enabled [5] | Enabled [5] | Enabled [5] |
| Processor settings | XPT Prefetch | Enabled | Disabled | Disabled | Enabled |
| Processor settings | UPI Prefetch | Enabled | Disabled | Disabled | Enabled |
| Processor settings | LLC Prefetch | Disabled | Enabled | Disabled | Disabled |
| Processor settings | DeadLine LLC Alloc | Enabled | Enabled | Enabled | Disabled |
| Processor settings | Directory AtoS | Disabled | Disabled | Disabled | Disabled |
| Processor settings | Dynamic SST Perf Profile | Disabled | Disabled | Enabled | Disabled |
| Processor settings | SST-Perf-profile | Operating Point 1 | Operating Point 1 | Operating Point ? [6] | Operating Point 1 |
| iDRAC settings | Thermal Profile | Default | Maximum Performance | Maximum Performance | Maximum Performance |

[1] Use Optimizer Mode when the workload is sensitive to memory bandwidth; Fault Resilient Mode can reduce bandwidth by up to 33%.

[2] Only available when x4 DIMMs are installed in the system.

[3] Logical Processor (Hyper Threading) tends to benefit throughput-oriented workloads such as SPEC CPU2017 INT and FP_RATE. Many HPC workloads disable this option. This only benefits SPEC FP_rate if the thread count scales to the total logical processor count.

[4] Dell Controlled Turbo helps to keep core frequency at the maximum all-cores Turbo frequency, which reduces jitter. Disable it if Turbo Boost is disabled.

[5] Option is available on liquid cooled systems only.

[6] Depends on whether your program is affected by Base and Turbo frequency. Selecting a different operating point reduces the CPU core count and gives higher Base and Turbo frequencies.
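Some Table 2 entries depend on the hardware rather than on the workload alone: the Sub NUMA Cluster value in the low-latency column depends on the processor die (XCC or MCC), and footnote 5 limits Dell Controlled Turbo Optimizer mode to liquid-cooled systems. A minimal sketch of that selection logic for the low-latency column follows; the function name and the "not available" string are hypothetical.

```python
def low_latency_choices(die: str, liquid_cooled: bool) -> dict[str, str]:
    """Pick hardware-dependent values from the low-latency column of Table 2."""
    snc_by_die = {"XCC": "SNC 4", "MCC": "SNC 2"}
    if die not in snc_by_die:
        raise ValueError("die must be 'XCC' or 'MCC'")
    return {
        "Sub NUMA Cluster": snc_by_die[die],
        "Dell Controlled Turbo": "Enabled",
        # Footnote 5: the optimizer mode exists on liquid-cooled systems only.
        "Dell Controlled Turbo Optimizer mode": (
            "Enabled" if liquid_cooled else "not available"
        ),
    }


print(low_latency_choices("XCC", liquid_cooled=False))
print(low_latency_choices("MCC", liquid_cooled=True))
```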

iDRAC recommendations

- In thermally challenged environments, increase fan speed through the iDRAC Thermal section.

- In performance-sensitive environments, remove all power capping.

BIOS settings glossary

- System Profile: (Default=Performance Per Watt)—It can be difficult to set each individual power/performance feature for a specific environment. Because of this, a menu option is provided that can help a customer optimize the system for things such as minimum power usage/acoustic levels, maximum efficiency, Energy Star optimization, or maximum performance.

  - Performance Per Watt DAPC (Dell Advanced Power Control)—This mode uses Dell presets to maximize the performance/watt efficiency with a bias towards power savings. It provides the best features for reducing power and increasing performance in applications where maximum bus speeds are not critical. It is expected that this will be the favored mode for SPECpower testing. "Efficiency–Favor Power" mode maintains backwards compatibility with systems that included the preset operating modes before Energy Star for servers was released.

  - Performance Per Watt OS—This mode optimizes the performance/watt efficiency with a bias towards performance. It is the favored mode for Energy Star. Note that this mode is slightly different than "Performance Per Watt DAPC" mode. In this mode, no bus speeds are derated as they are in the Performance Per Watt DAPC mode, leaving the operating system in control of those changes.

  - Performance—This mode maximizes the absolute performance of the system without regard for power. In this mode, power consumption is not considered. Things like fan speed and heat output of the system, in addition to power consumption, might increase. Efficiency of the system might go down in this mode, but the absolute performance might increase depending on the workload that is running.

  - Custom—Custom mode allows the user to individually modify any of the low-level settings that are preset and unchangeable in any of the other four preset modes.

- C-States—C-states reduce CPU idle power. There are three options in this mode:

  - Enabled: When “Enabled” is selected, the operating system initiates the C-state transitions. Some operating system software might defeat the ACPI mapping (for example, intel_idle driver).

  - Autonomous: When "Autonomous" is selected, HALT and C1 requests get converted to C6 requests in hardware.

  - Disabled: When "Disabled" is selected, only C0 and C1 are used by the operating system. C1 gets enabled automatically when an OS auto-halts.

- C1 Enhanced Mode—Enabling C1E (C1 enhanced) state can save power by halting CPU cores that are idle.

- Turbo Mode—Enabling turbo mode can boost the overall CPU performance when not all CPU cores are being fully utilized. A CPU core can run above its rated frequency for a short period of time when it is in turbo mode.

- Hyper-Threading—Enabling Hyper-Threading lets the operating system address two virtual or logical cores for each physical core. Workloads can be shared between virtual or logical cores when possible. The main function of Hyper-Threading is to increase the number of independent instructions in the pipeline so that processor resources are used more efficiently.

- Execute Disable Bit—The execute disable bit allows memory to be marked as executable or non-executable when used with a supporting operating system. This can improve system security by configuring the processor to raise an error to the operating system when code attempts to run in non-executable memory.

- DCA—DCA capable I/O devices such as network controllers can place data directly into the CPU cache, which improves response time.

- Power/Performance Bias—Power/performance bias determines how aggressively the CPU will be power managed and placed into turbo. With "Platform Controlled," the system controls the setting. Selecting "OS Controlled" allows the operating system to control it.

- Per Core P-state—When per-core P-states are enabled, each physical CPU core can operate at separate frequencies. If disabled, all cores in a package will operate at the highest resolved frequency of all active threads.

- CPU Frequency Limits—The maximum turbo frequency can be restricted with turbo limiting to a frequency that is between the maximum turbo frequency and the rated frequency for the CPU installed.

- Energy Efficient Turbo—When energy efficient turbo is enabled, the CPU's optimal turbo frequency will be tuned dynamically based on CPU utilization.

- Uncore Frequency Scaling—When enabled, the CPU uncore will dynamically change speed based on the workload.

- MONITOR/MWAIT—MONITOR/MWAIT instructions are used to engage C-states.

- Sub-NUMA Cluster (SNC)—SNC breaks up the last level cache (LLC) into disjoint clusters based on address range, with each cluster bound to a subset of the memory controllers in the system. SNC improves average latency to the LLC and memory. SNC is a replacement for the cluster on die (COD) feature found in previous processor families. For a multi-socketed system, all SNC clusters are mapped to unique NUMA domains. (See also IMC interleaving.) Values for this BIOS option can be:

  - Disabled: The LLC is treated as one cluster when this option is disabled.

  - Enabled: Uses LLC capacity more efficiently and reduces latency due to core/IMC proximity. This might provide performance improvement on NUMA-aware operating systems.

- Snoop Preference—Select the appropriate snoop mode based on the workload. There are two snoop modes:

  - HS w. Directory + OSB + HitME cache: Best overall for most workloads (default setting)

  - Home Snoop: Best for BW sensitive workloads

- XPT Prefetcher—XPT prefetch is a mechanism that enables a read request that is being sent to the last level cache to speculatively issue a copy of that read to the memory controller prefetcher.

- UPI Prefetcher—UPI prefetch is a mechanism to get the memory read started early on DDR bus. The UPI receive path will spawn a memory read to the memory controller prefetcher.

- Patrol Scrub—Patrol scrub is a memory RAS feature that runs a background memory scrub against all DIMMs. This feature can negatively affect performance.

- DCU Streamer Prefetcher—DCU (Level 1 Data Cache) streamer prefetcher is an L1 data cache prefetcher. Lightly threaded applications and some benchmarks can benefit from having the DCU streamer prefetcher enabled. Default setting is Enabled.

- LLC Dead Line Allocation—In some Intel CPU caching schemes, mid-level cache (MLC) evictions are filled into the last level cache (LLC). If a line is evicted from the MLC to the LLC, the core can flag the evicted MLC lines as "dead." This means that the lines are not likely to be read again. This option allows dead lines to be dropped and never fill the LLC if the option is disabled. Values for this BIOS option can be:

  - Disabled: Disabling this option can save space in the LLC by never filling MLC dead lines into the LLC.

  - Enabled: Opportunistically fill MLC dead lines in LLC, if space is available.

- Adjacent Cache Prefetch—Lightly threaded applications and some benchmarks can benefit from having the adjacent cache line prefetch enabled. Default is Enabled.

- Intel Virtualization Technology—Intel Virtualization Technology allows a platform to run multiple operating systems and applications in independent partitions, so that one computer system can function as multiple virtual systems. Default is Enabled.

- Hardware Prefetcher—Lightly threaded applications and some benchmarks can benefit from having the hardware prefetcher enabled. Default is Enabled.

- Trusted Execution Technology—Enable Intel Trusted Execution Technology (Intel TXT). Default is Disabled.
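Three of the glossary entries above describe simple rules that can be written down as small functions: how SNC multiplies NUMA domains, how per-core P-states resolve frequencies, and how LLC dead line allocation treats evicted lines. The sketch below is a simplified model of those descriptions, not a description of the actual hardware implementation. All function names and the example frequencies are hypothetical.

```python
def numa_domains(sockets: int, snc_clusters_per_socket: int = 1) -> int:
    """Each SNC cluster on each socket maps to its own NUMA domain.

    With SNC disabled the LLC is one cluster, so the count is one per socket.
    """
    return sockets * snc_clusters_per_socket


def resolved_frequencies(requested_mhz: list[int], per_core_pstates: bool) -> list[int]:
    """Frequency each core runs at, given what its threads requested."""
    if per_core_pstates:
        return list(requested_mhz)              # each core keeps its own request
    highest = max(requested_mhz)
    return [highest] * len(requested_mhz)       # package follows the highest request


def llc_accepts_eviction(line_is_dead: bool, dead_line_alloc: bool, llc_has_space: bool) -> bool:
    """Decide whether a line evicted from the MLC is filled into the LLC."""
    if not line_is_dead:
        return True            # live lines are filled as usual
    if not dead_line_alloc:
        return False           # Disabled: dead lines never fill the LLC
    return llc_has_space       # Enabled: fill opportunistically if space exists


print(numa_domains(sockets=2, snc_clusters_per_socket=4))
print(resolved_frequencies([2000, 3100, 2400], per_core_pstates=False))
print(llc_accepts_eviction(line_is_dead=True, dead_line_alloc=True, llc_has_space=False))
```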