Launch Flexible Machine Learning Models Quickly with cnvrg.io® on Red Hat OpenShift

Wed, 17 Jan 2024 14:11:31 -0000

Summary

Data scientists hold a high degree of responsibility to support the decision-making process of companies and their strategies. To this end, data scientists extract insights from a large amount of heterogeneous data through a set of iterative tasks that include various aspects: cleaning and formatting the data available to them, building training and testing datasets, mining data for patterns, deciding on the type of data analysis to apply and the ML methods to use, evaluating and interpreting the results, refining ML algorithms, and possibly even managing infrastructure. To ensure that data scientists can deliver the most impactful insights for their companies efficiently and effectively, cnvrg.io provides a unified platform to operationalize the full machine learning (ML) lifecycle from research to production.

As the leading data-science platform for ML model operationalization (MLOps) and management, cnvrg.io is a pioneer in building cutting-edge ML development solutions that provide data scientists with all the tools they need in one place to streamline their processes. In addition, by deploying MLOps on Red Hat OpenShift, data scientists can launch flexible, container-based jobs and pipelines that can easily scale to deliver better efficiency in terms of compute resource utilization and cost. Infrastructure teams can also manage and monitor ML workloads in a single managed and cloud-native environment. For infrastructure architects who are deploying cnvrg.io on Dell PowerEdge servers and Intel® components, this document provides recommended hardware bill of materials (BoM) configurations to help get them started.

Key considerations

Key considerations for using the recommended hardware BoMs for deploying cnvrg.io on Red Hat OpenShift include:

- Provision external storage. When deploying cnvrg.io on Red Hat OpenShift, local storage is used only for container images and ephemeral volumes. External persistent storage volumes should be provisioned on a storage array or on another solution that you already have in place. If you do not already have a persistent storage solution, contact your Dell Technologies representative for guidance.

- Use high-performance object storage. The hardware BoMs below assume that you use an in-cluster solution based on MinIO for object storage. The number of drives and the capacity for MinIO object storage depend on the dataset size and performance requirements. An alternative is an external S3-compatible object store such as Elastic Cloud Storage (ECS) or Dell PowerScale (Isilon), powered by high-capacity Solidigm SSDs.

- Scale object storage independently. Object storage capacity can be scaled independently of worker nodes by deploying additional storage nodes. Both high-performance (with NVM Express [NVMe] Solidigm solid-state drives [SSDs]) and high-capacity (with rotational hard-disk drives [HDDs]) configurations can be used. All nodes using NVMe drives should be configured with 100 Gbps network interface controllers (NICs) to take full advantage of the drives’ I/O throughput.
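
The NIC recommendation in the last consideration can be sanity-checked with simple unit arithmetic. The sketch below converts a NIC line rate into bytes per second and estimates how many drives it takes to fill the link. The per-drive throughput is a hypothetical placeholder, not a value from this document; substitute the sequential throughput from your drive's data sheet. Protocol overhead is ignored.

```python
import math


def nic_gbytes_per_s(line_rate_gbps: float, ports: int = 1) -> float:
    """Theoretical NIC bandwidth in GB/s (8 bits per byte, no protocol overhead)."""
    return line_rate_gbps * ports / 8


def drives_to_saturate(line_rate_gbps: float, drive_gbytes_per_s: float, ports: int = 1) -> int:
    """Smallest number of drives whose combined throughput meets or exceeds the NIC bandwidth."""
    return math.ceil(nic_gbytes_per_s(line_rate_gbps, ports) / drive_gbytes_per_s)


# Hypothetical per-drive sequential read throughput (assumption; check the data sheet).
ASSUMED_DRIVE_GBYTES_PER_S = 5.0

for rate in (10, 25, 100):
    print(
        f"{rate} Gb port: {nic_gbytes_per_s(rate):.2f} GB/s, "
        f"filled by {drives_to_saturate(rate, ASSUMED_DRIVE_GBYTES_PER_S)} drive(s)"
    )
```

Under this assumption a single NVMe drive can already exceed what a 10 Gb or 25 Gb port carries, which is consistent with the guidance to pair NVMe nodes with 100 Gb NICs.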

Recommended configurations

Controller nodes (3 nodes required) and worker nodes

Table 1. PowerEdge R660-based, up to 10 NVMe drives, 1RU

Feature | Control-Plane (Master) Nodes | ML/Artificial Intelligence (AI) CPU Cluster (Worker) Nodes, Base configuration | ML/Artificial Intelligence (AI) CPU Cluster (Worker) Nodes, Plus configuration |

Platform | Dell R660 supporting 10 x 2.5” drives with NVMe backplane - direct connection | ||

CPU | 2x Xeon® Gold 6426Y (16c @ 2.5GHz) | 2x Xeon® Gold 6448Y (32c @ 2.1GHz) | 2x Xeon® Platinum 8468 (48c @ 2.1GHz) |

DRAM | 128GB (8x 16GB DDR5-4800) | 256GB (16x 16GB DDR5-4800) | 512GB (16x 32GB DDR5-4800) |

Boot device | Dell BOSS-N1 with 2x 480GB M.2 NVMe SSD (RAID1) | ||

Storage[1] | 1x 1.6TB Solidigm[2] D7-P5620 SSD (PCIe Gen4, Mixed-use) | 2x 1.6TB Solidigm[2] D7-P5620 SSD (PCIe Gen4, Mixed-use) | |

Object storage[3] | N/A | 4x (up to 10x) 1.92TB, 3.84TB or 7.68TB Solidigm D7-P5520 SSD (PCIe Gen4, Read-Intensive) | |

Shared storage[4] | N/A | External | |

NIC[5] | Intel® X710-T4L for OCP3 (Quad-port 10Gb) | Intel® X710-T4L for OCP3 (Quad-port 10Gb), or Intel® E810-CQDA2 PCIe add-on card (dual-port 100Gb) | |

Additional NIC for external storage[6] | N/A | Intel® X710-T4L for OCP3 (Quad-port 10Gb), or Intel® E810-CQDA2 PCIe add-on card (dual-port 100Gb) | |

Optional – Dedicated storage nodes

Table 2. PowerEdge R660-based, up to 10 NVMe drives or 12 SAS drives, 1RU

Feature | Description | |

Node type | High performance | High capacity |

Platform | Dell R660 supporting 10x 2.5” drives with NVMe backplane | Dell R760 supporting 12x 3.5” drives with SAS/SATA backplane |

CPU | 2x Xeon® Gold 6442Y (24c @ 2.6GHz) | 2x Xeon® Gold 6426Y (16c @ 2.5GHz) |

DRAM | 128GB (8x 16GB DDR5-4800) | |

Storage controller | None | HBA355e adapter |

Boot device | Dell BOSS-N1 with 2x 480GB M.2 NVMe SSD (RAID1) | |

Object storage[3] | up to 10x 1.92TB / 3.84TB / 7.68TB Solidigm D7-P5520 SSD (PCIe Gen4, Read-Intensive) | up to 12x 8TB/16TB/22TB 3.5in 12Gbps SAS HDD 7.2k RPM |

NIC[5] | Intel® E810-CQDA2 PCIe add-on card (dual-port 100Gb) | Intel® E810-XXV for OCP3 (dual-port 25Gb) |

Learn more

Contact your Dell or Intel account team for a customized quote at 1-877-289-3355.

[1] Local storage used only for container images and ephemeral volumes; persistent volumes should be provisioned on an external storage system.

[2] Formerly Intel

[3] The number of drives and capacity for MinIO object storage depends on the dataset size and performance requirements.

[4] External shared storage required for Kubernetes persistent volumes.

[5] 100 Gb NICs are recommended for higher throughput.

[6] Optional, required only if a dedicated storage network for external storage system is necessary.

Related Documents

Deploy Machine Learning Models Quickly with cnvrg.io and VMware Tanzu

Wed, 13 Dec 2023 21:09:16 -0000

Summary

Data scientists and developers use cnvrg.io to quickly deploy machine learning (ML) models to production. For infrastructure teams interested in enabling cnvrg.io on VMware Tanzu, this article contains a recommended hardware bill of materials (BoM). Data scientists will appreciate the performance boost that they can experience using Dell PowerEdge servers with Intel Xeon Scalable Processors as they wrangle big data to uncover hidden patterns, correlations, and market trends. Containers are a quick and effective way to deploy MLOps solutions built with cnvrg.io, and IT teams are turning to VMware Tanzu to create them. Tanzu enables IT admins to curate security-enabled container images that are grab-and-go for data scientists and developers, to speed development and delivery.

Market positioning

Too many AI projects take too long to deliver value. What gets in the way? Drudgery from low-level tasks that should be automated: managing compute, storage, and software; managing Kubernetes pods; sequencing jobs; and monitoring experiments, models, and resources. AI development requires data scientists to perform many experiments that require adjusting a variety of optimizations, and then preparing models for deployment. There is no time to waste on tasks already automated by MLOps platforms.

Cnvrg.io provides a platform for MLOps that streamlines the model lifecycle through data ingestion, training, testing, deployment, monitoring, and continuous updating. The cnvrg.io Kubernetes operator deploys with VMware Tanzu to seamlessly manage pods and schedule containers. With cnvrg.io, AI developers can create entire AI pipelines with a few commands, or with a drag-and-drop visual canvas. The result? AI developers can deploy continuously updated models faster, for a better return on AI investments.

Key considerations

- Intel Xeon Scalable Processors – The 4th Generation Intel Xeon Scalable processor family features the most built-in accelerators of any CPU on the market for AI, databases, analytics, networking, storage, crypto, and data compression workloads.

- Memory throughput – Dell PowerEdge servers with Intel 4th Gen Xeon Scalable Processors provide enhanced memory performance by supporting eight channels of DDR5 memory modules per socket, with speeds of up to 4800MT/s with 1 DIMM per channel (1DPC) or up to 4400MT/s with 2 DIMMs per channel (2DPC). Dell PowerEdge servers using DDR5 support higher-capacity memory modules, consume less power, and offer up to 1.5x bandwidth compared to previous generation platforms that use DDR4.

- Higher performance for intensive ML applications – Dell PowerEdge R760 servers support up to 24 x 2.5” NVM Express (NVMe) drives with an NVMe backplane. NVMe drives enable VMware vSAN, which runs under VMware Tanzu, to meet the high-performance requirements of ML workloads, in terms of both throughput and latency metrics.

- Storage architecture – vSAN’s Original Storage Architecture (OSA) is a legacy 2-tier model using high throughput storage drives for a caching tier, and a capacity tier composed of high-capacity drives. In contrast, the Express Storage Architecture (ESA) is an alternative design introduced in vSAN 8.0 that features a single-tier model designed to take full advantage of modern NVMe drives.

- Scale object-storage capacity – Deploy additional storage nodes to scale object-store capacity independently of worker nodes. Both high-performance (with NVMe solid-state drives [SSDs]) and high-capacity (with rotational hard-disk drives [HDDs]) configurations can be used. All nodes using NVMe drives should be configured with 100 Gb network interface controllers (NICs) to take full advantage of the drives’ data transfer rates.
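
The memory throughput figures in the considerations above can be checked with back-of-the-envelope arithmetic. The sketch below computes theoretical peak bandwidth per socket as channels × transfer rate × 8 bytes per transfer (a 64-bit data path per channel). The eight-channel DDR4-3200 baseline for the previous generation is an assumption, not a value stated in this document.

```python
def peak_bandwidth_gbytes_per_s(channels: int, mega_transfers_per_s: int,
                                bytes_per_transfer: int = 8) -> float:
    """Theoretical peak memory bandwidth per socket in GB/s."""
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


ddr5_1dpc = peak_bandwidth_gbytes_per_s(8, 4800)  # one DIMM per channel
ddr5_2dpc = peak_bandwidth_gbytes_per_s(8, 4400)  # two DIMMs per channel
ddr4_prev = peak_bandwidth_gbytes_per_s(8, 3200)  # assumed previous-generation baseline

print(f"DDR5-4800, 1DPC: {ddr5_1dpc:.1f} GB/s per socket")
print(f"DDR5-4400, 2DPC: {ddr5_2dpc:.1f} GB/s per socket")
print(f"DDR4-3200 (assumed): {ddr4_prev:.1f} GB/s per socket")
print(f"DDR5 1DPC vs assumed DDR4 baseline: {ddr5_1dpc / ddr4_prev:.2f}x")
```

With that baseline, the 1DPC ratio works out to the "up to 1.5x" figure quoted above. These are theoretical peaks; sustained bandwidth in practice is lower.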

Recommended configurations

Worker Nodes (minimum four nodes required, up to 64 nodes per cluster)

Table 1. PowerEdge R760-based, up to 16 NVMe drives, 2RU

Feature | Description | |

Platform | Dell R760 supporting 16x 2.5” drives with NVMe backplane - direct connection | |

CPU | Base configuration: 2x Xeon Gold 6448Y (32c @ 2.1GHz), or Plus configuration: 2x Xeon Platinum 8468 (48c @ 2.1GHz) | |

vSAN Storage Architecture | OSA | ESA |

DRAM | 256GB (16x 16GB DDR5-4800) | 512GB (16x 32GB DDR5-4800) |

Boot device | Dell BOSS-N1 with 2x 480GB M.2 NVMe SSD (RAID1) | |

vSAN Cache Tier [1] | 2x 1.92TB Solidigm D7-P5520 SSD (PCIe Gen4, Read-Intensive) | N/A |

vSAN Capacity Tier[1] | 6x 1.92TB Solidigm D7-P5620 SSD (PCIe Gen4, Mixed Use) | |

Object storage[1] | 4x (up to 10x) 1.92TB, 3.84TB or 7.68TB Solidigm D7-P5520 SSD (PCIe Gen4, Read-Intensive) | |

NIC[2] | Intel E810-XXV for OCP3 (dual-port 25Gb), or Intel E810-CQDA2 PCIe add-on card (dual-port 100Gb) | |

Additional NIC[3] | Intel E810-XXV for OCP3 (dual-port 25Gb), or Intel E810-CQDA2 PCIe add-on card (dual-port 100Gb) | |

Optional – Dedicated storage nodes

Table 2. PowerEdge R660-based, up to 10 NVMe drives or 12 SAS drives, 1RU

Feature | Description | |

Node type | High performance | High capacity |

Platform | Dell R660 supporting 10x 2.5” drives with NVMe backplane | Dell R760 supporting 12x 3.5” drives with SAS/SATA backplane |

CPU | 2x Xeon Gold 6442Y (24c @ 2.6GHz) | 2x Xeon Gold 6426Y (16c @ 2.5GHz) |

DRAM | 128GB (16x 8GB DDR5-4800) | |

Storage controller | None | HBA355e adapter |

Boot device | Dell BOSS-N1 with 2x 480GB M.2 NVMe SSD (RAID1) | |

Object storage[1] | up to 10x 1.92TB / 3.84TB / 7.68TB Solidigm D7-P5520 SSD (PCIe Gen4, Read-Intensive) | up to 12x 8TB/16TB/22TB 3.5in 12Gbps SAS HDD 7.2k RPM |

NIC[2] | Intel E810-CQDA2 PCIe add-on card (dual-port 100Gb) | Intel E810-XXV for OCP3 (dual-port 25Gb) |

Learn more

Deploy ML models quickly with cnvrg.io and VMware Tanzu. Contact your Dell or Intel account team for a customized quote, at 1-877-289-3355.

[1] Number of drives and capacity for MinIO object storage depends on the dataset size and performance requirements.

[2] 100Gbps NICs recommended for higher throughput.

[3] Optional – required only if dedicated storage network for external storage system is necessary.

Improve performance by easily migrating to a modern OpenShift environment on PowerEdge R7615 servers

Tue, 14 May 2024 20:15:19 -0000

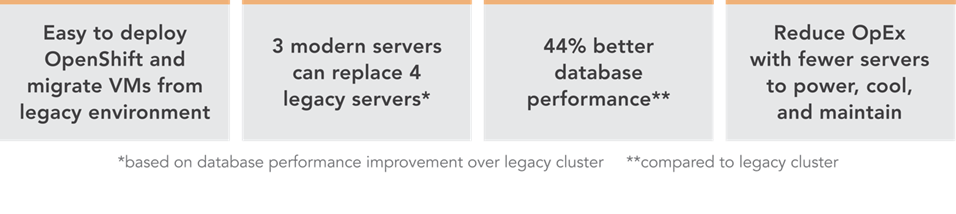

Improve performance and gain room to grow by easily migrating to a modern OpenShift environment on Dell PowerEdge R7615 servers with 4th Generation AMD EPYC processors and high-speed 100GbE Broadcom NICs

We deployed this modern environment, then migrated database VMs from legacy servers and saw performance improvements that support consolidation.

Transactional databases are the backbone of many business operations, powering ecommerce and order fulfillment, human resources and payroll, and a host of other activities. If your company is running these kinds of workloads on server infrastructure that is several years old, you might believe that performance is adequate and that you have little reason to consider upgrading to new servers with modern processors, networking, and a Red Hat® OpenShift® container-based environment. In fact, by continuing to use this older gear, you could be incurring higher than necessary operating expenditures by maintaining and powering more servers than you need to perform a given volume of work. You could also be risking downtime with aging hardware that is likelier to break down. By upgrading to a modern environment, you could mitigate these issues and future-proof your infrastructure. A 2019 Forrester Consulting report recommended that organizations refresh their servers at least every three years to maximize agility and productivity.[1] The report states not only that modern servers allow organizations to adopt more emerging technologies at a faster rate, but also “modern hardware has a profound impact on business benefits such as better customer experience, employee productivity, and innovation.”[2]

We explored the process of migrating VMs from a legacy environment and conducted testing to quantify the resulting improvements in network and database performance. We started with a legacy environment consisting of MySQL™ virtual machines (VMs) running on a cluster of three Dell™ PowerEdge™ R7515 servers with 3rd Generation AMD EPYC™ processors and 25Gb Broadcom® NICs. We then deployed a modern OpenShift container-based environment comprising three Dell PowerEdge R7615 servers with 4th Generation AMD EPYC processors and high-speed 100Gb Broadcom NICs. While the primary application of OpenShift is typically for containerized workloads, we used OpenShift Virtualization, which presents a familiar VM layer to administrators while utilizing the containerized technology on the underlying layer. Both environments used a Dell PowerStore 1200T for external storage that the servers accessed using iSCSI. We measured database performance using the HammerDB TPROC-C benchmark.

We found that the modern cluster environment of Dell PowerEdge R7615 servers with 4th Generation AMD EPYC processors and high-speed 100Gb Broadcom NICs outperformed the legacy cluster environment, delivering 44 percent greater database performance. These improvements mean that companies that upgrade can enjoy savings by meeting their workload requirements with fewer servers to license, maintain, power, and cool. Selecting 100Gb Broadcom NICs also positions companies well to take advantage of increasingly popular network-intensive technologies such as artificial intelligence (AI).

The benefits of containerization and Red Hat OpenShift Virtualization

Many organizations choose containers for DevOps due to their easy scalability and portability. Because a container encapsulates an application as well as everything necessary to run that application, it’s simple to move the container from development to test and production environments, adding instances of the application by replicating the container. Containers can also be useful for microservices, data streaming, and other use cases.[3]

Containers aren’t necessarily ideal for every use case, however, and for some infrastructures, IT teams may wish to incorporate both containers and VMs. Red Hat OpenShift Virtualization, which we used in our testing, enables organizations to run both VMs and containers on the same platform by bringing VMs into containers.[4] This lets IT reap the benefits of both containers and VMs with the efficiency benefit of relying on one management tool, rather than having to maintain two distinct infrastructures.

About our testing

We explored the process of deploying a modern data center environment and migrating VMs to it from a legacy environment. We also measured the database performance the VMs achieved in both environments:

Legacy environment

- Three Dell PowerEdge R7515 servers with 3rd Generation AMD EPYC 7663 56-core processors and Broadcom Advanced Dual 25Gb Ethernet NICs

- External storage using Dell PowerStore 1200T over iSCSI

- VMware® vSphere® 8

Modern environment

- Three Dell PowerEdge R7615 servers with 4th Generation AMD EPYC 9554 64-core processors and Broadcom NetXtreme-E BCM57508 100Gb NICs

- External storage using Dell PowerStore 1200T over iSCSI

- Red Hat OpenShift 4.14

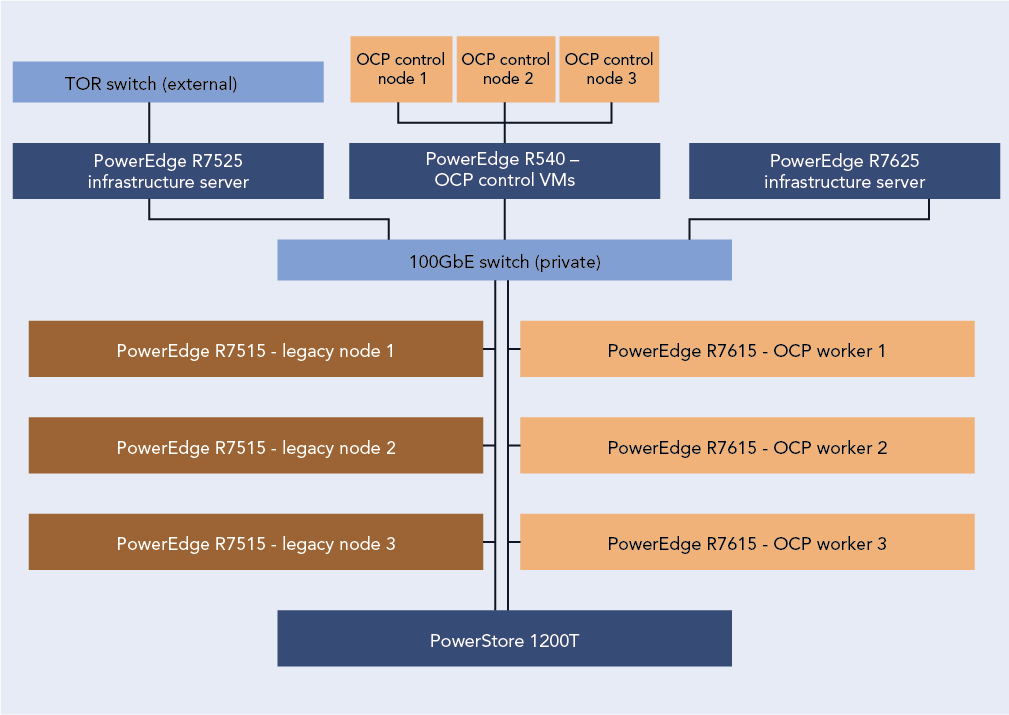

Figure 1 presents a diagram of our test configuration. In addition to our test server clusters, we needed three servers to host infrastructure VMs, workload client VMs, and the OpenShift control node VMs. We configured a Dell PowerEdge R7525 to serve as the host for our infrastructure VMs for services such as AD, DHCP, and DNS, as well as HammerDB client VMs. We also configured a Dell PowerEdge R7625 to host additional HammerDB client VMs. For the OpenShift environment, we deployed a Dell PowerEdge R540 to host the OCP control nodes. We virtualized the control nodes to reduce the number of servers needed for the test bed.

Figure 1: Our test configuration. Source: Principled Technologies.

To test the MySQL database performance of each environment, we used the TPROC-C workload from the HammerDB benchmark. HammerDB developers derived their OLTP workload from the TPC-C benchmark specifications; however, as this is not a full implementation of the official TPC-C standards, the results in this paper are not directly comparable to published TPC-C results. For more information, please visit https://www.hammerdb.com/docs/ch03s01.html.

Each VM had a single MySQL instance with a TPROC-C database. We targeted the maximum transactions per minute (TPM) each environment could achieve by increasing the user count until performance degraded.
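
The ramp-up approach described above can be sketched as a simple loop. This is a minimal illustration, not the harness used in the testing: the function name, the "stop at the first drop" rule, and the sample numbers are all hypothetical. A real benchmark run is noisy, so a practical version would likely repeat measurements or allow a tolerance before declaring degradation.

```python
from typing import Callable, Iterable, Tuple


def find_peak_tpm(measure_tpm: Callable[[int], float],
                  user_counts: Iterable[int]) -> Tuple[int, float]:
    """Step through increasing user counts and stop at the first drop in TPM."""
    best_users, best_tpm = 0, 0.0
    for users in user_counts:
        tpm = measure_tpm(users)
        if tpm < best_tpm:
            break  # performance degraded; keep the previous best
        best_users, best_tpm = users, tpm
    return best_users, best_tpm


# Stand-in for real benchmark runs (hypothetical numbers, for illustration only).
fake_results = {8: 1.0e6, 16: 1.8e6, 32: 2.2e6, 64: 2.0e6, 128: 1.5e6}

peak = find_peak_tpm(fake_results.__getitem__, sorted(fake_results))
print(peak)
```

In practice `measure_tpm` would launch a benchmark run at the given user count and return the reported transactions per minute.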

What we learned

Finding 1: Deploying OpenShift in the modern environment was easy

For our environment, the OpenShift installation process using the Red Hat Assisted Installer to install an OpenShift Installer-Provisioned Cluster was straightforward. We started by setting up the prerequisites for the environment, which included a VM for Active Directory, DNS, and DHCP. We created a domain for our private network and added the API and ingress routes as DNS A records. Next, we set up a VM as a router so that our OpenShift environment could access the internet from our private network. Finally, we created three blank VMs to serve as our OpenShift controller nodes. Once we had met the prerequisites, we logged into the Red Hat Hybrid Console and navigated to the Assisted Installer to create the cluster.
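
As an illustration of the DNS prerequisite, the sketch below generates the two A records for the API and ingress endpoints, following the `api.<cluster>.<domain>` and `*.apps.<cluster>.<domain>` naming convention used by OpenShift. The cluster name, base domain, and IP addresses are hypothetical placeholders (the addresses come from a range reserved for documentation), not values from the test bed.

```python
from typing import List, Tuple


def openshift_dns_records(cluster_name: str, base_domain: str,
                          api_ip: str, ingress_ip: str) -> List[Tuple[str, str, str]]:
    """Return (name, type, value) tuples for the API and wildcard ingress records."""
    zone = f"{cluster_name}.{base_domain}"
    return [
        (f"api.{zone}", "A", api_ip),         # Kubernetes API endpoint
        (f"*.apps.{zone}", "A", ingress_ip),  # wildcard for application routes
    ]


# Hypothetical values for illustration only.
records = openshift_dns_records("ocp", "example.test", "192.0.2.10", "192.0.2.11")
for name, rtype, value in records:
    print(f"{name}. IN {rtype} {value}")
```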

The Assisted Installer streamlined the process by walking us through configuration menus for storage, network, and access to the cluster. We started the cluster creation by assigning it a name, providing the domain, and selecting an OpenShift version. From there the installer guided us through the process of providing an installer image using the SSH public key of the server running the installer. After downloading the ISO, we booted each of the controller and worker nodes into the image and the Assisted Installer discovered each node. After discovering the controller and worker nodes, the installer walked us through the rest of the configuration process and then began the installation. The Assisted Installer made the process very simple with only six configuration tabs to advance through, and with our total install time after configuration taking around three hours. Once the installation was complete, each node rebooted into the OpenShift OS and the Assisted Installer provided us with a cluster console fully qualified domain name (FQDN) to connect to and manage the cluster from. For detailed steps on the OpenShift deployment process, see the science behind the report.

Finding 2: Migrating VMs from the legacy VMware environment to the modern OpenShift environment was easy

Migrating a VM from the VMware environment to OpenShift was also a straightforward process and quick to set up. While the actual migration time will vary depending on VM size and hardware speed, the setup consists of only a few steps and took us less than 10 minutes. We first installed the Migration Toolkit for Virtualization from the OpenShift OperatorHub. We then entered the IP address and credentials for the vCenter as a new provider. Next, we created a NetworkMap and a StorageMap to connect the respective resources between the environments. We then created a new migration plan to map the VMs to a namespace in OCP. We ran the migration plan on a single VM, and confirmed that we were able to enter the VM console once the migration was complete. For detailed steps on the process of migrating VMs from the legacy environment to the modern environment, see the science behind the report.
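
For readers who prefer manifests to console clicks, the migration plan step can be approximated as a custom resource. The sketch below builds a `Plan` object as a Python dictionary and prints it as JSON. The field layout reflects our understanding of the `forklift.konveyor.io/v1beta1` API used by the Migration Toolkit for Virtualization and should be verified against the documentation for your installed version; every resource name, the `openshift-mtv` namespace, and the `host` destination provider are assumptions for illustration, not values from the testing.

```python
import json
from typing import Dict, List


def migration_plan(name: str, namespace: str, source_provider: str,
                   network_map: str, storage_map: str,
                   target_namespace: str, vm_names: List[str],
                   destination_provider: str = "host") -> Dict:
    """Build a Plan manifest that ties providers, maps, and VMs together."""
    def ref(resource_name: str) -> Dict[str, str]:
        # Reference to another resource in the same namespace.
        return {"name": resource_name, "namespace": namespace}

    return {
        "apiVersion": "forklift.konveyor.io/v1beta1",  # assumed API group/version
        "kind": "Plan",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "provider": {
                "source": ref(source_provider),
                "destination": ref(destination_provider),
            },
            "map": {"network": ref(network_map), "storage": ref(storage_map)},
            "targetNamespace": target_namespace,
            "vms": [{"name": vm} for vm in vm_names],
        },
    }


# All names below are hypothetical.
plan = migration_plan(
    name="mysql-vm-plan",
    namespace="openshift-mtv",
    source_provider="legacy-vcenter",
    network_map="legacy-to-ocp-network",
    storage_map="legacy-to-ocp-storage",
    target_namespace="databases",
    vm_names=["mysql-vm-01"],
)
print(json.dumps(plan, indent=2))
```

The printed JSON could be saved to a file and applied with standard cluster tooling once the provider, NetworkMap, and StorageMap it references exist.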

About 4th Gen AMD EPYC 9554 processors

According to AMD, EPYC 9554 processors deliver fast performance “for cloud, enterprise, and HPC workloads—helping accelerate your business.”[5] EPYC processors include AMD Infinity Guard, which per AMD is “a set of layered, cutting-edge security features that help you protect sensitive data and avoid the costly downtime caused by security breaches.”[6]

In addition to performance and security features, AMD claims their processors are energy-efficient, which can reduce energy costs and “minimize environmental impacts from data center operations while advancing your company’s sustainability objectives.”[7]

When comparing SPEC CPU Floating Point peak rates and the default thermal design power (TDP) of the AMD EPYC 9554 and the AMD EPYC 7663, the 9554 has 54 percent better performance per watt, which demonstrates the improved power efficiency of the new 4th Gen AMD EPYC processor.[8],[9]

For more information about 4th Gen AMD EPYC processors visit: https://www.amd.com/en/processors/epyc-server-cpu-family.

Finding 3: Database performance improved by 44 percent in the new environment

Figure 2 shows the results of our database performance testing using the TPROC-C workload from the HammerDB benchmark suite. The modern OpenShift cluster of Dell PowerEdge R7615 servers outperformed the legacy cluster by 44 percent. This extra capability could benefit companies upgrading to the new environment in several ways. The company could provide a better user experience, perform more work—or support more users—with a given number of servers, or reduce the number of servers necessary to execute a given workload.

Figure 2: Performance in transactions per minute using the TPROC-C workload of the HammerDB benchmark suite. Higher is better. Source: Principled Technologies.

Finding 4: Performance improved in the modern cluster, supporting consolidation, which leads to savings

Based on the results of our performance tests (see Figure 3), a company could consolidate the database workloads of a four-node Dell PowerEdge R7515 cluster with some additional headroom into three modern Dell PowerEdge R7615 servers with 4th Generation AMD EPYC processors and high-speed 100Gb Broadcom NICs.

The cluster of three modern servers delivered a total of 9,674,180 transactions per minute (3,224,726 TPM per server). The cluster of three legacy servers delivered a total of 6,714,712 TPM (2,238,237 per server). Based on these results, four legacy servers would achieve a total of 8,952,948 TPM, which would leave 721,231 additional TPM room for growth on the modern three-node cluster.
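
The consolidation arithmetic above can be reproduced in a few lines. The sketch below uses only the TPM totals reported in this section; like the text, it assumes that legacy performance scales linearly from three servers to four. The function and key names are illustrative.

```python
from typing import Dict


def consolidation_summary(modern_total_tpm: int, legacy_total_tpm: int,
                          nodes_tested: int = 3,
                          legacy_nodes_to_replace: int = 4) -> Dict[str, float]:
    """Derive per-server TPM, percentage gain, and headroom after consolidation."""
    modern_per_server = modern_total_tpm / nodes_tested
    legacy_per_server = legacy_total_tpm / nodes_tested
    # Linear-scaling projection for a larger legacy cluster.
    projected_legacy_tpm = legacy_per_server * legacy_nodes_to_replace
    return {
        "modern_per_server": modern_per_server,
        "legacy_per_server": legacy_per_server,
        "gain_percent": (modern_total_tpm / legacy_total_tpm - 1) * 100,
        "projected_legacy_tpm": projected_legacy_tpm,
        "headroom_tpm": modern_total_tpm - projected_legacy_tpm,
    }


summary = consolidation_summary(9_674_180, 6_714_712)
for key, value in summary.items():
    print(f"{key}: {value:,.0f}")
```

Individual values can differ by one TPM from the figures in the text, depending on whether per-server results are rounded before or after multiplying.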

Reducing the number of servers you need means that operational expenditures such as data center power and cooling and administrator time for maintenance also decrease, leading to ongoing savings.

Figure 3: Performance in transactions per minute that three modern servers and four legacy servers could achieve, based on our hands-on testing. Higher is better. Source: Principled Technologies.

About Dell PowerEdge R7615 servers

The Dell PowerEdge R7615 is a 2U, single-socket rack server. Dell states that it has designed this server to provide “performance and flexible, low-latency storage options in an air or Direct Liquid Cooling (DLC) configuration.”[10]

According to Dell, this server uses the AMD EPYC 4th generation processor to deliver up to 50 percent higher core count per single-socket platform in an innovative air-cooled chassis.[11] It also supports DDR5 memory at 4800 MT/s and PCIe® Gen5, which offers double the speed of the previous Gen4, for faster access and transport of data, optimizing application output.[12] It supports up to six single-wide full-length GPUs or three double-wide full-length GPUs, to improve responsiveness or reduce app load time for power users, plus lower-latency, high-performance NVMe SSDs to help maximize compute performance.[13]

Learn more at https://www.delltechnologies.com/asset/en-us/products/servers/technical-support/poweredge-r7615-spec-sheet.pdf.

How high-speed 100Gb Broadcom NICs can help your organization

Even if a 25Gb NIC is sufficient to meet a company’s current networking needs, opting to equip new servers with the high-speed 100Gb Broadcom NIC can be a smart move. Future-proofing your network can allow you to meet the increasing demands of emerging technologies.

Advanced technologies such as artificial intelligence and machine learning, which can require the processing and transmission of large amounts of data, are becoming increasingly prevalent across businesses of all sizes. In a June 2023 survey of small business decision-makers, 74 percent were interested in using AI or automation in their business and 55 percent said their interest in these technologies had grown in the first half of 2023.[14] Upgrading to a modern environment with a high-speed 100Gb Broadcom NIC positions companies to take advantage of AI applications for social media, content creation, marketing, customer support, and many other use cases.

Another way that investing in the high-speed 100Gb Broadcom NIC can help your company is through improved efficiency. You might be tempted to go with a 25Gb NIC, thinking that as your networking needs increase, you can simply add more NICs of this size. However, consider a 2023 Principled Technologies study that compared the performance of a server solution with a 100Gb Broadcom 57508 NIC and a solution with four 25Gb NICs.[15] Testing revealed that the 100Gb NIC solution achieved up to 2.3 times the throughput of the solution with 25Gb NICs. It also delivered greater bandwidth consistency, which can translate to providing a better user experience; the report states that applications using the 25Gb NICs network configuration “would experience significant variation in available bandwidth, potentially causing jittery or interrupted service to multiple streams.”[16]

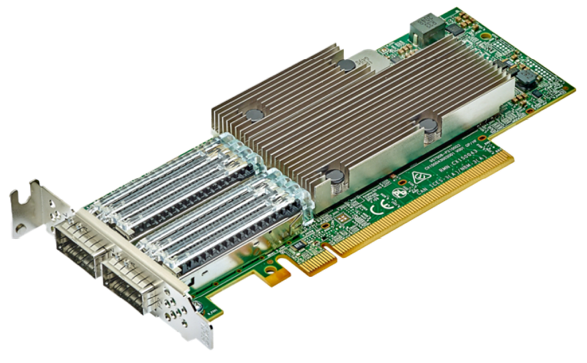

About the Broadcom BCM57508-P2100G Dual-Port 100GbE PCIe 4.0 ethernet controller

A higher-performing NIC can reduce latency, increase throughput, and allow the server to transmit and receive a greater volume of data. The Dell PowerEdge R7615 we tested features the Broadcom BCM57508-P2100G Dual-Port 100GbE PCIe 4.0 ethernet controller, which supports speeds of up to 200 Gigabits per second. Broadcom designed the BCM57508-P2100G “to build highly-scalable, feature-rich networking solutions in servers for enterprise and cloud-scale networking and storage applications, including high-performance computing, telco, machine learning, storage disaggregation, and data analytics.”[17]

The BCM57508-P2100G features BroadSAFE® technology, “to provide unparalleled platform security” and a “unique set of highly-optimized hardware acceleration engines to enhance network performance and improve server efficiency.”[18]

BCM57508-P2100G Dual-Port 100GbE PCIe 4.0 ethernet controller. Image provided by Dell.

Conclusion

If your organization’s transactional databases are running on gear that is several years old, you have much to gain by upgrading to modern servers with new processors and networking components and an OpenShift environment. In our testing, a modern OpenShift environment with a cluster of three Dell PowerEdge R7615 servers with 4th Generation AMD EPYC processors and high-speed 100Gb Broadcom NICs outperformed a legacy environment with MySQL VMs running on a cluster of three Dell PowerEdge R7515 servers with 3rd Generation AMD EPYC processors and 25Gb Broadcom NICs. We also easily migrated a VM from the legacy environment to the modern environment, with only a few steps required to set up and less than ten minutes of hands-on time. The performance advantage of the modern servers would allow a company to reduce the number of servers necessary to perform a given amount of database work, thus lowering operational expenditures such as power and cooling and IT staff time for maintenance. The high-speed 100Gb Broadcom NICs in this solution also give companies better network performance and networking capacity to grow as they embrace emerging technologies such as AI that put great demands on networks.

This project was commissioned by Dell Technologies.

May 2024

Principled Technologies is a registered trademark of Principled Technologies, Inc.

All other product names are the trademarks of their respective owners.

Read the report on the PT site at https://facts.pt/2V6p3FG and see the science at https://facts.pt/Dj53ZJb.

Author: Principled Technologies

[1] Forrester, “Why Faster Refresh Cycles and Modern Infrastructure Management are Critical to Business Success,” accessed May 1, 2024, www.techrepublic.com/resource-library/casestudies/forrester-why-faster-refresh-cycles-and-modern-infrastructure-management-are-critical-to-business-success/.

[2] Forrester, “Why Faster Refresh Cycles and Modern Infrastructure Management are Critical to Business Success,” accessed May 1, 2024, www.techrepublic.com/resource-library/casestudies/forrester-why-faster-refresh-cycles-and-modern-infrastructure-management-are-critical-to-business-success/.

[3] Red Hat, “Understanding containers,” accessed April 12, 2024, https://www.redhat.com/en/topics/containers.

[4] Red Hat, “Red Hat OpenShift Virtualization,” accessed April 12, 2024, https://www.redhat.com/en/technologies/cloud-computing/openshift/virtualization.

[5] AMD, “AMD EPYC Processors,” accessed April 12, 2024, https://www.amd.com/en/processors/epyc-server-cpu-family.

[6] AMD, “AMD EPYC Processors.”

[7] AMD, “AMD EPYC Processors.”

[8] SPEC, “SPEC CPU®2017 Floating Point Rate Result for Dell PowerEdge R6615 (AMD EPYC 9554 64-Core Processor),” accessed May 2, 2024, https://www.spec.org/cpu2017/results/res2024q1/cpu2017-20240212-41481.html.

[9] SPEC, “SPEC CPU®2017 Floating Point Rate Result for Dell PowerEdge R6515 (AMD EPYC 7663 56-Core Processor),” accessed May 2, 2024, https://www.spec.org/cpu2017/results/res2021q3/cpu2017-20210913-29288.html.

[10] Dell, “PowerEdge R7615 Specification Sheet,” accessed April 12, 2024, https://www.delltechnologies.com/asset/en-us/products/servers/technical-support/poweredge-r7615-spec-sheet.pdf.

[11] Dell, “PowerEdge R7615 Specification Sheet.”

[12] Dell, “PowerEdge R7615 Specification Sheet.”

[13] Dell, “PowerEdge R7615 Specification Sheet.”

[14] Constant Contact, “AI Stats and Trends Small Businesses Need to Know Now,” accessed April 12, 2024, https://news.constantcontact.com/small-business-now-ai-2023.

[15] Principled Technologies, “Opt for modern 100Gb Broadcom 57508 NICs in your Dell PowerEdge R750 servers for improved networking performance,” accessed April 12, 2024, https://www.principledtechnologies.com/Dell/PowerEdge-R750-networking-iPerf-1023.pdf.

[16] Principled Technologies, “Opt for modern 100Gb Broadcom 57508 NICs in your Dell PowerEdge R750 servers for improved networking performance,” accessed April 12, 2024, https://www.principledtechnologies.com/Dell/PowerEdge-R750-networking-iPerf-1023.pdf.

[17] Broadcom, “BCM57508 – 200GbE,” accessed April 12, 2024, https://www.broadcom.com/products/ethernet-connectivity/network-adapters/bcm57508-200g-ic.

[18] Broadcom, “BCM57508 – 200GbE.”