Introducing Dell PowerEdge XE9680 with Intel Gaudi 3 AI Accelerator

Mon, 16 Sep 2024 14:59:55 -0000

Summary

Generative AI has emerged as a transformative technology, enabling the creation of new content across various media. The computational demands of these models require robust hardware, which makes accelerators and specialized AI chips indispensable in server environments. Accelerators play a pivotal role in generative AI performance: they significantly improve computational efficiency, reduce training times, and enable the processing of larger datasets and more complex models, making them essential for deploying generative AI at scale. This Direct from Development (DfD) tech note describes the new capabilities of the Dell PowerEdge XE9680 server with the Intel Gaudi 3 AI accelerator and covers the key highlights that set the Intel Gaudi 3 AI accelerator apart from traditional GPU offerings on the market.

Market positioning

The PowerEdge XE9680 with the Intel Gaudi 3 AI accelerator is designed as a new addition to Dell's 8-way dense GPU acceleration portfolio. As AI and machine learning (ML) models continue to grow in size and complexity, the demand for compute, memory, and networking keeps rising. With the Intel Gaudi 3 AI accelerator, the PowerEdge XE9680 is optimized to handle very large AI models while giving customers a choice beyond single-source GPUs. It also offers an alternative to proprietary software and networking, with the ability to scale while containing infrastructure costs. Enterprises seeking an affordable server that can scale to complex AI workloads will benefit most from this offering.

Key Highlights

Estimated performance per dollar

The Intel Gaudi 3 AI accelerator will be offered as an OAM-compliant accelerator card (HL-325L) and as a Universal Baseboard (UBB) (HLB-325). The PowerEdge XE9680 will feature the UBB, which includes eight Intel Gaudi 3 AI accelerator cards.

Table 1. Performance per dollar for the Intel Gaudi 3 AI accelerator vs. a major competitor[1][2][3]

| Comparison | Gaudi 3 UBB vs. major competitor | Performance per dollar |
|---|---|---|
| List Price | 0.66x | N/A |
| Inference Speed | Up to 1.5x faster | 2.3x |
| Training Speed | Up to 1.7x faster | 2.6x |
| Training Time | Up to 1.4x faster | 2.1x |
| Compute Performance[2] | Up to 1.8x better | 2.7x |
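The Performance per Dollar column in the table above appears consistent with dividing each speedup by the 0.66x list-price ratio. The short Python sketch below reproduces the column under that assumption; the formula is inferred from the published numbers, not stated in the cited sources.

```python
# Sketch: reproduce the "Performance per Dollar" column, ASSUMING it is
# computed as (speedup vs. competitor) / (list-price ratio vs. competitor).
PRICE_RATIO = 0.66  # Gaudi 3 UBB list price relative to the competitor (from the table)

# Speedups vs. the competitor, as listed in the table
speedups = {
    "Inference Speed": 1.5,
    "Training Speed": 1.7,
    "Training Time": 1.4,
    "Compute Performance": 1.8,
}


def perf_per_dollar(speedup: float, price_ratio: float = PRICE_RATIO) -> float:
    """Relative performance per dollar: the speedup scaled by the price advantage."""
    return speedup / price_ratio


for metric, speedup in speedups.items():
    # Rounded to one decimal place, matching the table's precision
    print(f"{metric}: {perf_per_dollar(speedup):.1f}x")
```

Running it prints 2.3x, 2.6x, 2.1x, and 2.7x, which match the table, so every row uses the same 0.66x price ratio.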

Intel published benchmarks comparing the Intel Gaudi 3 AI accelerator with its competition across several large language models (LLMs), including Llama 2 7B, Llama 2 13B, Llama 2 70B, Falcon-180B, and GPT-3 175B. In these benchmarks, the Intel Gaudi 3 AI accelerator is up to 1.5x faster on inference and up to 1.7x faster on training workloads.[1]

For compute performance, the Intel Gaudi 3 AI accelerator baseboard is rated at 14.68 petaflops at BF16 precision with no sparsity, compared with 8 petaflops for the competing baseboard, giving it roughly 1.8x the peak compute.[2]
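As a quick sanity check on the peak-compute figures, the sketch below divides the baseboard rating by its eight accelerators to get a per-accelerator figure and computes the baseboard-to-baseboard ratio. It uses only the numbers quoted in the paragraph above; the variable names are illustrative.

```python
# Sketch: derive per-accelerator peak compute and the baseboard ratio
# from the dense (no sparsity) BF16 figures quoted above.
NUM_ACCELERATORS = 8        # Gaudi 3 OAMs on the UBB
GAUDI3_UBB_PFLOPS = 14.68   # Gaudi 3 baseboard rating
COMPETITOR_PFLOPS = 8.0     # competing baseboard rating

# 1 petaflop = 1000 teraflops
per_accelerator_tflops = GAUDI3_UBB_PFLOPS / NUM_ACCELERATORS * 1000
ratio = GAUDI3_UBB_PFLOPS / COMPETITOR_PFLOPS

print(f"Per accelerator: {per_accelerator_tflops:.0f} TFLOPS")
print(f"Baseboard ratio: {ratio:.3f}x")
```

The result is about 1,835 TFLOPS per accelerator and a ratio of about 1.835x, which the text rounds to 1.8x.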

The Intel Gaudi 3 AI accelerator stands out as a more cost-effective choice than competing offerings, thanks to Intel's pricing strategy and the accelerator's resource efficiency. At $125,000 for the eight-accelerator baseboard, the Intel Gaudi 3 AI platform costs about two-thirds of the estimated price of the competing platform.[3]

Figure 1. Dell PowerEdge XE9680 server

Figure 2. Intel Gaudi 3 OAM

Redefining Networking for AI

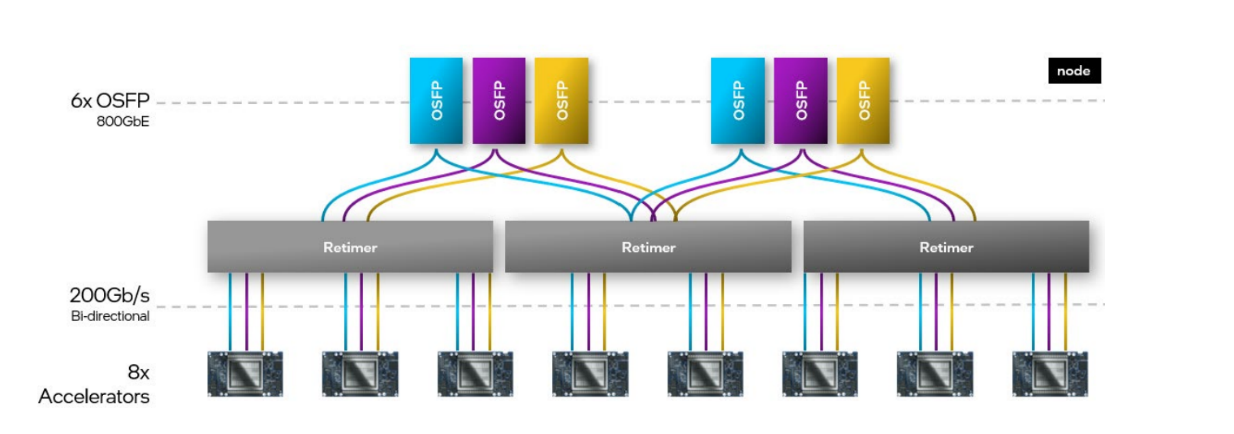

The Intel Gaudi 3 AI accelerator is designed with advanced Ethernet networking capabilities to support large-scale AI workloads. Integrating RDMA over Converged Ethernet directly on the accelerator enables massive, flexible on-chip networking and lets deployments scale from a single node to thousands.

- Each OAM provides 24 x 200 GbE RoCE (RDMA over Converged Ethernet) ports for scale-up and scale-out.[1]

- On the UBB, each OAM card routes 3 of its 200 GbE ports to the server's OSFP scale-out ports, while the remaining 21 x 200 GbE ports are used for OAM-to-OAM connections.
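The per-OAM port split described above can be checked with a few lines of arithmetic. The sketch below assumes, for illustration, that the 21 OAM-to-OAM ports are spread evenly across the other seven OAMs in an all-to-all pattern; the text above does not state the exact wiring.

```python
# Sketch: per-OAM port budget on the UBB.
# ASSUMPTION: OAM-to-OAM ports are divided evenly among all peer OAMs.
PORTS_PER_OAM = 24       # 200 GbE RoCE ports per Gaudi 3 OAM
SCALE_OUT_PORTS = 3      # ports routed to the server's OSFP scale-out ports
PORT_GBPS = 200          # line rate of each port
NUM_OAMS = 8             # OAMs on the UBB

scale_up_ports = PORTS_PER_OAM - SCALE_OUT_PORTS   # ports left for OAM-to-OAM links
peers = NUM_OAMS - 1                               # every other OAM on the board
links_per_peer, leftover = divmod(scale_up_ports, peers)
per_peer_gbps = links_per_peer * PORT_GBPS         # bandwidth to each peer OAM

print(f"{scale_up_ports} scale-up ports, {links_per_peer} per peer "
      f"({per_peer_gbps} Gb/s), {leftover} left over")
```

The 21 ports divide evenly into 3 links (600 Gb/s) per peer with none left over, which is consistent with a symmetric all-to-all layout.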

Figure 3. Intel Gaudi 3 AI Accelerator – Scale-out Cluster Architecture

- Total scale-out throughput is preserved: the 8 OAM cards, each with 3 x 200 GbE scale-out ports (4,800 Gb/s in total), converge onto 6 x 800 GbE OSFP ports (also 4,800 Gb/s) on the XE9680 server.
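The bandwidth balance between the OAM side and the server's OSFP ports is simple arithmetic. The sketch below confirms that both sides total 4,800 Gb/s and shows that, in bandwidth terms, each 800 GbE OSFP port carries the equivalent of four 200 GbE OAM links. It is a capacity check only and does not model how individual links are physically mapped to OSFP cages.

```python
# Sketch: confirm scale-out bandwidth is preserved from the OAMs to the OSFP ports.
NUM_OAMS = 8
SCALE_OUT_PORTS_PER_OAM = 3
OAM_PORT_GBPS = 200
OSFP_PORTS = 6
OSFP_PORT_GBPS = 800

oam_side_gbps = NUM_OAMS * SCALE_OUT_PORTS_PER_OAM * OAM_PORT_GBPS
server_side_gbps = OSFP_PORTS * OSFP_PORT_GBPS

# Total scale-out links divided across the OSFP ports
links_per_osfp = NUM_OAMS * SCALE_OUT_PORTS_PER_OAM // OSFP_PORTS

print(f"OAM side: {oam_side_gbps} Gb/s, OSFP side: {server_side_gbps} Gb/s")
print(f"{links_per_osfp} x {OAM_PORT_GBPS} GbE links per {OSFP_PORT_GBPS} GbE OSFP port")
```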

RoCE Benefits:

- RoCE runs on existing Ethernet infrastructure, which significantly reduces initial setup costs and simplifies maintenance. It does not require specialized networking infrastructure.

- Unlike competing proprietary fabrics such as InfiniBand (IB), which require dedicated IB network interface cards and cables, the Intel Gaudi 3 AI accelerator is compatible with the broader Ethernet ecosystem, providing flexibility and vendor choice.

Availability

The Intel Gaudi 3 AI accelerator, integrated into the Dell PowerEdge XE9680 server, is expected to enter high-volume production in Q3 2024 and will be available soon. With chip shortages delaying deliveries to enterprise customers of all sizes[4], the Intel Gaudi 3 AI accelerator offers a valuable alternative. These systems can also be made available through Intel Tiber Developer Cloud, giving prospective customers the opportunity to test their workloads on Dell's Intel Gaudi 3 AI platform at no charge.

Conclusion

The Intel Gaudi 3 AI accelerator represents a significant advancement in AI computing, offering superior performance per dollar, cost-efficiency, and scalability. With its AI-optimized Ethernet networking and high-speed connectivity, it is well positioned to meet the demands of modern AI workloads. As it enters production in Q3 2024, the Intel Gaudi 3 AI accelerator promises to give enterprises a powerful and flexible solution for their AI needs despite ongoing challenges in the semiconductor market. Its integration into platforms like the PowerEdge XE9680 further underscores its potential to deliver value and choice to customers across industries.

References

[1] Intel Gaudi 3 AI Accelerator White Paper

[2] Stacking Up Intel Gaudi Against Nvidia GPUs For AI

[3] Intel Gaudi Enables a Lower Cost Alternative for AI Compute and GenAI

[4] Nvidia Chip Shortages Leave AI Startups Scrambling for Computing Power

[5] Intel Gaudi 3 vs. Nvidia H100: Advancing Enterprise AI

Authors: Flavio Fomin (Intel), Manya Rastogi (Dell)