Graph DB Use Cases – Put a Tiger in Your Tank

Mon, 06 Feb 2023 18:44:06 -0000


In the NoSQL Database Taxonomy there are four basic categories:

- Key Value

- Wide Column

- Document

- Graph

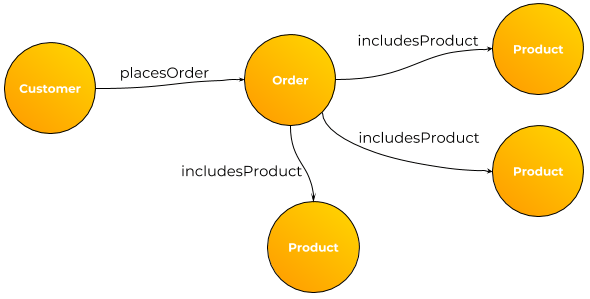

Although Graph is arguably the smallest category by several measures, it is the richest when it comes to use cases. Here is a sampling of what I’ve seen to date:

- Fraud detection

- Feature store for ML/DL

- Customer 360 (C360), though you can do that one in almost any database.

- As an overlay to an ERP application, allowing new attributes to be added without changing the underlying data model or code. For select objects, the keys (primary and alternate) and select attributes populate the graph. The regular APIs are wrapped to check the graph for new attributes. If there are none, the call is passed through unchanged. If there are new attributes, a post-processing module interprets them and takes additional actions based on their content.

- One could use this same technique for many homegrown applications.

- As an integrated database spanning multiple disparate, heterogeneous data stores. I designed this conceptually for a large bank that had data in the likes of Snowflake, Oracle, MySQL, Hadoop and Teradata. The key to success here is not dragging all the data into the graph, but merely the keys and select attributes.

- Recommendation engines

- Configuration management

- Network management

- Transportation problems

- MDM

- Threat detection

- Bad guy databases

- Social networking

- Supply chain

- Telecom

- Call management

- Entity resolution
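The ERP overlay item in the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the graph is stood in for by a plain dictionary keyed on object type and primary key, and `wrap_with_overlay`, `fake_erp_get` and the `risk_tier` attribute are hypothetical names, not part of any ERP or TigerGraph API. A real deployment would replace the dictionary lookup with a query against the graph.

```python
from typing import Any, Callable, Dict, Optional, Tuple

# Hypothetical stand-in for the graph: (object_type, key) -> new attributes.
GraphOverlay = Dict[Tuple[str, str], Dict[str, Any]]
ErpCall = Callable[[str, str], Dict[str, Any]]


def wrap_with_overlay(
    erp_call: ErpCall,
    overlay: GraphOverlay,
    post_process: Optional[Callable[[Dict[str, Any], Dict[str, Any]], None]] = None,
) -> ErpCall:
    """Wrap a regular ERP read API so attributes held in the graph are merged in."""

    def wrapped(object_type: str, key: str) -> Dict[str, Any]:
        record = erp_call(object_type, key)      # the regular API, unchanged
        extra = overlay.get((object_type, key))  # look up new attributes by key
        if not extra:
            return record                        # nothing new: pass the call through
        if post_process is not None:
            post_process(record, extra)          # act on the new attributes
        return {**record, **extra}               # merge new attributes into the result

    return wrapped


def fake_erp_get(object_type: str, key: str) -> Dict[str, Any]:
    """Hypothetical ERP API used only for this illustration."""
    return {"type": object_type, "id": key, "name": "ACME Corp"}


overlay: GraphOverlay = {("customer", "C-100"): {"risk_tier": "high"}}
get_customer = wrap_with_overlay(fake_erp_get, overlay)

print(get_customer("customer", "C-100"))  # includes the new attribute
print(get_customer("customer", "C-200"))  # passed through untouched
```

The point of the design is that neither the ERP data model nor the callers change; only the wrapper knows the graph exists.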

We’re closely partnered with TigerGraph and can cover the above use cases and many more.

If you’d like to hear more and work on solutions to your problem, please drop me an email at Mike.King2@Dell.com

Related Blog Posts

What's a Data Hoarder to Do?

Tue, 10 May 2022 19:18:45 -0000


So you're buried in data, you can't afford to expand, your performance is bad and getting worse, and your users can't find what they need. Yes, a tsunami of data is the root cause of your problems. You ask your Mom for advice and she says "Why don't you watch that TV show called Hoarders?" You watch a few episodes and can relate to the problem, but they offer no workable solutions for your excess data. Then you talk to Mike King over at Dell and he says "That problem has been around since the ENIAC."

The bottom line is that almost all systems are designed to store certain kinds of data for a predetermined amount of time (retention). If you don't have retention rules, then you failed as an architect. The solution for data hoarding is much more recent, evolving over the last 40 years or so. It was first called data archiving, a term still used by some today. The concept is really simple: one takes the data that is no longer needed and removes it from the system of record. If the data is still needed, but much less frequently, it is moved to a cheaper form of storage.

The discipline that evolved around this practice was first called data lifecycle management (DLM) and later information lifecycle management (ILM). ILM considers many more aspects of the archiving process in a more holistic sense, including policies, governance, classification, access, compliance, retention, redaction, privacy, recall, query and more. We won't get into all the ILM stuff in this post.
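The basic archiving rule described above (purge what is past retention, move rarely needed data to cheaper storage, leave the rest alone) can be written as a small Python function. This is a minimal sketch: the function name `classify` and the thresholds of a one-year active window and seven-year retention are assumptions chosen for illustration. Real retention rules come from the business.

```python
from datetime import date


def classify(record_date: date, today: date, active_days: int, retention_days: int) -> str:
    """Return 'active', 'archive' or 'purge' for a record based on its age."""
    age_days = (today - record_date).days
    if age_days > retention_days:
        return "purge"    # past retention: remove from the system of record
    if age_days > active_days:
        return "archive"  # still needed, rarely accessed: cheaper storage
    return "active"       # frequently accessed: stays in the source system


# Illustrative thresholds only (assumptions, not from any policy).
ACTIVE_DAYS = 365
RETENTION_DAYS = 7 * 365
today = date(2022, 5, 10)

for d in (date(2022, 3, 1), date(2019, 1, 1), date(2010, 1, 1)):
    print(d, classify(d, today, ACTIVE_DAYS, RETENTION_DAYS))
```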

Let's take a concrete example to get started. We have a regional bank called Happy Piggy Bank. They do business in 30 states and run ERP applications like Oracle EBS, databases such as Greenplum and SingleStore for analytics, and Hadoop for an integrated data warehouse and AI platform. The EBS db has six years of data and weighs in at a stout 600TB. The Greenplum db is around 1PB and stores just 90 days of data. SingleStore is new, but they have big plans: it's at 200TB today and will grow to 3PB in a year. The Hadoop environment is the largest of all, with detailed transactions and account statements going back 10 years and 10PB of raw data. Only the Greenplum db has a formal purge program that was actually written and put in production. Neither the Hadoop nor the EBS environment has a purge program.

The first order of business is to determine how much data they should or need to retain. This is mostly a business activity. The next step is to determine the access patterns. In order to do data archiving one needs to determine the active portion of the data. In most systems perhaps 99% of the access is constrained to a smaller portion of the retention continuum.

Let's consider that EBS db and its six years of data. We might run some reports and do some analysis, and it's highly likely that 90% of the access is to data less than 6 months old and, let's say, 99% is to data less than 1 year old. In this case we should target the 5 oldest years of retention (83% of the data or 498TB of the db, assuming the data is spread evenly across the six years) to migrate to a more cost-effective platform. In a similar fashion we determine that 60% of the Hadoop data is accessed less than 1% of the time, so that's a 6PB chunk we can lop off of the Hadoop system. So for Happy Piggy Bank we have determined we can remove 6.5PB of data from two of the systems, which will yield the following benefits:

- Room for future growth will be created in the source systems

- Performance should improve in these systems

- Overall data storage costs will go down

- The source systems will be easier to manage

- We will likely avoid increased software licensing charges for Oracle and Hadoop as compared to doing nothing
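The sizing arithmetic for Happy Piggy Bank can be reproduced with a short Python sketch. It rests on two assumptions that the numbers above imply but do not state outright: data volume is spread evenly across the years of retention, and 1PB is treated as 1000TB. The function name `archive_candidate_tb` is made up for this example.

```python
def archive_candidate_tb(total_tb: float, cold_fraction: float) -> float:
    """Amount of data (in TB) that could move to the archive tier."""
    return total_tb * cold_fraction


# EBS: 600TB over six years; keep the newest year, archive the oldest five.
# Assumes volume is spread evenly across years (an assumption).
ebs_tb = archive_candidate_tb(600, 5 / 6)

# Hadoop: 10PB raw, of which 60% is accessed less than 1% of the time.
hadoop_tb = archive_candidate_tb(10_000, 0.60)

total_pb = (ebs_tb + hadoop_tb) / 1000  # using 1PB = 1000TB

print(f"EBS candidate:    {ebs_tb:.0f} TB")
print(f"Hadoop candidate: {hadoop_tb:.0f} TB")
print(f"Total candidate:  {total_pb:.1f} PB")
```

Using the exact fraction 5/6 gives 500TB for EBS rather than the 498TB obtained by rounding to 83% first; either way the total rounds to 6.5PB.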

So you ask, what might the solution be? Enter Versity, a partner of Dell Technologies enabled through our OEM channel. Versity is a full-featured archiving solution that offers:

- High-performance parallel archiving

- Coverage for a wide variety of applications, databases and such

- Storage of data in three successive tiers (local, NAS and object)

- Support for selective recall

The infrastructure includes:

- Versity software

- PowerEdge (PE) 15G servers such as R750s

- PowerVault locally attached arrays

- PowerScale NAS appliances

- ECS object appliances

A future post will cover more details on what this solution could look like for Happy Piggy Bank.

Versity targets customers that have 5PB of data or more that can be archived.

Live Optics is Your Friend

Tue, 05 Oct 2021 19:01:57 -0000


It’s rare to find a free tool that can help profile customer workloads to the mutual benefit of all. Live Optics (previously DPack) is a diamond in the rough that is truly a win-win proposition for customers and vendors such as Dell. I’ve been using it for years, and it’s a rare day that I don’t learn something useful from it.

The tool is like SAR on steroids. Data is collected for each host. Hosts can be VMs. Servers can be from any manufacturer. The data collected covers IOPS (size and amount), memory usage, CPU usage and network activity. The tool can be run in local mode, where the data doesn’t go anywhere else, or the data can be stored in a Dell private cloud. The latter is more beneficial, as the data may then be accessed by folks in many roles for various assessments. The data may also be mined to help Dell make better decisions about current and future products based on actual observed user profiles.

I use Live Optics to profile database workloads such as Greenplum and Vertica, Hadoop, and NoSQL databases like MongoDB, Cassandra, MarkLogic and more.

Upon inspection of the workload, the data collected helps facilitate more meaningful discussions with various SMEs and helps right-size future designs. In one case I found a customer that was using less than half their memory during peak periods, so we suggested new server BOMs with much less memory, as they didn’t need what they had.
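To make the memory example concrete, here is a rough Python sketch of the kind of right-sizing arithmetic involved. Everything in it is an assumption for illustration: the sample values are made up, the 25% headroom and 32GB DIMM size are arbitrary choices, and `suggest_memory_gb` is a hypothetical helper, not something Live Optics provides.

```python
import math


def suggest_memory_gb(peak_used_gb: float, headroom: float = 0.25, dimm_gb: int = 32) -> int:
    """Peak usage plus headroom, rounded up to a whole number of DIMMs."""
    needed_gb = peak_used_gb * (1 + headroom)
    return math.ceil(needed_gb / dimm_gb) * dimm_gb


installed_gb = 768
samples_gb = [210, 260, 340, 305]  # made-up memory-in-use samples for one host

peak_gb = max(samples_gb)
print(f"Peak utilization: {peak_gb / installed_gb:.0%}")
print(f"Suggested memory: {suggest_memory_gb(peak_gb)} GB (installed: {installed_gb} GB)")
```

In practice the headroom figure is a judgment call that depends on growth plans and how representative the collection window was.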

Can we help you with assessing your workloads of interest on our servers or those of our competitors?

Some links of interest

- https://www.delltechnologies.com/en-us/live-optics/index.htm#pdf-overlay=//www.delltechnologies.com/asset/en-us/solutions/business-solutions/briefs-summaries/cloud-live-optics-data-for-it-decisions-ebrochure.pdf

- https://www.liveoptics.com/

- https://www.starwindsoftware.com/resource-library/understand-your-it-environment-with-dell-live-optics/