Accelerating AI for Indic Languages with Dell PowerEdge

Tue, 17 Sep 2024 18:24:18 -0000

Support for multilingual language models has become increasingly important in artificial intelligence, especially in a linguistically diverse country like India. This guide explores how Dell PowerEdge servers, paired with NVIDIA H100 GPUs, provide a robust platform for AI workloads. Combined with Docker for containerization and NVIDIA Triton for inference serving, this platform is well suited to running large-scale AI models, such as those developed for Indic language processing.

Introduction: Powering AI with Dell and NVIDIA for Indic Languages

Dell PowerEdge servers, which are designed for AI and high-performance computing, have proven their capability in deploying sophisticated models that support multiple languages. In one such experiment, a multilingual text generation model, Sarvam-2B from Sarvam AI, was used as a case study. This model, which is designed to generate text in various Indic languages, is a good example of what Dell's infrastructure can do when working with large language models.

While Sarvam-2B’s capabilities were tested in this setup, it is one of many AI models that can benefit from Dell’s powerful computational infrastructure. The synergy between Dell PowerEdge servers and NVIDIA H100 GPUs ensures high performance and efficiency, particularly when dealing with large datasets and multilingual outputs. The forthcoming version of this model will expand its capabilities further, having been trained on an extraordinary 4 trillion tokens, evenly divided between English and Indic languages, thereby enhancing its overall performance and utility.

It is important to note that the sarvam-2b-v0.5 model is a pre-trained text-completion model. It is not instruction fine-tuned or aligned, meaning it cannot answer questions or follow instructions. Its primary functionality is limited to generating text completions based on the input that it receives. The model was tested for its ability to handle multilingual text generation on a Dell PowerEdge R760 platform with NVIDIA H100 GPUs. This test confirmed its efficiency in generating text across various Indic languages, showcasing its versatility and effectiveness in real-world applications.

Configuring the Dell PowerEdge Server

| Component | Version |
| --- | --- |
| Kernel Version | 5.14.0-162.6.1.el9_1 |
| Release Date | Fri Sep 30 07:36:03 EDT 2022 |
| Architecture | x86_64 (64-bit) |
| Operating System | Linux (Red Hat Enterprise Linux) |
| Docker Version | 27.1.1, build 6312585 |
| CUDA Version | 12.4 CUDA version: 6.1 |
| Server | Dell™ PowerEdge™ R760 |
| GPU | 2x NVIDIA H100 PCIe |
| Inference Server | NVIDIA Triton 23.09 (vLLM backend) |
| Model | sarvamai/sarvam-2b-v0.5 |
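To compare another host against the configuration listed above, a short script can gather the same kind of details. The following is a minimal sketch using only the Python standard library. The function names are illustrative and not part of the original setup, and it assumes `docker` and `nvidia-smi` are on the `PATH` when installed. Anything that cannot be found is reported as missing rather than raising an error.

```python
import platform
import shutil
import subprocess


def run_version_command(argv):
    """Return the first output line of a command, or None if it is unavailable."""
    if shutil.which(argv[0]) is None:
        return None
    try:
        result = subprocess.run(argv, capture_output=True, text=True, timeout=10)
    except (OSError, subprocess.TimeoutExpired):
        return None
    lines = result.stdout.strip().splitlines()
    return lines[0] if result.returncode == 0 and lines else None


def collect_environment():
    """Gather host details comparable to the rows of the configuration table."""
    return {
        "kernel": platform.release(),
        "architecture": platform.machine(),
        "operating_system": platform.system(),
        "docker": run_version_command(["docker", "--version"]),
        # With several GPUs installed, only the first one is reported here.
        "gpu": run_version_command(
            ["nvidia-smi", "--query-gpu=name,driver_version", "--format=csv,noheader"]
        ),
    }


for name, value in collect_environment().items():
    print(f"{name}: {value or 'not found'}")
```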

Supporting AI Innovation in India with Dell Infrastructure

India's growing demand for AI-driven applications necessitates an infrastructure that can handle complex, multilingual models. Dell PowerEdge AI-enabled servers, equipped with NVIDIA H100 GPUs, are ideally suited for these tasks.

In our tests, the system efficiently handled AI models like Sarvam-2B, but the same infrastructure can be used for a wide range of AI models beyond this example. This setup offers flexibility and performance, allowing organizations to build, deploy, and scale AI models that cater to India's diverse linguistic landscape. Whether it's for research, industry, or social applications, Dell’s infrastructure provides the backbone for the next wave of AI innovation.

Empowering India's AI Growth with Global Excellence

AI-Ready Infrastructure: Dell's PowerEdge servers with NVIDIA GPUs provide Indian organizations with the tools to deploy advanced AI models, capable of handling large-scale workloads, while also supporting global AI initiatives.

Localized AI Solutions for India: Dell focuses on AI models tailored for India's diverse needs, ensuring global competitiveness while addressing the country’s unique challenges.

Scalable and Efficient AI: Dell's infrastructure enables Indian organizations to build adaptable, resource-efficient AI models that drive innovation across industries.

Data Sovereignty and Security: Dell upholds India’s data privacy regulations, promoting secure and sovereign AI development, reinforcing its leadership in global AI innovation.

Assessing Indic Language Support with Dell PowerEdge: A Case Study with Sarvam AI’s Multilingual Model

Preparing for Deployment - Key Aspects of Sarvam AI’s Tokenizer and Vocabulary

Before deploying the Sarvam AI model, it's essential to understand the following key aspects of its tokenizer and vocabulary setup:

- Tailored Tokenizer: Sarvam AI employs a custom tokenizer designed for Indic languages, adept at handling diverse scripts and complex linguistic structures.

- Advanced Techniques: Rather than relying on whitespace-based pre-tokenization, the model uses SentencePiece, which learns subword units (using BPE or unigram models) directly from raw text and so better accommodates the rich morphology of Indic languages.

- Effective Vocabulary: The model features a meticulously constructed vocabulary that ensures accurate text representation by converting and decoding tokens with precision.

- High Precision: The tokenizer and vocabulary are fine-tuned to provide accurate and contextually relevant text generation across multiple Indic languages.
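One way to examine the tokenizer behavior described above is to look at how a sample string is split and whether decoding reproduces it. The sketch below assumes the tokenizer loads through the Hugging Face `AutoTokenizer` class; `roundtrip_matches` and `inspect_tokenizer` are illustrative helper names, not part of the original setup. The round-trip check is not guaranteed to succeed, because tokenizers may normalize whitespace or Unicode.

```python
def roundtrip_matches(encode, decode, text):
    """Return True when decode(encode(text)) reproduces the input exactly."""
    return decode(encode(text)) == text


def inspect_tokenizer(model_id, text):
    """Show how a Hugging Face tokenizer splits one string.

    transformers is imported lazily so the helper above works without it.
    """
    from transformers import AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    ids = tokenizer.encode(text, add_special_tokens=False)
    return {
        "tokens": tokenizer.convert_ids_to_tokens(ids),
        "ids": ids,
        "roundtrip": roundtrip_matches(
            lambda s: tokenizer.encode(s, add_special_tokens=False),
            tokenizer.decode,
            text,
        ),
    }
```

A call such as `inspect_tokenizer("sarvamai/sarvam-2b-v0.5", "नमस्ते दुनिया")` downloads the tokenizer files on first use. Comparing token counts across scripts gives a rough sense of how compactly each language is represented.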

Deploying the Sarvam AI Model

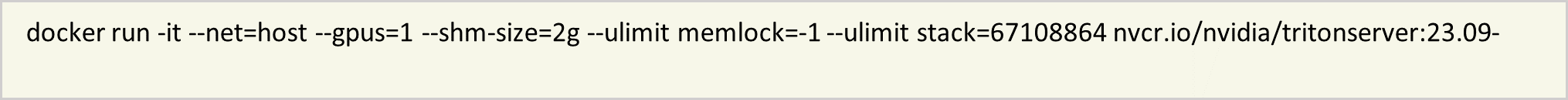

- Set up the Docker container.

To ensure a consistent and isolated environment, a Docker container running the NVIDIA Triton Inference Server was used. The following command configures the container with GPU support and necessary system settings:

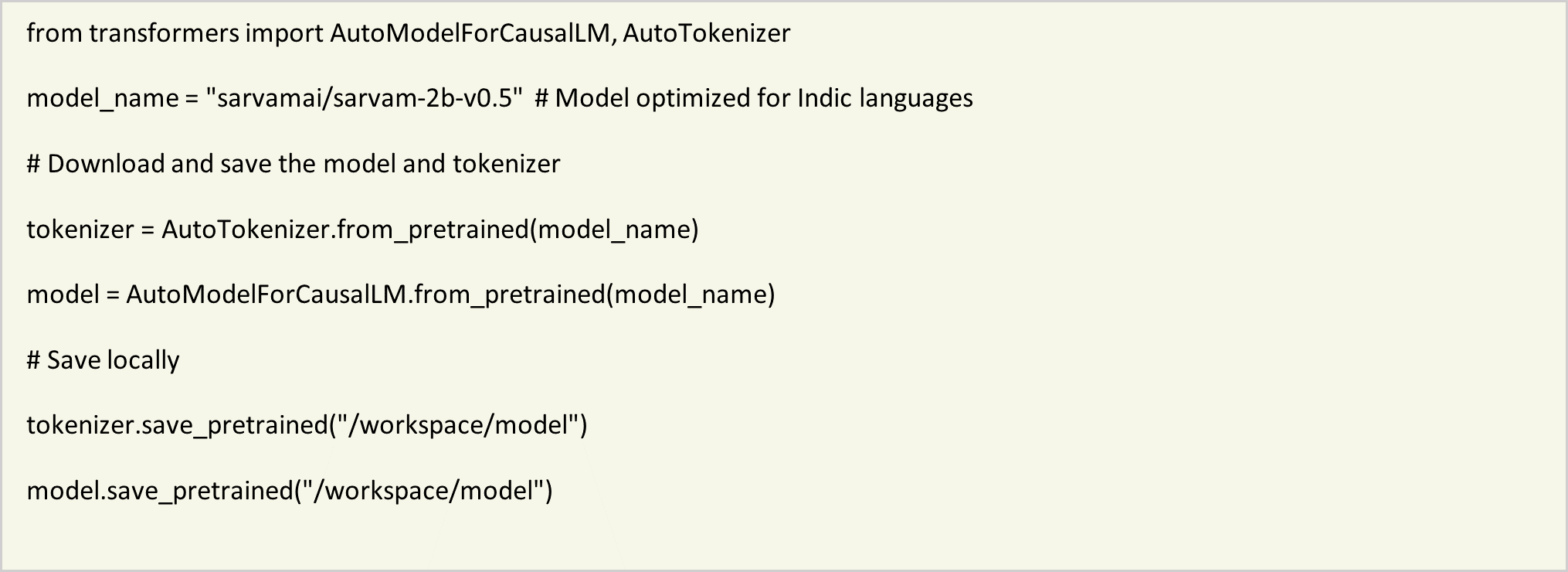
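The exact command is not reproduced in text here, so the following is only a sketch of what such a launch might look like, written as a small Python helper that assembles the `docker run` arguments. The image tag, the shared-memory size, the `ulimit` values, and the `/opt/models` mount are assumptions rather than the settings used in the test. The vLLM-enabled Triton image in particular may carry a different tag.

```python
import shlex

# Assumed image tag: the source names Triton 23.09 but not the exact image.
TRITON_IMAGE = "nvcr.io/nvidia/tritonserver:23.09-py3"


def build_docker_command(image=TRITON_IMAGE, model_dir=None, shm_size="1g"):
    """Assemble a `docker run` argument list with GPU access enabled."""
    argv = [
        "docker", "run", "--rm", "-it",
        "--gpus", "all",               # expose all GPUs to the container
        "--shm-size", shm_size,        # shared memory for inference workloads
        "--ulimit", "memlock=-1",      # allow unlimited locked memory
        "--ulimit", "stack=67108864",  # 64 MiB stack size
    ]
    if model_dir is not None:
        # Hypothetical host folder mounted at /models inside the container.
        argv += ["-v", f"{model_dir}:/models"]
    argv.append(image)
    return argv


# Print the command so it can be reviewed before being run in a shell.
print(shlex.join(build_docker_command(model_dir="/opt/models")))
```

Building the command as a list keeps each flag separate, so the same list can be passed to `subprocess.run` without shell quoting issues.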

- Download and configure the model.

Within the Docker container, the Sarvam-2B model, optimized for Indic language text generation, was downloaded. The transformers library from Hugging Face facilitated this process:

This ensures that the model and tokenizer are correctly installed and available for local use within the Docker container.

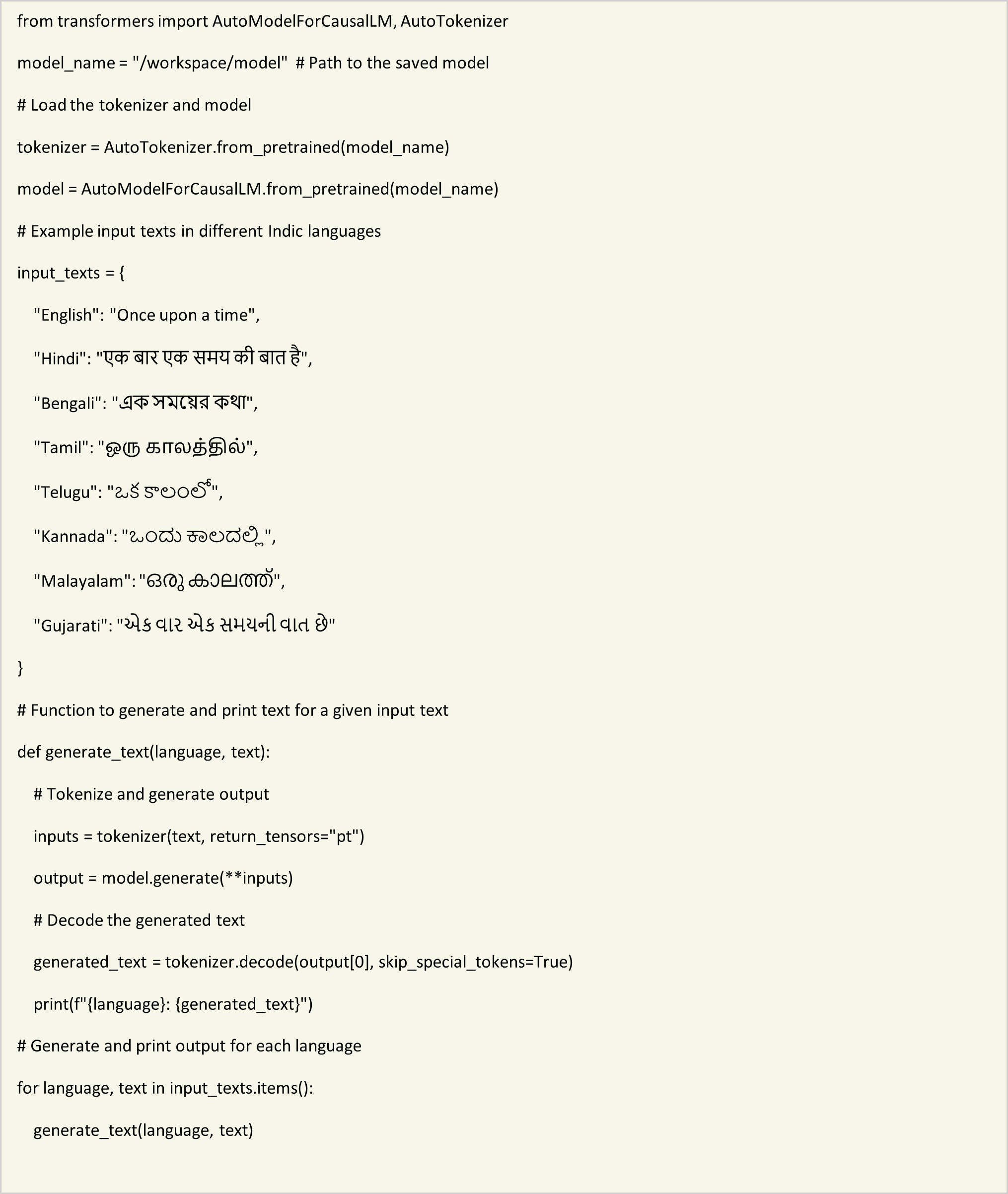
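A download step along these lines could be written as follows. This is a minimal sketch, assuming the model is fetched from the Hugging Face Hub under the ID listed in the configuration table and saved to a local folder. The `/models` root and the helper names are hypothetical.

```python
from pathlib import Path

MODEL_ID = "sarvamai/sarvam-2b-v0.5"


def local_model_dir(model_id, root="/models"):
    """Map a Hub model ID to a local folder: org/name becomes root/org__name."""
    return Path(root) / model_id.replace("/", "__")


def download_model(model_id=MODEL_ID, root="/models"):
    """Fetch tokenizer and weights from the Hugging Face Hub and save them locally."""
    # Imported lazily so the path helper works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    target = local_model_dir(model_id, root)
    target.mkdir(parents=True, exist_ok=True)
    AutoTokenizer.from_pretrained(model_id).save_pretrained(target)
    AutoModelForCausalLM.from_pretrained(model_id).save_pretrained(target)
    return target
```

Calling `download_model()` inside the container requires network access to the Hub. Later runs can then load the model from the returned folder without downloading it again.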

- Generate text in multiple Indic languages.

With the model and tokenizer ready, a Python script was created to generate text in various Indic languages. The script loads the model and tokenizer and produces text based on predefined input texts:
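The original script is not reproduced in text, so the following sketch shows one way such a script might look using the Hugging Face `transformers` API. The prompts are hypothetical stand-ins for the predefined input texts, and the generation settings (greedy decoding, 50 new tokens, bfloat16 weights) are assumptions. Because the model is a text-completion model, the prompts are sentence openings rather than questions.

```python
# Hypothetical prompts; the original input texts are not listed in the source.
PROMPTS = {
    "Hindi": "भारत की राजधानी",
    "Bengali": "ভারতের রাজধানী",
    "Tamil": "இந்தியாவின் தலைநகரம்",
}


def generate_completions(model_path, prompts, max_new_tokens=50):
    """Load a causal language model and complete each prompt once."""
    # Imported lazily so the prompt table can be inspected without a GPU stack.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    device = "cuda" if torch.cuda.is_available() else "cpu"
    tokenizer = AutoTokenizer.from_pretrained(model_path)
    model = AutoModelForCausalLM.from_pretrained(model_path, torch_dtype=torch.bfloat16)
    model.to(device).eval()

    completions = {}
    for language, prompt in prompts.items():
        inputs = tokenizer(prompt, return_tensors="pt").to(device)
        with torch.inference_mode():
            # Greedy decoding keeps the output repeatable between runs.
            output_ids = model.generate(
                **inputs, max_new_tokens=max_new_tokens, do_sample=False
            )
        completions[language] = tokenizer.decode(
            output_ids[0], skip_special_tokens=True
        )
    return completions
```

A call such as `generate_completions("sarvamai/sarvam-2b-v0.5", PROMPTS)` returns a dictionary keyed by language. Passing a local folder instead of the Hub ID loads a previously saved copy.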

Observations and Results

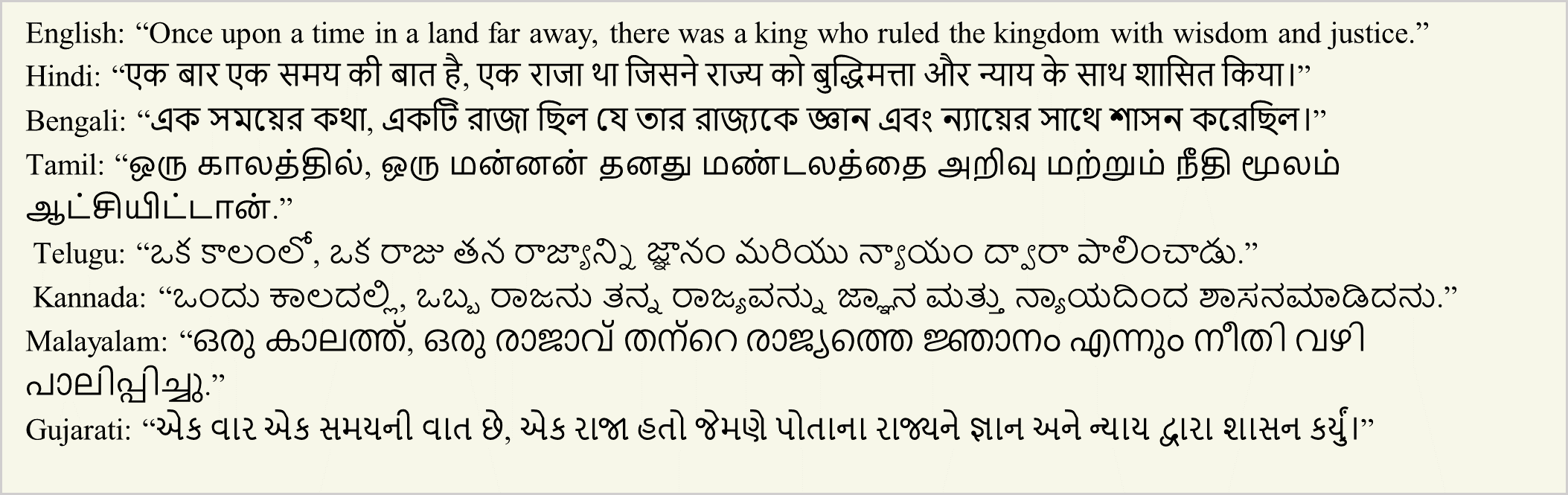

The Sarvam-2B model effectively generated meaningful and contextually appropriate text in all tested Indic languages. Sample outputs include:

Conclusion

Integrating Docker containers with Sarvam AI's Sarvam-2B model, and using NVIDIA H100 GPUs within Dell PowerEdge R760 servers, creates a robust framework for multilingual text generation tasks. This configuration ensures high performance and scalability, making it an excellent solution for enterprises and applications requiring advanced language processing capabilities across multiple Indic languages. The setup not only supports efficient model deployment but also leverages state-of-the-art hardware for optimal results.