Mitigating Slow Drain with Per Initiator Bandwidth Limits

Wed, 22 Jun 2022 13:16:04 -0000

This blog discusses a feature introduced for PowerMax and VMAX All Flash arrays as part of the Foxtail release (PowerMaxOS 5978 Q2 2019 SR, 5978.479.479). The feature lets customers use QoS settings on the PowerMax or VMAX AF array to reduce or eliminate Slow Drain issues caused by servers with slow HBAs. From the array's perspective, it helps alleviate congestion spreading caused by Slow Drain devices, such as HBAs that have a lower link speed than the storage array SLIC. Customers have been burdened by the Slow Drain phenomenon for many years, and it can lead to severe fabric-wide performance degradation.

While there are many definitions and descriptions of Slow Drain, in this blog we define Slow Drain as:

Slow Drain is an FC SAN phenomenon where a single FC end point, due to an inability to accept data from the link at the speed at which it is being sent, causes switch/link buffers and credits to be consumed, resulting in data being “backed up” on the SAN. This causes overall SAN degradation as central components, such as switches, encounter resources that are monopolized by traffic that is destined for the slow drain device, impacting all SAN traffic.

In short, Slow Drain is caused by an end device that is not capable of accepting data at the rate that it is being received.

For example, if an 8 Gb/s HBA sends a series of large block read requests to a 32 Gb/s PowerMax front-end Coldspell SLIC, the array transmits at 32 Gb/s when it starts to send the data back to the host. Because the HBA can receive data at only 8 Gb/s, congestion builds at the host end. Too much congestion can lead to congestion spreading, which can cause unrelated server and storage ports to experience performance degradation.
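To put rough numbers on this mismatch, the following minimal sketch estimates how much data must be held upstream of the HBA while the array keeps sending. It is a deliberately simplified model: the function name is illustrative, and it ignores buffer-to-buffer credit flow control, which in a real fabric stalls the sender once buffers fill instead of letting the backlog grow without bound.

```python
def backlog_gigabits(seconds: float, send_gbps: float, drain_gbps: float) -> float:
    """Gigabits that must be buffered upstream after `seconds` of sustained sending.

    Simplified model (assumption): both rates are constant and nothing
    throttles the sender.
    """
    surplus_gbps = max(send_gbps - drain_gbps, 0.0)
    return surplus_gbps * seconds


# The example from the text: a 32 Gb/s array port feeding an 8 Gb/s HBA.
send_gbps, drain_gbps = 32.0, 8.0
print(f"Speed ratio: {send_gbps / drain_gbps:.0f}x")
print(f"Backlog after 10 ms: {backlog_gigabits(0.010, send_gbps, drain_gbps):.2f} Gb")
```

Even over a few milliseconds the surplus is far more than switch port buffers are built to absorb, which is why the congestion spreads back through the fabric.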

The goal of the Foxtail release is to reduce Slow Drain issues, as they are difficult to prevent and diagnose. It does this through a mechanism where the customer can prevent an end device from becoming a Slow Drain by limiting the amount of data that will be sent to it.

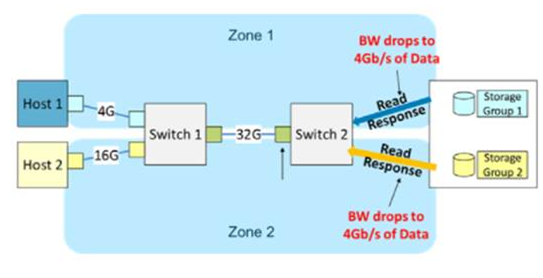

On PowerMax, this can be accomplished by applying a per initiator bandwidth limit. The limit caps the data sent to the end device (host) at a rate that the device can receive. Customers can use Unisphere or Solutions Enabler QoS settings to keep faster array SLICs from overwhelming slower host HBAs. Figure 1 shows a scenario of congestion spreading caused by Slow Drain devices, which can lead to severe fabric-wide performance degradation.

Figure 1: Congestion spreading

Implementing per initiator bandwidth limits

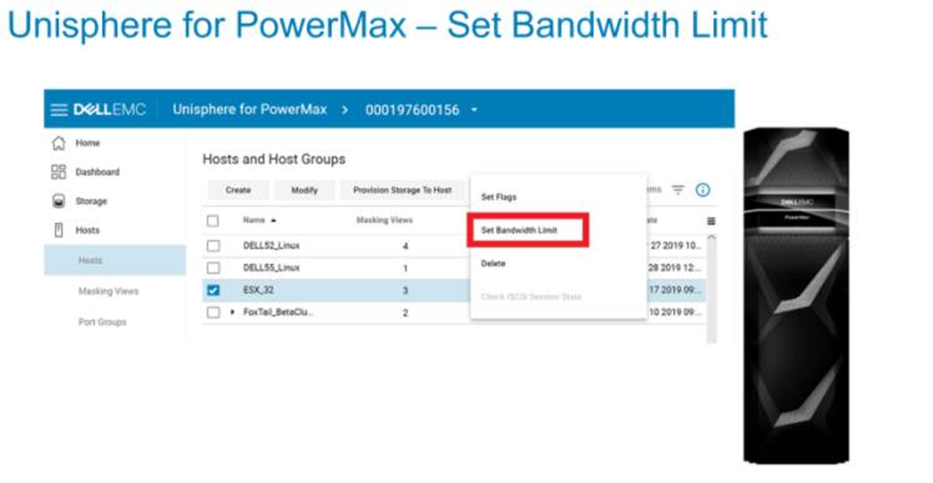

Customers can now configure per initiator bandwidth limits at an Initiator Group (IG) or Host level. PowerMaxOS throttles the I/O for each Initiator to the set limit. The limit can be configured through Unisphere, REST API, Solutions Enabler (9.1 and above), and Inlines.

Note: The Array must be running PowerMaxOS 5978 Q2 2019 or later.

Figure 2: Unisphere for PowerMax 9.1 and higher support

This release also includes a bandwidth limit setting. Users can reach this new menu item by clicking the “traffic lights” icon, which opens the Set Bandwidth Limit dialog. The bandwidth limit can range from zero to the maximum rate that the Initiators can support (for example, the rate of a 16 Gb/s or 32 Gb/s link).

Note: The menu item that opens the dialog is enabled only for Fibre Channel (FC) hosts and is disabled for iSCSI hosts.

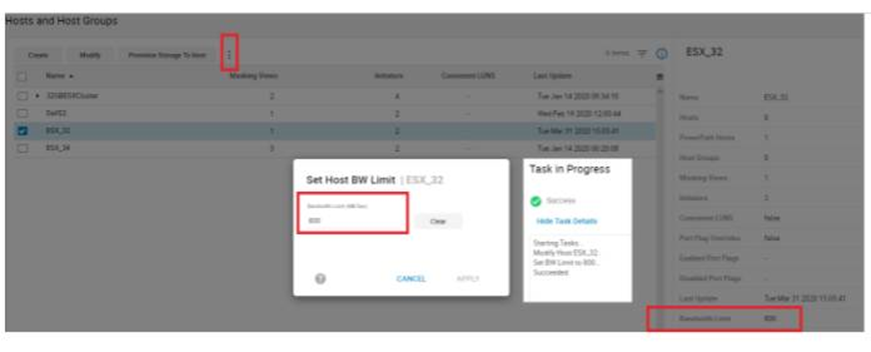

The Bandwidth Limit is set using a value in MB/s. For example, Figure 3 shows the Bandwidth Limit being set to 800 MB/s. The details panel for the Host displays an extra field for the bandwidth limit.
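As a rough guide to choosing a value, the sketch below maps an HBA's Fibre Channel link speed to its nominal one-direction throughput in MB/s, which is where a figure such as 800 MB/s for an 8 Gb/s HBA comes from. The table holds the commonly quoted nominal FC rates; the function name and the `headroom` parameter are illustrative assumptions, not part of Unisphere or Solutions Enabler.

```python
# Commonly quoted nominal throughput per direction for each FC generation (MB/s).
NOMINAL_MB_PER_SEC = {4: 400, 8: 800, 16: 1600, 32: 3200}


def suggested_limit_mb_per_sec(hba_speed_gfc: int, headroom: float = 1.0) -> int:
    """Return a candidate bandwidth limit for an HBA of the given FC speed.

    `headroom` (hypothetical knob) scales the nominal rate; 1.0 means
    "limit at line rate", 0.9 leaves a 10% margin.
    """
    if hba_speed_gfc not in NOMINAL_MB_PER_SEC:
        raise ValueError(f"unknown FC speed: {hba_speed_gfc}")
    if not 0.0 < headroom <= 1.0:
        raise ValueError("headroom must be in (0, 1]")
    return round(NOMINAL_MB_PER_SEC[hba_speed_gfc] * headroom)


print(suggested_limit_mb_per_sec(8))        # an 8 Gb/s HBA at line rate
print(suggested_limit_mb_per_sec(16, 0.9))  # a 16 Gb/s HBA with a 10% margin
```

Whether to leave a margin below line rate is a judgment call for each environment; the source does not prescribe one.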

Figure 3: Unisphere Set Host Bandwidth Limit

Slow Drain monitoring

Typically, B2B credit starvation or drops in R_RDYs are symptoms of Slow Drain. Writes can take a long time to complete if they are stuck in the queue behind reads, which may also delay the XFER_RDY.

The Bandwidth Limit Exceeded seconds metric is available with Solutions Enabler 9.1. In the Performance dashboard, this metric is the number of seconds that the director port and initiator have run at maximum quota. The metric uses the bw_exceeded_count. The KPI is available under the initiator objects section.

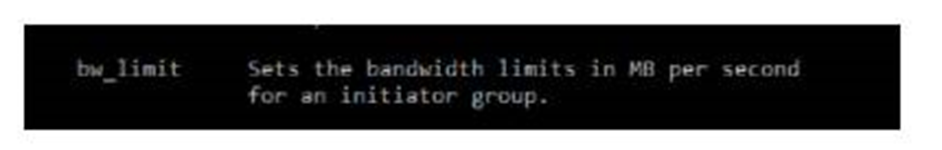

Solutions Enabler 9.1 also features enhanced support for setting bandwidth limits at the Initiator Group level. It allows the user to create an IG with bandwidth limits, set bandwidth limits on an IG, clear bandwidth limits on an IG, modify the bandwidth limits on an IG, and display the IG bandwidth limits.

Figure 4: Solutions Enabler bandwidth limit

REST API Support

The REST API can also be used to set bandwidth limits. All REST communication is HTTPS over IP, and calls are authenticated against the Unisphere for PowerMax server. The REST API supports these four main verbs:

- GET

- POST

- PUT

- DELETE

To set the bandwidth limit, we used a REST PUT call, as shown in Figure 6.
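The general shape of such a call can be sketched with the Python standard library. This is only an outline under stated assumptions: the URL path and the JSON key below are placeholders, not the documented Unisphere endpoint or schema, and HTTP Basic authentication is assumed. Take the real resource path and request body from the Unisphere for PowerMax REST API documentation. The sketch builds the request but does not send it.

```python
import base64
import json
import urllib.request


def build_put_request(url: str, payload: dict, user: str, password: str) -> urllib.request.Request:
    """Build an HTTPS PUT request with a JSON body and Basic auth (assumed scheme)."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="PUT",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


# Placeholder values: the host name, path, and payload key are hypothetical.
request = build_put_request(
    url="https://unisphere.example.com:8443/PLACEHOLDER_PATH/host/my_host",
    payload={"PLACEHOLDER_bandwidth_limit_mb_per_sec": 800},
    user="api_user",
    password="api_password",
)
print(request.get_method(), request.full_url)
```

Sending the request would be a matter of passing it to `urllib.request.urlopen` with an SSL context appropriate for the Unisphere server's certificate.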

Figure 5: Inlines support

Note: Inlines support is also available with the 8F Utility.

Additional information

The following list provides more information about this release:

- Host I/O limits and Initiator limits can co-exist, one at the SG level and the other at Initiator level.

- PowerMaxOS supports a maximum of 4096 limits. Host I/O limits and initiator limits share this maximum.

- When an Initiator connects to multiple directors, the per initiator limit is distributed evenly across the directors.

- Limits can be set for a child IG (Host) ONLY, not for a Parent IG (Host Group).

- An Initiator must be in an IG before a BW limit can be set on it.

- The IG must contain an initiator before a BW limit can be set for it.

- For PowerMaxOS downgrades (NDDs), if the system has Initiators with bandwidth limits set, the downgrade is blocked until the limits are cleared from the config.

- Currently, only FC is supported.

Note: The bandwidth limit is set per Initiator and split across directors. Although the limit is applied within the IG/Host group screen within Unisphere for PowerMax, it applies to every initiator in that group. This means that the limit is not an aggregate across all the initiators in that group. Instead, it is applied individually to each initiator and split across the directors that the initiator connects to.
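The arithmetic behind this note can be illustrated with a short sketch. The function names and the example host (two initiators, each logged in to four directors) are illustrative assumptions; the even split across directors and the per initiator (not aggregate) behavior come from the points above.

```python
def per_director_share(limit_mb_per_sec: float, directors: int) -> float:
    """Share of one initiator's limit that applies on each director (even split)."""
    if directors < 1:
        raise ValueError("an initiator must connect to at least one director")
    return limit_mb_per_sec / directors


def host_ceiling(limit_mb_per_sec: float, initiators: int) -> float:
    """Combined ceiling for a host, because the limit applies to each initiator."""
    return limit_mb_per_sec * initiators


limit = 800.0  # MB/s set on the IG/Host
print(per_director_share(limit, directors=4))  # 200.0 MB/s per director, per initiator
print(host_ceiling(limit, initiators=2))       # 1600.0 MB/s across both initiators
```

In this example, a host with two HBAs that should receive no more than 800 MB/s in total would need a limit of 400 MB/s, not 800 MB/s.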

Resources

- Unisphere for PowerMax Release Notes (log in required)

- Connectrix Switch Cisco & Brocade Series: Performance issues in a SAN (log in required)

- Congestion Spreading and How to Avoid It

- Slow Drain Knowledge Map

Author Information

Author: Pat Tarrant, Principal Engineering Technologist