Accelerating AI Inferencing with Dell PowerEdge XE9680: A Performance Analysis

Tue, 28 Mar 2023 23:05:16 -0000

Executive Summary

The Dell PowerEdge XE9680 is a high-performance server designed and optimized for artificial intelligence, machine learning, and high-performance computing workloads. With the XE9680, Dell PowerEdge is launching an 8-way GPU platform with the following features and capabilities:

- 8x NVIDIA H100 80GB 700W SXM GPUs or 8x NVIDIA A100 80GB 500W SXM GPUs

- 2x Fourth Generation Intel® Xeon® Scalable Processors

- 32x DDR5 DIMMs at 4800MT/s

- 10x PCIe Gen 5 x16 FH Slots

- 8x SAS/NVMe SSD Slots (U.2) and BOSS-N1 with NVMe RAID

This Direct from Development (DfD) tech note presents AI inferencing performance results for the recently launched Dell PowerEdge XE9680 server.

Testing

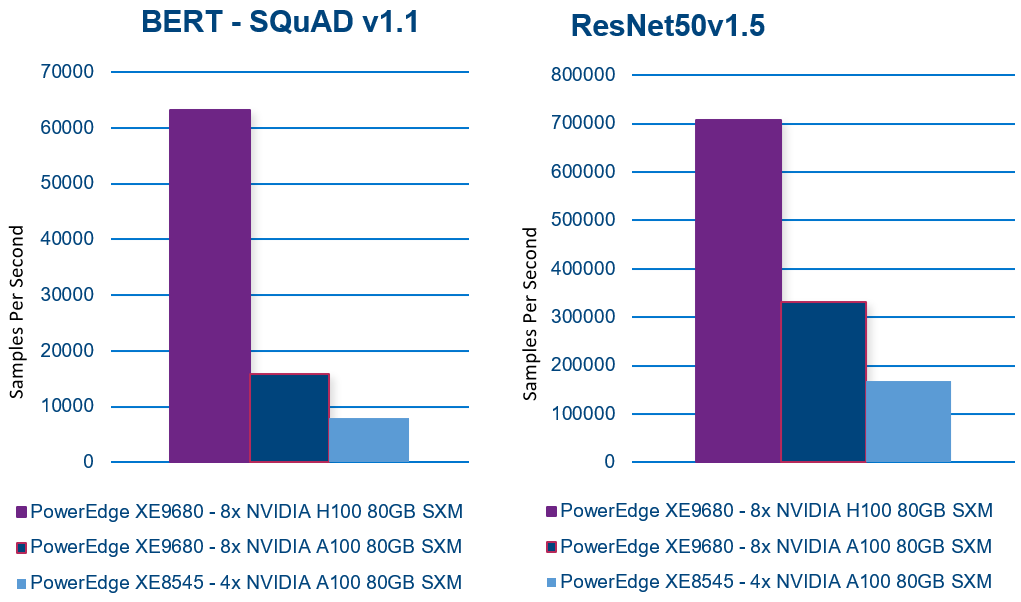

To evaluate the inferencing performance of each GPU option available on the new PowerEdge XE9680, the Dell CET AI Performance Lab and the Dell HPC & AI Innovation Lab selected several popular AI models for benchmarking. To provide a basis for comparison, they also ran the same benchmarks on the previous-generation PowerEdge XE8545. The following workloads and batch sizes were chosen for the evaluation:

- BERT-large (Bidirectional Encoder Representations from Transformers) – Natural language processing like text classification, sentiment analysis, question answering, and language translation

- XE8545 Batch Size 512

- XE9680-A100 Batch Size 512

- XE9680-H100 Batch Size 1024

- ResNet (Residual Network) – Image recognition. Classify, object detection, and segmentation

- XE8545 Batch Size 2048

- XE9680-A100 Batch Size 2048

- XE9680-H100 Batch Size 2048

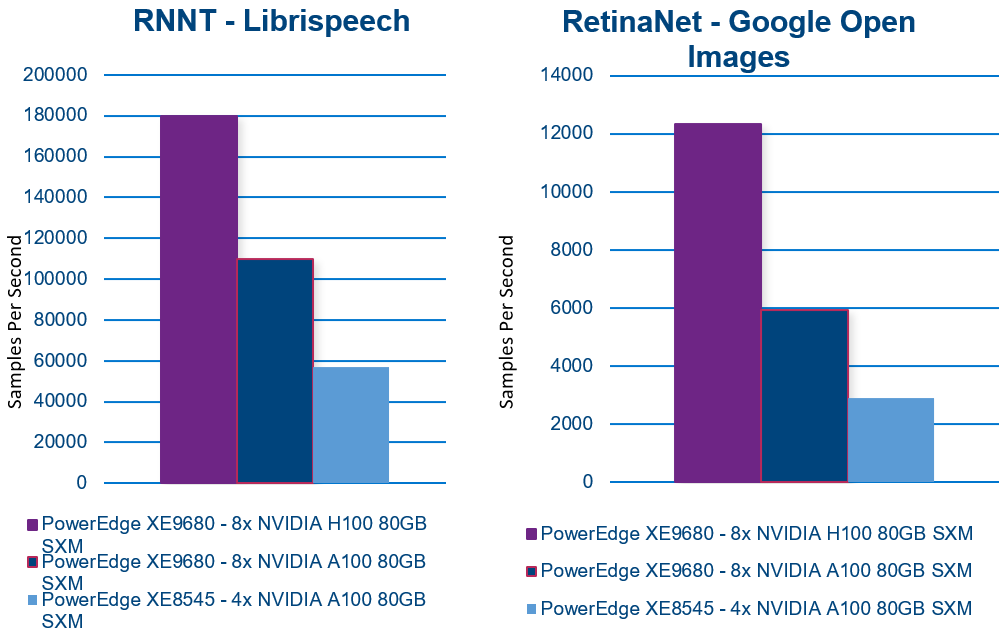

- RNNT (Recurrent Neural Network Transducer) – Speech recognition. Converts audio signal to words

- XE8545 Batch Size 2048

- XE9680-A100 Batch Size 2048

- XE9680-H100 Batch Size 2048

- RetinaNET – Object detection in images

- XE8545 Batch Size 16

- XE9680-A100 Batch Size 32

- XE9680-H100 Batch Size 16

Performance

The PowerEdge XE9680 demonstrates exceptional inferencing performance in both comparisons:

Up to +300%: PowerEdge XE9680 with NVIDIA H100 compared to PowerEdge XE9680 with NVIDIA A100 (1)

Up to +700%: PowerEdge XE9680 with NVIDIA H100 compared to PowerEdge XE8545 with NVIDIA A100 (2)

Comparing the NVIDIA A100 SXM configuration with the NVIDIA H100 SXM configuration on the same PowerEdge XE9680 reveals up to a 300% improvement in inferencing performance! (1)

Even more impressive is the comparison between the PowerEdge XE9680 NVIDIA H100 SXM server and the XE8545 NVIDIA A100 SXM server, which shows up to a 700% improvement in inferencing performance! (2)
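To make the percentage figures concrete, the following minimal Python sketch shows how an "improvement" percentage of this kind is typically derived from two throughput measurements, and how the picture changes when throughput is normalized per GPU. Per footnotes (1) and (2), the XE9680 runs used 8 GPUs while the XE8545 run used 4. The throughput numbers, the dictionary layout, and the helper names `percent_improvement` and `per_gpu` are illustrative assumptions, not measured results or part of Dell's test methodology.

```python
def percent_improvement(baseline: float, new: float) -> float:
    """Return the relative gain of `new` over `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return (new - baseline) / baseline * 100.0


def per_gpu(throughput: float, gpu_count: int) -> float:
    """Normalize whole-server throughput by the number of GPUs."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return throughput / gpu_count


# PLACEHOLDER throughputs in samples/s, chosen only to illustrate the
# arithmetic. They are NOT measured results from this tech note.
xe8545_a100 = {"throughput": 1000.0, "gpus": 4}
xe9680_h100 = {"throughput": 8000.0, "gpus": 8}

# Server-to-server comparison (the kind of figure quoted in the text).
server_gain = percent_improvement(
    xe8545_a100["throughput"], xe9680_h100["throughput"]
)

# Same comparison after dividing each server's throughput by its GPU count.
gpu_gain = percent_improvement(
    per_gpu(xe8545_a100["throughput"], xe8545_a100["gpus"]),
    per_gpu(xe9680_h100["throughput"], xe9680_h100["gpus"]),
)

print(f"server-level gain: +{server_gain:.0f}%")
print(f"per-GPU gain: +{gpu_gain:.0f}%")
```

Under this definition, a +300% improvement corresponds to 4x the baseline throughput and a +700% improvement to 8x. When two servers carry different numbers of GPUs, the per-GPU figure can help separate the contribution of the GPU generation from that of the GPU count.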

Here are the results of each benchmark. In all cases, higher is better.

With exceptional AI inferencing performance, the PowerEdge XE9680 sets a high benchmark for today’s and tomorrow's AI demands. Its advanced features and capabilities provide a solid foundation for businesses and organizations to take advantage of AI and unlock new opportunities.

Contact your account executive or visit www.dell.com to learn more.

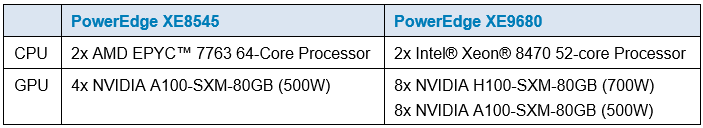

Table 1. Server configuration

(1) Testing conducted by Dell in March of 2023. Performed on PowerEdge XE9680 with 8x NVIDIA H100 SXM5-80GB and PowerEdge XE9680 with 8x NVIDIA A100 SXM4-80GB. Actual results will vary.

(2) Testing conducted by Dell in March of 2023. Performed on PowerEdge XE9680 with 8x NVIDIA H100 SXM5-80GB and PowerEdge XE8545 with 4x NVIDIA A100-SXM-80GB. Actual results will vary.