Enabling GPUs for use inside OpenShift Container Platform 4.6


Installing the Node Feature Discovery Operator

To install the Node Feature Discovery (NFD) Operator, log in to the OpenShift cluster through the web console (see Accessing the OpenShift web console), and then complete the following steps:

- Using the oc CLI, create a project to manage the GPU resources:

$ oc new-project gpu-operator-resources

- In the web console, select Operators > OperatorHub, search for the NFD Operator, select it, and then click Install.

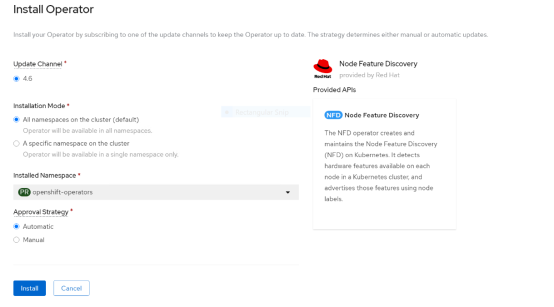

The Install Operator page opens, as shown in the following figure:

Figure 19. NFD Operator installation page

- Choose to install the Operator in All namespaces on the cluster (default), select Automatic under Approval Strategy, and then click Install.

- In the navigation pane, select Operators > Installed Operators, switch to the gpu-operator-resources namespace, and click NFD Operator.

- Click the Node Feature Discovery tile, and then click Create NodeFeatureDiscovery.

- Click Create to start the pods that are needed to label the nodes.

After the NFD pods start running, they add hardware feature labels to the compute nodes.

- Validate that the GPU labels are present on the GPU nodes, for example in the output of oc describe node <gpu_node>.

Note: The node label that NFD generates might vary depending on the GPUs that you are using. In this example, the V100 GPU nodes receive the feature.node.kubernetes.io/pci-10de.present=true label, where 10de is the NVIDIA PCI vendor ID.
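If you prefer to script the label check instead of inspecting each node, the following Python sketch reads the output of oc get nodes -o json and lists the nodes that carry the NFD NVIDIA PCI label. It assumes the label key feature.node.kubernetes.io/pci-10de.present from the V100 example; as the note explains, the label can differ for other GPUs or NFD configurations. The function names oc_json and nodes_with_nvidia_pci are illustrative and are not part of any NVIDIA or Red Hat tool.

```python
import json
import subprocess
from typing import List

# Assumed NFD label for nodes with an NVIDIA PCI device (PCI vendor ID 10de).
# Adjust it if NFD labels your GPUs differently.
NVIDIA_PCI_LABEL = "feature.node.kubernetes.io/pci-10de.present"


def oc_json(*args: str) -> dict:
    """Run an oc command with '-o json' and return the parsed result.

    Requires an oc CLI that is already logged in to the cluster.
    """
    result = subprocess.run(
        ["oc", *args, "-o", "json"], check=True, capture_output=True, text=True
    )
    return json.loads(result.stdout)


def nodes_with_nvidia_pci(node_list: dict) -> List[str]:
    """Return the names of nodes whose NFD labels mark an NVIDIA PCI device as present.

    node_list is the parsed output of 'oc get nodes -o json'.
    """
    names = []
    for node in node_list.get("items", []):
        labels = node.get("metadata", {}).get("labels", {})
        if labels.get(NVIDIA_PCI_LABEL) == "true":
            names.append(node["metadata"]["name"])
    return names
```

For example, nodes_with_nvidia_pci(oc_json("get", "nodes")) returns an empty list until NFD has labeled at least one GPU node.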

Installing the NVIDIA GPU Operator

To install the NVIDIA GPU Operator:

- Log in to the OpenShift web console, select Operators > OperatorHub, search for the NVIDIA GPU Operator, select it, and then click Install.

- Choose to install the Operator in All namespaces on the cluster (default), select Automatic under Approval Strategy, and then click Install.

- After the Operator is installed:

  - Select Operators > Installed Operators, switch to the gpu-operator-resources project, and select the NVIDIA GPU Operator.

  - Click the ClusterPolicy tab, click Create ClusterPolicy, and then click Create to start the NVIDIA GPU Operator pods.

  - In the gpu-operator-resources project, select Workloads > Pods and confirm that all NVIDIA GPU Operator pods are either running or completed, as shown in the following figure:

Figure 20. NVIDIA GPU Operator pods status
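The pod check in the last step can also be scripted. This Python sketch takes the parsed output of oc get pods -n gpu-operator-resources -o json and reports every pod whose phase is neither Running nor Succeeded (oc get pods displays Succeeded pods as Completed). The function name is hypothetical, and a Running phase does not guarantee that every container is ready, so treat the result as a first-pass check rather than a full health test.

```python
from typing import List, Tuple

# Phases treated as healthy here: long-running pods report Running,
# pods that run to completion report Succeeded.
OK_PHASES = {"Running", "Succeeded"}


def pods_not_ok(pod_list: dict) -> List[Tuple[str, str]]:
    """Return (pod name, phase) for every pod that is not Running or Succeeded.

    pod_list is the parsed output of
    'oc get pods -n gpu-operator-resources -o json'.
    """
    problems = []
    for pod in pod_list.get("items", []):
        # A pod without a reported phase is treated as Unknown.
        phase = pod.get("status", {}).get("phase", "Unknown")
        if phase not in OK_PHASES:
            problems.append((pod["metadata"]["name"], phase))
    return problems
```

An empty result means that every pod in the project is running or has completed.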

After the NVIDIA GPU Operator pods are running, each node with GPUs reports a new nvidia.com/gpu resource under capacity and allocatable in its node status.

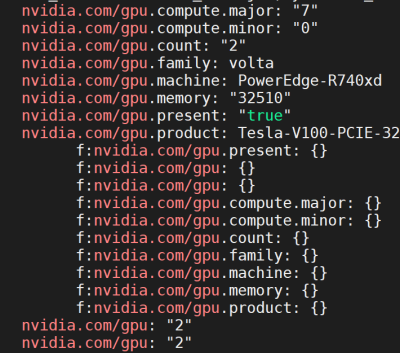

- To confirm that the new resource is present, run:

$ oc get node <gpu_node> -o yaml | grep -i nvidia.com/gpu

The following output is displayed:

Figure 21. Resources present
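The grep command above can also be replaced by a structured check. This Python sketch reads a node object, such as the parsed output of oc get node <gpu_node> -o json, and returns the nvidia.com/gpu values from status.capacity and status.allocatable; the function name is illustrative. Capacity is the total GPU count that the node advertises, and allocatable is the amount that the scheduler can assign to pods.

```python
from typing import Tuple

GPU_RESOURCE = "nvidia.com/gpu"


def gpu_resources(node: dict) -> Tuple[int, int]:
    """Return (capacity, allocatable) for nvidia.com/gpu on one node.

    Both values are 0 when the node does not advertise the resource, for
    example before the GPU Operator pods are running. The API reports the
    quantities as strings such as "2", so they are converted to int.
    """
    status = node.get("status", {})
    capacity = int(status.get("capacity", {}).get(GPU_RESOURCE, 0))
    allocatable = int(status.get("allocatable", {}).get(GPU_RESOURCE, 0))
    return capacity, allocatable
```

A result of (0, 0) on a GPU node usually means that the Operator pods on that node are not yet running.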

Providing GPU resources to a pod

Sample Pod Spec

To provide GPUs to a pod, request the nvidia.com/gpu resource in the container's resources section and specify the number of GPUs that you want, as shown in the following sample Pod Spec. This number cannot exceed the number of GPUs available on a single node; if no node can satisfy the request, the pod remains in the Pending state.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: tensorflow-benchmarks-gpu
spec:
  # Label added by gpu-feature-discovery; runs the pod only on V100 PCIe 32 GB nodes
  nodeSelector:
    nvidia.com/gpu.product: Tesla-V100-PCIE-32GB
  containers:
  - image: nvcr.io/nvidia/tensorflow:19.09-py3
    name: cudnn
    command: ["/bin/sh","-c"]
    # --num_gpus=2 matches the two GPUs requested below
    args: ["git clone https://github.com/tensorflow/benchmarks.git;cd benchmarks/scripts/tf_cnn_benchmarks;python3 tf_cnn_benchmarks.py --num_gpus=2 --data_format=NHWC --batch_size=32 --model=resnet50 --variable_update=parameter_server"]
    resources:
      # For nvidia.com/gpu, requests must equal limits
      limits:
        nvidia.com/gpu: 2
      requests:
        nvidia.com/gpu: 2
  restartPolicy: Never
```
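Before submitting a Pod Spec, you can check its GPU settings against a node. The following Python sketch applies two Kubernetes rules for GPU resources: a container that sets an nvidia.com/gpu request must also set a limit with the same value, and the pod's total GPU limit must fit on one node. The function names and the dictionary form of the pod (for example, the Pod Spec converted to JSON) are assumptions for illustration, and init containers are ignored in this sketch.

```python
from typing import List

GPU_RESOURCE = "nvidia.com/gpu"


def requested_gpus(pod: dict) -> int:
    """Sum the nvidia.com/gpu limits of the pod's regular containers.

    For GPUs the limit is what counts: when only a limit is set,
    Kubernetes uses it as the request. Init containers are ignored.
    """
    total = 0
    for container in pod.get("spec", {}).get("containers", []):
        limits = container.get("resources", {}).get("limits", {})
        total += int(limits.get(GPU_RESOURCE, 0))
    return total


def gpu_problems(pod: dict, node_allocatable: int) -> List[str]:
    """Return readable problems with the pod's GPU settings (empty if none)."""
    problems = []
    for container in pod.get("spec", {}).get("containers", []):
        name = container.get("name", "<unnamed>")
        resources = container.get("resources", {})
        limit = resources.get("limits", {}).get(GPU_RESOURCE)
        request = resources.get("requests", {}).get(GPU_RESOURCE)
        if request is not None and limit is None:
            problems.append(f"{name}: GPU request set without a limit")
        elif request is not None and int(request) != int(limit):
            problems.append(f"{name}: GPU request {request} != limit {limit}")
    # All containers of a pod run on the same node, so the total must fit there.
    wanted = requested_gpus(pod)
    if wanted > node_allocatable:
        problems.append(f"pod needs {wanted} GPUs but the node offers {node_allocatable}")
    return problems
```

For the sample Pod Spec, gpu_problems returns an empty list when the target node has at least two allocatable GPUs.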

The NVIDIA GPU Operator also deploys gpu-feature-discovery pods on the compute nodes with GPUs. These pods label each node with information about its GPUs, such as the product name, family, and count. You can use these labels in a Pod Spec to schedule workloads based on criteria such as the GPU product name; the nodeSelector in the sample Pod Spec uses the nvidia.com/gpu.product label to run the pod only on nodes with Tesla V100 PCIe 32 GB GPUs.
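To see which nodes a nodeSelector such as the one in the sample Pod Spec would match, the following Python sketch applies nodeSelector semantics (a node must carry every listed label with exactly the given value) to the parsed output of oc get nodes -o json. It also collects the labels whose keys start with nvidia.com/gpu., a prefix that is assumed here to cover the product, family, and count labels that gpu-feature-discovery adds. The function names are hypothetical.

```python
from typing import Dict, List

GPU_LABEL_PREFIX = "nvidia.com/gpu."  # assumed prefix of gpu-feature-discovery labels


def gpu_labels(node: dict) -> Dict[str, str]:
    """Return the node's labels whose keys start with the GPU label prefix."""
    labels = node.get("metadata", {}).get("labels", {})
    return {k: v for k, v in labels.items() if k.startswith(GPU_LABEL_PREFIX)}


def matching_nodes(node_list: dict, node_selector: Dict[str, str]) -> List[str]:
    """Return names of nodes whose labels include every pair in node_selector.

    This mirrors a Pod Spec nodeSelector: all key/value pairs must match
    exactly, and an empty selector matches every node.
    """
    names = []
    for node in node_list.get("items", []):
        labels = node.get("metadata", {}).get("labels", {})
        if all(labels.get(key) == value for key, value in node_selector.items()):
            names.append(node["metadata"]["name"])
    return names
```

For example, matching_nodes(nodes, {"nvidia.com/gpu.product": "Tesla-V100-PCIE-32GB"}) returns only the V100 nodes; if it returns an empty list, the sample pod remains Pending.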

References

The following documentation provides additional information about the operation and usage of GPUs in the OpenShift environment: