Intel Ice Lake - BIOS Characterization for HPC

Tue, 25 May 2021 13:10:03 -0000


Intel recently announced the 3rd Generation Intel Xeon Scalable processors (code-named “Ice Lake”), which are based on a new 10 nm manufacturing process. This blog presents synthetic benchmark results for the new Ice Lake processors and the recommended BIOS settings on Dell EMC PowerEdge servers.

Ice Lake processors offer a higher core count, with up to 40 cores on a single Ice Lake 8380 processor. The Ice Lake processors have larger L3, L2, and L1 data caches than Intel’s second-generation Cascade Lake processors. These features are expected to improve the performance of CPU-bound software applications. Table 1 shows the L1, L2, and L3 cache sizes on the 8380 processor model.

Ice Lake still supports the AVX-512 SIMD instructions, which allow for 32 DP FLOP/cycle per core. The upgraded Ultra Path Interconnect (UPI) link speed of 11.2 GT/s is expected to improve data movement between the sockets. In addition to core count and frequency, Ice Lake-based Dell EMC PowerEdge servers support DDR4-3200 MT/s DIMMs with eight memory channels per processor, which is expected to improve the performance of memory bandwidth-bound applications. Ice Lake processors now support up to 6 TB of memory per socket.
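The figures above translate into theoretical per-socket peaks with simple arithmetic. The following is a minimal sketch, not a measurement: it assumes the nominal base frequency from Table 2 (sustained AVX-512 frequencies are typically different) and a 64-bit (8-byte) data path per DDR4 channel. The function names are illustrative only.

```python
# Back-of-the-envelope theoretical peaks from the figures quoted in the text.
# Assumptions: nominal base frequency is used (real AVX-512 clocks differ),
# and each DDR4 channel moves 8 bytes per transfer.

DP_FLOP_PER_CYCLE = 32      # per core with AVX-512 (from the text)
MEMORY_CHANNELS = 8         # per processor (from the text)
TRANSFER_RATE_MTS = 3200    # DDR4-3200
BYTES_PER_TRANSFER = 8      # 64-bit channel width (assumption stated above)


def peak_gflops(cores, base_ghz, flop_per_cycle=DP_FLOP_PER_CYCLE):
    """Theoretical double-precision peak of one socket in GFLOP/s."""
    return cores * base_ghz * flop_per_cycle


def peak_memory_bandwidth_gbs(channels=MEMORY_CHANNELS,
                              rate_mts=TRANSFER_RATE_MTS,
                              bytes_per_transfer=BYTES_PER_TRANSFER):
    """Theoretical memory bandwidth of one socket in GB/s."""
    return channels * rate_mts * bytes_per_transfer / 1000.0


if __name__ == "__main__":
    # Core counts and base frequencies of the two Ice Lake models in Table 2.
    for model, cores, ghz in (("8380", 40, 2.3), ("6338", 32, 2.0)):
        print(f"{model}: {peak_gflops(cores, ghz):.0f} GFLOP/s per socket")
    print(f"DDR4-3200 x 8 channels: {peak_memory_bandwidth_gbs():.1f} GB/s per socket")
```

These values are upper bounds that benchmark results such as HPL and STREAM can be compared against.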

Instructions such as Vector CLMUL, VPMADD52, Vector AES, and GFNI Extensions have been optimized to improve use of vector registers. The performance of software applications in the cryptography domain is also expected to benefit. The Ice Lake processor also includes improvements to Intel Speed Select Technology (Intel SST). With Intel SST, a few cores from the total available cores can be operated at a higher base frequency, turbo frequency, or power. This blog does not address this feature.

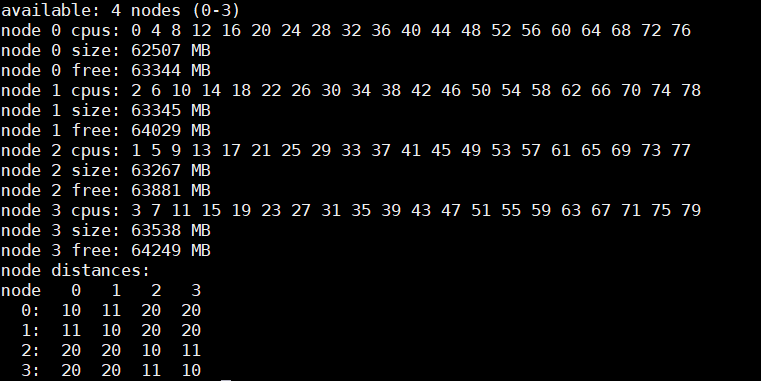

Table 1: hwloc-ls and numactl -H command output on an Intel 8380 processor model-based server with Round Robin core enumeration (MadtCoreEnumeration) and SubNumaCluster (Sub-NUMA Cluster) set to 2-Way

hwloc-ls output:

    Machine (247GB total)
    Package L#0 + L3 L#0 (60MB)
    Group0 L#0
    NUMANode L#0 (P#0 61GB)
    L2 L#0 (1280KB) + L1d L#0 (48KB) + L1i L#0 (32KB) + Core L#0 + PU L#0 (P#0)
    L2 L#1 (1280KB) + L1d L#1 (48KB) + L1i L#1 (32KB) + Core L#1 + PU L#1 (P#4)
    L2 L#2 (1280KB) + L1d L#2 (48KB) + L1i L#2 (32KB) + Core L#2 + PU L#2 (P#8)
    L2 L#3 (1280KB) + L1d L#3 (48KB) + L1i L#3 (32KB) + Core L#3 + PU L#3 (P#12)
    L2 L#4 (1280KB) + L1d L#4 (48KB) + L1i L#4 (32KB) + Core L#4 + PU L#4 (P#16)
    L2 L#5 (1280KB) + L1d L#5 (48KB) + L1i L#5 (32KB) + Core L#5 + PU L#5 (P#20)
    L2 L#6 (1280KB) + L1d L#6 (48KB) + L1i L#6 (32KB) + Core L#6 + PU L#6 (P#24)
    L2 L#7 (1280KB) + L1d L#7 (48KB) + L1i L#7 (32KB) + Core L#7 + PU L#7 (P#28)
    L2 L#8 (1280KB) + L1d L#8 (48KB) + L1i L#8 (32KB) + Core L#8 + PU L#8 (P#32)
    L2 L#9 (1280KB) + L1d L#9 (48KB) + L1i L#9 (32KB) + Core L#9 + PU L#9 (P#36)
    L2 L#10 (1280KB) + L1d L#10 (48KB) + L1i L#10 (32KB) + Core L#10 + PU L#10 (P#40)
    L2 L#11 (1280KB) + L1d L#11 (48KB) + L1i L#11 (32KB) + Core L#11 + PU L#11 (P#44)
    L2 L#12 (1280KB) + L1d L#12 (48KB) + L1i L#12 (32KB) + Core L#12 + PU L#12 (P#48)
    L2 L#13 (1280KB) + L1d L#13 (48KB) + L1i L#13 (32KB) + Core L#13 + PU L#13 (P#52)
    L2 L#14 (1280KB) + L1d L#14 (48KB) + L1i L#14 (32KB) + Core L#14 + PU L#14 (P#56)
    L2 L#15 (1280KB) + L1d L#15 (48KB) + L1i L#15 (32KB) + Core L#15 + PU L#15 (P#60)
    L2 L#16 (1280KB) + L1d L#16 (48KB) + L1i L#16 (32KB) + Core L#16 + PU L#16 (P#64)
    L2 L#17 (1280KB) + L1d L#17 (48KB) + L1i L#17 (32KB) + Core L#17 + PU L#17 (P#68)
    L2 L#18 (1280KB) + L1d L#18 (48KB) + L1i L#18 (32KB) + Core L#18 + PU L#18 (P#72)
    L2 L#19 (1280KB) + L1d L#19 (48KB) + L1i L#19 (32KB) + Core L#19 + PU L#19 (P#76)
    HostBridge
    <snip>

BIOS options tested on Ice Lake processors

Table 2 provides the server details used for the performance tests. The following BIOS options were explored in the performance testing:

- BIOS.ProcSettings.SubNumaCluster: Breaks up the LLC into disjoint clusters based on address range, with each cluster bound to a subset of the memory controllers in the system. It improves average latency to the LLC. Sub-NUMA Cluster (SNC) is disabled if NVDIMM-N is installed in the system.

- BIOS.ProcSettings.DeadLineLlcAlloc: When enabled, opportunistically fills dead lines in the LLC.

- BIOS.ProcSettings.LlcPrefetch: Enables or disables LLC Prefetch on all threads.

- BIOS.ProcSettings.XptPrefetch: When enabled, allows the MS2IDI to take a read request that is being sent to the LLC and speculatively issue a copy of that read request to the memory controller.

- BIOS.ProcSettings.UpiPrefetch: Starts the memory read early on the DDR bus. The UPI Rx path spawns a MemSpecRd to iMC directly.

- BIOS.ProcSettings.DcuIpPrefetcher (Data Cache Unit IP Prefetcher): Affects performance, depending on the application running on the server. This setting is recommended for High Performance Computing applications.

- BIOS.ProcSettings.DcuStreamerPrefetcher (Data Cache Unit Streamer Prefetcher): Affects performance, depending on the application running on the server. This setting is recommended for High Performance Computing applications.

- BIOS.ProcSettings.ProcAdjCacheLine: When set to Enabled, optimizes the system for applications that require high utilization of sequential memory access. Disable this option for applications that require high utilization of random memory access.

- BIOS.SysProfileSettings.SysProfile: Sets the System Profile to Performance Per Watt (DAPC), Performance Per Watt (OS), Performance, Workstation Performance, or Custom mode. When set to a mode other than Custom, the BIOS sets each option accordingly. When set to Custom, you can change the setting of each option.

- BIOS.ProcSettings.LogicalProc: Reports the logical processors. Each processor core supports up to two logical processors. When set to Enabled, the BIOS reports all logical processors. When set to Disabled, the BIOS reports only one logical processor per core. Generally, a higher processor count results in increased performance for most multithreaded workloads. The recommendation is to keep this option enabled. However, there are some floating point and scientific workloads, including HPC workloads, where disabling this feature might result in higher performance.

You can set the DeadLineLlcAlloc, LlcPrefetch, XptPrefetch, UpiPrefetch, DcuIpPrefetcher, DcuStreamerPrefetcher, ProcAdjCacheLine, and LogicalProc BIOS options to either Enabled or Disabled. You can set SubNumaCluster to either 2-Way or Disabled. The SysProfile setting has five possible values: PerformanceOptimized, PerfPerWattOptimizedDapc, PerfPerWattOptimizedOs, PerfWorkStationOptimized, and Custom.
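When scripting a BIOS sweep, it can help to check a planned configuration against the allowed values listed above before it is applied. The following is a minimal sketch under stated assumptions: the attribute names and values are copied from this blog, while `ALLOWED_VALUES`, `validate`, and `EXAMPLE_CONFIG` are hypothetical names introduced for illustration. How the settings are then pushed to a server is outside the scope of the sketch.

```python
# Allowed values for the BIOS attributes discussed in this blog.
TOGGLE = ("Enabled", "Disabled")

ALLOWED_VALUES = {
    "BIOS.ProcSettings.SubNumaCluster": ("2-Way", "Disabled"),
    "BIOS.ProcSettings.DeadLineLlcAlloc": TOGGLE,
    "BIOS.ProcSettings.LlcPrefetch": TOGGLE,
    "BIOS.ProcSettings.XptPrefetch": TOGGLE,
    "BIOS.ProcSettings.UpiPrefetch": TOGGLE,
    "BIOS.ProcSettings.DcuIpPrefetcher": TOGGLE,
    "BIOS.ProcSettings.DcuStreamerPrefetcher": TOGGLE,
    "BIOS.ProcSettings.ProcAdjCacheLine": TOGGLE,
    "BIOS.ProcSettings.LogicalProc": TOGGLE,
    "BIOS.SysProfileSettings.SysProfile": (
        "PerformanceOptimized",
        "PerfPerWattOptimizedDapc",
        "PerfPerWattOptimizedOs",
        "PerfWorkStationOptimized",
        "Custom",
    ),
}


def validate(settings):
    """Return a list of problems found in `settings`; an empty list means valid."""
    problems = []
    for attribute, value in settings.items():
        allowed = ALLOWED_VALUES.get(attribute)
        if allowed is None:
            problems.append(f"unknown attribute: {attribute}")
        elif value not in allowed:
            problems.append(f"{attribute}: {value!r} is not one of {allowed}")
    return problems


# Illustrative configuration: SNC 2-Way, logical processors off, prefetchers on.
EXAMPLE_CONFIG = {name: "Enabled" for name, allowed in ALLOWED_VALUES.items()
                  if allowed is TOGGLE}
EXAMPLE_CONFIG["BIOS.ProcSettings.LogicalProc"] = "Disabled"
EXAMPLE_CONFIG["BIOS.ProcSettings.SubNumaCluster"] = "2-Way"
EXAMPLE_CONFIG["BIOS.SysProfileSettings.SysProfile"] = "PerformanceOptimized"

if __name__ == "__main__":
    print(validate(EXAMPLE_CONFIG) or "configuration is valid")
```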

Table 2: Test bed hardware and software details

| Component | Dell EMC PowerEdge R750 server | Dell EMC PowerEdge C6520 server | Dell EMC PowerEdge C6420 server | Dell EMC PowerEdge C6420 server |
|---|---|---|---|---|
| OPN | 8380 | 6338 | 8280 | 6252 |
| Cores/Socket | 40 | 32 | 28 | 24 |
| Frequency (Base-Boost) | 2.30 – 3.40 GHz | 2.0 – 3.20 GHz | 2.70 – 4.0 GHz | 2.10 – 3.70 GHz |
| TDP | 270 W | 205 W | 205 W | 150 W |
| L3 Cache | 60 MB | 48 MB | 38.5 MB | 37.75 MB |
| Operating System | Red Hat Enterprise Linux 8.3 4.18.0-240.22.1.el8_3.x86_64 | Red Hat Enterprise Linux 8.3 4.18.0-240.22.1.el8_3.x86_64 | Red Hat Enterprise Linux 8.3 4.18.0-240.el8.x86_64 | Red Hat Enterprise Linux 8.3 4.18.0-240.el8.x86_64 |
| Memory | 16 GB x 16 (2Rx8) 3200 MT/s | 16 GB x 16 (2Rx8) 3200 MT/s | 16 GB x 12 (2Rx8) 2933 MT/s | 16 GB x 12 (2Rx8) 2933 MT/s |
| BIOS/CPLD | 1.1.2/1.0.1 | | | |
| Interconnect | NVIDIA Mellanox HDR | NVIDIA Mellanox HDR | NVIDIA Mellanox HDR100 | NVIDIA Mellanox HDR100 |
| Compiler | Intel Parallel Studio 2020 (update 4) | | | |
| Benchmark software | | | | |

The System Profile BIOS meta-option sets a group of BIOS options (such as C1E and C States), each of which controls performance and power management, to predefined values. You can also set each of these options individually by using the Custom system profile.

Application performance results

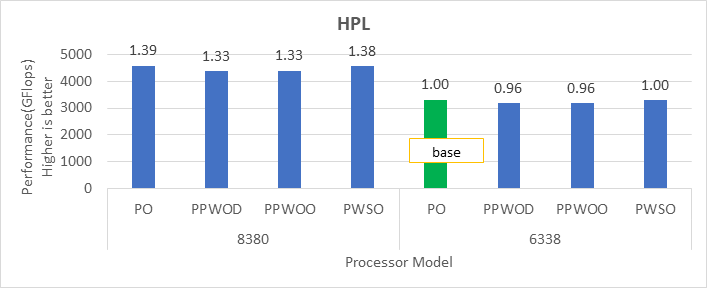

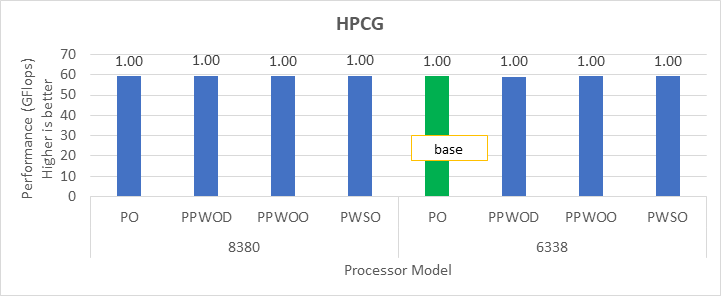

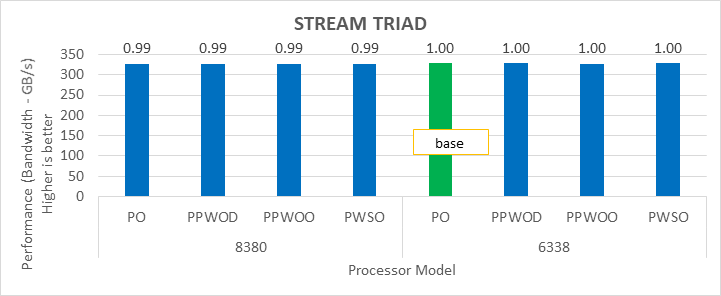

Table 2 lists details about the software used for benchmarking the server. For our tests, we used the precompiled HPL and HPCG binary files that are part of the Intel Parallel Studio 2020 update 4 software bundle. We compiled the WRF application with AVX2 support. WRF and HPCG issue many non-floating-point packed micro-operations (approximately 73 percent to 90 percent of the total packed micro-operations). They are memory-bound (and DRAM bandwidth-bound) workloads. HPL issues packed double-precision micro-operations and is a compute-bound workload.

After setting Sub-NUMA Cluster (BIOS.ProcSettings.SubNumaCluster) to 2-Way, Logical Processors (BIOS.ProcSettings.LogicalProc) to Disabled, and other settings (DeadLineLlcAlloc, LlcPrefetch, XptPrefetch, UpiPrefetch, DcuIpPrefetcher, DcuStreamerPrefetcher, ProcAdjCacheLine) to Enabled, we measured the impact of System Profile (BIOS.SysProfileSettings.SysProfile) BIOS parameters on application performance.

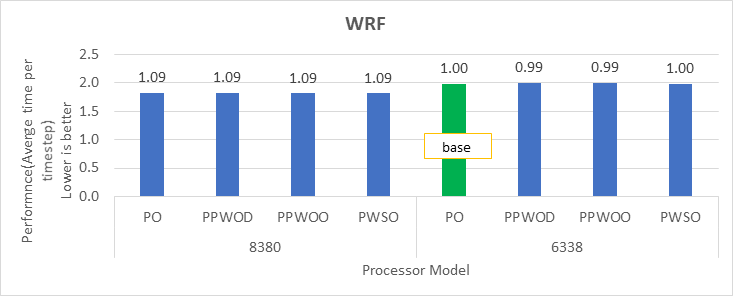

Figure 1 through Figure 4 show application performance. In each figure, the numbers over the bars represent the relative change in the application performance with respect to the application performance obtained on the Intel 6338 Ice Lake processor with the System Profile set to Performance Optimized (PO).

Note: In the figures, PO=PerformanceOptimized, PPWOD=PerfPerWattOptimizedDapc, PPWOO=PerfPerWattOptimizedOs, and PWSO=PerfWorkStationOptimized.

HPL Benchmark

Figure 1: Relative difference in the performance of HPL by processor and SysProfile setting

HPCG Benchmark

Figure 2: Relative difference in the performance of HPCG by processor and SysProfile setting

STREAM Benchmark

Figure 3: Relative difference in the performance of STREAM by processor and SysProfile setting

WRF Benchmark

Figure 4: Relative difference in the performance of WRF by processor and SysProfile setting

We obtained the performance for the applications in Figure 1 through Figure 4 by fully subscribing all available cores. Depending on the processor model, we achieved 78 percent to 80 percent efficiency with the HPL and STREAM benchmarks using the Performance Optimized profile.
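Efficiency here is the ratio of sustained to theoretical peak performance. The following sketch shows what the 78 percent to 80 percent range would imply for a dual-socket Intel 8380 server. The resulting numbers are derived, not measured: they assume two sockets (inferred from the four NUMA nodes reported with SNC set to 2-Way), the nominal base frequency from Table 2, and 8 bytes per DDR4 transfer. All names are illustrative.

```python
# Implied sustained performance for a given efficiency range.
# These are derived values under the stated assumptions, not measured results.

SOCKETS = 2                 # assumption: dual-socket server
CORES_PER_SOCKET = 40       # Intel 8380 (Table 2)
BASE_GHZ = 2.3              # nominal base frequency (Table 2)
DP_FLOP_PER_CYCLE = 32      # AVX-512, per core
CHANNELS_PER_SOCKET = 8
TRANSFER_RATE_MTS = 3200
BYTES_PER_TRANSFER = 8      # assumption: 64-bit DDR4 channel

PEAK_GFLOPS = SOCKETS * CORES_PER_SOCKET * BASE_GHZ * DP_FLOP_PER_CYCLE
PEAK_GBS = SOCKETS * CHANNELS_PER_SOCKET * TRANSFER_RATE_MTS * BYTES_PER_TRANSFER / 1000.0


def efficiency_pct(measured, peak):
    """Sustained performance as a percentage of the theoretical peak."""
    if peak <= 0:
        raise ValueError("peak must be positive")
    return 100.0 * measured / peak


def implied_range(peak, low_pct=78.0, high_pct=80.0):
    """Sustained performance implied by an efficiency range."""
    return peak * low_pct / 100.0, peak * high_pct / 100.0


if __name__ == "__main__":
    low, high = implied_range(PEAK_GFLOPS)
    print(f"HPL: peak {PEAK_GFLOPS:.0f} GFLOP/s, implied {low:.0f} to {high:.0f} GFLOP/s")
    low, high = implied_range(PEAK_GBS)
    print(f"STREAM: peak {PEAK_GBS:.1f} GB/s, implied {low:.1f} to {high:.1f} GB/s")
```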

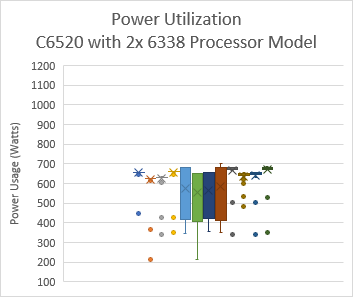

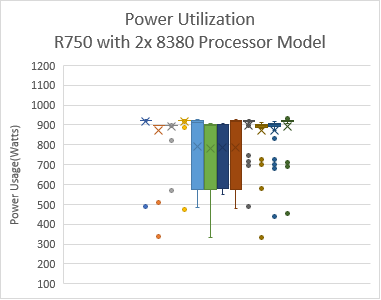

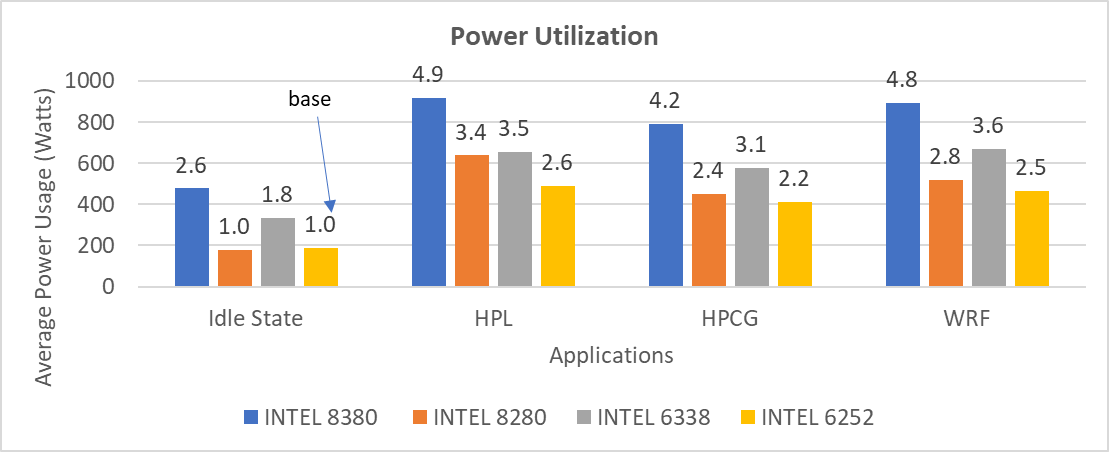

Intel has raised the TDP of the Ice Lake processors, with the top-end Intel 8380 processor rated at 270 W. The following figure shows the power use on the systems with the applications listed in Table 2.

Note: In this figure, PO=PerformanceOptimized, PPWOD=PerfPerWattOptimizedDapc, PPWOO=PerfPerWattOptimizedOs, and PWSO=PerfWorkStationOptimized.

Figure 5: Power use by platform and processor type. With the Performance Optimized System Profile, average idle power usage was approximately 335 W on the PowerEdge C6520 server (Intel 6338 processor) and approximately 470 W on the PowerEdge R750 server (Intel 8380 processor).

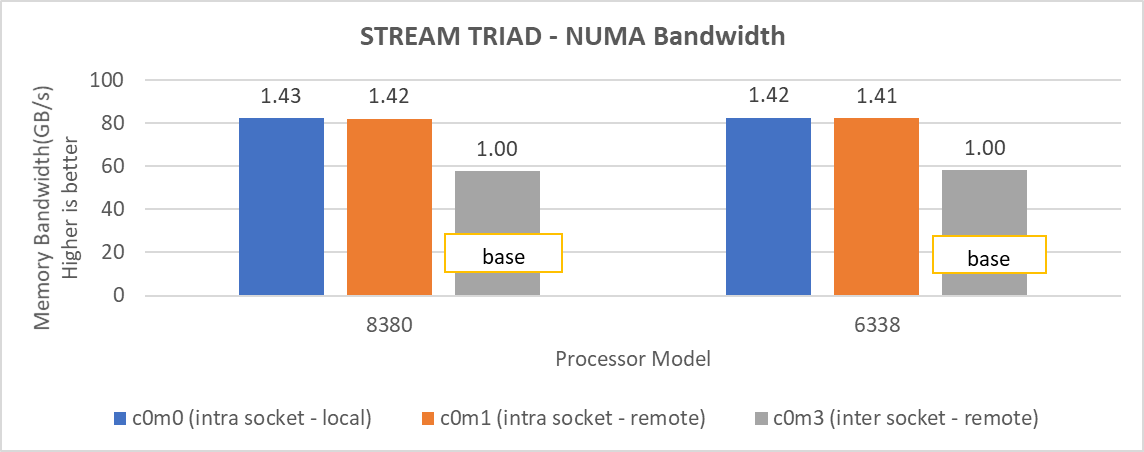

When SNC is set to 2-Way, the system exposes four NUMA nodes. We tested the NUMA bandwidth, remote socket bandwidth, and local socket bandwidth using the STREAM TRIAD benchmark. In Figure 6, the CPU NUMA node is represented as c and the memory node is represented as m. As an example for NUMA bandwidth, the c0m0 (blue bars) test type represents the STREAM TRIAD test carried out between NUMA node 0 and memory node 0. Figure 6 shows the best bandwidth numbers obtained on varying the number of threads per test type.

Figure 6: Local and remote NUMA memory bandwidth.
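The c/m test naming described above maps naturally onto `numactl` bindings. The following minimal sketch generates one command line per CPU node and memory node pair. The `--cpunodebind` and `--membind` options are standard `numactl` flags; the `./stream` binary path and the two-NUMA-nodes-per-socket layout are assumptions for illustration, and the exact commands used for the tests in this blog are not given.

```python
from itertools import product


def stream_numa_commands(num_nodes, binary="./stream"):
    """Map each test label (for example 'c0m2') to a numactl command line.

    `binary` is a placeholder path to a STREAM executable.
    """
    commands = {}
    for cpu_node, mem_node in product(range(num_nodes), repeat=2):
        label = f"c{cpu_node}m{mem_node}"
        commands[label] = (
            f"numactl --cpunodebind={cpu_node} --membind={mem_node} {binary}"
        )
    return commands


def same_socket(cpu_node, mem_node, nodes_per_socket=2):
    """True when both NUMA nodes belong to the same processor socket."""
    return cpu_node // nodes_per_socket == mem_node // nodes_per_socket


if __name__ == "__main__":
    # SNC set to 2-Way on a dual-socket server exposes four NUMA nodes.
    for label, command in stream_numa_commands(4).items():
        cpu_node, mem_node = int(label[1]), int(label[3])
        kind = "local socket" if same_socket(cpu_node, mem_node) else "remote socket"
        print(f"{label} ({kind}): {command}")
```

For an OpenMP build of STREAM, the thread count for each test type can be varied through the `OMP_NUM_THREADS` environment variable.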

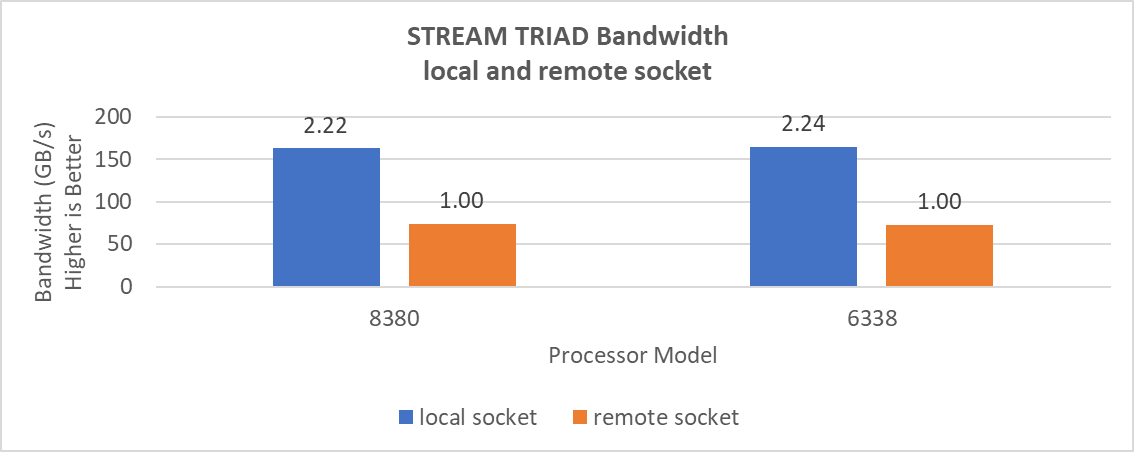

Remote socket bandwidth was measured between CPU nodes 0 and 1 and memory nodes 2 and 3. Local socket bandwidth was measured between CPU nodes 0 and 1 and memory nodes 0 and 1. The following figure shows the performance numbers.

Figure 7: Local and remote processor bandwidth.

Impact of BIOS options on application performance

We tested the impact of the DeadLineLlcAlloc, LlcPrefetch, XptPrefetch, UpiPrefetch, DcuIpPrefetcher, DcuStreamerPrefetcher, and ProcAdjCacheLine options with the Performance Optimized (PO) system profile. These BIOS options do not have a significant impact on the performance of the applications addressed in this blog; therefore, we recommend setting these options to Enabled.

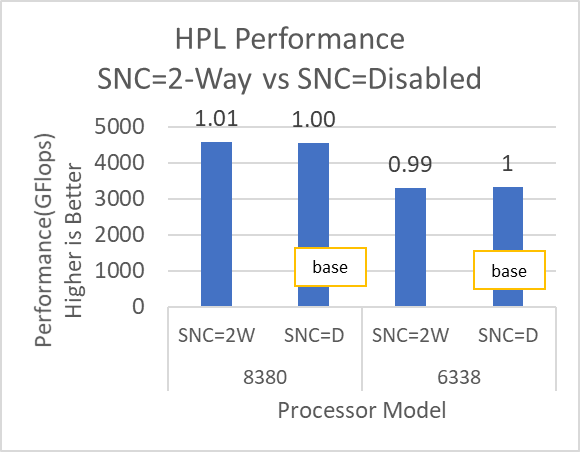

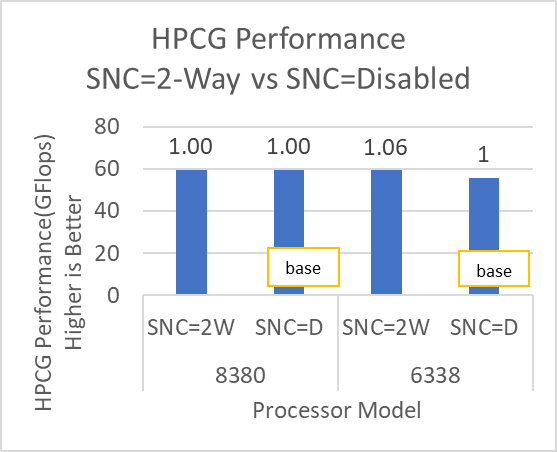

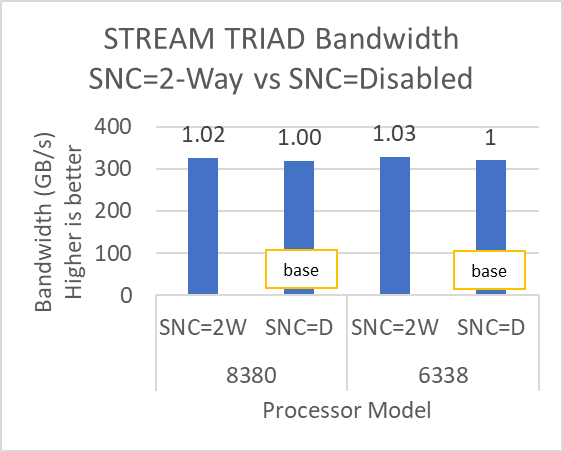

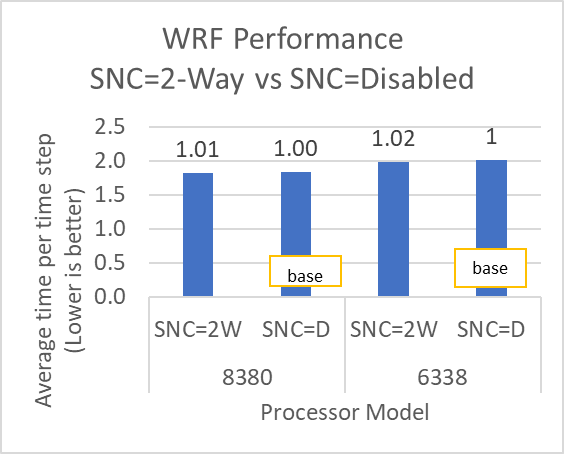

Figure 8 and Figure 9 show the impact of the Sub-NUMA Cluster (SNC) BIOS option on application performance. In each figure, the numbers over the bars represent the relative change in application performance with respect to the performance obtained on the Intel 6338 Ice Lake processor with the SNC feature set to Disabled.

Figure 8: HPL and HPCG performance variation by processor model with Sub-NUMA Cluster set to Disabled (SNC=D) and 2-Way (SNC=2W)

Figure 9: STREAM and WRF performance variation by processor model with Sub-NUMA Cluster set to Disabled (SNC=D) and 2-Way (SNC=2W)

The SubNumaCluster option can affect applications that are memory bandwidth-bound (for example, STREAM, HPCG, and WRF). We recommend setting SubNumaCluster to 2-Way because it improves the performance of the workloads addressed in this blog by one percent to six percent, depending on the processor model and application.

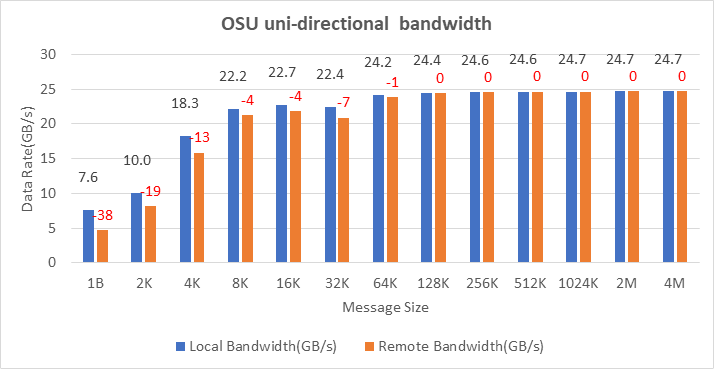

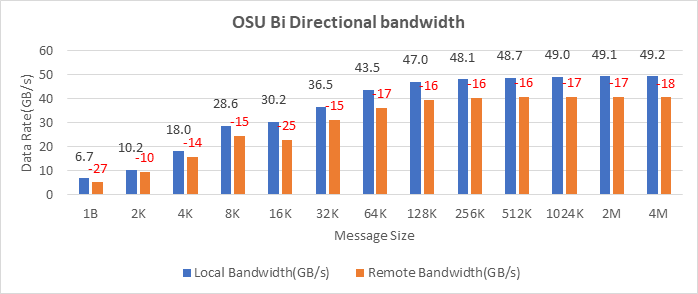

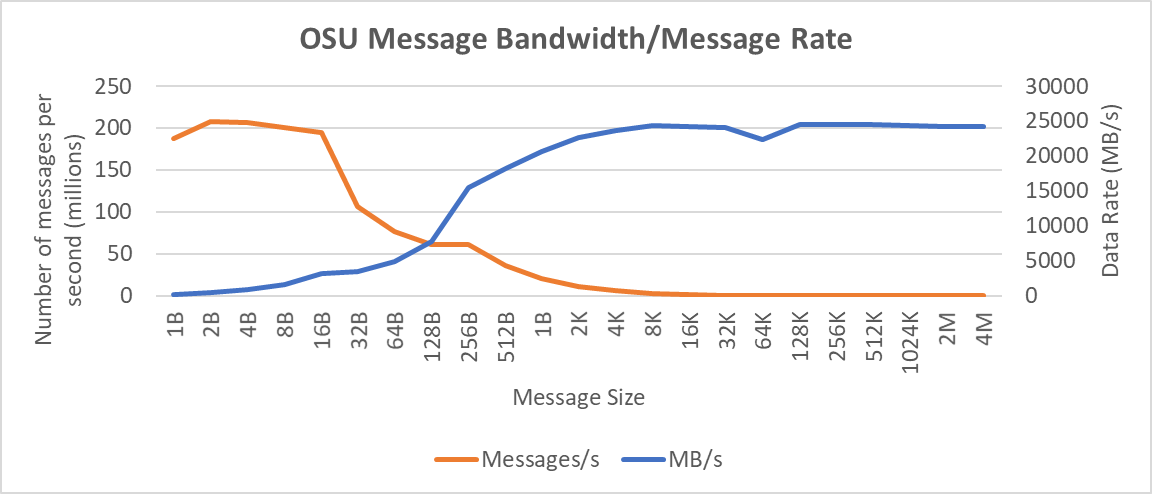

InfiniBand bandwidth and message rate

The Ice Lake-based processors now support PCIe Gen 4, which allows the NVIDIA Mellanox HDR adapter cards to be used with Dell EMC PowerEdge servers. Figure 10, Figure 11, and Figure 12 show the unidirectional bandwidth, bi-directional bandwidth, and message rate test results of the OSU Benchmarks suite. The network adapter card was connected to the second socket (NUMA node 2); therefore, the local bandwidth tests were carried out with processes bound to NUMA node 2. The remote bandwidth tests were carried out with processes bound to NUMA node 0. In Figure 10 and Figure 11, the numbers in red over the orange bars represent the percentage difference between local and remote bandwidth performance numbers.

Figure 10: OSU Benchmark unidirectional bandwidth test on two servers with Intel 8380 processors and NVIDIA Mellanox HDR InfiniBand


Figure 11: OSU Benchmark bi-directional bandwidth test on two servers with Intel 8380 processors and NVIDIA Mellanox HDR InfiniBand


Figure 12: Interconnect bandwidth and message rate performance obtained between two servers having Intel 8380 processors with OSU Benchmark

On two nodes connected using the NVIDIA Mellanox ConnectX-6 HDR InfiniBand adapter cards, we achieved approximately 25 GB/s unidirectional bandwidth and a message rate of approximately 200 million messages/second—almost double the performance numbers obtained on the NVIDIA Mellanox HDR100 card.
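The measured bandwidth can be put in context with a short line-rate calculation. The sketch below converts the nominal HDR (200 Gb/s) and HDR100 (100 Gb/s) signaling rates to GB/s and compares them with the theoretical bandwidth of a PCIe slot. It assumes an x16 slot and accounts only for the 128b/130b encoding used by PCIe Gen 3 and Gen 4; protocol overheads are ignored, so these are upper bounds. The function names are illustrative.

```python
# Nominal link rates versus theoretical PCIe slot bandwidth (upper bounds only).

def gbit_to_gbyte(gbit_per_s):
    """Convert a rate in Gb/s to GB/s."""
    return gbit_per_s / 8.0


def pcie_gbyte_per_s(gt_per_s, lanes=16):
    """Theoretical one-direction PCIe bandwidth in GB/s with 128b/130b encoding.

    Assumption: an x16 slot unless `lanes` says otherwise.
    """
    return gt_per_s * lanes * (128.0 / 130.0) / 8.0


HDR_GBS = gbit_to_gbyte(200)       # InfiniBand HDR
HDR100_GBS = gbit_to_gbyte(100)    # InfiniBand HDR100
PCIE_GEN3_X16 = pcie_gbyte_per_s(8.0)    # Gen 3: 8 GT/s per lane
PCIE_GEN4_X16 = pcie_gbyte_per_s(16.0)   # Gen 4: 16 GT/s per lane

if __name__ == "__main__":
    print(f"HDR line rate:    {HDR_GBS:.1f} GB/s")
    print(f"HDR100 line rate: {HDR100_GBS:.1f} GB/s")
    print(f"PCIe Gen 3 x16:   {PCIE_GEN3_X16:.2f} GB/s")
    print(f"PCIe Gen 4 x16:   {PCIE_GEN4_X16:.2f} GB/s")
    print("HDR fits in Gen 3 x16:", HDR_GBS <= PCIE_GEN3_X16)
    print("HDR fits in Gen 4 x16:", HDR_GBS <= PCIE_GEN4_X16)
```

Under these assumptions, a Gen 3 x16 slot cannot carry the full HDR line rate while a Gen 4 x16 slot can, which is consistent with the HDR result being roughly twice the HDR100 result.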

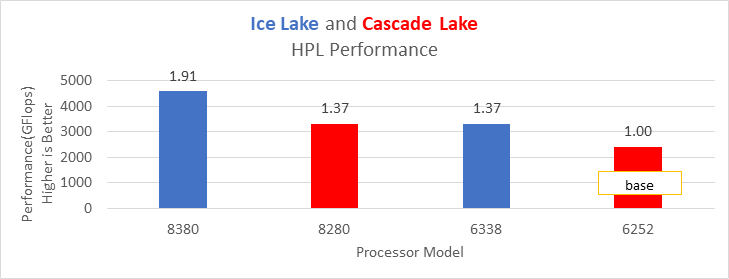

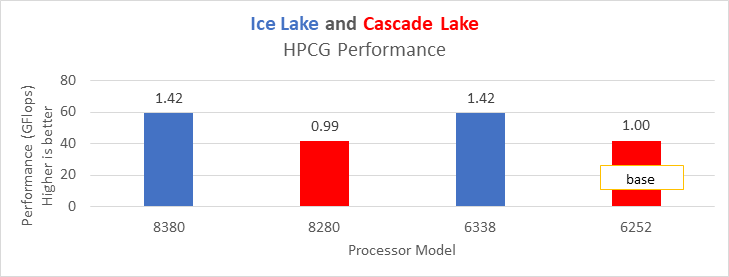

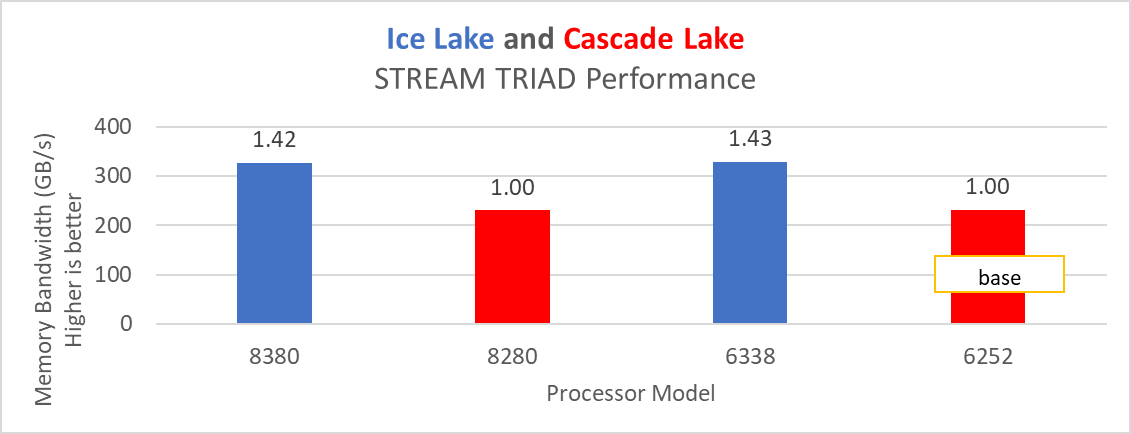

Comparison with Cascade Lake processors

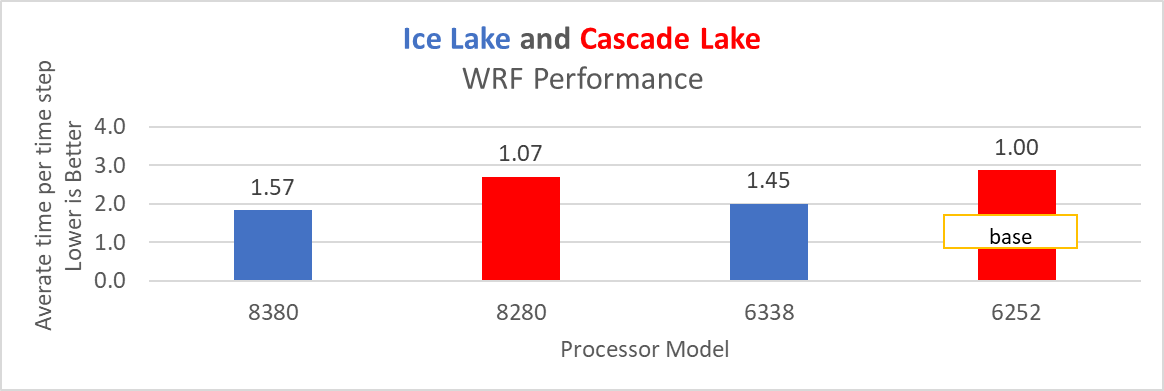

Based on the compute resources available in our Dell EMC HPC & AI Innovation Lab, we selected the Cascade Lake processor-based servers and benchmarked them with the software listed in Table 2. Figure 13 through Figure 16 show performance results from the Intel Ice Lake and Cascade Lake processors. The numbers over the bars represent the relative change in application performance with respect to the performance obtained on the Intel 6252 Cascade Lake processor.

Figure 13: HPL performance on processors listed in Table 2


Figure 14: HPCG performance on processors listed in Table 2


Figure 15: STREAM TRIAD test performance on processors listed in Table 2


Figure 16: WRF performance on processors listed in Table 2


Ice Lake delivers approximately 38 percent better performance than Cascade Lake with HPL on the top-end processor model. The memory bandwidth-bound benchmarks such as STREAM and HPCG (see Figure 14 and Figure 15) delivered a 42 percent to 43 percent performance improvement over the top-end Cascade Lake processors addressed in this blog.

We measured the average real-time power usage of the Dell EMC PowerEdge platforms (listed in Table 2) while running the synthetic benchmarks listed in this blog. Figure 17 compares the power usage data from the Cascade Lake and Ice Lake platforms. The number over each bar represents the relative change in power with respect to the base power measured (Intel 6252 processor in the idle state).

Figure 17: Average power usage during benchmark runs on Dell EMC PowerEdge servers (see details in Table 2)

When the performance data obtained with the Performance Optimized profile is combined with the corresponding power measurements, the applications (depending on the processor model) did not deliver better performance per watt on the Ice Lake platform than on the Cascade Lake platform.
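The relationship between a performance gain and the accompanying rise in power can be sketched as a simple ratio. In the example below, the 38 percent HPL gain is taken from this blog, while the power increases are hypothetical placeholders chosen only to show where the break-even point lies. The function name is illustrative.

```python
def perf_per_watt_ratio(perf_gain_pct, power_increase_pct):
    """Performance per watt of the new platform relative to the old one.

    A result above 1.0 means the new platform is more power efficient.
    """
    return (1.0 + perf_gain_pct / 100.0) / (1.0 + power_increase_pct / 100.0)


if __name__ == "__main__":
    hpl_gain_pct = 38.0  # HPL gain quoted in the text
    # Hypothetical power increases, for illustration only.
    for power_increase_pct in (20.0, 38.0, 50.0):
        ratio = perf_per_watt_ratio(hpl_gain_pct, power_increase_pct)
        print(f"power +{power_increase_pct:.0f}%: relative performance per watt {ratio:.2f}")
```

Performance per watt improves only while the power increase stays below the performance gain; once power rises by more than the gain, the ratio falls below 1.0.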

Summary and future work

The Ice Lake processor-based Dell EMC PowerEdge servers, with notable hardware feature upgrades over Cascade Lake, show a performance gain of up to 47 percent across the HPC benchmarks addressed in this blog. Hyper-threading (the Logical Processor option) should be set to Disabled for the benchmarks addressed in this blog; for other workloads, the option should be tested and enabled as appropriate. Watch this space for subsequent blogs that describe application performance studies on our new Ice Lake processor-based cluster.