Using Synthetic Data Generation to Fine Tune a model from the Dell Enterprise Hub

Tue, 01 Oct 2024 19:12:10 -0000

|Read Time: 0 minutes

Background

The Dell Enterprise Hub offered by Dell Technologies and Hugging Face enables faster time to value for on-premises AI solutions. It provides an easily accessible cloud-hosted source for obtaining and using optimized models that run on some of the latest and greatest Dell hardware. For industries that must comply with data protection and security standards, on-premises deployment of AI is often required to meet those standards. The likelihood of significant data breaches and unauthorized access vastly decreases with on-premises solutions.

The Dell Enterprise Hub now hosts a comprehensive range of offerings, including all three architectures of the Llama 3.1 models. These models have been trained on an extensive dataset consisting of over 15 trillion tokens from diverse data sources. This meticulous curation and processing of the training data has significantly enhanced the models' generalization and task performance across various benchmarks, enabling long-context processing and multilingual support.

For the Llama 3.1 models, in addition to the ~15 trillion tokens of training data from publicly available sources, a fine-tuning dataset was used that includes additional publicly available instruction datasets and over 25M synthetically generated examples. The pretraining cutoff date for both sets of data was December 2023.

Despite the rapid growth in available training data and more frequent model releases, the training data cutoff date limits a model's ability to generate responses based on new information released after the cut off. In addition, most organizations have data that is private and would not be available during collection of a training dataset.

For example, during our evaluation of the latest Llama 3.1 models, we noticed that responses to questions such as "What is the Dell Enterprise Hub?" were very generic and specifically did not include references to our partner Hugging Face or large language models and AI. LLMs will often exhibit this behavior, mainly when no guardrails or application logic is in place to check the certainty of the next word prediction process so that an alert can be sent indicating that the response may not be reliable. Even when the model discovers many choices for the next word of roughly equal likelihood, there is no mechanism to intervene with phrases like "I'm not sure..." or "I don't know...". In these cases, the model will produce a stream of tokens that most likely will not have much accurate information, often called hallucination. We saw this as an opportunity to evaluate tools for improving the performance of models downloaded from the Dell Enterprise Hub.

Fine Tuning

There are many ways to incorporate new information unavailable for training by the data cutoff date. Retrieval Augmented Generation (RAG) and Supervised Fine Tuning (SFT) are the most popular. A thorough treatment of the advantages and disadvantages is not feasible in this article. However, here are some high-level comparisons:

Purpose | Both techniques can extend and improve an LLM's ability to produce responses with information unavailable during training.

|

Data Requirements: | RAG and SFT techniques require a large corpus of documents containing new information.

|

Flexibility: | RAG can dynamically retrieve information, making it adaptable to new data without retraining. SFT performance improvements are acquired in discrete increments, requiring additional training when new data is available. |

Operational Complexity: | RAG is more complex due to the integration of retrieval and generation components. The SFT fine-tuning of the model with supervised data is less operationally complex. |

The remainder of this article describes the implementation of and results from a demonstration of using Dell Enterprise Hub resources to implement a method of SFT called Direct Preference Optimization (DPO) fine-tuning.

Direct Preference Optimization (DPO) fine-tuning

DPO is designed to optimize a model based on human or system preferences. This form of SFT is especially useful in cases like question-and-answer or summarization tuning, where you have pairs of outputs: one is preferred, and the other is rejected.

Unlike traditional methods like Reinforcement Learning from Human Feedback (RLHF), which require a separate reward model, DPO simplifies the process by using a binary cross-entropy loss function to optimize the policy model directly to generate responses that align more closely with the preferred ones.

We chose to focus on using DPO with question-and-answer pairs. We need a three-column dataset to start fine-tuning questions and answers using DPO.

Prompt | Preferred Answer | Rejected Answer |

|

|

|

As noted above, RAG and SFT require enough training data to affect change in how the model responds to prompts, but nothing nearly as significant as is used for the initial round of training. Quality dataset development will involve substantial human-in-the-loop (HITL) review. With our limited time for this project, data collection and preparation would have to be scaled down to something less than typically required to fine-tune a production model.

Synthetic Data Generation

Synthetic data generation with LLMs for training and fine-tuning is becoming more widespread. Most organizations have internal or public data unavailable during initial training for off-the-shelf foundational models. Extracting training data is laborious, and using Synthetic Data Generation (SDG) can greatly expedite this process.

Data Extraction

For our project, we extracted text from a list of ~50 URLs containing previous Blogs that discussed Dell AI and the Dell Enterprise Hub in particular.

We used the Beautiful Soup library (https://beautiful-soup-4.readthedocs.io/en/latest/) to extract the text from the HTML pages. We did some cleaning and decided to keep the entire text in a single chunk instead of splitting it. Most Blogs were between 1000 and 2000 words, so they were well within the supported context length of 128k for Llama 3.1 models.

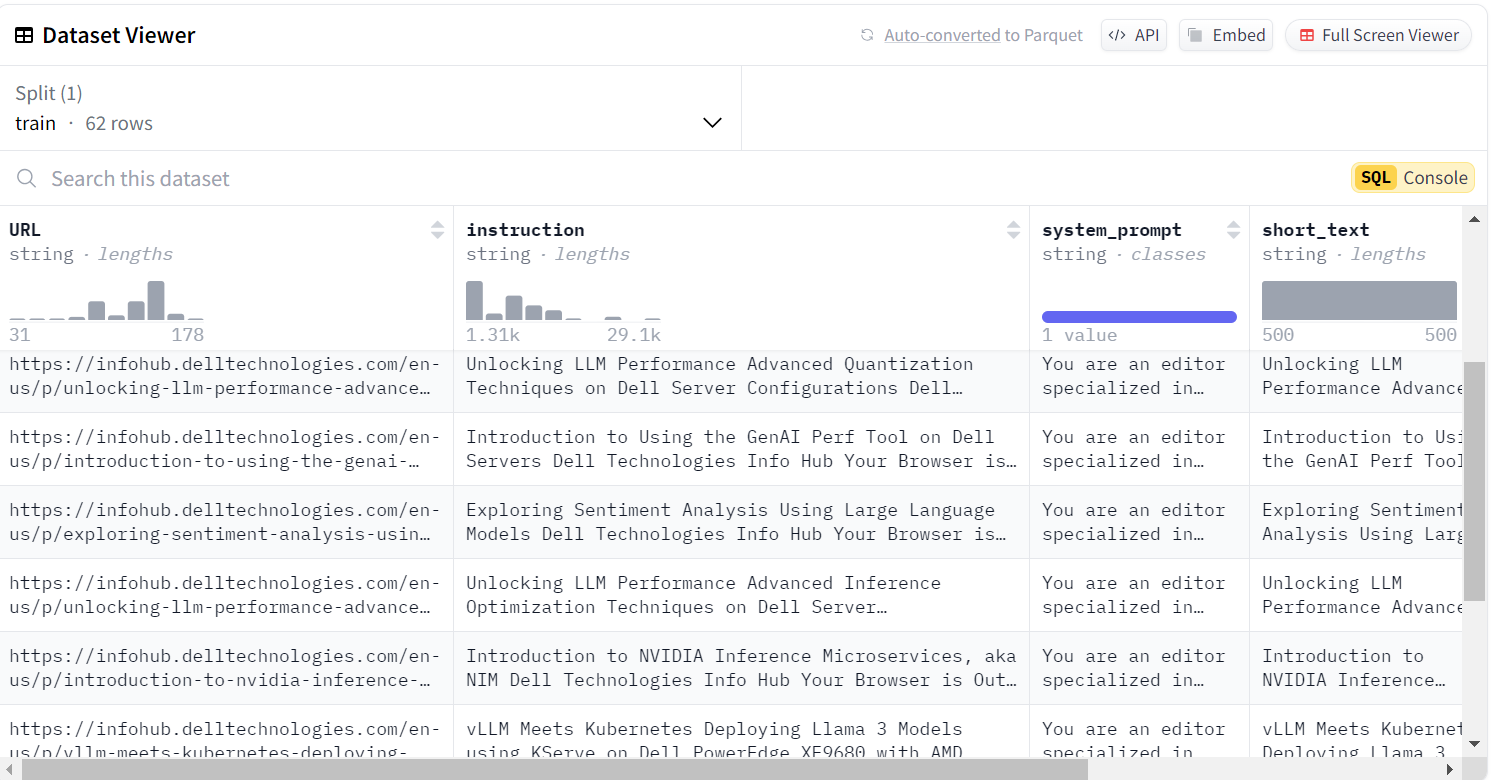

Our plan was to test the fine-tuning of one of the Llama 3.1 models with questions and answers extracted from this text, so we uploaded the raw text dataset to the Hugging Face hub, which made the next step easier to process with Distilabel. The first few rows of the dataset are shown here:

The LLM should rely primarily on the information supplied in the context of the above instruction. Models like the Llama 3.1 versions can produce high-quality, factually accurate questions and answers with sufficient prompting context, even for topics not well represented in the original training data. The output from the above step will fulfill the needs for the first two columns of the DPO training dataset.

This part of the dataset generation could be completed with no HITL; however, in a business-critical application, there would be a careful review using crowdsourcing and potentially multiple reviews with voting. For our project, we manually reviewed the final generated data prior to starting the fine-tuning.

Distilabel

The Distilabel (https://distilabel.argilla.io/latest/) framework was selected to generate questions and answers from the data collected from the URLs. Distilabel is a rich library that simplifies generating and rating of synthetic data. In this case, we will use a simple Distilabel pipeline to create questions using a local deployment of the Llama 8B model:

from distilabel.llms import InferenceEndpointsLLM

from distilabel.pipeline import Pipeline

from distilabel.steps import LoadDataFromHub

from distilabel.steps.tasks import TextGeneration

llama8B = InferenceEndpointsLLM(

api_key="hf_YOUR_API_KEY",

model_display_name="llama8B",

base_url="http://1.2.3.4:80", #Local model

generation_kwargs={

"max_new_tokens": 1024,

"temperature": 0.7

}

)

with Pipeline(name="synthetic-data-with-llama3.1") as pipeline:

# load dataset with Blog Data and prompts

load_dataset = LoadDataFromHub(

repo_id="ianr007/test-hf-blog"

)

# generate questions

generate = [

TextGeneration(llm=llama8B),

]

load_dataset >> generate

if __name__ == "__main__":

result = pipeline.run()

df = result['default']['train'].to_pandas()

print(df.head())

# write output file that will be used for the next phase

df.to_csv('distilabel_output.csv')In this snippet, a dataset is loaded and used to generate questions and answers. The critical elements of the Dataset are:

- The instruction column contains the contents of a Blog page.

- The system_prompt contains the following “You are an editor specialized in creating frequently asked questions. Create as many questions and answers as possible from the text provided.”

This was sufficient for the Llama 3.1 8B model to generate over 500 questions from the raw text content extracted from the blogs using the Distilabel pipeline.

Final Dataset

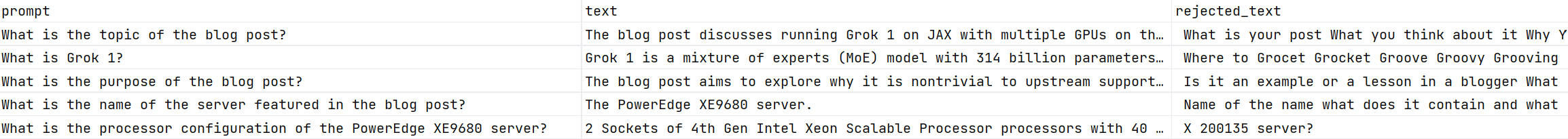

The next step was to parse the output into two columns, one for the questions and one for the answers. Since we chose to try Direct Preference Optimization (DPO) as the tool for fine-tuning, there was one more pre-processing step left.

In the previous step, the generated question was put into the prompt column (question), and the answer was put into the text column. The inputs for DPO with questions and answers need an additional column for an answer that is “less preferred” and must be labeled rejected_text.

The DPO process requires a dataset with the following layout:

The rejected_text column should contain answers to the questions considered worse than the answers we generated based on the blog knowledge, and we still need to generate those.

The dataset has over 500 questions. Creating these bad answers using a human-in-the-loop approach would take a considerable amount of time. The approach we tested was to use the TinyLlama (https://huggingface.co/TinyLlama/TinyLlama-1.1B-Chat-v1.0) model to generate answers to the questions. The premise was that it also did not have access to training data with knowledge of the Dell Enterprise Hub and would give poor answers. The answers produced were, in fact, poor.

For business-critical applications, a thorough review of the rejected answers should be performed as with the first stage. However, for our project, the final step was to manually review the dataset, remove any invalid questions, and ensure that no Null or empty values were present.

Hugging Face AutoTrain

AutoTrain is a no-code platform developed by Hugging Face to simplify training state-of-the-art models across multiple domains: Natural Language Processing (NLP), Computer Vision (CV), and even Tabular Data analysis. It is for organizations that want to train a state-of-the-art model for NLP, CV, Speech, or Tabular Data tasks but can't invest time learning all the technical details of training a model.

AutoTrain abstracts much of the complexity involved in model training, fine-tuning, and evaluation, offering a solution that automatically handles hyperparameter optimization, dataset processing, and model selection. AutoTrain automatically tunes hyperparameters like learning rate, batch size, and epochs, which can significantly impact model performance. This is done through techniques like grid search or Bayesian optimization.

AutoTrain includes a DPO Trainer specifically designed to facilitate the DPO process. This trainer helps optimize a reward function based on direct comparisons of different model outputs, making it easier to align models with human preferences.

Fine Tuning DEH containers

This example shows how to fine-tune a Dell Enterprise Hub model container using Docker. The command is based on the command:

https://dell.huggingface.co/authenticated/models/meta-llama/Meta-Llama-3.1-8b/train/docker

but was modified as follows to use a DPO trainer in place of SFT.

docker run \

--gpus 2 \ --shm-size 1g \ -v /home/ianr.dell/hf/data/cleaned_questions-8B.csv:/app/data/dataset.csv \ -v /home/ianr.dell/hf/output:/app/autotrain \ registry.dell.huggingface.co/enterprise-dell-training-meta-llama-meta-llama-3.1-8b \ --model /app/model \ --project-name fine-tune \ --data-path /app/data \ --trainer dpo \ --text-column text \ --rejected-text-column rejected_text \ --prompt-text-column prompt \ --epochs 3 \ --mixed_precision bf16 \ --batch-size 2 \ --peft \ --merge-adapter \ --quantization int4

This command uses the prepared dataset, maps the dataset columns, and trains for 3 Epochs. Obviously, these properties can be tuned per use case.

It is also possible to fine-tune using Kubernetes, but we will not cover those steps in this blog.

Testing the new model

In the previous step, we trained a model with a new dataset, so now it is time to test the model and see if the fine-tuning produced the expected result.

The first step is to launch the Bring Your Own Model (BYOM) container from the DEH. An example can be found at https://dell.huggingface.co/authenticated/models/meta-llama/Meta-Llama-3.1-8b/deploy-fine-tuned/docker . This special inference container will take the weights produced as a result of fine-tuning and automatically load them into the container. At this point, the container acts like a normal inference container with the normal endpoints exposed.

To test the new model, a simple curl command can be used:

curl 11.22.33.44:80/generate -X POST -d '{"inputs":"What is the Dell Enterprise Hub?","parameters":{"max_new_tokens":50}}' -H 'Content-Type: application/json'As mentioned above, the Llama 3.1 models without fine-tuning do not appear to have been trained with the Dell Enterprise Hub knowledge, which was released after the model training data collection cutoff date.

The result of the regular Llama 3.1 8B model was:

{"generated_text":" The Dell Enterprise Hub is a centralized management platform that provides a single pane of glass for managing Dell EMC storage, servers, and networking products. It offers a unified view of the entire IT infrastructure, enabling administrators to monitor, manage, and optimize their IT"}The result from the model, after being fine-tuned with information in the Dell Blogs is:

{"generated_text":"Dell Enterprise Hub is a self-service platform that enables you to easily provision, manage, and scale AI workloads on Dell"}This shows that when training with limited data and for as little as 3 Epochs, we can see a marked improvement in the fine-tuned model's question-answering capability. In addition, this was all done with synthetic data generation using the Llama 3.1 8B model, and it is expected to improve more if the 405B model is used. We plan to investigate this and discuss the results in a future blog.

Conclusion

In this project, we have shown how to generate your own synthetic data from either private or public content. We have also shown how to fine-tune a Llama model from the DEH, and the result is that the model can now answer domain-specific questions that were previously not possible.