Streaming for Edge Inferencing: Empowering Real-Time AI Applications

Tue, 06 Feb 2024 10:17:30 -0000

In the era of rapid technological advancements, artificial intelligence (AI) has made its way from research labs to real-world applications. Edge inferencing, in particular, has emerged as a game-changing technology, enabling AI models to make real-time decisions at the edge. To harness the full potential of edge inferencing, streaming technology plays a pivotal role, facilitating the seamless flow of data and predictions. In this blog, we will explore the significance of streaming for edge inferencing and how it empowers real-time AI applications.

The Role of Streaming

Streaming technology is a core component of edge inferencing because it allows for the continuous, real-time transfer of data between devices and edge servers. Streaming can take various forms, such as video streaming, sensor data streaming, and audio streaming, depending on the specific application's requirements. This real-time data flow enables AI models to make predictions and decisions on the fly, enhancing the overall user experience and system efficiency.

Typical Use Cases

Streaming for edge inferencing is already transforming various industries. Here are some examples:

- Smart cities—Edge inferencing powered by streaming technology can be used for real-time traffic management, crowd monitoring, and environmental sensing. This enables cities to become more efficient and responsive.

- Healthcare—Wearable devices and IoT sensors can continuously monitor patients, providing early warnings for health issues and facilitating remote diagnosis and treatment.

- Retail—Real-time data analysis at the edge can enhance customer experiences, optimize inventory management, and provide personalized recommendations to shoppers.

- Manufacturing—Predictive maintenance using edge inferencing can help factories avoid costly downtime by identifying equipment issues before they lead to failures.

Dell Streaming Data Platform

The Dell Streaming Data Platform (SDP) is a comprehensive solution for ingesting, storing, and analyzing continuously streaming data in real time. It provides a single platform that consolidates real-time, batch, and historical analytics for improved storage and operational efficiency.

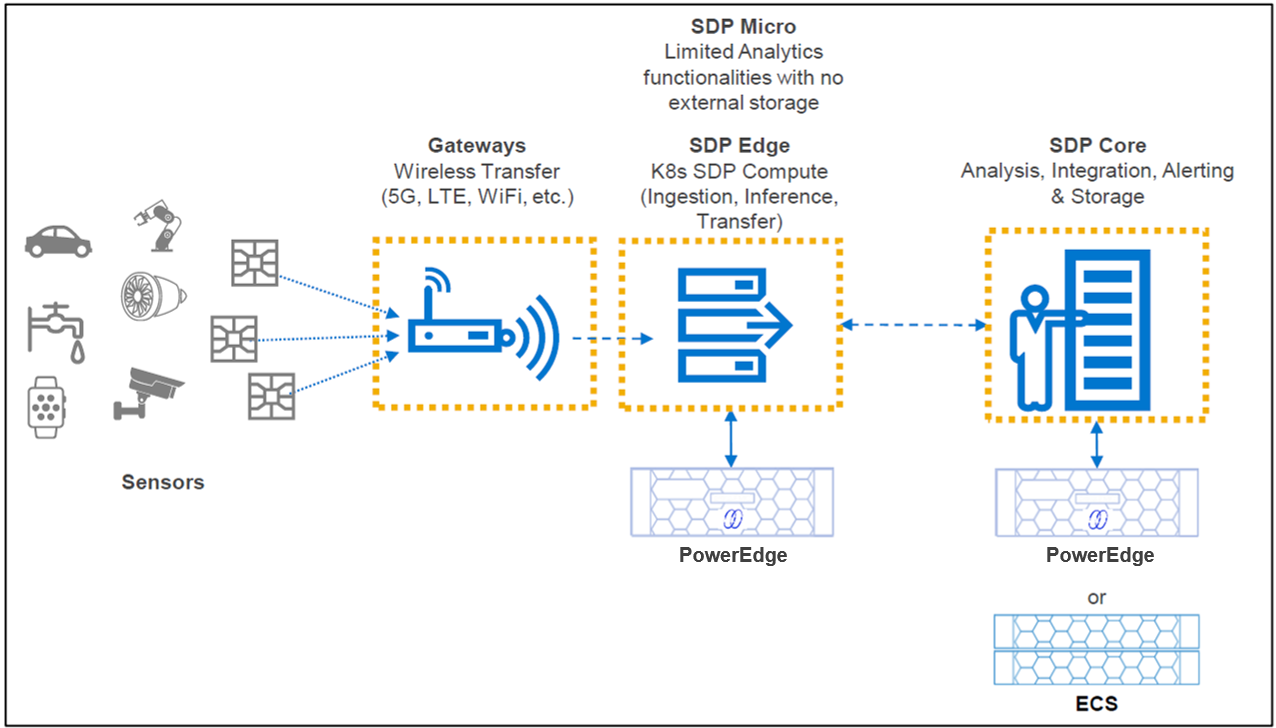

The following figure shows a high-level overview of the Streaming Data Platform architecture streaming data from the edge to the core.

Figure 1. SDP high-level architecture

Using SDP for Edge Video Ingestion Inferencing

At the edge, the key advantages of SDP are its low footprint and its ability to handle long-term data storage. Because the platform is built on Kubernetes, storage capacity grows by adding nodes. SDP tiers storage automatically as data is ingested, so historical data remains retrievable for analysis alongside real-time streaming data, and applications can keep drawing insights from it long after ingestion.

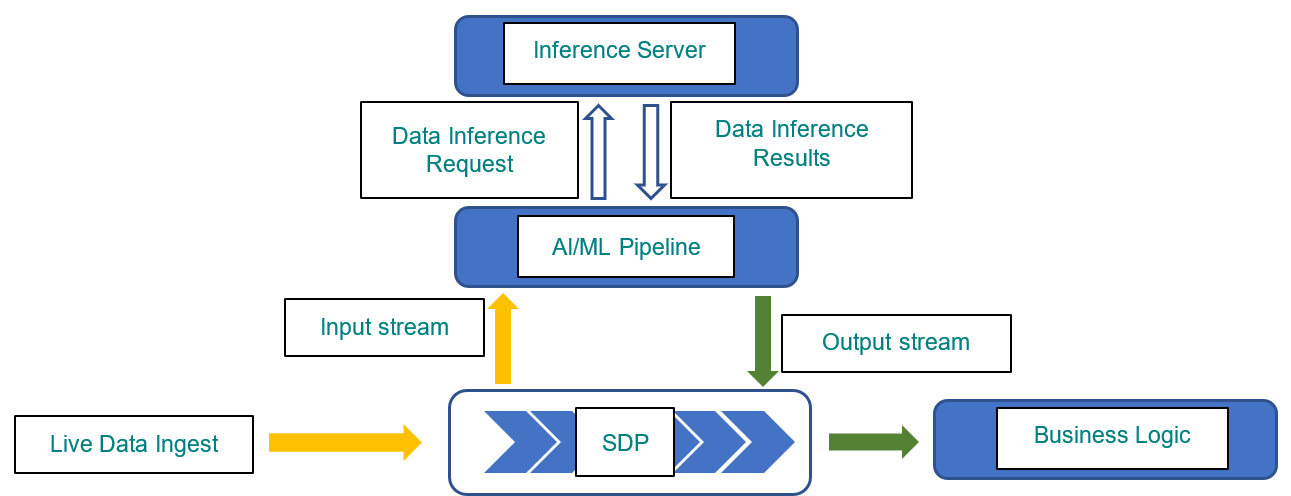

Another key advantage of SDP in edge deployments is its support for real-time inferencing for edge AI/ML applications as live data is ingested. Insights are available without delay, while the same data is persisted by SDP’s tiered-storage design, which provides long-term storage and data protection.

For computer vision use cases, SDP provides plugins for GStreamer, the popular open-source multimedia processing framework, that enable easy integration with GStreamer video analytics pipelines. For details, see GStreamer and GStreamer Plugins.

Figure 2. Edge Inferencing with SDP
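The GStreamer integration described above can be pictured as an ordinary pipeline whose last element writes into an SDP stream. The following Python sketch only assembles a `gst-launch-1.0` style pipeline description; it does not run GStreamer. The sink element name `pravegasink` and its `stream` and `controller` properties are assumptions based on the open-source Pravega GStreamer plugin, not something this article states, so check them against the plugin documentation for your SDP release. The stream name and controller address are placeholders.

```python
def build_ingest_pipeline(source, sink_element, sink_props):
    """Assemble a gst-launch-1.0 style pipeline description string.

    source:       GStreamer source element with its properties
    sink_element: name of the element that writes to the stream (assumed)
    sink_props:   dict of sink properties (assumed names and values)
    """
    sink = " ".join([sink_element] + [f"{k}={v}" for k, v in sink_props.items()])
    # Convert raw video, encode it as H.264, and mux it into MPEG-TS
    # before handing the buffers to the sink.
    stages = [source, "videoconvert", "x264enc tune=zerolatency", "mpegtsmux", sink]
    return " ! ".join(stages)


pipeline = build_ingest_pipeline(
    source="videotestsrc is-live=true",
    sink_element="pravegasink",                 # assumption: verify the element name
    sink_props={
        "stream": "examples/camera1",           # placeholder scope/stream
        "controller": "tcp://127.0.0.1:9090",   # placeholder controller address
    },
)
print(pipeline)
```

If the assumed element and property names match your installation, the printed string can be passed to `gst-launch-1.0` on a host where GStreamer and the SDP plugins are installed.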

Optimized for Deep Learning at Edge

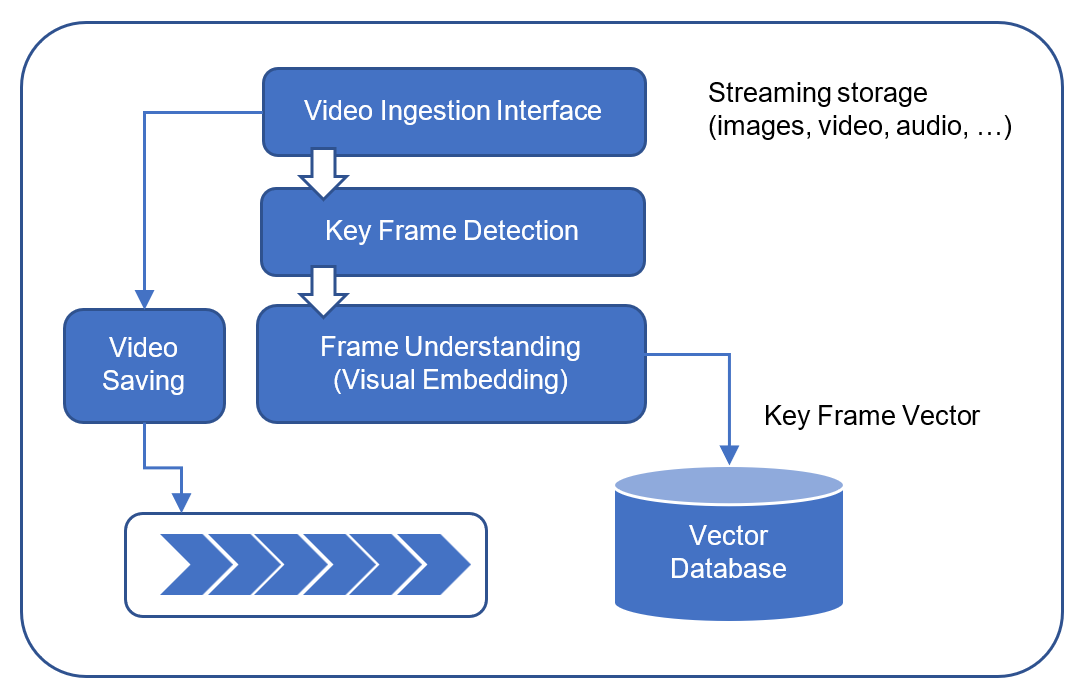

Figure 3. Using SDP for visual embedding of computer vision

SDP is also optimized to process streaming video at the edge by adding frame detection. Saving video frames, combined with an integrated vector database, makes it possible to handle video processing at the edge.

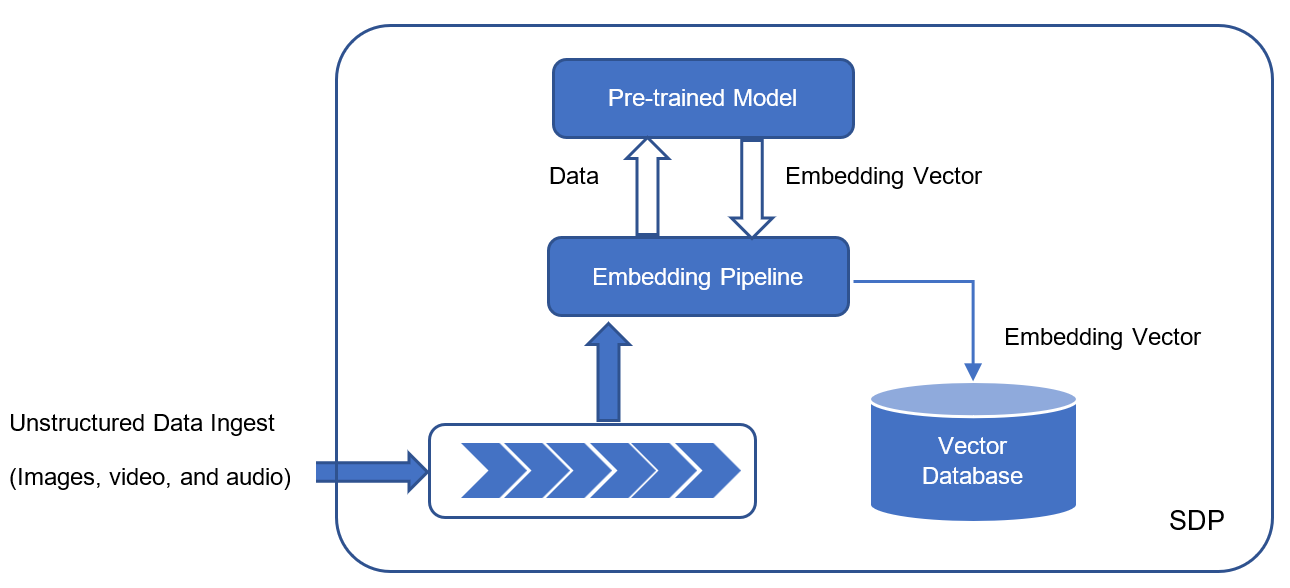

SDP is optimized for deep learning by providing semantic embedding of ingested data, especially for unstructured data such as images and videos. As unstructured data is ingested into SDP, it is processed by an embedding pipeline that uses pretrained models to extract semantic embeddings from the raw data and persists those embeddings in a vector database. These embeddings enable advanced data management capabilities and support GenAI applications. For example, they can provide domain-specific context for GenAI queries using Retrieval Augmented Generation (RAG).
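The embed, store, and retrieve flow described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not SDP's implementation: `embed` counts words from a small hypothetical vocabulary instead of calling a pretrained model, `VectorStore` is an in-memory stand-in for a vector database, and the frame IDs and captions are invented for the example.

```python
import math

# Hypothetical fixed vocabulary; a real pipeline would use a pretrained model.
VOCAB = ["forklift", "person", "helmet", "truck", "conveyor"]


def embed(text):
    """Toy embedding: count vocabulary words, then L2-normalize."""
    tokens = text.lower().split()
    vec = [float(tokens.count(word)) for word in VOCAB]
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


class VectorStore:
    """Minimal in-memory vector database with cosine-similarity search."""

    def __init__(self):
        self._items = []  # list of (item_id, unit_vector)

    def add(self, item_id, vector):
        self._items.append((item_id, vector))

    def search(self, query_vector, k=2):
        # Vectors are unit length, so the dot product is the cosine similarity.
        scored = [
            (item_id, sum(a * b for a, b in zip(vec, query_vector)))
            for item_id, vec in self._items
        ]
        return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


store = VectorStore()
captions = {
    "frame-001": "person wearing helmet near conveyor",
    "frame-002": "truck and forklift at loading dock",
    "frame-003": "person next to forklift",
}
for frame_id, caption in captions.items():
    store.add(frame_id, embed(caption))

hits = store.search(embed("person without helmet"), k=2)
# Retrieved captions become the context passed to a GenAI model (the RAG step).
context = [captions[frame_id] for frame_id, _ in hits]
print(context)
```

The retrieval step returns the two frames whose embeddings are closest to the query. In a RAG setup, that retrieved context would be prepended to the prompt sent to the generative model.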

Optimized for Edge AI

As AI/ML applications are becoming more widely adopted at the edge, SDP is ideally suited to support these applications by enabling real-time inference at the edge. Compared to traditional edge AI applications where data is transported to the cloud or core for inferencing, SDP can provide real-time inference right at the edge when live data is ingested so that inference latency can be greatly reduced.

In addition, SDP embraces rapidly emerging deep learning and GenAI applications by providing advanced data semantic embedding extraction and embedding vector storage, especially for unstructured multimedia data such as audio, image, and video data.

Figure 4. Unstructured data embedding vector generation

Long-Term Storage

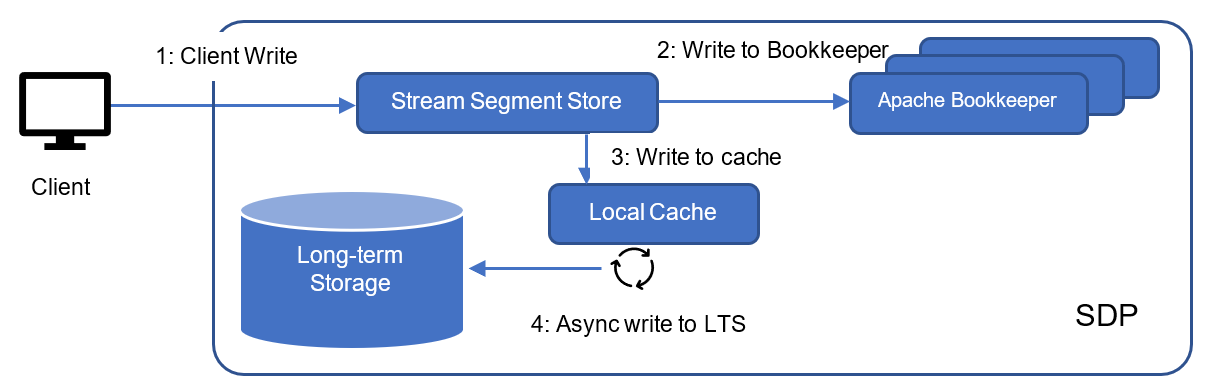

SDP is designed with an innovative tiered-storage architecture. Tier-1 provides high-performance local storage, while tier-2 provides long-term storage. Specifically, tier-1 storage is provided by replicated Apache BookKeeper backed by local storage, which guarantees data durability once data is ingested into SDP. Data is then flushed asynchronously to tier-2 long-term storage, such as Dell’s PowerScale, to provide unlimited data storage and data protection. Alternatively, on NativeEdge, replicated Longhorn storage can be used as long-term storage for SDP. With long-term data storage, analytics applications can consume unbounded data to gain insights over a long period of time.

The following figure illustrates the long-term storage architecture in SDP.

Figure 5. SDP long-term storage
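The tiering behavior described in this section can be illustrated with a small Python model. It is a sketch under simplifying assumptions, not SDP code: the class name `TieredLog` and its methods are hypothetical, the flush is an explicit call rather than an asynchronous background task, and replication is omitted.

```python
from collections import deque


class TieredLog:
    """Toy two-tier event log.

    tier1: small, fast buffer holding the most recent events
    tier2: unbounded long-term store holding every flushed event
    """

    def __init__(self, tier1_capacity):
        self.tier1_capacity = tier1_capacity
        self.tier1 = deque()   # (offset, event) pairs
        self.tier2 = []        # list index equals event offset
        self.next_offset = 0

    def append(self, event):
        """Acknowledge a write as soon as it is held in tier-1."""
        offset = self.next_offset
        self.tier1.append((offset, event))
        self.next_offset += 1
        return offset

    def flush(self):
        """Copy unflushed events to tier-2, then trim tier-1 to capacity."""
        for offset, event in self.tier1:
            if offset >= len(self.tier2):
                self.tier2.append(event)
        # Everything in tier-1 is now also in tier-2, so eviction loses nothing.
        while len(self.tier1) > self.tier1_capacity:
            self.tier1.popleft()

    def read_from(self, offset):
        """Read historical events from tier-2 and the unflushed tail from tier-1."""
        flushed = len(self.tier2)
        tail = [event for off, event in self.tier1 if off >= max(offset, flushed)]
        return self.tier2[offset:] + tail


log = TieredLog(tier1_capacity=2)
for i in range(5):
    log.append(f"event-{i}")
log.flush()             # in SDP this step happens asynchronously
log.append("event-5")   # not yet flushed, served from tier-1
print(log.read_from(4))
```

A reader sees one continuous stream regardless of which tier holds a given event, which is what lets analytics applications combine historical and live data.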

Cloud Native

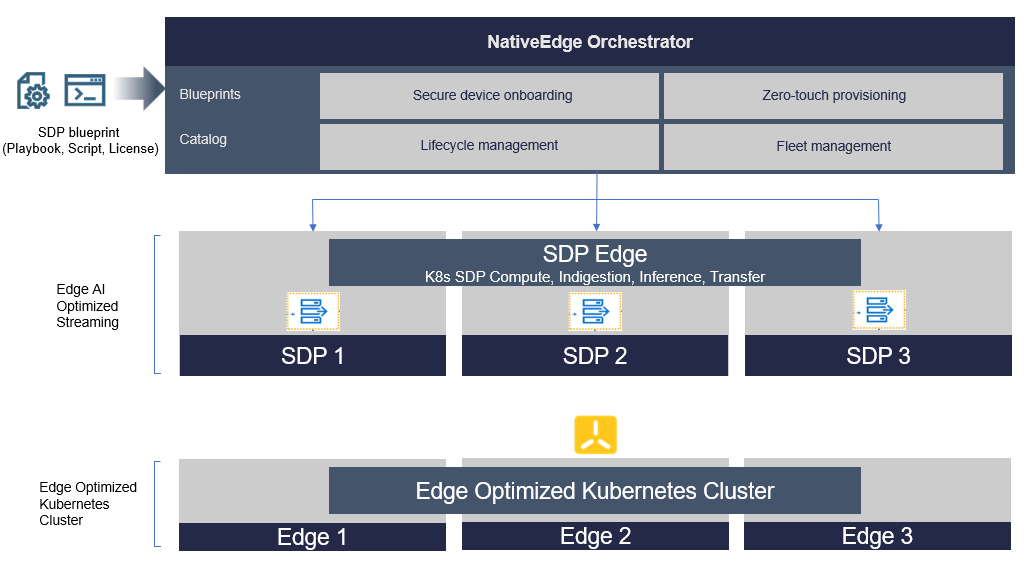

SDP is fully cloud-native in its design with distributed architecture and autoscaling. SDP can be readily deployed on any Kubernetes environment in the cloud or on-premises. On NativeEdge, SDP is deployed on a K3s cluster by the NativeEdge Orchestrator. In addition, SDP can be easily scaled up and down as the data ingestion rate and application workload vary. This makes SDP flexible and elastic in different NativeEdge deployment scenarios. For example, SDP can leverage Kubernetes and autoscale its stream segment stores to adapt to changing data ingestion rates.
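As a rough illustration of scaling segment stores with the ingestion rate, the sketch below computes a replica count from an observed rate. It assumes a simple proportional rule with a per-replica target and clamping bounds, similar in spirit to how Kubernetes autoscaling sizes a workload from a metric. The function name, the target of 1000 events per second per replica, and the bounds are all hypothetical and do not describe SDP's actual policy.

```python
import math


def desired_segment_stores(ingest_rate, target_rate_per_store,
                           min_stores=1, max_stores=10):
    """Return how many segment store replicas to run for a given ingest rate.

    ingest_rate:           observed events per second across the cluster
    target_rate_per_store: events per second one replica should handle (assumed)
    """
    if target_rate_per_store <= 0:
        raise ValueError("target_rate_per_store must be positive")
    needed = math.ceil(ingest_rate / target_rate_per_store)
    # Clamp so the cluster never scales to zero or beyond its node budget.
    return max(min_stores, min(max_stores, needed))


for rate in (200, 4500, 50000):
    print(rate, desired_segment_stores(rate, target_rate_per_store=1000))
```

The lower bound keeps at least one replica running during quiet periods, and the upper bound reflects the limited hardware typically available at an edge site.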

Automating the Deployment of SDP on Dell NativeEdge

Dell NativeEdge is an edge operations software platform that helps customers securely scale their edge operations to power any use case. It streamlines edge operations at scale through centralized management, secure device onboarding, zero-touch deployment, and automated management of infrastructure and applications. With automation, open design, zero-trust security principles, and multicloud connectivity, NativeEdge empowers businesses to attain their desired outcomes at the edge.

Dell NativeEdge provides several features that make it ideal for deploying SDP on the edge, including:

- Centralized management—Remotely manage your entire edge estate from a central location without requiring local, skilled support.

- Secure device onboarding with zero-touch provisioning—Automate the deployment and configuration of the edge infrastructure managed by NativeEdge, while ensuring a zero-trust chain of custody.

- Zero-trust security enabling technologies—From secure component verification (SCV) to secure operating environment with advanced security control to tamper-resistant edge hardware and software integrity, NativeEdge secures your entire edge ecosystem throughout the lifecycle of devices.

- Lifecycle management—NativeEdge allows complete lifecycle management of your fleet of edge devices as well as applications.

- Multicloud app orchestration—NativeEdge provides templatized application orchestration using blueprints. It also provides the flexibility to choose the ISV application and cloud environments for your edge application workloads.

Deploying SDP as a Cloud Native Service on Top of a NativeEdge-Enabled Kubernetes Cluster

In a previous blog, we showed how to turn edge devices into a Kubernetes cluster using NativeEdge Orchestrator. That step creates the foundation that allows us to deploy any edge service through a standard Kubernetes Helm package.

Figure 6. Deploying SDP solution on NativeEdge-enabled Kubernetes

The Deployment Process

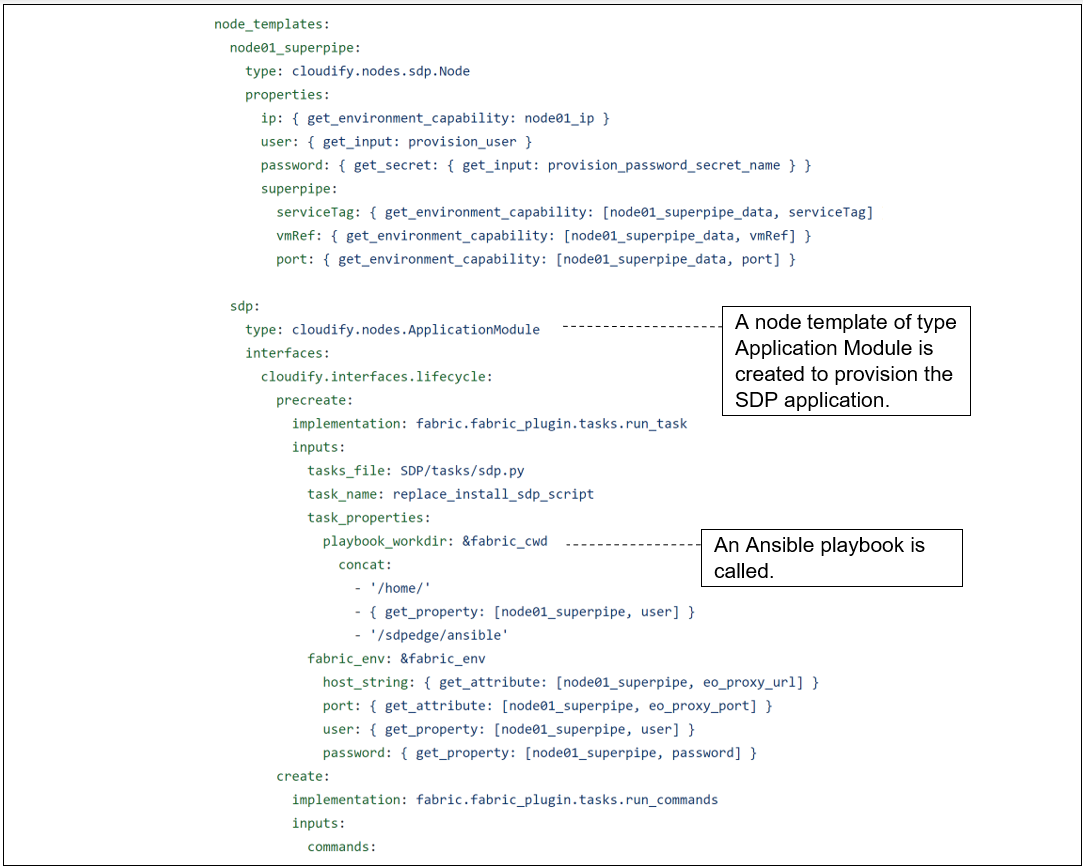

SDP is built as a cloud native service. The deployment of SDP includes a set of microservices as well as Ansible playbooks to automate the configuration management of those services.

The main blueprint that deploys the SDP app is shown in the following figure. It is a TOSCA node definition of an Application Module type, and it invokes an Ansible playbook to configure and start the deployment process.

Figure 7. Deploying SDP App

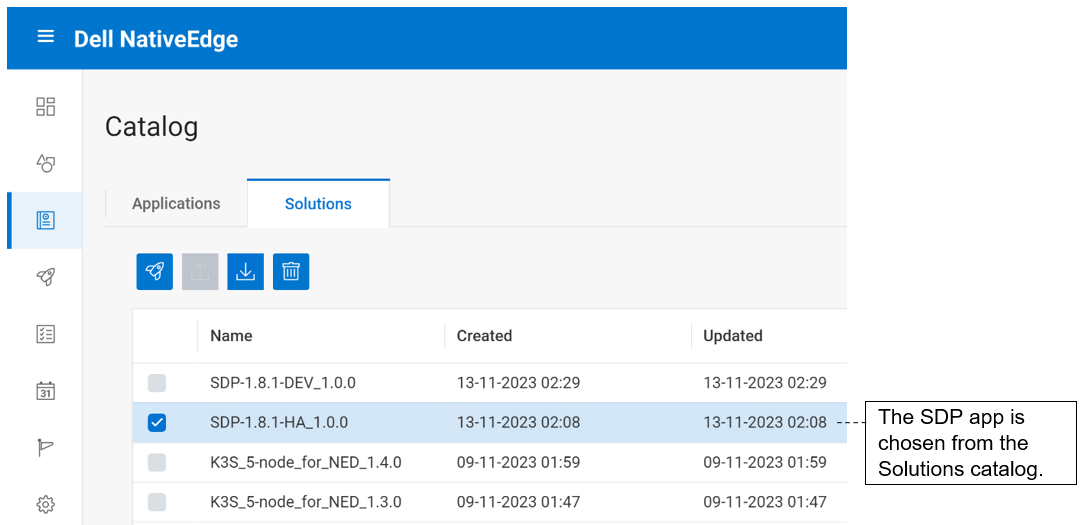

For the deployment, SDP is one of the available services we can choose from the NativeEdge Catalog under the Solutions tab. In the following figure, an HA SDP service is deployed on top of a Kubernetes cluster.

Figure 8. Select the SDP service from the NativeEdge Catalog

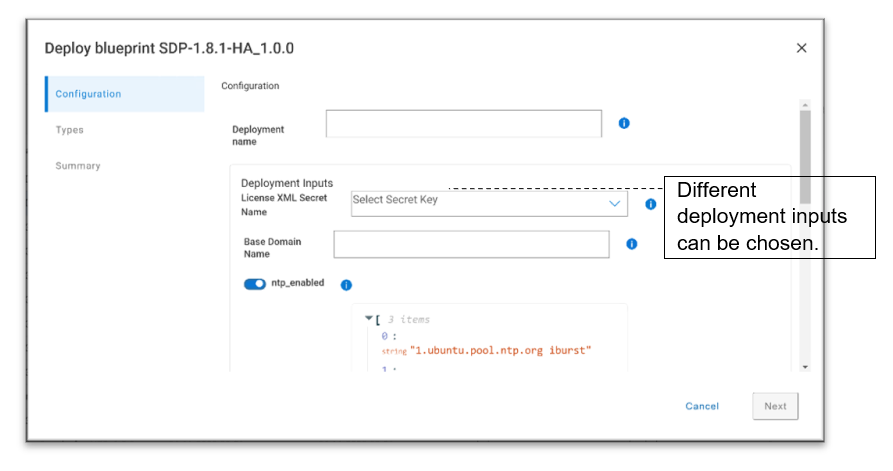

As part of the deployment process, we can provide input parameters, as shown in the following figure. In this case, we provide configuration parameters that can vary from one edge location to another. We use the same blueprint definition with different deployment parameters to accommodate different edge requirements, such as location-specific settings and configurations.

Figure 9. Deploy the SDP blueprint and enter the inputs
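The idea of reusing one blueprint with different inputs per edge location can be sketched as a merge of shared defaults with site-specific overrides. The input names and site names below are hypothetical placeholders chosen for illustration; the real input names are defined by the SDP blueprint.

```python
# Hypothetical blueprint inputs; real names come from the SDP blueprint itself.
DEFAULT_INPUTS = {
    "namespace": "sdp",
    "segment_store_replicas": 3,
    "long_term_storage": "longhorn",
}


def resolve_inputs(defaults, site_overrides):
    """Merge per-site overrides into the shared defaults without mutating them."""
    unknown = set(site_overrides) - set(defaults)
    if unknown:
        raise KeyError(f"unknown blueprint inputs: {sorted(unknown)}")
    return {**defaults, **site_overrides}


sites = {
    "factory-a": {"segment_store_replicas": 1},
    "warehouse-b": {"long_term_storage": "powerscale"},
}
for site, overrides in sites.items():
    print(site, resolve_inputs(DEFAULT_INPUTS, overrides))
```

Rejecting unknown keys catches typos in a site's overrides before a deployment starts, which matters when the same definition is rolled out to many locations.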

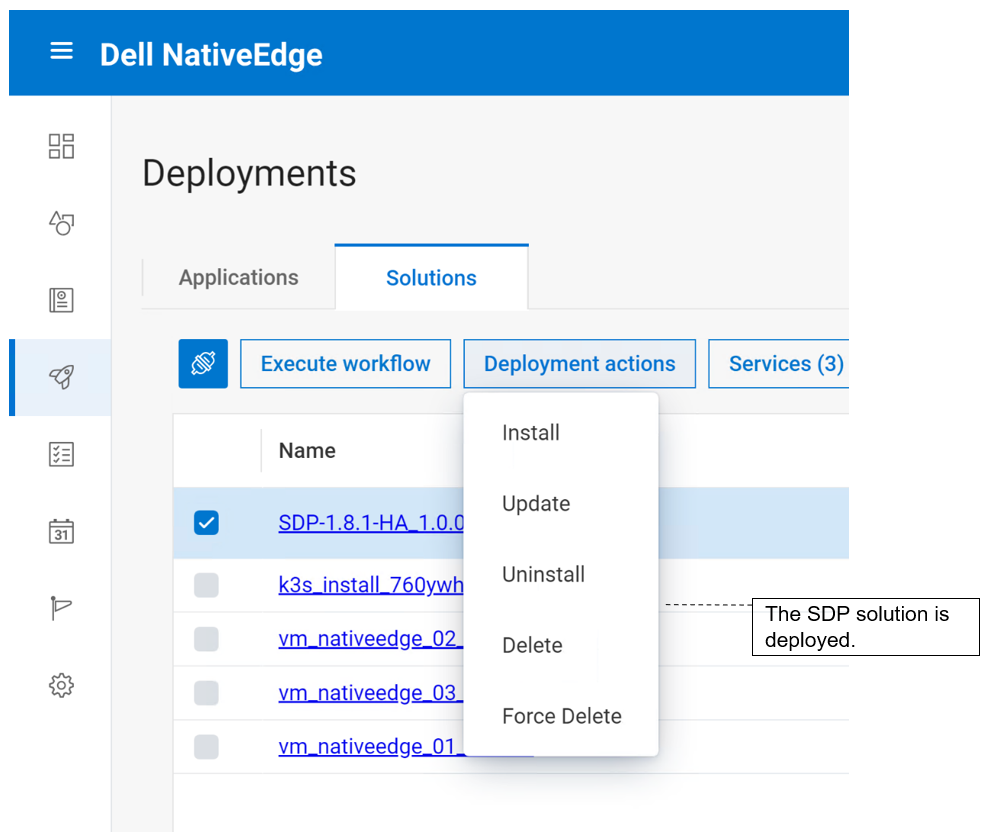

The SDP service is then deployed, as shown in the following figure.

Figure 10. Create an SDP instance by performing the install workflow

Benefits of Streaming Services

Streaming services are a critical part of any edge inferencing solution and come with the following benefits:

- Reduced latency—Streaming ensures that data is processed when it is generated. This minimal delay is crucial for applications where even a few milliseconds can make a significant difference, such as when autonomous vehicles need to react quickly to changing road conditions.

- Enhanced privacy—By processing data at the edge, streaming minimizes the need to send sensitive information to the cloud for processing. This enhances user privacy and security, which is a critical consideration in applications like healthcare and smart homes.

- Improved scalability—Streaming can efficiently handle large volumes of data generated by edge devices, making it a scalable solution for applications that involve multiple devices or sensors.

- Real-time decision making—Streaming enables AI models to make decisions in real time, which is vital for applications like predictive maintenance in industrial settings or emergency response systems.

- Cost efficiency—By performing inferencing at the edge, streaming reduces the need for continuous cloud processing, which can be costly. This approach optimizes resource utilization and lowers costs.

- Adaptability—Streaming is flexible and adaptable, making it suitable for a wide range of applications. Whether it is processing visual data from cameras or analyzing sensor data, streaming can be customized to meet specific needs.

NativeEdge Support for Edge AI-Enabled Streaming Through SDP Integration

NativeEdge comes with built-in support for SDP, which provides an edge-optimized streaming solution for edge use cases such as video inferencing.

NativeEdge is a great choice for edge AI-enabled streaming because:

- It is optimized for edge AI data processing

- It has long-term storage

- It is cloud native

- It has a low footprint

Optimized for NativeEdge

SDP lifecycle management is fully automated on NativeEdge Orchestrator. SDP is available as a solution in the NativeEdge Catalog. To deploy an instance of SDP on NativeEdge, a customer selects SDP from the NativeEdge Catalog under the Solutions tab and triggers the SDP deployment. The SDP blueprint deploys an SDP cluster on NativeEdge Endpoints. Once SDP is deployed, its ongoing day-two operations are also managed by NativeEdge Orchestrator, providing a seamless experience for customers.

NativeEdge also comes with a fully optimized stack for handling AI workloads through support for integrated accelerators, using GPU passthrough, SR-IOV, and so on.

Support for Custom Streaming Platform

NativeEdge provides an open platform that plugs into your custom streaming platform through its support for Kubernetes and blueprints, which are an integrated part of NativeEdge Orchestrator.
