From Bare-Metal Edge Devices to a Full-Blown Kubernetes Cluster

Tue, 02 Jan 2024 09:45:00 -0000


Deploying a Kubernetes cluster on the edge involves setting up a lightweight, efficient Kubernetes (K8s) environment suitable for edge computing scenarios. Edge computing often involves deploying clusters in remote or resource-constrained locations, such as remote data centers, or even on on-premises hardware in locations with limited connectivity.

This blog describes the steps for deploying an edge-optimized Kubernetes cluster on Dell NativeEdge.

Step 1: Select an Edge-Optimized Kubernetes Stack

Our Kubernetes stack consists of a Kubernetes controller, storage, and a virtual IP (also known as a load balancer). Open-source components were our first choice.

Standard K8s has a relatively high resource footprint, which doesn’t fit low-cost functional edge use cases. This is why MicroK8s, K3s, k0s, KubeVirt, Virtlet, and Krustlet have emerged as smaller-footprint variants of Kubernetes.

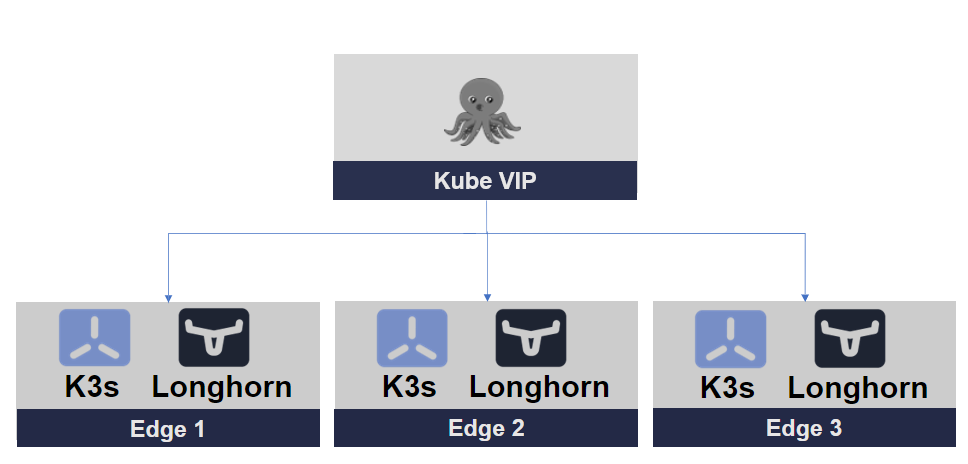

We have chosen K3s as our Kubernetes cluster, Longhorn for storage, and Kube-VIP for our cluster networking.

Figure 1. Edge-Optimized Kubernetes Stack

The following sections provide a quick overview of each element in the stack.

Edge-Optimized Kubernetes Cluster

Edge is a constrained environment that is often limited by resource capacity.

K3s is a lightweight, certified Kubernetes distribution designed for resource-constrained environments, including edge computing scenarios. It's an excellent choice for deploying Kubernetes clusters on the edge due to its reduced resource requirements and simplified installation process.

K3s Key Features:

- Minimal resource usage—K3s is designed to have a small memory and CPU footprint. It can run on devices with as little as 512MB of RAM and is suitable for single-node setups.

- Reduced dependencies—K3s eliminates many of the dependencies that are present in a full Kubernetes cluster, resulting in a smaller installation size and simplified management. It uses SQLite as the default database, for example, instead of etcd.

- Lightweight images—K3s uses lightweight container images, which further reduces its overall size. It includes only the necessary components to run a Kubernetes cluster.

- Single binary—K3s is distributed as a single binary, making it easy to install and manage. This binary includes both the server and agent components of a Kubernetes cluster.

- Highly compressed artifacts—K3s uses highly compressed artifacts, including container images and binary files, to reduce disk space usage.

- Reduced network overhead—K3s can operate in network-constrained environments, making it suitable for edge computing scenarios.

- Efficient updates—K3s is designed to handle updates efficiently, ensuring that the cluster stays small and doesn't accumulate unnecessary data.

Edge-Optimized Storage

Longhorn is an open-source, cloud-native distributed storage system for Kubernetes. It is designed to provide persistent storage for containerized applications in Kubernetes environments.

Longhorn Key Features:

- Distributed block storage—Longhorn offers distributed block storage that can be used as persistent storage for applications running in Kubernetes pods. It uses a combination of block devices on worker nodes to create distributed storage volumes.

- Data redundancy—Longhorn incorporates data redundancy mechanisms such as replication and snapshots to ensure data integrity and high availability. This means that even if a node or volume fails, data is not lost.

- Kubernetes-native—Longhorn is designed specifically for Kubernetes and integrates seamlessly with it. It is implemented as a custom resource definition (CRD) within Kubernetes, making it a first-class citizen in the Kubernetes ecosystem.

- User-friendly UI—Longhorn provides a user-friendly web-based management interface for users to easily create and manage storage volumes, snapshots, and backups. This simplifies storage management tasks.

- Backup and restore—Longhorn offers a built-in backup and restore feature, enabling users to take snapshots of their data and restore them when needed. This is crucial for disaster recovery and data protection.

- Cross-cluster replication—Longhorn has features for replicating data across different Kubernetes clusters, providing data availability and disaster recovery options.

- Lightweight and resource-efficient—Longhorn is resource-efficient and lightweight, making it suitable for various environments, including edge computing, where resource constraints may exist.

- Open source and community-driven—Longhorn is an open-source project with an active community, which means it receives regular updates and improvements.

- Cloud-native storage solutions—It is well-suited for stateful applications, databases, and other workloads that require persistent storage in Kubernetes, offering a cloud-native approach to storage.

Kube-VIP (Load Balancer)

Kubernetes Virtual IP (Kube-VIP) is an open-source tool for providing high availability and load balancing within Kubernetes clusters. It manages a virtual IP address associated with services, ensuring continuous access to services, load balancing, and resilience to node failures.

Kube-VIP Key Features:

- Virtual IP (VIP)—Kube-VIP manages a virtual IP address, which is associated with a Kubernetes service. This IP address can be used to access the service, and Kube-VIP ensures that the traffic is directed to healthy pods and nodes.

- High availability—Kube-VIP supports high-availability configurations, allowing it to function even when nodes or control plane components fail. It can automatically detect node failures and reroute traffic to healthy nodes.

- Load balancing—Kube-VIP provides load-balancing capabilities for services, distributing incoming traffic among multiple pods for the same service. This helps distribute the load evenly and improve the service's availability.

- Support for various load-balancing algorithms—Kube-VIP supports multiple load-balancing algorithms, such as round-robin, least connections, and more, allowing you to choose the most suitable strategy for your services.

- Integration with Kubernetes—Kube-VIP is designed to work seamlessly with Kubernetes clusters and leverages Kubernetes resources to configure and manage the virtual IP and load balancing.

- Customizable configuration—Kube-VIP provides configuration options to fine-tune its behavior based on specific cluster requirements.

- Support for multiple load-balancer implementations—Kube-VIP can be used with different load-balancer implementations, including Border Gateway Protocol (BGP) and other network load-balancing solutions.
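The load-balancing and failover behavior described in this list can be illustrated with a toy example. The following Python sketch is a generic round-robin balancer that skips unhealthy backends. It is not Kube-VIP code, and the class name, method names, and node names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy round-robin balancer that skips backends marked as down."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)

    def mark_down(self, backend):
        # Simulates a failed health check for one backend.
        self.healthy.discard(backend)

    def mark_up(self, backend):
        if backend in self.backends:
            self.healthy.add(backend)

    def next_backend(self):
        # One full lap around the ring is enough to find a healthy backend.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends")


lb = RoundRobinBalancer(["node-1", "node-2", "node-3"])
first_lap = [lb.next_backend() for _ in range(3)]
lb.mark_down("node-2")  # simulate a node failure
after_failure = [lb.next_backend() for _ in range(4)]
print(first_lap)
print(after_failure)
```

After `node-2` is marked down, traffic alternates between the two remaining nodes, which is the kind of rerouting the high-availability bullet describes.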

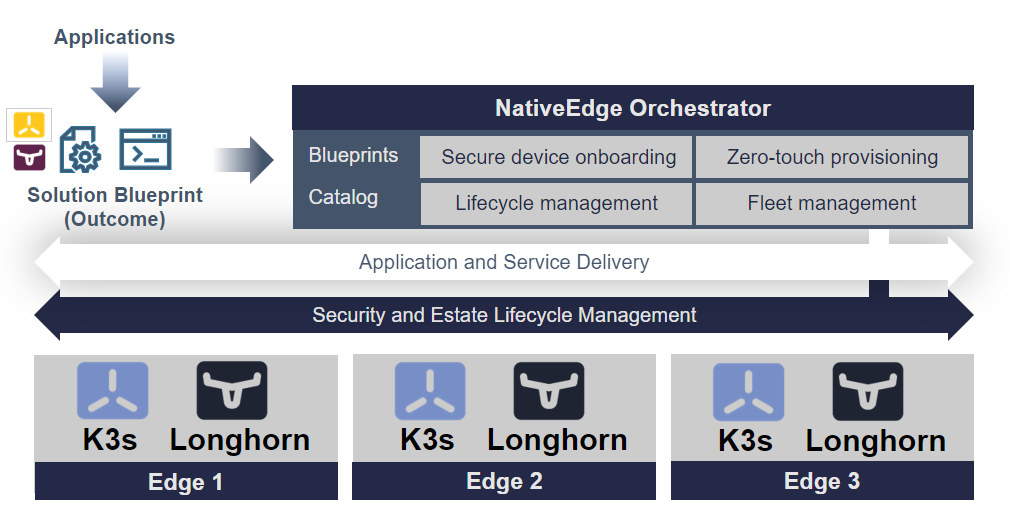

Step 2: Automating the Deployment of Edge Kubernetes on Dell NativeEdge

To automate the deployment of Edge Kubernetes on NativeEdge, we need to automate the deployment of all three components of our edge architecture.

For that purpose, we use the NativeEdge Orchestrator blueprint. The blueprint provides the automation scheme for each component and allows us to compose a solution offering an end-to-end automation of the entire cluster on all its components.

Figure 2. Automating the Kubernetes Cluster Deployment

Step 3: Deployment and Configuration

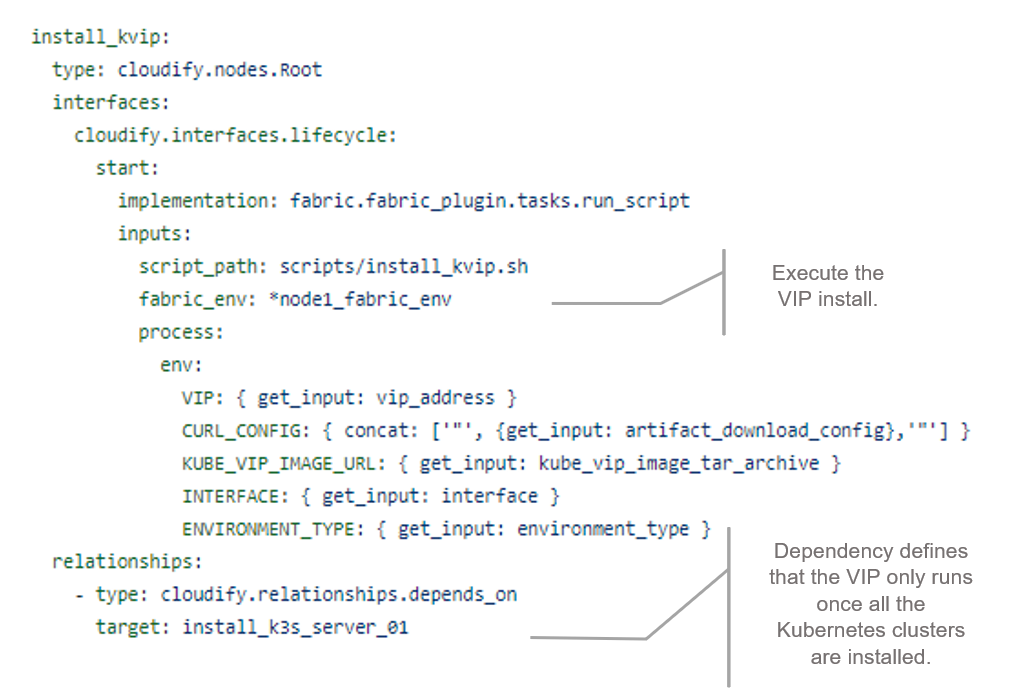

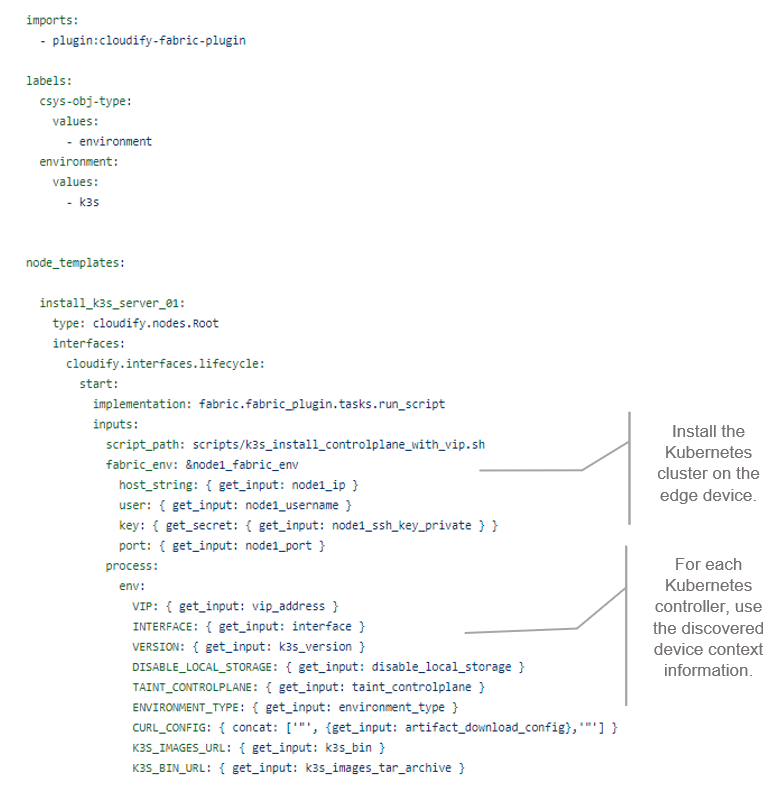

The following snippets show the blueprint for each of the three components that were previously described. A blueprint is a form of infrastructure as code (IaC) written in YAML format. Each blueprint uses a different automation plugin that fits each unit.

The first snippet shows the provisioning of a virtual IP address (VIP) that serves as the cluster entry point to the outside world. As with any load-balancer, it provides a single VIP address for all three nodes in the cluster. In this case, we chose a fabric plugin (SSH script) to automate the installation and configuration of that VIP service (scripts/install_kvip.sh).

Figure 3. VIP Blueprint Snippet

The second snippet shows the provisioning of the K3s cluster. It comes in multiple configuration flavors: a single node, or a three-node or five-node HA cluster. We provision the first node and then, in the case of a multi-node cluster, provision the rest of the nodes. All of the nodes form a cluster and result in an HA solution.
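The "first node, then the rest" ordering can be sketched outside the blueprint. The following Python example builds the install commands for each node using the installer URL and the `--cluster-init` and `--server` options from the public K3s documentation. It is an illustration only and may differ from what the NativeEdge blueprint actually runs. The function name, IP addresses, and token are hypothetical.

```python
def k3s_install_commands(node_ips, token):
    """Return (ip, command) pairs in the order the nodes should be provisioned."""
    if not node_ips:
        raise ValueError("at least one node is required")
    installer = f"curl -sfL https://get.k3s.io | K3S_TOKEN={token} sh -s - server"
    first_ip, rest = node_ips[0], node_ips[1:]
    if not rest:
        # Single-node flavor: a plain server install.
        return [(first_ip, installer)]
    # The first node initializes the HA cluster.
    commands = [(first_ip, f"{installer} --cluster-init")]
    # Remaining nodes join through the first node's API endpoint.
    for ip in rest:
        commands.append((ip, f"{installer} --server https://{first_ip}:6443"))
    return commands


# Hypothetical addresses and token, for illustration only.
for ip, command in k3s_install_commands(
    ["10.0.0.11", "10.0.0.12", "10.0.0.13"], "example-token"
):
    print(ip, "->", command)
```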

Figure 4. K3s Blueprint Snippet

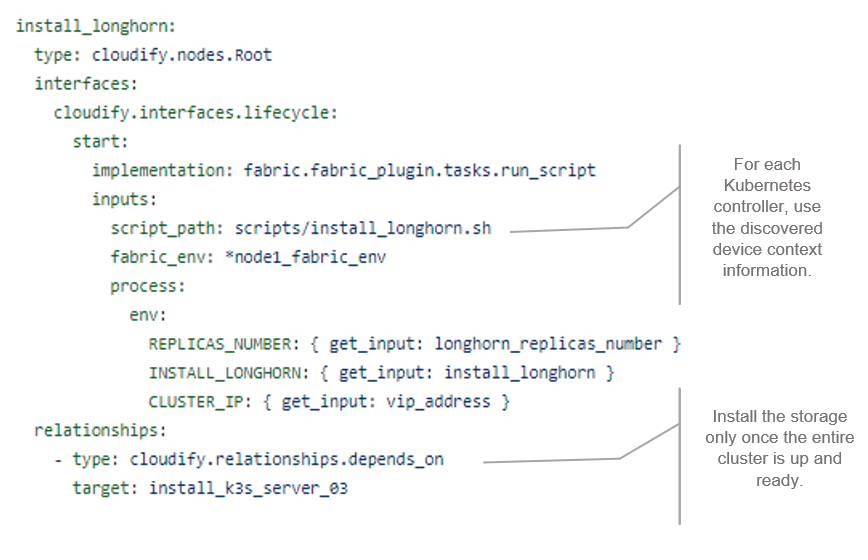

The third snippet shows the provisioning of Longhorn, a cloud-native HA distributed block storage system for Kubernetes. It is optional, and the user can decide, using inputs, whether to add HA storage to the cluster. Longhorn creates replicas of the data on other nodes' volumes in the cluster, so if a node fails, you still have the other replicas.
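The replica idea can be shown with a deliberately simplified model. The following Python sketch places one replica per node and checks whether a volume is still readable after node failures. It is not Longhorn's scheduler, and the function names and placement policy are assumptions made for illustration.

```python
def place_replicas(nodes, replica_count):
    """Place each replica on a different node (simplified, hypothetical policy)."""
    if replica_count > len(nodes):
        raise ValueError("not enough nodes for the requested replica count")
    return set(nodes[:replica_count])


def volume_available(replica_nodes, failed_nodes):
    """In this model, a volume stays readable while one replica is on a live node."""
    return bool(replica_nodes - set(failed_nodes))


replicas = place_replicas(["node-1", "node-2", "node-3"], replica_count=3)
print(volume_available(replicas, ["node-1"]))                      # one node lost
print(volume_available(replicas, ["node-1", "node-2", "node-3"]))  # all nodes lost
```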

Figure 5. Storage (Longhorn) Helm Chart Blueprint

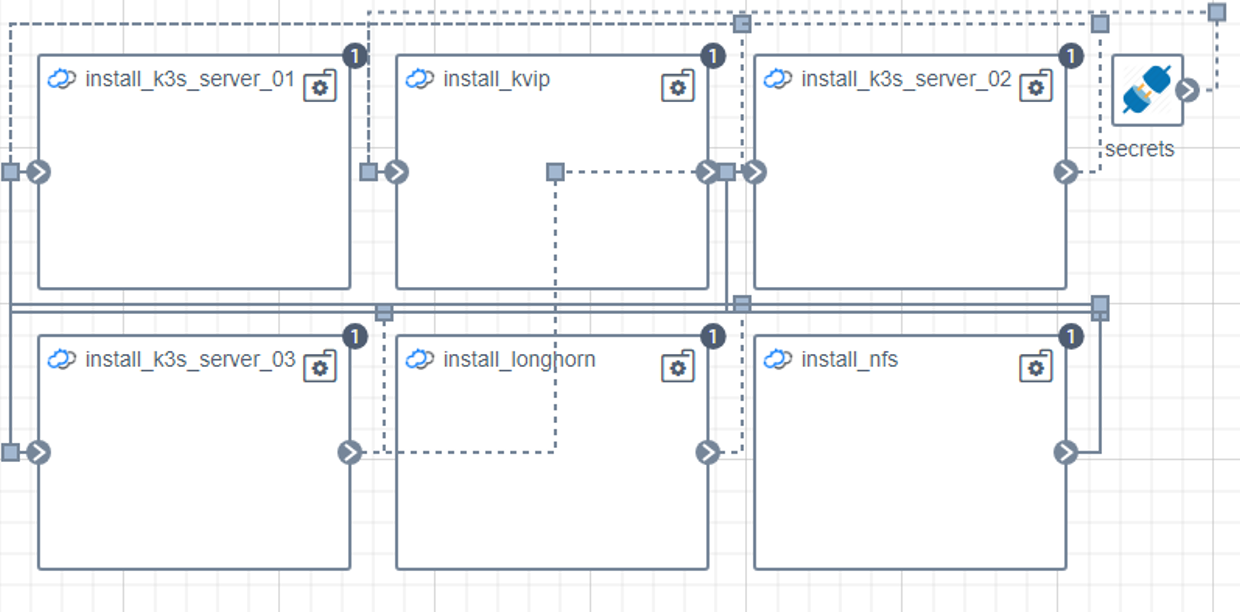

After you connect all of the components and create the HA Kubernetes cluster, you have a topology of three Kubernetes nodes (in the case of a three-node cluster), plus Kube-VIP as the VIP entry point to the cluster, and a Longhorn storage component, as shown in the following topology diagram.

Figure 6. Automation Topology

This process takes a few minutes, and then you have an HA Kubernetes cluster.

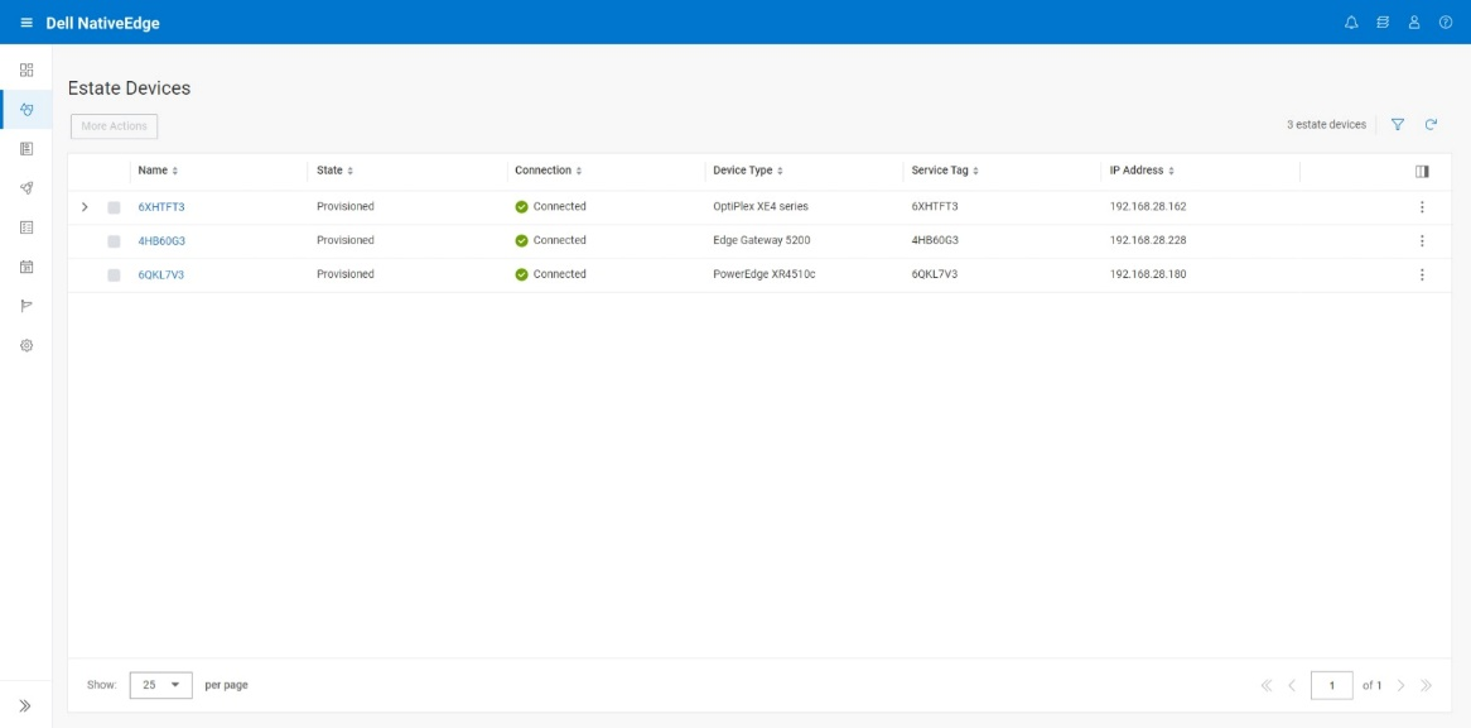

Discovery

The discovery phase is responsible for maintaining the list of available edge devices. The result of the discovery is a list of environment entries, each containing the asset management details of the relevant device.

This list is used as an input to the deployment phase and lets the user select the designated devices that are used for the cluster.

Figure 7. NativeEdge Discovery

In the previous figure, we see the available NativeEdge Endpoints that the user can choose from to form a cluster. The user can choose one NativeEdge Endpoint for a single-node cluster or three for an HA cluster. An odd number of endpoints is needed for the cluster leader election algorithm: a leader must win a majority of the votes, and with an odd number of voters the cluster cannot split into two equal halves. This avoids multiple leaders being elected, a condition known as the split-brain problem.
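The arithmetic behind the odd-number rule is short enough to work through. The following Python sketch assumes a simple majority quorum, which is how common consensus algorithms elect a leader. The function names are illustrative.

```python
def quorum(cluster_size):
    """Smallest number of votes that forms a strict majority."""
    return cluster_size // 2 + 1


def tolerated_failures(cluster_size):
    """Nodes that can fail while a majority can still be formed."""
    return cluster_size - quorum(cluster_size)


for size in range(1, 6):
    print(f"{size} node(s): quorum={quorum(size)}, "
          f"tolerated failures={tolerated_failures(size)}")
```

A four-node cluster tolerates the same single failure as a three-node cluster, so the extra even node adds cost without adding resilience.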

Workflow Execution

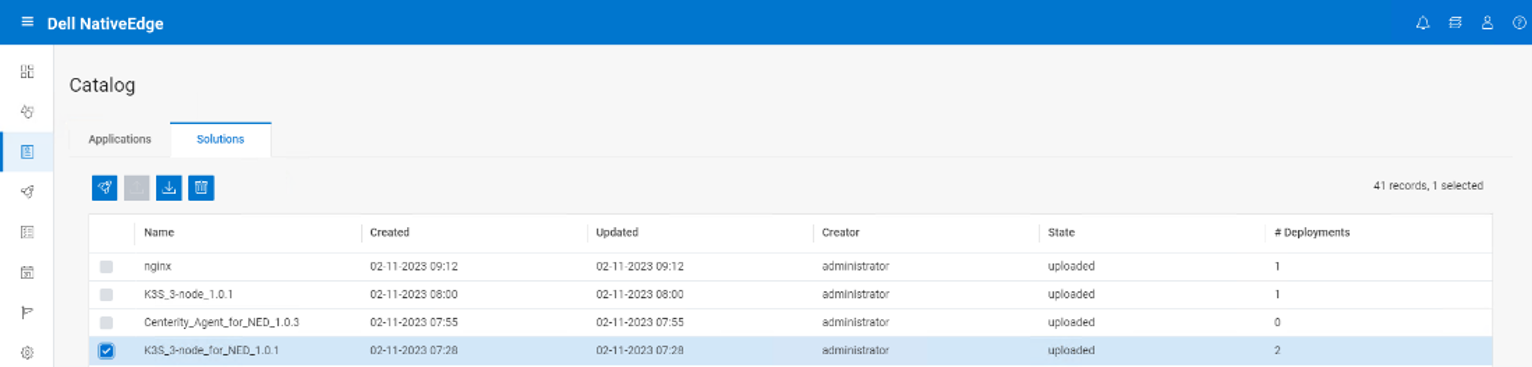

Workflow execution is the phase where we map the automation plan as described in the blueprint into a chain of tasks. This calls the relevant infrastructure resource API needed to establish our cluster.
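Turning a plan into a chain of tasks is essentially a dependency-ordering problem. The following Python sketch uses the standard library's `graphlib` (Python 3.9 or later) to order a hypothetical set of components. The component names and their dependencies are assumptions for illustration and are not taken from the NativeEdge blueprint.

```python
from graphlib import TopologicalSorter

# Each key lists the components that must be ready before it can start.
dependencies = {
    "kube_vip": set(),
    "k3s_first_node": {"kube_vip"},
    "k3s_other_nodes": {"k3s_first_node"},
    "longhorn": {"k3s_other_nodes"},
}

# static_order() yields every component after all of its dependencies.
task_chain = list(TopologicalSorter(dependencies).static_order())
print(" -> ".join(task_chain))
```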

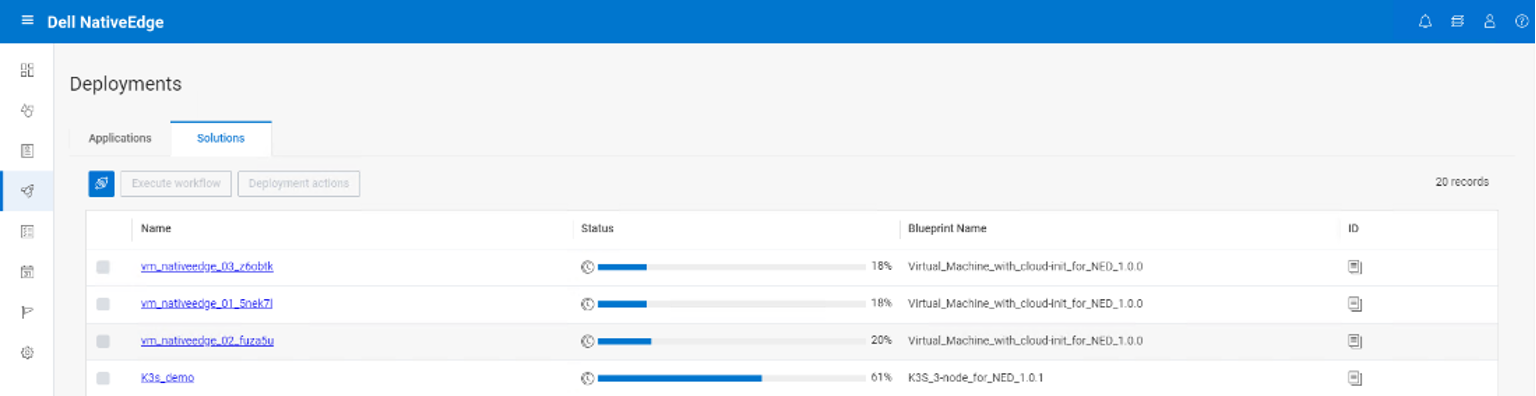

The user starts by deploying the K3s blueprint from the application catalog, as shown in the following figure.

Figure 8. NativeEdge Workflow Execution

In the following figure, we can see the deployment progress bar at 61 percent complete. The deployment creates all the necessary cluster resources: the K3s components, Kube-VIP, and Longhorn.

Figure 9. NativeEdge Solution Deployments

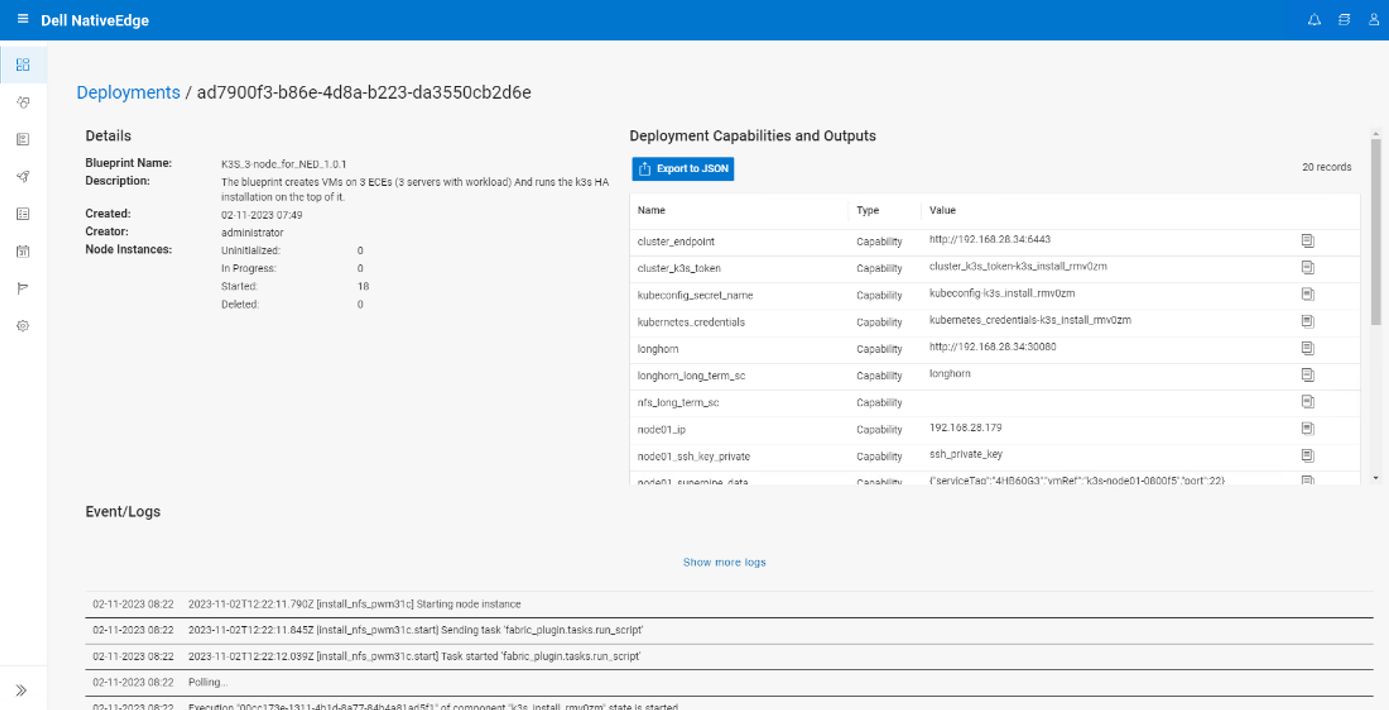

Upon deployment completion, NativeEdge shows the Deployment Capabilities and Outputs, as seen in the following figure. This list includes important information such as the K3s cluster endpoint to access the cluster.

The Deployment Capabilities and Outputs display also includes events or logs of the deployment execution, where the user can view various steps of the deployment execution.

Figure 10. Deployment Details

Final Notes

Edge devices can vary significantly in terms of networking capability, resource level, hardware capabilities, operating systems, and functional role, leading to fragmentation in the edge computing ecosystem.

Edge AI is a catalyst event that leads to even more significant edge device fragmentation. It requires specialized hardware accelerators like GPUs, Neural Processing Units (NPUs), or Tensor Processing Units (TPUs) to efficiently run deep learning models. Different manufacturers produce these accelerators, leading to a variety of hardware platforms and architectures. In addition to that, many organizations, especially in industries such as automotive, healthcare, and industrial IoT, develop custom edge AI solutions tailored to their specific requirements.

Kubernetes Reduces the Edge Fragmentation Complexity

Using Kubernetes at the edge can help reduce device fragmentation complexity through:

- Abstraction—Kubernetes provides an abstraction of hardware differences.

- Containerization—Kubernetes provides a lightweight, portable workload execution framework, and can run consistently across various edge devices, regardless of the underlying operating system or hardware.

- Resource management—Kubernetes provides resource management features that allow you to allocate CPU and memory resources to containers.

- Edge clusters—Kubernetes can be set up to manage clusters of edge devices distributed across different locations, leveraging a fabric or mesh topology architecture.

- Rolling updates and version control—Kubernetes supports rolling updates and version control of containerized applications.

- Avoid vendor lock-in, the right Kubernetes for the job—Evolving extensions or variants of Kubernetes may be better suited for the edge, including MicroK8s, K3s, k0s, KubeVirt, Virtlet, and Krustlet.

Having said that, setting up a Kubernetes cluster on edge devices can be a complex task.

NativeEdge provides a built-in blueprint that automates the entire process through a single API call.

It is also important to note that in this specific example, we refer to a specific edge Kubernetes stack. The provided blueprint can be easily extended to fit your specific environment or your choice of Kubernetes stack.

Related Blog Posts

Litmus and Dell NativeEdge - A Powerful Duo for Improving Industrial IoT Operations

Wed, 08 May 2024 15:18:51 -0000


Edge AI plays a significant role in the digital transformation of the industrial Internet of things (IIoT). It improves efficiency, productivity, and decision-making processes in the following areas:

- Predictive maintenance—AI algorithms can analyze data from sensors and other connected devices to predict equipment failures before they happen.

- Anomaly detection—AI can identify abnormal patterns or anomalies in data collected from various sensors.

- Operations optimization—AI algorithms can optimize industrial processes by analyzing data and adjusting parameters in real time.

- Supply chain optimization—AI can optimize supply chain processes by analyzing data from inventory levels, demand forecasting, and logistics.

- Quality control—AI-powered vision systems and machine learning algorithms can be implemented in manufacturing quality control. These systems can identify defects or deviations from quality standards, ensuring that only high-quality products reach the market.

- Energy management—AI can analyze energy consumption patterns and optimize energy usage in industrial settings.

- Continuous improvement—AI facilitates continuous improvement by learning from data over time.
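As a small illustration of the anomaly detection item above, the following Python sketch flags a sensor reading that deviates strongly from a known-good baseline. It uses a plain z-score test. The function name, threshold, and temperature values are made up for the example and are unrelated to Litmus or NativeEdge.

```python
from statistics import mean, stdev


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a reading more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical temperature readings from a healthy machine.
baseline = [20.1, 20.3, 19.9, 20.0, 20.2, 20.1, 19.8]
print(is_anomalous(20.2, baseline))  # a typical reading
print(is_anomalous(35.0, baseline))  # a sudden spike
```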

Figure 1. Industrial IoT 4.0

This blog demonstrates the benefits of the edge solutions integration on top of NativeEdge with Litmus, one of the integrated solutions.

Industrial IoT Edge AI with NativeEdge and Litmus

Dell NativeEdge serves as a platform for deploying and managing devices and applications at the edge. One notable addition in the latest version of NativeEdge is the ability to deliver end-to-end solutions on top of the platform, including solutions from PTC, Litmus, Telit Cinterion, Centerity, and others. This capability gives users consistent, simple, and fully automated management, from bare-metal provisioning to a full-blown solution.

Figure 2. Introducing Edge Solutions on top of NativeEdge

Introduction to Litmus

Litmus is an industrial IoT platform that helps businesses collect, analyze, and manage data from IIoT devices. Dell NativeEdge is an edge operations software platform that helps businesses deploy and manage edge devices and applications.

Litmus includes two main parts:

- Litmus Edge Manager

- Litmus Edge

Litmus Edge Manager

Litmus Edge Manager serves as a central management console for configuring, monitoring, and managing Litmus Edge deployments.

Figure 3. Litmus Edge Manager

Litmus Edge

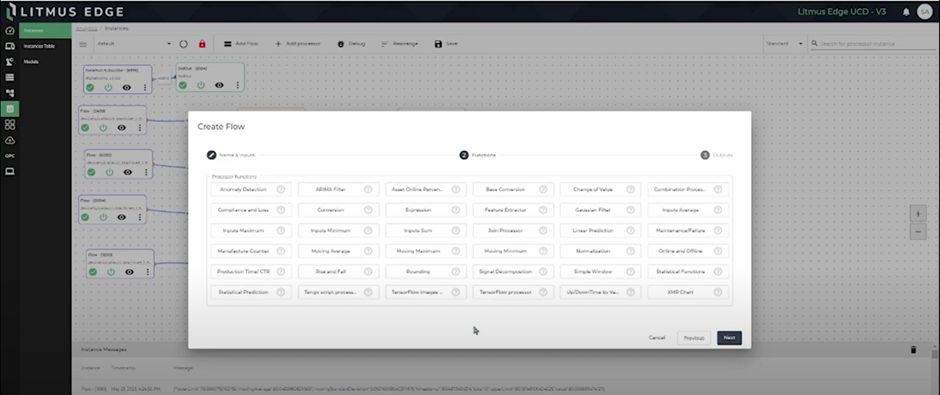

Litmus Edge is an industrial edge computing platform designed for edge inferencing locally in real time. It facilitates edge and IoT device management, supports various industrial protocols, enables analytics and machine learning at the edge, and emphasizes security measures.

Figure 4. Litmus Edge platform

Litmus Edge provides a flexible solution for organizations to optimize data processing, enhance device connectivity, and derive insights directly at the edge of their industrial IoT deployments through a simple no-code user experience.

Figure 5. No-Code Editor for Edge Inferencing

Deploying the Litmus Solution on NativeEdge

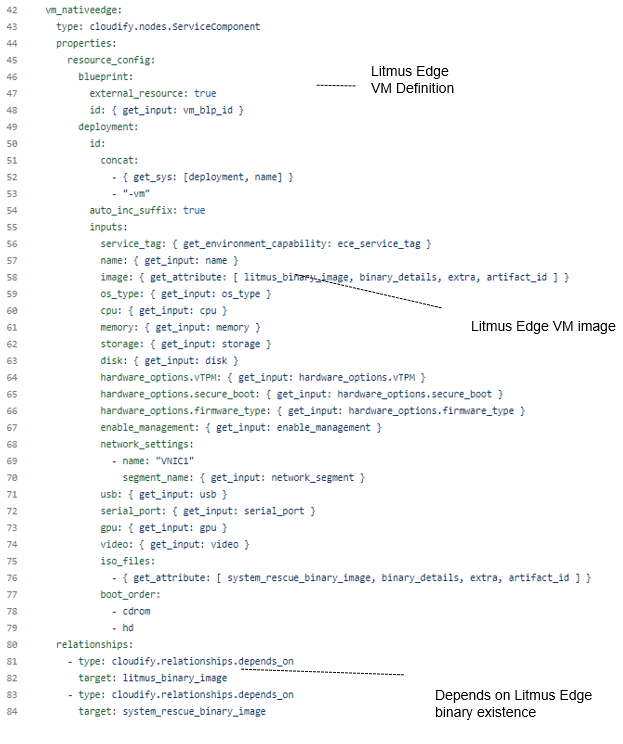

First, deploy Litmus Edge. Multiple Litmus Edge instances can be deployed on multiple NativeEdge Endpoints. Each Litmus Edge instance is connected to industrial devices such as robotic arms and CNC machines. The following image shows the blueprint that provisions the Litmus Edge VM from a Litmus Edge image.

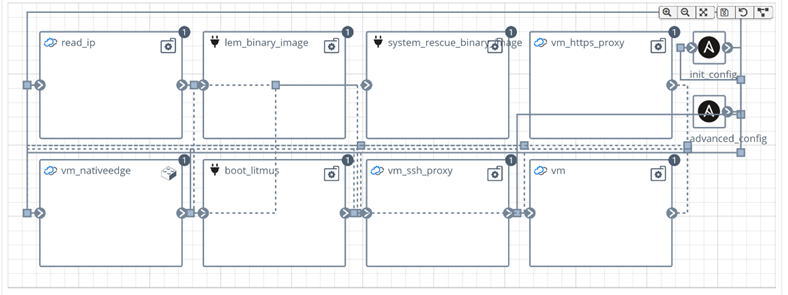

The following figure shows the Litmus Edge topology on NativeEdge. We can see the NativeEdge Litmus VM provisioned as well as the binary Litmus image and their dependencies.

We can also see that there is an SDP node, where data is streamed to and persisted.

Figure 6. Litmus Edge blueprint topology

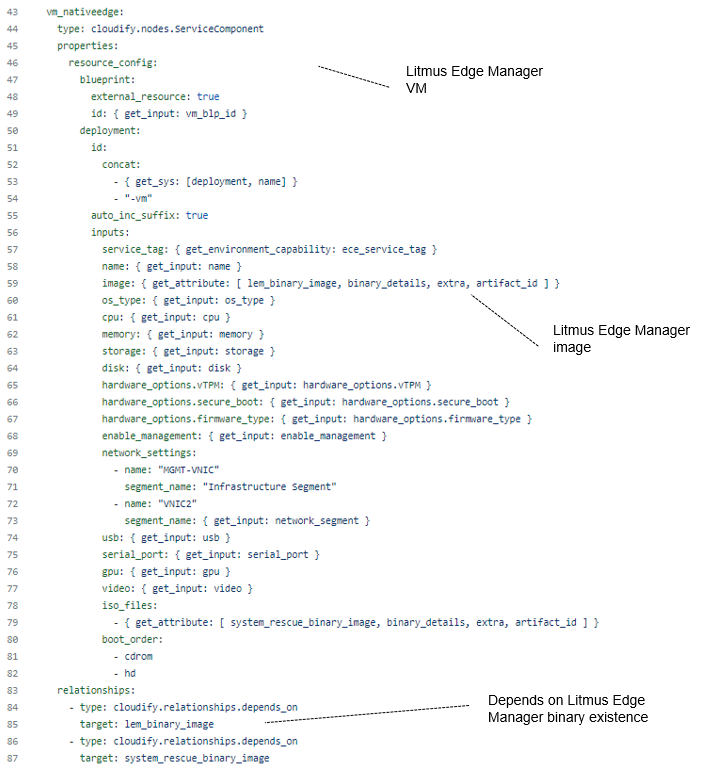

The second blueprint provisions the Litmus Edge Manager VM that can connect to multiple Litmus Edges on multiple NativeEdge Endpoints.

The following figure shows the Litmus Edge Manager topology on NativeEdge. The Litmus Edge Manager can also be provisioned on vSphere. We can see the NativeEdge Litmus Manager VM provisioned as well as the binary Litmus manager image and their dependencies.

Figure 7. Litmus Edge Manager blueprint topology

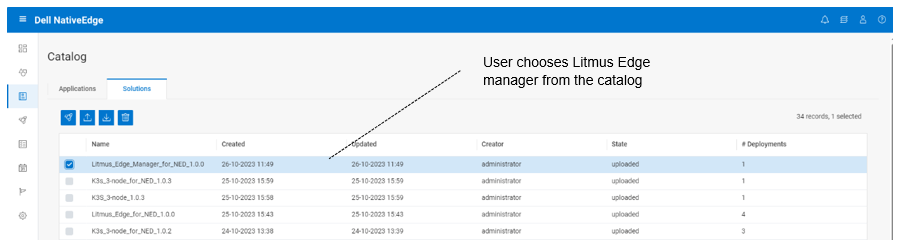

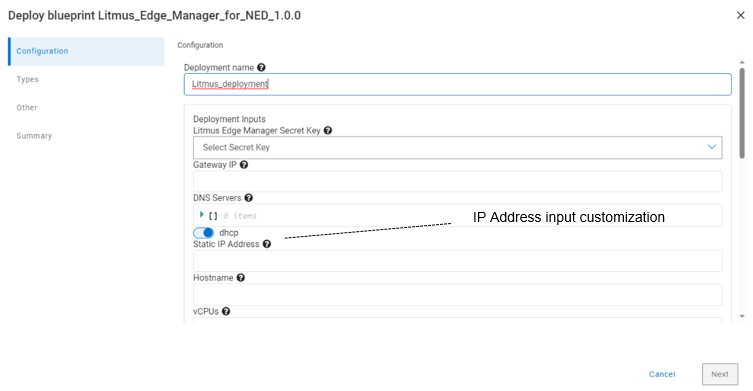

Let us look at how a NativeEdge user interacts with Litmus Edge. From the NativeEdge App Catalog, choose to deploy Litmus Edge Manager or Litmus Edge (or both) and go to the deployment inputs customization.

Figure 8. NativeEdge App Catalog

In the deployment inputs, you can customize the IP address and hostname used to access the Litmus Edge Manager, as well as the number of vCPUs to allocate to the Litmus Edge Manager VM.

Figure 9. Litmus Edge deployment inputs

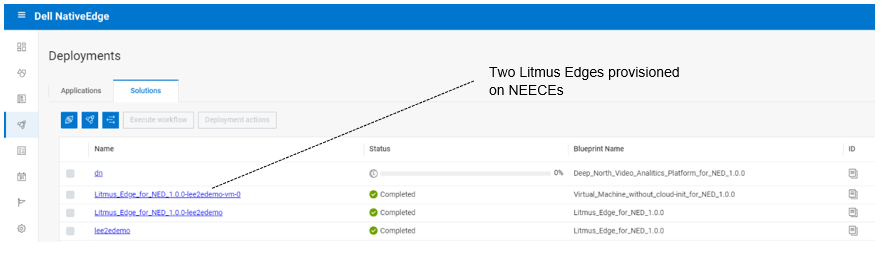

After deployment execution, we can see in the following figure that we provisioned multiple Litmus Edges. We can provision a fleet of Litmus Edges that are connected and managed by one Litmus Edge Manager.

Figure 10. Litmus Edge deployment

Conclusion

Dell NativeEdge provides fully automated, secure device onboarding from bare metal to cloud. As a DevEdgeOps platform, NativeEdge also gives the ability to validate and continuously manage the provisioning and configuration of those device endpoints in a secured manner. This reduces the risk of failure or security breaches due to misconfiguration or human error by detecting those potential vulnerabilities earlier in the pre-deployment development process.

The introduction of NativeEdge Orchestrator enables customers to have consistent and simple management of integrated solutions across their entire fleet of new and existing devices, supporting external services, VxRail, and soon other cloud infrastructures. The separation between the device management and solution is the key to enabling consistent operational management between different solution vendors and cloud infrastructures.

The specific integration between NativeEdge and Litmus provides a full-blown IIoT management platform from bare metal to cloud. It also simplifies the ability to process data at the edge by introducing edge AI inferencing through a simple no-code interface.

The solution framework allows vendors to use Dell NativeEdge as a generic edge infrastructure framework, addressing fundamental aspects of device fleet management. Vendors can then focus on delivering the unique value of their solution, be it predictive maintenance or real-time monitoring, as demonstrated by the Litmus use case.

References

- Litmus Edge | Dell Technologies Validated Design for Manufacturing Edge with Litmus - TechBook | Dell Technologies Info Hub

- Litmus Live Demo

- DevEdgeOps Defined | Dell Technologies Info Hub

- Simplify Edge Operations - Flipbook | Multimedia for the NativeEdge Platform | Dell Technologies Info Hub

Will AI Replace Software Developers?

Thu, 02 May 2024 09:38:01 -0000


Over the past year, I have been actively involved in generative artificial intelligence (Gen AI) projects aimed at assisting developers in generating high-quality code. Our team has also adopted Copilot as part of our development environment. These tools offer a wide range of capabilities that can significantly reduce development time. From automatically generating commit comments and code descriptions to suggesting the next logical code block, they have become indispensable in our workflow.

A recent study by McKinsey quantifies the level of productivity gain in the following areas:

Figure 1. Software engineering: speeding developer work as a coding assistant (McKinsey)

This study shows that “The direct impact of AI on the productivity of software engineering could range from 20 to 45 percent of current annual spending on the function. This value would arise primarily from reducing time spent on certain activities, such as generating initial code drafts, code correction and refactoring, root-cause analysis, and generating new system designs. By accelerating the coding process, Generative AI could push the skill sets and capabilities needed in software engineering toward code and architecture design. One study found that software developers using Microsoft’s GitHub Copilot completed tasks 56 percent faster than those not using the tool. An internal McKinsey empirical study of software engineering teams found those who were trained to use generative AI tools rapidly reduced the time needed to generate and refactor code and engineers also reported a better work experience, citing improvements in happiness, flow, and fulfilment.”

What Makes the Code Assistant (Copilot) the Killer App for Gen AI?

The remarkable progress of AI-based code generation owes its success to the unique characteristics of programming languages. Unlike natural language text, code adheres to a structured syntax with well-defined rules. This structure enables AI models to excel in analyzing and generating code.

Several factors contribute to the swift evolution of AI-driven code generation:

- Structured nature of code–Code follows a strict format, making it amenable to automated analysis. The consistent structure allows AI algorithms to learn patterns and generate syntactically correct code.

- Validation tools–Compilers and other development tools play a crucial role. They validate code for correctness, ensuring that generated code adheres to language specifications. This continuous feedback loop enables AI systems to improve without human intervention.

- Repeatable work identification–AI excels at identifying repetitive tasks. In software development, there are numerous areas where routine work occurs, such as boilerplate code, data transformations, and error handling. AI can efficiently recognize and automate these repetitive patterns.
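The validation feedback loop mentioned in this list can be demonstrated with Python's own parser. The following sketch checks a piece of generated code for syntax errors and returns a message that could be fed back to a code generator. The function name and the sample snippets are illustrative, and a syntax check is only the most basic form of validation.

```python
import ast


def syntax_feedback(source):
    """Return None if the code parses, else a short error message."""
    try:
        ast.parse(source)
    except SyntaxError as err:
        return f"line {err.lineno}: {err.msg}"
    return None


good_snippet = "def add(a, b):\n    return a + b\n"
bad_snippet = "def add(a, b)\n    return a + b\n"  # missing colon

print(syntax_feedback(good_snippet))
print(syntax_feedback(bad_snippet))
```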

From Coding Assistant to Fully-Autonomous AI Software Engineer

Cognition (Cognition Labs), an applied AI lab, is the maker of Devin, which the company introduced as the first AI software engineer.

Devin possesses remarkable capabilities in software development in the following areas:

- Complex engineering tasks–With advances in long-term reasoning and planning, Devin can plan and execute complex engineering tasks that involve thousands of decisions. Devin recalls relevant context at every step, learns over time, and even corrects mistakes.

- Coding and debugging–Devin can write code, debug, and address bugs in codebases. It autonomously finds and fixes issues, making it a valuable teammate for developers.

- End-to-end app development–Devin builds and deploys apps from scratch. For example, it can create an interactive website, incrementally adding features requested by the user and deploying the app.

- AI model training and fine-tuning–Devin sets up fine-tuning for large language models, demonstrating its ability to train and improve its own AI models.

- Collaboration and communication–Devin actively collaborates with users. It reports progress in real-time, accepts feedback, and engages in design choices as needed.

- Real-world challenges–Devin tackles real-world GitHub issues found in open-source projects. It can also contribute to mature production repositories and address feature requests. Devin even takes on real jobs on platforms like Upwork, writing and debugging code for computer vision models.

The Devin project is a clear indication of how fast we move from simple coding assistants to more complete engineering capabilities.

Will AI Replace Software Developers?

When I asked this question recently during a Copilot training session that our team took, the answer was “No”, or to be more precise “Not yet”. The common thinking is that it provides a productivity enhancement tool that will save developers from spending time on tedious tasks such as documentation, testing, and so on. This could have been true yesterday, but as seen with project Devin, it already goes beyond simple assistance to full development engineering. We can rely on the experience from past transformations to learn a bit more about where this is all heading.

Learning from Cloud Transformation: Parallels with Gen AI Transformation

The advent of cloud computing, pioneered by AWS approximately 15 years ago, revolutionized the entire IT landscape. It introduced the concept of fully automated, API-driven data centers, significantly reducing the need for traditional system administrators and IT operations personnel. However, beyond the mere shrinking of the IT job market, the following parallel events unfolded:

- Traditional IT jobs shrank significantly–Small to medium-sized companies can now operate their IT infrastructure without dedicated IT operators. The cloud’s self-service capabilities have made routine maintenance and management more accessible.

- Emergence of new job titles: DevOps, SRE, and more–As organizations embraced cloud technologies, new roles emerged. DevOps engineers, site reliability engineers (SREs), and other specialized positions became essential for optimizing cloud-based systems.

- The rise of SaaS startups–Cloud computing lowered the barriers of entry for delivering enterprise-grade solutions. Startups capitalized on this by becoming more agile and growing faster than established incumbents.

- Big tech companies’ accelerated growth–Tech giants like Google, Facebook, and Microsoft swiftly adopted cloud infrastructure. The self-service nature of APIs and SaaS offerings allowed them to scale rapidly, resulting in record growth rates.

Impact on Jobs and Budgets

While traditional IT jobs declined, the transformation also yielded positive outcomes:

- Increased efficiency and quality–Companies produced more products of higher quality at a fraction of the cost. The cloud’s scalability and automation played a pivotal role in achieving this.

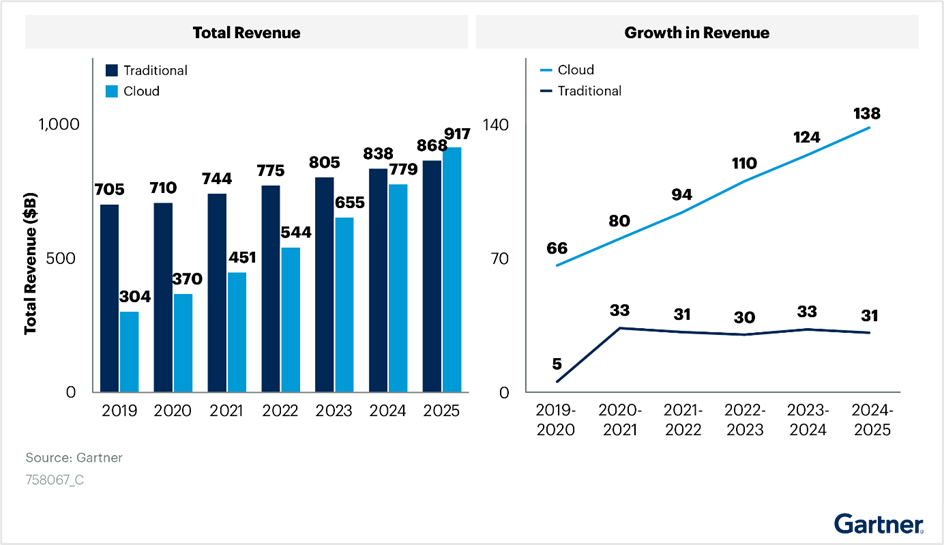

- Budget shift from traditional IT to cloud–Gartner’s IT spending reports reveal a clear shift in budget allocation. Cloud investments have grown steadily, even amidst the disruption caused by the introduction of cloud infrastructure, as shown in the following figure:

Figure 2. Cloud transformation’s impact on IT budget allocation

Looking Ahead: AI Transformation

As we transition to the era of AI, we can anticipate similar trends:

- Decline in traditional jobs–Just as cloud computing transformed the job landscape, AI adoption may lead to the decline of certain traditional roles.

- Creation of new jobs–Simultaneously, AI will create novel opportunities. Roles related to AI development, machine learning, and data science will flourish.

Short Term Opportunity

Organizations will allocate more resources to AI initiatives. The transition to AI is not merely an evolutionary step; it is a strategic imperative.

According to research conducted by ISG on behalf of Glean, Generative AI projects consumed an average of 1.5 percent of IT budgets in 2023. These budgets are expected to rise to 2.7 percent in 2024 and further increase to 4.3 percent in 2025. Organizations recognize the potential of AI to enhance operational efficiency and bridge IT talent gaps. Gartner predicts that the impact of Generative AI will be more pronounced in 2025. Even so, worldwide IT spending is projected to grow by 8 percent in 2024, as organizations continue to invest in AI and automation to drive efficiency. The White House budget proposes allocating $75 billion for IT spending at civilian agencies in 2025. This substantial investment aims to deliver simple, seamless, and secure government services through technology.

The impact of AI extends far beyond the confines of the IT job market. It permeates nearly every facet of our professional landscape. As with any significant transformation, AI presents both risks and opportunities. Those who swiftly embrace it are more likely to seize the advantages.

So, what steps can software developers take to capitalize on this opportunity?

Tips for Software Developers in the Age of AI

In the immediate term, developers can enhance their effectiveness when working with AI assistants by acquiring a combination of the following technical skills:

- Learn AI basics–I would recommend starting the learning with AI Terms 101. I also recommend following the leading AI podcasts. I found this useful to keep myself up to date in this space and learn some useful tips and updates from industry experts.

- Use coding assistant tools (Copilot)–Coding assistant tools are definitely the low-hanging fruit and probably the simplest step to get into the AI development world. There is a growing list of tools that are available and can be integrated seamlessly into your existing development IDE. The following provides a useful reference to The Top 11 AI Coding Assistants to Use in 2024.

- Learn machine learning (ML) and deep learning concepts–Understanding the fundamentals of ML and deep learning is crucial. Familiarize yourself with neural networks, training models, and optimization techniques.

- Data science and analytics–Developers should grasp data preprocessing, feature engineering, and model evaluation. Proficiency in tools like Pandas, NumPy, and scikit-learn is beneficial.

- Frameworks and tools–Learn about popular AI frameworks such as TensorFlow and PyTorch. These tools facilitate model building and deployment.

More skilled developers will need to learn how to create their own “AI engineers”, which they will train and fine-tune to assist them with user interface (UI), backend, and testing development tasks. They could even run a team of “AI engineers” to write an entire project.

Will AI Reduce the Demand for Software Engineers?

Not necessarily. As with the cloud transformation, developers with AI expertise will likely be in high demand. Those who cannot adapt to this new world are likely to fall behind and risk losing their jobs.

It would be fair to assume that the scope of work, post-AI transformation, will grow and will not stay stagnant. As an example, we will likely see products adding more “self-driving” capabilities, where they could run more complete tasks without the need for human feedback or enable close to human interaction with the product.

Under this assumption, the scope of new AI projects and products is going to grow, and that growth should balance the declining demand for traditional software engineering jobs.

Conclusion

As a history enthusiast, I often find parallels in the past that can serve as a guide to our future. The industrial era witnessed disruptive technological advancements that reshaped job markets. Some professions became obsolete, while new ones emerged. As a society, we adapted quickly, discovering new growth avenues. However, the emergence of AI presents unique challenges. Unlike previous disruptions, AI simultaneously impacts a wide range of job markets and progresses at an unparalleled pace. The implications are indeed profound.

Recent research by Nexford University on How Will Artificial Intelligence Affect Jobs 2024-2030 reveals some startling predictions. According to a report by the investment bank Goldman Sachs, AI could potentially replace the equivalent of 300 million full-time jobs. It could automate a quarter of the work tasks in the US and Europe, leading to new job creation and a productivity surge. The report also suggests that AI could increase the total annual value of goods and services produced globally by 7 percent. It predicts that two-thirds of jobs in the US and Europe are susceptible to some degree of AI automation, and around a quarter of all jobs could be entirely performed by AI.

The concerns raised by Yuval Noah Harari, a historian and professor at the Department of History of the Hebrew University of Jerusalem, resonate with many. The rapid evolution of AI may indeed lead to significant unemployment.

However, when it comes to software engineers, we can assert with confidence that regardless of how automated our processes become, there will always be a fundamental need for human expertise. These skilled professionals perform critical tasks such as maintenance, updates, improvements, error corrections, and the setup of complex software and hardware systems. These systems often require coordination among multiple specialists for optimal functionality.

In addition to these responsibilities, computer system analysts play a pivotal role. They review system capabilities, manage workflows, schedule improvements, and drive automation. This profession has seen a surge in demand in recent years and is likely to remain in high demand.

In conclusion, AI represents both risk and opportunity. While it automates routine tasks, it also paves the way for innovation. Our response will ultimately determine its impact.

References

- Economic potential of generative AI | McKinsey

- Introducing Devin, the first AI software engineer (cognition-labs.com)

- IT Spending & Budgets: Trends & Forecasts 2024

- Organizations continue to invest in AI and automation to drive efficiency

- This substantial investment aims to deliver simple, seamless, and secure government services through technology

- AI Terms 101: An A to Z AI Terminology Guide for Beginners

- 11 AI Podcasts That Will Shape Your Perspective (geekflare.com)

- How Will Artificial Intelligence Affect Jobs 2024-2030 | Nexford University

- The Top 11 AI Coding Assistants to Use in 2024 | DataCamp

- Yuval Harari On The Future of Jobs & Technology, Intelligence vs Consciousness, & Future Threats to Humanity - Jacob Morgan (thefutureorganization.com)