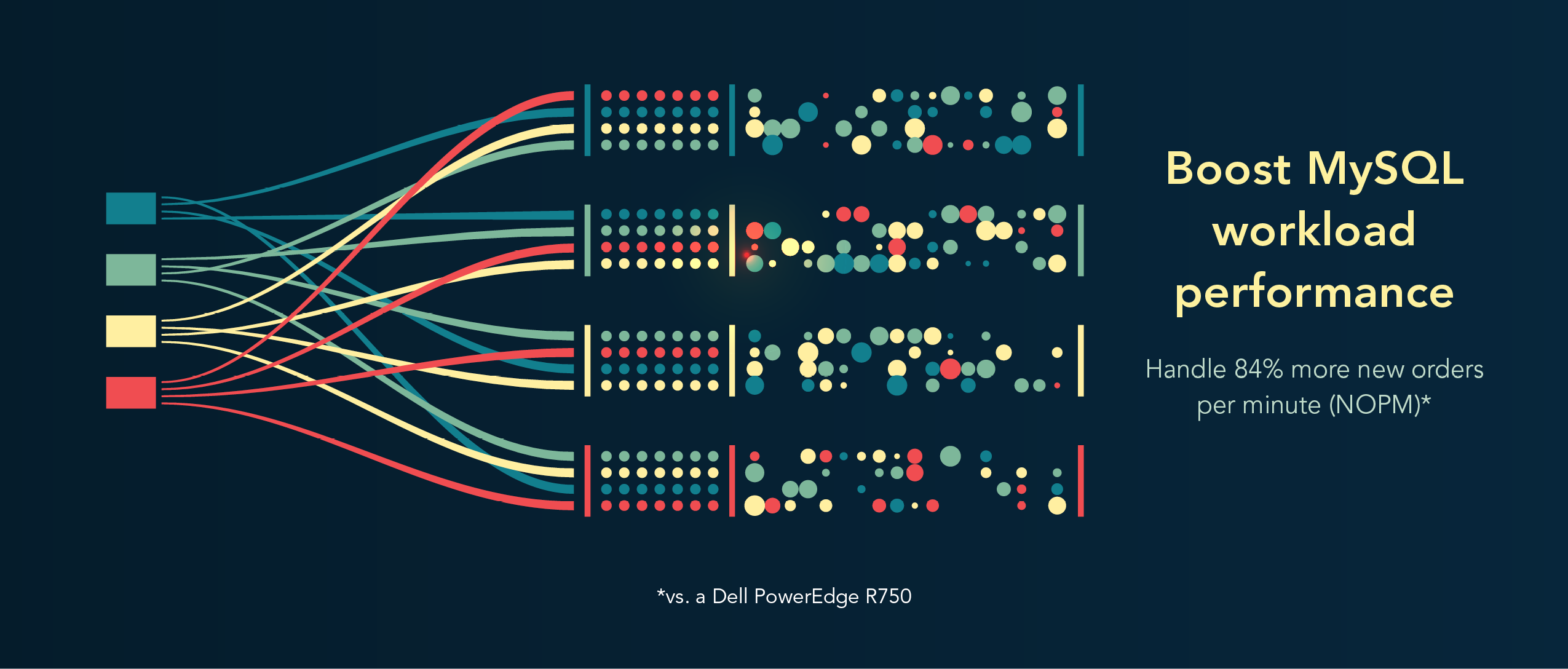

Process 84% more MySQL database activity with the latest-gen PowerEdge R760 server running VMware vSphere 8.0

Wed, 21 Jun 2023 21:54:59 -0000

The 16th Generation server handled more database transactions than a previous-generation PowerEdge R750 server with vSphere 7

Upgrading hardware and software in your infrastructure can bring a bevy of benefits to your organization, including the potential for increased performance. For database servers, that could mean more ecommerce activity or faster inventory management.

At Principled Technologies, we used online transaction processing (OLTP) workloads targeting a MySQL 8.0 database to test a latest-generation Dell™ PowerEdge™ R760 server running a VMware® vSphere® 8.0 environment and a previous-generation PowerEdge R750 server running vSphere 7.0. Based on the increased OLTP performance of the PowerEdge R760, upgrading to the newer server with the latest version of the hypervisor software could provide a boost in MySQL production workload performance.

We also used Dell Live Optics software to monitor the latest-gen PowerEdge server and vSphere 8.0 environment. Live Optics enabled us to gather environment and workload characteristics, which could help optimize MySQL workload performance and potentially minimize overspending on data center resources.

How we tested

We used an online transaction processing workload, called TPROC-C, from the benchmarking tool HammerDB. We created VMware vSphere VMs on the two servers and set up one workload on each VM that used a MySQL 8.0 database. We then scaled up to 10 VMs on each server. We chose that number so we could divide each server equally into medium-sized database VMs while leaving some cores and memory for hypervisor overhead. During testing, average CPU utilization was 79.9 percent on the PowerEdge R760 and 69.3 percent on the PowerEdge R750.
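The sizing approach described above (equal VMs, with headroom held back for the hypervisor) can be sketched in a few lines of Python. This is a minimal illustration only: the helper `plan_equal_vms`, the `VmSize` type, and every input value are hypothetical placeholders, not the configurations used in this testing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmSize:
    vcpus: int
    memory_gb: int


def plan_equal_vms(host_threads: int, host_memory_gb: int, vm_count: int,
                   reserved_threads: int, reserved_memory_gb: int) -> VmSize:
    """Split a host into equal VMs after reserving hypervisor headroom."""
    if vm_count <= 0:
        raise ValueError("vm_count must be positive")
    usable_threads = host_threads - reserved_threads
    usable_memory_gb = host_memory_gb - reserved_memory_gb
    if usable_threads < vm_count or usable_memory_gb < vm_count:
        raise ValueError("not enough resources left after the reservation")
    # Integer division keeps every VM identical; any remainder stays unallocated.
    return VmSize(usable_threads // vm_count, usable_memory_gb // vm_count)


# Hypothetical host: 128 hardware threads and 1,024 GB of memory,
# with 8 threads and 64 GB held back for the hypervisor.
size = plan_equal_vms(host_threads=128, host_memory_gb=1024, vm_count=10,
                      reserved_threads=8, reserved_memory_gb=64)
print(size)  # VmSize(vcpus=12, memory_gb=96)
```

Because the division rounds down, any leftover threads or memory add to the hypervisor headroom rather than making one VM larger than the others.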

We performed all testing remotely. For more information on how we tested, see the science behind this report.

About the Dell PowerEdge R760

The 2U Dell PowerEdge R760 rack server features up to two 4th Generation Intel® Xeon® Scalable processors with up to 56 cores per processor to provide “performance and versatility for demanding applications.”1

Key features of the Dell PowerEdge R760 include:

- Up to two 300W (dual-width) or six 75W (single-width) GPUs

- Up to 28 storage drives (including 24 NVMe® direct-attached drives) and 32 DDR5 DIMMs of memory

- Flexible I/O options, with up to eight PCIe® slots and optional two 1GbE LOM and one OCP 3.0 slots

- Dell OpenManage systems management platform for automated deployment, updates, and maintenance (features depend on license)

- Dell Smart Flow design and OpenManage Enterprise Power Manager 3.0 (with license) to improve energy efficiency

For more PowerEdge R760 details, visit https://www.dell.com/en-us/shop/cty/pdp/spd/poweredge-r760.

Why NOPM?

NOPM, or new orders per minute, is a metric for OLTP workloads that counts only the new-order transactions completed in one minute as part of a serialized business workload. HammerDB claims that because NOPM is “independent of any particular database implementation [it] is the recommended primary metric to use.”2 NOPM comes from the database schema itself, which means IT staff and IT decision makers can use the data to compare performance of different databases that run different transaction types. For example, a database administrator could compare the NOPM of their ecommerce database workload to the NOPM of their inventory database workload because both run new-order transactions but differ in other transaction types.
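To make the metric concrete, the Python sketch below derives a per-minute rate from two readings of a cumulative new-order counter, one taken at the start of a timed run and one at the end. The helper name `nopm` and the counter values are hypothetical and chosen for illustration; they are not measurements from this report, and HammerDB's own calculation may differ in detail.

```python
def nopm(orders_start: int, orders_end: int, elapsed_seconds: float) -> float:
    """New orders per minute over a timed measurement window."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    if orders_end < orders_start:
        raise ValueError("the new-order counter must not decrease")
    return (orders_end - orders_start) / (elapsed_seconds / 60.0)


# Hypothetical readings: 1.5 million new orders completed in a 5-minute run.
start_count = 1_000_000
end_count = 2_500_000
run_seconds = 5 * 60

rate = nopm(start_count, end_count, run_seconds)
print(f"{rate:,.0f} NOPM")  # 300,000 NOPM
```

Because the calculation uses only a count of new orders and a duration, the same arithmetic applies whichever database engine produced the counter.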

About VMware vSphere 8.0

vSphere is an enterprise compute virtualization program that aims to bring “the benefits of cloud to on-premises workloads” by combining “industry-leading cloud infrastructure technology with data processing unit (DPU)- and GPU-based acceleration to boost workload performance.”3

This latest version introduces the vSphere Distributed Services Engine, which enables organizations to distribute infrastructure services across compute resources available to the VMware ESXi™ host and, for systems with DPUs, offload networking functions to the DPU.4 (We did not use DPUs in our testing.)

How the servers performed

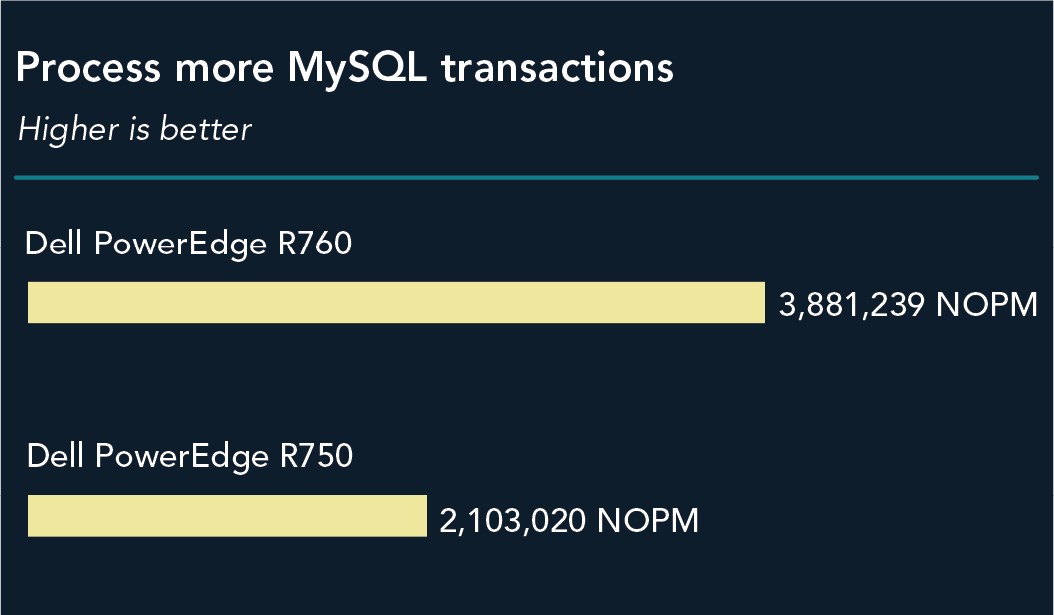

Process more MySQL transactions

When we ran the TPROC-C workload from HammerDB on both solutions, we saw an advantage of 84 percent for the latest-generation Dell PowerEdge R760 running VMware vSphere 8.0. The solution processed 3,881,239 NOPM, while the previous-generation PowerEdge R750 running vSphere 7.0 processed 2,103,020 NOPM. Figure 1 shows the total NOPM each server processed.
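The advantage follows directly from the two NOPM totals reported above. As a quick check, this short Python sketch recomputes the relative gain; the function name `percent_gain` is ours for illustration, and the per-VM averages assume only the 10 VMs per server described in the testing section.

```python
def percent_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (new - old) / old * 100.0


R760_NOPM = 3_881_239  # PowerEdge R760 with vSphere 8.0 (total from this report)
R750_NOPM = 2_103_020  # PowerEdge R750 with vSphere 7.0 (total from this report)
VM_COUNT = 10          # VMs per server, per the testing description

gain = percent_gain(R760_NOPM, R750_NOPM)
print(f"R760 advantage: {gain:.2f}%")
print(f"Average NOPM per VM, R760: {R760_NOPM / VM_COUNT:,.1f}")
print(f"Average NOPM per VM, R750: {R750_NOPM / VM_COUNT:,.1f}")
```

The computed gain falls between 84 and 85 percent, which matches the rounded 84 percent figure. The per-VM values are simple averages of the server totals, not individually measured results.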

This performance increase from upgrading to PowerEdge R760 servers and vSphere 8.0 could enable organizations to provide a better experience in many use cases where MySQL is the back end. Customer-facing ecommerce applications could process more sales and support a larger customer base. Internal-facing inventory management applications using MySQL could respond faster, potentially providing a better experience for their users. IT monitoring applications using MySQL as a back end could potentially handle more transactions.

About HammerDB results

We tested each server with a TPROC-C OLTP workload from the HammerDB suite of benchmarks. While the HammerDB developers derived TPROC-C from the TPC-C specification, the workload is not a full implementation of the TPC-C standard. For this reason, our test results are not official TPC results and are not comparable to them in any manner.

For more information on the HammerDB benchmark suite, visit their website at www.hammerdb.com.

Collect data quickly with Dell Live Optics

Dell offers customers and service providers Live Optics, online IT infrastructure software, at no cost to help with collecting, visualizing, and sharing environment and workload data. According to Dell, customers and service providers can “gain deeper insights into performance, workload simulations, utilization, and support” and “analyze data in 24 hours or less rather than…weeks to months”5 with Live Optics. Monitoring infrastructure with Live Optics can help admins optimize systems and workloads to avoid over- and underutilizing resources.

In our testing, we used Live Optics to get a bird’s-eye view of our environment and its performance metrics. We pointed Live Optics at our VMware vCenter server, provided credentials, and selected a time period for the collection to run. After the collection period finished, we could view performance metrics and detailed inventory data for our physical and virtual environment.

Live Optics collects information on the following five components of infrastructure:

- Server and cloud: Inventory and performance monitoring of hosts and VMs regardless of platform or vendor

- Workloads: Machine and installation details, SQL Server-specific features, and database information

- File: Insight into unstructured data, such as file growth and potential for space savings

- Storage: Hardware inventory, configuration, performance history, and value assessment

- Data protection: Backup software and appliances and front-end capacity management

For more information about Live Optics, visit https://www.dell.com/en-us/dt/live-optics/index.htm.

Conclusion

New servers and software versions can be a boon for your organization. If you’re running MySQL workloads for web applications, retail, or other use cases, your organization could see a performance boost by upgrading to latest-generation Dell PowerEdge R760 servers running VMware vSphere 8.0. In our testing, a PowerEdge R760 server handled 84.5 percent more NOPM than an older Dell PowerEdge R750 server. We also found that Live Optics infrastructure monitoring software allows you to view performance data for new PowerEdge R760 and vSphere 8.0 environments so you can manage those resources to optimize your MySQL workloads.

1. Dell, “Dell PowerEdge Rack Servers,” accessed May 11, 2023, https://i.dell.com/sites/csdocuments/Product_Docs/en/poweredge-rack-quick-reference-guide.pdf.

2. HammerDB, “Comparing HammerDB results,” accessed May 11, 2023, https://www.hammerdb.com/docs/ch03s04.html.

3. VMware, “VMware vSphere,” accessed May 11, 2023, https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/vsphere/vmw-vsphere-datasheet.pdf.

4. VMware, “Introducing VMware vSphere Distributed Services Engine and Networking Acceleration by Using DPUs,” accessed May 11, 2023, https://docs.vmware.com/en/VMware-vSphere/8.0/vsphere-esxi-installation/GUID-EC3CE886-63A9-4FF0-B79F-111BCB61038F.html.

5. Dell, “Live Optics for Service Providers,” accessed May 11, 2023, https://www.dell.com/en-us/shop/live-optics/cp/live-optics.

This project was commissioned by Dell Technologies.

June 2023