Model Training – Dell Validated Design

Fri, 03 May 2024 16:09:06 -0000


Introduction

When it comes to large language models (LLMs), there is a fundamental question that everyone looking to leverage foundational models needs to answer: should I train my own model, or should I customize an existing model?

There can be strong arguments for either. In a previous post, Nomuka Luehr covered some popular customization approaches. In this blog, I will look at the other side of the question, training, and answer the following questions: Why would I train an LLM? What factors should I consider? I’ll also cover the recently announced Generative AI in the Enterprise – Model Training Dell Validated Design - A Scalable and Modular Production Infrastructure for AI Large Language Model Training. This is a collaborative effort between Dell Technologies and NVIDIA, aimed at facilitating high-performance, scalable, and modular solutions for training LLMs in enterprise settings (more about that later).

Training pipeline

The data pipelines for training and customization are similar because both processes involve feeding specific datasets through the LLM.

In the case of training, the dataset is typically much larger than for customization, because customization is targeted at a specific domain. Remember, for training a model, the goal is to embed as much knowledge into the model as possible, so the dataset must be large.

This raises the question of the dataset's accuracy and relevance. Curating and preparing the data is vital for ensuring the quality and relevance of what is fed into the LLM. It involves meticulously selecting and refining data to minimize biases and misinformation, which, if overlooked, could compromise the model's output accuracy and reliability. Data curation is not just about gathering information; it's about ensuring that the model's knowledge base is comprehensive, balanced, and reflects a wide array of perspectives.

When the dataset is curated and prepped, the actual process of training involves a series of steps where the data is fed through the LLM. The model generates outputs based on the input provided, which are then compared against expected results. Discrepancies between the actual and expected outputs lead to adjustments in the model's weights, gradually improving its accuracy through iterative refinement (using supervised learning, unsupervised learning, and so on).
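The generate, compare, and adjust cycle described above can be illustrated with a deliberately tiny sketch. It is not how an LLM is trained in practice: the one-weight linear model, the squared-error comparison, and the names `train`, `samples`, and `learning_rate` are all assumptions chosen only to make the loop visible.

```python
# Toy illustration only: a one-weight linear model, not an LLM.
def train(samples, learning_rate=0.01, epochs=200):
    """Fit y = w * x by repeatedly comparing outputs to expected values."""
    w = 0.0  # the model's single weight
    for _ in range(epochs):
        for x, expected in samples:
            actual = w * x                 # generate an output from the input
            error = actual - expected      # discrepancy vs. the expected result
            gradient = 2 * error * x       # derivative of squared error w.r.t. w
            w -= learning_rate * gradient  # adjust the weight
    return w


# Hypothetical data generated by y = 3x, so the weight should approach 3.
samples = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]
learned_w = train(samples)
print(round(learned_w, 3))
```

A real training run applies the same idea to billions of weights at once, which is why the rest of this post focuses on the infrastructure needed to keep that loop fed.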

While the overarching principle of this process might seem straightforward, it's anything but simple. Each step involves complex decisions, from selecting the right data and preprocessing it effectively to tuning the model's parameters for optimal performance. Moreover, the training landscape is evolving, with approaches such as supervised and unsupervised learning offering different pathways to model development. Supervised learning, with its reliance on labeled datasets, remains a cornerstone for most LLM training regimes because it provides a structured way to embed knowledge. However, unsupervised learning, which explores data patterns without predefined labels, is gaining traction for its ability to unearth novel insights.

These intricacies highlight the importance of leveraging advanced tools and technologies. Companies like NVIDIA are at the forefront, offering sophisticated software stacks that automate many aspects of the process and reduce the barriers to entry for those looking to develop or refine LLMs.

Network and storage performance

In the previous section, I touched on the dataset required to train or customize models. While having the right dataset is a critical piece of this process, being able to deliver that dataset fast enough to the GPUs running the model is another critical and yet often overlooked piece. To achieve that, you must consider two components:

- Storage performance

- Network performance

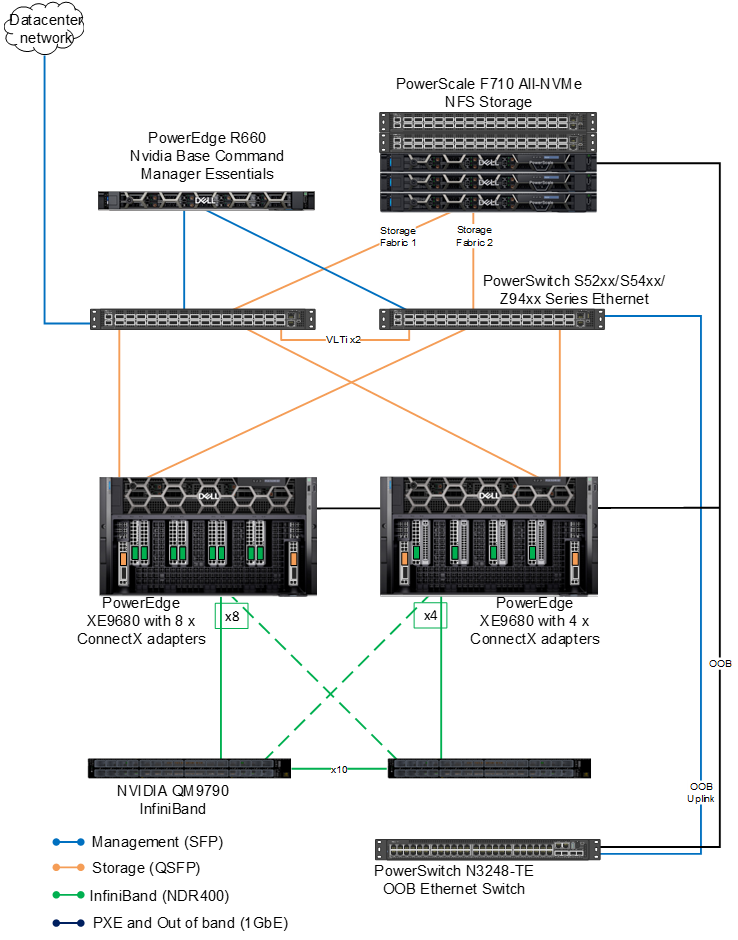

For anyone looking to train a model, a node-to-node (also known as East-West) network infrastructure based on 100Gbps, or better yet 400Gbps, is critical: it provides the bandwidth and throughput needed to keep the GPUs required for training, such as the NVIDIA H100, saturated.

Because customization datasets are typically smaller than full training datasets, a 100Gbps network can be sufficient, but as with everything in technology, your mileage may vary and proper testing is critical to ensure adequate performance for your needs.

Datasets used to train models are typically very large, in the hundreds of gigabytes. For instance, the dataset used to train GPT-4 is said to be over 550GB. With the advance of RDMA over Converged Ethernet (RoCE), GPUs can pull the data directly from storage. And because 100Gbps networks are able to support that load, the bottleneck has moved to the storage.
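To put those numbers in perspective, here is a back-of-the-envelope sketch of how long one pass over a dataset of that size would take at line rate. It assumes an ideal link with no protocol overhead (the hypothetical `efficiency` parameter lets you model a less ideal one), so treat the results as lower bounds rather than measurements.

```python
def transfer_seconds(dataset_gb, link_gbps, efficiency=1.0):
    """Seconds to move dataset_gb gigabytes over a link_gbps link.

    efficiency is an assumed fraction of line rate actually achieved
    (1.0 means an ideal link with no overhead).
    """
    dataset_gigabits = dataset_gb * 8  # bytes to bits
    return dataset_gigabits / (link_gbps * efficiency)


for gbps in (100, 400):
    print(f"{gbps} Gbps: {transfer_seconds(550, gbps):.0f} s")
```

At these speeds the network can deliver the whole dataset in well under a minute, which is only useful if the storage behind it can sustain the same rate.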

Because of the nature of large language models, the datasets used to train them are made of unstructured data, such as SharePoint sites and document repositories, and are therefore most often hosted on network attached storage, such as Dell PowerScale. I am not going to get into further details on the storage part because I’ll be publishing another blog on how to use PowerScale to support model training. But you must design the storage carefully to ensure that it can keep up with the GPUs and the network.

A note about checkpointing

As we previously mentioned, the process of training is iterative. Based on the input provided, the model generates outputs, which are then compared against expected results. Discrepancies between the actual and expected outputs lead to adjustments in the model's weights, gradually improving its accuracy through iterative refinement. This process is repeated across many iterations over the entire training dataset.

A training run (that is, running an entire dataset through a model and updating its weights) is extremely time consuming and resource intensive. According to this blog post, a single training run of ChatGPT-4 costs about $4.6M. Imagine running a few of them in a row, only to hit an issue and have to start again. Because of the cost associated with training runs, it is often a good idea to save the weights of the model at an intermediate stage during the run. Should something fail later on, you can load the saved weights and restart from that point. Snapshotting the weights of a model in this way is called checkpointing. The challenge with checkpointing is performance.
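The save-and-resume idea can be sketched in a few lines. This is a minimal illustration, not the mechanism used by any particular framework: the JSON file format, the two-element weight list, the stand-in "training step", and the function names (`save_checkpoint`, `load_checkpoint`, `run`) are all assumptions made for the example.

```python
import json
import os
import tempfile


def save_checkpoint(path, step, weights):
    """Write to a temporary file, then rename, so a crash never leaves a half-written checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump({"step": step, "weights": weights}, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    """Return (step, weights), or a fresh start if no checkpoint exists."""
    if not os.path.exists(path):
        return 0, [0.0, 0.0]
    with open(path) as f:
        state = json.load(f)
    return state["step"], state["weights"]


def run(path, total_steps, checkpoint_every, fail_at=None):
    """Resume from the latest checkpoint and train until total_steps."""
    step, weights = load_checkpoint(path)
    while step < total_steps:
        if fail_at is not None and step == fail_at:
            raise RuntimeError("simulated failure")
        weights = [w + 0.1 for w in weights]  # stand-in for a real training step
        step += 1
        if step % checkpoint_every == 0:
            save_checkpoint(path, step, weights)
    return step, weights


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "ckpt.json")
    try:
        run(ckpt, total_steps=10, checkpoint_every=4, fail_at=6)
    except RuntimeError:
        pass  # the run died after step 6; the last checkpoint was taken at step 4
    resumed_from, _ = load_checkpoint(ckpt)
    final_step, final_weights = run(ckpt, total_steps=10, checkpoint_every=4)
    print(resumed_from, final_step)
```

The trade-off is visible even in this toy: checkpointing more often loses less work after a failure, but every checkpoint is a write that the storage and network have to absorb.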

A checkpoint is typically stored on an external storage system, so again, storage performance and network performance are critical considerations to offer the proper bandwidth and throughput for checkpointing. For instance, the Llama 2 70B model consumes about 129GB of storage. Because each of its checkpoints is the exact same predictable size, they can be saved quickly (to disk) to ensure the proper performance of the training process.
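A rough sizing sketch shows where a figure of that order comes from and what it implies for storage. It assumes a nominal 70 billion parameters stored at 2 bytes each (16-bit precision) and weights only, with no optimizer state; the 10 GB/s write throughput is purely hypothetical. Under those assumptions the estimate lands in the same range as the figure quoted above.

```python
def checkpoint_bytes(num_parameters, bytes_per_parameter=2):
    """Weights-only checkpoint size; 2 bytes per parameter assumes 16-bit precision."""
    return num_parameters * bytes_per_parameter


def write_seconds(size_bytes, throughput_gb_per_s):
    """Time to write a checkpoint at a sustained throughput in GB/s (decimal)."""
    return size_bytes / (throughput_gb_per_s * 1e9)


size = checkpoint_bytes(70_000_000_000)  # nominal parameter count, an assumption
print(f"{size / 1e9:.0f} GB ({size / 2**30:.1f} GiB)")
print(f"{write_seconds(size, 10):.0f} s at a hypothetical 10 GB/s")
```

If training pauses while the checkpoint is written, that write time is paid at every checkpoint, which is why sustained storage throughput matters as much as capacity.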

NVIDIA software stack

The choice of which framework to use depends on whether you typically lean more towards doing it yourself or buying specific outcomes. The benefit of doing it yourself is ultimate flexibility, sometimes at the expense of time to market, whereas buying an outcome can offer better time to market at the expense of having to choose within a pre-determined set of components. In my case, I have always tended to favor buying outcomes, which is why I want to cover the NVIDIA AI Enterprise (NVAIE) software stack at a high level.

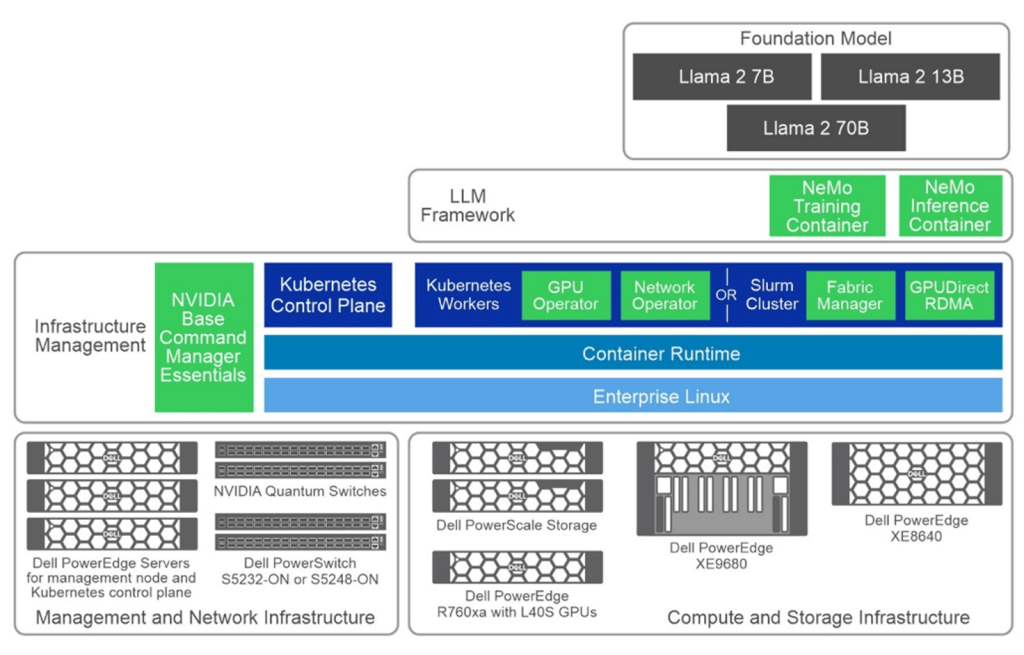

The following figure is a simple layered cake that showcases the various components of the NVAIE, in light green.

The white paper Generative AI in the Enterprise – Model Training Dell Validated Design provides an in-depth exploration of a reference design developed by Dell Technologies in collaboration with NVIDIA. It offers enterprises a robust and scalable framework to train large language models effectively. Whether you're a CTO, AI engineer, or IT executive, this guide addresses the crucial aspects of model training infrastructure, including hardware specifications, software design, and practical validation findings.

Training the Dell Validated Design architecture

The validated architecture aims to give the reader a broad view of model training results. We used two separate configuration types across the compute, network, and GPU stack.

Both configurations use 8x PowerEdge XE9680 servers with 8x NVIDIA H100 SXM GPUs. The difference between the configurations is the network: the first is equipped with eight ConnectX-7 adapters, and the second with four. Both are configured for NDR.
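To make the difference between the eight-adapter and four-adapter configurations concrete, here is a small arithmetic sketch. It assumes that the adapter counts are per server, that each adapter provides a single 400 Gb/s NDR port, and that each server holds eight GPUs; check the design guide for the exact topology.

```python
NDR_GBPS_PER_ADAPTER = 400  # assumption: one 400 Gb/s NDR port per adapter
GPUS_PER_NODE = 8           # assumption: eight GPUs share the node's adapters


def node_bandwidth(adapters):
    """Return (total Gb/s per node, Gb/s available per GPU)."""
    total = adapters * NDR_GBPS_PER_ADAPTER
    return total, total / GPUS_PER_NODE


for adapters in (8, 4):
    total, per_gpu = node_bandwidth(adapters)
    print(f"{adapters} adapters: {total} Gb/s per node, {per_gpu:.0f} Gb/s per GPU")
```

Under these assumptions, halving the adapter count halves the East-West bandwidth available to each GPU, which is the variable the two configurations are designed to isolate.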

On the storage side, the evolution of PowerScale continues to thrive in the AI domain with the launch of its latest line, including the notable PowerScale F710. This addition embraces Dell PowerEdge 16G servers, heralding a new era in performance capabilities for PowerScale's all-flash nodes. On the software side, the F710 benefits from the enhanced performance features found in the PowerScale OneFS 9.7 update.

Key takeaways

The guide provides training times for the Llama 2 7B and Llama 2 70B models over 500 steps, with variations based on the number of nodes and configurations used.

Why only 500 steps? The decision to train models for a set number of steps (500), rather than to train models for convergence, is practical for validation purposes. It allows for a consistent benchmarking metric across different scenarios and models, to produce a clearer comparison of infrastructure efficiency and model performance in the early stages.
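One way to see why a fixed step count makes runs comparable is to convert each run into a throughput figure. The sketch below does that; the batch size, sequence length, and elapsed time are hypothetical placeholders, not results from the guide.

```python
def tokens_per_second(steps, global_batch_size, sequence_length, elapsed_seconds):
    """Average training throughput over a fixed number of steps."""
    tokens_processed = steps * global_batch_size * sequence_length
    return tokens_processed / elapsed_seconds


# Hypothetical run: 500 steps, 128 sequences per step, 4096 tokens per sequence,
# finished in 1000 seconds.
throughput = tokens_per_second(500, 128, 4096, 1000)
print(f"{throughput:.0f} tokens/s")
```

Because every run processes the same number of tokens, a difference in elapsed time maps directly to a difference in infrastructure throughput.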

Efficiency of Model Sizing: The choice of 7B and 70B Llama 2 model architectures indicates a strategic approach to balance computational efficiency with potential model performance. Smaller models like the 7B are quicker to train and require fewer resources, making them suitable for preliminary tests and smaller-scale applications. On the other hand, the 70B model, while significantly more resource-intensive, was chosen for its potential to capture more complex patterns and provide more accurate outputs.

Configuration and Resource Optimization: Comparing two hardware configurations provides valuable insights into optimizing resource allocation. While higher-end configurations (Configuration 1 with 8 adapters) offer slightly better performance, you must weigh the marginal gains against the increased costs. This highlights the importance of tailoring the hardware setup to the specific needs and scale of the project, where sometimes, a less maximalist approach (Configuration 2 with 4 adapters) can provide a more balanced cost-to-benefit ratio, especially in smaller setups. Certainly something to think about!

Parallelism Settings: The specific settings for tensor and pipeline parallelism (as covered in the guide), along with batch sizes and sequence lengths, are crucial for training efficiency. These settings impact the training speed and model performance, indicating the need for careful tuning to balance resource use with training effectiveness. The choice of these parameters reflects a strategic approach to managing computational loads and training times.
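The relationship between these settings can be sketched with simple arithmetic. In the common scheme where each model replica is split across tensor-parallel and pipeline-parallel GPUs, the remaining GPUs form data-parallel replicas. The values below (8 nodes, tensor parallel 8, pipeline parallel 2, global batch 128, micro batch 1) are hypothetical and are not the settings used in the guide.

```python
def data_parallel_size(nodes, gpus_per_node, tensor_parallel, pipeline_parallel):
    """Number of model replicas that the available GPUs can host."""
    world_size = nodes * gpus_per_node
    gpus_per_replica = tensor_parallel * pipeline_parallel
    if world_size % gpus_per_replica != 0:
        raise ValueError("GPU count must be divisible by tensor x pipeline parallel size")
    return world_size // gpus_per_replica


def accumulation_steps(global_batch_size, micro_batch_size, data_parallel):
    """Micro-batches each replica processes before one weight update."""
    per_step = micro_batch_size * data_parallel
    if global_batch_size % per_step != 0:
        raise ValueError("global batch must be divisible by micro batch x data parallel size")
    return global_batch_size // per_step


dp = data_parallel_size(nodes=8, gpus_per_node=8, tensor_parallel=8, pipeline_parallel=2)
print(dp, accumulation_steps(global_batch_size=128, micro_batch_size=1, data_parallel=dp))
```

Raising tensor or pipeline parallelism lets a larger model fit, but it leaves fewer replicas to share the batch, so the settings have to be tuned together rather than one at a time.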

To close

With the scalable and modular infrastructure designed by Dell Technologies and NVIDIA, you are well-equipped to embark on or enhance your AI initiatives. Leverage this blueprint to drive innovation, refine your operational capabilities, and maintain a competitive edge in harnessing the power of large language models.

Authors: Bertrand Sirodot and Damian Erangey