Model Selection Made Easy by Dell Enterprise Hub

Thu, 10 Oct 2024 13:53:50 -0000


With so many models available in Dell Enterprise Hub, which one should you use?

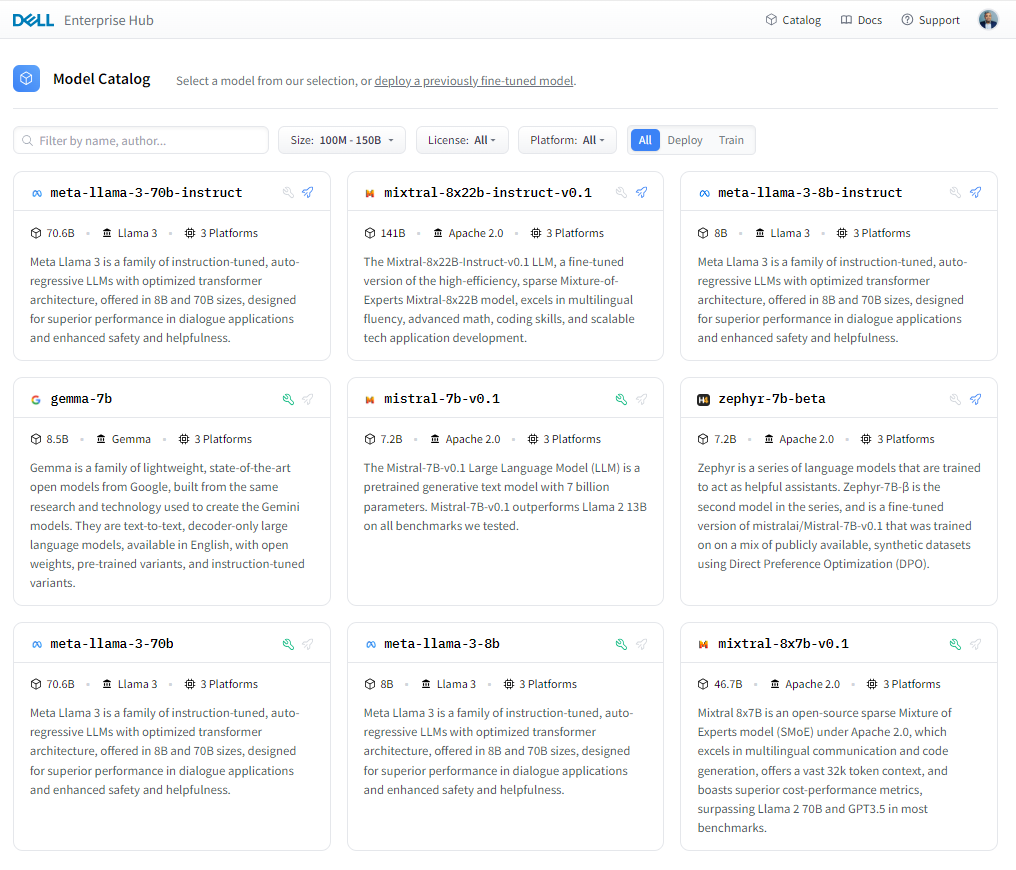

Figure 1. Dell Enterprise Hub https://dell.huggingface.co

In reality, there is no one model to rule them all. Even if there were one, it would be inefficient and ineffective to use the same model for all your applications. The model best suited to a point-of-sale chatbot is very different from the model for a domain-specific knowledge bot. Dell Enterprise Hub has a diverse array of popular high-performance model architectures to enable a wide range of customers and applications. We will continue to add more models to meet the needs of our customers as model architectures, capabilities, and application needs evolve.

Let’s look at some of the most important criteria for selecting the right model for an application.

Size and capabilities

The number of parameters in a model, often referred to as the size of the model, varies from model to model. Larger models with more parameters tend to be more capable; however, they also tend to run slower and have higher computation costs. Sometimes a larger model supports special techniques that a smaller model of the same architecture does not.
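As a rough illustration of how size drives cost, the sketch below estimates the memory needed just to hold a model's weights. The bytes-per-parameter table and the function name `weight_memory_gb` are assumptions made for this example; real deployments also need memory for activations, the KV cache, and runtime overhead.

```python
# Bytes needed to store one parameter at common precisions (assumed values).
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Approximate memory for the weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Compare two hypothetical model sizes at two precisions.
for name, params in [("8B model", 8e9), ("70B model", 70e9)]:
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(params, precision)
        print(f"{name} @ {precision}: ~{gb:.0f} GB")
```

Under these assumptions, a 70B-parameter model needs roughly 140 GB for its weights at 16-bit precision, which is one reason larger models call for larger platforms.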

For example, Llama 3 70B uses Grouped Query Attention (GQA), which improves inference scalability by reducing computational complexity, but not Sliding Window Attention (SWA), which handles sequences of arbitrary length at a reduced inference cost. In comparison, Mistral’s models support GQA, SWA, and the byte-fallback BPE tokenizer, which ensures that characters are never mapped to out-of-vocabulary tokens. As a unique feature, Dell Enterprise Hub maps a model and task to a Dell platform, so model selection may also be limited by hardware requirements.
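To make the benefit of GQA concrete, here is a minimal sketch that compares the key/value cache size per token when every query head has its own key/value head versus when groups of query heads share one. The layer count, head counts, and head size are illustrative assumptions for a 70B-class model, and `kv_cache_bytes_per_token` is a name invented for this example.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes of key/value cache stored for each token in the sequence."""
    # Factor of 2: one key vector and one value vector per layer and KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


# Assumed configuration: 80 layers, 64 query heads, head size 128, 16-bit values.
mha = kv_cache_bytes_per_token(80, num_kv_heads=64, head_dim=128)  # one K/V head per query head
gqa = kv_cache_bytes_per_token(80, num_kv_heads=8, head_dim=128)   # 8 query heads share each K/V head

print(f"Multi-head attention: {mha / 1e6:.2f} MB per token")
print(f"Grouped query attention: {gqa / 1e6:.2f} MB per token")
print(f"Reduction: {mha // gqa}x")
```

With these assumed numbers, sharing each key/value head across eight query heads shrinks the cache by a factor of eight, which leaves more accelerator memory for longer sequences or larger batches.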

Training data

Different models are trained on different datasets, and the quality and quantity of the training data vary from model to model. Llama 3 was trained on 15T tokens, all collected from publicly available sources. Its training dataset is seven times larger than the one used for Llama 2 and includes four times more code. Five percent of the Llama 3 training data consists of high-quality non-English data covering 30 languages. Gemma models are trained on 6T tokens of web documents, code, and mathematics, which help the model learn logical reasoning, symbolic representation, and mathematical queries. Compared to the Gemma and Llama 3 families of models, Mistral is fluent in English, French, Italian, German, and Spanish.

The quality and diversity of data sources are crucial when training powerful language models that handle a variety of tasks and text formats. Depending on the model, training data may be passed through heuristic filters, Not Safe for Work (NSFW) filters, Child Sexual Abuse Material (CSAM) filters, sensitive data filters, semantic deduplication, or text classifiers to improve data quality.
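The sketch below gives a feel for what such a cleaning step might look like. It is not any vendor's actual pipeline: the thresholds and function names are assumptions, and the deduplication shown is a simple exact match on normalized text rather than the semantic deduplication mentioned above.

```python
import hashlib
import re


def passes_heuristics(text: str, min_words: int = 5,
                      max_symbol_ratio: float = 0.3) -> bool:
    """Reject very short documents and documents dominated by symbols."""
    if len(text.split()) < min_words:
        return False
    symbols = sum(1 for ch in text if not (ch.isalnum() or ch.isspace()))
    return symbols / len(text) <= max_symbol_ratio


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def clean_corpus(docs: list[str]) -> list[str]:
    """Keep documents that pass the heuristics and are not exact duplicates."""
    seen: set[str] = set()
    kept = []
    for doc in docs:
        if not passes_heuristics(doc):
            continue
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # duplicate of a document we already kept
        seen.add(digest)
        kept.append(doc)
    return kept
```

Production pipelines typically add model-based classifiers and near-duplicate detection on top of simple rules like these.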

Model evaluation benchmarks

Benchmarks provide good insight into a model’s application performance; however, they should be taken with a grain of salt. The datasets used in these benchmarks are public and can end up in the data used to train these models, which inflates benchmark scores.
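One common way to look for this kind of leakage is to check whether benchmark items share long word sequences with the training data. The following is a minimal sketch of that idea; the n-gram length, whitespace tokenization, and function names are assumptions chosen for illustration.

```python
def ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """All runs of n consecutive lowercase words in the text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(benchmark_items: list[str],
                       training_docs: list[str], n: int = 8) -> float:
    """Fraction of benchmark items sharing at least one n-gram with training data."""
    train_ngrams: set[tuple[str, ...]] = set()
    for doc in training_docs:
        train_ngrams |= ngrams(doc, n)
    if not benchmark_items:
        return 0.0
    flagged = sum(1 for item in benchmark_items if ngrams(item, n) & train_ngrams)
    return flagged / len(benchmark_items)
```

A high rate suggests that scores on the affected items reflect memorization rather than capability, so those items deserve less weight when comparing models.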

The assumption that the test prompts within a benchmark represent a random sample is incorrect. The correlation in model performance across test prompts is non-random, and accounting for correlation across tests reveals variability in model rankings on major benchmarks. This raises serious concerns about the validity of existing benchmarking studies and the future of evaluation using benchmarks.

Massive Multitask Language Understanding (MMLU), the most popular benchmark, which uses multiple-choice questions and answers to evaluate models, has been shown to be highly sensitive to minute details in the questions asked. Simple tweaks like changing the order of choices or the method of answer selection result in ranking shifts of up to 8 positions. To learn more about this phenomenon, check out these arXiv papers: Examining the robustness of LLM evaluation and When Benchmarks are Targets: Revealing the Sensitivity of Large.
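A simple way to probe this sensitivity on your own evaluation set is to shuffle the answer choices and re-score the model. The sketch below assumes each item is a `(question, choices, answer_index)` tuple and that `model_fn` is a hypothetical callable returning the index of the chosen option; neither reflects a specific benchmark harness.

```python
import random


def shuffle_choices(question, choices, answer_index, seed):
    """Reorder the choices and return the new position of the correct answer."""
    rng = random.Random(seed)
    order = list(range(len(choices)))
    rng.shuffle(order)
    new_choices = [choices[i] for i in order]
    new_answer_index = order.index(answer_index)
    return question, new_choices, new_answer_index


def accuracy(model_fn, items, seed):
    """Accuracy of model_fn on items after shuffling each item's choices."""
    correct = 0
    for question, choices, answer_index in items:
        q, shuffled, new_answer = shuffle_choices(question, choices, answer_index, seed)
        if model_fn(q, shuffled) == new_answer:
            correct += 1
    return correct / len(items)


def always_first(question, choices):
    """Toy stand-in for a position-biased model: always picks the first option."""
    return 0
```

Running `accuracy` over several seeds and comparing the spread gives a quick sense of how much a score depends on answer ordering rather than on the model's knowledge.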

Model architectures

Most new LLMs are based on the transformer architecture, yet they differ architecturally in many ways. Traditional large language models (LLMs) often use an encoder-decoder architecture. The encoder processes the entire input, and the decoder generates the output. A decoder-only model skips the encoder and generates the output directly from the input it receives, one piece at a time. Llama 3 is a decoder-only model, which makes it well-suited for tasks that involve generating text for chatbots, dialogue systems, machine translation, text summarization, and creative text generation, but not well-suited for tasks that require a deeper understanding of context.
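The "one piece at a time" behavior of a decoder-only model can be sketched as a simple loop: predict the next token from everything generated so far, append it, and repeat. In this illustration a toy lookup table stands in for the model, and `generate`, `toy_next_token`, and the `<eos>` marker are names assumed for the example.

```python
def generate(next_token_fn, prompt_tokens, max_new_tokens, eos_token="<eos>"):
    """Autoregressive decoding: each new token is predicted from all prior tokens."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        token = next_token_fn(tokens)
        if token == eos_token:
            break  # the model signalled that the sequence is complete
        tokens.append(token)
    return tokens


# Toy stand-in for a trained model: the next token depends only on the last one.
NEXT = {"hello": "world", "world": "!", "!": "<eos>"}


def toy_next_token(tokens):
    return NEXT.get(tokens[-1], "<eos>")


print(generate(toy_next_token, ["hello"], max_new_tokens=10))
# prints ['hello', 'world', '!']
```

A real decoder-only LLM replaces the lookup table with a transformer that scores every token in its vocabulary at each step, but the outer loop has the same shape.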

BERT and T5 are among the best-known earlier transformer models: T5 uses an encoder-decoder architecture, while BERT is encoder-only. Gemma is a decoder-only LLM. Techniques like GQA and SWA implemented within the model give Mistral better inference performance than its peers. Mixture of Experts (MoE) models like Mixtral 8x22B are sparse MoE models, which reduce inference costs by activating only 2 of their 8 experts per token, so only about 39B of the model’s 141B parameters are active during inference. On the other hand, fine-tuning and training are a lot more complex for MoE models than for non-MoE models. New techniques are constantly evolving.
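To show why a sparse MoE layer is cheaper at inference time, here is a minimal sketch of top-k routing: a router scores every expert, but only the best-scoring few actually run. The scalar "experts" and the function names are simplifications assumed for illustration; real experts are feed-forward networks and the router is a learned layer.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_logits, experts, top_k=2):
    """Run only the top_k highest-scoring experts and mix their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen


# Eight toy experts; expert k multiplies its input by k + 1.
experts = [lambda x, k=k: x * (k + 1) for k in range(8)]
output, chosen = moe_forward(2.0, [0, 0, 5, 0, 0, 5, 0, 0], experts)
print(chosen, output)
```

Because the six unselected experts are never evaluated, the compute per token scales with the number of active experts rather than the total, even though all experts must still be held in memory.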

Context windows

LLMs are stateless: they retain nothing from one request to the next. Short-term memory is built into the application, which feeds previous inputs and outputs back to the LLM to provide context and the illusion of a continuous conversation. A larger context window allows the model to consider a broader context and could potentially lead to a more accurate response. Llama 2 7B has a context window of 4096 tokens, meaning the model can consider up to 4096 tokens of text while generating a response, whereas Gemma 7B has a context window of 8192 tokens. A RAG-based AI solution tends to need a much larger context window to accommodate the retrieved content and support high-quality generation from the LLM. Mixtral 8x22B has a 64K context window. Both Llama 3 8B and 70B have 8K context windows, but there are plans to increase that in future releases.
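The application-side memory described above can be sketched as a function that rebuilds the prompt on every turn, keeping only as much recent history as fits in the context window. The word-count tokenizer is a crude stand-in, and `build_prompt` and its parameters are assumptions for this example; a real application would count tokens with the model's own tokenizer.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def build_prompt(history, new_message, context_window, reserve_for_reply):
    """Keep the most recent turns that fit alongside the new message."""
    budget = context_window - reserve_for_reply - count_tokens(new_message)
    kept = []
    for turn in reversed(history):  # walk from the newest turn to the oldest
        cost = count_tokens(turn)
        if cost > budget:
            break  # older turns no longer fit and are dropped
        kept.append(turn)
        budget -= cost
    return list(reversed(kept)) + [new_message]


history = ["a b c", "d e", "f g h i"]
print(build_prompt(history, "j k", context_window=10, reserve_for_reply=2))
# prints ['d e', 'f g h i', 'j k']
```

With a larger context window the same function keeps more turns, or leaves room for retrieved documents in a RAG pipeline, before anything has to be dropped.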

Vocab size and head size

Vocab size refers to the number of distinct words or tokens that the model can recognize and work with. It is essentially the breadth of the LLM’s vocabulary, and it is one of the most important yet often overlooked criteria. A larger vocabulary translates to a more nuanced understanding of language by the LLM; however, larger vocab sizes come with higher training costs.
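Part of that cost is easy to quantify: every token in the vocabulary needs its own row in the embedding table, and usually another in the output projection. The sketch below counts those parameters; the vocabulary sizes and hidden size are illustrative assumptions, and `embedding_params` is a name made up for this example.

```python
def embedding_params(vocab_size: int, hidden_size: int, tied: bool = False) -> int:
    """Parameters in the input embedding table plus the output projection."""
    # When weights are tied, the input and output layers share one table.
    tables = 1 if tied else 2
    return tables * vocab_size * hidden_size


# Assumed hidden size of 4096 with a 32K versus a 128K vocabulary.
small = embedding_params(32_000, 4096)
large = embedding_params(128_000, 4096)
print(f"32K vocab:  {small / 1e6:.0f}M parameters")
print(f"128K vocab: {large / 1e6:.0f}M parameters")
```

Under these assumptions, quadrupling the vocabulary adds several hundred million parameters before a single transformer layer is counted.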

Another interesting criterion is head size, which is specifically associated with the self-attention layer. The self-attention layer allows the model to identify relationships between parts of the input sequence. Head size determines the dimensionality of the vectors that each attention head in this layer works with. Imagine these vectors as representations of the input, where each dimension captures a different aspect. Head size influences the model’s capacity to capture different aspects of the relationships within the input sequence. More heads generally allow for a richer understanding, at the cost of increased computational complexity.
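In many transformer implementations, head size is simply the model's hidden size divided by the number of attention heads, so each head works on its own slice of the representation. The sketch below shows that split on a plain list; the function name and the example dimensions are assumptions for illustration.

```python
def split_heads(vector, num_heads):
    """Split one hidden vector into equal slices, one per attention head."""
    if len(vector) % num_heads != 0:
        raise ValueError("hidden size must be divisible by the number of heads")
    head_size = len(vector) // num_heads
    return [vector[i * head_size:(i + 1) * head_size] for i in range(num_heads)]


hidden = list(range(8))        # toy hidden vector with 8 dimensions
heads = split_heads(hidden, 4)  # 4 heads, so the head size is 2
print(heads)
# prints [[0, 1], [2, 3], [4, 5], [6, 7]]
```

For a fixed hidden size, adding heads makes each slice smaller, so the number of heads and the head size trade off against each other unless the hidden size grows too.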

Licensing

Open-source model licenses define how the users interact with the models and use them in their applications. The licenses grant them specific rights and responsibilities, ensuring transparency and collaboration with the open-source community. The models made available on Dell Enterprise Hub have the following license categories:

- Apache 2.0: Permissive

  - Allows users to use, modify, and distribute the code for any purpose, including commercially.

  - Requires users to maintain copyright and license notices in the code.

  - Offers a patent grant covering certain patent rights associated with the licensed software.

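- Llama 2 and Llama 3: Custom community licenses

  - More restrictive than Apache 2.0, but not copyleft: users may use, modify, and distribute the models, including commercially, subject to Meta’s acceptable use policy.

  - Require a separate license from Meta for products or services with more than 700 million monthly active users.

  - Require redistributed models and derivative works to include a copy of the license; the Llama 3 license also requires "Built with Meta Llama 3" attribution.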

- Gemma: The Gemma license is similar to Apache 2.0 in terms of permissiveness, allowing free use and modification with certain exceptions that potentially limit certain commercial uses.

We encourage developers to review the respective licenses in detail on the following pages:

- Mistral: https://help.mistral.ai/en/articles/156914-under-which-license-are-the-open-models-available

- Falcon: https://falconllm.tii.ae/acceptable-use-policy.html

- Llama 2: https://llama.meta.com/llama2/license/

- Llama 3: https://llama.meta.com/llama3/license/

- Gemma: https://www.kaggle.com/models/google/gemma/license/consent

Conclusion

A multitude of pieces go into building an AI solution in addition to the model. An AI solution powered by LLMs might rely not on a single model, but on a combination of different models working together to deliver an elegant solution to a business challenge. Dell Technologies considered all of the criteria mentioned in this blog when creating this curated set of models for Dell Enterprise Hub in partnership with Hugging Face. Newer and more powerful open-source LLMs are expected to be released each month, and Dell Technologies and Hugging Face will continue to add optimized support for more models to Dell Enterprise Hub.

Eager for more? Check out the other blogs in this series to get inspired and discover what else you can do with the Dell Enterprise Hub and Hugging Face partnership.

Author: Bala Rajendran, AI Technologist

To see more from this author, check out Bala Rajendran on Info Hub.