Firmware Device Order for PERC H750, H755, H350, and H355 Storage Controllers (Linux Only)

Thu, 20 Jul 2023 20:10:45 -0000

Summary

Dell Technologies provides a feature for the PERC 11 family of controllers that gives users a limited ability to influence the ordering of devices within Linux operating systems.

This DfD tech note is intended to educate customers about this feature and its caveats. It also provides the necessary background about device enumeration.

Introduction

PERC 11-series controllers provide a feature called Firmware Device Order (FDO) that gives operators limited control over the order of host-visible SCSI devices in compatible Linux distributions[1]. When enabled, this feature influences the Linux kernel’s SCSI device enumeration (that is, the /dev/sdXX ordering).

This feature is particularly targeted to customers transitioning from PERC 9/10 controllers to PERC 11 on Dell’s 14G PowerEdge servers, while looking to maintain a consistent device order enumeration.

This document describes the design, control, and limitations of this feature.

Background

Linux device enumeration

The PERC device driver presents to the Linux kernel a pseudo-SCSI (Small Computer System Interface) adapter where the configured Virtual Drives (VDs) and Non-RAID drives are individual SCSI targets.

The PERC device driver does not directly control SCSI disk drive enumeration. It is the kernel’s prerogative, for example, to use /dev/sda to refer to the first discovered drive. The feature described in this DfD instead enforces the order in which SCSI disk drives are revealed to the kernel.

PERC 11

PERC 11-series controllers support the concurrent existence of Non-RAID drives and Virtual Drives (VDs).

Under Linux, without Firmware Device Order enabled, the PERC driver enumerates any configured Non-RAID drives first, followed by VDs. This results in the Non-RAID drives having lower /dev/sdXX device assignments than VDs when listed alphabetically.

The ordering logic within the two groups – Non-RAID and Virtual Drives – differs between PERC H75x and PERC H35x. For details, see the following table:

Table 1. PERC 11-series default Linux enumeration

Group | Property | PERC H75x | PERC H35x |

1st | Type | Non-RAID | Non-RAID |

1st | Ordering | Enclosure/Slot position order | Discovery order |

2nd | Type | Virtual Drives | Virtual Drives |

2nd | Ordering | Reverse creation order | Order of creation |

Although creating VDs while the OS is running is a supported PERC operation, note that newly created devices may not adhere to the ordering rules in Table 1. After a restart, those rules apply.

Creating a new VD after deleting VDs out of order (that is, deleting a VD other than the last VD, then creating a new VD) might alter the presentation order.

The following table represents an example configuration where a PERC H75x controller has two VDs created and two Non-RAID drives. This ordering is what will appear in a Linux-based operating system enumeration after booting the system.

Table 2. PERC H75x default Linux enumeration example

Type | Description | Block Device |

Non-RAID | Non-RAID in backplane slot 6 | /dev/sda |

Non-RAID | Non-RAID in backplane slot 7 | /dev/sdb |

Virtual Drives | Second VD created | /dev/sdc |

Virtual Drives | First VD created | /dev/sdd |

Note that for demonstration purposes, the block device enumeration is assumed to start at /dev/sda. That may not be the case in your system if the Linux kernel discovered other SCSI-attached devices before enumerating the drives attached to PERC.
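The default H75x rules from Table 1 can be illustrated with a short Python sketch that reproduces Table 2. This is only a model for reasoning about the ordering, not driver code: the `Drive` class and its fields are hypothetical, enclosure position is reduced to a single slot number, and enumeration is assumed to start at /dev/sda.

```python
from dataclasses import dataclass
from string import ascii_lowercase


@dataclass(frozen=True)
class Drive:
    kind: str          # "non-raid" or "vd" (hypothetical labels)
    label: str         # human-readable description
    slot: int = 0      # backplane slot, used for Non-RAID drives only
    created: int = 0   # creation sequence for VDs (1 = first created)


def default_order_h75x(drives):
    """Order drives per the H75x column of Table 1 (FDO disabled)."""
    # Non-RAID drives come first, sorted by slot position.
    non_raid = sorted((d for d in drives if d.kind == "non-raid"),
                      key=lambda d: d.slot)
    # VDs follow, in reverse creation order.
    vds = sorted((d for d in drives if d.kind == "vd"),
                 key=lambda d: d.created, reverse=True)
    return non_raid + vds


def assign_block_devices(ordered, first_letter="a"):
    """Pair each drive with /dev/sdX (sketch covers sda..sdz only)."""
    start = ascii_lowercase.index(first_letter)
    return [(f"/dev/sd{ascii_lowercase[start + i]}", d.label)
            for i, d in enumerate(ordered)]


drives = [
    Drive("vd", "First VD created", created=1),
    Drive("vd", "Second VD created", created=2),
    Drive("non-raid", "Non-RAID in backplane slot 7", slot=7),
    Drive("non-raid", "Non-RAID in backplane slot 6", slot=6),
]

for dev, label in assign_block_devices(default_order_h75x(drives)):
    print(dev, label)
```

Passing a different `first_letter` models the case where the kernel has already assigned earlier letters to other SCSI-attached devices.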

Introducing the Firmware Device Order feature

Functionality

Firmware Device Order (FDO) alters the order of device presentation to the Linux kernel. It adds a third type: the designated boot device. When enabled, the following order is used:

- Designated boot device

- Virtual Drives (VDs)

- Non-RAID drives

Table 3. PERC 11-series FDO Linux enumeration

Order | FDO enabled |

1st | Boot device |

2nd | Virtual Drives |

3rd | Non-RAID |

Firmware Device Order requires supported PERC 11-series controller firmware and an FDO-aware Linux device driver. See the section Minimum required component versions.

Boot device

The boot device specified in the PERC controller will be presented first to the Linux kernel. The boot device may be chosen by the operator, or if none is chosen, the PERC controller automatically determines its designated boot device. Either a Virtual Drive or a Non-RAID drive can be a boot device. The PERC controller and driver use this information regardless of the system’s current boot mode and independent of whether the boot device was used to boot the current running operating system.

See the PERC 11 User’s Guide for further instructions about how to designate a boot device.

Virtual drives

After the optional boot device, the configured Virtual Drives will be presented to the Linux kernel in the order of creation (that is, the first VD created is presented first, the second VD created is presented second, and so on).

Non-RAID drives

Non-RAID drives are presented after the VDs. Non-RAID drives are presented in the order of PERC’s discovery of the drives during system boot. This may not be the same as the ordering of enclosure/slot position of the drives.

Summary

The following table summarizes the Firmware Device Order behavior for PERC H75x and PERC H35x.

Table 4. PERC 11-series Firmware Device Order Linux enumeration

Group | Property | PERC H75x | PERC H35x |

1st | Type | Boot device | Boot device |

2nd | Type | Virtual Drives | Virtual Drives |

2nd | Ordering | Creation order | Creation order |

3rd | Type | Non-RAID | Non-RAID |

3rd | Ordering | Discovery order, not based on slot | Discovery order, not based on slot |
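The FDO presentation order can be modeled in a few lines of Python. The sketch below is illustrative only: the dictionary keys (`id`, `kind`, `created`, `discovered`) and the drive identifiers are hypothetical, and it does not model the controller automatically choosing a boot device when the operator has not designated one.

```python
def fdo_order(drives, boot_id=None):
    """Return drive ids in FDO presentation order.

    drives: list of dicts with hypothetical keys
      "id"         - identifier used only in this sketch
      "kind"       - "vd" or "non-raid"
      "created"    - creation sequence (VDs)
      "discovered" - discovery sequence at boot (Non-RAID drives)
    boot_id: id of the designated boot device, if any
    """
    # 1st: the designated boot device (either a VD or a Non-RAID drive).
    boot = [d for d in drives if d["id"] == boot_id]
    rest = [d for d in drives if d["id"] != boot_id]
    # 2nd: remaining VDs in creation order.
    vds = sorted((d for d in rest if d["kind"] == "vd"),
                 key=lambda d: d["created"])
    # 3rd: remaining Non-RAID drives in discovery order, not slot order.
    non_raid = sorted((d for d in rest if d["kind"] == "non-raid"),
                      key=lambda d: d["discovered"])
    return [d["id"] for d in boot + vds + non_raid]


example = [
    {"id": "nr-slot6", "kind": "non-raid", "discovered": 2},
    {"id": "nr-slot7", "kind": "non-raid", "discovered": 1},
    {"id": "vd1", "kind": "vd", "created": 1},
    {"id": "vd2", "kind": "vd", "created": 2},
]

print(fdo_order(example, boot_id="vd2"))
```

In this example the drive in slot 7 is assumed to be discovered before the drive in slot 6, which shows how discovery order can differ from slot order.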

How to enable Firmware Device Order

Overview

Firmware Device Order (FDO) is disabled by default. To enable FDO you can use the PERC System Setup Utility or the perccli utility. Note that FDO requires:

- Using or installing a compatible Linux-based operating system

- Using a compatible PERC Linux device driver

- Selecting a preferred boot device (see the Boot device section)

System setup

The PERC 11-series firmware includes a new Human Interface Infrastructure (HII) setting to enable the Firmware Device Order feature. This setting is on the Advanced Controller Properties page.

- Open the Dell PERC 11 Configuration Utility.

- Select Main Menu > Controller Management > Advanced Controller Properties.

- Select Firmware Device Order, then select the option desired.

- Confirm the change by selecting Apply Change.

Note that a system restart is necessary for an FDO enable or disable operation to take effect. See the section Manage PERC 11 Controllers Using HII Configuration Utility of the User's Guide for steps to enter and navigate in HII.

The perccli utility

You can use the perccli utility to query the current Firmware Device Order setting, and to enable/disable the feature (see the Minimum required component versions section).

To query the current setting:

# perccli /cx show deviceorderbyfirmware

To enable Firmware Device Order:

# perccli /cx set deviceorderbyfirmware=on

To disable Firmware Device Order:

# perccli /cx set deviceorderbyfirmware=off

where x is the controller instance for the PERC 11-series controller being targeted.

Note: A system restart is necessary for an FDO enable or disable operation to take effect.
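For scripted use, the three perccli invocations above can be assembled from a controller index. The following Python sketch only builds and prints the command lines; it does not run perccli. The helper name `fdo_command` and the controller index 0 are assumptions for illustration.

```python
def fdo_command(controller, action):
    """Build the perccli argument list for an FDO query or change.

    controller: controller instance number (the x in /cx)
    action: "show", "on", or "off"
    """
    if not isinstance(controller, int) or controller < 0:
        raise ValueError("controller must be a non-negative integer")
    target = f"/c{controller}"
    if action == "show":
        return ["perccli", target, "show", "deviceorderbyfirmware"]
    if action in ("on", "off"):
        return ["perccli", target, "set", f"deviceorderbyfirmware={action}"]
    raise ValueError(f"unknown action: {action!r}")


# Controller 0 is a hypothetical example; use the instance of your PERC 11.
for action in ("show", "on", "off"):
    print(" ".join(fdo_command(0, action)))
```

The returned list could be passed to `subprocess.run` on a system where perccli is installed; remember that a restart is still required before an enable or disable takes effect.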

Operating system support

Overview

The Firmware Device Order feature is supported only on Linux distributions. Enabling the feature on systems that run other operating systems, such as Microsoft Windows or VMware ESXi, will result in neither VDs nor Non-RAID drives being visible in these operating systems. If this is attempted, disable the feature and reboot your system. The contents of the underlying storage devices are not affected by the setting.

Linux

A Firmware Device Order compatible device driver must be used on Linux-based distributions. Using an incompatible driver causes both VDs and Non-RAID drives to be hidden from the host.

The following table lists the minimum versions of the major Linux distributions that support the Firmware Device Order feature.

Table 5. FDO enabled distributions

Distribution | Inbox driver version |

RHEL 8.2 | 07.710.50.00-rh1 |

RHEL 7.8 | 07.710.50.00-rh1 |

SLES 15 SP2 | 07.713.01.00-rc1 |

Ubuntu 20.04 LTS | 07.710.06.00-rc1 |

Notes:

Not all operating system distribution release versions listed in Table 5 may be supported by your specific system and controller combination. See the Linux OS Support Matrix on Dell.com to confirm the supported Linux distributions for your system and PERC controller.

Linux kernels 5.x and later probe for block devices asynchronously. Because of this, device ordering can be inconsistent even with FDO enabled. See the OS documentation for persistent device naming alternatives.
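One common persistent alternative on Linux is the set of udev-maintained symlinks under /dev/disk/by-id, which keep the same name regardless of the /dev/sdXX letter assigned at boot. The Python sketch below lists those links and the kernel device each one currently resolves to. The function name `stable_names` is hypothetical, and the available link names depend on your distribution and hardware.

```python
import os


def stable_names(by_id_dir="/dev/disk/by-id"):
    """Map each persistent symlink name to the device it currently points to."""
    mapping = {}
    if not os.path.isdir(by_id_dir):
        # Directory is absent on systems without udev-managed disk links.
        return mapping
    for name in sorted(os.listdir(by_id_dir)):
        link = os.path.join(by_id_dir, name)
        if os.path.islink(link):
            # realpath follows the symlink to the kernel name, e.g. /dev/sda.
            mapping[name] = os.path.realpath(link)
    return mapping


for name, device in stable_names().items():
    print(f"{name} -> {device}")
```

Referring to a disk by one of these names (or by filesystem UUID in /etc/fstab) avoids depending on the enumeration order at all.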

Unsupported operating systems

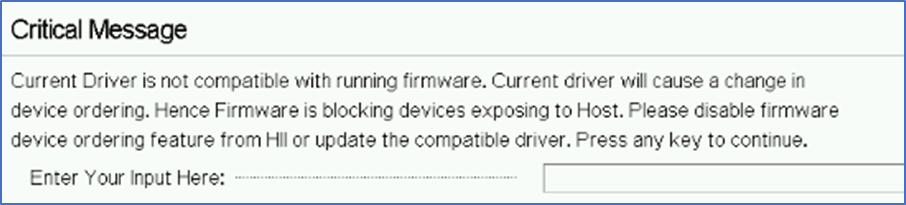

Attempting to boot into an operating system running a device driver that does not support Firmware Device Order will result in no storage being presented to the operating system. If PERC is your boot controller, the OS will fail to start correctly. After the system reboots, the PERC 11- series will display a warning indicating that an incompatible operating system driver was detected.

Figure 1. Critical message displayed with incompatible operating system

If this message appears on your system, it means that you are running an incompatible operating system with Firmware Device Order enabled. (To disable Firmware Device Order, see the System setup section).

Windows

Microsoft Windows is not supported with Firmware Device Order.

VMware ESXi

VMware ESXi is not supported with Firmware Device Order.

Minimum required component versions

This section lists the minimum PERC 11-series component versions required to use the Firmware Device Order (FDO) feature.

Table 6. FDO minimum component versions

Component | PERC H75x | PERC H35x |

Controller Firmware | 52.16.1-4074 | 52.19.1-4171 |

Linux Device Driver | 07.707.51.00-rc1 | 07.707.51.00-rc1 |

perccli Utility | 7.1604.00 | 7.1604.00 |

Note: Not all firmware, driver, and utility version combinations may be supported by your system and controller combination. Visit support.dell.com for the latest component releases for your system and PERC controller.
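A driver version string can be checked against the Table 6 minimum with a simple comparison. The Python sketch below assumes that the dotted fields compare numerically from left to right and that the suffix after the hyphen (such as -rc1 or -rh1) can be ignored; neither assumption is confirmed by this document, and meeting the minimum does not replace checking the support matrix. The older version string in the example is hypothetical.

```python
MIN_FDO_DRIVER = "07.707.51.00-rc1"  # Linux Device Driver minimum from Table 6


def driver_version_key(version):
    """Turn '07.710.50.00-rh1' into (7, 710, 50, 0), ignoring the suffix."""
    numeric = version.split("-", 1)[0]
    return tuple(int(part) for part in numeric.split("."))


def driver_meets_fdo_minimum(installed, minimum=MIN_FDO_DRIVER):
    """True if the installed driver version is at or above the minimum."""
    return driver_version_key(installed) >= driver_version_key(minimum)


# First value is the RHEL inbox driver from Table 5; second is hypothetical.
for candidate in ("07.710.50.00-rh1", "07.705.02.00-rc1"):
    print(candidate, driver_meets_fdo_minimum(candidate))
```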

Summary

The new PERC 11-series Firmware Device Order (FDO) feature enables an alternate presentation order of Virtual Drives and Non-RAID drives. This feature is particularly targeted to customers on Dell’s 14G PowerEdge servers who want to transition to PERC 11 from PERC 9 or PERC 10. The FDO feature requires supporting PERC 11-series firmware, an FDO-aware device driver, and a Linux-based operating system. If you prefer, the feature can be turned off at any time to resume traditional enumeration, or to transition from a Linux environment to another operating system.

[1] Includes PERC H750, PERC H755, PERC H350, and PERC H355 storage controllers. See the Minimum required component versions section.