Dell and Databricks Announce a Multicloud Analytics and AI Solution

Mon, 22 May 2023 16:58:09 -0000


Dell and Databricks' partnership will bring customers cloud-based analytics and AI using Databricks with data stored in Dell Object Storage.

The biggest business opportunity for enterprises today lies in harnessing data for business insight and gaining a competitive edge. At the same time, the data landscape is more distributed and fragmented than ever. Data is spread across on-premises systems and multiple public clouds, which makes it harder to access and process efficiently.

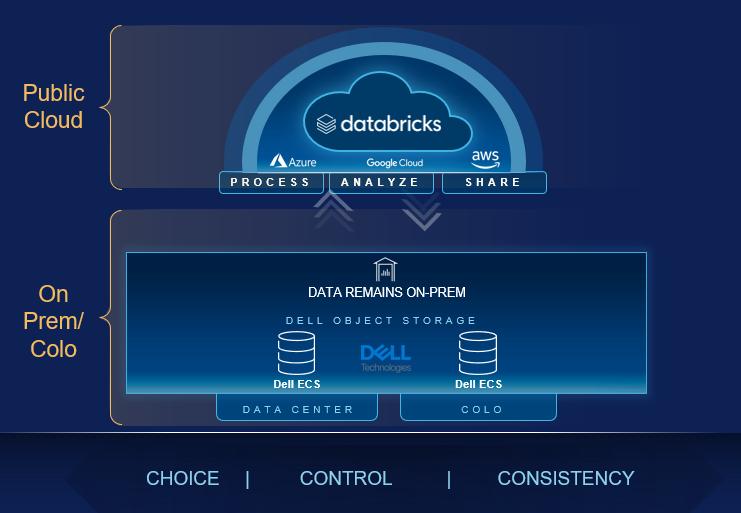

Enterprises require solutions that enable a multicloud data strategy by design. That means using data wherever it is stored, across clouds, with a consistent management, security, and governance experience for building analytics and AI/ML workloads.

At Dell, the business of data is not new to us. We store and process a large majority of the world’s data on our systems. And we work with customers across the globe every day to accelerate time to value from their data. Dell is building an open ecosystem of partners who together can help address next-gen challenges in data management.

Dell and Databricks

Today, during the opening keynote at Dell Technologies World 2023, Dell Technologies announced a strategic and multi-phase partnership with Databricks.

I want to share some additional details around that announcement.

Customers today can leverage native Databricks capabilities to process, analyze and share data stored in Dell object storage, located on-premises or in a cloud-adjacent datacenter like Faction, without moving that data into the cloud. This brings significant benefits for customers, including on-demand compute for on-premises data assets, the ability to securely share data within and outside the enterprise, fewer data movements and copies, compliance with data localization regulations, multicloud resiliency, the adoption of open architecture standards, and an overall reduction in the cost and complexity of their data landscape. Key to this integration is support for Delta Sharing, an open protocol for securely sharing live data with any computing platform.
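
To make Delta Sharing more concrete, here is a minimal sketch of the recipient side, assuming the open-source `delta-sharing` Python connector and a profile file issued by the data provider. The profile is a small JSON document (`shareCredentialsVersion`, `endpoint`, `bearerToken`) defined by the Delta Sharing protocol, and a shared table is addressed as `<profile-path>#<share>.<schema>.<table>`. The endpoint, token, and the `sales_share.retail.orders` table are hypothetical placeholders, and the helper names `write_profile`, `table_url`, and `load_shared_table` are illustrative only, not part of any Dell or Databricks product.

```python
import json
from pathlib import Path


def write_profile(endpoint: str, bearer_token: str, directory: Path) -> Path:
    """Write a Delta Sharing profile file (credentials format version 1).

    Hypothetical helper: in practice the data provider hands this file to recipients.
    """
    profile = {
        "shareCredentialsVersion": 1,
        "endpoint": endpoint,
        "bearerToken": bearer_token,
    }
    path = directory / "config.share"
    path.write_text(json.dumps(profile, indent=2))
    return path


def table_url(profile_path: Path, share: str, schema: str, table: str) -> str:
    """Build the '<profile-path>#<share>.<schema>.<table>' address used by delta-sharing clients."""
    return f"{profile_path}#{share}.{schema}.{table}"


def load_shared_table(url: str):
    """Read a shared table into a pandas DataFrame.

    Requires the third-party 'delta-sharing' package and a reachable sharing server,
    so it is imported lazily and only works with a real profile.
    """
    import delta_sharing  # pip install delta-sharing

    return delta_sharing.load_as_pandas(url)
```

With a real profile, passing the result of `table_url(...)` to `load_shared_table` would return the shared table as a DataFrame. The same connector also offers `delta_sharing.SharingClient(profile_path).list_all_tables()` for discovering which tables a share contains before reading any of them.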

And that’s not all: our teams at Dell and Databricks are excited to engineer a deeper integration that will deliver a seamless experience when using Dell object storage within the Databricks Lakehouse Platform. This will transform how customers manage on-premises data together with cloud platforms.

Dell and Databricks recognize that customers operate in a multi-faceted world and benefit from being able to access data wherever it resides to gain a competitive advantage. The two companies will partner closely in the market to bring these solutions to joint customers.

“Databricks is focused on helping businesses extract the most valuable insights from their data, wherever it resides,” said Adam Conway, senior vice president, product at Databricks. “This partnership provides the ability to leverage cloud and on-premises data together with best-of-breed technologies, and to securely share that data through Delta Sharing. Combining the best of Dell and Databricks changes the data landscape for customers as they operate in today’s multicloud world.”

Data Management with Dell Technologies

This partnership is a great addition to Dell’s open ecosystem of technologies in the data space. Together with Dell’s market-leading portfolio of storage, compute and services, this ecosystem aims to provide best-in-class data management solutions to our customers and help them build a multicloud data strategy by design. The data space is buzzing with new innovations and technologies aimed at improving user experience, productivity, and business value by orders of magnitude. Partner with Dell Technologies to create the right multicloud data strategy for your enterprise and unleash the next wave of transformation and competitive edge for your business. Visit us at dell.com/datamanagement to stay up to date on the latest in this space.

To learn more about how Dell and Databricks can help your organization streamline its data strategy, read the "Power Multicloud Data Analytics and AI using Dell Object Storage and Databricks" white paper, or contact the Dell Technologies data management team at Dell.data.management@dell.com.