Amazon EKS Connector with EKS Anywhere on Dell PowerFlex

Tue, 17 Jan 2023 06:19:04 -0000


Why Amazon EKS Anywhere?

Digital transformation and application modernization have accelerated in recent years. As businesses transform digitally, demand has grown for modern, fault-tolerant infrastructure that can run applications at scale.

With an ever-evolving technology landscape, it becomes important for industries to be well-positioned and equipped with the tools and capabilities to scale as the business grows.

Kubernetes is an effective container orchestration platform for running microservices and modern applications. It is available as a managed service from every major cloud provider and can also be deployed in private cloud environments.

Amazon Elastic Kubernetes Service (EKS) Anywhere extends this model. Amazon EKS Anywhere allows organizations to run an Amazon EKS cluster anywhere, including in an on-premises private cloud.

What is Amazon EKS Connector?

In this blog, we discuss the Amazon EKS Connector, which enables you to use the Amazon EKS Console to view your entire Kubernetes infrastructure from a single pane of glass. Amazon EKS Anywhere and Amazon EKS Connector are a good fit for businesses embracing hybrid cloud environments and private infrastructure setups.

Amazon EKS Connector is a new capability that allows administrators to securely connect any Kubernetes cluster (not limited to EKS Anywhere) to the EKS Console and provides a unified view of the entire Kubernetes environment.

Connecting your on-premises Kubernetes cluster with the EKS Console requires you to register the cluster with EKS and run the EKS Connector agent on the external Kubernetes cluster. The EKS Connector agent installed on the connected cluster establishes a secure communication channel using AWS Systems Manager Session Manager.

There are multiple ways to register a Kubernetes cluster running anywhere with the Amazon EKS console: the AWS CLI, eksctl, an AWS SDK, or the console itself. When registering with eksctl or the console, a YAML manifest file is auto-populated with the required parameters and settings. However, some additional manual steps are required.

Note: Registering the Kubernetes cluster with the EKS console requires that you create the following IAM roles and groups in AWS IAM to be able to perform the required operations:

- Service-linked role for Amazon EKS

- EKS-Connector-Agent Role
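As a rough sketch, the two IAM prerequisites above could be created with the AWS CLI along the following lines. The role name, policy name, and JSON file names are illustrative choices rather than values from this blog, and the policy statements reflect our reading of the AWS documentation for the connector agent, so verify them against the current documentation before use. The script only prints the AWS commands unless APPLY=1 is set.

```shell
#!/bin/sh
# Sketch: create the IAM prerequisites for EKS Connector.
# Role, policy, and file names are assumptions chosen for illustration.
set -eu

ROLE_NAME="${ROLE_NAME:-AmazonEKSConnectorAgentRole}"

# Print each command by default; execute it only when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "dry run: $*"; fi
}

# Trust policy: lets AWS Systems Manager assume the agent role.
cat > eks-connector-agent-trust-policy.json <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": ["ssm.amazonaws.com"] },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF

# Permissions policy: create, open, and control the SSM channels.
cat > eks-connector-agent-policy.json <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "SsmControlChannel",
      "Effect": "Allow",
      "Action": ["ssmmessages:CreateControlChannel"],
      "Resource": "arn:aws:eks:*:*:cluster/*"
    },
    {
      "Sid": "ssmDataplaneOperations",
      "Effect": "Allow",
      "Action": [
        "ssmmessages:CreateDataChannel",
        "ssmmessages:OpenDataChannel",
        "ssmmessages:OpenControlChannel"
      ],
      "Resource": "*"
    }
  ]
}
EOF

# Service-linked role for Amazon EKS Connector.
run aws iam create-service-linked-role \
  --aws-service-name eks-connector.amazonaws.com

# EKS Connector agent role with its trust and permissions policies.
run aws iam create-role \
  --role-name "$ROLE_NAME" \
  --assume-role-policy-document file://eks-connector-agent-trust-policy.json
run aws iam put-role-policy \
  --role-name "$ROLE_NAME" \
  --policy-name AmazonEKSConnectorAgentPolicy \
  --policy-document file://eks-connector-agent-policy.json
```

Running the script as-is writes the two JSON files and lists the commands it would issue; rerun it with APPLY=1 once the names and policies have been reviewed.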

Once the connection process is complete, administrators can use the Amazon EKS Console to view all connected clusters and their associated resources.

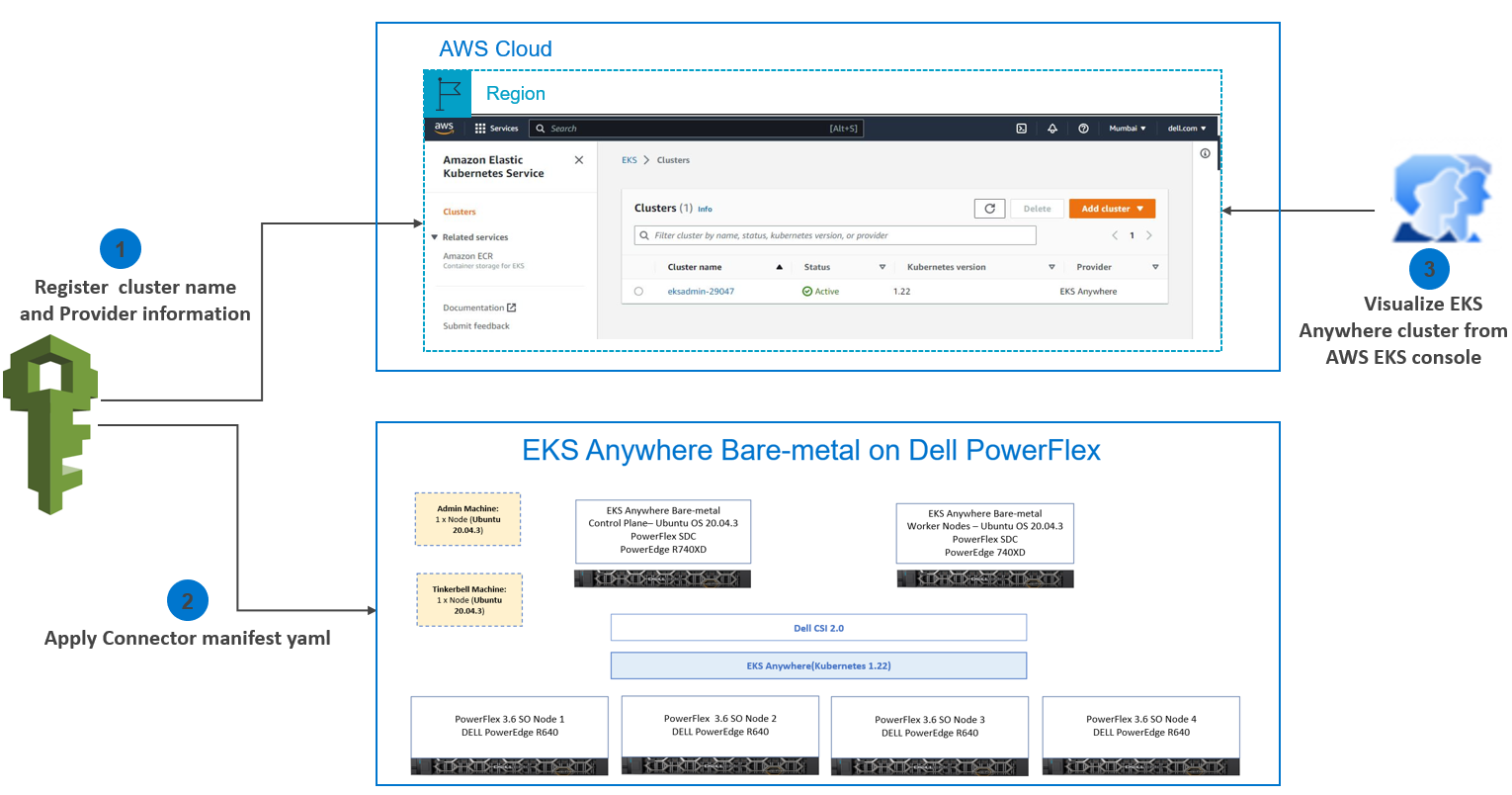

Amazon EKS Connector


Connecting a Kubernetes Cluster running on the Dell PowerFlex cluster to the EKS Console

Let us dig a little deeper into connecting a Kubernetes Cluster running on the Dell PowerFlex cluster to the EKS Console.

The cluster registration process involves two steps:

1. Register the cluster with Amazon EKS

2. Apply a connector YAML manifest file in the target cluster to enable connectivity.

Step 1: Register the cluster with Amazon EKS

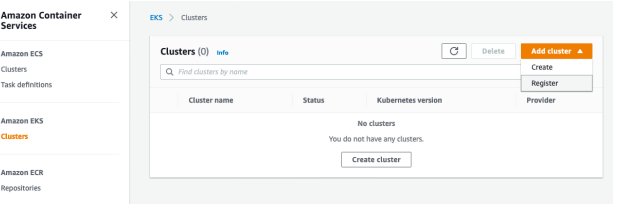

The EKS console includes a Register option alongside the Create option. Open the EKS console and go to the Clusters section. From Add cluster, select the Register option as shown in the following image:

Registering the EKS Connector


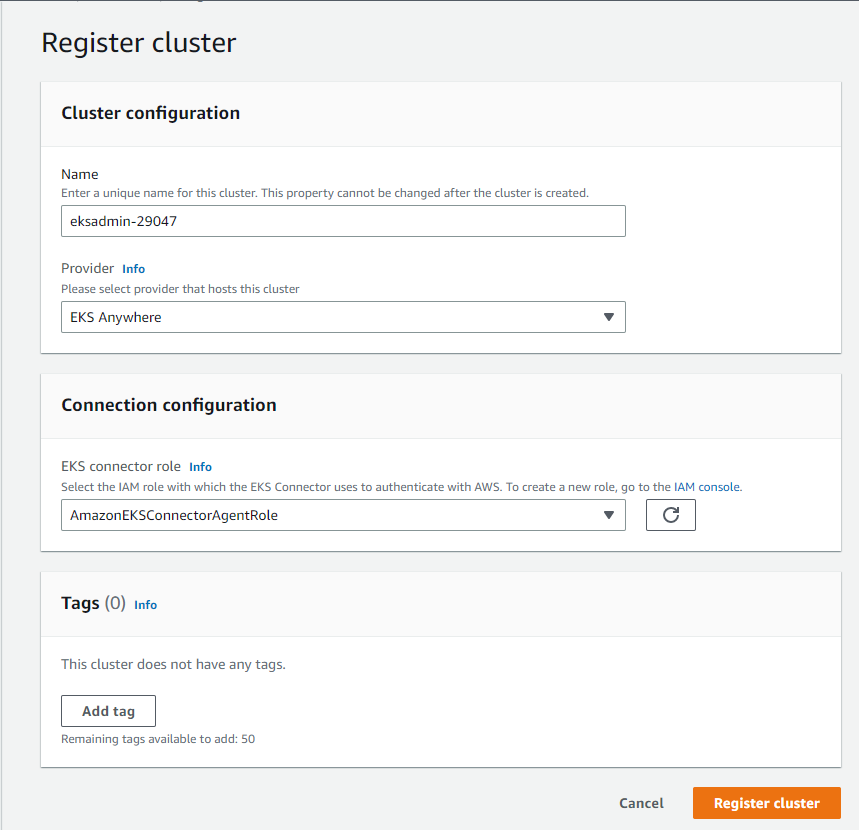

- Enter the following details in the cluster registration form:

  - Define a name for your cluster.

  - Select the provider as EKS Anywhere (which is the case in this example).

  - Select the EKS Connector Role that you created to enable the Kubernetes control plane to create resources on your behalf.

Cluster registration


- Click Register cluster.

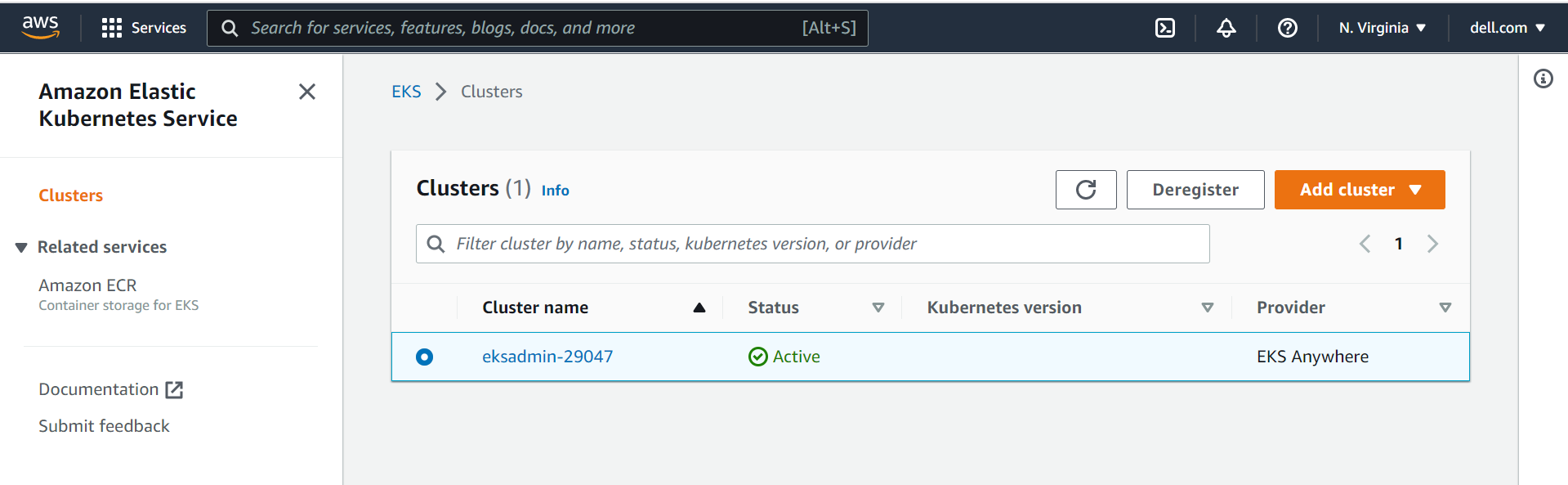

- After the cluster is added, the cluster name is displayed and the status shows Active, as shown in the following figure:

Cluster status
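For readers who prefer the command line over the console form, the same registration can be sketched with the AWS CLI. The cluster name, Region, and account ID below are hypothetical placeholders, and the role ARN assumes an agent role named AmazonEKSConnectorAgentRole. The commands are only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Sketch: register an external cluster with Amazon EKS from the CLI.
# All default values below are hypothetical placeholders.
set -eu

CLUSTER_NAME="${CLUSTER_NAME:-powerflex-eksa}"
AWS_REGION="${AWS_REGION:-us-west-2}"
CONNECTOR_ROLE_ARN="${CONNECTOR_ROLE_ARN:-arn:aws:iam::111122223333:role/AmazonEKSConnectorAgentRole}"

# Print each command by default; execute it only when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "dry run: $*"; fi
}

# Register the cluster, naming EKS Anywhere as the provider.
run aws eks register-cluster \
  --name "$CLUSTER_NAME" \
  --connector-config "roleArn=${CONNECTOR_ROLE_ARN},provider=EKS_ANYWHERE" \
  --region "$AWS_REGION"

# Check the registration status afterwards.
run aws eks describe-cluster \
  --name "$CLUSTER_NAME" \
  --region "$AWS_REGION" \
  --query cluster.status \
  --output text
```

Unlike the console and eksctl paths, the plain CLI path does not hand you a ready-made manifest. To our understanding, the response to register-cluster carries the activation details that the connector manifest needs, so keep that output.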


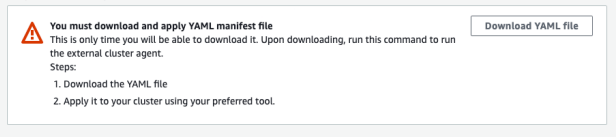

Step 2: Apply a connector YAML manifest file in the target cluster to enable connectivity

- After registering the cluster, you are redirected to the Cluster Overview page. Click Download YAML file, then apply the file to your Kubernetes cluster to connect it to the EKS console, as shown in the following figure:

Cluster overview


- Apply the downloaded eks-connector.yaml as follows:

kubectl apply -f eks-connector.yaml

The EKS Connector runs in StatefulSet mode on your Kubernetes cluster. The connector establishes a connection and proxies the communication between the API server of your EKS Anywhere cluster and Amazon Web Services. The connector is used to display cluster data in the Amazon EKS console until you disconnect the cluster from AWS.
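To confirm that the connector came up after applying the manifest, a check along these lines can help. It assumes that the generated manifest creates a StatefulSet named eks-connector in a namespace also named eks-connector; inspect your downloaded eks-connector.yaml if the names differ. The commands are only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Sketch: verify that the EKS Connector StatefulSet is running.
# The namespace and StatefulSet names are assumptions; check your manifest.
set -eu

NAMESPACE="${NAMESPACE:-eks-connector}"
STATEFULSET="${STATEFULSET:-eks-connector}"

# Print each command by default; execute it only when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "dry run: $*"; fi
}

# Show desired versus ready replicas.
run kubectl get statefulset "$STATEFULSET" --namespace "$NAMESPACE"

# Wait for the rollout to finish, giving up after two minutes.
run kubectl rollout status "statefulset/$STATEFULSET" \
  --namespace "$NAMESPACE" --timeout=120s

# List the connector pods and the nodes they landed on.
run kubectl get pods --namespace "$NAMESPACE" -o wide
```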

The YAML manifest file generated during the cluster registration process creates the following containers:

InitContainer: This container registers the EKS Connector agent with the Systems Manager control plane service and populates the registration information in the Kubernetes backend data store. When a pod is recycled, InitContainer mounts this data to the EKS Connector agent’s volume, which eliminates the need to register again each time a pod is recycled.

EKS Connector agent: This is an agent based on the SSM agent, running in container mode. This agent creates an outbound connection from the Kubernetes cluster to the AWS network. All subsequent requests from AWS are performed using the connection channels established by the EKS Connector agent.

Connector proxy: This agent acts as a proxy between the EKS Connector agent and Kubernetes API Server. This proxy agent uses the Kubernetes service account to impersonate the IAM user that accesses the console and fetches information from the Kubernetes API Server.
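The three containers described above can be inspected directly on a connector pod. The sketch below assumes a pod named eks-connector-0 in the eks-connector namespace and container names connector-init, connector-agent, and connector-proxy; these names are our assumption about the generated manifest, so confirm them in your eks-connector.yaml. The commands are only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Sketch: inspect the containers of one EKS Connector pod.
# Pod, namespace, and container names are assumptions; check your manifest.
set -eu

NAMESPACE="${NAMESPACE:-eks-connector}"
POD="${POD:-eks-connector-0}"

# Print each command by default; execute it only when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "dry run: $*"; fi
}

# List init container names followed by regular container names.
run kubectl get pod "$POD" --namespace "$NAMESPACE" \
  -o jsonpath='{.spec.initContainers[*].name} {.spec.containers[*].name}'

# Show the most recent log lines of each container in turn.
for container in connector-init connector-agent connector-proxy; do
  run kubectl logs "$POD" --namespace "$NAMESPACE" \
    --container "$container" --tail=20
done
```

The init container log is usually the first place to look when registration fails, because that is where the agent registers with Systems Manager.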

The EKS Connector agent communicates with the SSM service, which in turn interacts with the EKS service through EventBridge. To facilitate this interaction, the EKS Connector agent role needs appropriate permissions to create, open, and control the SSM channels. Without this IAM role on the AWS side, the Systems Manager channels cannot be created or controlled, and the registration fails.

Upon successful registration, a change is visible in Amazon EventBridge: a new event rule with a pattern matching registration and deregistration is created under the “default” event bus.
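One way to look for that rule from the command line is to list the rules on the default event bus, sketched below. The Region is a hypothetical placeholder, and because the rule's name is not given in this blog, the sketch lists all rules rather than filtering by name. The command is only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Sketch: list EventBridge rules on the default event bus.
# The Region default is a hypothetical placeholder.
set -eu

AWS_REGION="${AWS_REGION:-us-west-2}"

# Print the command by default; execute it only when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "dry run: $*"; fi
}

# Show each rule's name, state, and the service that manages it.
run aws events list-rules \
  --event-bus-name default \
  --region "$AWS_REGION" \
  --query 'Rules[].{Name:Name,State:State,ManagedBy:ManagedBy}' \
  --output table
```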

eks-connector-console-dashboard-full-access-group: This is a YAML manifest consisting of roles and bindings that are required to get access to all namespaces and resources to be visualized in the console.

Download and apply eks-connector-console-dashboard-full-access-group.yaml as follows:

curl -o eks-connector-console-dashboard-full-access-group.yaml https://s3.us-west-2.amazonaws.com/amazon-eks/eks-connector/manifests/eks-connector-console-roles/eks-connector-console-dashboard-full-access-group.yaml

kubectl apply -f eks-connector-console-dashboard-full-access-group.yaml

eks-connector-clusterrole: This is a YAML manifest consisting of cluster roles and bindings for the cluster to define permissions on namespaces and cluster scope resources.

Download and apply eks-connector-clusterrole.yaml as follows:

curl -o eks-connector-clusterrole.yaml https://s3.us-west-2.amazonaws.com/amazon-eks/eks-connector/manifests/eks-connector-console-roles/eks-connector-clusterrole.yaml

kubectl apply -f eks-connector-clusterrole.yaml
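One detail worth checking before relying on these two manifests: to our understanding of the AWS documentation, the downloaded files contain a %IAM_ARN% placeholder that must be replaced with the ARN of the IAM principal that will view the cluster in the console. Treat that as an assumption and inspect the files first. The sketch below fills the placeholder in place; the helper name fill_iam_arn and the example ARN are hypothetical.

```shell
#!/bin/sh
# Sketch: replace the %IAM_ARN% placeholder in the downloaded manifests.
# The placeholder's presence, the helper name, and the ARN are assumptions.
set -eu

IAM_ARN="${IAM_ARN:-arn:aws:iam::111122223333:user/console-viewer}"

# Rewrite a manifest with every %IAM_ARN% replaced by the given ARN.
# Uses '|' as the sed delimiter because ARNs contain ':' and '/'.
fill_iam_arn() {
  manifest="$1"
  arn="$2"
  sed "s|%IAM_ARN%|${arn}|g" "$manifest" > "${manifest}.tmp"
  mv "${manifest}.tmp" "$manifest"
}

for manifest in \
  eks-connector-clusterrole.yaml \
  eks-connector-console-dashboard-full-access-group.yaml
do
  if [ -f "$manifest" ]; then
    fill_iam_arn "$manifest" "$IAM_ARN"
    echo "filled placeholder in $manifest"
  else
    echo "skipping $manifest (not downloaded yet)"
  fi
done
```

If a manifest was applied while it still contained the placeholder, applying the edited file again with kubectl apply -f updates the bindings.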

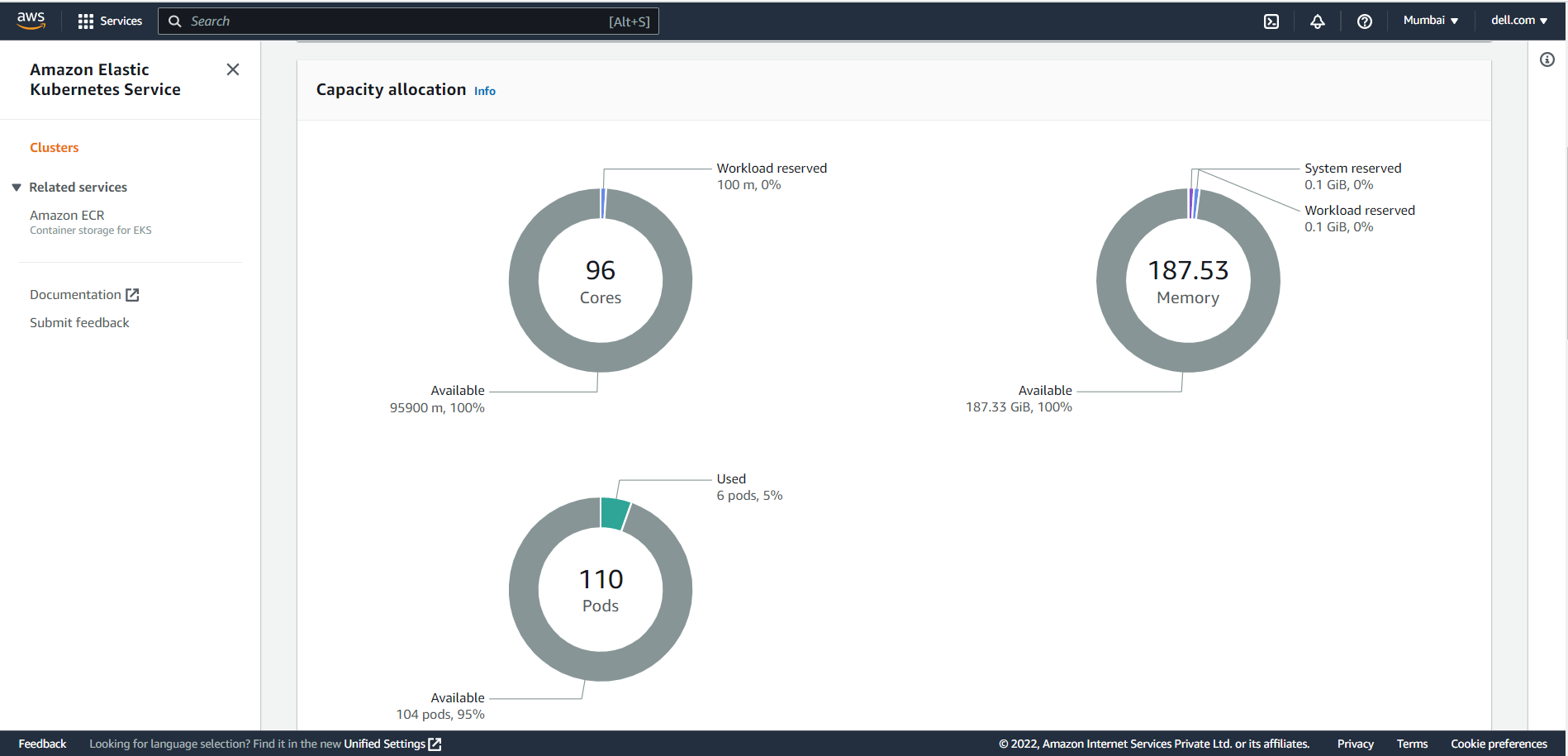

Amazon EKS Console

The Overview section shows all the cluster resources. All objects are read-only: the user cannot edit or delete an object in the registered cluster, as shown in the following figure:

Dashboard


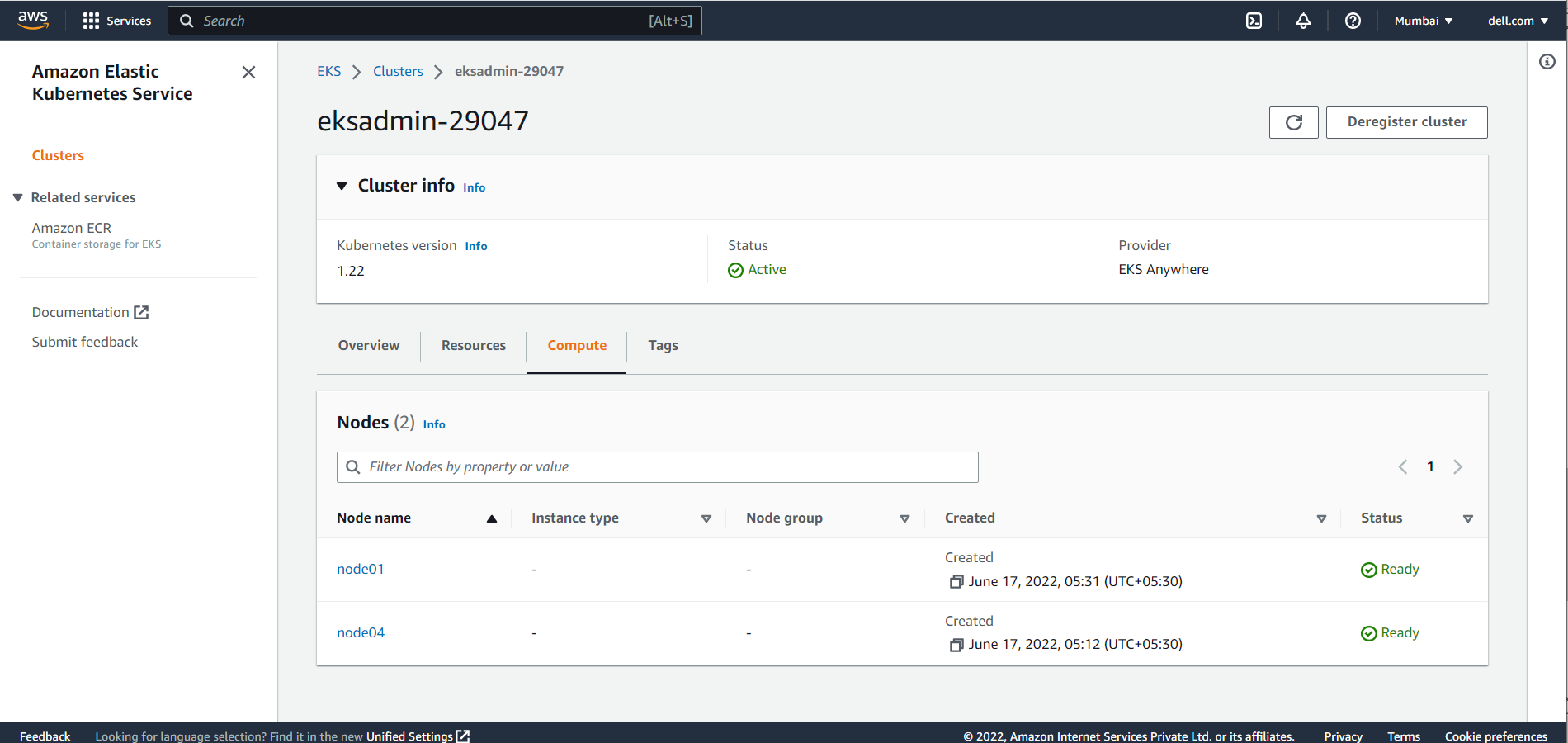

The Compute section shows all the Dell PowerFlex node resources in the Amazon EKS Anywhere Cluster.

Compute


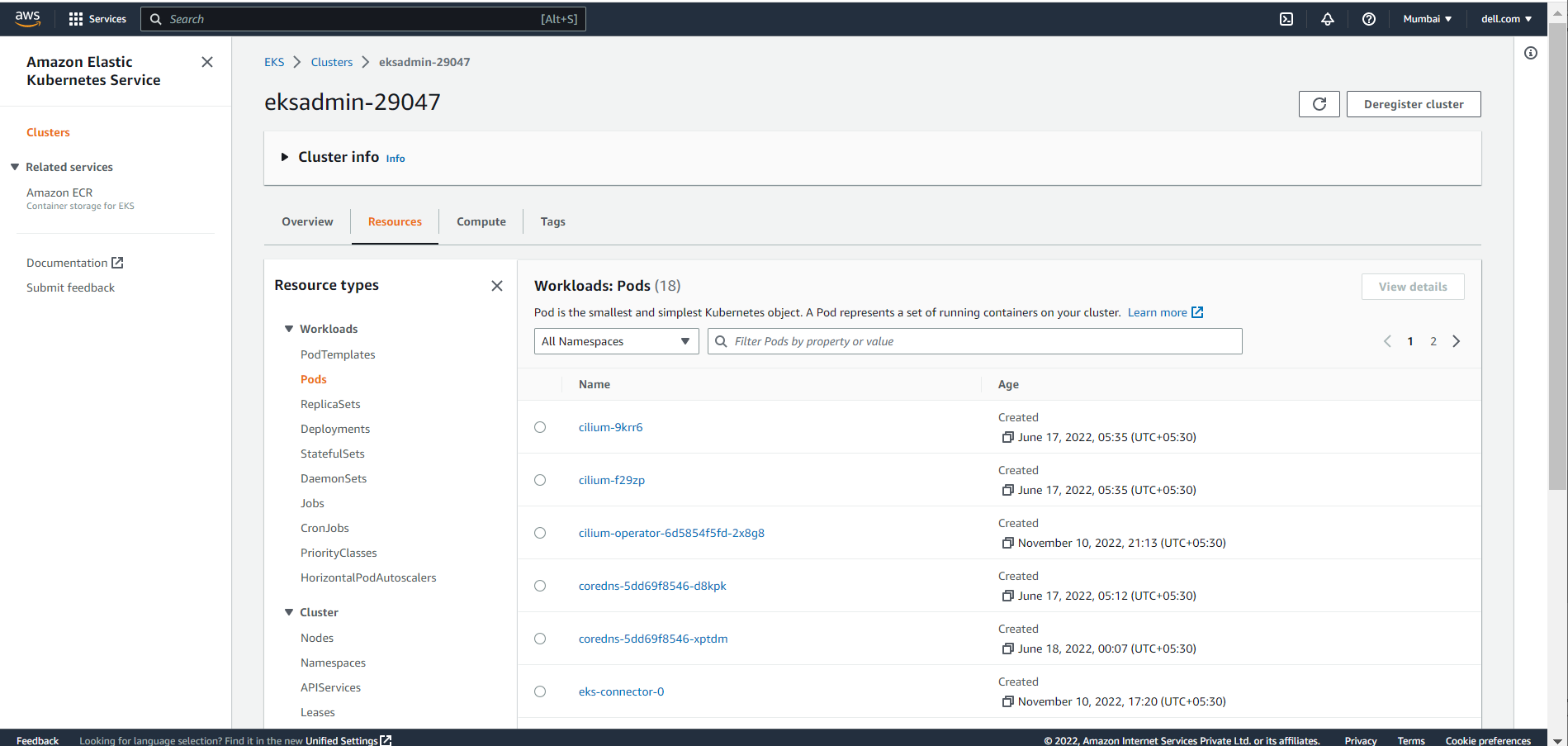

The Workloads section displays all objects of type Deployment, DaemonSet, and StatefulSet. Users can select these objects to view a pod-level overview.

Workloads


Conclusion

In this blog, we have explored the Amazon EKS Connector and how to register and connect a Kubernetes cluster to the Amazon EKS console. Using the Amazon EKS Connector, organizations can now use the Amazon EKS Console to bring together both cloud environments and private infrastructure setups and view them from a single pane of glass.

If you are interested in finding out more about how to use Amazon EKS Anywhere and the Amazon EKS Connector in the PowerFlex environment, reach out to your Dell representative.
