Accelerating Relational Database Workloads with 16G Dell PowerEdge R6625 Servers Equipped with PCIe 5.0 E3.S

Thu, 08 Feb 2024 02:28:42 -0000


Summary

The latest 16G Dell PowerEdge R6625 servers support the PCIe 5.0 interface and the Enterprise and Datacenter Standard Form Factor (EDSFF) E3.S form factor. They deliver significant performance benefits and an improved system airflow that enhances heat dissipation. This can lead to less thermal throttling and increased lifespans for system components such as CPUs, memory and storage when compared with prior PCIe generations deployed with 2.5-inch[1] form factor SSDs.

The purpose of this tech note is to present a generational server performance and power consumption comparison using PostgreSQL® relational database[2] workloads. It compares 16G Dell PowerEdge R6625 PCIe 5.0 E3.S servers deployed with KIOXIA CM7-R Series E3.S enterprise NVMe SSDs with a previous-generation configuration: a Dell PowerEdge R6525 server deployed with KIOXIA CM6-R Series PCIe 4.0 2.5-inch SSDs.

The test results indicate that the latest 16G Dell PowerEdge R6625 servers deliver almost twice the relational database transactions using approximately the same amount of power when compared with the previous generation system.

Market positioning

Relational databases are vital to today’s data centers as they store an overwhelming amount of data captured on premises and at the edge of the network. Sales transactions and information relating to customers, vendors, products and financials represent key data.

IT teams need solutions that scale their data center storage platforms to better address large datasets and future growth. Because these databases depend on fast underlying storage, one way to achieve high performance and scalability is to use servers equipped with enterprise SSDs based on the latest PCIe 5.0 interface and the NVMe 2.0 protocol. PCIe 5.0 doubles the per-lane transfer rate of the previous PCIe 4.0 generation (32 GT/s versus 16 GT/s), so it can move data through the PCIe interface almost twice as fast. This enables SSDs to deliver input/output (I/O) even faster, so fewer servers are needed to achieve the same level of performance.
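As a rough check on the "almost twice as fast" claim, the sketch below computes nominal per-direction link bandwidth from the per-lane transfer rates (16 GT/s for PCIe 4.0, 32 GT/s for PCIe 5.0) and the 128b/130b line encoding both generations use. It ignores packet and protocol overhead, so real throughput is somewhat lower; the function name is illustrative.

```python
# Nominal usable bandwidth of a PCIe link in one direction. Only the
# 128b/130b line encoding is accounted for; TLP headers, flow control and
# other protocol overhead are ignored.
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b encoding (PCIe 3.0 and later)

# Per-lane raw transfer rates in gigatransfers per second (GT/s).
PER_LANE_GTS = {"PCIe 4.0": 16.0, "PCIe 5.0": 32.0}


def link_bandwidth_gb_per_s(generation: str, lanes: int) -> float:
    """Approximate usable bandwidth in decimal GB/s per direction."""
    raw_gigabits = PER_LANE_GTS[generation] * lanes  # 1 bit per transfer per lane
    return raw_gigabits * ENCODING_EFFICIENCY / 8  # bits -> bytes


if __name__ == "__main__":
    for gen in ("PCIe 4.0", "PCIe 5.0"):
        aggregate_gts = PER_LANE_GTS[gen] * 4
        print(f"{gen} x4: {aggregate_gts:.0f} GT/s aggregate, "
              f"~{link_bandwidth_gb_per_s(gen, 4):.2f} GB/s per direction")
```

For x4 links this gives about 7.88 GB/s for PCIe 4.0 and 15.75 GB/s for PCIe 5.0, which is why a sequential read rating of 14,000 MB/s is only reachable on a PCIe 5.0 x4 link.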

With the recent availability of EDSFF SSDs, storage performance and total capacity per server can also increase. Servers with EDSFF E3.S slots deployed with E3.S SSDs deliver fast data throughput, fast input/output operations per second (IOPS) performance, low latency, high density and thermally optimized capabilities.

Product Features

Dell PowerEdge R6625 Rack Server (Figure 1)

Specifications: https://www.delltechnologies.com/asset/en-us/products/servers/technical-support/poweredge-r6625-spec-sheet.pdf.

Figure 1: Side angle of Dell PowerEdge R6625 Rack Server[3]

KIOXIA CM7 Series Enterprise NVMe SSD (Figure 2)

Specifications: https://americas.kioxia.com/en-us/business/ssd/enterprise-ssd.html.

Figure 2: Front view of KIOXIA CM7 Series SSD[4]

PCIe 5.0 and NVMe 2.0 specification compliant. Two configurations: CM7-R Series (read intensive), 1 Drive Write Per Day[5] (DWPD), with capacities up to 30,720 gigabytes[6] (GB); and CM7-V Series (higher endurance, mixed use), 3 DWPD, with capacities up to 12,800 GB.

Performance specifications: SeqRead = up to 14,000 MB/s; SeqWrite = up to 7,000 MB/s; RanRead = up to 2.7M IOPS; RanWrite = up to 600K IOPS.
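Using the DWPD definition in the footnotes (full-capacity writes once a day, every day, for the five-year warranty period), the sketch below estimates the nominal total data that can be written to the drive capacities mentioned above. It ignores leap days and uses decimal units; the function name is illustrative.

```python
# Nominal endurance implied by a DWPD rating: the full capacity written
# once per day (times the DWPD value) for every day of the warranty period.
WARRANTY_YEARS = 5
DAYS_PER_YEAR = 365  # simplification: leap days are ignored


def rated_terabytes_written(capacity_gb: float, dwpd: float) -> float:
    """Total data (decimal TB) that may be written over the warranty period."""
    days = WARRANTY_YEARS * DAYS_PER_YEAR
    return capacity_gb * dwpd * days / 1000  # GB -> TB (decimal units)


if __name__ == "__main__":
    drives = [
        ("CM7-R, 3,840 GB (capacity tested here)", 3840, 1),
        ("CM7-R, 30,720 GB (maximum capacity)", 30720, 1),
        ("CM7-V, 12,800 GB (maximum capacity)", 12800, 3),
    ]
    for name, capacity_gb, dwpd in drives:
        print(f"{name}: ~{rated_terabytes_written(capacity_gb, dwpd):,.0f} TB written")
```

This works out to roughly 7,008 TB for the 3.84 TB CM7-R drives, 56,064 TB for the 30,720 GB CM7-R and 70,080 TB for the 12,800 GB CM7-V.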

Hardware/Software test configuration

The hardware and software used in this database comparison are listed in Figure 3:

| Specification | PCIe 5.0 configuration | PCIe 4.0 configuration |
|---|---|---|
| **Server Information** | | |
| Server Model | Dell PowerEdge R6625 | Dell PowerEdge R6525 |
| No. of Servers | 1 | 1 |
| **CPU Information** | | |
| CPU Model | AMD EPYC™ 9334 | AMD EPYC 7352 |
| No. of Sockets | 2 | 2 |
| No. of Cores (per CPU) | 32 | 24 |
| **Memory Information** | | |
| Memory Type | DDR5 | DDR4 |
| Memory Speed | 4,800 MT/s | 3,200 MT/s |
| Memory Size | 384 GB | 128 GB |
| **SSD Information** | | |
| SSD Model | KIOXIA CM7-R Series | KIOXIA CM6-R Series |
| SSD Type | Read intensive | Read intensive |
| Form Factor | E3.S | 2.5-inch (U.3) |
| Interface | PCIe 5.0 x4 | PCIe 4.0 x4 |
| Interface Speed (x4 aggregate) | 128 GT/s | 64 GT/s |
| No. of SSDs | 4 | 4 |
| SSD Capacity[6] | 3.84 TB | 3.84 TB |
| DWPD | 1 | 1 |
| Active Power (per SSD) | up to 24 W | up to 19 W |
| **Operating System Information** | | |
| Operating System (OS) | Ubuntu® | Ubuntu |
| OS Version | 22.04.2 | 22.04.2 |
| Kernel | 5.15.0-76-generic | 5.15.0-76-generic |
| RAID | RAID 5[7] | RAID 5 |
| RAID Software | mdadm 4.2 | mdadm 4.2 |
| **Test Software Information** | | |
| Software | HammerDB[8] | HammerDB |
| Benchmark | TPROC-C[9] | TPROC-C |
| HammerDB Version | 4.8 | 4.8 |
| No. of Virtual Users | 128 | 128 |

Figure 3: Hardware/software configuration used in the comparison

For additional information regarding PostgreSQL relational database parameters and the OS tuning parameters used in this comparison, see Appendix A.

Set-up and test procedures

Set-up #1:

A Dell PowerEdge R6625 Rack Server was set up with the Ubuntu 22.04.2 operating system.

Additional OS-level parameters were adjusted to help increase system performance (see Appendix A for the values used).

The system was rebooted.

Four 3.84 TB KIOXIA CM7-R Series SSDs were configured as a RAID 5 set (via mdadm) to hold the PostgreSQL database.

An XFS® file system was created on the RAID 5 set and mounted with the noatime[10] and discard[11] options. PostgreSQL was then installed on the server and the service was started.

HammerDB test software was installed on the server to run the TPROC-C online transaction processing (OLTP) workload against the PostgreSQL database stored on the KIOXIA CM7 Series SSDs.
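The storage steps in Set-up #1 (RAID 5 via mdadm, an XFS file system, and the noatime and discard mount options) can be sketched as the command sequence below. This is a minimal sketch that only builds and prints the commands: the device names (/dev/nvme0n1 and so on), /dev/md0 and the /mnt/pgdata mount point are hypothetical, and because the note gives no mdadm chunk size or mkfs.xfs options, tool defaults are assumed.

```python
import shlex

# Hypothetical names: the tech note does not list device paths or a mount point.
NVME_DEVICES = [f"/dev/nvme{i}n1" for i in range(4)]
MD_DEVICE = "/dev/md0"
MOUNT_POINT = "/mnt/pgdata"


def setup_commands(devices, md_device, mount_point):
    """Build (but do not run) the storage set-up commands as argument lists."""
    return [
        # Software RAID 5 across all member SSDs; other mdadm settings default.
        ["mdadm", "--create", md_device, "--level=5",
         f"--raid-devices={len(devices)}", *devices],
        # XFS file system on top of the RAID 5 device.
        ["mkfs.xfs", md_device],
        ["mkdir", "-p", mount_point],
        # noatime skips access-time updates; discard enables online TRIM.
        ["mount", "-o", "noatime,discard", md_device, mount_point],
    ]


if __name__ == "__main__":
    for cmd in setup_commands(NVME_DEVICES, MD_DEVICE, MOUNT_POINT):
        print(shlex.join(cmd))  # printed for review only; nothing is executed
```

Printing rather than executing keeps the sketch safe to run; persisting the array configuration and the mount across reboots is left out.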

Set-up #2:

A Dell PowerEdge R6525 Rack Server was set up with the Ubuntu 22.04.2 operating system.

Additional OS-level parameters were adjusted to help increase system performance (see Appendix A for the values used).

The system was rebooted.

Four 3.84 TB KIOXIA CM6-R Series SSDs were configured as a RAID 5 set (via mdadm) to hold the PostgreSQL database.

An XFS file system was created on the RAID 5 set and mounted with the noatime and discard options. PostgreSQL was then installed on the server and the service was started.

HammerDB test software was installed on the server to run the TPROC-C OLTP workload against the PostgreSQL database stored on the KIOXIA CM6 Series SSDs.
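Both set-ups use a four-drive RAID 5 set, which stripes data and parity across all members. The sketch below is an illustrative model, not how mdadm is implemented internally: it shows the XOR parity that lets a RAID 5 set rebuild one lost drive's data, and the usable capacity of a 4 × 3.84 TB set before file-system overhead. The function names are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks column by column (RAID 5 parity)."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def raid5_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable capacity: one drive's worth of space holds distributed parity."""
    if drive_count < 3:
        # Conventional RAID 5 layouts use three or more drives.
        raise ValueError("expected at least three drives")
    return (drive_count - 1) * drive_tb


if __name__ == "__main__":
    # One stripe on a four-drive set: three data blocks plus one parity block.
    data = [b"\x01\x02", b"\x10\x20", b"\xaa\xbb"]
    parity = xor_blocks(data)

    # Simulate losing the drive holding data[1] and rebuilding it from the rest.
    rebuilt = xor_blocks([data[0], data[2], parity])
    assert rebuilt == data[1]

    print(f"Usable capacity: {raid5_usable_tb(4, 3.84):.2f} TB")
```

With four 3.84 TB drives, about 11.52 TB (three drives' worth) is available for the database, and every write also updates parity, which is one reason write latency matters in this comparison.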

Test procedures:

The following metrics were recorded when the TPROC-C workload was run against each configuration:

- Average Database Throughput
- Average Drive Read Latency
- Average Drive Write Latency
- Average Server Power Consumption

For each metric, three runs were performed; the average of the three runs was calculated and compared across the two configurations.
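A minimal sketch of that averaging and comparison step follows, assuming the reported percentages are relative to the PCIe 4.0 baseline (the note does not spell out its formula). Because throughput is "higher is better" and latency is "lower is better", the improvement helper takes the direction as a parameter; all function names are hypothetical.

```python
from statistics import mean


def average_of_runs(runs):
    """Mean of a metric across repeated test runs (three per configuration here)."""
    if len(runs) == 0:
        raise ValueError("at least one run is required")
    return mean(runs)


def relative_change_pct(new: float, baseline: float) -> float:
    """Signed change of `new` versus `baseline`, in percent."""
    return (new - baseline) / baseline * 100


def improvement_pct(new: float, baseline: float, higher_is_better: bool) -> float:
    """Percent improvement; positive means `new` is better than `baseline`."""
    change = relative_change_pct(new, baseline)
    return change if higher_is_better else -change
```

Under this convention, an "89% higher throughput" result corresponds to an improvement of about +89 with `higher_is_better=True`, and a "19% lower read latency" result to about +19 with `higher_is_better=False`.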

Test results[12]

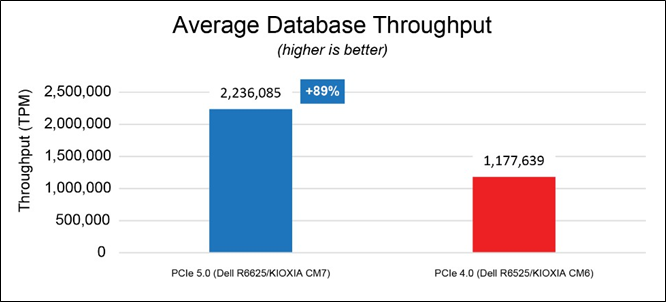

Average Database Throughput (Figure 4).

This test measured how many transactions in the TPROC-C workload were executed per minute. The HammerDB software, executing the TPROC-C transaction profile, randomly performed new order, payment, order status, delivery and stock level transactions. The benchmark simulated an OLTP environment with a large number of users conducting simple and short transactions (that require sub-second response times and return relatively few records). Figure 4 shows the average database throughput from three test runs for each set of drives. The results are in transactions per minute (TPM); higher is better.

Figure 4: Average database throughput results

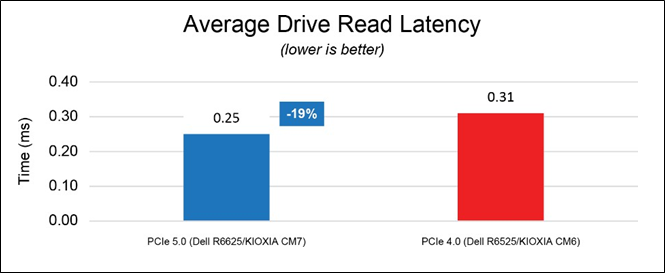

Average Read Latency (Figure 5).

This test measured drive read latency in milliseconds (ms): the time it took to perform a drive read operation, including the time to complete the operation and receive a ‘successfully completed’ acknowledgement. These metrics were obtained from the drives while the database workload was running. Figure 5 shows the average read latency from three test runs for each set of drives; lower is better.

Figure 5: Average read latency results

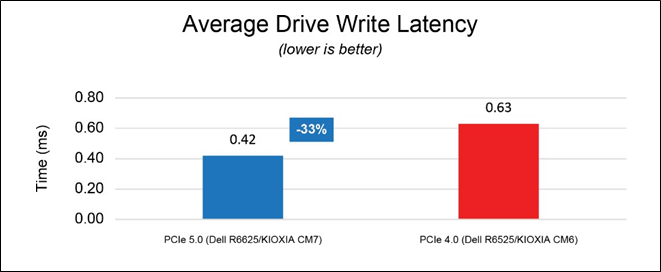

Average Write Latency (Figure 6).

This test measured drive write latency in milliseconds (ms): the time it took to perform a drive write operation, including the time to complete the operation and receive a ‘successfully completed’ acknowledgement. These metrics were obtained from the drives while the database workload was running. Figure 6 shows the average write latency from three test runs for each set of drives; lower is better.

Figure 6: Average write latency results

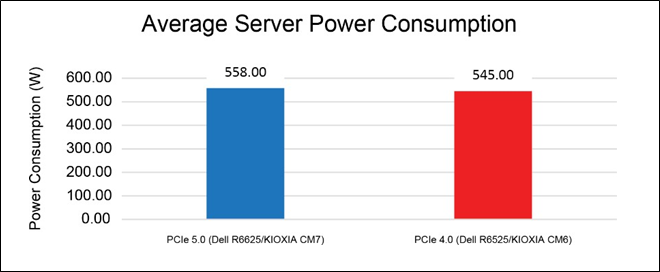

Average Server Power Consumption (Figure 7).

This test measured the average power drawn by each server as a whole, including all of the individual components that run from the server’s power supply unit (PSU): the motherboard, CPU, memory, storage and other server components. The results in Figure 7 were obtained from the Integrated Dell Remote Access Controller (iDRAC) and are in watts (W).
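The note does not describe how the iDRAC readings were collected. One possible approach, shown here only as a hedged sketch, is to sample the DMTF Redfish Power resource that iDRAC exposes and average the whole-system readings taken during the run. The URI in the comment is an assumption about a typical iDRAC deployment, and fetching the payloads (HTTPS with credentials) is left out; the function names are hypothetical.

```python
from statistics import mean

# Assumption: readings come from the iDRAC Redfish Power resource, e.g.
#   GET https://<idrac-host>/redfish/v1/Chassis/System.Embedded.1/Power
# The tech note says only that the results were obtained from iDRAC.


def consumed_watts(power_resource: dict) -> float:
    """Whole-system power draw from one parsed Redfish Power payload."""
    return float(power_resource["PowerControl"][0]["PowerConsumedWatts"])


def average_watts(samples) -> float:
    """Average of readings sampled periodically while the workload runs."""
    readings = [consumed_watts(sample) for sample in samples]
    if not readings:
        raise ValueError("no power samples collected")
    return mean(readings)
```

The sampling interval and whether iDRAC's own averaged values were used instead are not stated in the note, so this should be read as one way to reproduce a comparable measurement.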

Figure 7: Average server power consumption results

Although overall system power draw is slightly higher in the PCIe 5.0 configuration, that configuration delivers 89% higher database throughput, 19% lower read latency and 33% lower write latency on average.

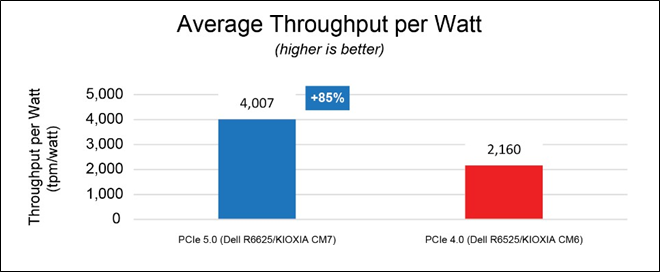

Using the Figure 4 and Figure 7 results, database throughput per watt is calculated by dividing the average database throughput by the average server power consumption, as shown in Figure 8; higher is better.

Figure 8: Average throughput per watt results

The PCIe 5.0 configuration delivered 4,007 TPM per watt versus 2,160 TPM per watt for the PCIe 4.0 configuration, nearly doubling database throughput per watt. At the data center level, these results enable administrators to get nearly double the performance from the same number of servers or, conversely, to reduce the number of servers to help save on power consumption and total cost of ownership without sacrificing performance.
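Because throughput per watt is throughput divided by power, the ratios quoted in this note also imply the relative server power draw. The sketch below uses only the note's rounded figures (4,007 and 2,160 TPM per watt, and 89% higher throughput), so the result is approximate.

```python
# Figures quoted in this tech note (rounded).
TPM_PER_WATT_PCIE5 = 4007
TPM_PER_WATT_PCIE4 = 2160
THROUGHPUT_GAIN = 0.89  # "89% higher database throughput"

efficiency_ratio = TPM_PER_WATT_PCIE5 / TPM_PER_WATT_PCIE4

# TPM/W = TPM / W, therefore W_new / W_old = (TPM_new / TPM_old) / (TPM/W ratio).
power_ratio = (1 + THROUGHPUT_GAIN) / efficiency_ratio

print(f"TPM per watt ratio: {efficiency_ratio:.3f}x")
print(f"Implied server power ratio: {power_ratio:.3f} "
      f"(~{(power_ratio - 1) * 100:.1f}% more power)")
```

The efficiency ratio is about 1.86x (roughly 86% more TPM per watt), and the implied power increase is around 2%, which is consistent with the conclusion that both systems use approximately the same amount of power.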

Final analysis

Next-generation Dell PowerEdge R6625 Rack Servers deployed with KIOXIA CM7 Series PCIe 5.0 E3.S SSDs show nearly double the database performance of the previous-generation system, along with lower SSD read and write latency. This system delivered 89% more transactions per minute, enabling higher relational database workload density while reducing the number of servers needed to service these workloads.

The Dell PowerEdge R6625 and KIOXIA CM7 Series SSD test configuration also drew server power comparable to that of the previous-generation test system. Although average server power draw increased by approximately 13 watts from the PCIe 4.0 to the PCIe 5.0 configuration, the newer system processed almost twice as many transactions. As a result, fewer servers are needed to achieve the same level of performance without a significant increase in power consumption.

The test results indicate that the latest 16G Dell PowerEdge R6625 Rack Servers deliver almost twice the relational database transactions using approximately the same amount of power when compared with the previous-generation PCIe 4.0 system.

Appendix A – PostgreSQL Parameters / OS Tuning Parameters

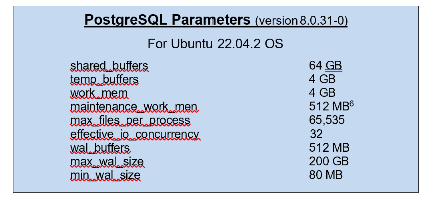

The PostgreSQL parameters used for this comparison include:

Additional OS tuning changes were made to the /etc/sysctl.conf and /etc/security/limits.conf files to optimize system performance. The /etc/sysctl.conf file overrides the OS default kernel parameter values, while the /etc/security/limits.conf file sets per-user resource limits. These tuning parameters include:

/etc/sysctl.conf file changes:

| Parameter | Value |
|---|---|
| vm.swappiness | 0 |
| kernel.sem | 250 32000 100 128 |
| fs.file-max | 6815744 |
| net.core.rmem_default | 262144 |
| net.core.rmem_max | 4194304 |
| net.core.wmem_default | 262144 |
| net.core.wmem_max | 1048576 |
| fs.aio-max-nr | 1048576 |
| vm.nr_hugepages | 35000 |
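The sysctl values above can be written out as a configuration file. The sketch below renders them in the `key = value` format that `sysctl -p` reads and estimates how much memory `vm.nr_hugepages` reserves. It assumes the default 2 MiB huge page size on x86_64 (check `Hugepagesize` in /proc/meminfo), which the note does not state; the helper names are hypothetical.

```python
# Values from the /etc/sysctl.conf table above.
SYSCTL_SETTINGS = {
    "vm.swappiness": "0",
    "kernel.sem": "250 32000 100 128",  # SEMMSL SEMMNS SEMOPM SEMMNI
    "fs.file-max": "6815744",
    "net.core.rmem_default": "262144",
    "net.core.rmem_max": "4194304",
    "net.core.wmem_default": "262144",
    "net.core.wmem_max": "1048576",
    "fs.aio-max-nr": "1048576",
    "vm.nr_hugepages": "35000",
}

# Assumption: default x86_64 huge page size of 2 MiB (not stated in the note).
HUGEPAGE_BYTES = 2 * 1024 * 1024


def render_sysctl_conf(settings: dict) -> str:
    """Render settings as 'key = value' lines, one per parameter."""
    return "\n".join(f"{key} = {value}" for key, value in settings.items()) + "\n"


def hugepage_reservation_gib(settings: dict) -> float:
    """Memory reserved up front by vm.nr_hugepages, in GiB."""
    return int(settings["vm.nr_hugepages"]) * HUGEPAGE_BYTES / 2**30


if __name__ == "__main__":
    print(render_sysctl_conf(SYSCTL_SETTINGS), end="")
    print(f"# huge pages reserve ~{hugepage_reservation_gib(SYSCTL_SETTINGS):.1f} GiB")
```

With 2 MiB pages, 35,000 huge pages set aside about 68.4 GiB. If the same value was applied to both servers, that is roughly 57% of the R6525's 128 GB and about 19% of the R6625's 384 GB.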

/etc/security/limits.conf file changes:

| User | Type | Item | Value |
|---|---|---|---|
| * | soft | nproc | 65535 |
| * | hard | nproc | 65535 |
| * | soft | nofile | 65535 |
| * | hard | nofile | 65535 |
| root | soft | nproc | 65535 |
| root | hard | nproc | 65535 |
| root | soft | nofile | 65535 |
| root | hard | nofile | 65535 |
| postgres | soft | memlock | 100000000 |
| postgres | hard | memlock | 100000000 |
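Similarly, the limits above map to `/etc/security/limits.conf` lines of the form `<domain> <type> <item> <value>`. This sketch renders the table and converts the `memlock` value, which limits.conf expresses in KB (KiB), to GiB; the helper names are illustrative.

```python
# Rows from the limits.conf table above: (domain, type, item, value).
# limits.conf does not apply "*" wildcard entries to root, which is why
# root is listed explicitly.
LIMITS = [
    (domain, kind, item, 65535)
    for domain in ("*", "root")
    for kind, item in (("soft", "nproc"), ("hard", "nproc"),
                       ("soft", "nofile"), ("hard", "nofile"))
]
LIMITS += [
    ("postgres", "soft", "memlock", 100000000),
    ("postgres", "hard", "memlock", 100000000),
]


def render_limits_conf(rows) -> str:
    """Render rows as tab-separated limits.conf lines."""
    return "".join(f"{d}\t{t}\t{i}\t{v}\n" for d, t, i, v in rows)


def memlock_gib(value_kib: int) -> float:
    """Convert a limits.conf memlock value (KiB) to GiB."""
    return value_kib * 1024 / 2**30


if __name__ == "__main__":
    print(render_limits_conf(LIMITS), end="")
    print(f"# postgres memlock limit ~{memlock_gib(100000000):.1f} GiB")
```

The postgres `memlock` limit of 100,000,000 KB works out to about 95.4 GiB of lockable memory.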

References

Footnotes

- [1] 2.5-inch indicates the form factor of the SSD and not its physical size.

- [2] PostgreSQL is a powerful, open source object-relational database system with over 35 years of active development and a reputation for reliability, feature robustness and performance.

- [3] The product image shown is a representation of the design model and not an accurate product depiction.

- [4] The product image shown was provided with permission from KIOXIA America, Inc. and is a representation of the design model and not an accurate product depiction.

- [5] Drive Write Per Day (DWPD) means the drive can be written and re-written to full capacity once a day, every day, for five years, the stated product warranty period. Actual results may vary due to system configuration, usage and other factors. Read and write speed may vary depending on the host device, read and write conditions and file size.

- [6] Definition of capacity: KIOXIA Corporation defines a megabyte (MB) as 1,000,000 bytes, a gigabyte (GB) as 1,000,000,000 bytes and a terabyte (TB) as 1,000,000,000,000 bytes. A computer operating system, however, reports storage capacity using powers of 2 for the definition of 1 Gbit = $2^{30}$ bits = 1,073,741,824 bits, 1 GB = $2^{30}$ bytes = 1,073,741,824 bytes and 1 TB = $2^{40}$ bytes = 1,099,511,627,776 bytes, and therefore shows less storage capacity. Available storage capacity (including examples of various media files) will vary based on file size, formatting, settings, software and operating system, and/or pre-installed software applications, or media content. Actual formatted capacity may vary.

- [7] RAID 5 is a redundant array of independent disks configuration that uses disk striping with parity: data and parity are striped evenly across all of the disks, so no single disk is a bottleneck.

- [8] HammerDB is benchmarking and load testing software that is used to test popular databases. It simulates the stored workloads of multiple virtual users against specific databases to identify transactional scenarios and derive meaningful information about the data environment, such as performance comparisons.

- [9] TPROC-C is the OLTP workload implemented in HammerDB, derived from the TPC-C™ specification with modifications that make running HammerDB straightforward and cost-effective on any of the supported database environments. The HammerDB TPROC-C workload is an open source workload derived from the TPC-C Benchmark Standard and as such is not comparable to published TPC-C results, as the results comply with a subset rather than the full TPC-C Benchmark Standard. TPROC-C means Transaction Processing Benchmark derived from the TPC "C" specification.

- [10] The noatime option turns off access time recording so that the file system will ignore access time updates on files. If the file system is used for database workloads, specifying noatime can reduce writes to the file system.

- [11] The discard option allows the file system to inform the underlying block device to issue a TRIM command when blocks are no longer used.

- [12] KIOXIA makes no warranties regarding the test results, and performance can vary due to system configuration, usage and other factors. Read and write speed may vary depending on the host device, read and write conditions and file size.
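The capacity definitions above can be checked with a short conversion. The sketch below converts the 3.84 TB drive capacity used in this comparison, and the 11.52 TB that a four-drive RAID 5 set of those drives provides (three drives' worth of data), into the binary units an operating system typically reports. File-system overhead is ignored, and the function name is illustrative.

```python
# Decimal units as defined by the drive vendor, binary units as typically
# reported by an operating system (often still labelled GB/TB).
DECIMAL = {"GB": 10**9, "TB": 10**12}
BINARY = {"GiB": 2**30, "TiB": 2**40}


def convert(value: float, from_unit: str, to_unit: str) -> float:
    """Convert a capacity from a decimal unit to a binary unit."""
    return value * DECIMAL[from_unit] / BINARY[to_unit]


if __name__ == "__main__":
    print(f"3.84 TB  = {convert(3.84, 'TB', 'TiB'):.2f} TiB")
    print(f"11.52 TB = {convert(11.52, 'TB', 'TiB'):.2f} TiB")
```

A 3.84 TB drive therefore appears as about 3.49 TiB, and the 11.52 TB RAID 5 set as about 10.48 TiB.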

Trademarks

AMD EPYC and combinations thereof are trademarks of Advanced Micro Devices, Inc. Dell and PowerEdge are registered trademarks or trademarks of Dell Inc.

NVMe is a registered or unregistered trademark of NVM Express, Inc. in the United States and other countries. PCIe is a registered trademark of PCI-SIG.

PostgreSQL is a registered trademark of the PostgreSQL Community Association of Canada.

TPC-C is a trademark of the Transaction Processing Performance Council.

Ubuntu is a registered trademark of Canonical Ltd.

XFS is a registered trademark of Silicon Graphics International Corp. or its subsidiaries in the United States and/or other countries.

All other company names, product names and service names may be trademarks or registered trademarks of their respective companies.

Disclaimers

© 2023 Dell, Inc. All rights reserved. Information in this tech note, including product specifications, tested content and assessments, is current and believed to be accurate as of the date that the document was published, and is subject to change without prior notice. Technical and application information contained here is subject to the most recent applicable product specifications.