Accelerate Genomics Insights and Discovery with High-Performing, Scalable Architecture from Dell and Intel

Thu, 05 Oct 2023 19:52:19 -0000


Summary

The field of genomics requires the storage and processing of vast amounts of data. In this brief, Intel and Dell technologists discuss key considerations for successfully deploying BeeGFS-based storage for genomics applications on 16th Generation Dell PowerEdge servers.

Market positioning

The life sciences industry faces intense pressure to accelerate results and bring new treatments to market while lowering costs, especially in genomics. But life-changing discoveries often depend on processing, storing, and analyzing enormous volumes of genomic sequencing data: one organization alone generates more than 20 TB of new data per day[1], and each modern genome sequencer can produce up to 10 TB of new data per day. Researchers need high-performing solutions that can handle this volume of data alongside demanding analytics and artificial intelligence (AI) workloads, and that are also easy to deploy and scale.

Dell and Intel have collaborated on a bill of materials (BoM) that provides life science organizations with a scalable solution for genomics. This solution features high-performance compute and storage building blocks for one of the leading parallel cluster file systems, BeeGFS. The BoM features four Dell PowerEdge rack server nodes powered by 4th Generation Intel® Xeon® Scalable processors, which deliver the performance needed for faster results and time to production.

The BoM can be tailored for each organization’s architectural needs. For dense configurations, customers can use the Dell PowerEdge C6600 enclosure with PowerEdge C6620 server nodes instead of standard PowerEdge R660 servers (each PowerEdge C6600 chassis can hold up to four PowerEdge C6620 server nodes). If they already have a storage solution in place using InfiniBand fabric, the nodes can be equipped with an additional Mellanox ConnectX-6 HDR100 InfiniBand adapter.

Key Considerations

Key considerations for deploying genomics solutions on Dell PowerEdge servers include:

- Core count: Life sciences organizations often process a whole genome on a cluster, and this processing scales linearly with core count. The Dell PowerEdge solution offers up to 32 cores per CPU to meet performance requirements.

- Memory requirements: This BoM provides 512 GB of DRAM to support specific tasks in workloads that have higher memory requirements, such as running Burrows-Wheeler Aligner algorithms.

- Local and distributed storage: Input/output (I/O) is a big consideration for genomics workloads because datasets can reach hundreds of gigabytes in size. Dell and Intel recommend 3.2 TB of local storage specifically for commonly used genomics tools that read and write many temporary files.

Available Configurations

| Feature | Configuration |
|---|---|
| Platform | 4 x Dell R660 supporting 8 x 2.5” NVMe drives - direct connection |
| CPU (per server) | 2x Xeon Gold 6438Y+ (32c @ 2.0GHz) |
| DRAM | 512GB (16 x 32GB DDR5-4800) |
| Boot device | Dell BOSS-N1 with 2x 480GB M.2 NVMe SSD (RAID1) |
| Storage | 1x 3.2TB Solidigm D7-P5620 SSD (PCIe Gen4, Mixed-use) |
| Capacity storage | Dell Ready Solutions for HPC BeeGFS Storage: 500 GB capacity per 30x coverage whole genome sequence (WGS) to be processed; 800 MB/s total (200 MB/s per node) |
| NIC | Intel E810-XXV Dual Port 10/25GbE SFP28, OCP NIC 3.0 |
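The per-genome and per-node figures in the configuration table lend themselves to a quick sizing estimate. The sketch below is illustrative only: the helper name `estimate_cluster` and the `ClusterEstimate` type are hypothetical, and it assumes the 200 MB/s per-node throughput and 500 GB per-genome capacity figures scale linearly, which the brief states only for the four-node BoM.

```python
from dataclasses import dataclass

# Figures taken from the configuration table above.
GB_PER_30X_WGS = 500   # BeeGFS capacity per 30x whole genome sequence
MBPS_PER_NODE = 200    # storage throughput budgeted per compute node
CPUS_PER_NODE = 2      # 2x Xeon Gold 6438Y+ per server
CORES_PER_CPU = 32


@dataclass
class ClusterEstimate:
    nodes: int
    total_cores: int
    storage_throughput_mbps: int
    capacity_tb: float


def estimate_cluster(nodes: int, genomes: int) -> ClusterEstimate:
    """Hypothetical helper: scale the per-node and per-genome figures.

    Assumes linear scaling, which is an illustration rather than a
    guarantee from the brief.
    """
    return ClusterEstimate(
        nodes=nodes,
        total_cores=nodes * CPUS_PER_NODE * CORES_PER_CPU,
        storage_throughput_mbps=nodes * MBPS_PER_NODE,
        capacity_tb=genomes * GB_PER_30X_WGS / 1000,  # decimal terabytes
    )


# The four-node BoM, sized for 100 genomes at 30x coverage.
bom = estimate_cluster(nodes=4, genomes=100)
print(bom)
```

For the four-node BoM this reproduces the 800 MB/s total from the table; the 100-genome workload is an arbitrary example value.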

Learn More

Contact your Dell or Intel account team for a customized quote at 1-877-289-3355.

Intel Select Solutions for Genomics Analysis: https://www.intel.com/content/dam/www/public/us/en/documents/solution-briefs/select-genomics-analytics.pdf

Dell HPC Ready Architecture for Genomics: https://infohub.delltechnologies.com/static/media/6cb85249-c458-4c06-bcec-ef35c1a363ca.pdf?dgc=SM&cid=1117&lid=spr4502976221&linkId=112053582

Dell Ready Solutions for HPC BeeGFS Storage: https://www.dell.com/support/kbdoc/en-us/000130963/dell-emc-ready-solutions-for-hpc-beegfs-high-performance-storage

[1] Broad Institute. “Sharing Data and Tools to Enable Discovery” https://www.broadinstitute.org/sharing-data-and-tools/cloud-computing#top.

Related Documents

PowerEdge “xs” vs. “Standard” vs. “xa” Servers

Tue, 17 Jan 2023 05:30:43 -0000


Summary

With the recent announcement of 3rd Gen Intel® Xeon® Scalable processors, Dell has announced 2 different models of the R650 and 3 different models of the R750 to meet emerging customer demands. This paper is intended to highlight the engineering elements of each design and to describe the reason for the expansion of the portfolio.

These 3 classes of systems are designed to optimize for differing workloads.

- The R750xa design is optimized for heavy compute environments. To support this, it has been designed for maximum cooling, with front-mounted slots for GPUs and support for CPUs with a thermal design power (TDP) of up to 270W and up to 40 cores.

- The R650 and R750 systems are also designed for heavy compute environments but are designed for maximum flexibility. This includes enhanced drive counts to deliver optimal storage capacity, and CPUs with a TDP of up to 270W and up to 40 cores.

- The R650xs and R750xs systems are designed for traditional virtualized environments, including software-defined storage environments like vSAN, and support CPUs with a TDP of up to 220W and up to 32 cores. Both models support a maximum of 1TB of memory using 64GB DIMMs.

Introduction

Balancing cost, performance, and scalability is difficult when designing a server. Mainstream environments like virtualization have established design points that focus on cores, memory capacity, and storage density to achieve the ideal configuration. The advent of new technologies like Persistent Memory places additional demands on the design, and emerging applications like Artificial Intelligence (AI) and Machine Learning (ML) stretch these designs even further.

The challenge for server design teams is to strike an effective balance that delivers maximum performance for each workload or environment without burdening the customer with unnecessary cost for features they might not use. To illustrate this, consider that a server designed for maximum performance with an in-memory database will require higher memory density, a server designed for AI/ML might benefit from enhanced GPU support, and a server designed for virtualization with software-defined storage might benefit from enhanced disk counts, as shown in the chart below. All of these technologies could take advantage of a new processor design and all need access to memory, but each requires a unique approach to deliver optimization.

[Chart: memory capacity, GPU support, and storage capacity compared across virtualization, AI/ML, and database workloads]

While it may be technically possible to build a single system that could achieve all of this, the end result would be much more expensive to purchase and potentially larger. For example, a system capable of powering and cooling multiple 400W GPUs needs bigger power supplies, stronger fans, additional space (particularly for double-wide GPUs), and high-core-count CPUs. Conversely, a system designed as a virtualization node might require none of these optimizations. Trying to optimize for all often results in unacceptable trade-offs for each.

To achieve truly optimized systems, Dell Technologies is launching 3 classes of its industry-leading PowerEdge rack servers: the “xa” model, the “standard” models, and the “xs” models. The “xa” model is designed for optimization in AI/ML environments and, to support that, delivers optimized power, cooling, and enhanced GPU support. The “standard” models are flexible enough to deliver an enhanced virtualization or database environment with the addition of storage capacity and extra memory expansion using DRAM or Persistent Memory (PMEM). The “xs” models are designed for mainstream virtualization with large disk capacities, CPU support for up to 32 cores, and cost-effective memory capacities of up to 1TB.

Design Optimizations

As noted above, the “xa” model is optimized for GPU, the “standard” models are optimized for high performance compute and the “xs” models are optimized for virtualized environments. Below is an overview of the key feature differences:

| | R650xs | R650 | R750xs | R750 | R750xa |
|---|---|---|---|---|---|
| Height | 1U | 1U | 2U | 2U | 2U |
| CPU | Up to 220W | Up to 270W | Up to 220W | Up to 270W | Up to 270W |
| Max core count¹ | 32 | 40 | 32 | 40 | 40 |
| Memory slots | 16 | 32 | 16 | 32 | 32 |
| Drives supported | Up to 10 SAS/SATA or NVMe | Up to 10 SAS/SATA or NVMe + 2 optional rear mount drives | Up to 24 with 16 SAS/SATA + 8 NVMe | Up to 24 SAS/SATA or NVMe or mixed + 4 optional rear mount drives | Up to 8 SAS/SATA or NVMe |
| Intel® Optane™ | None | Full Support | None | Full Support | Full Support |
| GPU support* | None | Up to 3 SW³ | None | Up to 2 DW² or 6 SW³ | Up to 4 DW² or 6 SW³ |
| Boot support | Boss 2 | Hot Plug Boss 2 | Hot Plug Boss 2 | Hot Plug Boss 2 | Hot Plug Boss 2 |
| Cooling | Cold Plug Fans | Hot Plug Fans | Hot Plug Fans | Hot Plug Fans | Hot Plug Fans |
| Power supplies | Redundant 600W to 1400W | Redundant 800W to 1400W | Redundant 600W to 1400W | Redundant 800W to 2400W | Redundant 1400W to 2400W |
| Depth | 749mm | 823mm | 721mm | 736mm | 837.2mm |

¹ Based on current 3rd Gen Intel® Xeon® Scalable processor family

² DW = double-wide GPU

³ SW = single-wide GPU
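One way to work with the feature comparison above is to encode it as data and filter by requirement. The following is a minimal sketch, not a Dell tool: the `Model` type and the `candidates` function are hypothetical names, and only a subset of the rows (height, CPU TDP, core count, memory slots, double-wide GPU count, and Optane support) is captured.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Model:
    name: str
    height_u: int
    max_tdp_w: int
    max_cores: int
    memory_slots: int
    max_dw_gpus: int   # double-wide GPUs; 0 where the table lists none
    optane: bool


# Values transcribed from the comparison table above.
MODELS = [
    Model("R650xs", 1, 220, 32, 16, 0, False),
    Model("R650",   1, 270, 40, 32, 0, True),
    Model("R750xs", 2, 220, 32, 16, 0, False),
    Model("R750",   2, 270, 40, 32, 2, True),
    Model("R750xa", 2, 270, 40, 32, 4, True),
]


def candidates(min_cores: int = 0, min_memory_slots: int = 0,
               min_dw_gpus: int = 0, needs_optane: bool = False,
               max_height_u: int = 2) -> List[str]:
    """Hypothetical filter: return model names meeting every requirement."""
    return [
        m.name for m in MODELS
        if m.max_cores >= min_cores
        and m.memory_slots >= min_memory_slots
        and m.max_dw_gpus >= min_dw_gpus
        and (m.optane or not needs_optane)
        and m.height_u <= max_height_u
    ]


print(candidates(min_dw_gpus=4))                 # GPU-dense requirement
print(candidates(min_cores=40, max_height_u=1))  # 40 cores in 1U
```

A filter like this makes the trade-off explicit: tightening any single requirement (GPU count, height, Optane) quickly narrows the field to one class of system.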

While key specifications differ between models, much remains the same. It is important to note that all models support key features such as:

- iDRAC 9 and OpenManage

- OCP3.0 Networking options

- PCIe 4.0 slots – up to 3 in the R650xx series and up to 8 in the R750xx series

- PERC 11 RAID including optional support for NVMe RAID

- 3200MT/s Memory

- Installation in standard depth racks

“xa” Design

As noted above, the R750xa is optimized for enhanced GPU support. This support is accomplished by moving 2 of the rear PCIe cages to the front, as highlighted in the graphic below. Each of these cages can support up to 2 double-width GPUs and, in the case of the NVIDIA A100, each pair can be linked together with NVLink bridges. Additional PCIe slots are available in the rear of the system.

GPU workloads typically require less internal storage than mainstream workloads, so with this change, internal storage has been located in the middle of the front of the server and provides up to 8 SAS/SATA drives, NVMe drives, or a mix of drive types. All of these configurations are available with optional support for RAID using the new PERC 11-based H755 (SAS/SATA) or H755n (NVMe). These RAID controllers are located directly behind the drive cage to save space and are connected directly to the motherboard of the system to ensure PCIe 4.0 speeds.

To accommodate these new technologies, the depth of the chassis has been extended by 101.2mm (compared to the R750 “standard”) but will still fit within a standard-depth rack. To ensure the highest levels of performance, this model ships with optional support for the 2nd Generation of Intel® Optane™ Memory, up to 32 DIMM slots, and processors with up to 40 cores.

“Standard” Design

The R650/R750 “standard” models have been designed to accommodate the flexibility necessary to address a wide variety of workloads. With support for large numbers of drives (up to 12 in the R650 and up to 28 in the R750), these models also offer optional performance and reliability features with the new PERC 11 RAID controller using the PERC H755 (SAS/SATA) or H755n (NVMe), including a “Dual PERC” option with multiple controllers. These RAID controllers are located directly behind the drive cage to save space and are connected directly to the motherboard of the system to ensure PCIe 4.0 speeds. To ensure the highest levels of performance, these models ship with optional support for the 2nd Generation of Intel® Optane™ Memory, up to 32 DIMM slots, and processors with up to 40 cores. In addition, both models support GPUs, but to a lesser extent than the “xa” series.

“xs” Design

When designing for virtualization, a number of key factors emerge. Storage requirements often serve software-defined storage schemas (like vSAN), while the ability of a hypervisor to segment memory and cores creates a need to balance between the two. To meet these demands, the new “xs” designs include support for up to 16 DIMMs, which translates to 1TB of DRAM when using 64GB DIMMs, CPUs with up to 32 cores, and internal storage of up to 24 drives (16 SAS/SATA + 8 NVMe – R750xs) or 10 drives (SAS/SATA or NVMe – R650xs). These designs assign 1 DIMM socket per channel, allowing customers to scale out with balanced configurations.

These models were also optimized to provide a lower acquisition cost. While the cost of a DIMM socket might appear insignificant, the impact of reducing the number of DIMM sockets is large. The most obvious impact is on power and cooling. Any design needs to reserve enough “headroom” for a full configuration, and by cutting the number of DIMM sockets in half, an “xs” power budget can be reduced. This in turn reduces the amount of cooling required, which allows the use of more cost-effective fans and potentially reduces cost by limiting baffles and other hardware used to direct air flow. This also helps explain why an “xs” system can operate on a power supply as small as 600W while a “standard” system requires a minimum of 800W power supplies to operate.

Another impact on cost is that increasing the number of DIMM sockets in a system increases the complexity of the design. A DDR4 DIMM has 288 pins, and by removing 16 sockets from the design, 4,608 electrical traces were also removed. Reducing the number of electrical traces by this scale allows the motherboard to be built with fewer “layers”, which translates directly into a lower cost.

Recent pricing trends for memory have created an opportunity to achieve excellent performance, scalability, and balance with smaller numbers of DIMMs. Specifically, the $/GB ratio of a 64GB DIMM is evolving to be similar to the ratio of a 32GB DIMM. This means that customers can achieve the same balance that was achieved with previous generations with fewer DIMM sockets.
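The socket and trace figures in this section follow from simple arithmetic, reproduced here as a short sketch. The function names are hypothetical; the constants come from the text and the comparison table (32 memory slots on “standard” models, 16 on “xs” models, 288 pins per DDR4 DIMM, 64GB DIMMs).

```python
DDR4_PINS_PER_DIMM = 288
STANDARD_DIMM_SOCKETS = 32   # R650 / R750 / R750xa
XS_DIMM_SOCKETS = 16         # R650xs / R750xs
DIMM_SIZE_GB = 64


def traces_removed(standard_sockets: int, xs_sockets: int) -> int:
    """Electrical traces saved by dropping DIMM sockets (one per pin)."""
    return (standard_sockets - xs_sockets) * DDR4_PINS_PER_DIMM


def max_memory_gb(sockets: int, dimm_size_gb: int) -> int:
    """Maximum DRAM capacity with every socket populated."""
    return sockets * dimm_size_gb


print(traces_removed(STANDARD_DIMM_SOCKETS, XS_DIMM_SOCKETS))  # 16 * 288
print(max_memory_gb(XS_DIMM_SOCKETS, DIMM_SIZE_GB))            # 16 * 64 GB
```

The results match the 4,608 traces and 1TB (1,024 GB) maximum quoted above.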

Conclusion

With the launch of the new 3rd Gen Intel® Xeon® Scalable processors, Dell Technologies is able to deliver a range of new technologies to meet customer requirements. From the “xa” model and its ability to deliver high GPU density to the “standard” models that deliver a robust platform for a wide range of workloads through to the “xs” series that delivers compelling price:performance, customers can now achieve a level of optimization not previously available.

Powering Kafka with Kubernetes and Dell PowerEdge Servers with Intel® Processors

Mon, 29 Jan 2024 23:33:38 -0000


Kafka with Kubernetes

At the top of this webpage are 3 PDF files outlining test results and reference configurations for Dell PowerEdge servers using both the 3rd Generation Intel® Xeon® processors and 4th Generation Intel Xeon processors. All testing was conducted in Dell Labs by Intel and Dell Engineers in October and November of 2023.

- “Dell DfD Kafka ICX” – highlights the recommended configurations for Dell PowerEdge servers using 3rd generation Intel® Xeon® processors.

- “Dell DfD Kafka SPR” – highlights the recommended configurations for Dell PowerEdge servers using 4th generation Intel® Xeon® processors.

- “Dell DfD Kafka Kubernetes Test Report” – highlights the results of performance testing on both configurations with comparisons that demonstrate the performance differences between them.

Solution Overview

The Apache® Software Foundation developed Kafka as an Open Source solution to provide distributed event store and stream processing capabilities. Apache Kafka uses a publish-subscribe model to enable efficient data sharing across multiple applications. Applications can publish messages to a pool of message brokers, which subsequently distribute the data to multiple subscriber applications in real time.
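The publish-subscribe pattern described above can be illustrated with a toy in-memory broker. This is a conceptual sketch only: `ToyBroker` is a hypothetical class written for this example, it is not a Kafka client, and it omits everything that makes Kafka distributed and durable (partitions, offsets, replication, persistence).

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[str], None]


class ToyBroker:
    """In-memory stand-in for a message broker; not the Kafka API."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        # Register a subscriber callback for a topic.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: str) -> int:
        # Deliver the message to every subscriber of the topic and
        # return how many subscribers received it.
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = ToyBroker()
billing_inbox: List[str] = []
shipping_inbox: List[str] = []

# Two independent applications subscribe to the same topic.
broker.subscribe("orders", billing_inbox.append)
broker.subscribe("orders", shipping_inbox.append)

# One publisher sends a single message; both subscribers receive it.
delivered = broker.publish("orders", "order-1")
print(delivered, billing_inbox, shipping_inbox)
```

The point of the pattern is that the publisher never addresses subscribers directly, so new consumers can be added without changing the producing application.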

Kafka is often deployed for mission-critical applications and streaming analytics along with other use cases. These types of workloads require leading-edge performance which places significant demand on hardware.

There are five major APIs in Kafka[i]:

- Producer API – Permits an application to publish streams of records.

- Consumer API – Permits an application to subscribe to topics and process streams of records.

- Connect API – Runs reusable producers and consumers that link topics to existing applications.

- Streams API – Transforms input streams into output streams.

- Admin API – Used to manage Kafka topics, brokers, and other Kafka objects.

Kafka with Dell PowerEdge and Intel processor benefits

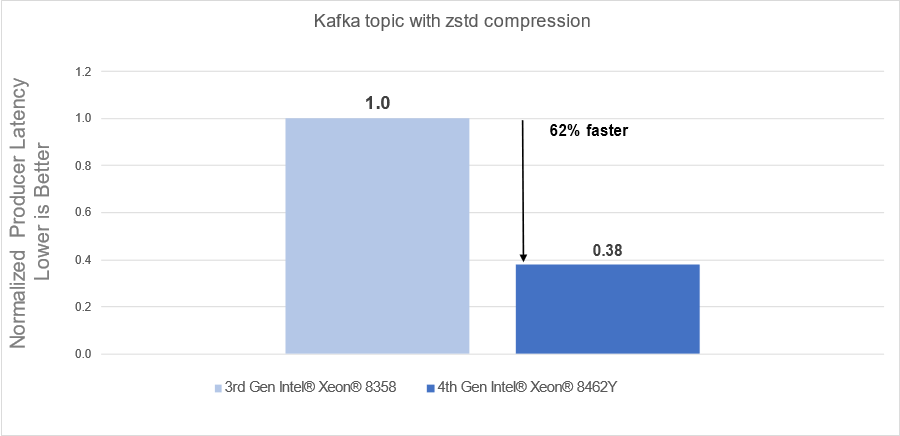

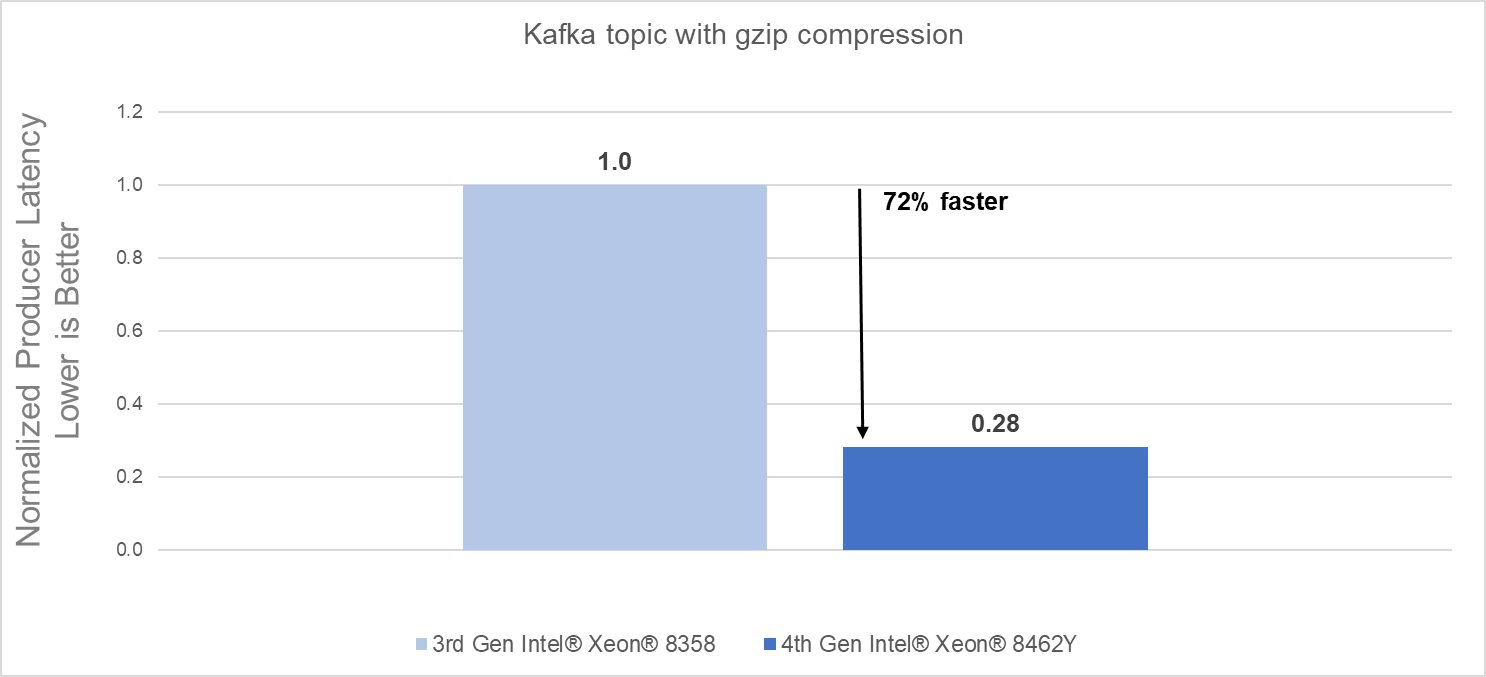

The introduction of new server technologies allows customers to deploy solutions using the newly introduced functionality, but it can also provide an opportunity for them to review their current infrastructure and determine whether the new technology might increase performance and efficiency. Dell and Intel recently tested Kafka performance in a Kubernetes environment, measuring the performance of two different compression engines on the new Dell PowerEdge R760 with 4th generation Intel® Xeon® Scalable processors. The results were compared to the same solution running on the previous-generation R750 with 3rd generation Intel® Xeon® Scalable processors to determine whether customers could benefit from a transition.

Some of the key changes incorporated into 4th generation Intel® Xeon® Scalable processors include:

- Intel® QuickAssist Technology (Intel® QAT) to accelerate data compression and encryption.

- Support for 4800 MT/s DDR5 memory

Raw performance: As noted in the report, our tests showed a 72% decrease in producer latency with gzip compression and a 62% decrease in producer latency with zstd compression.
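A percentage decrease in latency is easy to misread as a speedup factor, so it can help to convert between the two. The sketch below is plain arithmetic on the reported percentages; the function names are hypothetical and no measured latencies from the test report are assumed.

```python
def remaining_latency_fraction(decrease_pct: float) -> float:
    """Fraction of the original latency left after a given % decrease."""
    return 1.0 - decrease_pct / 100.0


def latency_ratio(decrease_pct: float) -> float:
    """How many times lower the new latency is than the old one."""
    return 1.0 / remaining_latency_fraction(decrease_pct)


for codec, decrease in (("gzip", 72.0), ("zstd", 62.0)):
    print(f"{codec}: {remaining_latency_fraction(decrease):.2f} of original "
          f"latency, {latency_ratio(decrease):.2f}x lower")
```

In other words, a 72% decrease leaves 28% of the original producer latency, and a 62% decrease leaves 38%.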

Conclusion

Choosing the right combination of server and processor can increase performance and reduce processing time, allowing customers to react faster and process more data. As this testing demonstrated, the Dell PowerEdge R760 with 4th Generation Intel® Xeon® CPUs significantly outperformed the previous generation.

- The Dell PowerEdge R760 with 4th Generation Intel® Xeon® Scalable processors delivered:

  - 62% lower producer latency with zstd compression

  - 72% lower producer latency with gzip compression

- The benefits of 4th Generation Intel® Xeon® Scalable processors result from:

  - An innovative CPU microarchitecture that provides a performance boost

  - The introduction of DDR5 memory support

[i] https://en.wikipedia.org/wiki/Apache_Kafka