
Installing SAP Data Intelligence using SLC Bridge


Run the deployment using the SLC Bridge 1.0 tool with Maintenance Planner. SLC Bridge runs as a pod named "SLC Bridge Base" in your Kubernetes cluster. The pod downloads the required images from the SAP registry and pushes them to your private container registry (the Container Image Repository), from which SLC Bridge retrieves them to perform an installation, upgrade, or uninstallation.

Run the SLCB tool twice for an installation:

- Run slcb init to deploy the SLC Bridge Base (the container bridge) in the cluster.

- Run slcb execute with the stack.xml provided by Maintenance Planner to install SAP Data Intelligence.

Install SAP Data Intelligence

Follow these steps:

- Connect to the CSAH admin node as a user with root permissions and download the SLCB01_<Version>.EXE program for Linux on X86_64 from SAP Launchpad Support or SAP Toolset Support (SAP login credentials are required).

Note: Dell recommends that you use the latest version of the SLCB program and run it on the CSAH admin node to ensure that you always have the latest version of the SLC Bridge Base in your Kubernetes cluster.

- Rename the downloaded SLCB01_<Version>.EXE program to slcb to simplify working with it in your command terminal. The examples in this guide use “slcb” in the commands that are run.

- On Linux, assign the required permissions to the SLCB program:

chmod a+x <path to SLCB program location>slcb

- Run the following command to run the SLCB program for the SLC Bridge Base installation:

<path to SLCB program location>slcb init

- Follow the instructions in the SLC Bridge tool to enter the required input parameters. For more information, see the SAP document Required Input Parameters.

Note: Use the vsystem-registry-secret.txt you created earlier to provide the private container registry username, password, and address when prompted by the slcb tool. Select export mode and provide the SLC Bridge namespace that you already created: “sap-slcbridge”. You cannot use the SLC Bridge service through routes (Ingress Operator) because doing so causes timeouts. This limitation will be addressed in future releases. Use the NodePort service directly instead.

- Click Next to start the download of the required SLC Bridge Base images from the source SAP Docker Registry to your private container registry and the deployment of the SLC Bridge Base as a pod in the Kubernetes cluster.

The deployment is complete when the message Execution finished successfully is displayed.

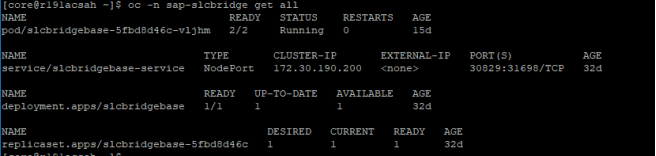

- Confirm that the SLC Bridge Base pod and the slcbridgebase-service are available in the cluster by running:

# oc -n sap-slcbridge get all

The following sample output is displayed:

Figure 16. Confirm that the SLC Bridge Base pod and service are available
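The rename and permission steps above can be sketched as a small shell helper. This is a minimal sketch, not part of the SAP or Dell tooling: the function name prepare_slcb and the target directory are hypothetical, and the helper only covers the file handling, not the interactive slcb init dialog.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of SLCB): rename the downloaded
# SLCB01_<Version>.EXE to "slcb" in a target directory and make it executable.
prepare_slcb() {
  local src="$1" dest_dir="$2"
  # Refuse to continue if the download is missing.
  [ -f "$src" ] || { echo "download not found: $src" >&2; return 1; }
  mkdir -p "$dest_dir" || return 1
  mv "$src" "$dest_dir/slcb" || return 1
  chmod a+x "$dest_dir/slcb"
}

# Example usage (paths are placeholders):
#   prepare_slcb <download directory>/SLCB01_<Version>.EXE <path to SLCB program location>
#   <path to SLCB program location>/slcb init
#   oc -n sap-slcbridge get all
```

Keeping the binary under a fixed name in one directory means the later init and execute commands do not change when a newer SLCB version is downloaded.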

Configuring the SAP Data Intelligence project

This section assumes that the SDI project has been created, as described in Deploying SAP Data Intelligence Observer.

Log on to OpenShift as a cluster-admin, and run:

# # change to the SDI_NAMESPACE project using: oc project "${SDI_NAMESPACE:-sdi}"

# oc adm policy add-scc-to-group anyuid "system:serviceaccounts:$(oc project -q)"

# oc adm policy add-scc-to-user privileged -z "$(oc project -q)-elasticsearch"

# oc adm policy add-scc-to-user privileged -z "$(oc project -q)-fluentd"

# oc adm policy add-scc-to-user privileged -z default

# oc adm policy add-scc-to-user privileged -z mlf-deployment-api

# oc adm policy add-scc-to-user privileged -z vora-vflow-server

# oc adm policy add-scc-to-user privileged -z "vora-vsystem-$(oc project -q)"

# oc adm policy add-scc-to-user privileged -z "vora-vsystem-$(oc project -q)-vrep"
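The grants above follow one pattern, so they can be generated for any namespace. The sketch below only prints the commands for review and does not run them. The function name sdi_scc_commands is hypothetical, and it passes the namespace explicitly with -n on the per-service-account grants instead of relying on the current project, which is a choice made here rather than something the original commands do.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (do not run) the SCC grant commands listed above
# for a given SAP Data Intelligence namespace.
sdi_scc_commands() {
  local ns="$1" sa
  # Group-level grant of the anyuid SCC to all service accounts in the namespace.
  echo "oc adm policy add-scc-to-group anyuid system:serviceaccounts:${ns}"
  # Privileged SCC for the individual service accounts named in this guide.
  for sa in "${ns}-elasticsearch" "${ns}-fluentd" default mlf-deployment-api \
            vora-vflow-server "vora-vsystem-${ns}" "vora-vsystem-${ns}-vrep"; do
    echo "oc -n ${ns} adm policy add-scc-to-user privileged -z ${sa}"
  done
}

# Example usage: review the generated commands for the "sdi" namespace.
#   sdi_scc_commands "${SDI_NAMESPACE:-sdi}"
```

Review the printed commands before running them as a cluster-admin, because they grant the privileged SCC.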

Installing SAP Data Intelligence

Follow the steps in the SAP document Install SAP Data Intelligence with SLC Bridge in a Cluster with Internet Access.

Maintenance Planner

Maintenance Planner is an SAP-hosted solution that helps you plan and maintain systems in your SAP landscape. Plans include complex activities such as installing a new system or updating existing systems.

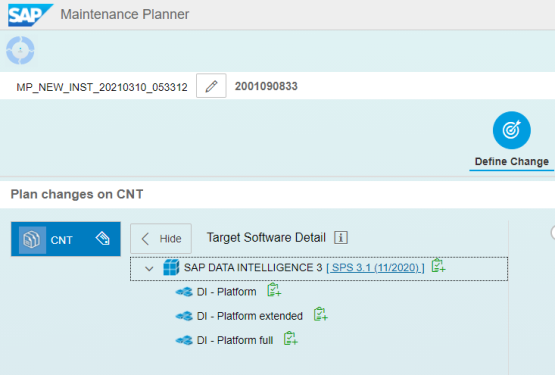

Plan changes in Maintenance Planner

To plan a new SAP Data Intelligence installation in Maintenance Planner:

- Connect to Maintenance Planner (access requires SAP login credentials) and select the Plan a New System tile.

- Select lifecycle option plan.

This option plans a software change on your system, including a download of files. The Maintenance Planner prompts you to define the change:

Figure 17. Maintenance planner for SAP Data Intelligence

- For the Target Software Level to Install, select the option you want under SAP DATA INTELLIGENCE 3:

- DI - Platform

- DI - Platform Extended

- DI - Platform Full

For information about which SAP Data Intelligence components are included in each of these options, see System Landscape and Component Overview.

- Select Support Package Stack and confirm your selection.

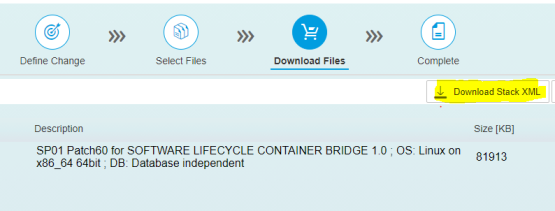

- On the Select OS/DB dependent files and Select Stack Dependent and Independent files dialogs, select the following items and confirm your selection:

- Choose Linux on x86_64 64bit

- SL CONTAINER BRIDGE

The Download Files dialog is displayed, as shown in the following figure:

Figure 18. Download the Stack XML

- Click Download Stack XML and save a copy of the Stack XML file.

We downloaded the Stack XML file to the CSAH node, where the slcb tool is available to install the SLC Bridge Base.

Run the SAP Data Intelligence installation

To run the installation:

- Start the SLCB program by running:

<path to SLCB program location>slcb execute --useStackXML <path to Stack XML file> -u web

or:

<path to SLCB program location>slcb execute --url https://<SLC Bridge Base IP>:<port> --useStackXML <path to Stack XML file>

where <SLC Bridge Base IP> is the external IP address of the SDI compute node on which the SLC Bridge Base resides and <port> is the port to connect to the SLC Bridge Base, for example, port 31689.

Note: This is applicable only when Service Type is set to NodePort. After the SLC Bridge is deployed, its NodePort is determined.

- Run the following command to determine which NodePort to use:

# oc get svc -n "${SLCB_NAMESPACE:-sap-slcbridge}" slcbridgebase-service -o jsonpath='{.spec.ports[0].nodePort}{"\n"}'

31689

- Log in with the name and password of the administrator user for the SLC Bridge Base, as specified in the SLC Bridge Base installation.

For more information about the syntax of the URL and the administrator user, see Making the SLC Bridge Base available on Kubernetes.
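The NodePort lookup and the --url form of the command can be combined. The following is a minimal sketch under stated assumptions: the function name slcb_base_url is hypothetical, and it composes only the scheme, host, and port. Any additional URL path that the SAP documentation requires is not covered here.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compose the SLC Bridge Base URL from the external IP of
# the compute node and the NodePort of slcbridgebase-service.
slcb_base_url() {
  local ip="$1" port="$2"
  # Reject an empty or non-numeric port, for example when the lookup failed.
  case "$port" in
    ''|*[!0-9]*) echo "invalid port: $port" >&2; return 1 ;;
  esac
  printf 'https://%s:%s\n' "$ip" "$port"
}

# Example usage (IP and paths are placeholders):
#   NODE_PORT="$(oc get svc -n "${SLCB_NAMESPACE:-sap-slcbridge}" slcbridgebase-service \
#       -o jsonpath='{.spec.ports[0].nodePort}')"
#   slcb execute --url "$(slcb_base_url <SLC Bridge Base IP> "$NODE_PORT")" \
#       --useStackXML <path to Stack XML file>
```

Validating the port first avoids passing a malformed URL to slcb when the service is missing or not of type NodePort.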

SAP Data Intelligence installation parameters summary

The following output from the SLC Bridge summary dialog lists the parameters that we used for the SAP Data Intelligence installation:

ShowDialog ID: summaryDialog, caption: Parameter Summary

Choose "Next" to start the deployment with the displayed parameter values or choose "Back" to revise the parameters.

Kubernetes Namespace

Kubernetes Namespace: sdi

Installation Type

1. Basic Installation

> 2. Advanced Installation

Restore from Backup

Choose if you want to restore from an existing backup for SAP Data Intelligence.: n

Container Image Repository

Container Image Repository: container-image-registry-sdi-observer.apps.r191a.oss.labs

SAP Data Intelligence System Tenant Administrator Password

SAP Data Intelligence Initial Tenant Name

Tenant Name: default

SAP Data Intelligence Initial Tenant Administrator Username

Username: admin

SAP Data Intelligence Initial Tenant Administrator Password

Cluster Proxy Settings

Choose if you want to configure proxy settings on the cluster: n

Backup Configuration

Choose if you want to enable backups for SAP Data Intelligence.: n

Checkpoint Store Configuration

Choose if you want to use SAP Data Intelligence streaming tables and enable the checkpoint store.: y

Object Store Type

1. Amazon S3

2. Azure Storage Blob (WASB)

3. Google Cloud Storage (GCS)

4. Alibaba OSS

> 5. S3 Compatible Object Storage

Access Key For S3 Compatible Object Storage

Access Key: 2NI09x5X4T23N4YeqGCI

Secret Access Key For S3 Compatible Object Storage

Endpoint For S3 Compatible Object Storage

Endpoint: http://s3.openshift-storage.svc.cluster.local

Path For S3 Compatible Object Storage

S3 bucket and directory: sdi-checkpoint-store-652fdcc8-1752-46f4-b7f9-05b4a2d2d79e

Timeout

Timeout: 180

Checkpoint Store Validation

Checkpoint Store Validation: y

Storage Class Configuration

Choose if you want to configure storage classes for ReadWriteOnce PersistentVolumes: y

Default Storage Class

Default storage class: ocs-storagecluster-cephfs

System Management Storage Class

System Management Storage Class: ocs-storagecluster-ceph-rbd

Dlog Storage Class

Dlog Storage Class: ocs-storagecluster-cephfs

Disk Storage Class

Disk Storage Class: ocs-storagecluster-cephfs

SAP HANA Storage Class

SAP HANA Storage Class: ocs-storagecluster-cephfs

SAP Data Intelligence Diagnostics Storage Class

SAP Data Intelligence Diagnostics Storage Class: ocs-storagecluster-cephfs

Docker Container Log Path Configuration

Choose whether the configuration of your kubernetes cluster requires a custom container log path configuration: n

Enable Kaniko Usage

Choose if you want to enable kaniko usage: y

Container Image Repository Settings for SAP Data Intelligence Modeler

Choose if you want to use a different container image repository for SAP Data Intelligence Modeler.: n

Loading NFS Modules

Choose if you want to enable loading the kernel modules or not.: n

Enable Network Policies

Choose if you want to enable network policies.: n

Enable Vora Text-Analysis Deployment

Choose if you want to deploy Vora-Text Analysis.: n

Enable Spark Runtime for Data Transform Operators

Choose if you want to enable Spark Runtime for Data Transform Operators: n

Timeout

Timeout in seconds: 3600

Additional Installation Parameters

Enter a cluster name

Cluster Name: r191am1.oss.labs

Enter Logon Information for SAP Support Portal Access

User Name: s-user

SMPUserPassword s-user password

Choose "Next" to start the deployment with the displayed parameter values or choose "Back" to revise the parameters.
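Before choosing "Next", it can help to confirm that the storage classes named in the summary exist in the cluster. The sketch below is an illustrative check, not part of the SLC Bridge: the function name missing_storage_classes is hypothetical. It reads the available class names from standard input and prints any required name that is absent.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report required storage classes that are not available.
# stdin: one available storage class name per line.
# args:  the storage class names required by the installation.
# Prints each missing name and returns 1 if any is missing, 0 otherwise.
missing_storage_classes() {
  local available required missing=0
  available="$(cat)"
  for required in "$@"; do
    # Match the whole line as a fixed string, not as a pattern.
    if ! printf '%s\n' "$available" | grep -qxF -- "$required"; then
      echo "$required"
      missing=1
    fi
  done
  return "$missing"
}

# Example usage with the classes from the summary above. "oc get ... -o name"
# prints "<resource type>/<name>", so sed strips everything up to the slash.
#   oc get storageclass -o name | sed 's|.*/||' |
#     missing_storage_classes ocs-storagecluster-cephfs ocs-storagecluster-ceph-rbd
```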

Post-installation steps

After a successful installation, perform the following steps to configure the SAP Data Intelligence solution:

- Follow the steps in Post Installation Configurations for SAP Data Intelligence.

- Validate the installation to ensure that everything works as expected by following the steps in the SAP document Testing Your Installation (3.1).

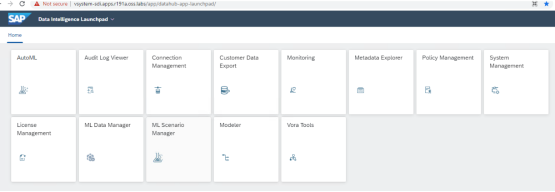

- Connect to the SAP Data Intelligence Launchpad, as shown in the following figure: