Remote compute nodes

Remote compute nodes can be required in many industries, including telecommunications. In OpenShift Container Platform 4.6, remote compute nodes can be added to an existing cluster that is deployed on bare-metal, user-provisioned infrastructure.

Note: Using remote compute nodes adds complexity to the solution architecture because of the increased risk of high latency, reduced network connectivity, and reduced resiliency.

Cluster architecture with remote compute nodes

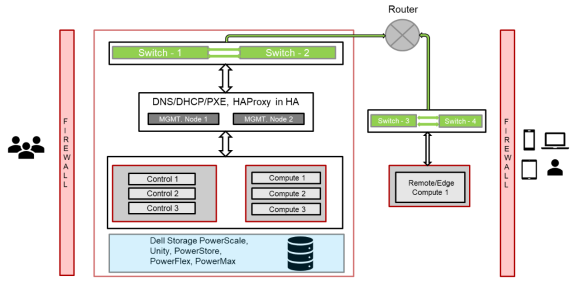

The following figure shows an example of the cluster architecture with remote compute nodes:

Figure 8. Remote/edge compute node architecture

Challenges

Adding remote compute nodes to a cluster introduces many complexities. These include, but are not limited to, network separation, power outages, and latency. Keeping applications that run on remote compute nodes highly available requires planning for each of these complexities.

Network separation refers to the fact that the cluster control plane and the remote compute nodes must be connected to each other but might not be on the same network. Proxies and firewalls between the cluster network and the remote compute nodes can also cause connectivity issues. Power outages can occur at any time, on either the cluster control plane or the remote compute node. Latency spikes or reduced throughput between the cluster and the remote compute node can degrade cluster performance.

Mitigation strategies

All nodes, including remote compute nodes, send heartbeats every 10 seconds to the Kubernetes controller manager (kube controller) in the cluster control plane. If a node fails to send heartbeats, for example because of a network connectivity issue or a power loss, the cluster changes the node health status to “Unhealthy” and sets the node condition to “Unknown.” The scheduler then stops scheduling pods to that node, and by default, pods on the node are evicted after five minutes.

To avoid automatic pod evictions, deploy applications on remote compute nodes by using either daemon sets or static pods. Daemon sets are generally preferred because the cluster control plane does not change the state of daemon set pods, even when the node stops communicating with the control plane. However, daemon set pods are not restarted if the node reboots and cannot contact the control plane after the reboot. Static pods are preferred when pods running on a remote compute node must restart automatically after a node reboot, especially a reboot caused by power loss. The disadvantage of static pods is that they cannot use secrets or configuration maps.
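The two deployment options above can be sketched as manifests. The following minimal Python sketch builds a daemon set that targets remote compute nodes and a static pod manifest, and prints both as JSON (which `oc apply -f` accepts). The node label `example.com/remote-site`, the names, and the image are hypothetical placeholders. Kubernetes automatically adds `NoExecute` tolerations for the `node.kubernetes.io/unreachable` and `node.kubernetes.io/not-ready` taints to daemon set pods, so none are added here. The static pod manifest would be placed in the kubelet static pod directory on the node (assumed here to be `/etc/kubernetes/manifests`); because static pods cannot reference secrets or configuration maps, its settings are inlined.

```python
import json

# Hypothetical label identifying remote compute nodes; not an OpenShift default.
REMOTE_NODE_LABEL = {"example.com/remote-site": "site-a"}


def remote_daemonset(name: str, image: str) -> dict:
    """Build an apps/v1 DaemonSet that runs one pod on each labeled remote node."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "DaemonSet",
        "metadata": {"name": name},
        "spec": {
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # Only nodes carrying the hypothetical remote-site label run this pod.
                    "nodeSelector": REMOTE_NODE_LABEL,
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


def static_pod(name: str, image: str) -> dict:
    """Build a bare v1 Pod manifest for the kubelet static pod directory.

    Static pods cannot use Secrets or ConfigMaps, so configuration is
    supplied inline as a plain environment variable.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "env": [{"name": "SITE", "value": "site-a"}],
                }
            ],
        },
    }


if __name__ == "__main__":
    image = "registry.example.com/edge/app:1.0"  # hypothetical image
    print(json.dumps(remote_daemonset("edge-agent", image), indent=2))
    print(json.dumps(static_pod("edge-agent-static", image), indent=2))
```

The daemon set suits workloads that must keep running through a control plane disconnection. The static pod suits workloads that must come back on their own after a reboot, at the cost of inlined configuration.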

Other OpenShift Container Platform objects, including deployments, replica sets, and replication controllers, can manage workloads and reschedule them to other nodes when a node is disconnected from the cluster control plane. However, these options might not be viable if workloads must run on a particular remote compute node or on a particular type of node, such as a node with GPU resources.
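With these controllers, rescheduling starts after the default five-minute eviction delay. One way to let a deployment ride out short disconnections instead of being rescheduled is to set a longer `tolerationSeconds` on pod tolerations for the `node.kubernetes.io/unreachable` and `node.kubernetes.io/not-ready` taints. This is a minimal Python sketch under that assumption. The 30-minute value, the node label, and the names are hypothetical.

```python
import json

# Default delay (seconds) before pods on an unreachable node are evicted.
DEFAULT_TOLERATION_SECONDS = 300


def eviction_tolerations(seconds: int) -> list:
    """Tolerations that delay eviction when a node becomes unreachable or not ready."""
    return [
        {
            "key": key,
            "operator": "Exists",
            "effect": "NoExecute",
            "tolerationSeconds": seconds,
        }
        for key in ("node.kubernetes.io/unreachable", "node.kubernetes.io/not-ready")
    ]


def remote_deployment(name: str, image: str, tolerate_seconds: int) -> dict:
    """Build an apps/v1 Deployment pinned to remote nodes with a custom eviction delay."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # Hypothetical label selecting remote compute nodes.
                    "nodeSelector": {"example.com/remote-site": "site-a"},
                    "tolerations": eviction_tolerations(tolerate_seconds),
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


if __name__ == "__main__":
    # Tolerate a disconnection for 30 minutes instead of the 5-minute default.
    dep = remote_deployment("edge-app", "registry.example.com/edge/app:1.0", 30 * 60)
    print(json.dumps(dep, indent=2))
```

A longer delay keeps pods running through brief outages, but it also delays rescheduling when a node is truly lost. The value is therefore a trade-off between stability and recovery time.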

Note: For more information, see the remote compute nodes topic in Red Hat OpenShift Container Platform 4.6 documentation.

Storage

Nodes operating at the edge might not be able to communicate readily with storage arrays or other storage solutions located in the core data center. To operate with relative independence, applications running on edge nodes must be able to store pertinent data on local storage. In OpenShift Container Platform 4.6, this can be accomplished by using the Local Storage Operator. The Local Storage Operator provisions local disks on the specified node or nodes as persistent volumes (PVs), with either file system or block volume mode. Applications that need persistent storage then request it through persistent volume claims (PVCs), which bind to these PVs.
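The Local Storage Operator workflow above can be illustrated with its `LocalVolume` custom resource (`local.storage.openshift.io/v1`) and a matching PVC. This minimal Python sketch builds both as JSON. The namespace `openshift-local-storage`, the storage class names, the host name, and the device path are assumptions for illustration and should be checked against the operator installation in use.

```python
import json


def local_volume(node_hostname: str, device_paths: list, block: bool = False) -> dict:
    """LocalVolume custom resource asking the Local Storage Operator to expose local disks as PVs."""
    device_class = {
        # Hypothetical storage class names created by the operator for these disks.
        "storageClassName": "local-block" if block else "local-fs",
        "volumeMode": "Block" if block else "Filesystem",
        "devicePaths": device_paths,
    }
    if not block:
        device_class["fsType"] = "xfs"  # file system created on the disk in Filesystem mode
    return {
        "apiVersion": "local.storage.openshift.io/v1",
        "kind": "LocalVolume",
        "metadata": {"name": "edge-disks", "namespace": "openshift-local-storage"},
        "spec": {
            # Restrict provisioning to the named edge node.
            "nodeSelector": {
                "nodeSelectorTerms": [
                    {
                        "matchExpressions": [
                            {
                                "key": "kubernetes.io/hostname",
                                "operator": "In",
                                "values": [node_hostname],
                            }
                        ]
                    }
                ]
            },
            "storageClassDevices": [device_class],
        },
    }


def claim(name: str, storage_class: str, size: str, block: bool = False) -> dict:
    """PVC that an application uses to bind to one of the local PVs."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "volumeMode": "Block" if block else "Filesystem",
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    print(json.dumps(local_volume("edge-worker-0", ["/dev/sdb"]), indent=2))
    print(json.dumps(claim("edge-data", "local-fs", "10Gi"), indent=2))
```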

However, the Local Storage Operator does not provide resilient storage for nodes at the edge. If an edge node fails in a way that is not recoverable, the data stored on its local disks is lost. If backups and resilient storage are needed, storage solutions that provide Container Storage Interface (CSI) drivers, such as Dell EMC storage arrays and OpenShift Data Foundation, can back up and restore data by using the Kubernetes volume snapshot feature or PowerProtect software.
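The Kubernetes volume snapshot feature mentioned above works on CSI-backed volumes, not on PVs created by the Local Storage Operator. This minimal Python sketch builds a `VolumeSnapshot` of an existing PVC and a new PVC restored from it through `dataSource`. It assumes the `snapshot.storage.k8s.io/v1beta1` API served by the Kubernetes release underlying OpenShift 4.6 (newer releases serve `v1`). The snapshot class, storage class, and PVC names are hypothetical.

```python
import json

SNAPSHOT_API_GROUP = "snapshot.storage.k8s.io"


def volume_snapshot(pvc_name: str, snapshot_class: str, version: str = "v1beta1") -> dict:
    """VolumeSnapshot of an existing CSI-backed PVC."""
    return {
        "apiVersion": f"{SNAPSHOT_API_GROUP}/{version}",
        "kind": "VolumeSnapshot",
        "metadata": {"name": f"{pvc_name}-snap"},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": pvc_name},
        },
    }


def restore_claim(name: str, snapshot_name: str, storage_class: str, size: str) -> dict:
    """New PVC whose initial contents are restored from a VolumeSnapshot."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            "dataSource": {
                "name": snapshot_name,
                "kind": "VolumeSnapshot",
                "apiGroup": SNAPSHOT_API_GROUP,
            },
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    snap = volume_snapshot("edge-data", "csi-snapclass")
    restored = restore_claim("edge-data-restored", snap["metadata"]["name"], "csi-sc", "10Gi")
    print(json.dumps(snap, indent=2))
    print(json.dumps(restored, indent=2))
```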

Adding remote nodes to clusters

Adding a remote compute node to an existing cluster involves the following high-level steps:

- Create DHCP reservations for the remote compute node on a DHCP server that the node can reach.

- Create a PXE service that the remote compute node can reach.

- In the PXE service, point the remote compute node to the location of the required installation files, including the operating system image and Ignition config files. These files can be hosted on the CSAH node that manages the cluster.

- PXE boot the remote compute node and install the operating system.

- After the remote compute node is installed, approve, from the CSAH node, the certificate signing requests (CSRs) that the node creates so that it can be added to the cluster.

- Repeat as needed for other remote compute nodes.
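The DHCP and PXE steps above can be sketched as a small generator for an ISC DHCP `host` reservation and a PXELINUX entry. PXELINUX looks up a per-host file named `01-` followed by the MAC address in lowercase with dashes. The kernel arguments follow our understanding of RHCOS PXE installation in OpenShift 4.6 (`coreos.live.rootfs_url`, `coreos.inst.install_dev`, `coreos.inst.ignition_url`). The HTTP base URL, file names, host name, MAC address, and IP address are hypothetical and must be matched to the files actually served from the CSAH node.

```python
def dhcpd_host(hostname: str, mac: str, ip: str) -> str:
    """ISC dhcpd host declaration reserving a fixed IP address for one MAC address."""
    return (
        f"host {hostname} {{\n"
        f"  hardware ethernet {mac.lower()};\n"
        f"  fixed-address {ip};\n"
        f"}}\n"
    )


def pxelinux_filename(mac: str) -> str:
    """Per-host PXELINUX config name: '01' (Ethernet type) plus the MAC with dashes."""
    return "01-" + mac.lower().replace(":", "-")


def pxelinux_entry(http_base: str, ignition: str, install_dev: str = "/dev/sda") -> str:
    """PXELINUX entry that boots the RHCOS live image and installs it with an Ignition config."""
    # Hypothetical artifact names; use the RHCOS files that are actually hosted.
    args = " ".join(
        [
            f"initrd={http_base}/rhcos-live-initramfs.x86_64.img",
            f"coreos.live.rootfs_url={http_base}/rhcos-live-rootfs.x86_64.img",
            f"coreos.inst.install_dev={install_dev}",
            f"coreos.inst.ignition_url={http_base}/{ignition}",
        ]
    )
    return (
        "DEFAULT rhcos\n"
        "LABEL rhcos\n"
        f"  KERNEL {http_base}/rhcos-live-kernel-x86_64\n"
        f"  APPEND {args}\n"
    )


if __name__ == "__main__":
    mac = "AA:BB:CC:DD:EE:FF"  # hypothetical remote compute node NIC
    print(dhcpd_host("remote-worker-0", mac, "192.168.10.50"))
    print("pxelinux.cfg/" + pxelinux_filename(mac))
    print(pxelinux_entry("http://csah.example.com:8080", "worker.ign"))
```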

For more information, see the Dell Technologies – Red Hat® OpenShift® Reference Architecture for Telecom 4.6 Deployment Guide at the Dell Technologies Solutions Info Hub for Communication Service Providers.
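The CSR approval step in the list above can be scripted on the CSAH node. This minimal Python sketch reads `oc get csr -o json`, treats any CSR without status conditions as pending, and approves each one with `oc adm certificate approve`. It assumes the `oc` CLI is on the `PATH` with a cluster-admin kubeconfig. A new node typically submits a client CSR first and a serving CSR after that, so the script may need to run more than once. Approving every pending CSR unconditionally is a simplification; checking the requestor and node name first is safer in production.

```python
import json
import shutil
import subprocess


def pending_csr_names(csr_list: dict) -> list:
    """Names of CSRs that have no status conditions yet (neither approved nor denied)."""
    return [
        item["metadata"]["name"]
        for item in csr_list.get("items", [])
        if not item.get("status", {}).get("conditions")
    ]


def approve_pending() -> list:
    """Approve all pending CSRs through the oc CLI and return their names."""
    out = subprocess.run(
        ["oc", "get", "csr", "-o", "json"], check=True, capture_output=True, text=True
    ).stdout
    names = pending_csr_names(json.loads(out))
    for name in names:
        subprocess.run(["oc", "adm", "certificate", "approve", name], check=True)
    return names


if __name__ == "__main__":
    if shutil.which("oc") is None:
        print("oc CLI not found; run this on the CSAH node")
    else:
        print("approved:", approve_pending())
```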