
OpenShift network operations


Operating components

Applications run on compute nodes. Each compute node is equipped with resources such as CPU cores, memory, storage, NICs, and add-in host adapters including GPUs, SmartNICs, and FPGAs. Kubernetes provides a mechanism to enable orchestration of network resources through the Container Network Interface (CNI) API.

The CNI API uses the Multus CNI plug-in to enable attachment of multiple adapter interfaces on each pod. Custom resource definition (CRD) objects are responsible for configuring Multus CNI plug-ins.

Container communications

A pod, which is a basic unit of application deployment, consists of one or more containers that are deployed together on the same compute node. A pod shares the compute node network infrastructure with the other network resources that make up the cluster. As service demand expands, additional identical pods are often deployed to the same or other compute nodes.

Networking is critical to the operation of an OpenShift Container cluster. Four basic network communication flows occur within every cluster:

- Container-to-container connections

- Pod communication over the local host network (127.0.0.1)

- Pod-to-pod connections, as described in this guide

- Pod-to-service and ingress-to-service connections, which are handled by services

Containers that communicate within their pod use the local host network address. Containers that communicate with any external pod originate their traffic based on the IP address of the pod.

Application containers use shared storage volumes (configured as part of the pod resource) that are mounted as part of the shared storage for each pod. Network traffic that might be associated with nonlocal storage must be able to route across node network infrastructure.

Services networking

Services are used to abstract access to Kubernetes pods. Every node in a Kubernetes cluster runs kube-proxy, which is responsible for implementing a virtual IP (VIP) address for services. Kubernetes supports two primary modes of finding (or resolving) a service:

- Using environment variables: This method requires a restart of the pods when the IP address of the service changes.

- Using DNS: OpenShift Container Platform 4.6 uses CoreDNS to resolve service IP addresses.
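The two discovery modes above can be sketched as follows. This is a minimal illustration that assumes the upstream Kubernetes conventions: the service name is upper-cased with dashes turned into underscores to form the environment variable names, and the cluster DNS domain is `cluster.local` by default. The service name `redis-master`, the namespace `demo`, and the helper names are hypothetical.

```python
import os


def service_env_names(service_name):
    """Return the (host, port) variable names injected into pods for a service."""
    prefix = service_name.upper().replace("-", "_")
    return prefix + "_SERVICE_HOST", prefix + "_SERVICE_PORT"


def service_dns_name(service_name, namespace, cluster_domain="cluster.local"):
    """Return the fully qualified DNS name that the cluster DNS serves for a service."""
    return "{}.{}.svc.{}".format(service_name, namespace, cluster_domain)


def lookup_from_env(service_name, environ=os.environ):
    """Return (host, port) from the environment, or None if the variables are absent.

    The values are fixed when the pod starts, which is why a service IP
    change is only picked up after the pod restarts.
    """
    host_var, port_var = service_env_names(service_name)
    if host_var in environ and port_var in environ:
        return environ[host_var], int(environ[port_var])
    return None


if __name__ == "__main__":
    print(service_env_names("redis-master"))
    print(service_dns_name("redis-master", "demo"))
```

Because the DNS name stays the same when the service IP address changes, DNS-based lookup generally avoids the restart that the environment variable method needs.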

Some parts of the application (for example, front-ends) might need to expose a service outside the application. If the service uses HTTP, HTTPS, or any other TLS-encrypted protocol, use an ingress controller; for other protocols, use a load balancer, external service IP address, or node port.

A node port exposes the service on a static port on the node IP address. A service of type NodePort is reachable on the same port on every node in the cluster. Ensure that external IP addresses are routed to the nodes.
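As an illustration, a NodePort service manifest can be built and checked in a few lines. This is a sketch only: the service name `frontend`, its selector, and the port numbers are hypothetical, and the 30000-32767 range is the upstream Kubernetes default, which a cluster may override.

```python
import json

# Default NodePort range in upstream Kubernetes (assumption: not overridden).
NODE_PORT_RANGE = range(30000, 32768)


def node_port_service(name, selector, port, target_port, node_port):
    """Build a Service manifest of type NodePort as a plain dictionary."""
    if node_port not in NODE_PORT_RANGE:
        raise ValueError("nodePort {} is outside the default range".format(node_port))
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": selector,
            "ports": [
                {
                    "protocol": "TCP",
                    "port": port,              # port on the cluster IP
                    "targetPort": target_port, # port on the pod
                    "nodePort": node_port,     # static port opened on every node
                }
            ],
        },
    }


if __name__ == "__main__":
    manifest = node_port_service("frontend", {"app": "frontend"}, 8080, 8080, 30080)
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with the usual cluster tooling. Clients then reach the service at any node IP address on port 30080.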

Ingress controller

OpenShift Container Platform uses an ingress controller to provide external access. The ingress controller defaults to running on two compute nodes, but it can be scaled up as required. Dell Technologies recommends creating a wildcard DNS entry and then setting up an ingress controller. This method enables you to work only within the context of an ingress controller. An ingress controller accepts external HTTP, HTTPS, and TLS requests using SNI, and then proxies them based on the routes that are provisioned.

You can expose a service by creating a route and using the cluster IP. Cluster IP routes are created in the OpenShift Container Platform project, and a set of routes is admitted into ingress controllers.

You can perform sharding (horizontal partitioning of data) on route labels or namespaces. Sharding enables you to:

- Load-balance the incoming traffic.

- Isolate selected traffic on a dedicated ingress controller.
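The label-based form of sharding can be pictured as simple selector matching: an ingress controller admits a route when every label in its selector appears on the route with the same value. The sketch below is a simplified model, not the router's actual implementation. The shard names and the `type` label are hypothetical, and the model treats an empty selector as matching every route.

```python
def matches(match_labels, labels):
    """True when every selector label is present on the object with the same value."""
    return all(labels.get(key) == value for key, value in match_labels.items())


# Hypothetical shards: ingress controller name -> route label selector.
SHARDS = {
    "default": {},                    # empty selector admits every route
    "internal": {"type": "internal"},
    "public": {"type": "public"},
}


def admitting_controllers(route_labels, shards=SHARDS):
    """Return the sorted names of the ingress controllers that admit a route."""
    return sorted(name for name, selector in shards.items() if matches(selector, route_labels))


if __name__ == "__main__":
    print(admitting_controllers({"type": "internal"}))
    print(admitting_controllers({"app": "billing"}))
```

In this model, a route labeled `type: internal` is served by both the `default` and `internal` controllers. Dedicating traffic to a single controller therefore also requires narrowing the selector of the default controller.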

Networking operators

The following operators are available for network administration:

- Cluster Network Operator (CNO): Deploys the OpenShift SDN plug-in during cluster installation and manages kube-proxy on each node.

- DNS operator: Deploys and manages CoreDNS and instructs pods to use the CoreDNS IP address for name resolution.

- Ingress operator: Enables external access to OpenShift Container Platform cluster services and deploys and manages one or more HAProxy-based ingress controllers to handle routing.

Container Network Interface

The CNI specification serves to make the networking layer of containerized applications pluggable and extensible across container runtimes. Both upstream Kubernetes and OpenShift use the specification for the pod network. The networking itself is implemented not by Kubernetes but by various CNI plug-ins. The most commonly used CNI plug-ins are:

- Multus: CNI plug-in that supports the multinetwork function in Kubernetes. While Kubernetes pods typically have only one networking interface, the use of Multus means that pods can be configured to support multiple interfaces. Multus acts as a “meta plug-in,” a plug-in which calls other CNI plug-ins. Multus also supports SR-IOV and DPDK workloads.

- DANM: Developed by Nokia, DANM is a CNI plug-in for telecom-oriented workloads. DANM supports the provisioning of advanced IPVLAN interfaces, acts like Multus in that it is also a meta plug-in, can control VxLAN and VLAN interfaces for all Kubernetes hosts, and more. The DANM CNI plug-in creates a network management API to give administrators greater control of the physical networking stack through the standard Kubernetes API.

OpenShift SDN

OpenShift SDN creates an overlay network that is based on Open vSwitch (OVS). The overlay network enables communication between pods across the cluster. OpenShift SDN operates in one of the following modes:

- Network policy mode (the default), which allows custom isolation policies

- Multitenant mode, which provides project-level isolation for pods and services

- Subnet mode, which provides a flat network

OpenShift Container Platform 4.6 also supports using Open Virtual Network (OVN)-Kubernetes as the CNI network provider. OVN-Kubernetes will become the default CNI network provider in a future release of OpenShift Container Platform. OpenShift Container Platform 4.6 supports additional SDN orchestration and management plug-ins that comply with the CNI specification. See Use cases for examples.

Service Mesh

Distributed microservices work together to make up an application. Service Mesh provides a uniform method to connect, manage, and observe microservices-based applications. The Red Hat OpenShift implementation of Service Mesh is based on Istio, an open-source project. You must use operators from the OperatorHub to install Service Mesh; OpenShift Service Mesh is not installed automatically as part of a default installation.

Service Mesh has key functional components that belong to either the data plane or the control plane:

- Envoy proxy: Intercepts all traffic for all services in Service Mesh. Envoy proxy is deployed as a sidecar.

- Mixer: Enforces access control and collects telemetry data.

- Pilot: Provides service discovery for the Envoy sidecars.

- Citadel: Provides strong service-to-service and end-user authentication with integrated identity and credential management.

Users define the granularity of the Service Mesh deployment, enabling them to meet their specific deployment and application needs. Service Mesh can be employed at the cluster level or the project level. For more information, see OpenShift Service Mesh documentation.

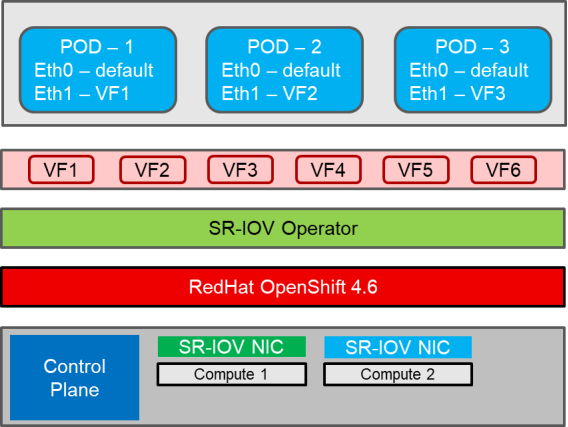

SR-IOV and multiple networks

Single Root Input/Output Virtualization (SR-IOV) enables the creation of multiple virtual functions from one physical function for a PCIe device such as a NIC. Figure 5 shows this architecture. In the network, this capability can be used to create many virtual functions from a single NIC, where each virtual function can be attached to a pod. Latency is reduced because of the reduced input/output overhead from the software switching layer. Also, SR-IOV can be used to configure multiple networks by attaching multiple virtual functions with different networks to a single pod. SR-IOV can be configured in OpenShift by using the SR-IOV operator, which can create virtual functions and provision additional networks. For more information, see the Dell Technologies - Red Hat OpenShift Reference Architecture for Telecom Deployment Guide at the Dell Technologies Info Hub for Communication Service Provider Solutions.

Note: For SR-IOV interfaces to connect across multiple VLANs, the VLANs must be set up in the switch with appropriate IP addresses, and the switch interfaces must be tagged with those VLANs. After the switch configuration is complete, the VLANs must be tagged on the SR-IOV interface of the compute nodes to ensure that pings from the pod to the networks on those VLANs succeed.
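The two kinds of objects that the SR-IOV operator works with, a node policy that creates virtual functions and a network that tags a VLAN, can be sketched as Python dictionaries. The field names follow the SR-IOV Network Operator API as commonly documented and should be verified against the release in use. The interface name `ens1f0`, resource name `sriovnic`, namespace `demo`, and VLAN 100 are hypothetical.

```python
import json

# Namespace in which the operator conventionally runs (assumption).
OPERATOR_NAMESPACE = "openshift-sriov-network-operator"
API_VERSION = "sriovnetwork.openshift.io/v1"


def sriov_node_policy(name, resource_name, pf_names, num_vfs, device_type="netdevice"):
    """Describe how many virtual functions to create on which physical functions."""
    return {
        "apiVersion": API_VERSION,
        "kind": "SriovNetworkNodePolicy",
        "metadata": {"name": name, "namespace": OPERATOR_NAMESPACE},
        "spec": {
            "resourceName": resource_name,
            "nodeSelector": {"feature.node.kubernetes.io/network-sriov.capable": "true"},
            "numVfs": num_vfs,
            "nicSelector": {"pfNames": list(pf_names)},
            "deviceType": device_type,  # "vfio-pci" is typical for DPDK workloads
        },
    }


def sriov_network(name, resource_name, target_namespace, vlan, ipam):
    """Describe an additional network on the virtual functions, tagged with a VLAN."""
    if not 1 <= vlan <= 4094:
        raise ValueError("VLAN ID must be between 1 and 4094")
    return {
        "apiVersion": API_VERSION,
        "kind": "SriovNetwork",
        "metadata": {"name": name, "namespace": OPERATOR_NAMESPACE},
        "spec": {
            "resourceName": resource_name,
            "networkNamespace": target_namespace,
            "vlan": vlan,
            "ipam": json.dumps(ipam),  # IPAM settings are passed as a JSON string
        },
    }


if __name__ == "__main__":
    policy = sriov_node_policy("policy-ens1f0", "sriovnic", ["ens1f0"], 8)
    network = sriov_network("sriov-vlan100", "sriovnic", "demo", 100, {"type": "dhcp"})
    print(json.dumps([policy, network], indent=2))
```

The VLAN ID in the network object must match a VLAN that is configured on the switch ports, as the note above describes.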

Data Plane Development Kit

The Data Plane Development Kit (DPDK) is a set of libraries that allow for network packet processing to be completed at the user-space level, bypassing the Linux kernel networking stack. DPDK can communicate directly with the networking hardware, improving packet processing capacity. In OpenShift Container Platform 4.6, DPDK is a Technology Preview feature. Technology Preview features are not currently supported by Red Hat in production environments.

The following figure shows a high-level overview of SR-IOV in OpenShift Container Platform:

Figure 5. SR-IOV in OpenShift Container Platform

To permit the use of DPDK libraries in OpenShift, the SR-IOV Operator must be installed and network attachments using virtual functions must be created on the appropriate NICs. Further, the administrator must allocate “huge pages” and exclusive CPUs on the node that is running DPDK workloads. The Performance Addon Operator can be used to perform this configuration.
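On the pod side, a DPDK workload typically asks for whole CPUs and huge pages in its resource section. The sketch below assumes standard Kubernetes behavior: exclusive CPUs require an integer CPU count with requests equal to limits, and huge pages are requested through the `hugepages-1Gi` resource and mounted from an `emptyDir` volume with medium `HugePages`. The helper names and the sizes are hypothetical.

```python
def dpdk_container_resources(cpus, hugepages_gi, memory="1Gi"):
    """Build a container resources section suitable for exclusive CPUs and 1 Gi huge pages."""
    if not isinstance(cpus, int) or cpus < 1:
        raise ValueError("exclusive CPUs require a whole number of CPUs")
    if not isinstance(hugepages_gi, int) or hugepages_gi < 1:
        raise ValueError("at least one 1 Gi huge page is required")
    quantities = {
        "cpu": str(cpus),
        "memory": memory,
        "hugepages-1Gi": "{}Gi".format(hugepages_gi),
    }
    # Requests must equal limits so that the pod lands in the Guaranteed QoS class.
    return {"requests": dict(quantities), "limits": dict(quantities)}


def hugepages_volume(name="hugepages"):
    """Build the pod volume that backs the huge page mount inside the container."""
    return {"name": name, "emptyDir": {"medium": "HugePages"}}


if __name__ == "__main__":
    print(dpdk_container_resources(cpus=4, hugepages_gi=2))
    print(hugepages_volume())
```

These requests can only be satisfied on nodes where huge pages and CPU isolation have already been configured, which is the role of the Performance Addon Operator mentioned above.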

Supported NFV NICs

Dell Technologies has validated the following NICs on the Dell EMC PowerEdge R640 and R740xd servers for the network functions virtualization (NFV) features SR-IOV and DPDK:

- Mellanox ConnectX-4 25G

- Intel® XXV710 25G

Dell Technologies has validated the following NIC on the Dell EMC PowerEdge R650 and R750 servers for the NFV features SR-IOV and DPDK:

- Mellanox ConnectX-5 25G

Multinetwork support

OpenShift Container Platform 4.6 also supports software-defined multiple networks. The platform comes with a default network. The cluster administrator defines additional networks using the Multus CNI plug-in, and then chains the plug-ins. The additional networks are useful for increasing the networking capacity of the pods and meeting traffic separation requirements.

The following CNI plug-ins are available for creating additional networks:

- Bridge: Pods on the same host can communicate over a bridge-based additional network.

- Host-device: Pods can access the physical Ethernet network device of the host.

- Macvlan: Pods attached to a macvlan-based additional network have a unique MAC address and communicate using a physical network interface.

- Ipvlan: Pods communicate over an ipvlan-based additional network.
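As an example of one of these plug-ins, the sketch below builds a macvlan-based additional network in the form that Multus consumes, a `NetworkAttachmentDefinition` whose `spec.config` holds the CNI configuration as a JSON string, plus the pod annotation that requests the attachment. The network name `macvlan-net`, namespace `demo`, and parent interface `ens1f0` are hypothetical. On OpenShift the cluster administrator usually declares additional networks through the Cluster Network Operator configuration rather than creating this object by hand.

```python
import json


def macvlan_attachment(name, namespace, master, ipam):
    """Build a NetworkAttachmentDefinition for a macvlan additional network."""
    cni_config = {
        "cniVersion": "0.3.1",
        "name": name,
        "type": "macvlan",
        "master": master,  # physical interface on the host that carries the traffic
        "mode": "bridge",
        "ipam": ipam,
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"config": json.dumps(cni_config)},
    }


def pod_network_annotation(*network_names):
    """Build the pod annotation that asks Multus to attach the named networks."""
    return {"k8s.v1.cni.cncf.io/networks": ",".join(network_names)}


if __name__ == "__main__":
    nad = macvlan_attachment("macvlan-net", "demo", "ens1f0", {"type": "dhcp"})
    print(json.dumps(nad, indent=2))
    print(pod_network_annotation("macvlan-net"))
```

A pod that carries this annotation receives the default cluster network interface plus one extra interface for each listed network.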

Leaf-switch considerations

When pods are provisioned with additional network interfaces that are based on macvlan or ipvlan, corresponding leaf-switch ports must match the VLAN configuration of the host. Failure to properly configure the ports results in a loss of traffic.