
Storage charms


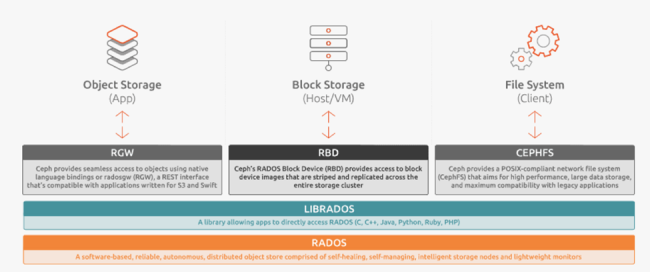

Ceph is a distributed storage system and network file system designed for performance, reliability, and scalability. Canonical uses Ceph by default to provide block, object, and file storage for the private cloud. It can, however, be replaced by or complemented with another storage solution, such as dedicated storage appliances.

The following charms deploy and manage a production-grade Ceph cluster.

ceph-monitor

Ceph monitors are the endpoints of the storage cluster and store the map of data placement across the Ceph OSDs.

By default, the Ceph cluster does not bootstrap until three service units have been deployed and started. This is to ensure that a quorum is achieved before adding storage devices.

After the monitor cluster initializes, a quorum forms quickly and OSD bring-up proceeds.
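The bootstrap gate and quorum rule described above can be sketched in a few lines of Python. This is an illustration only, not the charm's code: the function names are hypothetical, the expected unit count of three is taken from the text, and the quorum rule is the strict-majority rule that Ceph monitors use.

```python
def quorum_size(monitor_count: int) -> int:
    """Return the smallest number of monitors that forms a strict majority."""
    if monitor_count < 1:
        raise ValueError("monitor_count must be at least 1")
    return monitor_count // 2 + 1


def can_bootstrap(started_units: int, expected_units: int = 3) -> bool:
    """Hypothetical gate: hold bootstrap until all expected units have started."""
    return started_units >= expected_units


if __name__ == "__main__":
    for started in range(1, 4):
        print(f"{started} unit(s) started, bootstrap allowed: {can_bootstrap(started)}")
    print(f"monitors needed for quorum out of 3: {quorum_size(3)}")
```

With three monitors, a majority is two, so the monitor cluster can lose one unit and still keep quorum.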

ceph-osd

Ceph OSDs manage the underlying storage devices that hold user data and make up the capacity of the cluster.

This charm provides the Ceph OSD personality for expanding storage capacity within a Ceph deployment. It manages the entire set of block devices on the physical node, so block devices can be added or removed as needed and their parameters changed on the fly.
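Because the OSD block devices make up the capacity of the cluster, a rough sizing estimate can be derived from the device list. The sketch below is a minimal example under stated assumptions: the device list is a whitespace-separated string of paths (in the style of the charm's `osd-devices` option), pools are replicated three times, and the node count and device sizes are made-up values. The function names are hypothetical.

```python
from __future__ import annotations


def parse_devices(osd_devices: str) -> list[str]:
    """Split a whitespace-separated device string into unique paths, keeping order."""
    seen: dict[str, None] = {}
    for path in osd_devices.split():
        seen.setdefault(path, None)
    return list(seen)


def usable_capacity_tb(nodes: int, devices_per_node: int,
                       device_tb: float, replicas: int = 3) -> float:
    """Raw capacity divided by the replica count.

    Ignores file system overhead and the free-space headroom a real
    cluster needs, so treat the result as an upper bound.
    """
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return nodes * devices_per_node * device_tb / replicas


if __name__ == "__main__":
    devices = parse_devices("/dev/sdb /dev/sdc /dev/sdd /dev/sde")  # example paths
    # Assumed values: 3 storage nodes, 2 TB per device, 3 replicas.
    print(usable_capacity_tb(nodes=3, devices_per_node=len(devices), device_tb=2.0))
```

Adding a block device to every node raises the raw capacity by one device per node, but the usable gain is only a third of that under three-way replication.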

ceph-radosgateway

This charm provides an API endpoint for Swift or S3 clients, supporting OpenStack Keystone-based RBAC and storing objects in the Ceph cluster underneath.

ceph-fs

This charm deploys the metadata server daemon (MDS) for the Ceph distributed file system (CephFS). With the MDS in place, file systems can be created dynamically on top of the Ceph storage cluster, and clients can mount the remote file system using the kernel driver.
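A kernel-driver mount of CephFS takes the monitor addresses as its source. The sketch below only builds the `mount -t ceph` command line and does not run it. The monitor addresses, mount point, user name, and secret file path are placeholder assumptions, and `cephfs_mount_argv` is a hypothetical helper name.

```python
import shlex


def cephfs_mount_argv(monitors, mount_point, user, secret_file, port=6789):
    """Build the argument list for a CephFS kernel mount.

    The source has the form mon1:port,mon2:port:/ so the client can
    reach any monitor that is currently in quorum.
    """
    if not monitors:
        raise ValueError("at least one monitor address is required")
    source = ",".join(f"{host}:{port}" for host in monitors) + ":/"
    options = f"name={user},secretfile={secret_file}"
    return ["mount", "-t", "ceph", source, mount_point, "-o", options]


if __name__ == "__main__":
    argv = cephfs_mount_argv(
        ["10.0.0.1", "10.0.0.2", "10.0.0.3"],  # placeholder monitor addresses
        "/mnt/cephfs",                          # placeholder mount point
        "admin",                                # placeholder CephX user
        "/etc/ceph/admin.secret",               # placeholder secret file
    )
    print(shlex.join(argv))
```

Listing all three monitors in the source means the mount does not depend on any single monitor being reachable.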