
Logical architecture


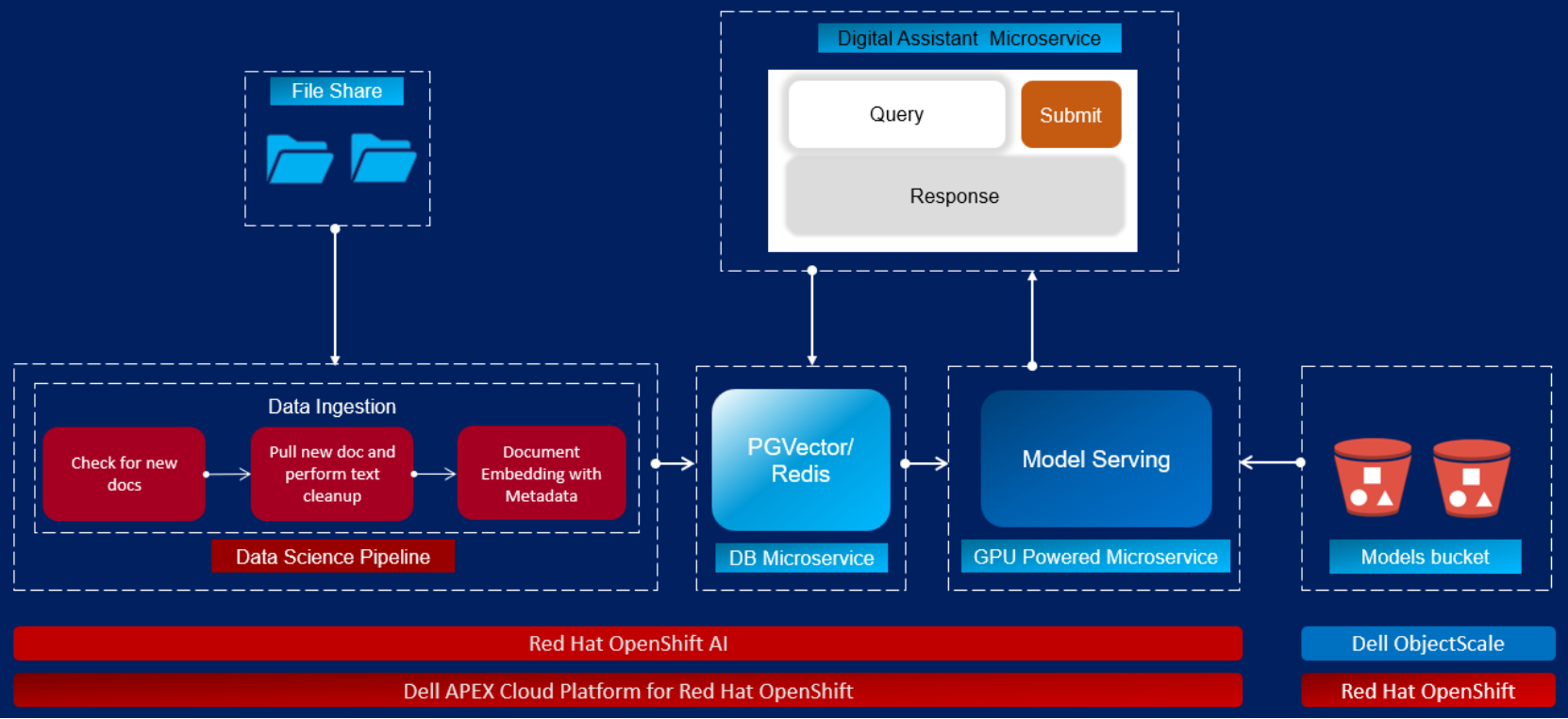

The following figure showcases the logical architecture design of the digital assistant.

Figure 6. Digital assistant logical architecture

We used a modular design based on microservices to build the digital assistant. The following list describes the microservices and their functions.

- Digital assistant: This microservice runs LangChain, which ties together the components of the LLM-based application. It also provides the user interface for interacting with the digital assistant. The user interface is built on the Gradio UI framework.

- Vector database: The digital assistant works with either PGVector or Redis as its vector store. In our solution, PGVector and Redis are each deployed as a microservice. The vector store holds the knowledge base embeddings and performs similarity search during context retrieval.

- Model serving: The KServe model serving framework, together with the vLLM serving runtime, serves the Llama 2 model. We used the single-model serving feature available in OpenShift AI. This GPU-powered microservice also downloads the model weights from Dell ObjectScale at runtime.

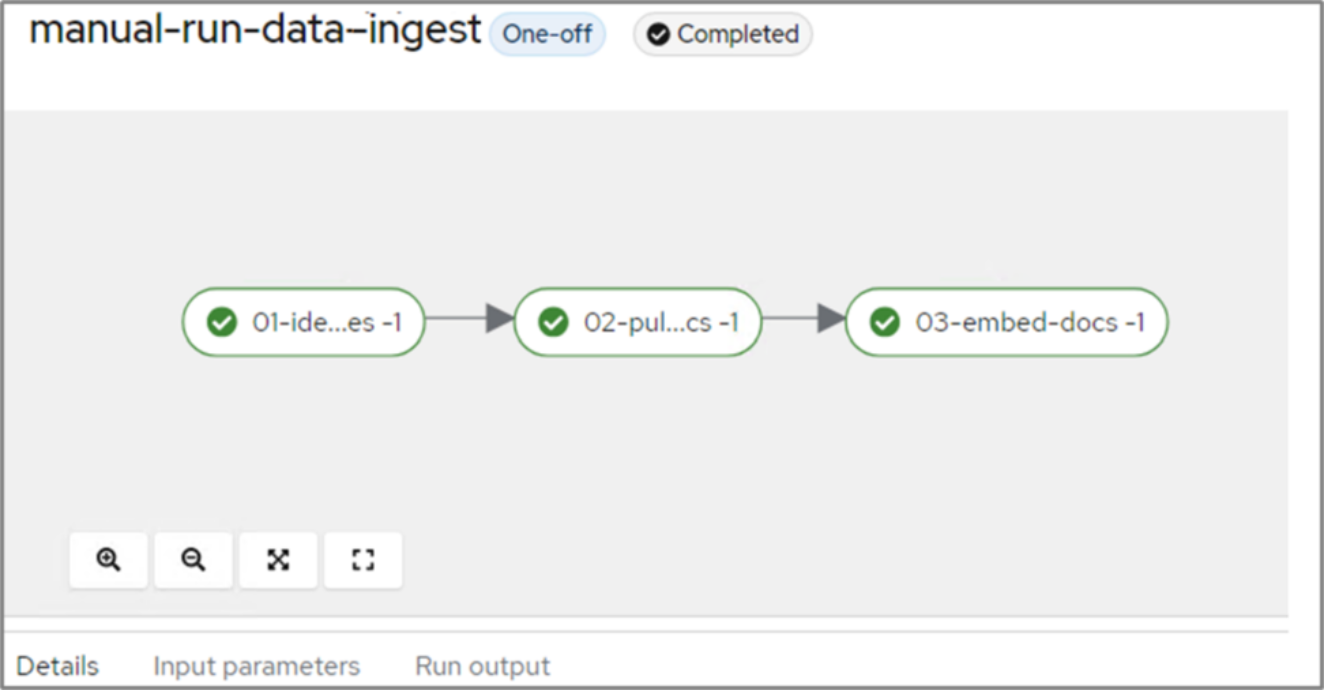

- Data science pipeline: The data science pipeline allows users to create and manage AI and data science workflows in OpenShift AI. Workflows run in a modular and containerized approach. It is scheduled to run periodically and leveraged to build a data ingestion workflow. Each task within the workflow runs as a separate microservice. This workflow is used to detect recently added or modified documents from file share, extract texts, perform cleanup of data, followed by creating and loading embeddings into the PGVector database.

Figure 7. Data ingestion pipeline

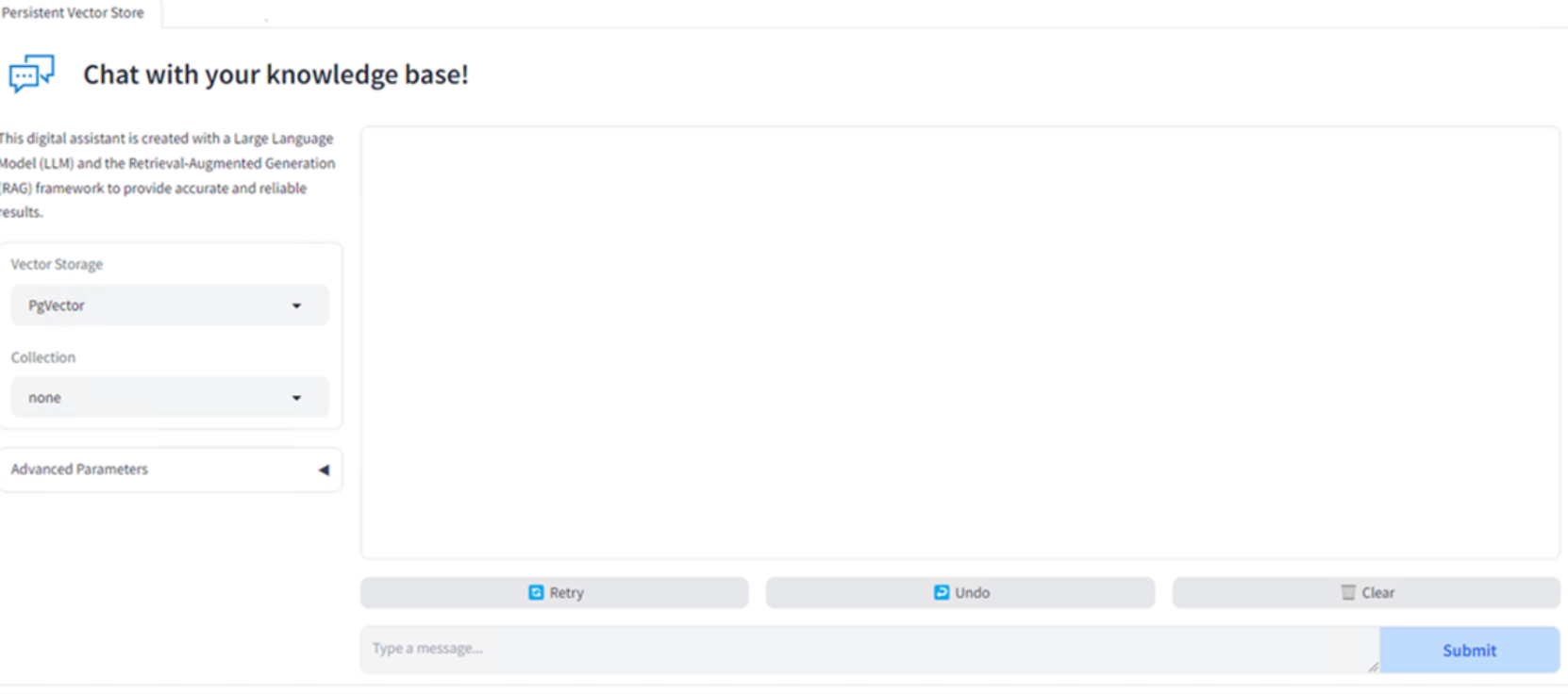

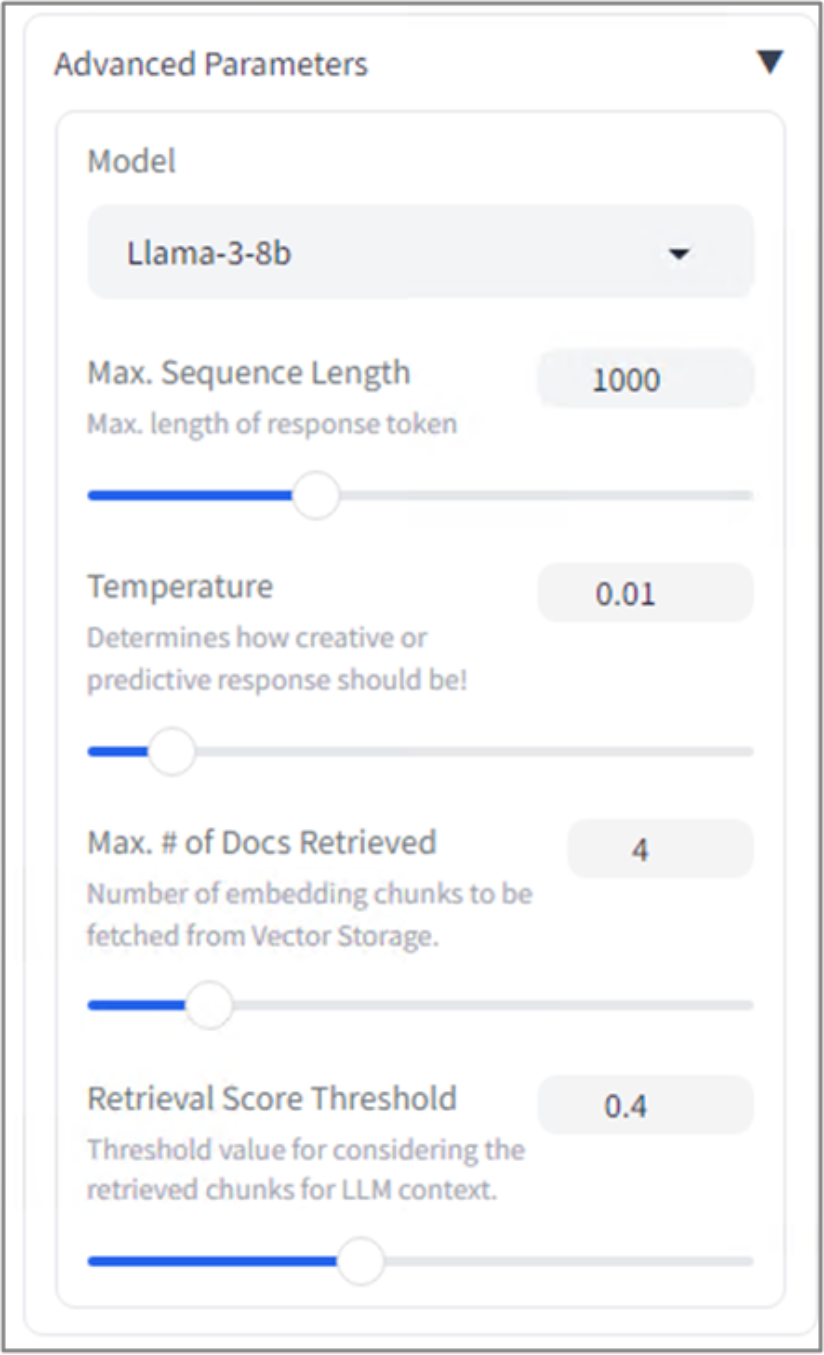

- User Interface: Gradio is the UI framework used to develop digital assistant UI. Gradio is an open-source Python library that enables fast development and prototyping of AI/ML web applications with user friendly interface. It provides a simple and intuitive API that is compatible with all Python programs and libraries. This digital assistant solution is provided with advanced UI features which include choice of different LLMs, vector stores, and catalogues. Digital Assistant UI also provides additional options to users such as maximum sequence length, temperature control, retrieval score threshold, and maximum number of documents retrieved. These additional options allow users to control the response from the digital assistant.

Figure 8. Digital assistant UI

Figure 9. Advanced parameters available in digital assistant UI
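To illustrate how the retrieval score threshold and the maximum number of documents can shape context retrieval, the following is a minimal sketch in plain Python. It is not the solution's implementation, which performs similarity search in PGVector or Redis through LangChain. The names `retrieve`, `score_threshold`, and `max_docs`, the use of cosine similarity, and the three-dimensional sample vectors are all assumptions made for illustration.

```python
import math
from typing import NamedTuple


class Hit(NamedTuple):
    text: str
    score: float


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vec, store, score_threshold=0.5, max_docs=3):
    """Return up to max_docs hits scoring at least score_threshold, best first.

    `store` is a hypothetical in-memory stand-in for the vector store:
    a list of (text, embedding) pairs.
    """
    hits = [Hit(text, cosine_similarity(query_vec, vec)) for text, vec in store]
    kept = [h for h in hits if h.score >= score_threshold]
    kept.sort(key=lambda h: h.score, reverse=True)
    return kept[:max_docs]


# Hypothetical knowledge base entries with hand-picked embeddings.
store = [
    ("APEX overview", [1.0, 0.0, 0.0]),
    ("OpenShift AI notes", [0.8, 0.6, 0.0]),
    ("Unrelated memo", [0.0, 0.0, 1.0]),
]
results = retrieve([1.0, 0.0, 0.0], store, score_threshold=0.5, max_docs=2)
for hit in results:
    print(f"{hit.score:.2f}  {hit.text}")
```

Raising the threshold drops weakly related documents from the context, while lowering the document limit shortens the prompt passed to the LLM. Both are trade-offs between the relevance and the breadth of the retrieved context.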

- File share: Apache HTTP Server is deployed as a microservice to host the internal documents, which are ingested into the vector store through the data ingestion pipeline.
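The data ingestion steps described above (detect new or modified documents, extract text, clean up, create embeddings, load) can be sketched as a short chain of functions. This is an illustrative sketch only. In the actual solution each task runs as a separate containerized step in an OpenShift AI data science pipeline and the embeddings are loaded into PGVector. The change detection by content hash, the HTML tag stripping, the toy `embed` function, and the in-memory `vector_store` dictionary are all assumptions introduced here, not details taken from the solution.

```python
import hashlib
import re


def detect_changed(documents, seen_hashes):
    """Return names of documents whose content hash is new or has changed."""
    changed = []
    for name, raw in documents.items():
        digest = hashlib.sha256(raw.encode("utf-8")).hexdigest()
        if seen_hashes.get(name) != digest:
            seen_hashes[name] = digest
            changed.append(name)
    return changed


def extract_text(raw):
    """Assumed extraction step: replace HTML tags with spaces."""
    return re.sub(r"<[^>]+>", " ", raw)


def clean(text):
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def embed(text, dims=8):
    """Toy placeholder for an embedding model: hashed bag-of-words counts."""
    vec = [0.0] * dims
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    return vec


def run_ingestion(documents, seen_hashes, vector_store):
    """Run one scheduled pass; only changed documents are re-embedded."""
    changed = detect_changed(documents, seen_hashes)
    for name in changed:
        text = clean(extract_text(documents[name]))
        vector_store[name] = (text, embed(text))
    return changed


# Hypothetical file share contents, keyed by file name.
seen, store = {}, {}
docs = {"faq.html": "<p>Reset  your\npassword</p>", "guide.html": "<h1>Setup</h1>"}

first_run = run_ingestion(docs, seen, store)   # both documents are new
second_run = run_ingestion(docs, seen, store)  # nothing has changed
docs["guide.html"] = "<h1>Setup v2</h1>"
third_run = run_ingestion(docs, seen, store)   # only the modified document
print(first_run, second_run, third_run)
```

Tracking a hash per document keeps periodic runs cheap, because unchanged files skip the extraction and embedding steps entirely.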