
Virtual disk allocation schemes


VMware vSphere offers multiple ways of formatting virtual disks through the GUI or CLI. For new virtual machine creation, only the eagerzeroedthick, zeroedthick, and thin formats are offered as options.

VM disk controllers

Before discussing the type of virtual disks to use, it is worth mentioning the disk controllers and whether one type is preferred by Dell over another.

There are three types of controllers as of the current vSphere 7.0 release: SCSI, NVMe, and SATA. When a new VM is created, the GuestOS selection drives the controller that VMware selects by default. For instance, if Mac OS is selected, VMware assigns a SATA controller. If Windows or Linux is selected, a SCSI controller is assigned. In addition, VMware also assigns a SCSI controller “type”. These are:

- BusLogic Parallel

- LSI Logic Parallel

- LSI Logic SAS

- VMware Paravirtual (PVSCSI)

For most Windows GuestOS versions, VMware assigns LSI Logic SAS, and for Linux, PVSCSI. Though there are some differences, these two types are quite similar and can be used interchangeably.

For most situations, Dell recommends keeping VMware’s default assignment of the controller and type. For environments with intensive IO, however, PVSCSI generally provides better performance and uses fewer CPU cycles, so it is recommended under those conditions. There is no requirement to change the controller type.

The NVMe controller was introduced in vSphere 6.5 and is designed to improve performance while reducing CPU overhead when accessing high-performing NVMe media. In addition to vSphere 6.5, hardware version 13 is required along with a supported GuestOS. This usually involves installing a driver if the OS does not have one natively. The NVMe controller is assigned by default when using a newer version of Windows GuestOS (e.g., 2016). There are some limitations with the NVMe controller, and it does not work with all applications, so it is important to thoroughly review the requirements. VMware indicates the NVMe controller may provide better performance for NVMe; however, VMware also clearly states that when using the HPP plug-in for pathing, using PVSCSI is a best practice. Ultimately, for customers using NVMeoF on the PowerMax, either controller type is acceptable. Testing is always recommended.

Note: Changing the controller type or controller itself on an existing VM may require that certain steps are completed. See VMware KB 1002149 for more detail.

Zeroedthick allocation format

When creating, cloning, or converting virtual disks in the vSphere Client, the default option is called Thick Provision Lazy Zeroed in vSphere 6 and higher. The Thick Provision Lazy Zeroed selection is commonly referred to as the zeroedthick format. In this allocation scheme, the storage required for the virtual disks is reserved in the datastore but the VMware kernel does not initialize all the blocks. The blocks are initialized by the guest operating system as write activities to previously uninitialized blocks are performed. The VMFS will return zeros to the guest operating system if it attempts to read blocks of data that it has not previously written to. This is true even in cases where information from a previous allocation (data “deleted” on the host, but not de-allocated on the thin pool) is available. The VMFS will not present stale data to the guest operating system when the virtual disk is created using the zeroedthick format.

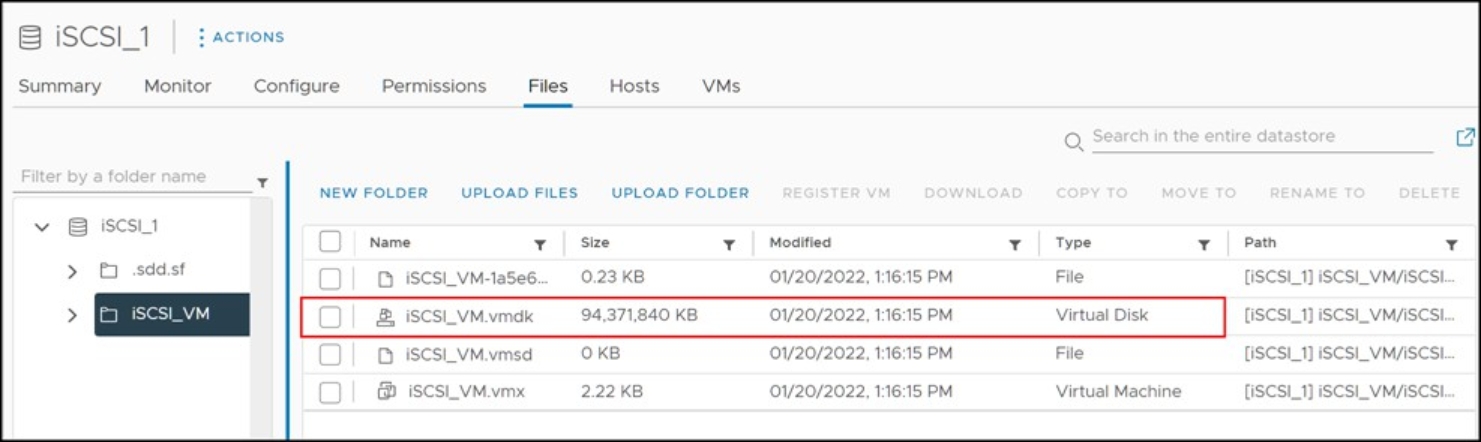

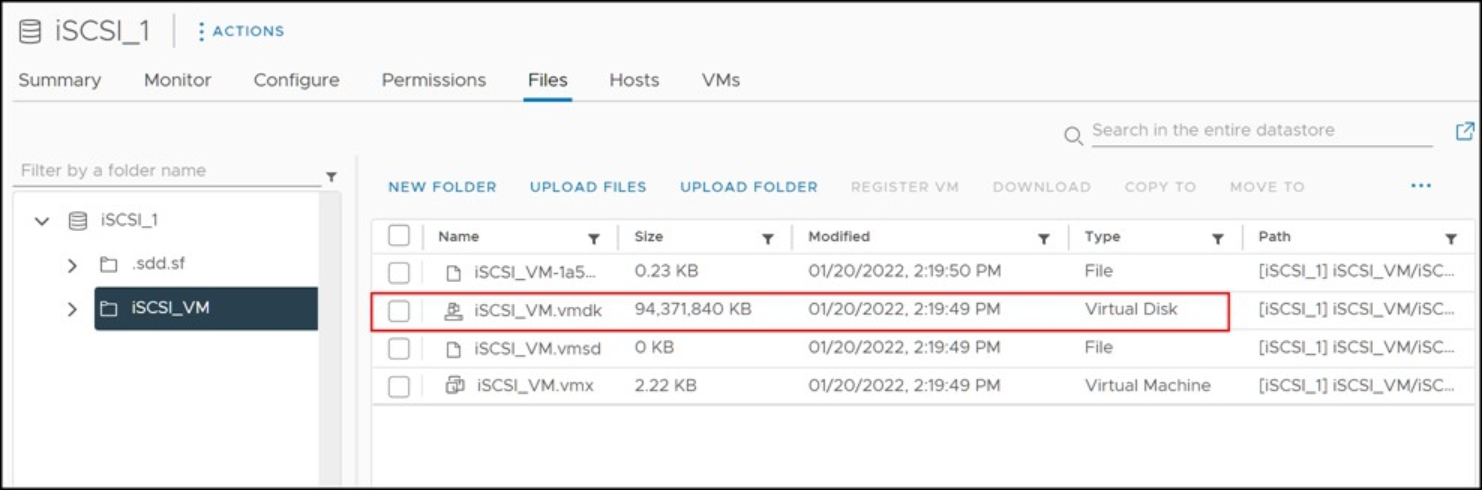

Since the VMFS volume reports the virtual disk as fully allocated, the risk of oversubscribing the datastore is essentially removed: oversubscription can then exist only at the array layer, not at both the VMware layer and the array layer. The virtual disks will not require more space on the VMFS volume, because their reserved size is static with this allocation mechanism; more space is needed only if additional virtual disks are added. For example, in Figure 109, a single VM resides on the 500 GB datastore iSCSI_1. The VM has a single 90 GB virtual disk that uses the zeroedthick allocation method. The datastore browser reports the virtual disk as consuming the full 90 GB.

Figure 109. Zeroedthick virtual disk allocation size as seen in a VMFS datastore browser
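The reservation behavior described above can be illustrated with a minimal sketch. The `Datastore` class and its method names are hypothetical and exist only for this example. The model tracks reserved space alone and ignores VMFS metadata overhead, so it is an approximation rather than a description of how VMFS accounts for space internally.

```python
from dataclasses import dataclass

GB = 1024 ** 3


@dataclass
class Datastore:
    """Hypothetical model of a VMFS datastore that tracks reserved space only."""

    capacity_bytes: int
    reserved_bytes: int = 0

    @property
    def free_bytes(self) -> int:
        return self.capacity_bytes - self.reserved_bytes

    def add_thick_disk(self, size_bytes: int) -> None:
        # A zeroedthick disk reserves its full size at creation, so a disk
        # that does not fit is refused instead of oversubscribing the datastore.
        if size_bytes > self.free_bytes:
            raise ValueError("not enough free space on the datastore")
        self.reserved_bytes += size_bytes


# The example from the text: a 500 GB datastore holding one 90 GB zeroedthick disk.
ds = Datastore(capacity_bytes=500 * GB)
ds.add_thick_disk(90 * GB)
print(ds.free_bytes // GB)  # 410
```

Because every thick disk is charged at its full size when it is created, the sum of the virtual disk sizes in this model can never exceed the datastore capacity.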

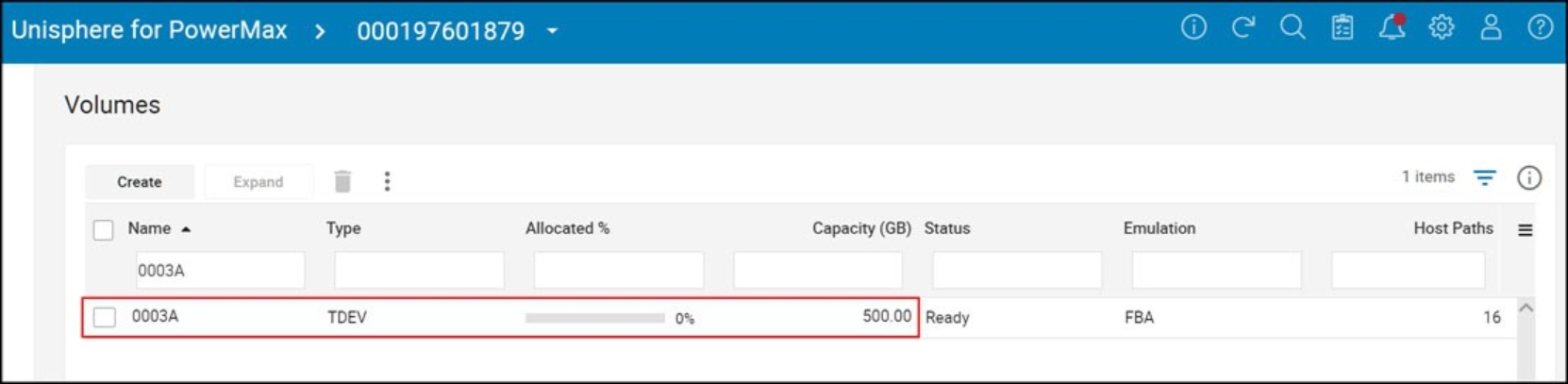

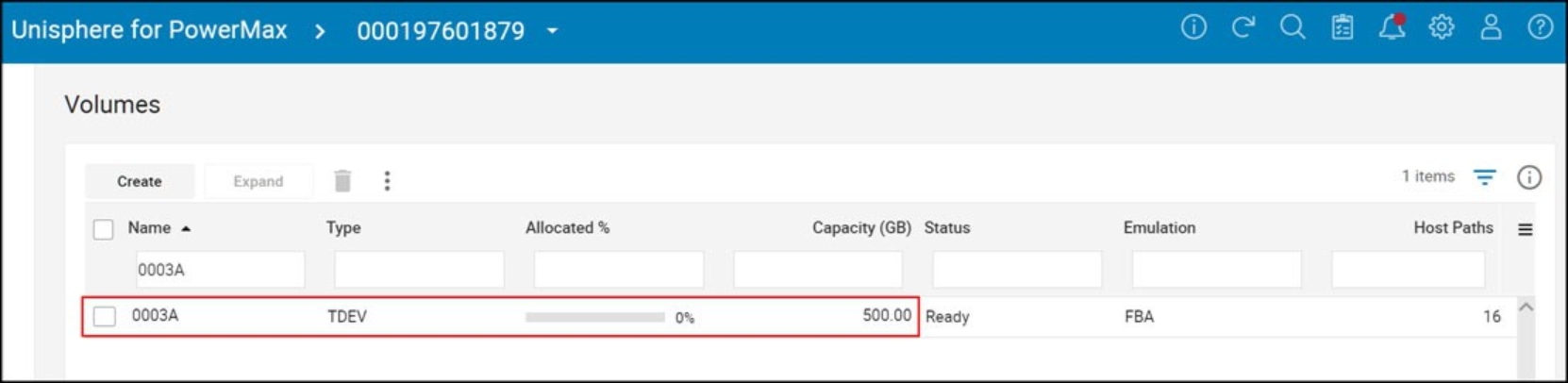

However, since the VMware kernel does not actually initialize unused blocks, the full 90 GB is not consumed on the thin device backing the datastore. In the example, the virtual disk resides on thin device 0003A and, as seen in Figure 110, no space is consumed.

Figure 110. Zeroedthick virtual disk allocation on a PowerMax thin device

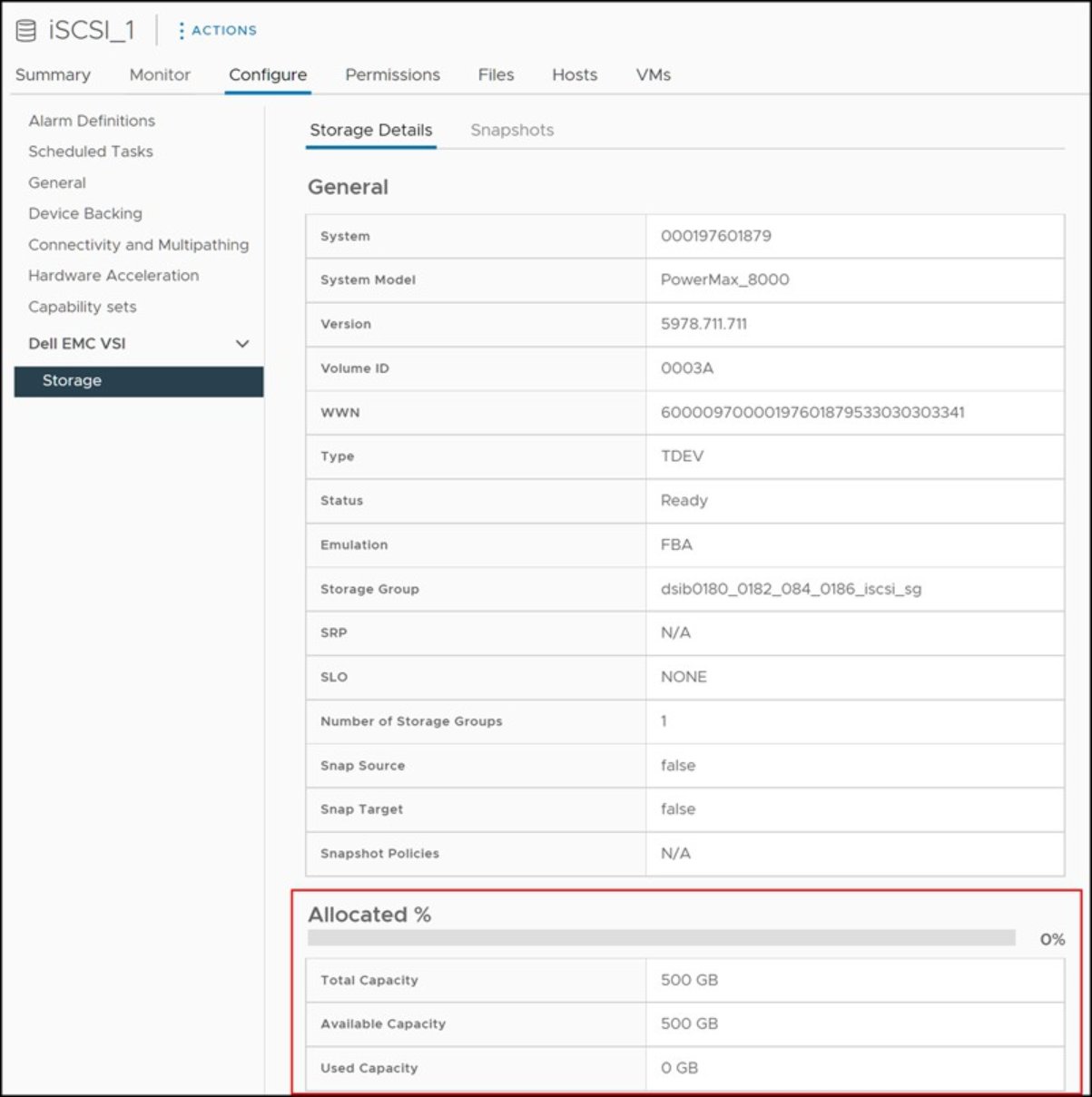

If the VMware administrator does not have access to Unisphere, VSI is recommended for viewing the relationship between the backend device and the datastore. The highlighted area in Figure 111 is pulled directly from the array and shows the current allocation, which is again zero.

Figure 111. Zeroedthick virtual disk allocation on PowerMax thin devices within VSI

Thin allocation format

Like zeroedthick, the thin allocation mechanism is also virtual provisioning-friendly, but, as will be explained in this section, it should be used with caution in conjunction with array virtual provisioning. Thin virtual disks increase the efficiency of storage utilization for virtualization environments by using only the amount of underlying storage resources needed for that virtual disk, just as zeroedthick does. But unlike zeroedthick, thin virtual disks do not reserve space on the VMFS volume, which allows more virtual disks per VMFS. Upon the initial provisioning of the virtual disk, the disk is provided with an allocation equal to one block size worth of storage from the datastore. As that space is filled, additional chunks of storage in multiples of the VMFS block size (1 MB) are allocated for the virtual disk, so that the underlying storage demand grows as the disk's size increases.
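The growth pattern described above can be approximated with a short sketch. The function name `thin_allocated_bytes` is hypothetical, and the model assumes that data is written contiguously, so that every written byte falls in the smallest possible number of 1 MB blocks. Real allocation depends on which regions of the disk the guest writes to.

```python
VMFS_BLOCK_BYTES = 1024 * 1024  # 1 MB VMFS block size, as stated in the text


def thin_allocated_bytes(written_bytes: int) -> int:
    """Approximate VMFS space allocated to a thin disk (simplified model)."""
    if written_bytes < 0:
        raise ValueError("written_bytes must be non-negative")
    # Round up to whole blocks using integer arithmetic.
    blocks = -(-written_bytes // VMFS_BLOCK_BYTES)
    # The text describes an initial allocation of one block at provisioning.
    return max(1, blocks) * VMFS_BLOCK_BYTES


# A disk that has received 1 MB plus one byte of data occupies two blocks.
print(thin_allocated_bytes(VMFS_BLOCK_BYTES + 1) // VMFS_BLOCK_BYTES)  # 2
```

Under this model the allocation depends only on the data written, never on the provisioned size of the virtual disk, which is why more thin disks can be placed on a datastore than their combined sizes would suggest.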

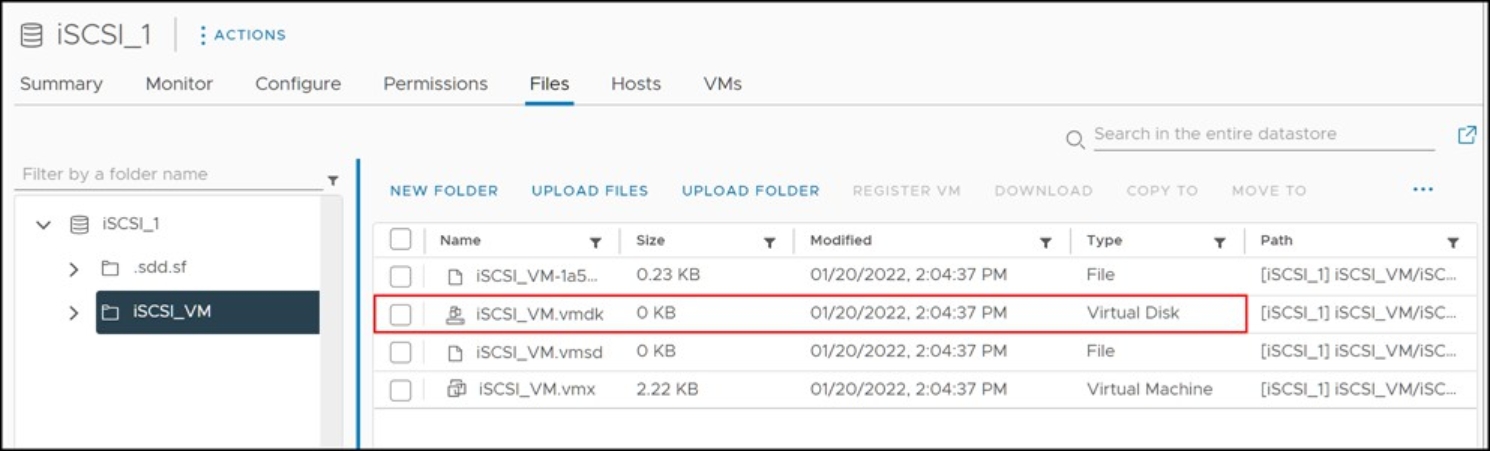

Using the same size VM as in Figure 109, a single 90 GB virtual disk is created that uses the thin allocation method rather than zeroedthick. Note in Figure 112 that the vmdk now reports a size of zero.

Figure 112. Thin virtual disk allocation size as seen in a VMFS datastore browser

As is the case with the zeroedthick allocation format, the VMware kernel does not actually initialize unused blocks for thin virtual disks, so the full 90 GB is neither reserved on the VMFS nor consumed on the thin device on the array. The virtual disk presented in Figure 112 resides on thin device 0003A and, as seen in Figure 113, consumes no space.

Figure 113. Thin virtual disk allocation on PowerMax thin devices

Eagerzeroedthick allocation format

With the eagerzeroedthick allocation mechanism (referred to as Thick Provision Eager Zeroed in the vSphere Client, or EZT), space required for the virtual disk is completely allocated and written to at creation time. This leads to a full reservation of space on the VMFS datastore and on the underlying PowerMax device. Accordingly, it takes longer to create disks in this format than to create other types of disks.[17]

A single 90 GB virtual disk that uses the eagerzeroedthick allocation method is shown in Figure 114. The datastore browser reports the virtual disk as consuming the entire 90 GB on the volume, just like zeroedthick and unlike thin.

Figure 114. Eagerzeroedthick virtual disk allocation size as seen in a VMFS datastore browser

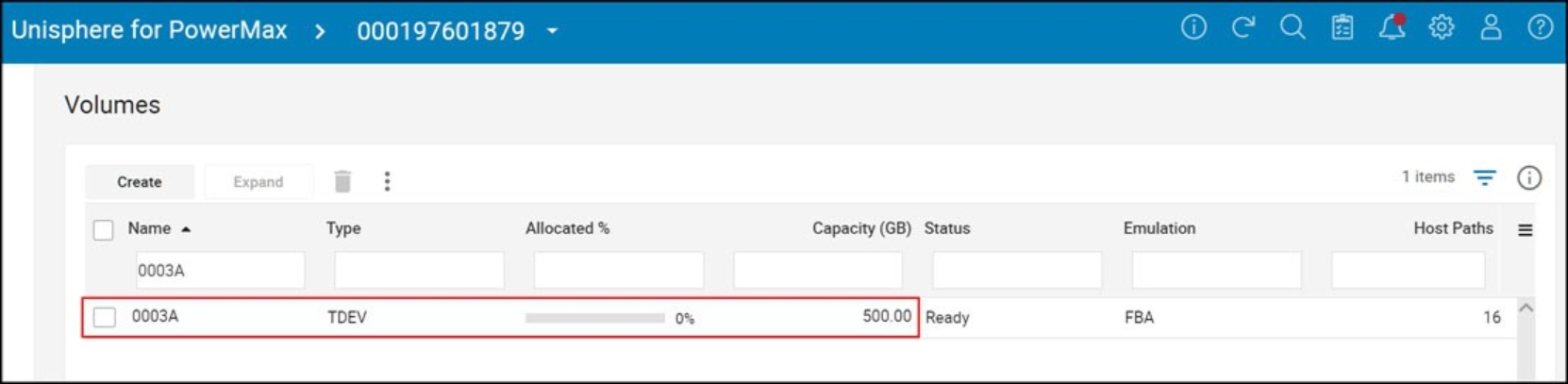

On most thin arrays, eagerzeroedthick causes the same amount of space to be reserved on the array as on VMware; however, the PowerMax does not work this way. The eagerzeroedthick virtual disk resides on thin device 0003A and, as highlighted in Figure 115, no space is consumed. The reason for this is addressed in the next sections.

Figure 115. Eagerzeroedthick virtual disk allocation on PowerMax thin devices
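The results shown in Figures 109 through 115 can be summarized in a small sketch. The function `space_at_creation` is hypothetical and encodes only what the figures report for a newly created virtual disk on a PowerMax thin device. It does not model what happens once the guest starts writing data.

```python
def space_at_creation(fmt: str, size_gb: int) -> tuple[int, int]:
    """Return (GB reported on VMFS, GB consumed on the PowerMax thin device)."""
    # Whether the format reserves its full size on the VMFS volume.
    reserves_on_vmfs = {
        "zeroedthick": True,
        "thin": False,
        "eagerzeroedthick": True,
    }
    if fmt not in reserves_on_vmfs:
        raise ValueError(f"unknown format: {fmt}")
    vmfs_gb = size_gb if reserves_on_vmfs[fmt] else 0
    # Per the figures, none of the formats consumes array space at creation.
    array_gb = 0
    return vmfs_gb, array_gb


for fmt in ("zeroedthick", "thin", "eagerzeroedthick"):
    vmfs_gb, array_gb = space_at_creation(fmt, 90)
    print(f"{fmt}: VMFS {vmfs_gb} GB, array {array_gb} GB")
```

The formats differ only at the VMware layer; on the array side, all three start from the same position.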

Eagerzeroedthick and VAAI

VMware vSphere offers a variety of VMware Storage APIs for Array Integration (VAAI) that provide the capability to offload specific storage operations to Dell PowerMax to increase both overall system performance and efficiency. One of these supported primitives is Block Zero (also referred to as “write same” since it uses the WRITE SAME SCSI operation (0x93)) which directly impacts eagerzeroedthick disks.

Using software to zero out a virtual disk is a slow and inefficient process. By passing off this task to the array, the typical back and forth from host to array is avoided and the full capability of the array can be utilized to accomplish the zeroing.

When an EZT virtual disk is created on VMware with a PowerMax backend, block zero is used to zero out the disk. As explained, most arrays then reserve space equal to the vmdk size; however, the PowerMax architecture is different and no space is actually reserved. This is because the PowerMax can be thought of as a single pool of storage, since new extents can be allocated from any thin pool on the array. Therefore, the only way that a thin device can run out of space is if the entire array runs out of space. It was deemed unnecessary, therefore, to reserve space on the PowerMax when using block zero. Despite this, the array does make a change to the extents to prime them for data. This means the array must still process the write same requests from VMware, so the creation time for an EZT vmdk is longer than for the other formats.

Block Zero is enabled by default and does not require any user intervention. If desired, Block Zero can be disabled or re-enabled on an ESXi host through the vSphere Client or command line utilities by altering the setting DataMover.HardwareAcceleratedInit in the ESXi host advanced settings under DataMover.
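As an illustration of the command line route, the following sketch builds the `esxcli` invocation that changes this setting. The helper name `block_zero_command` is hypothetical; the sketch only assembles the argument list and does not run anything. The resulting command is meant to be run in the ESXi shell of the host in question, and it should be verified against the esxcli reference for the ESXi version in use.

```python
import shlex


def block_zero_command(enable: bool) -> list[str]:
    """Build the esxcli arguments that enable (1) or disable (0) Block Zero."""
    return [
        "esxcli", "system", "settings", "advanced", "set",
        "--option", "/DataMover/HardwareAcceleratedInit",
        "--int-value", "1" if enable else "0",
    ]


print(shlex.join(block_zero_command(enable=False)))
# esxcli system settings advanced set --option /DataMover/HardwareAcceleratedInit --int-value 0
```

The current value can be checked with `esxcli system settings advanced list --option /DataMover/HardwareAcceleratedInit` before and after making a change.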

NVMeoF

NVMe over Fabrics, or NVMeoF, defines a common storage architecture for accessing the NVMe block storage protocol over a storage network. This means going from server to SAN, including a front-end interface to NVMe storage. A number of fabrics can carry NVMeoF, including FC, RoCE, and IP. Dell offers two implementations of NVMeoF, FC and IP, known as FC-NVMe and NVMe/TCP. NVMeoF devices do not use the VAAI Plugin; however, some of the VAAI SCSI commands have been ported over. WRITE SAME, or Block Zero, is implemented as the Write Zeroes command on NVMeoF, and Deallocate is used for UNMAP. These commands provide the same functionality as their SCSI counterparts. XCOPY is not yet ported.
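The correspondence between the SCSI primitives and their NVMeoF counterparts can be captured in a simple lookup. The dictionary and function names below are hypothetical and reflect only the mappings stated in this section.

```python
from typing import Optional

# SCSI VAAI primitive -> NVMeoF equivalent (None means not yet ported).
SCSI_TO_NVME = {
    "WRITE SAME": "Write Zeroes",
    "UNMAP": "Deallocate",
    "XCOPY": None,
}


def nvme_equivalent(scsi_primitive: str) -> Optional[str]:
    """Return the NVMeoF command for a SCSI primitive, or None if not ported."""
    return SCSI_TO_NVME[scsi_primitive]


for primitive in SCSI_TO_NVME:
    print(primitive, "->", nvme_equivalent(primitive) or "not ported")
```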