
Full copy tuning


By default, when the Full Copy primitive is supported, VMware instructs the storage array to copy data in 4 MB chunks. The PowerMax, however, can accept much larger sizes. The following two sections outline how to configure ESXi to send those larger sizes.

Default behavior

The default copy size can be increased from 4 MB to a maximum of 16 MB. This should be done for all PowerMax arrays.

The following commands show how to query the current copy (or transfer) size and then how to alter it.

# esxcfg-advcfg -g /DataMover/MaxHWTransferSize

Value of MaxHWTransferSize is 4096

# esxcfg-advcfg -s 16384 /DataMover/MaxHWTransferSize

Value of MaxHWTransferSize is 16384

Note that this change is made per ESX(i) server and can only be done through the CLI (there is no GUI option), as in Figure 164:

Figure 164. Changing the default copy size for Full Copy on the ESXi host

Alternatively, vSphere PowerCLI can be used at the vCenter level to change the parameter on all hosts as in Figure 165.

Figure 165. Changing the default copy size for Full Copy using vSphere PowerCLI
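The values passed to esxcfg-advcfg earlier in this section are expressed in KB: 4096 corresponds to the 4 MB default and 16384 to the 16 MB maximum. As a rough illustration only, the following Python sketch converts between the two units and estimates how many copy requests a 100 GB clone would need at each size. It assumes every request is sent at the full size, which real workloads do not guarantee, and the function names are hypothetical rather than part of any VMware or Dell tool.

```python
import math

KB_PER_MB = 1024
MAX_HW_TRANSFER_KB = 16384  # 16 MB ceiling stated in this section


def setting_kb(size_mb: int) -> int:
    """Return the MaxHWTransferSize value (in KB) for a size given in MB."""
    kb = size_mb * KB_PER_MB
    if not 0 < kb <= MAX_HW_TRANSFER_KB:
        raise ValueError(f"{size_mb} MB is outside the 1-16 MB range")
    return kb


def requests_needed(total_gb: int, size_mb: int) -> int:
    """Best-case number of copy requests if every request is full size."""
    return math.ceil(total_gb * 1024 / size_mb)


print(setting_kb(4))             # 4096
print(setting_kb(16))            # 16384
print(requests_needed(100, 4))   # 25600
print(requests_needed(100, 16))  # 6400
```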

Claim rule

In addition to maxing out the standard size to 16 MB, VMware is also capable of sending even larger sizes through a VAAI claim rule. These claim rules will take precedence over the MaxHWTransferSize and can be utilized on the PowerMax.

Claim rules in general have always been used to implement VAAI on vSphere. VAAI is a plug-in claim rule that says if a device is from a particular vendor, in this case a Dell PowerMax device, it should go through the VAAI filter. In other words if VAAI can be used it will be used.[1] Each block storage device managed by a VAAI plug-in needs two claim rules, one that specifies the hardware acceleration filter and another that specifies the hardware acceleration plug-in for the device. Within the storage claim rules, VMware has three columns that control the behavior allowing larger XCOPY sizes. They are:

- XCOPY Use Array Reported Values

- XCOPY Use Multiple Segments

- XCOPY Max Transfer Size

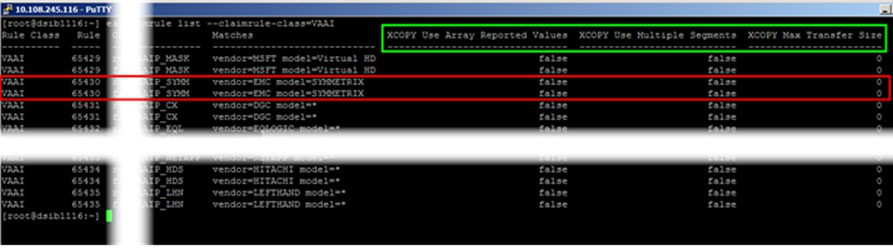

Figure 166 shows the output from vSphere with the columns and their default values.

Figure 166. VAAI claim rule class in vSphere 6.x

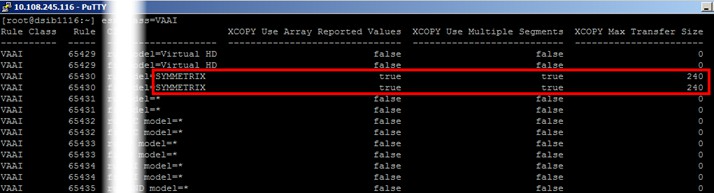

By altering the rules and changing these three settings, one can adjust the max transfer size up to a maximum of 240 MB for the PowerMax. Though the columns show in both the Filter and VAAI claim rule classes, only the VAAI class needs to be changed. The new required settings are:

- XCOPY Use Array Reported Values = true

- XCOPY Use Multiple Segments = true

- XCOPY Max Transfer Size = 240

It is not sufficient to simply change the transfer size value, because VMware requires that XCOPY Use Array Reported Values be set to true whenever the transfer size is set. When XCOPY Use Array Reported Values is set, VMware queries the array for the largest transfer size if the max transfer size is not set or if there is a problem with the value.

Unfortunately, the PowerMax advertises a value higher than 240 MB, so if there is an issue with the transfer size (for whatever reason) VMware will default to the MaxHWTransferSize, which is why it is also important to set that to 16 MB. In addition, for the PowerMax to accept up to 240 MB, the multiple segments value must be enabled, since a single segment is limited to 30 MB (there are 8 segments available, and 8 x 30 MB = 240 MB). Therefore, when modifying the claim rules, set the size to 240 MB and set both other columns to true.
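The arithmetic behind the 240 MB figure, and the fallback to MaxHWTransferSize, can be sketched in Python as follows. This is a simplified reading of the behavior described above, not VMware's actual logic: the function names are hypothetical, and passing None is an assumed stand-in for "the claim rule size is missing or unusable".

```python
SEGMENT_LIMIT_MB = 30  # per-segment limit given in the text
SEGMENT_COUNT = 8      # number of segments available, per the text


def array_limit_mb(use_multiple_segments: bool) -> int:
    """Largest XCOPY size the array accepts for the given segment setting."""
    segments = SEGMENT_COUNT if use_multiple_segments else 1
    return SEGMENT_LIMIT_MB * segments


def effective_transfer_mb(rule_size_mb, max_hw_transfer_kb: int) -> int:
    """ASSUMPTION: None models a missing or unusable claim rule size,
    in which case the host falls back to MaxHWTransferSize (in KB)."""
    if rule_size_mb is not None:
        return rule_size_mb
    return max_hw_transfer_kb // 1024


print(array_limit_mb(True))                # 240
print(array_limit_mb(False))               # 30
print(effective_transfer_mb(240, 16384))   # 240
print(effective_transfer_mb(None, 16384))  # 16
print(effective_transfer_mb(None, 4096))   # 4
```

The last two lines show why raising MaxHWTransferSize still matters when claim rules are in use: it determines the size used in the fallback case.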

The process to change the claim rules involves removing the existing VAAI rule class, adding it back with the new columns set, and then loading the rule (to be completed on all servers involved in testing). Following this a reboot of the server is needed. The commands to run on the ESXi host as root are the following. They will not return a response. Note that sometimes VMware alters the exact syntax so use the esxcli help if necessary.

esxcli storage core claimrule remove --rule 65430 --claimrule-class=VAAI

esxcli storage core claimrule load --claimrule-class=VAAI

esxcli storage core claimrule add -r 65430 -t vendor -V EMC -M SYMMETRIX -P VMW_VAAIP_SYMM -c VAAI -a -s -m 240
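When the same change has to be applied to several hosts, it can help to generate the command strings rather than retype them. The following Python sketch only assembles the three commands shown above, in the same order and with the same flags. It does not run them, and the helper name and its parameters are hypothetical.

```python
def vaai_rule_commands(rule: int = 65430,
                       plugin: str = "VMW_VAAIP_SYMM",
                       max_mb: int = 240) -> list:
    """Build the remove / load / add commands as plain strings."""
    base = "esxcli storage core claimrule"
    return [
        f"{base} remove --rule {rule} --claimrule-class=VAAI",
        f"{base} load --claimrule-class=VAAI",
        # -a, -s and -m are the three XCOPY columns discussed above.
        f"{base} add -r {rule} -t vendor -V EMC -M SYMMETRIX "
        f"-P {plugin} -c VAAI -a -s -m {max_mb}",
    ]


for command in vaai_rule_commands():
    print(command)
```

How the strings are delivered to each host (SSH, a configuration management tool, and so on) is left open here, and the exact syntax should still be confirmed against the esxcli help on the target ESXi release.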

To verify the changes are set before reboot, list the claim rules as in Figure 167 below:

esxcli storage core claimrule list --claimrule-class=VAAI

Figure 167. Updated VAAI claim rule for XCOPY

Once the reboot is complete, re-run the command to ensure the changes appear.

It is important to remember that the new claim rule does not impact how VAAI functionality works. For instance, the following still hold true:

- It will revert to software copy when it cannot use XCOPY

- It can still be enabled/disabled through the GUI or CLI

- It will use the DataMover/MaxHWTransferSize when the claim rules are not changed or a non-PowerMax array is being used

The maximum value is just that, a maximum. That is the largest extent VMware can send but does not guarantee all extents will be that size. VMware is capable of sending extents anywhere up to 240 MB depending on the fragmentation of the vmdks.
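To illustrate why the maximum is only an upper bound, the following Python sketch splits a set of contiguous runs of data into extents no larger than a given maximum. The run lengths are invented for the example, and the splitting rule is a simplification rather than VMware's actual algorithm.

```python
def extent_sizes(run_mb: int, max_extent_mb: int) -> list:
    """Split one contiguous run into extents of at most max_extent_mb."""
    sizes = []
    remaining = run_mb
    while remaining > 0:
        size = min(remaining, max_extent_mb)
        sizes.append(size)
        remaining -= size
    return sizes


def count_extents(runs_mb: list, max_extent_mb: int) -> int:
    """Total number of extents needed to copy every run."""
    return sum(len(extent_sizes(run, max_extent_mb)) for run in runs_mb)


# Hypothetical vmdk layout: contiguous runs of 500, 100, 7 and 1 MB.
runs = [500, 100, 7, 1]
print(extent_sizes(500, 240))    # [240, 240, 20]
print(count_extents(runs, 240))  # 6
print(count_extents(runs, 16))   # 41
print(count_extents(runs, 4))    # 153
```

Only the large contiguous runs benefit from the higher limit. The 7 MB and 1 MB runs produce small extents regardless of the configured maximum, which is why a fragmented vmdk sees less improvement.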

vSphere 7+ and VMW_VAAIP_T10

VMware has begun the process of moving away from the custom vendor VAAI plug-ins (VMW_VAAIP_SYMM) enumerated in the previous section. To that end, VMware has always offered a generic plug-in for VAAI, named VMW_VAAIP_T10. This generic plug-in works the same way the custom plug-in does. The idea is to eventually deprecate all vendor custom plug-ins. While the current implementation enumerated above using the custom plug-in is still supported, for new servers that do not already have the claim rules set up for PowerMax, Dell recommends using the generic plug-in, as VMware will eventually deprecate the Dell custom one. The steps are similar to those for the custom plug-in and are as follows:

First, the existing custom vendor rule must be removed. Because VMware suggests using claim rule number 914, remove both the Filter and VAAI classes. This is different from what was done for the custom plug-in, where the Filter class did not need to be removed. Note that if an attempt is made to add claim rule 914 before 65430 is removed, VMware returns an error indicating that 914 is a duplicate of 65430.

esxcli storage core claimrule remove --rule 65430 --claimrule-class=Filter

esxcli storage core claimrule remove --rule 65430 --claimrule-class=VAAI

Reload the filter and rule before adding the new ones to avoid duplication.

esxcli storage core claimrule load --claimrule-class=Filter

esxcli storage core claimrule load --claimrule-class=VAAI

Now add the new filter and rule.

esxcli storage core claimrule add -r 914 -t vendor -V EMC -M SYMMETRIX -P VAAI_FILTER -c Filter

esxcli storage core claimrule add -r 914 -t vendor -V EMC -M SYMMETRIX -P VMW_VAAIP_T10 -c VAAI -a -s -m 240

Reboot the ESXi host for the changes to take effect. Once the host comes back up, verify the changes. Be aware that because rule 65430 is still part of the ESXi build, VMware will add it back after the reboot; however, it is only listed as a "file" and not also "runtime" as is the new generic claim rule. Therefore, it cannot claim any devices.

The new rule is seen in the red box, while the old rule is in blue in Figure 168.

esxcli storage core claimrule list --claimrule-class=VAAI

Figure 168. Use of the generic VAAI T10 rule
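The distinction between "file" and "runtime" entries can be checked mechanically once the listing has been captured. The Python sketch below works on rows that have already been parsed into dictionaries. The field names are hypothetical, and it does not parse real esxcli output, whose layout can vary between releases.

```python
def active_rules(rows: list) -> list:
    """Return (rule, plugin) pairs that appear with class 'runtime'.

    Per the text above, a rule listed only as 'file' cannot claim devices.
    """
    return sorted({(row["rule"], row["plugin"])
                   for row in rows if row["class"] == "runtime"})


# Hypothetical rows mirroring the post-reboot state described above.
rows = [
    {"rule": 914, "class": "runtime", "plugin": "VMW_VAAIP_T10"},
    {"rule": 914, "class": "file", "plugin": "VMW_VAAIP_T10"},
    {"rule": 65430, "class": "file", "plugin": "VMW_VAAIP_SYMM"},
]

print(active_rules(rows))  # [(914, 'VMW_VAAIP_T10')]
```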

Note: For Storage vMotion operations, VMware will use a maximum of 64 MB for the extent size. This is a VMware restriction and has nothing to do with the array. No change to the claim rules is required. Remember that if VMware can use an extent size up to 240 MB it will; if not, it will use something smaller. In the case of Storage vMotion, it will not exceed 64 MB.

Thin vmdks

One area where the increased extent size has made no difference in the past is with thin vmdks, as VMware always used 1 MB extents. In vSphere 6 and higher, however, VMware tries to consolidate extents and therefore, when cloning, will use the largest extent it can consolidate for each copy IO (software or hardware). This new behavior has resulted in two benefits on the PowerMax. The first is the obvious one: any task requiring XCOPY on thin vmdks will be faster than on previous vSphere versions. The second benefit is somewhat unexpected: a clone of a thin vmdk now behaves like a thick vmdk in terms of further cloning. In other words, if one clones a thin vmdk VM and then uses that clone as a template for further clones, those further clones will be created at a speed almost equal to a thick vmdk VM clone. This is the case whether that initial clone is created with or without XCOPY.

The operation is essentially a defragmentation of the thin vmdk. It is critical, therefore, if a thin vmdk VM is going to be a template for other VMs, that the VM be cloned to a template rather than converted to a template. This will ensure all additional deployments from the template will benefit from the consolidation of extents.

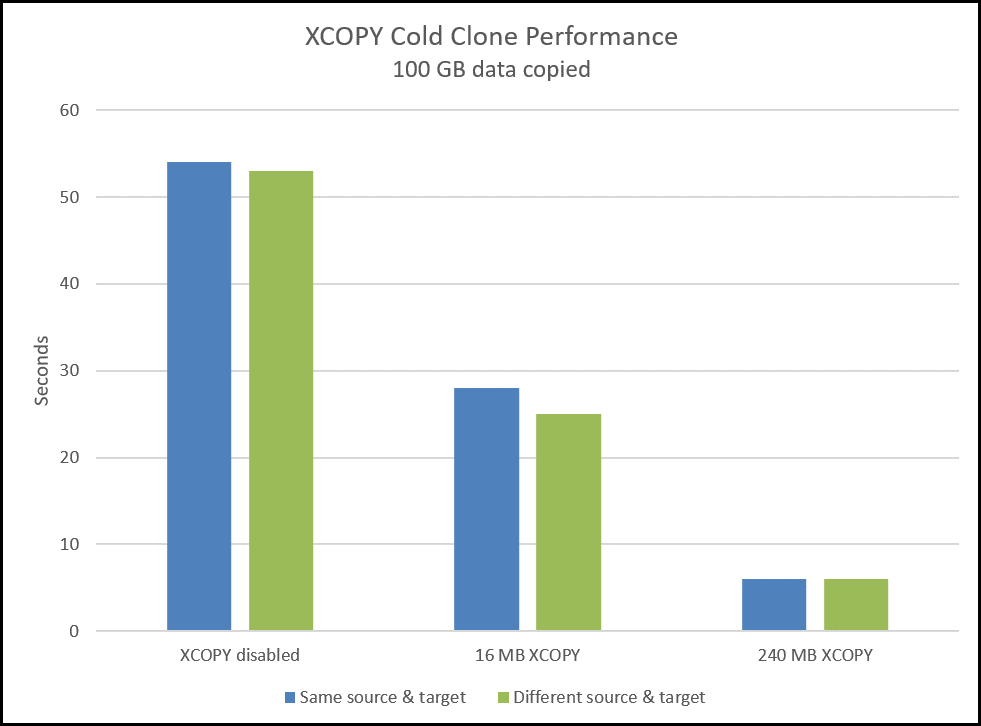

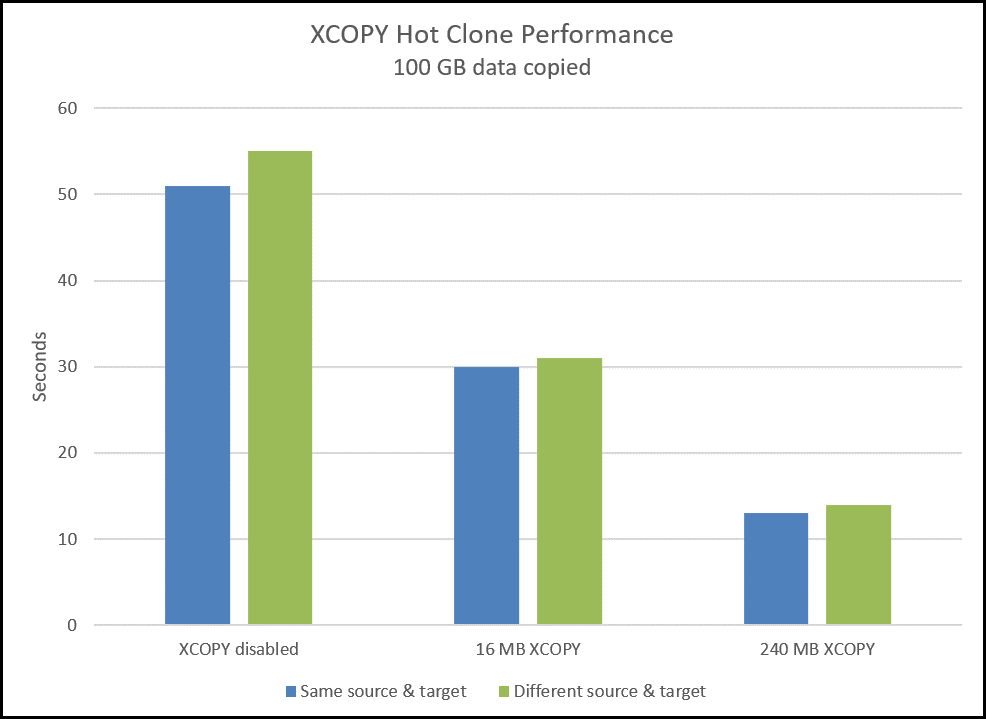

Claim rule results

The following graphs, Figure 169 and Figure 170, demonstrate the effectiveness of using claim rules for XCOPY when cloning 100 GB of data.

Figure 169. XCOPY cold clone performance on the PowerMax

Figure 170. XCOPY hot clone performance on the PowerMax

For more detail and additional test results for XCOPY please refer to the white paper Using VMware Storage APIs for Array Integration with Dell PowerMax.

SRDF and XCOPY

In order to maintain consistency of SRDF pairs, Dell implements synchronous XCOPY for any SRDF target device using SRDF/S or SRDF/Metro. The performance of the synchronous implementation is, by its nature, more akin to VMware's software copy than Dell’s asynchronous implementation for SRDF/A or non-SRDF targets. As such, while Dell synchronous copy will generally outpace VMware's host copy, the improvement will not match that seen with asynchronous copy. It is, however, more desirable to offload the copy to the array to free the host and network resources for more important activities. If the time to complete an XCOPY task is more critical than the resources saved on the host, XCOPY can be disabled for SRDF devices on the array by Dell.[2]

Caveats for using hardware-accelerated Full Copy

The following are some general caveats that Dell provides when using this feature:

- VMware publishes a KB article (1021976) which details when Full Copy will not be issued. Such cases include VMs that have snapshots, and cloning or moving VMs between datastores with different block sizes, among others.

- One case not included is when the configuration parameter disk.EnableUUID is set to TRUE on Windows VMs. VMware Tools will set this parameter so be aware this will prevent XCOPY.

- Always set MaxHWTransferSize to 16 MB and use claim rules.

- Limit the number of simultaneous clones using Full Copy to three or four. This is not a strict limitation, but Dell believes this will ensure the best performance when offloading the copy process.

- A PowerMax device that has SAN Copy, TimeFinder and certain RecoverPoint sessions will not support Full Copy. Any cloning or Storage vMotion operation run on datastores backed by these volumes will automatically be diverted to the default VMware software copy. Note that the vSphere Client has no knowledge of these sessions and as such the "Hardware Accelerated" column in the vSphere Client will still indicate "Supported" for these devices or datastores.

- Full Copy is not supported for use with Open Replicator. VMware will revert to software copy in these cases.

- Full Copy is not supported for use with Federated Live Migration (FLM) target devices.

- Although SRDF is supported with Full Copy, certain RDF operations, such as an RDF failover, will be blocked until the PowerMax has completed copying the data from a clone or Storage vMotion.

- When the available capacity of a Storage Resource Pool (SRP) reaches the Reserved Capacity value, active or new Full Copy requests can take a long time to complete and may cause VMware tasks to timeout. For workarounds and resolutions please see Dell KB article 503348.

- Full Copy cannot be used between devices that are presented to a host using different initiators. For example, take the following scenario:

  - A host has four initiators

  - The user creates two initiator groups, each with two initiators

  - The user creates two storage groups and places the source device in one, the target device in the other

  - The user creates a single port group

  - The user creates two masking views, one with the source device storage group and one of the initiator groups, and the other masking view with the other initiator group and the storage group with the target device.

  - The user attempts a Storage vMotion of a VM on the source device to the target device. Full Copy is rejected, and VMware reverts to software copy.
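The initiator scenario in the last caveat can be modeled in a few lines of Python. This is a sketch under stated assumptions: the MaskingView class and the helper functions are hypothetical, and "different initiators" is interpreted here as the two devices not being visible through the same set of initiators. The array's actual check may be more nuanced.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaskingView:
    name: str
    initiators: frozenset  # initiators in the view's initiator group
    devices: frozenset     # devices in the view's storage group


def initiators_for(device: str, views: list) -> frozenset:
    """Collect every initiator through which a device is presented."""
    found = set()
    for view in views:
        if device in view.devices:
            found |= view.initiators
    return frozenset(found)


def full_copy_possible(source: str, target: str, views: list) -> bool:
    """ASSUMPTION: Full Copy needs both devices on the same initiators."""
    return initiators_for(source, views) == initiators_for(target, views)


# The scenario from the caveat: four initiators split across two views.
split_views = [
    MaskingView("mv_source", frozenset({"i1", "i2"}), frozenset({"src"})),
    MaskingView("mv_target", frozenset({"i3", "i4"}), frozenset({"tgt"})),
]
# A single view presenting both devices through all four initiators.
shared_views = [
    MaskingView("mv_all", frozenset({"i1", "i2", "i3", "i4"}),
                frozenset({"src", "tgt"})),
]

print(full_copy_possible("src", "tgt", split_views))   # False
print(full_copy_possible("src", "tgt", shared_views))  # True
```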

SRDF/Metro specific

The following are caveats for SRDF/Metro when using Full Copy. In SRDF/Metro configurations, the use of Full Copy depends on whether the site is the one supplying the external WWN identity.

- Full Copy will not be used between a non-SRDF/Metro device and an SRDF/Metro device when the device WWN is not the same as the external device WWN. Typically, but not always, this means the non-biased site (recall even when using witness, there is a bias site). In such cases Full Copy is only supported when operations are between SRDF/Metro devices or within a single SRDF/Metro device; otherwise software copy is used.

- As Full Copy is a synchronous process on SRDF/Metro devices, which ensures consistency, performance will be closer to software copy than asynchronous copy.

- While an SRDF/Metro pair is in a suspended state, Full Copy reverts to asynchronous copy for the target R1. It is important to remember, however, that Full Copy is still bound by the restriction that the copy must take place on the same array. For example, assume SRDF/Metro pair AA, BB is on array 001, 002. In this configuration device AA is the R1 on 001 and its WWN is the external identity for device BB, the R2 on 002. If the pair is suspended and the bias is switched such that BB becomes the R1, the external identity still remains that of AA from array 001 (i.e. it appears as a device from 001). The device, however, is presented from array 002 and therefore Full Copy will only be used in operations within the device itself or between devices on array 002.

Data reduction specific

The following are caveats when using Full Copy with devices in storage groups with data reduction enabled.

- When Full Copy is used on a device in a storage group with data reduction enabled, or between storage groups with data reduction enabled, Full Copy will uncompress the data during the copy and then re-compress on the target.

- When Full Copy is used on a device in a storage group with data reduction enabled to a storage group without data reduction enabled, Full Copy will uncompress the data during the copy and leave the data uncompressed on the target.

- When Full Copy is used on a device in a storage group without data reduction enabled to a storage group with data reduction enabled, the data will remain uncompressed on the target and be subject to the normal data reduction algorithms.

- As Full Copy is a background process, deduplication of data, if any, may be delayed until after all tracks have been copied. Full clone copies have been shown to deduplicate at close to 100% in enabled storage groups.

eNAS/PowerMax File and VAAI

Dell offers Embedded NAS (eNAS) guest OS (GOS) on the PowerMax 2000/8000 and Embedded File GOS on the PowerMax 2500/8500. NAS on the PowerMax enables customers to leverage vital Tier 1 features including SLO-based provisioning, Host I/O Limits, and data reduction technologies. eNAS consists of virtual instances of VNX Software Data Movers and Control Stations that run on the PowerMax, while File is a new implementation that is being utilized across different arrays in the portfolio.

Unlike block storage, file storage does not support VAAI as part of the array code. All NFS file systems, regardless of vendor, require a NAS plug-in. Fortunately, the plug-in code is generic so that the same plug-in used for the Dell Unity platform can also be used for other arrays like PowerMax and PowerStore. Note that the plug-in works with either NFS version 3 or 4.1.

The VAAI features for NAS are not exactly equivalent to those available on block, though the idea is exactly the same: to offload tasks to the array. Features on NAS include NFS Clone Offload, extended stats, space reservations, and snap of a snap. In essence the NFS clone offload works much the same way as XCOPY as it offloads ESXi clone operations to the array.

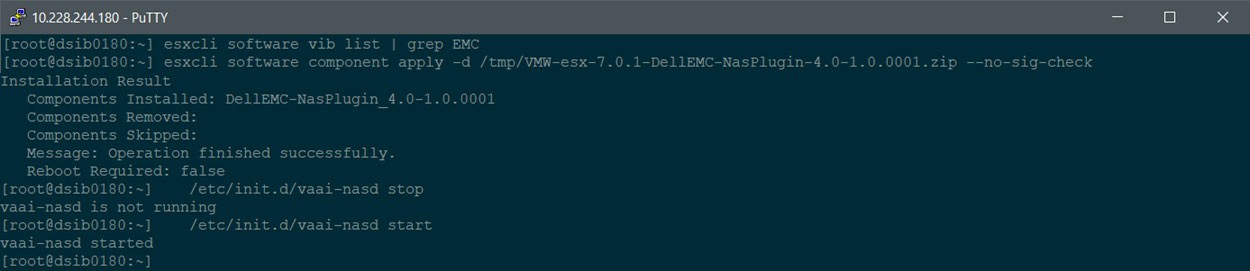

There are three different versions of the plug-in, depending on which ESXi version is running: 3.0.1 for 6.7+, 3.0.2 for 7.0+, and 4.0.1 for 7.0.1+. Version 4.0.1 is the only one that does not require a reboot after installation. To install the plug-in, download the NAS plug-in from Dell support. The plug-in is delivered as a VMware Installation Bundle (vib). The plug-in can be installed through VMware vCenter Update Manager or through the CLI as demonstrated in Figure 171. Be sure to check for any existing NAS plug-in, as it must be removed before installing the new one. If installing version 4.0.1, stop and start the vaai-nasd service as below; for the other versions, reboot the host.

Figure 171. Installation of the NAS plug-in for VAAI

Note: VAAI with eNAS is only supported on NFS version 3 and not NFS version 4.1. VAAI supports both 3 and 4.1 with PowerMax File.
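The plug-in version rules above can be expressed as a small lookup. The Python sketch below assumes that the newest plug-in whose minimum ESXi version is satisfied should be chosen, which is one reading of the "6.7+, 7.0+, 7.0.1+" list. The function names and the tuple representation of ESXi versions are hypothetical.

```python
# (minimum ESXi version, plug-in version), newest first, from the text above.
PLUGIN_BY_MIN_ESXI = [
    ((7, 0, 1), "4.0.1"),
    ((7, 0, 0), "3.0.2"),
    ((6, 7, 0), "3.0.1"),
]


def pick_plugin(esxi_version: tuple) -> str:
    """Return the newest plug-in whose minimum ESXi version is met."""
    for minimum, plugin in PLUGIN_BY_MIN_ESXI:
        if esxi_version >= minimum:
            return plugin
    raise ValueError(f"No NAS plug-in listed for ESXi {esxi_version}")


def needs_reboot(plugin: str) -> bool:
    """Only 4.0.1 avoids a reboot (restart the vaai-nasd service instead)."""
    return plugin != "4.0.1"


for version in [(6, 7, 0), (7, 0, 0), (7, 0, 1)]:
    plugin = pick_plugin(version)
    print(version, plugin, "reboot" if needs_reboot(plugin) else "restart vaai-nasd")
```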

Once installed, the vSphere Client will show that VAAI is supported on the NFS datastores as in Figure 172. If the plug-in is not installed, the column will show as "Unknown".

Figure 172. NFS datastore with VAAI support

Extended stats

As mentioned, one of the other VAAI integrations for NFS is extended stats. Using vmkfstools the user can display the disk utilization for virtual machine disks configured on NFS datastores. The extendedstat argument provides disk details for the virtual disks. The command reports, in bytes, virtual disk size, used space, and unshared space. An example is shown in Figure 173.

Figure 173. VAAI NFS extended stats feature

Nested clones

Another VAAI capability on NFS is the ability to create a thin-clone virtual machine from an existing thin-clone virtual machine. This functionality is referred to as nested clones. The functionality uses the snapshot architecture to instantaneously create lightweight virtual machine clones. VMware products such as Horizon can initiate these nested snapshots. The clone operations are off-loaded to the array. To take advantage of this feature with eNAS, enable the nested clone support property when you create the file system for eNAS. It cannot be changed after the file system is created. File enables the capability automatically upon file system creation.

VAAI and Dell PowerMaxOS version support

Dell's implementation of VAAI is done within the PowerMaxOS software. As with any software, bugs are an inevitable byproduct. While Dell makes every effort to test each use case for VAAI, it is impossible to mimic every customer environment and every type of use of the VAAI primitives. To limit any detrimental impact our customers might experience with VAAI, Table 2 is provided. It includes all current PowerMaxOS releases and any E Packs that must be applied at that level. Using this table will ensure that a customer's array is at the proper level for VAAI interaction.

Note: This table does not include HYPERMAX OS; however, those supported versions may be found in the Dell white paper Using VMware vSphere Storage APIs for Array Integration with Dell PowerMax.

Table 2. PowerMaxOS and VAAI support

| PowerMaxOS Code Level | VAAI primitives supported | Minimum version of vSphere required | Important Notes |
| --- | --- | --- | --- |
| PowerMaxOS 10.0.1, 10.1.0 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP, vSphere 7.0 for FC-NVMe, vSphere 7.0 U3 for NVMe/TCP | NVMeoF does not support the Full Copy (XCOPY) primitive. |
| 5978.711.711 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP, vSphere 7.0 for FC-NVMe | NVMeoF does not support Full Copy (XCOPY) or Block Zero (WRITE SAME) primitives. |
| 5978 Q3 2020 (5978.669.669) | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP, vSphere 7.0 for FC-NVMe | NVMeoF does not support Full Copy (XCOPY) or Block Zero (WRITE SAME) primitives. |
| 5978.479.479 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP, vSphere 7.0 for FC-NVMe | NVMeoF does not support Full Copy (XCOPY) or Block Zero (WRITE SAME) primitives. |
| 5978.444.444 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP | First release to support VAAI metrics. |
| 5978.221.221 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP | |
| 5978.144.144 | ATS, Block Zero, Full Copy, UNMAP | vSphere 6.7 for fixed UNMAP | This is the first PowerMaxOS release for the PowerMax platform. |

Note: Be aware that this table only displays information related to using vSphere with VAAI and PowerMaxOS. It is not making any statements about general vSphere support with PowerMaxOS. For general support information consult E-Lab Navigator. It may also not contain every release available.

ODX and VMware

The Offloaded Data Transfer (ODX) feature of Windows Server 2012 and Windows 8 is a Microsoft technology that, like Full Copy, allows the offloading of host activity to the array. ODX can be utilized in VMware environments when the Guest OS is running one of the two aforementioned Microsoft operating systems and the virtual machine is presented with physical RDMs. In such an environment, ODX will be utilized for certain host activity, such as copying files within or between the physical RDMs. ODX will not be utilized on virtual disks that are part of the VM. ODX also requires the use of Fixed path. PP/VE or NMP will not work, and in fact it is recommended that ODX be disabled on Windows if using that multipathing software.[3] To take full advantage of ODX, the allocation unit on the drives backed by physical RDMs should be 64 KB.

Note: There is no user interface to indicate ODX is being used.

[1] Various situations exist - detailed in this paper - where primitives like XCOPY will not be used.

[2] For more detail on XCOPY performance considerations, please see Dell KB 533648 https://www.dell.com/support/kbdoc/en-us/000014788?lang=en.

[3] See Microsoft TechNote http://technet.microsoft.com/en-us/library/jj200627.