PowerMax 2500 and 8500 Dynamic Fabric—A High-Performance Clustered Architecture for Enterprise Storage

Tue, 18 Apr 2023 16:15:55 -0000


Introduced in 2022, the PowerMax 2500 and 8500 systems represent the next generation of the PowerMax family. At their core, the PowerMax 2500 and 8500 have a high-performing cluster architecture solely dedicated to processing the vast amounts of storage operations and storage data from modern applications. The four key components of the PowerMax cluster architecture are the compute nodes; the storage Dynamic Media Enclosures (DMEs); the Dynamic Fabric that ties the elements together; and the internal system software, PowerMaxOS 10. The PowerMax 2500 and 8500 cluster architecture provides the following benefits natively without the need for added hardware, software, or licensing:

- High reliability and fault tolerance: All the nodes in the PowerMax can access application I/O and supply resources to process it, making it a true shared everything, active/active system. If a node fails, no failover operation is needed, unlike in active/passive systems; PowerMaxOS 10 automatically redirects the failed node’s I/O to the other nodes. Because no failover is required, there is never a need to configure a quorum device to guard against issues such as “split brain.”

- Simplicity: All the compute nodes and DMEs in a PowerMax system appear to the user as a single entity. A user does not have to log in to separate individual nodes to manage or configure the PowerMax system. The management of the entire system is performed using the HTML5-based Unisphere for PowerMax UI and/or REST API. These tools provide an easy-to-use interface for management actions and monitoring operations that are crucial to an organization’s needs.

- Fully autonomous operations: PowerMaxOS 10 manages all system resources autonomously—continuously running diagnostics, and scrubbing and moving data within the system for maximum performance and availability 24x7. There is no need to manually configure load balancing between the nodes because PowerMaxOS 10 automatically decides which node will process the incoming I/O data based on locality and resource availability.

- Predictable performance and scalability: As compute requirements increase, you can “scale out” the PowerMax system by adding nodes nondisruptively to supply a predictable linear increase in performance and computing power. As capacity requirements increase, you can “scale up” the system granularly, using single-drive increments. This allows PowerMax to scale based on application requirements rather than system architectural requirements. The architecture also enables holistic software scalability; the PowerMax can support 64K host-presentable devices, more than 65 million snapshots, and 2,048 remote replication groups with other systems.
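To make the idea of automatic node selection and redirection more concrete, here is a minimal Python sketch. It is not how PowerMaxOS 10 is implemented; the `Node` class, the `load` and `cached_tracks` fields, and the `pick_node` policy are all hypothetical names chosen for illustration. The sketch only shows the general pattern described above: prefer a node with locality to the data, break ties on resource availability, and skip failed nodes without any failover step.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    healthy: bool = True
    load: float = 0.0                        # hypothetical utilization, 0.0 to 1.0
    cached_tracks: frozenset = frozenset()   # hypothetical: tracks held in this node's memory


def pick_node(nodes, track):
    """Return the healthy node that should service an I/O for `track`.

    Hypothetical policy: prefer nodes that already hold the track (locality),
    then prefer the least-loaded node (resource availability).
    """
    candidates = [n for n in nodes if n.healthy]
    if not candidates:
        raise RuntimeError("no healthy nodes available")
    # False sorts before True, so nodes holding the track win first.
    return min(candidates, key=lambda n: (track not in n.cached_tracks, n.load))


nodes = [
    Node("node-1", load=0.2, cached_tracks=frozenset({7})),
    Node("node-2", load=0.1),
    Node("node-3", load=0.5),
]
first_choice = pick_node(nodes, track=7)      # node-1 holds track 7
nodes[0].healthy = False                      # simulate a node failure
after_failure = pick_node(nodes, track=7)     # I/O is simply routed elsewhere
```

In this toy model, a node failure changes nothing except the candidate list, which mirrors the point that an active/active design needs no separate failover operation.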

The compute nodes, DMEs, and PowerMaxOS 10 software supply the foundation for a true active/active, shared everything solution for the demanding requirements of enterprise data centers. The glue that ties all these components together and allows PowerMax to deliver these benefits to customers is the internal Dynamic Fabric.

PowerMax Dynamic Fabric

Both the PowerMax 2500 and 8500 use full end-to-end, internal Non-Volatile Memory Express over Fabrics (NVMe-oF) topologies between the compute nodes and DMEs. These NVMe-oF topologies are referred to as the PowerMax Dynamic Fabric.

Core elements

The Dynamic Fabric turns the compute and backend storage elements into individual independent endpoints on a large internal storage fabric. Each endpoint in this fabric is dual ported, with each port connected to a physically isolated fabric (Fabric A or Fabric B) for redundancy. These individual compute and storage endpoints can be placed into shared resource pools, disaggregating the storage and compute in the system. In this architecture, all compute node endpoints can access all shared memory in all the other compute nodes using the system’s high-speed RDMA protocol. Further, all compute node endpoints can access all storage endpoints and SSDs in the DMEs using the system’s high-speed NVMe-oF protocol. This access to all shared memory and all storage endpoints and DME SSDs creates a true active/active, shared everything system architecture. This system disaggregation decouples the compute and storage so that they can be scaled and provisioned independently of each other to meet application requirements rather than adhering to strict system architecture requirements. To allow for this shared everything, decoupled architecture, the Dynamic Fabric provides the system with these key elements:

- Ability to share memory access between the nodes

- High-speed interconnection between cluster components

- End-to-end data consistency checking based on SCSI T10-DIF Protection Information that protects against erroneous data transmission and data corruption
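The dual-ported, dual-fabric layout described above can be modeled in a few lines of Python. This is a conceptual sketch only: the `Endpoint` class and the `can_reach` and `fully_connected` helpers are hypothetical names, and the model ignores switches, cabling, and protocol details. It simply shows why every endpoint can still reach every other endpoint when one of the two isolated fabrics is unavailable.

```python
from dataclasses import dataclass

FABRICS = ("A", "B")  # the two physically isolated fabrics


@dataclass
class Endpoint:
    name: str
    kind: str                 # "compute" or "storage"
    ports: tuple = FABRICS    # dual ported: one port on each fabric


def can_reach(src, dst, failed_fabrics=()):
    """True if src and dst share at least one fabric that has not failed."""
    shared = set(src.ports) & set(dst.ports)
    return bool(shared - set(failed_fabrics))


def fully_connected(endpoints, failed_fabrics=()):
    """True if every endpoint can reach every other endpoint."""
    return all(
        can_reach(a, b, failed_fabrics)
        for a in endpoints
        for b in endpoints
        if a is not b
    )


endpoints = [
    Endpoint("node-1", "compute"),
    Endpoint("node-2", "compute"),
    Endpoint("dme-1", "storage"),
]
all_up = fully_connected(endpoints)
fabric_a_down = fully_connected(endpoints, failed_fabrics=("A",))
both_down = fully_connected(endpoints, failed_fabrics=("A", "B"))
```

With both fabrics up, or with only Fabric A down, the model stays fully connected; connectivity is lost only if both fabrics fail.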

Shared memory

The PowerMax system uses an active/active, shared everything architecture. This includes memory content sharing, where each node can access data in another node’s memory. On PowerMax, this is done by using Remote Direct Memory Access (RDMA). RDMA is the ability to read from and write to memory on an external compute node without interrupting the processing of the CPU(s) on that node. RDMA permits high-throughput, low-latency data transfer between the memory of two computing systems, which is essential in high-performing clustered computing systems such as PowerMax. Using RDMA, all PowerMax nodes can access the memory content of any other node in the system as if it were their own. The ability for each node to access other nodes’ memory using RDMA turns the total capacity of cache in the PowerMax systems into a truly shared resource pool called PowerMax Global Cache.
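The pooling idea can be illustrated with a toy Python model. This is not RDMA code and does not reflect the actual layout of PowerMax Global Cache; the `GlobalCacheModel` class, its slot numbering, and the "local"/"remote" labels are assumptions made for illustration. The sketch shows per-node memory regions being addressed as one pool, with any node able to read or write any slot regardless of which node owns it.

```python
class GlobalCacheModel:
    """Toy model: per-node memory regions addressed as a single pool."""

    def __init__(self, node_names, slots_per_node):
        self.node_names = list(node_names)
        self.slots_per_node = slots_per_node
        # One local memory region per node.
        self.memory = {name: [None] * slots_per_node for name in self.node_names}

    @property
    def total_slots(self):
        return len(self.node_names) * self.slots_per_node

    def locate(self, global_slot):
        """Map a global slot number to (owning node, local slot)."""
        if not 0 <= global_slot < self.total_slots:
            raise IndexError("global slot out of range")
        node_index, local_slot = divmod(global_slot, self.slots_per_node)
        return self.node_names[node_index], local_slot

    def write(self, requester, global_slot, data):
        """Store data; report whether the access was local or remote."""
        owner, local_slot = self.locate(global_slot)
        self.memory[owner][local_slot] = data
        return "local" if owner == requester else "remote"

    def read(self, requester, global_slot):
        """Return (data, access type) for any slot in the pool."""
        owner, local_slot = self.locate(global_slot)
        access = "local" if owner == requester else "remote"
        return self.memory[owner][local_slot], access


cache = GlobalCacheModel(["node-1", "node-2"], slots_per_node=4)
cache.write("node-1", 5, b"track-data")   # slot 5 lives in node-2's memory
data, access = cache.read("node-1", 5)    # node-1 reads it back remotely
```

In a real system the "remote" path is where RDMA matters: the requesting node moves the data without involving the owning node's CPU.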

High-speed interconnecting fabric

PowerMax RDMA communications are subject to fabric latency, thereby making the PowerMax a Non-Uniform Memory Access (NUMA) system. A key requirement for PowerMax Global Cache (and NUMA systems in general) is that the fabric that transports the internode RDMA communications must have extremely low latency and high bandwidth. For this reason, PowerMax uses the InfiniBand (IB) protocol as its primary internode fabric. IB is a fabric technology and set of protocols most often seen in high-performance computing (HPC) environments such as Wall Street’s real-time trading and risk analysis applications. It is used in these environments because it natively provides:

- A highly energy-efficient, high-bandwidth, and low-latency fabric: In PowerMax, the IB fabric is run in connected mode, where a single lane can transmit 100 Gbps with MTUs of 2 MB. Larger single-lane data transfer is more efficient than smaller multilane data transfer because more data is transferred using a single clock cycle, which consumes far fewer compute resources. Studies have shown that when run in connected mode, IB is over 70 percent more energy efficient per byte sent than other RDMA fabric choices such as Ethernet (see the IEEE study “Evaluating Energy Efficiency of Gigabit Ethernet and Infiniband Software Stacks in Data Centres”).

- Scalability: IB can support tens of thousands of fabric endpoints in a flat single-subnet network. Thus, you can scale the PowerMax disaggregated architecture by adding independent compute and storage endpoints into the fabric without having to add more subnets and without incurring additional latency penalties.

- High security: The protocol is implemented in hardware, and the communication attributes are configured centrally in a way that does not enable software applications to gain control over them and maliciously spoof or change those attributes.

- Resiliency: RDMA data transfers can be protected with SCSI T10-DIF Protection Information. This allows detection of data corruption from many sources, and, with the PowerMax redundant architecture, the correct data can always be referenced.
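For readers unfamiliar with T10-DIF, the following Python sketch shows the standard format in general terms: each data block carries an 8-byte protection information tuple made up of a 2-byte guard tag (a CRC-16 of the block using the polynomial 0x8BB7), a 2-byte application tag, and a 4-byte reference tag. How PowerMax applies this internally is not described here, so the helper names (`make_pi`, `verify`) and the 512-byte block size are illustrative assumptions, not the product's implementation.

```python
import struct

T10_DIF_POLY = 0x8BB7  # CRC-16 generator polynomial for the T10-DIF guard tag


def crc16_t10dif(data: bytes) -> int:
    """Bitwise CRC-16/T10-DIF: initial value 0, no reflection, no final XOR."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ T10_DIF_POLY) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def make_pi(block: bytes, app_tag: int, ref_tag: int) -> bytes:
    """Build the 8-byte tuple: guard tag, application tag, reference tag."""
    return struct.pack(">HHI", crc16_t10dif(block), app_tag, ref_tag)


def verify(block: bytes, pi: bytes, expected_ref_tag: int) -> bool:
    """Check the guard CRC and the reference tag against the received block."""
    guard, _app_tag, ref_tag = struct.unpack(">HHI", pi)
    return guard == crc16_t10dif(block) and ref_tag == expected_ref_tag


block = bytes(range(256)) * 2                      # one illustrative 512-byte block
pi = make_pi(block, app_tag=0, ref_tag=42)         # sender attaches the tuple
corrupted = bytes([block[0] ^ 0x01]) + block[1:]   # single bit flipped in transit
intact_ok = verify(block, pi, expected_ref_tag=42)
corrupt_ok = verify(corrupted, pi, expected_ref_tag=42)
misplaced_ok = verify(block, pi, expected_ref_tag=43)
```

The guard tag catches corrupted contents, while the reference tag catches a block that is intact but delivered to, or read from, the wrong location.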

Implementation

Using RDMA and NVMe-oF with an IB fabric architecture, the PowerMax Dynamic Fabric delivers the high levels of performance, scalability, efficiency, and security that enterprise customers require. Implementation of the Dynamic Fabric to achieve these outcomes is different between the PowerMax 2500 and PowerMax 8500.

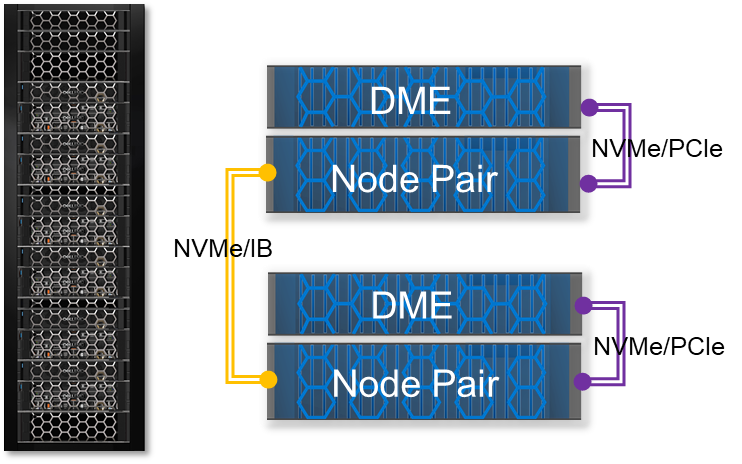

PowerMax 2500

A PowerMax 2500 can scale up to two node pairs. Each node pair comes with its own direct-attached PCIe DME. A key architectural part of the PowerMax 2500 Dynamic Fabric is the use of PCIe multihost technology. In the case of the PowerMax 2500, PCIe multihost allows both nodes to share each other's internal PCIe IB 100 Gb Host Channel Adapter (HCA), creating a high-speed, switchless fabric between the nodes for low-latency RDMA communication. Data transfer between the nodes and direct-attached PCIe DMEs uses a x32 lane NVMe/PCIe fabric.

The Dynamic Fabric configuration on the PowerMax 2500 allows the system to deliver performance and scalability in a compact package. It allows for higher levels of efficiency because the PowerMax 2500 can store up to 7x more capacity in half the rack space (over 4 PBe in 5U) compared with the earlier-generation PowerMax 2000. Along with its compact design, the 2500 supports the full complement of rich data services for open systems, mainframe, file, and virtual environments.

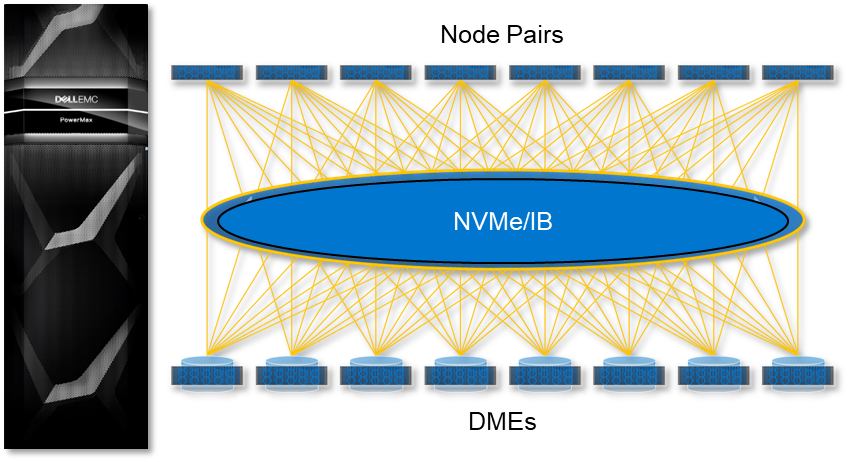

PowerMax 8500

The PowerMax 8500 Dynamic Fabric is a fully switched NVMe/IB fabric, running at 100 Gbps per lane, that is used by all RDMA communications and NVMe data transfers. This is different from the PowerMax 2500, where the NVMe data transfers from the node to its PCIe-connected DME use NVMe/PCIe. On the PowerMax 8500, each compute node and each storage DME is treated as a unique endpoint on the fabric, allowing it to be added into the fabric independently while being able to access all other endpoints. The system nodes and DMEs are fully disaggregated and dynamically connected, allowing the system to scale out compute to eight node pairs while scaling up storage to eight DMEs, providing over 18 PBe in a single system.

Another difference with the Dynamic Fabric on the PowerMax 8500 is the use of intelligent DMEs. The DMEs connect into the NVMe/IB fabric using dual Link Control Cards (LCCs), each with dual 100 Gb IB ports. Each LCC board has its own NVIDIA BlueField data processing unit (DPU), allowing it to perform critical storage management functions such as fabric offload. The LCCs with their DPUs essentially make each PowerMax 8500 DME a unique active/active, dual-controller NVMe storage subsystem on the fabric.
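As a quick worked example, the port counts above can be turned into nominal fabric connectivity figures. The short Python sketch below uses only numbers stated in this article (two LCCs per DME, two 100 Gb IB ports per LCC, up to eight DMEs); the function names are hypothetical, and the results are raw link-rate arithmetic, not measured or usable throughput.

```python
LCCS_PER_DME = 2        # dual Link Control Cards per DME
IB_PORTS_PER_LCC = 2    # dual 100 Gb IB ports per LCC
PORT_SPEED_GBPS = 100   # nominal line rate per port
MAX_DMES = 8            # maximum DMEs in a PowerMax 8500


def dme_fabric_ports(dmes=1):
    """Number of IB fabric ports exposed by the given number of DMEs."""
    return dmes * LCCS_PER_DME * IB_PORTS_PER_LCC


def dme_raw_link_gbps(dmes=1):
    """Aggregate nominal link rate of those ports, in Gbps."""
    return dme_fabric_ports(dmes) * PORT_SPEED_GBPS


ports_per_dme = dme_fabric_ports()             # 2 LCCs x 2 ports
gbps_per_dme = dme_raw_link_gbps()             # ports x 100 Gbps
ports_full_system = dme_fabric_ports(MAX_DMES)
gbps_full_system = dme_raw_link_gbps(MAX_DMES)
```

Under these assumptions, each DME presents four fabric ports (400 Gbps nominal), and a fully populated system presents 32 DME-side ports (3,200 Gbps nominal).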

Conclusion

The Dynamic Fabric is what enables the PowerMax 2500 and 8500 to function as a true active/active, shared everything architecture for enterprise storage. With this native architecture, customers can:

- Achieve high levels of fault tolerance and resiliency natively without the need for costly additional hardware and software

- Meet workload requirements with less power and fewer resources

- Scale up and scale out with no manual intervention for load balancing or other backend clustering operations, which are performed automatically by PowerMaxOS

The Dynamic Fabric is the key component that allows for the low-latency, high-bandwidth connections required for RDMA communication between the nodes and for the extensive NVMe data processing and movement between the nodes and DMEs in the system. The Dynamic Fabric is the backbone of the PowerMax 2500 and 8500. It allows the systems to deliver the kind of performance, security, efficiency, and scalability required by the modern data center.

Resources

- The Next-Generation PowerMax Family Overview White Paper

- The PowerMax 2500 and 8500 Specification Sheet

- The PowerMax 2500 and 8500 Data Sheet

- New PowerMax Architecture adds NVIDIA Bluefield DPUs

Author: Jim Salvadore, Senior Principal Engineering Technologist

Email: james.salvadore@dell.com