The information in this section addresses the following topics:

- Data center creation

- Creation of a cluster

- Adding a host

- Virtual machine creation

- Configuration of a cluster

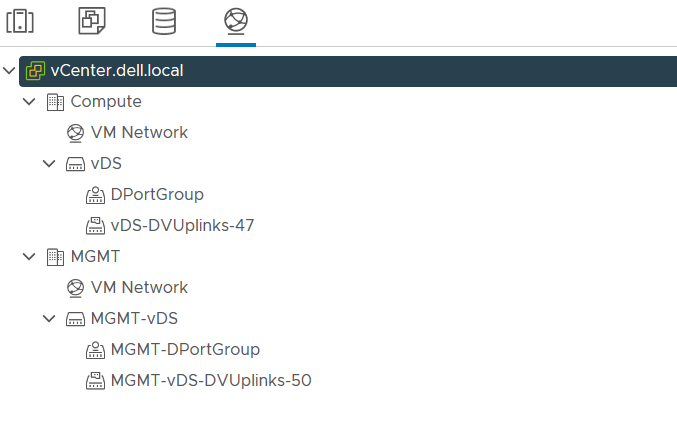

- vDS creation

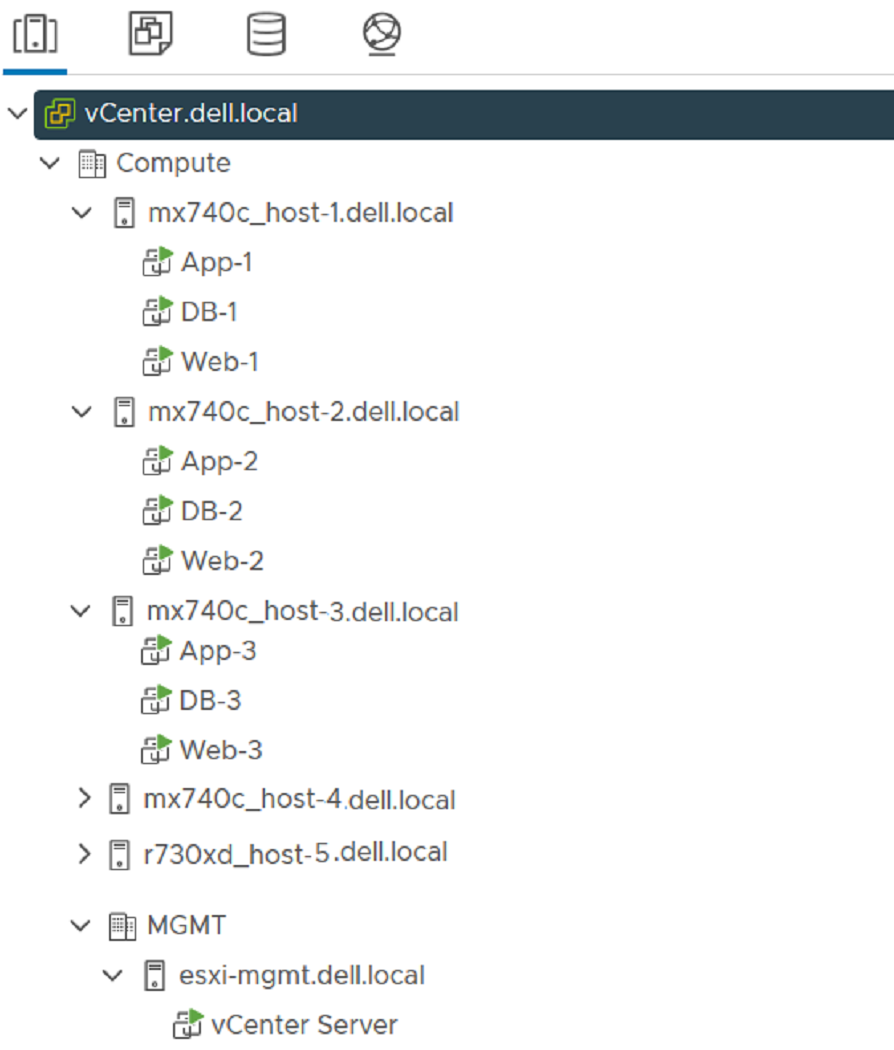

Rack servers can be added to vCenter after the SmartFabric is deployed and the uplink is created. The example environment in this guide has three PowerEdge rack servers that are connected to the S5232F-ON leaf switches. The rack servers are in a vSphere cluster named Compute.

The links in the table below provide instructions for configuring the VMware environment. These steps were used to set up the example topologies in this guide. The VMware configurations in this guide are only examples of how to set up the environment.

| Step | Reference |
| --- | --- |
| Create a data center | VMware vSphere Documentation: Create Data Centers |
| Create a cluster | VMware vSphere Documentation: Creating Clusters |
| Configure a cluster | VMware vSphere Documentation: Configure a Cluster in the vSphere Client |
| Add a host | VMware vSphere Documentation: Add a Host |
| Create a virtual machine | VMware vSphere Documentation: Creating a Virtual Machine in the vSphere Client |
| Create vDS and set up networking | VMware vSphere Documentation: Setting Up Networking with vSphere Distributed Switches |

The MX compute sleds can now communicate with the rack servers and the vCenter vDS. An administrator also joins the MX compute sleds to the vSphere cluster.

As a best practice, configure a vDS with six distributed port groups, one for each VLAN.
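The one-port-group-per-VLAN layout can be planned before anything is created in vCenter. The following is a minimal planning sketch, not a vCenter API call: the `dpg-vlan` naming prefix, the `PortGroupPlan` type, and the VLAN IDs 101 through 106 are placeholders chosen for illustration and are not taken from this guide. Substitute the six VLANs used in your deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortGroupPlan:
    name: str     # distributed port group name (hypothetical naming scheme)
    vlan_id: int  # VLAN carried by this port group


def plan_port_groups(vlan_ids, prefix="dpg-vlan"):
    """Return one PortGroupPlan per VLAN ID, rejecting invalid or duplicate IDs."""
    plans = []
    seen = set()
    for vid in vlan_ids:
        if not 1 <= vid <= 4094:
            raise ValueError(f"VLAN ID {vid} is outside the valid range 1-4094")
        if vid in seen:
            raise ValueError(f"VLAN ID {vid} is listed more than once")
        seen.add(vid)
        plans.append(PortGroupPlan(name=f"{prefix}{vid}", vlan_id=vid))
    return plans


if __name__ == "__main__":
    # Placeholder VLAN IDs; replace with the VLANs in your environment.
    for plan in plan_port_groups([101, 102, 103, 104, 105, 106]):
        print(f"{plan.name}: VLAN {plan.vlan_id}")
```

Checking the plan for duplicate or out-of-range VLAN IDs up front makes it easier to catch a mismatch with the SmartFabric VLAN definitions before the port groups are created.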

NIC teaming

NIC teaming connects a virtual switch to multiple physical NICs on a host to increase network bandwidth and provide redundancy. If an adapter fails or a network outage occurs, the NIC team distributes traffic among its remaining members and provides passive failover. NIC teaming policies are set at the virtual switch or port group level for a vSphere Standard Switch, and at the port group or port level for a vSphere Distributed Switch.
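The distribution and failover behavior described above can be illustrated with a simplified model. This sketch is not VMware's implementation: it assumes a policy that spreads virtual ports across the healthy uplinks by port ID, and the function name `assign_uplinks` and the uplink names `vmnic0` and `vmnic1` are used only for illustration.

```python
def assign_uplinks(vm_port_ids, uplink_status):
    """Map each virtual port ID to a healthy uplink in the team.

    uplink_status maps an uplink name to True when its link is up.
    """
    healthy = sorted(name for name, is_up in uplink_status.items() if is_up)
    if not healthy:
        raise RuntimeError("no healthy uplinks in the team")
    # Spread ports across the healthy uplinks by port ID (simplified model).
    return {port: healthy[port % len(healthy)] for port in vm_port_ids}


if __name__ == "__main__":
    ports = [0, 1, 2, 3]
    # Both uplinks up: ports are split between the two team members.
    print(assign_uplinks(ports, {"vmnic0": True, "vmnic1": True}))
    # vmnic1 fails: every port is carried by the remaining member.
    print(assign_uplinks(ports, {"vmnic0": True, "vmnic1": False}))
```

With both uplinks up, the ports alternate between the two members. When one uplink is marked down, every port moves to the surviving member, which is the failover case.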

The links in the table below provide instructions for configuring NIC teaming, depending on the type of virtual switch in use.

| Switch type | Reference |
| --- | --- |
| Distributed switch | VMware vSphere Documentation: Configure NIC Teaming, Failover, and Load Balancing on a Distributed Port Group or Distributed Port |
| Standard switch | VMware vSphere Documentation: Configure NIC Teaming, Failover, and Load Balancing on a vSphere Standard Switch or Standard Port Group |

In this deployment example, if NIC teaming is defined in VMware vSphere, a teaming configuration is not needed in a PowerEdge MX environment running in SmartFabric mode. From the OME-M console, select Configuration > Templates > Select Server Template > Edit Network, and then select the No Teaming option.

Depending on the deployment environment, if NIC teaming is set in VMware vSphere and one uplink goes down, the connection switches to another uplink in the team. When the failed uplink comes back up, the connection is restored to it immediately. If that uplink is unstable, this immediate failback can cause packet loss. In VMware ESXi 6.7 and later, you can edit the delay threshold to avoid packet loss. The default value is 100 ms; Dell Technologies recommends changing it to 30000 ms. To modify TeamPolicyUpDelay, from VMware vSphere, select ESXi Host > Configure > System > Advanced System Settings > Net.TeamPolicyUpDelay, and then set the value to 30000 ms.
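The effect of the longer delay can be shown with a simplified model. This is a sketch under stated assumptions, not ESXi's actual logic: it assumes traffic fails back to a recovering uplink only after the link has stayed up continuously for the configured delay. The function names and the flap timeline are hypothetical. The helper `esxcli_up_delay_args` builds the `esxcli system settings advanced set` command that is assumed to be the command-line equivalent of the vSphere Client path above; verify it against your ESXi version before use.

```python
def count_failbacks(events, up_delay_ms, end_ms):
    """Count failbacks to a recovering uplink in a simplified model.

    events is a time-ordered list of (time_ms, is_up) link-state changes.
    A failback is counted only if the link stays up for at least up_delay_ms.
    """
    failbacks = 0
    up_since = None
    # A final "down" sentinel at end_ms closes the last up period.
    for time_ms, is_up in list(events) + [(end_ms, False)]:
        if up_since is not None and time_ms - up_since >= up_delay_ms:
            failbacks += 1
        up_since = time_ms if is_up else None
    return failbacks


def esxcli_up_delay_args(value_ms):
    """Build the esxcli argument list for setting Net.TeamPolicyUpDelay (assumed syntax)."""
    if value_ms < 0:
        raise ValueError("delay must not be negative")
    return ["esxcli", "system", "settings", "advanced", "set",
            "-o", "/Net/TeamPolicyUpDelay", "-i", str(value_ms)]


if __name__ == "__main__":
    # Hypothetical timeline: the uplink flaps twice, then stays up until 60 s.
    flaps = [(0, True), (500, False), (1000, True), (1500, False), (2000, True)]
    print(count_failbacks(flaps, up_delay_ms=100, end_ms=60000))    # default delay
    print(count_failbacks(flaps, up_delay_ms=30000, end_ms=60000))  # recommended delay
    print(" ".join(esxcli_up_delay_args(30000)))
```

In this model, the 100 ms default fails back during each brief up period of the flapping link, while the 30000 ms value fails back only once the link has stabilized.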