Next-Generation PowerEdge Servers: Thoughtful Thermal Design

Mon, 16 Jan 2023 13:44:20 -0000

Summary

Next-generation Intel and AMD PowerEdge servers will support internal components with increased capabilities, such as higher CPU core counts and memory frequencies. These new features bring with them increased power consumption. Dell Technologies has refined its thermal design to optimize cooling of these enhanced hardware components. This DfD explains what changes were made to the thermal architecture of next-generation Intel and AMD PowerEdge servers, as well as the key benefits each change brings to the end user.

Introduction

The introduction of 3rd Generation Intel and AMD processors gives next-generation PowerEdge servers ample computing capacity. The newest PowerEdge servers are densely packed with heat-producing semiconductors that must be adequately cooled to stay below their recommended operating temperatures. The Dell Technologies thermal engineering team has tailored new thermal solutions and designs to address these concerns.

Increased Power Means Increased Heat

The most significant driver for the thermal redesign is the increase in power (watts) consumed by internal components. For example, Intel Ice Lake processors now support up to 40 cores, but at the cost of consuming up to 270W. Intel also offers a 33% increase in memory channels that support 9% higher speeds, among other new heat-producing features:

- 2x PCIe performance with Gen4

- 33% more I/O lanes

- NVMe HW RAID

- Hot-Plug BOSS (2x M.2) for boot
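The memory percentages quoted above can be checked with a little arithmetic. The sketch below assumes the commonly cited figures of 6 channels at 2933 MT/s for the previous Intel generation and 8 channels at 3200 MT/s for Ice Lake. These specific values are not stated in this document and are used here only to illustrate where "33%" and "9%" come from.

```python
# Assumed values (not given in this document): previous generation with
# 6 memory channels at 2933 MT/s, Ice Lake with 8 channels at 3200 MT/s.
PREV_CHANNELS, PREV_SPEED_MTS = 6, 2933
NEW_CHANNELS, NEW_SPEED_MTS = 8, 3200


def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


channel_gain = percent_increase(PREV_CHANNELS, NEW_CHANNELS)
speed_gain = percent_increase(PREV_SPEED_MTS, NEW_SPEED_MTS)
# Peak memory bandwidth scales with channels x transfer rate, so the two
# gains compound rather than simply add.
combined_gain = percent_increase(
    PREV_CHANNELS * PREV_SPEED_MTS, NEW_CHANNELS * NEW_SPEED_MTS
)

print(f"memory channels: +{channel_gain:.0f}%")
print(f"memory speed:    +{speed_gain:.0f}%")
print(f"peak bandwidth:  +{combined_gain:.0f}%")
```

Under these assumptions, the two improvements together raise theoretical peak memory bandwidth by roughly 45%, which is part of why memory contributes more to the thermal load than in previous generations.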

These features create a dense server that can consume more power than previous generations. To keep the system cool, the following thermal design changes have been made to next-generation (15G) PowerEdge servers:

Thoughtful Thermal Design Changes

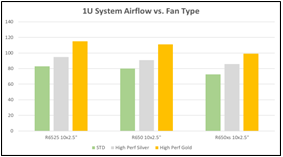

High Performance Fans – New higher performance fans have been added to increase the amount of cool air pushed through the system, targeted at higher power semiconductors (CPUs, GPUs and NVMe drives). A 3-tier approach to fan performance is employed to address the trend of increasing total power. This includes standard fans and high performance silver and gold fans, which increase the airflow capability versus the previous-generation fans (see Figure 1).

Figure 1 – Graph illustrating the increased fan airflow (in CFM) for high performance silver and gold fans compared to baseline fans

In 2U systems, the gold fan features a dual-rotor design in a larger form factor, and spacers are used with the standard and silver fans. Both Intel and AMD next-generation PowerEdge servers will support the silver and gold fans. The fan type is predetermined according to the server platform and hardware configuration.

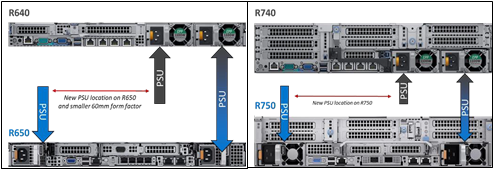

Smaller PSU Form Factor and Location – To create space for a thermal architecture redesign, PSUs have been relocated to the outside edges of the 1U and 2U server chassis. This provides purposeful exhaust lanes for hot airflow from the CPUs to prevent overheating of downstream hardware components such as PCIe cards, OCP or PSUs. In the 1U systems a new, narrower, 60mm form factor PSU is implemented to further increase the exhaust path space. The new PSU layout and form factors are supported for both Intel and AMD next-generation PowerEdge servers. See Figure 2 below for illustrations of this new PSU layout.

Figure 2 – PowerEdge R650/R750 PSU architecture compared to R640/R740

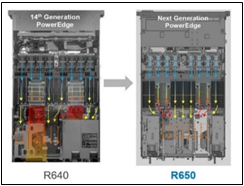

Balanced Airflow Design – Perhaps the most impactful change is a more balanced airflow design. Figure 3 illustrates that the R650 motherboard layout is more symmetrical than the R640. In the R640 layout, the PSU and PCIe cards were located directly downstream of the CPU exhaust paths, which created PSU and PCIe cooling challenges and an imbalance in airflow across the width of the system. The R650 virtually eliminates these bottlenecks by moving the PSUs out of the CPU exhaust path, balancing the airflow, and creating purposeful paths for CPU exhaust airflow to the rear of the chassis. The R750 features the same split PSU layout and purposeful CPU exhaust paths, in addition to a dedicated duct design that delivers fresh air to the rear PCIe slots for high-power GPU configurations.

Figure 3 – The PowerEdge R650 has a more balanced airflow design compared to the PowerEdge R640

Conclusion

Dell Technologies PowerEdge servers with 3rd generation Intel® and AMD processors deliver a thoughtful total solution that accommodates semiconductor thermal requirements by improving the internal thermal design. By implementing higher performance fans, new PSUs and purposeful airflow pathways, PowerEdge customers can maximize their work output without having to worry about overheating their system.

Related Documents

Understanding the Value of AMD's Socket-to-Socket Infinity Fabric

Tue, 17 Jan 2023 00:43:22 -0000

Summary

AMD socket-to-socket Infinity Fabric increases CPU-to-CPU transactional speeds by allowing multiple sockets to communicate directly to one another through these dedicated lanes. This DfD will explain what the socket-to-socket Infinity Fabric interconnect is, how it functions and provides value, as well as how users can gain additional value by dedicating one of the x16 lanes to be used as a PCIe bus for NVMe or GPU use.

Introduction

Prior to the socket-to-socket Infinity Fabric (IF) interconnect, CPU-to-CPU communications generally took place on the HyperTransport (HT) bus for AMD platforms. Using this pathway for multi-socket servers worked well during the lifespan of HT, but emerging technologies called for a solution that would increase data transfer speeds, as well as allow for combo links.

AMD released socket-to-socket Infinity Fabric (also known as xGMI) to resolve these bottlenecks. Having dedicated IF links for direct CPU-to-CPU communications allowed for greater data-transfer speeds, so multi-socket server users could do more work in the same amount of time as before.

How Socket-to-Socket Infinity Fabric Works

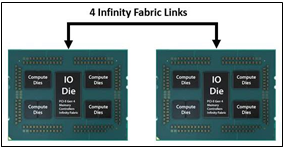

IF is the external socket-to-socket interface for 2-socket servers. IF links are built on combo serializer/deserializer (SERDES) lanes that can operate as either PCIe or xGMI, allowing sixteen lanes per link and considerable platform flexibility. xGMI2, the current generation, reaches speeds of up to 18Gbps, which is faster than the PCIe Gen4 speed of 16Gbps. These IF links support two CPUs. Each IF lane connects from one CPU IO die to the next, and the lanes are interwoven in a similar fashion, directly connecting the CPUs to one another. Most dual-socket servers have three to four IF links dedicated to CPU connections. Figure 1 depicts a high-level illustration of how socket-to-socket IF links connect across CPUs.

Figure 1 – 4 socket to socket IF links connect two CPUs
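To put the per-lane speeds in context, the following sketch compares the raw signaling rate of one sixteen-lane link at the xGMI2 and PCIe Gen4 speeds quoted above. It deliberately ignores encoding and protocol overhead, so the results are upper bounds rather than measured throughput. The function name and the choice of four links are illustrative.

```python
LANES_PER_LINK = 16              # sixteen lanes per IF link, as described above
XGMI2_GBPS_PER_LANE = 18.0       # xGMI2 speed quoted in the text
PCIE_GEN4_GBPS_PER_LANE = 16.0   # PCIe Gen4 speed quoted in the text


def raw_link_gbps(per_lane_gbps: float, lanes: int = LANES_PER_LINK) -> float:
    """Raw one-direction signaling rate of a link, ignoring all overhead."""
    return per_lane_gbps * lanes


xgmi2_link = raw_link_gbps(XGMI2_GBPS_PER_LANE)
pcie4_link = raw_link_gbps(PCIE_GEN4_GBPS_PER_LANE)
speedup_pct = (xgmi2_link - pcie4_link) / pcie4_link * 100.0

print(f"xGMI2 x16 link:     {xgmi2_link:.0f} Gbps raw")
print(f"PCIe Gen4 x16 link: {pcie4_link:.0f} Gbps raw")
print(f"xGMI2 advantage:    {speedup_pct:.1f}%")
# Illustrative case: a server with four IF links between its two sockets.
print(f"four xGMI2 links:   {4 * xgmi2_link:.0f} Gbps raw")
```

At equal lane counts, the per-lane difference carries straight through to the link level, so each xGMI2 link has 12.5% more raw signaling rate than a PCIe Gen4 x16 link.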

The Value of Infinity Fabric Interconnect

Socket to socket IF interconnect creates several advantages for PowerEdge customers:

- Dedicated IF lanes are routed directly from one CPU to the other CPU, ensuring inter-socket communications travel the shortest distance possible

- xGMI2 speeds (18Gbps) exceed the speeds of PCIe Gen4, allowing for extremely fast inter-socket data transfer speeds

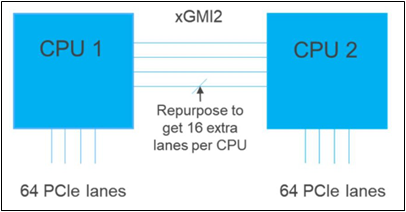

Furthermore, if customers require additional PCIe lanes for peripheral components, such as NVMe drives or GPUs, one of the four IF links is a cable with a connector that can be repurposed as PCIe lanes. AMD's highly optimized and flexible link topologies enable sixteen lanes per socket of Infinity Fabric to be repurposed. This means that 2S AMD servers, such as the PowerEdge R7525, gain thirty-two additional lanes, giving a total of 160 PCIe lanes for peripherals. Figure 2 below illustrates what this would look like:

Figure 2 – Diagram showing additional PCIe lanes available in a 2S configuration
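The lane arithmetic behind these figures is sketched below. The 160-lane total and the sixteen repurposed lanes per socket come from the text. The baseline of 128 lanes is not stated directly and is derived here by subtraction, so treat it as an inference.

```python
SOCKETS = 2
REPURPOSED_LANES_PER_SOCKET = 16   # one x16 IF link per socket, per the text
TOTAL_LANES_WITH_REPURPOSE = 160   # total quoted for a 2S server such as the R7525


def peripheral_lanes(baseline: int, sockets: int, repurposed_per_socket: int) -> int:
    """Total PCIe lanes for peripherals after repurposing IF lanes."""
    return baseline + sockets * repurposed_per_socket


additional_lanes = SOCKETS * REPURPOSED_LANES_PER_SOCKET
# Derived, not stated in the text: lanes available before repurposing.
baseline_lanes = TOTAL_LANES_WITH_REPURPOSE - additional_lanes

print(f"additional lanes: {additional_lanes}")
print(f"baseline lanes:   {baseline_lanes}")
print(f"total lanes:      {peripheral_lanes(baseline_lanes, SOCKETS, REPURPOSED_LANES_PER_SOCKET)}")
```

The trade-off is that the repurposed link no longer carries socket-to-socket traffic, so inter-socket bandwidth drops from four links to three in exchange for the extra peripheral lanes.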

Conclusion

AMD's socket-to-socket Infinity Fabric interconnect replaced the former HyperTransport interconnect in order to allow massive amounts of data to travel fast enough to avoid speed bottlenecks. Furthermore, customers needing additional PCIe lanes can repurpose one of the four IF links for peripheral support. These advantages allow AMD PowerEdge servers, such as the R7525, to meet our server customers' needs.

The Latest GPUs of 2022

Mon, 16 Jan 2023 13:44:30 -0000

And How We Recommend Applying Them to Enable Breakthrough Performance

Summary

Dell Technologies offers a wide range of GPUs to address different workloads and use cases. Deciding on which GPU model and PowerEdge server to purchase, based on intended workloads, can become quite complex for customers looking to use GPU capabilities. It is important that our customers understand why specific GPUs and PowerEdge servers will work best to accelerate their intended workloads. This DfD informs customers of the latest and greatest GPU offerings in 2022, as well as which PowerEdge servers and workloads we recommend to enable breakthrough performance.

PowerEdge servers support various GPU brands and models. Each model is designed to accelerate specific demanding applications by acting as a powerful assistant to the CPU. For this reason, it is vital to understand which GPUs on PowerEdge servers will best enable breakthrough performance for varying workloads. This paper describes the latest GPUs as of Q1 2022, shown below in Figure 1, to help educate PowerEdge customers on which GPU is best suited for their specific needs.

| GPU Model | Number of Cores | Peak Double Precision (FP64) | Peak Single Precision (FP32) | Peak Half Precision (FP16) | Memory Size / Bus | Memory Bandwidth | Power Consumption |
|---|---|---|---|---|---|---|---|
| A2 | 2560 | N/A | 4.5 TFLOPS | 18 TFLOPS | 16GB GDDR6 | 200 GB/s | 40-60W |
| A16 | 1280 x4 | N/A | 4.5 TFLOPS x4 | 17.9 TFLOPS x4 | 16GB GDDR6 x4 | 200 GB/s x4 | 250W |
| A30 | 3804 | 5.2 TFLOPS | 10.3 TFLOPS | 165 TFLOPS | 24GB HBM2 | 933 GB/s | 165W |
| A40 | 10752 | N/A | 37.4 TFLOPS | 149.7 TFLOPS | 48GB GDDR6 | 696 GB/s | 300W |
| MI100 | 7680 | 11.5 TFLOPS | 23.1 TFLOPS | 184.6 TFLOPS | 32GB HBM2 | 1.2 TB/s | 300W |
| A100 PCIe | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 80GB HBM2e | 1.93 TB/s | 300W |
| A100 SXM4 | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 40GB HBM2 | 1.55 TB/s | 400W |
| A100 SXM4 | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 80GB HBM2e | 2.04 TB/s | 500W |
| T4 | 2560 | N/A | 8.1 TFLOPS | 65 TFLOPS | 16GB GDDR6 | 300 GB/s | 70W |

Figure 1 – Table comparing 2022 GPU specifications
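One way to work with the comparison in Figure 1 is to encode a few of its columns and filter on them. The sketch below is illustrative only: the `Gpu` class and helper functions are hypothetical names, the values are copied from the table, the A2 is entered at the top of its 40-60W range, and the A16 FP32 figure is entered as the sum of its four GPUs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Gpu:
    model: str
    fp64_tflops: Optional[float]  # None where the table lists N/A
    fp32_tflops: float
    max_power_w: int


# Values copied from Figure 1.
GPUS = [
    Gpu("A2", None, 4.5, 60),            # upper end of the 40-60W range
    Gpu("A16", None, 18.0, 250),         # 4.5 TFLOPS x4 on one board
    Gpu("A30", 5.2, 10.3, 165),
    Gpu("A40", None, 37.4, 300),
    Gpu("MI100", 11.5, 23.1, 300),
    Gpu("A100 PCIe 80GB", 9.7, 19.5, 300),
    Gpu("A100 SXM 40GB", 9.7, 19.5, 400),
    Gpu("A100 SXM 80GB", 9.7, 19.5, 500),
    Gpu("T4", None, 8.1, 70),
]


def fp64_models(gpus: List[Gpu]) -> List[str]:
    """Models with a listed FP64 rating, relevant for HPC workloads."""
    return [g.model for g in gpus if g.fp64_tflops is not None]


def models_within_power(gpus: List[Gpu], limit_w: int) -> List[str]:
    """Models whose maximum power draw fits within limit_w watts."""
    return [g.model for g in gpus if g.max_power_w <= limit_w]


print("FP64-capable:", fp64_models(GPUS))
print("Fits a 165W slot budget:", models_within_power(GPUS, 165))
```

Filtering on FP64 support and power draw first narrows the field quickly, since those two columns separate the HPC-oriented accelerators from the low-power inference and VDI cards.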

NVIDIA A2

The NVIDIA A2 is an entry-level GPU intended to boost performance for AI-enabled applications. What makes this product unique is its extremely low power limit (40W-60W), compact size, and affordable price. These attributes position the A2 as the perfect “starter” GPU for users seeking performance improvements on their servers. To benefit from the inferencing performance and entry-level specifications of the A2, we suggest attaching it to mainstream PowerEdge servers, such as the R750 and R7515, which can host up to 4x and 3x A2 GPUs, respectively. We also recommend it for edge and space- or power-constrained environments, where servers such as the XR11 can host up to 2x A2 GPUs. Customers can expect more PowerEdge support by H2 2022, including the PowerEdge R650, T550, R750xa, and XR12.

Supported Workloads: AI Inference, Edge, VDI, General Purpose

Recommended Workloads: AI Inference, Edge, VDI

Recommended PowerEdge Servers: R750, R7515, XR11

NVIDIA A16

The NVIDIA A16 is a full height, full length (FHFL) GPU card that has four GPUs connected together on a single board through a Mellanox PCIe switch. The A16 is targeted at customers requiring high user density for VDI environments, because it shares incoming requests across four GPUs instead of just one. This both increases the total user count and reduces queue times per request. All four GPUs have a high memory capacity (16GB GDDR6 for each GPU) and memory bandwidth (200GB/s for each GPU) to support a large volume of users and varying workload types. Lastly, the NVIDIA A16 has a large number of video encoders and decoders for the best user experience in a VDI environment.

To take full advantage of the A16's capabilities, we suggest attaching it to newer PowerEdge servers that support PCIe Gen4. For Intel-based PowerEdge servers, we recommend the R750 and R750xa, which support 2x and 4x A16 GPUs, respectively. For AMD-based PowerEdge servers, we recommend the R7515 and R7525, which support 1x and 3x A16 GPUs, respectively.

Supported Workloads: VDI, Video Encoding, Video Analytics

Recommended Workloads: VDI

Recommended PowerEdge Servers: R750, R750xa, R7515, R7525

NVIDIA A30

The NVIDIA A30 is a mainstream GPU offering targeted at enterprise customers who seek increased performance, scalability, and flexibility in the data center. This powerhouse accelerator is a versatile GPU solution because it has excellent performance specifications for a broad spectrum of math precisions, including INT4, INT8, FP16, FP32, and FP64. The ability to run third-generation Tensor Cores and the Multi-Instance GPU (MIG) feature in unison further secures quality performance gains for big and small workloads. Lastly, it has an unconventionally low power budget of only 165W, making it a viable GPU for virtually any PowerEdge server.

Given that the A30 GPU was built to be a versatile solution for most workloads and servers, it balances both the performance and pricing to bring optimized value to our PowerEdge servers. The PowerEdge R750, R750xa, R7525, and R7515 are all great mainstream servers for enterprise customers looking to scale. For those requiring a GPU-dense server, the PowerEdge DSS8440 can hold up to 10x A30s and will be supported in Q1 2022. Lastly, the PowerEdge XR12 can support up to 2x A30s for Edge environments.

Supported Workloads: AI Inference, AI Training, HPC, Video Analytics, General Purpose

Recommended Workloads: AI Inference, AI Training

Recommended PowerEdge Servers: R750, R750xa, R7525, R7515, DSS8440, XR12

NVIDIA A40

The NVIDIA A40 is a FHFL GPU offering that combines advanced professional graphics with HPC and AI acceleration to boost the performance of graphics and visualization workloads, such as batch rendering, multi-display, and 3D display. By providing support for ray tracing, advanced shading, and other powerful simulation features, this GPU is a unique solution targeted at customers that require powerful virtual and physical displays. Furthermore, with 48GB of GDDR6 memory, 10,752 CUDA cores, and PCIe Gen4 support, the A40 will ensure that massive datasets and graphics workload requests are moving quickly.

To accommodate the A40's hefty power budget of 300W, we suggest customers attach it to a PowerEdge server with ample power to spare, such as the DSS8440. However, if the DSS8440 is not an option, the PowerEdge R750xa, R750, R7525, and XR12 are also compatible with the A40 GPU and will function adequately so long as they are using PSUs with adequate power output. Lastly, populating A40 GPUs within the PowerEdge T550 is also a great play for customers who want to address visually demanding workloads outside the traditional data center.

Supported Workloads: Graphics, Batch Rendering, Multi-Display, 3D Display, VR, Virtual Workstations, AI Training, AI Inference

Recommended Workloads: Graphics, Batch Rendering, Multi-Display

Recommended PowerEdge Servers: DSS8440, R750xa, R750, R7525, XR12, T550
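The advice about adequate PSU output for 300W GPUs can be framed as a simple power-budget check. The sketch below is a rough illustration, not a Dell sizing tool: the function name is hypothetical, and the 700W figure for the rest of the system and the 1400W PSU capacity are made-up example values. Only the 300W per-GPU figure comes from the text.

```python
def psu_headroom_w(gpu_count: int, gpu_watts: int,
                   other_components_w: int, psu_capacity_w: int) -> int:
    """Watts left over after GPUs and the rest of the system are powered.

    A negative result means the configuration exceeds the PSU capacity.
    """
    total_draw = gpu_count * gpu_watts + other_components_w
    return psu_capacity_w - total_draw


A40_WATTS = 300        # A40 power budget, from the text
OTHER_LOAD_W = 700     # hypothetical: CPUs, memory, drives, fans
PSU_CAPACITY_W = 1400  # hypothetical PSU rating

for count in (1, 2, 3):
    headroom = psu_headroom_w(count, A40_WATTS, OTHER_LOAD_W, PSU_CAPACITY_W)
    status = "fits" if headroom >= 0 else "exceeds PSU capacity"
    print(f"{count}x A40: headroom {headroom}W ({status})")
```

For a real configuration, the non-GPU load and the available PSU options should come from the platform's own documentation or sizing tools rather than from placeholder values like these.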

NVIDIA A100

The NVIDIA A100 focuses on accelerating HPC and AI workloads. It introduces double-precision tensor cores that significantly reduce HPC simulation run times. Furthermore, the A100 includes Multi-Instance GPU (MIG) virtualization and GPU partitioning capabilities, which benefit cloud users looking to use their GPUs for AI inference and data analytics. The newly supported sparsity feature can also double the throughput of tensor core operations by exploiting the fine-grained structure in DL networks. Lastly, A100 GPUs can be inter-connected either by NVLink bridge on platforms like the R750xa and DSS8440, or by SXM4 on platforms like the PowerEdge XE8545, which increases the GPU-to-GPU bandwidth when compared to the PCIe host interface.

The PowerEdge DSS8440 is a great server for the A100, as it provides ample power and can hold the most GPUs. If the DSS8440 is not an option, we suggest using the PowerEdge XE8545, R750xa, or R7525. Please note that only the 80GB model is supported for PCIe connections, and be sure to provide plenty of power to accommodate the A100's 300W/400W power requirements.

Supported Workloads: HPC, AI Training, AI Inference, Data Analytics, General Purpose

Recommended Workloads: HPC, AI Training, AI Inference, Data Analytics

Recommended PowerEdge Servers: DSS8440, XE8545, R750xa, R7525

AMD MI100

The AMD MI100 value proposition is similar to the A100 in that it will best accelerate HPC and AI workloads. At 11.5 TFLOPS, its FP64 performance is industry-leading for the acceleration of HPC workloads. Similarly, at 23.1 TFLOPS, the FP32 specifications are more than sufficient for any AI workload. Furthermore, the MI100 supports 32GB of high-bandwidth memory (HBM2) to enable a whopping 1.2TB/s of memory bandwidth. In a nutshell, this GPU is designed to tackle complex, data-intensive HPC and AI workloads for enterprise customers.

The AMD MI100 is qualified on both the Intel-based PowerEdge R750xa, which supports up to 4x MI100 GPUs, and the AMD-based PowerEdge R7525, which supports up to 3x MI100 GPUs. We highly recommend adopting a powerful PSU for either server, as the MI100 also has a massive power consumption of 300W.

Supported Workloads: HPC, AI Training, AI Inference, ML/DL

Recommended Workloads: HPC, AI Training, AI Inference

Recommended PowerEdge Servers: R750xa, R7525
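The per-GPU recommendations above can be collected into a small lookup, which makes it easy to ask which GPUs are recommended for a given workload on a given server. This is a sketch for illustration: the `RECOMMENDATIONS` structure and `recommend` function are hypothetical, while the workload and server lists are copied from the "Recommended" lines of each section.

```python
from typing import Dict, List, Optional, Set, Tuple

# GPU -> (recommended workloads, recommended PowerEdge servers),
# copied from the per-GPU summaries in this paper.
RECOMMENDATIONS: Dict[str, Tuple[Set[str], Set[str]]] = {
    "A2": ({"AI Inference", "Edge", "VDI"},
           {"R750", "R7515", "XR11"}),
    "A16": ({"VDI"},
            {"R750", "R750xa", "R7515", "R7525"}),
    "A30": ({"AI Inference", "AI Training"},
            {"R750", "R750xa", "R7525", "R7515", "DSS8440", "XR12"}),
    "A40": ({"Graphics", "Batch Rendering", "Multi-Display"},
            {"DSS8440", "R750xa", "R750", "R7525", "XR12", "T550"}),
    "A100": ({"HPC", "AI Training", "AI Inference", "Data Analytics"},
             {"DSS8440", "XE8545", "R750xa", "R7525"}),
    "MI100": ({"HPC", "AI Training", "AI Inference"},
              {"R750xa", "R7525"}),
}


def recommend(workload: str, server: Optional[str] = None) -> List[str]:
    """GPUs recommended for a workload, optionally limited to one server."""
    return [
        gpu
        for gpu, (workloads, servers) in RECOMMENDATIONS.items()
        if workload in workloads and (server is None or server in servers)
    ]


print(recommend("VDI"))                   # GPUs recommended for VDI
print(recommend("HPC", "R7525"))          # HPC options on the R7525
print(recommend("AI Inference", "XR11"))  # inference at the edge
```

The lookup returns every match rather than a single answer, since the final choice still depends on factors such as power budget and GPU count per server.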

Conclusion

The GPUs we are recommending in this list offer a wide variety of features that are designed to accelerate a diverse range of server workloads. A PowerEdge server configured with the most appropriate GPU will enable intended customer workloads to use these features in concert with other system components to yield the best performance. We hope this discussion of the latest 2022 GPUs, as well as our recommendations for Dell PowerEdge servers and workloads, will help customers choose the most appropriate GPU for their data center needs and business goals.

Learn More

Dell PowerEdge Accelerated Servers and Accelerators Dell eBook

Demystifying Deep Learning Infrastructure Choices using MLPerf Benchmark Suite HPC at Dell