Broadcom Emulex Gen 7 FC HBA has Significantly Improved Performance over Gen 6

Mon, 16 Jan 2023 13:44:28 -0000

Summary

As adoption of all-flash arrays (AFAs) over Fabrics for the public cloud continues to grow, server HBA standards must rise steadily so that workload performance and security keep pace. Dell EMC and Broadcom partnered to test the new Gen 7 Emulex HBA and compare its performance with the previous generation. The results are a reminder that data center networking can quickly and critically affect system performance in a rapidly evolving technical climate.

Gen 7 FC HBA

Data centers are undergoing a transformation with the emergence of all-flash arrays (AFAs), faster media types and more efficient ways to access media. These forms of storage deliver record speeds and lower latencies to significantly improve application performance. One key technology that is driving this rapid evolution is NVMe over Fabrics (NVMe-oF). Swift speeds have proven the value of running AFAs over Fabrics, and now networking HBAs are being further developed to avoid bottlenecking performance. The latest storage networking standard, Gen 7 FC (Fibre Channel) HBA, provides the ideal combination of performance improvements plus features to support this data center transformation, while maintaining backward compatibility with existing Fibre Channel infrastructure.

These bold claims of performance, security and efficiency improvements over the previous generation compelled Dell EMC to dive deeper, in hopes that our latest PowerEdge products would utilize Gen 7 to achieve significant read/write IOPS (I/O Operations per Second) within a flash-oriented datacenter. To determine the latency and read/write performance advantages over the Gen 6 predecessor, three tests were conducted with the newest Emulex Gen 7 LPe35000-series HBAs (Host Bus Adapters) by Broadcom.

Figure 1: Emulex Gen 7 LPe35000-series LPe35002

Test Procedure and Results

To measure the Gen 7 HBA's latency improvement, two important interfaces of the HBA were instrumented: the Fibre Channel port, which connects to the SAN, and the PCIe interface to the host computer. Two protocol logic analyzers with synchronized clocks were used on these connections so that together they measured the timing of a full traversal (from when an FC frame was received at the HBA FC port until it had been converted to the PCIe protocol).

To measure the Gen 7 HBA's write IOPS improvement, the performance of both HBA generations was compared on an Oracle Database 12c server with data stored on a NetApp AFF A800 all-flash array. The HammerDB benchmark was used to simulate an OLTP client load of 128 virtual SQL transaction users against a 500GB TPC-C-like dataset representing 5000 warehouses.

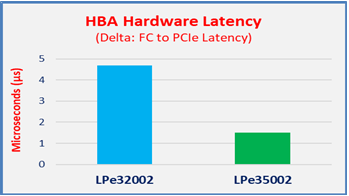

Gen 7 has ~1/3 latency of Gen 6 (Figure 2)

The fast path hardware architecture design reduces average hardware latency to one third of the latency seen in the previous generation Gen 6 HBA. This dramatic reduction in latency impacts every frame that moves from the SAN to host PCIe bus in either direction as it passes through the HBA.

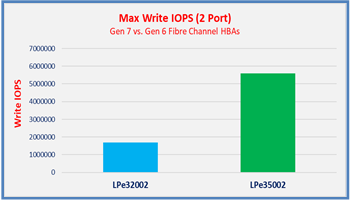

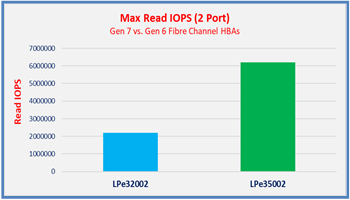

Gen 7 has ~3x greater read & write IOPS (Figures 3 and 4)

Running synthetic I/O workloads, Broadcom Emulex Gen 7 HBAs delivered nearly 3x as many IOPS across two ports in both the read and write tests. This is an excellent example of the additional application value gained by updating the HBAs in an existing server and storage investment.

Figure 2: Gen 7 has 1/3 the latency of Gen 6, which is better

Figure 3: Gen 7 significantly outperforms Gen 6 for Write IOPS

Figure 4: Gen 7 significantly outperforms Gen 6 for Read IOPS

Additional Improvements to Gen 7

- Trunking: Supports up to 64GFC on a single port by aggregating multiple physical ports to form a single, logical, extremely high-bandwidth port.

- Supports PCIe 4.0: Gen 7 is the first HBA with PCIe 4.0, supporting 2x the bit transfer rate of PCIe 3.0.

- Enhanced security with support for Dell Cyber-resiliency: Checks for authentic firmware every time the system is booted and before installing any new firmware.
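The PCIe claim in the list above can be checked with quick arithmetic. The following is a minimal sketch, assuming the published per-lane rates of 8 GT/s for PCIe 3.0 and 16 GT/s for PCIe 4.0 (both with 128b/130b encoding) and an x8 link. The lane count and the function name are illustrative assumptions, not details taken from the test, and the figures are before protocol overhead.

```python
# Per-lane raw transfer rates in GT/s (from the PCIe 3.0 and 4.0 specifications).
RATES_GT_S = {"3.0": 8.0, "4.0": 16.0}
ENCODING_EFFICIENCY = 128 / 130  # both generations use 128b/130b line encoding


def link_bandwidth_gbytes(gen: str, lanes: int) -> float:
    """Approximate one-direction bandwidth of a PCIe link in GB/s."""
    gbits = RATES_GT_S[gen] * ENCODING_EFFICIENCY * lanes
    return gbits / 8  # bits -> bytes


gen3 = link_bandwidth_gbytes("3.0", lanes=8)  # x8 is an assumed lane count
gen4 = link_bandwidth_gbytes("4.0", lanes=8)
print(f"PCIe 3.0 x8: {gen3:.2f} GB/s, PCIe 4.0 x8: {gen4:.2f} GB/s, "
      f"ratio {gen4 / gen3:.1f}x")
```

Because the encoding is the same in both generations, the doubling of the bit rate carries through directly to usable link bandwidth.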

In Conclusion

The test results point to the conclusion that servers using a dense number of high-speed storage devices, such as Dell EMC AFAs, NVMe devices, or Connectrix 32GFC switches, could be under-optimized if using an outdated HBA. By updating the previous Gen 6 FC HBA to the current Gen 7 FC HBA, users ensure that their networking components are not limiting the performance that the PowerEdge system was built to deliver.

Notes:

- ESG, 2019 Data Storage Predictions, 1/7/2019

- Demartek Evaluation, Emulex Gen 7 Fibre Channel HBAs by Broadcom, 12/2018

Related Documents

Dell PowerEdge R7625 Rack Server & Emulex LPe36002 Host Bus Adapter: 64G Fibre Channel Microsoft SQL Server

Fri, 29 Mar 2024 16:19:02 -0000

Dell PowerEdge R7625 Rack Server & Emulex LPe36002 Host Bus Adapter

64G Fibre Channel Microsoft SQL Server Performance – NVMe/FC vs. SCSI/FC

Tolly Report #224107

Tolly test report demonstrating that Dell PowerEdge R7625 Rack Server outfitted with the Emulex LPe36002 Host Bus Adapter using NVMe/FC can improve application performance vs older generation SCSI/FC.

Executive Summary

New generation servers can bring higher performance across a range of areas. This is certainly the case with Dell’s 16th-generation server line. Similarly, newer protocols like NVM Express (NVMe) over Fibre Channel (FC) can provide greater throughput and efficiency than older SCSI over FC. Dell is unique in offering an end-to-end NVMe/FC connectivity solution in the mid-range storage marketplace with the PowerStore line.

Dell commissioned Tolly to benchmark the performance of the Broadcom Emulex LPe36002 64G Fibre Channel dual-port host bus adapter (HBA) running in the Dell PowerEdge R7625 Rack Server with AMD EPYC processors by testing using actual database applications rather than simulated I/O microbenchmarks. Testing focused on evaluating the database throughput, latency, and CPU efficiency of accessing Microsoft SQL Server 2019 for Linux systems over older SCSI/FC and newer NVMe/FC. Databases were stored on a Dell PowerStore 9200T storage appliance.

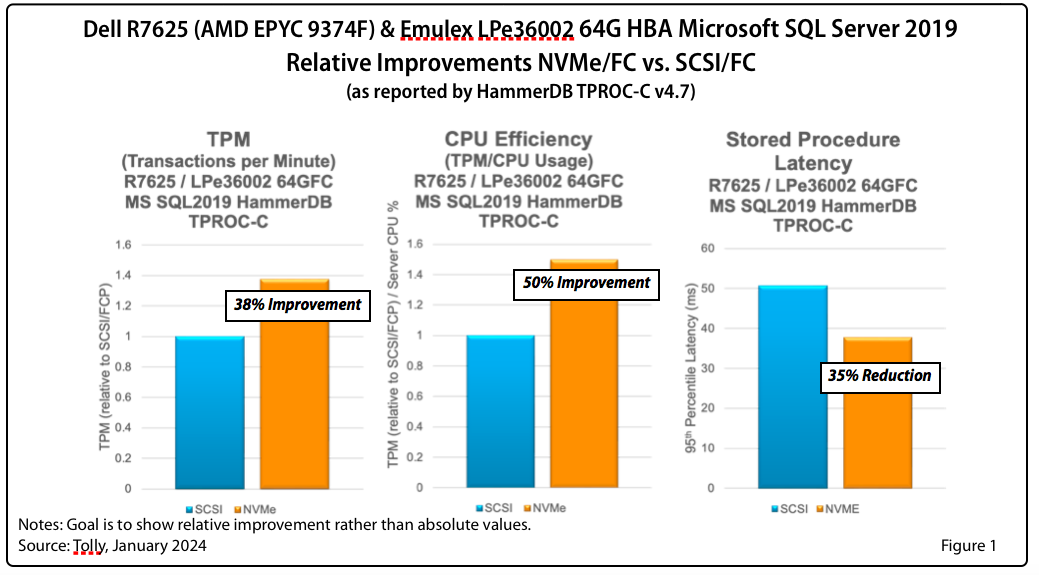

Tests showed significant improvements in transaction throughput, latency reduction, and CPU efficiency. See Figure 1 for a summary of relative improvements.

The Bottom Line

Dell PowerEdge R7625 with AMD EPYC processors & Emulex LPe36002 64G HBA using NVMe/FC:

1 | Improved database transactions by 38% |

2 | Reduced database stored procedure latency by 35% |

Overview

The goal of this test was to illustrate the performance benefits of using the newer, more-efficient NVMe/FC protocol in lieu of the older, less-efficient SCSI/FC protocol in conjunction with Emulex 64G FC HBAs running under Linux in a Dell PowerEdge R7625 Rack Server. (Dell sells the Emulex 64G FC HBA for the same price as the Emulex 32G FC HBA.)

The test was run using Microsoft SQL Server 2019 for Linux accessing the database via SCSI and then via NVMe.

While low-level component benchmarks are instructive, ultimately system architects are rightly most interested in how network-level improvements can translate into application performance improvements. This benchmarking was done with HammerDB which generates actual user transactions against an actual database. The test was focused on TPROC-C which is the HammerDB, database-oriented implementation of the de facto standard TPC-C online transaction processing benchmark.

Tests showed significant improvements in key benchmarks.

Test Results

Microsoft SQL Server 2019 for Linux

Transaction Processing. The NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, 38% more transactions per minute were processed.

CPU Efficiency. The NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, the CPU efficiency was improved by 50%.

P95 Stored Procedure Latency. Similarly, the NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, the latency was reduced by 35%.
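The report states relative results only. As a minimal sketch of how such percentages are typically derived, the helpers below compute a gain for higher-is-better metrics and a reduction for lower-is-better metrics. The raw values are hypothetical placeholders chosen to reproduce the reported percentages; they are not Tolly's measurements.

```python
def percent_increase(baseline: float, new: float) -> float:
    """Relative gain for higher-is-better metrics such as transactions per minute."""
    return (new - baseline) / baseline * 100


def percent_reduction(baseline: float, new: float) -> float:
    """Relative drop for lower-is-better metrics such as P95 latency."""
    return (baseline - new) / baseline * 100


# Hypothetical raw values, chosen only to reproduce the reported percentages.
scsi_tpm, nvme_tpm = 1_000_000, 1_380_000
scsi_p95_ms, nvme_p95_ms = 20.0, 13.0

print(f"TPM gain: {percent_increase(scsi_tpm, nvme_tpm):.0f}%")
print(f"P95 latency reduction: {percent_reduction(scsi_p95_ms, nvme_p95_ms):.0f}%")
```

Keeping the two directions in separate functions avoids the common mistake of reporting a latency reduction against the wrong baseline.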

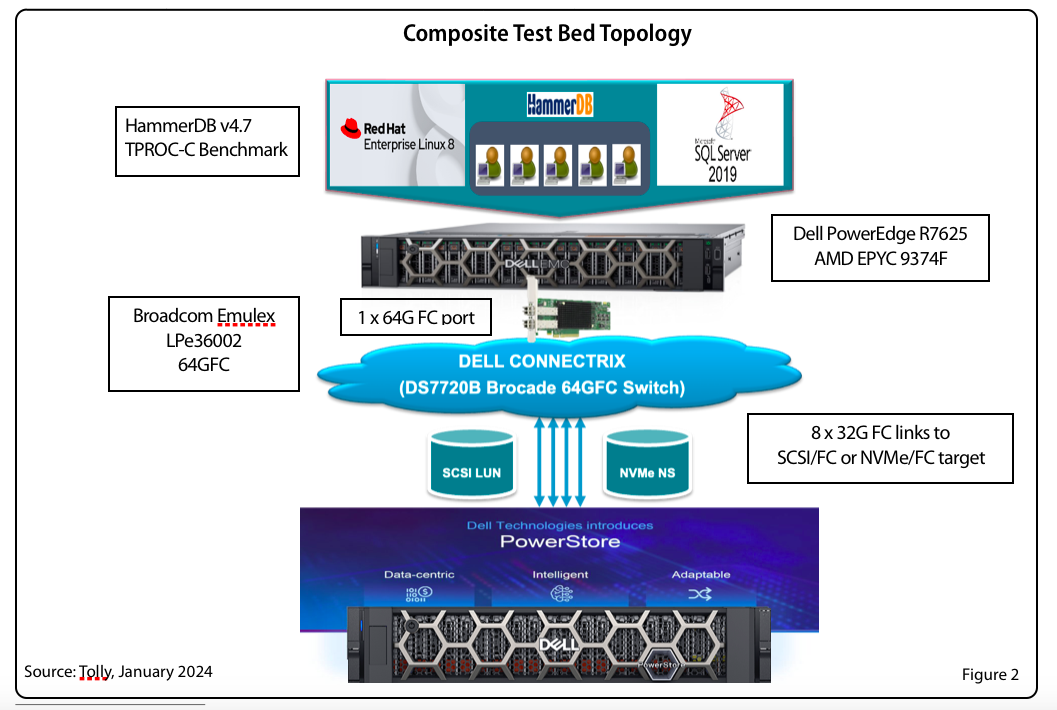

Test Setup & Methodology

The HBA under test used current production drivers that are publicly available. Default settings were used. Details of the test environment and systems under test are found in Tables 1-5. Figure 2 shows a composite test environment.

Database Test

The goal of this test was to benchmark the database transaction performance of each HBA running the HammerDB “TPROC-C” workload which, as noted earlier, is the HammerDB, database version of the Transaction Processing Performance Council’s TPC-C OLTP benchmark.

A Dell PowerEdge R7625 server, powered by AMD EPYC processors, was configured with the HBA under test. The Broadcom Emulex LPe36002 64G HBA connected to a Dell PowerStore 9200T via a Dell Connectrix 64G Fibre Channel switch. The test utilized a single 64G FC port of the Emulex HBA.

The server ran RHEL 8.9. SCSI Device Mapper and NVMe native multipath were enabled for the respective devices. NUMA was set to off and “transparent huge pages” was disabled.

For storage, the path selection policy for NVMe native multipath was set to "round-robin". For SCSI Device Mapper multipath, it was set to "queue-length 0".

This test was run using Microsoft SQL Server 2019 for Linux.
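Host settings like these can be verified before a run. The sketch below is an assumption about how one might check them on a Linux host, not part of the Tolly methodology: it reads the transparent huge pages mode and the NVMe native multipath I/O policy from their usual sysfs locations. The helper names are illustrative, and the SCSI path selector, which lives in the Device Mapper multipath configuration, is not checked here.

```python
from pathlib import Path


def active_option(sysfs_text: str) -> str:
    """Return the bracketed choice from text such as 'always madvise [never]'."""
    for token in sysfs_text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    return sysfs_text.strip()  # files like iopolicy hold a single bare value


def read_setting(path: str) -> str:
    """Read one sysfs setting, or report that the path is absent on this host."""
    p = Path(path)
    return active_option(p.read_text()) if p.exists() else "unavailable"


if __name__ == "__main__":
    print("THP:", read_setting("/sys/kernel/mm/transparent_hugepage/enabled"))
    # One entry per NVMe subsystem; the loop is empty if none are present.
    for sub in sorted(Path("/sys/class/nvme-subsystem").glob("nvme-subsys*")):
        print(sub.name, "iopolicy:", read_setting(str(sub / "iopolicy")))
```

On a host configured as described, the expectation would be a THP mode of "never" and an iopolicy of "round-robin" for the subsystem under test.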

The open source HammerDB test tool was used to populate the database schema and run the workload.

Table 1. HBA Under Test

Vendor | Product Name | Firmware | Driver |

Broadcom | Emulex LPe36002 (64G) (PCIe 4.0) | 14.0.539.26 | 14.0.0.15 |

Table 2. Server Configuration

Vendor/System | Dell PowerEdge R7625 |

CPU | 2 socket AMD EPYC 9374F 32-Core Processor @ 3.8 GHz |

Number of CPUs | 128 logical processors. Profile: Performance, Logical Processors: Enabled, Sub Numa Clustering: Disabled |

Memory (RAM) | 256 GB |

Power Mode | Performance |

OS | Red Hat Ent. Linux 8.9 (RHEL8) |

Kernel | 4.18.0-425.3.1 |

Table 3. Microsoft Database Configuration

Database | Microsoft SQL Server 2019 for Linux |

Storage | Single volume, XFS |

Dataset Size | 100 GB |

DB Memory Allocation | 10G |

Table 4. Database Test Tool

Vendor | Open Source |

Application | HammerDB 4.7 |

TPROC-C settings | Total # of Warehouses = 1,000; Transactions per user = 1 million; Ramp-up time: 2 minutes; Run time: 5 minutes |

Table 5. Storage Configuration

Vendor/Device | Dell PowerStore 9200T v3.5 |

Ports | 8 x 32G FC |

Volume Size | 1,024GB volume each for NVMe/FC and SCSI/FC |

Namespace/LUN | 8 x 32G target ports (single namespace) |

Network Fabric | Dell Connectrix 64G FC switch v9.0.1a |

About AMD

For over 50 years, AMD has been at the forefront of driving innovation in high-performance computing, graphics, and visualization technologies. Their products are relied upon by billions of people, leading Fortune 500 businesses, and cutting-edge scientific research institutions worldwide. AMD's mission is to build exceptional products that accelerate next-generation computing experiences and power solutions for the world's most important challenges. Visit http://www.amd.com for more information about AMD.

Broadcom Emulex LPe36002

The Broadcom Emulex LPe36000-series Gen 7 Fibre Channel HBAs are designed for demanding mission-critical workloads and emerging applications. The family of adapters features Silicon Root of Trust security, designed to thwart firmware attacks aimed at enterprises and governments.

Gen 7 64G provides seamless backward compatibility to 32G and 16G networks.

Dell sells the LPe36002 64G HBA for the same price as the 32G model.

About Tolly

The Tolly Group companies have been delivering world-class IT services for over 30 years. Tolly is a leading global provider of third-party validation services for vendors of IT products, components and services.

You can reach the company by E-mail at sales@tolly.com, or by telephone at +1 561.391.5610.

Visit Tolly on the Internet at: http://www.tolly.com

Tolly Terms Of Usage

This document is provided, free-of-charge, to help you understand whether a given product, technology, or service merits additional investigation for your particular needs. Any decision to purchase a product must be based on your own assessment of suitability based on your needs. The document should never be used as a substitute for advice from a qualified IT or business professional. This evaluation was focused on illustrating specific features and/or performance of the product(s) and was conducted under controlled, laboratory conditions. Certain tests may have been tailored to reflect performance under ideal conditions; performance may vary under real-world conditions. Users should run tests based on their own real-world scenarios to validate performance for their own networks.

Reasonable efforts were made to ensure the accuracy of the data contained herein but errors and/or oversights can occur. The test/audit documented herein may also rely on various test tools the accuracy of which is beyond our control. Furthermore, the document relies on certain representations by the sponsor that are beyond our control to verify. Among these is that the software/hardware tested is production or production track and is, or will be, available in equivalent or better form to commercial customers. Accordingly, this document is provided "as is," and Tolly Enterprises, LLC (Tolly) gives no warranty, representation or undertaking, whether express or implied, and accepts no legal responsibility, whether direct or indirect, for the accuracy, completeness, usefulness, or suitability of any information contained herein. By reviewing this document, you agree that your use of any information contained herein is at your own risk, and you accept all risks and responsibility for losses, damages, costs, and other consequences resulting directly or indirectly from any information or material available on it. Tolly is not responsible for, and you agree to hold Tolly and its related affiliates harmless from any loss, harm, injury, or damage resulting from or arising out of your use of or reliance on any of the information provided herein.

Tolly makes no claim as to whether any product or company described herein is suitable for investment. You should obtain your own independent professional advice, whether legal, accounting or otherwise, before proceeding with any investment or project related to any information, products or companies described herein. When foreign translations exist, the English document is considered authoritative. To assure accuracy, only use documents downloaded directly from Tolly.com. No part of any document may be reproduced, in whole or in part, without the specific written permission of Tolly. All trademarks used in the document are owned by their respective owners. You agree not to use any trademark in or as the whole or part of your own trademarks in connection with any activities, products or services which are not ours, or in a manner which may be confusing, misleading, or deceptive or in a manner that disparages us or our information, projects or developments.

Lab Insight: Dell AI PoC for Transportation & Logistics

Wed, 20 Mar 2024 21:23:12 -0000

Introduction

As part of Dell’s ongoing efforts to help make industry-leading AI workflows available to its clients, this paper outlines a sample AI solution for the transportation and logistics market. The reference solution outlined in this paper specifically targets challenges in the maritime industry by creating an AI-powered cargo monitoring PoC built with Dell™ hardware.

AI as a technology is currently in a rapid state of advancement. While the area of AI has been around for decades, recent breakthroughs in generative AI and large language models (LLMs) have led to significant interest across almost all industry verticals, including transportation and logistics. Futurum Intelligence projects a 24% growth of AI in the transportation industry in 2024 and a 30% growth for logistics.

The advancements in AI now open significant possibilities for new value-adding applications and optimizations; however, different industries will require different hardware and software capabilities to overcome industry-specific challenges. When considering AI applications for transportation and logistics, a key challenge is operating at the edge. AI-powered applications for transportation will typically be heavily driven by on-board sensor data with locally deployed hardware. This presents a specific challenge, requiring hardware that is compact enough for edge deployments, powerful enough to run AI workloads, and robust enough to endure varying edge conditions.

This paper outlines a PoC for an AI-based transportation and logistics solution that is specifically targeted at maritime use cases. Maritime environments represent some of the most rigorous edge environments, while also presenting an industry with significant opportunity for AI-powered innovation. The PoC outlined in this paper addresses the unique challenges of maritime-focused AI solutions with hardware from Dell and Broadcom™.

The PoC detailed in this paper serves as a reference solution that can be leveraged for additional maritime, transportation, or logistics applications. The overall applicability of AI in these markets is much broader than the single maritime cargo monitoring solution; however, the PoC demonstrates the ability to quickly deploy valuable edge-based solutions for transportation and logistics using readily available edge hardware.

Importance for the Transportation and Logistics Market

Transportation and logistics cover a broad industry with opportunity for AI technology to create a significant impact. While the overarching segment is widespread, including public transportation, cargo shipping, and end-to-end supply chain management, key to any transportation or logistics process is optimization. These processes are dependent on a high number of specific details and variables such as specific routes, number and types of goods transported, compliance regulations, and weather conditions. By optimizing for the many variables that may arise in a logistical process, organizations can be more efficient, save money, and avoid risk.

In order to create these optimal processes, however, the data surrounding the many variables involved needs to be captured. Further, this data needs to be analyzed, understood, and acted on. The large quantity of data required and the speed at which it must be processed in order to make impactful decisions to complex logistical challenges often surpasses what a human can achieve manually.

By leveraging AI technology, impactful decisions about transportation and logistics processes can be reached more quickly and with greater accuracy. Cameras and other sensors can capture relevant data that is then processed and understood by an AI model. AI can quickly process vast amounts of data and lead to optimized logistics conclusions that would otherwise be too time-consuming, costly, or complex for organizations to reach.

The potential applications for AI in transportation are vast and can be applied to various means of transportation including shipping, rail, air, and automotive, as well as associated logistical processes such as warehouses and shipping yards. One possible example is AI optimized route planning which could pertain to either transportation of cargo or public transportation and could optimize for several factors including cost, weather conditions, traffic, or environmental impact. Additional applications could include automated fleet management, AI powered predictive vehicle maintenance, and optimized pricing. As AI technology improves, many transportation services may be additionally optimized with the use of autonomous vehicles.

By adopting such AI-powered applications, organizations can implement optimizations that may not otherwise be achievable. While new AI applications show promise of significant value, many organizations may find adopting the technology a challenge due to unfamiliarity with the new and rapidly advancing technology. Deploying complex applications such as AI in transportation environments can pose an additional challenge due to the requirements of operating in edge environments.

The following PoC solution outlines an example of a transportation focused AI application that can offer significant value to maritime shipping by providing AI-powered cargo monitoring using Dell hardware at the edge.

Solution Overview

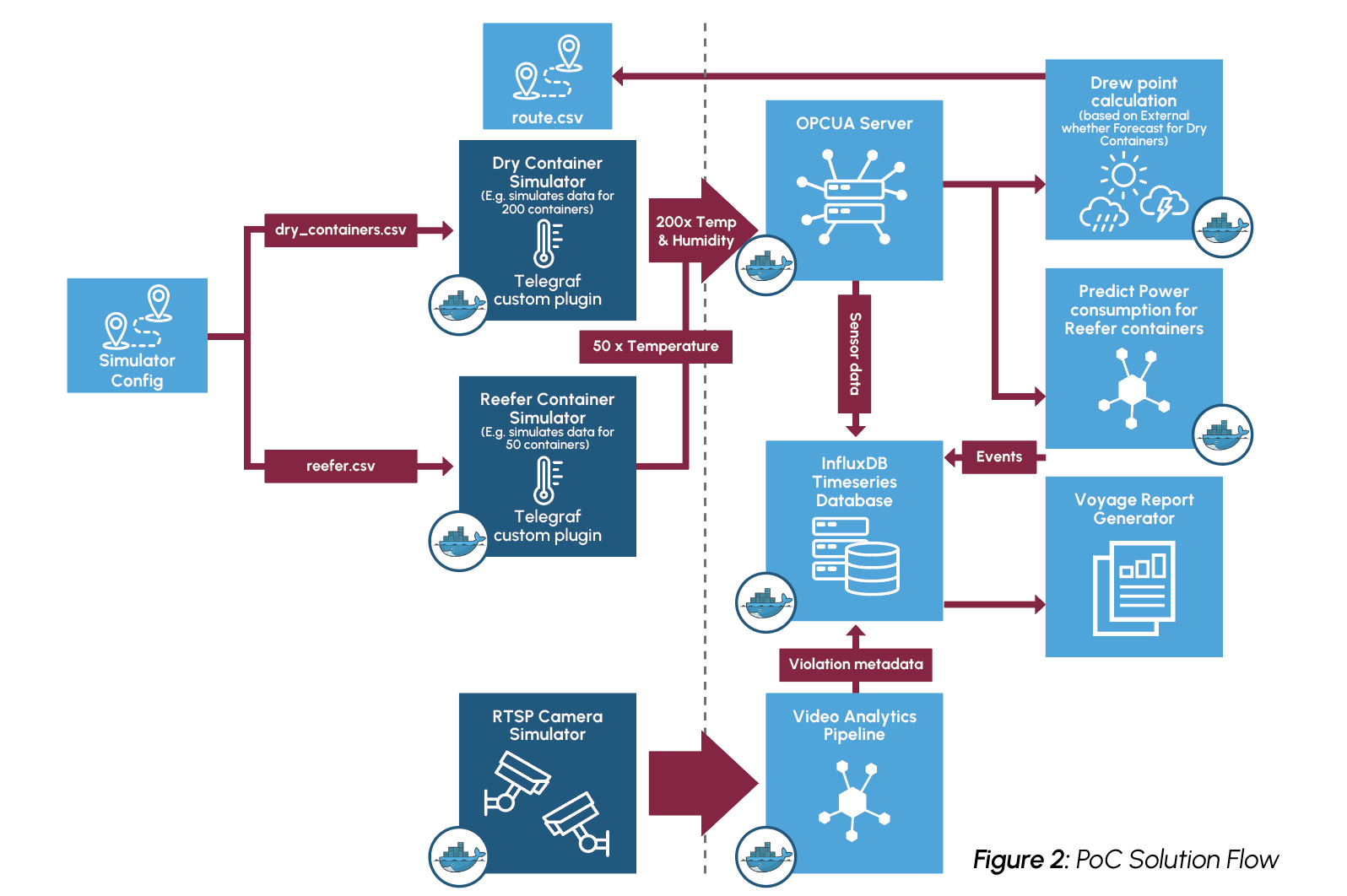

To demonstrate an AI-powered application focused on transportation and logistics, Scalers AI™, in partnership with Dell, Broadcom, and The Futurum Group, implemented a proof-of-concept for a maritime cargo monitoring solution. The solution was designed to capture sensor data from cargo ships as well as image data from on-board cameras. Cargo containers can be monitored for temperature and humidity to ensure optimal conditions are maintained for the shipped cargo. In addition, cameras can be used to monitor workers in the cargo area to ensure worker safety and prevent injury. The captured data is then utilized by an LLM to create an AI-generated compliance report at the end of the ship’s voyage.

This proof-of-concept addresses several problems that can be encountered in maritime shipping. Refrigerated cargo, known as reefer, is utilized to ship perishable items and pharmaceuticals that must be kept at specific temperatures. Without proper monitoring to ensure optimal temperatures, reefer may experience swings in temperature, resulting in spoiled products and ultimately financial loss. Predictive forecasting of the power requirements for refrigerated cargo can provide additional cost and environmental savings by providing greater power usage insights.

Similarly, dry cargo can become spoiled or damaged when exposed to excessive moisture. Moisture can be introduced in the form of condensation (known as cargo sweat) due to changes in climate and humidity during the ship's journey. By monitoring the temperature and humidity of the cargo, alerts can be raised that signal the possibility of cargo sweat, allowing ventilation adjustments to be made that can prevent moisture-related damage.

A third issue addressed by the maritime cargo monitoring PoC is that of worker safety. The possibility of shifting cargo containers can lead to dangerous situations and potential injuries for those working in container storage areas. By using video surveillance of workers in cargo areas, these potential injuries can be avoided.

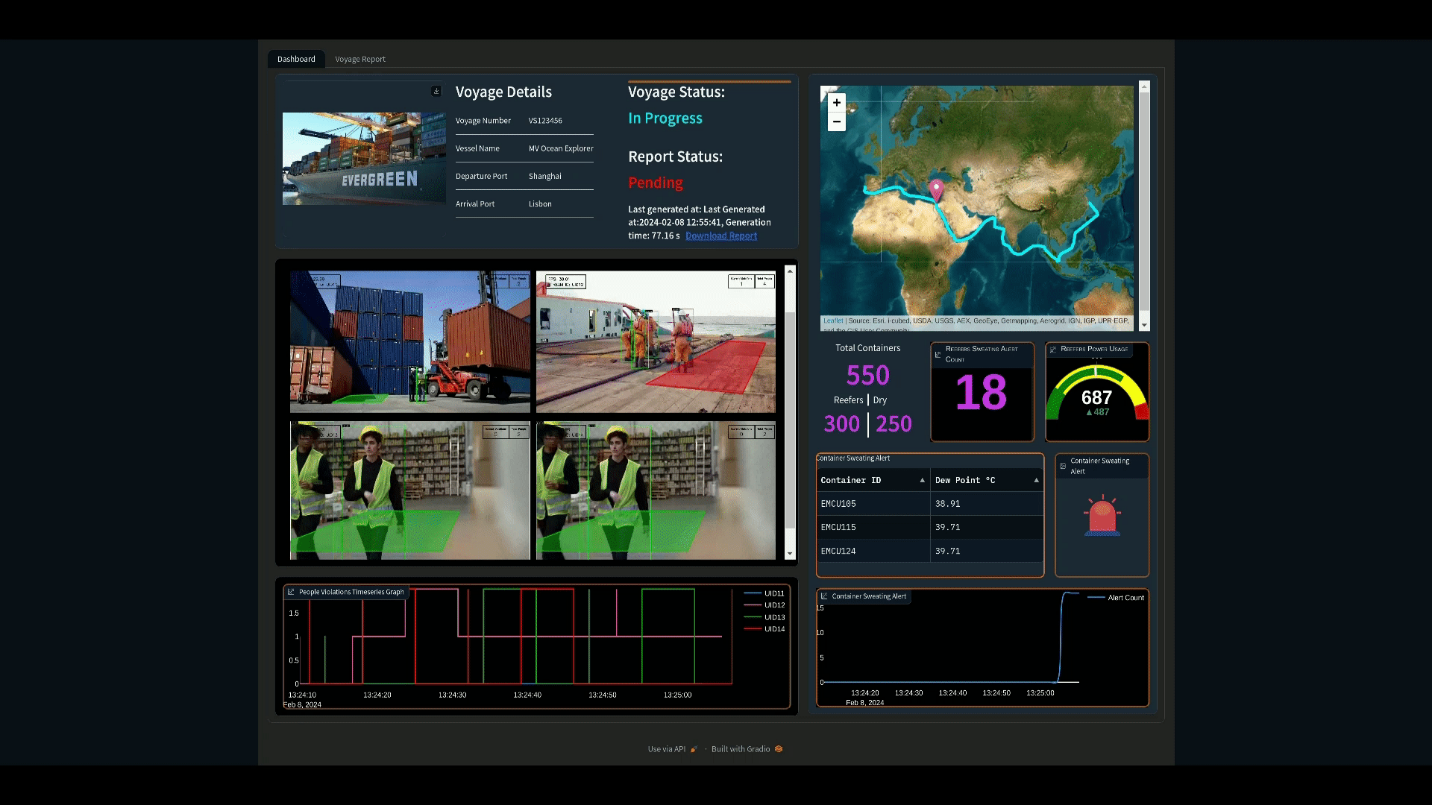

The PoC provides monitoring of these challenges with an additional visualization dashboard that displays information such as number of cargo containers, forecasted energy consumption, container temperature and humidity, and a video feed of workers. The dashboard additionally raises alerts as issues arise in any of these areas. This information is further compiled into an end-of-voyage report for compliance and logging purposes, automatically generated with an LLM.

To achieve the PoC solution, simulated sensor data is generated for both reefers and dry containers, approximating the conditions undergone during a real voyage. The sensor data is written to an OPC UA server, which then supplies data to a container sweat analytics module and a power consumption predictor. For dry containers, the temperature and humidity data is utilized alongside the forecasted weather of the route to create dew point calculations and monitor potential container sweat. Sensor data recording the temperature of reefer containers is monitored to ensure accurate temperatures are maintained, and a decision tree regressor model is leveraged to predict power consumption for the next hour.
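The dew point calculation mentioned above can be illustrated with a short sketch. The PoC's exact formula is not stated, so this example assumes the widely used Magnus approximation; the coefficients, the alert margin, and the function names are illustrative choices rather than details of the PoC.

```python
import math

# Magnus coefficients (an assumed choice; the PoC's exact formula is not stated).
A, B = 17.62, 243.12  # B is in degrees Celsius


def dew_point_c(temp_c: float, rel_humidity_pct: float) -> float:
    """Approximate dew point in Celsius from air temperature and relative humidity."""
    gamma = math.log(rel_humidity_pct / 100.0) + A * temp_c / (B + temp_c)
    return B * gamma / (A - gamma)


def sweat_risk(surface_temp_c: float, air_temp_c: float, rel_humidity_pct: float,
               margin_c: float = 1.0) -> bool:
    """Flag condensation risk when a surface is within margin_c of the dew point."""
    return surface_temp_c <= dew_point_c(air_temp_c, rel_humidity_pct) + margin_c


print(round(dew_point_c(25.0, 60.0), 1))
print(sweat_risk(surface_temp_c=15.0, air_temp_c=25.0, rel_humidity_pct=60.0))
```

Condensation forms when a surface is at or below the dew point of the surrounding air, so comparing a measured surface temperature against the computed dew point, with a small safety margin, gives a simple alert condition.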

Figure 1: Visualization Dashboard

For monitoring worker safety, RTSP video data is captured into a video analytics pipeline built on NVIDIA™ DeepStream. Streaming data is decoded and then inferenced using the YOLOv8s model to detect workers entering dangerous, restricted zones. The restricted zones are configured as x,y coordinate pairs stored as JSON objects. Uncompressed video is then published to the visualization service using the Zero Overhead Network Protocol (Zenoh).

Monitoring and alerts for all of these challenges are displayed on a visualization dashboard, as can be seen in Figure 1, and are summarized in an end-of-voyage compliance report. The resulting compliance report, which details the information collected on the voyage, is AI generated using the Zephyr 7B model. Testing of the PoC found that the report could be generated in approximately 46 seconds, dramatically accelerating the reporting process compared to a manual approach.
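The restricted-zone check can be sketched as a point-in-polygon test against zones loaded from JSON. This is a minimal illustration only: the JSON layout, the zone name, and the choice of a bounding box's bottom-center as the worker's position are all assumptions, since the PoC's actual schema and logic are not shown here.

```python
import json

# Hypothetical zone file content; the PoC's actual JSON schema is not shown.
ZONES_JSON = '{"zone_a": [[100, 100], [400, 100], [400, 300], [100, 300]]}'


def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies inside the polygon's vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Count edges that a horizontal ray from (x, y) toward +x crosses.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def violated_zones(bbox, zones):
    """Return zone names containing the bottom-center of an (x1, y1, x2, y2) box."""
    foot_x, foot_y = (bbox[0] + bbox[2]) / 2, bbox[3]
    return [name for name, poly in zones.items()
            if point_in_polygon(foot_x, foot_y, poly)]


zones = json.loads(ZONES_JSON)
print(violated_zones((200, 150, 260, 280), zones))
```

Using the bottom-center of the detection box approximates where a worker is standing, which tends to match floor-level zones better than the box center.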

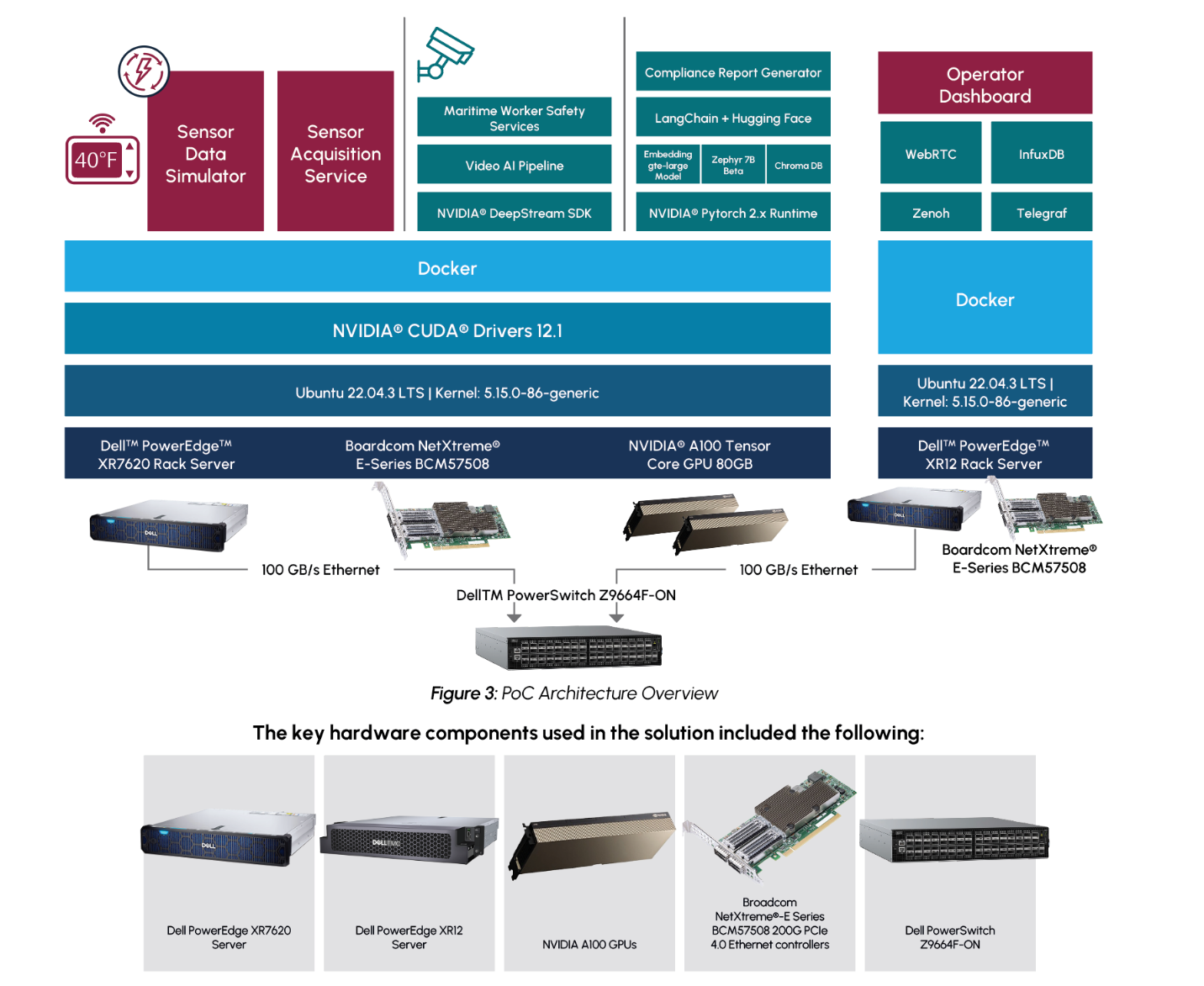

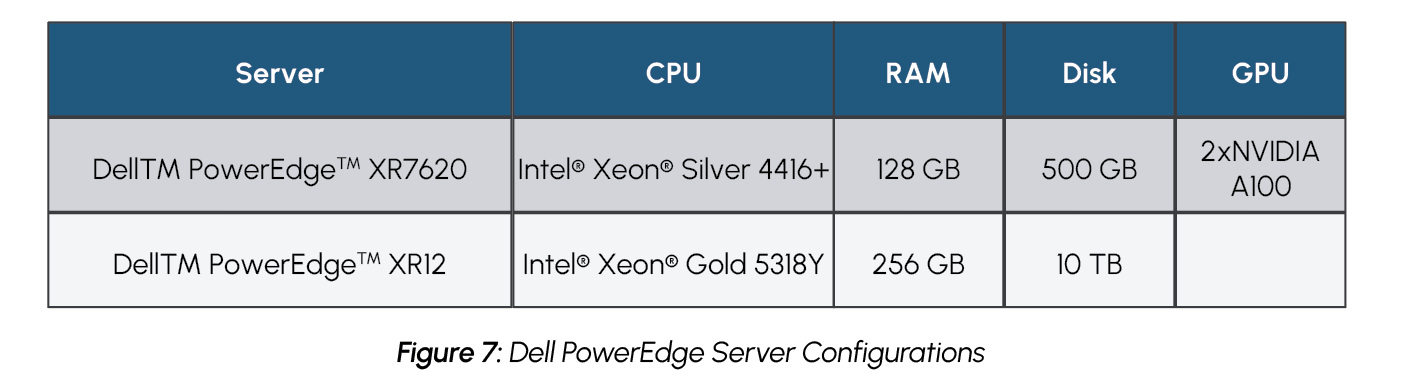

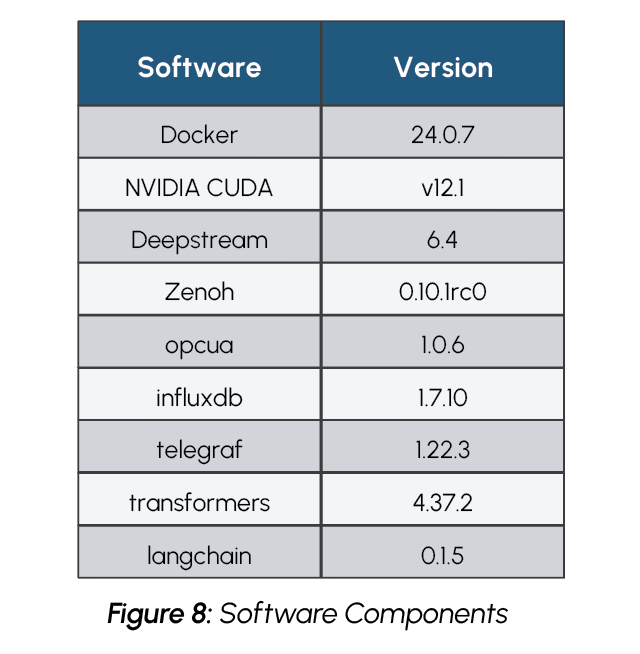

To achieve the PoC solution in line with the constraints of a typical maritime use case, the solution was deployed using Dell PowerEdge servers designed for the edge. The sensor data calculations and predictions, video pipeline, and AI report generation were achieved on a Dell PowerEdge XR7620 server with dual NVIDIA A100 GPUs. A Dell PowerEdge XR12 server was deployed to host the visualization dashboard. The two servers were connected with high bandwidth Broadcom NICs.

An overview of the solution can be seen in Figure 2.

Additional details about the implementation and performance testing of the PoC are available on GitHub, including:

- Configuration information including diagrams and YAML code

- Instructions for doing the performance tests

- Details of performance results

- Source code

- Samples for test process

https://github.com/dell-examples/generative-ai/tree/main/transportation-maritime

Highlights for AI Practitioners

The cargo monitoring PoC demonstrates a solution that can avoid product loss, enhance compliance and logging, and improve worker safety, all by using AI. The creation of these AI processes was done using readily available AI tools. The process of creating valuable, real-world solutions by utilizing such tools should be noted by AI practitioners.

The end of voyage compliance report is generated using the Zephyr 7B LLM model created by Hugging Face’s H4 team. The Zephyr 7B model, which is a modified version of Mistral 7B, was chosen as it is a publicly available model that is both lightweight and highly accurate. The Zephyr 7B model was created using a process called Distilled Supervised Fine Tuning (DSFT) which allows the model to provide similar performance to much larger models, while utilizing far fewer parameters. Zephyr 7B, which is a 7 Billion parameter model, has demonstrated performance comparable to 70 Billion parameter models. This ability to provide the capabilities of larger models in a smaller, distilled model makes Zephyr 7B an ideal choice for edge-based deployments with limited resources, such as in maritime or other transportation environments.

While Zephyr 7B is a very powerful and accurate LLM model, it was trained on a broad data set and it is intended for general purpose usage, rather than specific tasks such as generating a maritime voyage compliance report. In order to generate a report that is accurate to the maritime industry and the specific voyage, more context must be supplied to the model. This was achieved using a process called Retrieval Augmented Generation (RAG). By utilizing RAG, the Zephyr 7B model is able to incorporate the voyage specific information to generate an accurate report which detailed the recorded container and worker safety alerts. This is notable for AI practitioners as it demonstrates the ability to use a broad, pre-trained LLM model, which is freely available, to achieve an industry specific task.

To provide the voyage specific context to the LLM generated report, time series data of recorded events, such as container sweating, power measurements, and worker safety violations, is queried from InfluxDB at the end of the voyage. This text data is then embedded using the Hugging Face LangChain API with the gte-large embedding model and stored in a ChromaDB vector database. These vector embeddings are then used in the RAG process to provide the Zephyr 7B model with voyage specific context when generating the report.
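The retrieval step of this RAG flow can be sketched without any external services. The example below is a toy stand-in, not the PoC's implementation: a bag-of-words vector replaces the gte-large embedding model, an in-memory list replaces ChromaDB, and the event strings are hypothetical. It only shows how the most relevant records are selected and placed into the prompt as context.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a stand-in for a real model such as gte-large."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (the 'R' in RAG)."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


# Hypothetical event records, standing in for rows queried from the time series store.
events = [
    "container 12 sweat alert dew point exceeded at 03:10",
    "worker safety violation in restricted zone at 07:45",
    "reefer 4 power consumption forecast 5.2 kW",
]
context = retrieve("worker safety violation summary", events, k=1)
prompt = ("Context:\n" + "\n".join(context)
          + "\n\nWrite the worker safety section of the voyage report.")
print(prompt)
```

In a real deployment the embedding model captures meaning rather than exact word overlap, but the structure is the same: embed, rank by similarity, and pass the top results to the LLM alongside the instruction.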

AI practitioners should also note that AI image detection is utilized to detect workers entering restricted zones. This image detection capability was built using the YOLOv8s object detection model and NVIDIA DeepStream. YOLOv8s is a state-of-the-art, open source AI model for object detection built by Ultralytics. The model is used to detect workers within a video frame and determine whether they enter pre-configured restricted zones. NVIDIA DeepStream is a software development toolkit provided by NVIDIA to build and accelerate AI solutions from streaming video data, and it is optimized for NVIDIA hardware such as the A100 GPUs used in this PoC. It is notable that NVIDIA DeepStream can be utilized for free to build powerful video-based AI applications, such as the worker detection component of the maritime cargo monitoring solution. In this case, the YOLOv8s model and the DeepStream toolkit are utilized to build a solution that has the potential to prevent serious workplace injuries.

Key Highlights for AI Practitioners

- Maritime compliance report generated with Zephyr 7B LLM model

- Retrieval Augmented Generation (RAG) approach used to provide Zephyr 7B with voyage specific information

- YOLOv8s and NVIDIA DeepStream used to create powerful AI worker detection solution using video streaming data

Considerations for IT Operations

The maritime cargo monitoring PoC is notable for IT operations as it demonstrates the ability to deploy a powerful AI driven solution at the edge. For many in IT, AI deployments in any setting may be a challenge, due to overall unfamiliarity with AI and its hardware requirements. Deployments at the edge introduce even further complexity.

Hardware deployed at the edge requires additional considerations, including limited space and exposure to harsh conditions, such as extreme temperature changes. For AI applications deployed at the edge, these requirements must be maintained, while simultaneously providing a system powerful enough to handle such a computationally intensive workload.

For the maritime cargo monitoring PoC, Dell PowerEdge XR7620 and PowerEdge XR12 servers were chosen for their ability to meet both the most demanding edge requirements, as well as the most demanding computational requirements. Both servers are ruggedized and are capable of operating in temperatures ranging from -5°C to 55°C, as well as withstanding dusty or otherwise hazardous environments. They additionally offer a compact design that is capable of fitting into tight environments. This provides servers that are ideal for a demanding edge environment, such as in maritime shipping, which may experience large temperature swings and may have limited space for servers. Meanwhile, the Dell PowerEdge XR7620 is also equipped with NVIDIA GPUs, providing it with the compute power necessary to handle AI workloads.

Dell PowerEdge XR7620

NVIDIA A100 GPUs were chosen as they are well suited for various types of AI workflows. The PoC includes both a video classification component and a large language model component, requiring hardware that is well suited for both workloads. While there are other processors that are more specialized specifically for either video processing or language models, the A100 GPU provides flexibility to perform both well on a single platform.

The use of high bandwidth Broadcom NICs is also a notable component of the PoC solution for IT operations to be aware of. The Broadcom NICs are responsible for providing a high bandwidth Ethernet connection between the cargo and worker monitoring applications and the visualization and alerting dashboard. The use of scalable, high bandwidth NICs is crucial to such a solution that requires transmitting large amounts of sensor and video data, which may include time sensitive information.

Detection of issues with either reefer or dry containers may require quick action to protect the cargo, and quick detection of workers in hazardous environments can prevent serious harm or injury. The use of a high bandwidth Ethernet connection ensures that data can be quickly transmitted and received by the visualization dashboard for operators to respond to alerts as they arise.

Key Highlights for IT Operations

- AI solution deployed on rugged Dell PowerEdge XR7620 and PowerEdge XR12 servers to accommodate the edge environment and maintain high computational requirements.

- NVIDIA A100 GPUs provide flexibility to support both video and LLM workloads.

- Broadcom NICs provide high bandwidth connection between monitoring applications and visualization dashboard.

Solution Performance Observations

Key to the performance of the maritime cargo monitoring PoC is its ability to scale to support multiple concurrent video streams for monitoring worker safety. The solution must be able to quickly decode incoming video data and run inference on it to detect workers in restricted areas. The ability of the visualization dashboard to quickly receive this data is also critical for actions to be taken on alerts as they are raised. The solution was separated into a distinct inference server, which captures data and runs inference, and an encode server, which displays the visualization service. This architecture allows the services to scale independently as needed for varying requirements of video streams and application logic. The separate services are connected with high bandwidth Ethernet using Broadcom NetXtreme®-E Series Ethernet controllers. The following performance data demonstrates the ability to scale the solution with an increasing number of data streams. Each test was run for a total of 10 minutes, and video streams were scaled evenly across the two NVIDIA A100 GPUs. Additional performance results are available in the appendix.
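One simple way to picture the even scaling of streams across the two GPUs is a round-robin assignment. The sketch below is illustrative only: the function name `assign_streams` and the use of integer stream and GPU IDs are assumptions made for this example, and the paper does not describe how the PoC actually mapped streams to GPUs.

```python
def assign_streams(num_streams: int, num_gpus: int = 2) -> dict[int, list[int]]:
    """Distribute stream IDs round-robin so GPU loads differ by at most one.

    Hypothetical helper for illustration; not taken from the PoC software.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    assignment: dict[int, list[int]] = {gpu: [] for gpu in range(num_gpus)}
    for stream_id in range(num_streams):
        # Stream 0 goes to GPU 0, stream 1 to GPU 1, stream 2 to GPU 0, ...
        assignment[stream_id % num_gpus].append(stream_id)
    return assignment


if __name__ == "__main__":
    # Stream counts here are chosen to end at the 24-stream maximum tested.
    for count in (1, 8, 24):
        plan = assign_streams(count)
        print(count, {gpu: len(ids) for gpu, ids in plan.items()})
```

With 24 streams and two GPUs, this assignment places 12 streams on each GPU.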

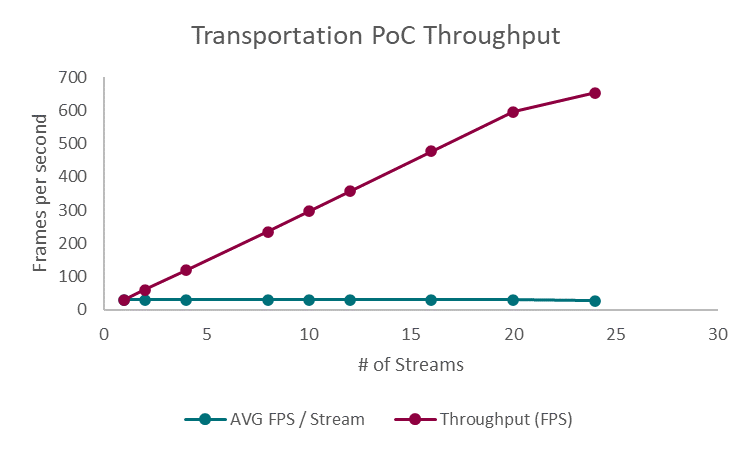

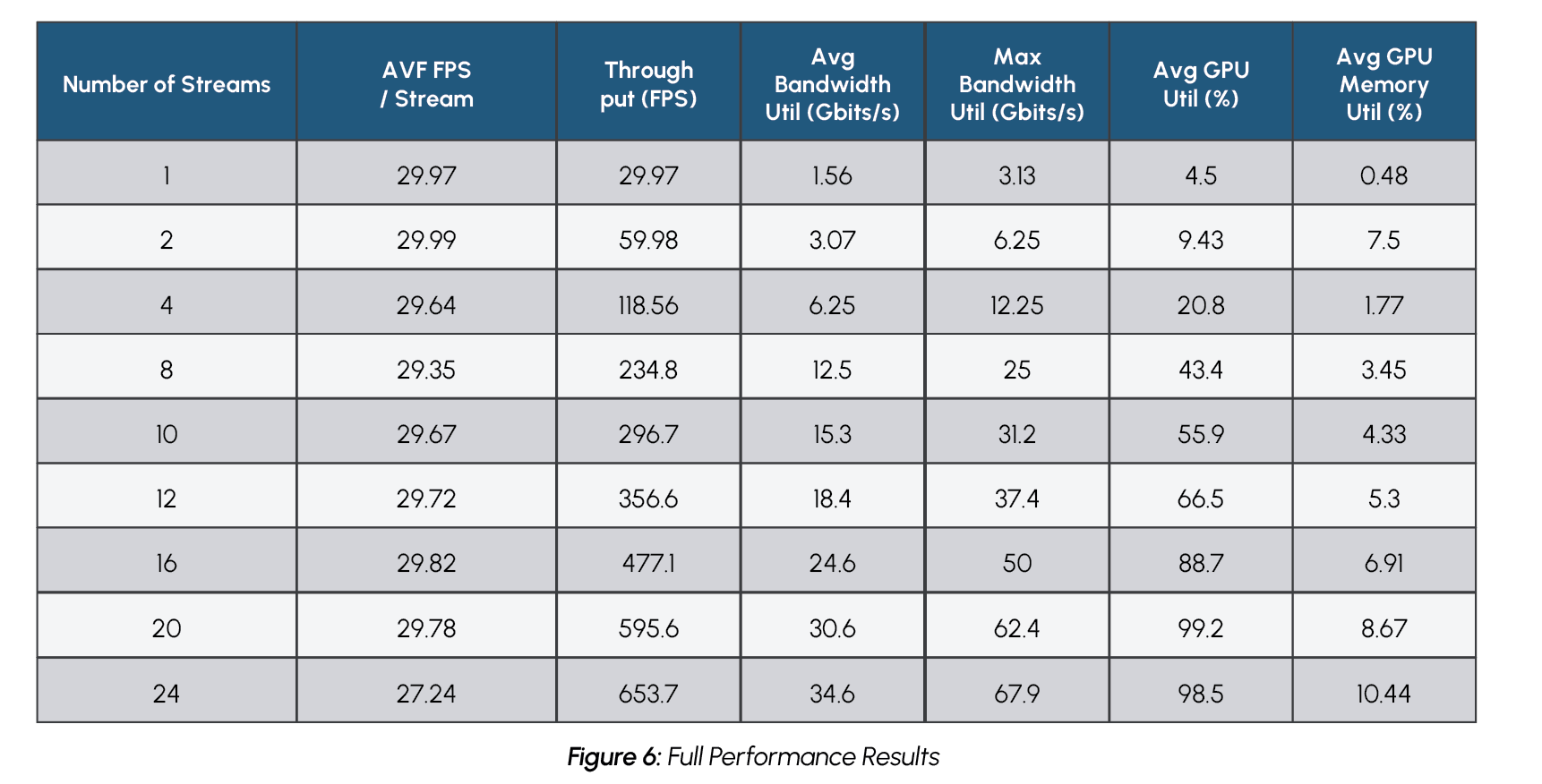

Figure 4: Transportation PoC Throughput

Figure 4 displays the total throughput of frames per second as well as the average throughput as the number of streams increased. The frames per second metric includes video decoding, inference, post-processing, and publishing of an uncompressed stream. The PoC displayed increasing throughput with a maximum of 653.7 frames per second when tested with 24 concurrent streams. Notably, the average frames per second remained steady at approximately 30 frames per second for up to 20 streams, which is considered an industry standard for video processing workloads. When tested with 24 streams, the solution did experience a slight drop, with an average of 27.24 frames per second. Overall, the throughput performance demonstrates the ability of the Dell PowerEdge Server and the NVIDIA A100 GPUs to successfully handle a demanding video-based AI workload with a significant number of concurrent streams.
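The relationship between total and average throughput can be checked with a short calculation. The sketch below uses only the 24-stream figures quoted above; the function names and the choice to express the drop as a percentage of the 30 frames per second reference are assumptions made for illustration.

```python
TARGET_FPS = 30.0  # per-stream rate the paper cites as the industry standard


def average_fps(total_fps: float, streams: int) -> float:
    """Average per-stream throughput, given aggregate frames per second."""
    return total_fps / streams


def shortfall_pct(avg_fps: float, target_fps: float = TARGET_FPS) -> float:
    """Percentage by which the per-stream average falls below the target."""
    return (target_fps - avg_fps) / target_fps * 100.0


if __name__ == "__main__":
    avg = average_fps(653.7, 24)  # reported maximum total at 24 streams
    print(f"average per stream: {avg:.2f} fps")
    print(f"below 30 fps target by: {shortfall_pct(avg):.1f}%")
```

Dividing 653.7 frames per second by 24 streams gives approximately 27.24, matching the reported average, which is roughly 9% below the 30 frames per second reference.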

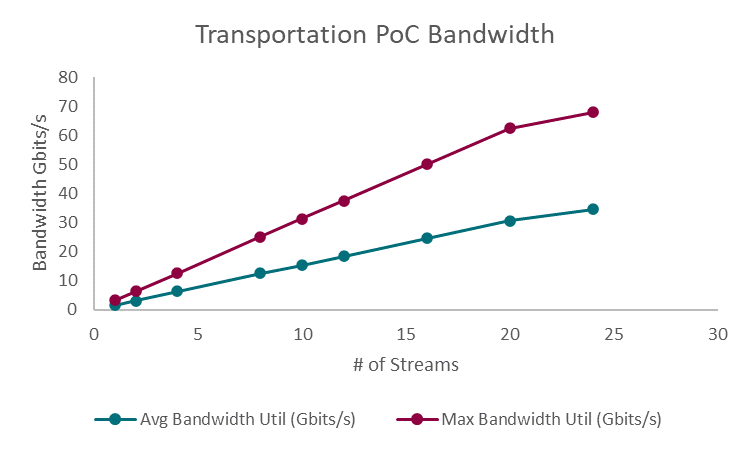

Figure 5: Transportation PoC Bandwidth Utilization

Figure 5 displays the solution’s bandwidth utilization as the number of streams increased from 1 to 24. The results demonstrate the increase in required bandwidth, both at a maximum and on average, as the number of streams increased. The average bandwidth utilization scaled from 1.56 Gb/s with a single video stream to 34.6 Gb/s when supporting 24 concurrent streams. The maximum bandwidth utilization was observed to be 3.13 Gb/s with a single stream and 67.9 Gb/s with 24 streams. By utilizing scalable, high bandwidth 100Gb/s Broadcom Ethernet, the solution is able to meet the increasing bandwidth demand as video streams are added.
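To put these figures in context against the 100Gb/s link, the following sketch derives per-stream bandwidth and link utilization from the reported 24-stream numbers. The function names are hypothetical, and treating the nominal 100 Gb/s as fully usable capacity is a simplifying assumption that ignores protocol overhead.

```python
LINK_CAPACITY_GBPS = 100.0  # nominal rate of the Broadcom Ethernet link


def per_stream_gbps(total_gbps: float, streams: int) -> float:
    """Bandwidth attributable to each stream, assuming an even split."""
    return total_gbps / streams


def utilization_pct(used_gbps: float, capacity_gbps: float = LINK_CAPACITY_GBPS) -> float:
    """Share of the nominal link capacity in use, as a percentage."""
    return used_gbps / capacity_gbps * 100.0


if __name__ == "__main__":
    streams = 24
    avg_gbps, max_gbps = 34.6, 67.9  # reported values at 24 streams
    print(f"average per stream: {per_stream_gbps(avg_gbps, streams):.2f} Gb/s")
    print(f"average link utilization: {utilization_pct(avg_gbps):.1f}%")
    print(f"peak link utilization: {utilization_pct(max_gbps):.1f}%")
    print(f"headroom at peak: {LINK_CAPACITY_GBPS - max_gbps:.1f} Gb/s")
```

Under these assumptions, the peak of 67.9 Gb/s at 24 streams uses about two thirds of the nominal link rate, leaving roughly 32 Gb/s of headroom.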

The performance results showcase the PoC as a flexible solution that can be scaled to accommodate varying levels of video requirements while maintaining performance and scaling bandwidth as needed. The solution also provides the foundation for additional AI-powered transportation and logistics applications that may require similar transmission of sensor and video data.

Final Thoughts

The maritime cargo monitoring PoC provides a concrete example of how AI can improve transportation and logistics processes by monitoring container conditions, detecting dangerous working environments, and generating automated compliance reports. While the PoC presented in this paper is limited in scope and executed using simulated sensor datasets, the solution serves as a starting point for expanding such a solution and a reference for developing related AI applications.

The solution additionally demonstrates several notable results. The solution utilizes readily available AI tools including Zephyr 7B, YOLOv8s, and NVIDIA DeepStream to create valuable AI applications that can be deployed to provide tangible value in industry specific environments. The use of RAG in the Zephyr 7B implementation is especially notable, as it provides customization to a general-purpose language model, enabling it to function for a maritime specific use case. The PoC also showcased the ability to deploy an AI solution in demanding edge environments with the use of Dell PowerEdge XR7620 and XR12 servers and to provide high bandwidth when transmitting critical data by using Broadcom NICs.

When tested, the PoC solution demonstrated the ability to scale up to 24 concurrent streams while experiencing little loss of throughput and successfully supporting increased bandwidth requirements. Testing of the LLM report generation showed that an AI augmented maritime compliance report could be generated in as little as 46 seconds. The testing of the PoC demonstrates both its real-world applicability in solving maritime challenges and its flexibility to scale to individual deployment requirements.

Transportation and logistics are areas that rely heavily upon optimization. With the advancements in AI technology, these markets are well positioned to benefit from AI-driven innovation. AI is capable of processing data and deriving solutions to optimize transportation and logistics processes at a scale and speed that humans are not capable of achieving manually. The opportunity for AI to create innovative solutions in this market is broad and extends well beyond the maritime PoC detailed in this paper. By understanding the approach to creating an AI application and the hardware components used, however, organizations in the transportation and logistics market can apply similar solutions to innovate and optimize their business.

Appendix

Figure 6 shows full performance testing results for the cargo monitoring PoC.

CONTRIBUTORS

Mitch Lewis

Research Analyst | The Futurum Group

PUBLISHER

Daniel Newman

CEO | The Futurum Group

INQUIRIES

Contact us if you would like to discuss this report and The Futurum Group will respond promptly.

CITATIONS

This paper can be cited by accredited press and analysts, but must be cited in-context, displaying author’s name, author’s title, and “The Futurum Group.” Non-press and non-analysts must receive prior written permission by The Futurum Group for any citations.

LICENSING

This document, including any supporting materials, is owned by The Futurum Group. This publication may not be reproduced, distributed, or shared in any form without the prior written permission of The Futurum Group.

DISCLOSURES

The Futurum Group provides research, analysis, advising, and consulting to many high-tech companies, including those mentioned in this paper. No employees at the firm hold any equity positions with any companies cited in this document.

ABOUT THE FUTURUM GROUP

The Futurum Group is an independent research, analysis, and advisory firm, focused on digital innovation and market-disrupting technologies and trends. Every day our analysts, researchers, and advisors help business leaders from around the world anticipate tectonic shifts in their industries and leverage disruptive innovation to either gain or maintain a competitive advantage in their markets.

© 2024 The Futurum Group. All rights reserved.