Overview

This deployment guide details the process of deploying AI models with the Dell Hugging Face Enterprise Hub on a Dell PowerEdge R760xa server. The server used for this deployment was configured with dual Intel® Xeon® Platinum 8358 CPUs, 512 GB of DDR4 RAM, and two NVIDIA H100 PCIe GPUs, each with 80 GB of HBM2e memory, for a total of 160 GB of GPU memory. This configuration provided the compute and memory capacity needed for model training and inference. The Dell Hugging Face Enterprise Hub simplifies the deployment and management of advanced AI models on Dell hardware. The guide covers the process end to end, from initial setup to a running model. It highlights the steps involved, the challenges encountered, and the solutions applied, and it provides code examples to help users deploy their own large language models (LLMs) efficiently.
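To give a feel for what two 80 GB GPUs can hold, the following is a minimal back-of-the-envelope sketch, not part of the original deployment. It estimates the memory needed for model weights alone and compares it with the combined GPU memory of the configuration described above. The function names, the bytes-per-parameter table, and the 80% headroom factor (an allowance for KV cache and activations) are illustrative assumptions, not measured values.

```python
# Rough sizing sketch: do a model's weights fit in the combined GPU memory?
# GPU count and per-GPU memory come from the configuration described above;
# everything else here is an illustrative assumption.

GPU_COUNT = 2        # two NVIDIA H100 PCIe GPUs
GPU_MEMORY_GB = 80   # memory per GPU, in GB

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(params_billions: float, dtype: str = "fp16") -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_gpus(params_billions: float, dtype: str = "fp16",
                 headroom: float = 0.8) -> bool:
    """Return True if the weights fit within a fraction of total GPU memory.

    `headroom` is an assumed share of memory available for weights; the
    remainder is left for KV cache, activations, and runtime overhead.
    """
    budget_gb = GPU_COUNT * GPU_MEMORY_GB * headroom
    return weight_memory_gb(params_billions, dtype) <= budget_gb


if __name__ == "__main__":
    for size in (7, 13, 70):
        for dtype in ("fp16", "int8"):
            status = "fits" if fits_on_gpus(size, dtype) else "does not fit"
            print(f"{size}B parameters in {dtype}: "
                  f"~{weight_memory_gb(size, dtype):.0f} GB of weights, {status}")
```

Under these assumptions, a 70-billion-parameter model in fp16 needs about 140 GB for weights, which exceeds the assumed 128 GB budget, while the same model in int8 needs about 70 GB. Actual requirements depend on the serving stack, batch size, and context length, so treat this as a first-pass estimate only.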

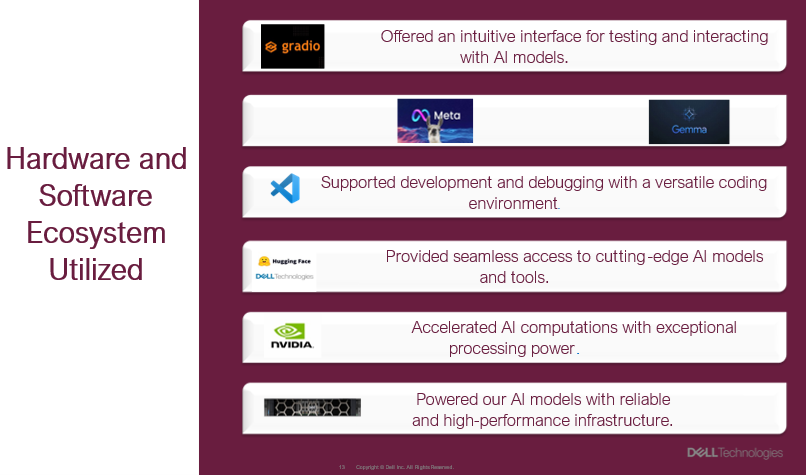

Figure 1. Hardware and software ecosystems utilized
