Streamline VMware Server Deployment and Configuration: Dell OpenManage Enterprise Integration for VMware VC

Wed, 21 Jun 2023 16:11:43 -0000

In our hands-on tests, the OpenManage Enterprise with OMEVV solution took less time and fewer steps than VMware vSphere Auto Deploy for Stateful Installation of ESXi to a bare-metal server host.

Expanding your organization’s data center with new servers typically means that admins must devote time to configuring and deploying them. Being able to harness tools that streamline and automate these processes reduces the burden on IT staff and gets the new gear into action sooner.

We compared the process of deploying and configuring ESXi on Dell™ servers in a VMware®-based PowerEdge™ environment using two tools: Dell OpenManage™ Enterprise Integration for VMware vCenter® (OMEVV) 1.1.0.1250 and VMware vSphere® Auto Deploy for Stateful Installation (VMware vSphere Auto Deploy).

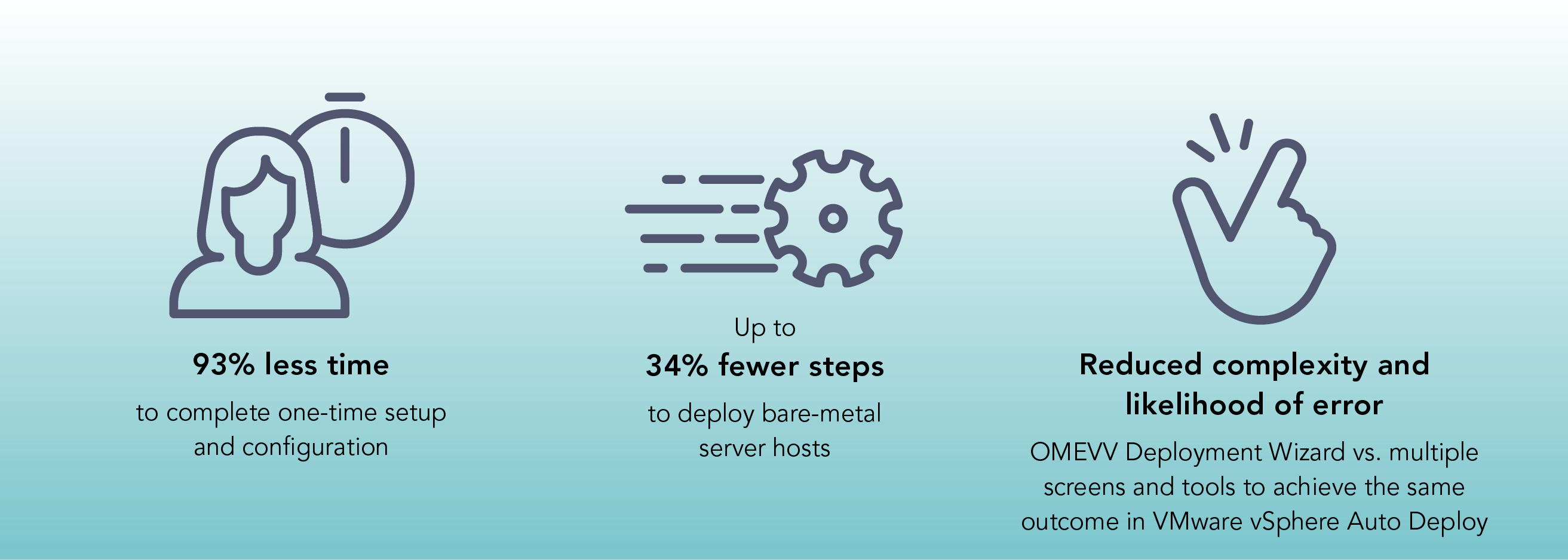

We found that the administrative time for one-time setup and configuration was up to 93 percent less with OMEVV and that the administrative time for deploying bare-metal server hosts after that one-time setup was up to 74 percent less. OMEVV greatly streamlined these activities, reducing the number of required steps by as much as 83 percent for setup and configuration and by up to 34 percent for bare-metal host deployment.

By decreasing the amount of time and number of steps necessary to put new hosts into service, using OpenManage Enterprise Integration for VMware vCenter can free your admins to perform other activities.

How we approached testing

We set up three Dell PowerEdge servers to capture the amount of time and number of steps required to provision Dell servers with ESXi software in a vCenter environment using two different automatic deployment solutions:

- OpenManage Enterprise with OMEVV leveraging agent-free iDRAC

- vSphere Auto Deploy for Stateful Installation using PXE boot

We also explored the features the two solutions offer and noted several advantages that OpenManage Enterprise with OMEVV offers over vSphere Auto Deploy for Stateful Installation.

About the OpenManage Enterprise Integration for VMware vCenter

The latest release of OpenManage Enterprise Integration for VMware vCenter (OMEVV) utilizes OpenManage Enterprise data in the vCenter administration portal. The integration can improve vCenter monitoring and management in a VMware software-based PowerEdge environment by offering the following:[1]

- Hardware information and alerts pulled into vCenter with controls for notifications

- iDRAC address and service tag details

- Dell warranty information

- Deep-level detail on certified Dell hardware components, including memory and local drives

- Support for both vLCM and VMware Proactive HA

What we found: The numbers

In this section, we focus on the quantitative advantages of Dell OMEVV over VMware vSphere Auto Deploy for configuration and deployment: less time and fewer steps required to complete tasks.

One-time setup and configuration

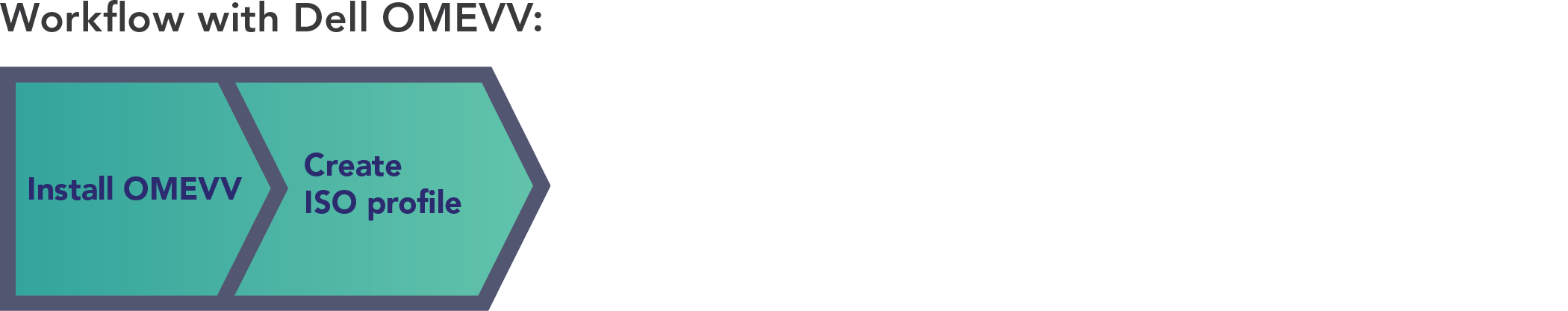

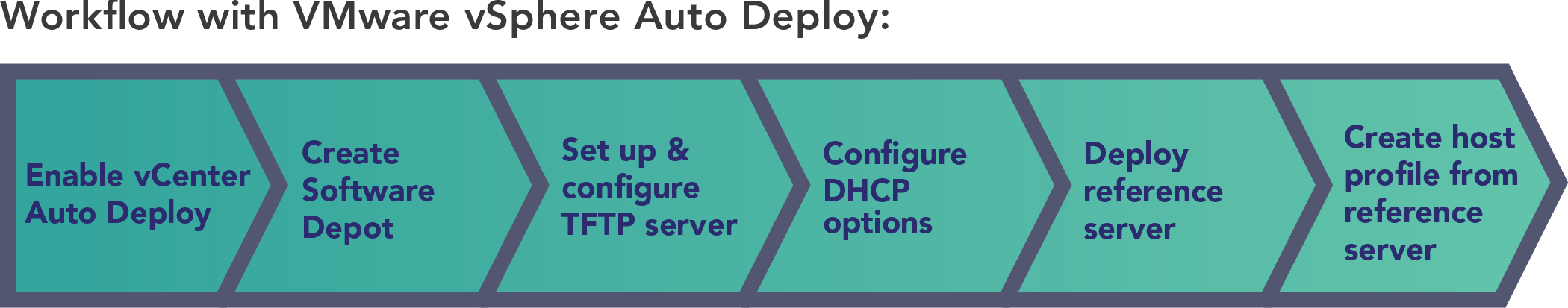

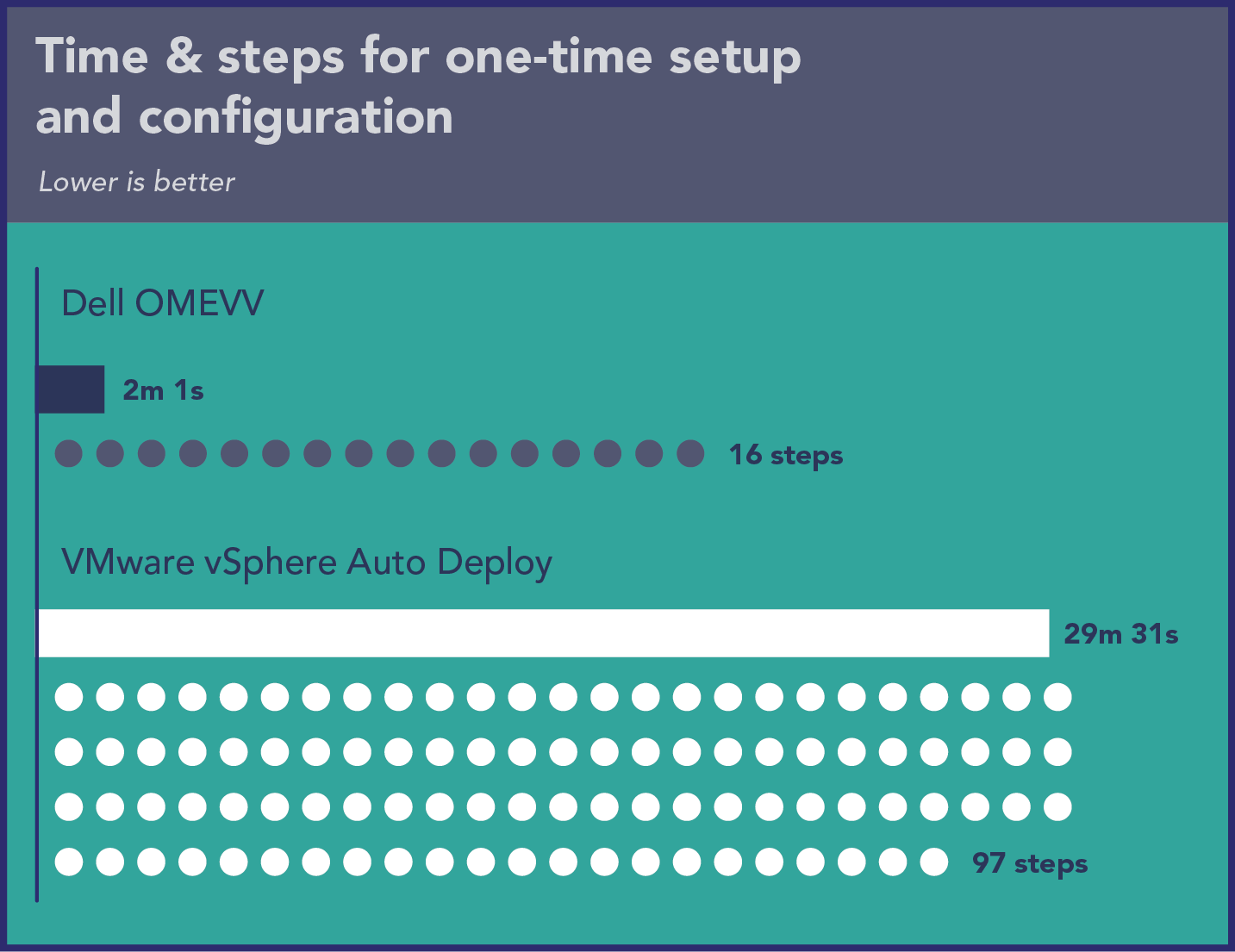

In the first phase of testing, we investigated the time requirements and complexity of performing one-time setup and configuration with the two solutions. Before we dive into our detailed findings, let’s look at a high-level overview of what the two processes involve. As Figure 1 illustrates, the Dell OMEVV process consisted of only two phases. In contrast, the VMware vSphere Auto Deploy process consisted of six phases (see Figure 2).

Having more phases does not necessarily equate to requiring more time and steps, but in our testing, it did. As Figure 3 shows, using Dell OMEVV to perform one-time setup and configuration was indeed more streamlined than the process with VMware vSphere Auto Deploy, taking fewer than one-sixth the number of steps. It also required less admin time: just over 2 minutes versus nearly half an hour.

Table 1 breaks down the time and steps for each of the two phases of the Dell OMEVV process. As it shows, both were quick for our technician to execute.

| Dell OMEVV | Time (min:sec) | Steps |
|---|---|---|
| Installing OMEVV | 01:19 | 11 |
| Creating ISO profile | 00:42 | 5 |
| Total one-time setup | 02:01 | 16 |

Table 2 breaks down the time and steps for each of the six phases of the VMware vSphere Auto Deploy process. Four of these took our technician less than a minute and a half to execute, but setting up and configuring the TFTP server and deploying the reference server each took more than 12 minutes.

| VMware vSphere Auto Deploy | Time (min:sec) | Steps |
|---|---|---|
| Enabling vCenter Auto Deploy | 00:40 | 3 |
| Creating Software Depot | 00:42 | 2 |
| Setting up and configuring TFTP server | 13:13 | 37 |
| Configuring DHCP options | 01:23 | 9 |
| Deploying reference server | 12:38 | 25 |
| Creating host profile from reference server | 00:55 | 21 |
| Total one-time setup | 29:31 | 97 |
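The headline percentages for one-time setup can be re-derived from the totals in Tables 1 and 2. The following Python sketch is a reader-side check rather than part of the test methodology; the helper names `to_seconds` and `percent_reduction` are our own.

```python
def to_seconds(mmss: str) -> int:
    """Convert a 'min:sec' string such as '29:31' to whole seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


def percent_reduction(baseline: float, improved: float) -> float:
    """Return how much smaller `improved` is than `baseline`, in percent."""
    return (baseline - improved) / baseline * 100


# Totals taken from Table 1 (Dell OMEVV) and Table 2 (vSphere Auto Deploy).
omevv_time, omevv_steps = to_seconds("02:01"), 16
auto_deploy_time, auto_deploy_steps = to_seconds("29:31"), 97

time_saved = percent_reduction(auto_deploy_time, omevv_time)
steps_saved = percent_reduction(auto_deploy_steps, omevv_steps)

print(f"Admin time reduction: {time_saved:.1f}%")
print(f"Step reduction: {steps_saved:.1f}%")
```

Rounded down, the two results are 93 percent and 83 percent, which matches the "up to" figures quoted for setup and configuration.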

Deploying hosts

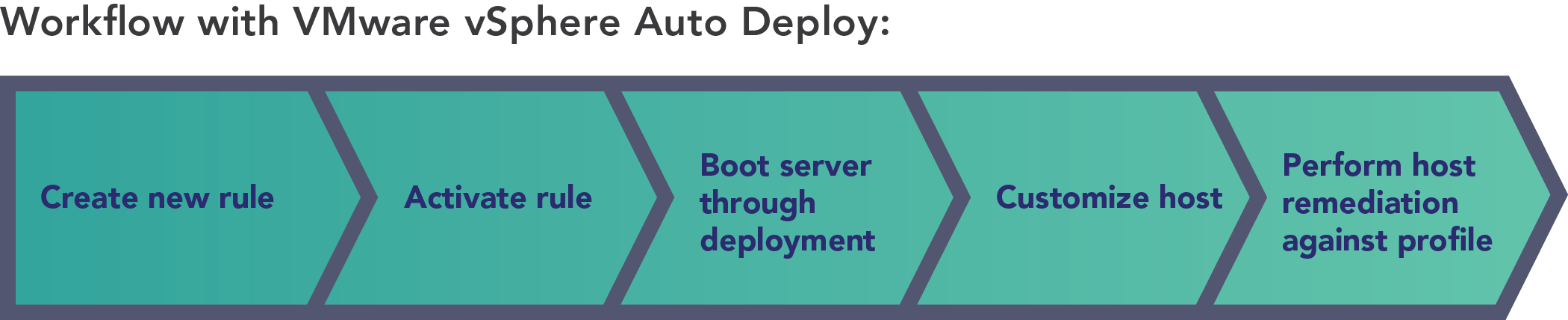

In the second phase of our testing, we investigated the time requirements and complexity of deploying one, two, and three hosts using the two solutions. As we did earlier, let’s start with a high-level overview of what the two processes involve. As Figure 4 illustrates, the Dell OMEVV process of deploying a host consisted of three phases: discovering the servers in OME, discovering those hosts as bare-metal servers in OMEVV within vCenter, and creating a deployment job. In contrast, the VMware vSphere Auto Deploy process consisted of five phases (see Figure 5).

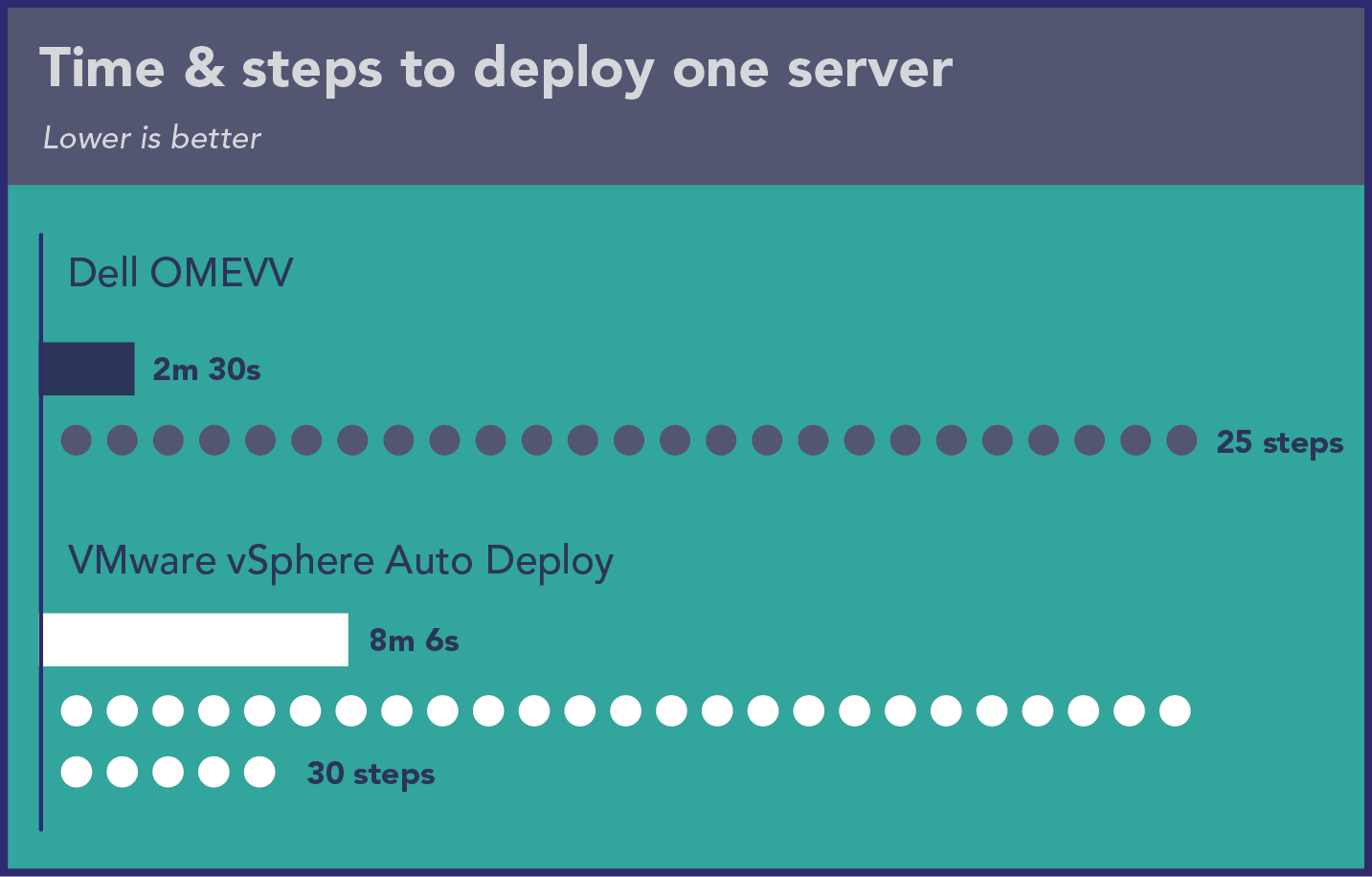

Time and steps to deploy a single host

As Figure 6 shows, using Dell OMEVV to deploy one host was more streamlined than performing the same task using VMware vSphere Auto Deploy, with the process taking fewer steps. Deployment with OMEVV took 2.5 minutes of admin time compared to more than 8 minutes of admin time for vSphere Auto Deploy.

Table 3 provides a breakdown of the time and steps each phase of the Dell OMEVV process required.

| Dell OMEVV | Time (min:sec) | Steps |
|---|---|---|
| Discovering servers in OME | 00:17 | 8 |
| Discovering bare-metal servers | 00:29 | 8 |
| Creating deployment job | 01:44 | 9 |
| Total | 02:30 | 25 |

In contrast to the Dell OMEVV process, the VMware vSphere Auto Deploy process was more complex, with five distinct phases. Four of these took our technician 45 seconds or less to execute, but booting the server through deployment took more than 6 minutes (see Table 4).

| VMware vSphere Auto Deploy | Time (min:sec) | Steps |
|---|---|---|
| Creating new rule | 00:45 | 8 |
| Activating rule | 00:12 | 6 |
| Booting server through deployment | 06:27 | 4 |
| Customizing host | 00:34 | 6 |
| Performing host remediation against profile | 00:08 | 6 |
| Total | 08:06 | 30 |

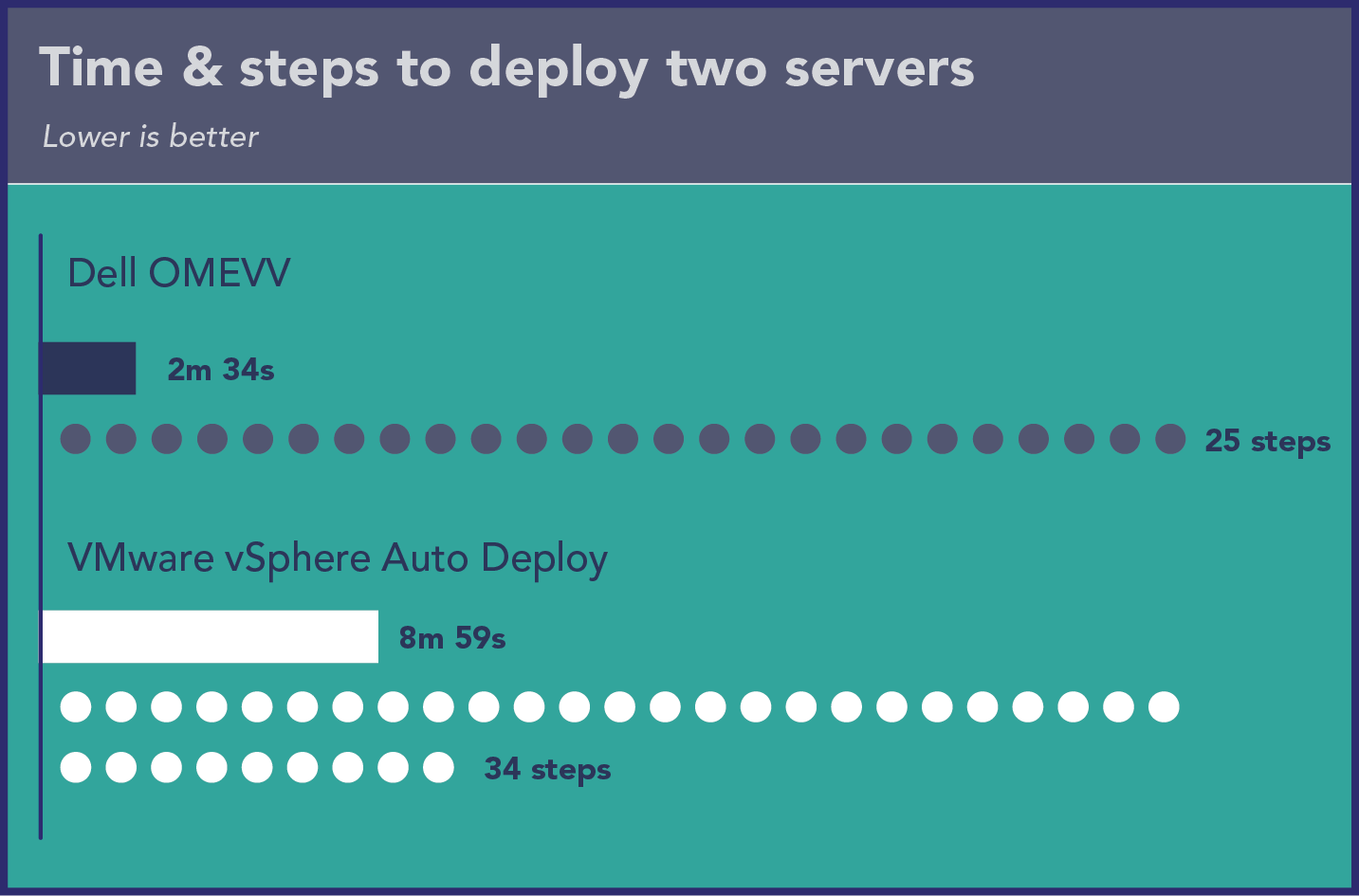

Time and steps to deploy two hosts

As Figure 7 shows, using Dell OMEVV to deploy two hosts took the same number of steps as deploying one with only 4 additional seconds. In contrast, the process with VMware vSphere Auto Deploy needed four additional steps and 53 additional seconds.

Table 5 provides a breakdown of the time and steps each phase of the Dell OMEVV process required.

| Dell OMEVV | Time (min:sec) | Steps |
|---|---|---|
| Discovering servers in OME | 00:17 | 8 |
| Discovering bare-metal servers | 00:29 | 8 |
| Creating deployment job | 01:48 | 9 |
| Total | 02:34 | 25 |

Table 6 breaks down the time and steps for each of the five phases of the VMware vSphere Auto Deploy process for deploying two hosts. Four of these took our technician 53 seconds or less to execute, but booting the server through deployment took almost 7 minutes.

| VMware vSphere Auto Deploy | Time (min:sec) | Steps |
|---|---|---|
| Creating new rule | 00:53 | 8 |
| Activating rule | 00:12 | 6 |
| Booting server through deployment | 06:59 | 8 |
| Customizing host | 00:47 | 6 |
| Performing host remediation against profile | 00:08 | 6 |
| Total | 08:59 | 34 |

Time and steps to deploy three hosts

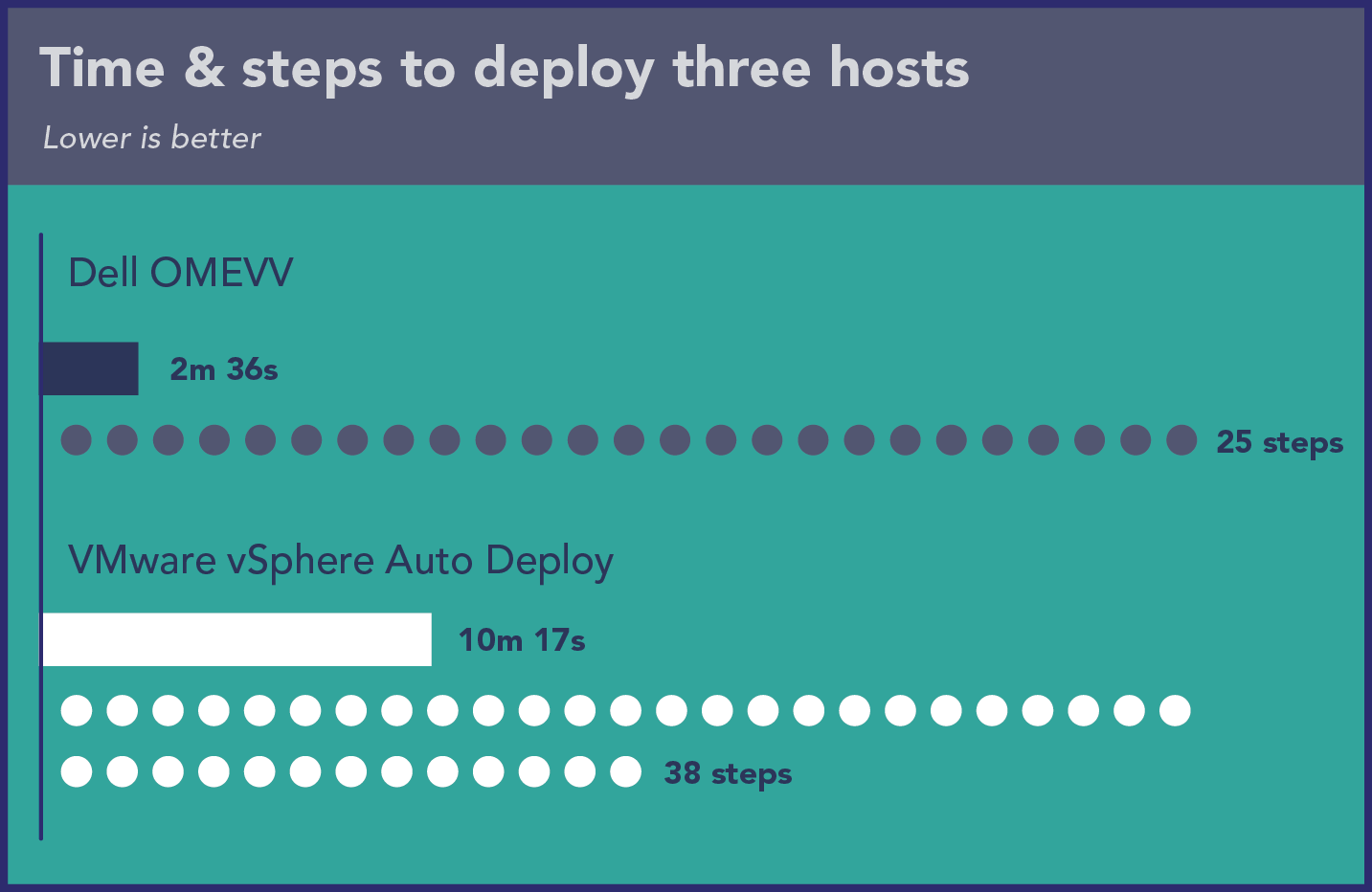

As Figure 8 shows, using Dell OMEVV to deploy three hosts took the same number of steps as deploying a single host and only 6 additional seconds. In contrast, the process with VMware vSphere Auto Deploy required eight additional steps and an extra 2 minutes and 11 seconds.

Table 7 provides a breakdown of the time and steps each phase of the Dell OMEVV process required.

| Dell OMEVV | Time (min:sec) | Steps |
|---|---|---|
| Discovering servers in OME | 00:17 | 8 |
| Discovering bare-metal servers | 00:29 | 8 |
| Creating deployment job | 01:50 | 9 |
| Total | 02:36 | 25 |

With three hosts, four of the five phases of the VMware vSphere Auto Deploy process took our technician 1 minute and 19 seconds or less to execute. However, booting the server through deployment took 7 minutes and 40 seconds (see Table 8).

| VMware vSphere Auto Deploy | Time (min:sec) | Steps |
|---|---|---|
| Creating new rule | 00:58 | 8 |
| Activating rule | 00:12 | 6 |
| Booting server through deployment | 07:40 | 12 |
| Customizing host | 01:19 | 6 |
| Performing host remediation against profile | 00:08 | 6 |
| Total | 10:17 | 38 |

As our findings in this section show, the amount of time that administrators save by selecting the Dell solution increased as the number of deployed servers increased. Because the time savings did not grow linearly as we added servers, we can’t reliably extrapolate the savings organizations would see with larger deployments. However, the trend suggests that when deploying a large VMware vSphere ESXi cluster of 32 or more servers, the time savings would likely be even more substantial than what we have shown here.
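To make the trend concrete, the sketch below recomputes the admin time saved for one, two, and three hosts from the totals in Tables 3 through 8. It is an illustrative calculation only; the variable names are ours, and it deliberately does not extrapolate beyond the three tested host counts.

```python
def to_seconds(mmss: str) -> int:
    """Convert a 'min:sec' string to whole seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


# Number of hosts -> (OMEVV total, vSphere Auto Deploy total), from Tables 3-8.
totals = {
    1: ("02:30", "08:06"),
    2: ("02:34", "08:59"),
    3: ("02:36", "10:17"),
}

savings = {}
for hosts, (omevv, auto_deploy) in totals.items():
    saved = to_seconds(auto_deploy) - to_seconds(omevv)
    percent = saved / to_seconds(auto_deploy) * 100
    savings[hosts] = saved
    print(f"{hosts} host(s): {saved // 60}:{saved % 60:02d} saved ({percent:.1f}% less)")

# Extra seconds saved by each additional host; unequal values mean non-linear growth.
increments = [savings[2] - savings[1], savings[3] - savings[2]]
print("Additional savings per added host (seconds):", increments)
```

The savings grow from 5:36 to 6:25 to 7:41, and the two increments (49 and 76 seconds) differ, which is why a straight-line projection to larger clusters would not be reliable.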

What we found: The experience

In this section, we present some of the qualitative advantages of Dell OMEVV over VMware vSphere Auto Deploy for configuration and deployment.

Some advantages of Dell OMEVV

- Our team found OMEVV substantially easier to use than vSphere Auto Deploy and found that its documentation covers all of the use cases we tested.

- By reducing complexity, job deployment in OMEVV reduces the likelihood of error. Using vSphere Auto Deploy, it is possible to miss steps when navigating among Profiles, Auto Deploy, Rules, Inventory, and back to Profiles to remediate after the first deployment pass completes.

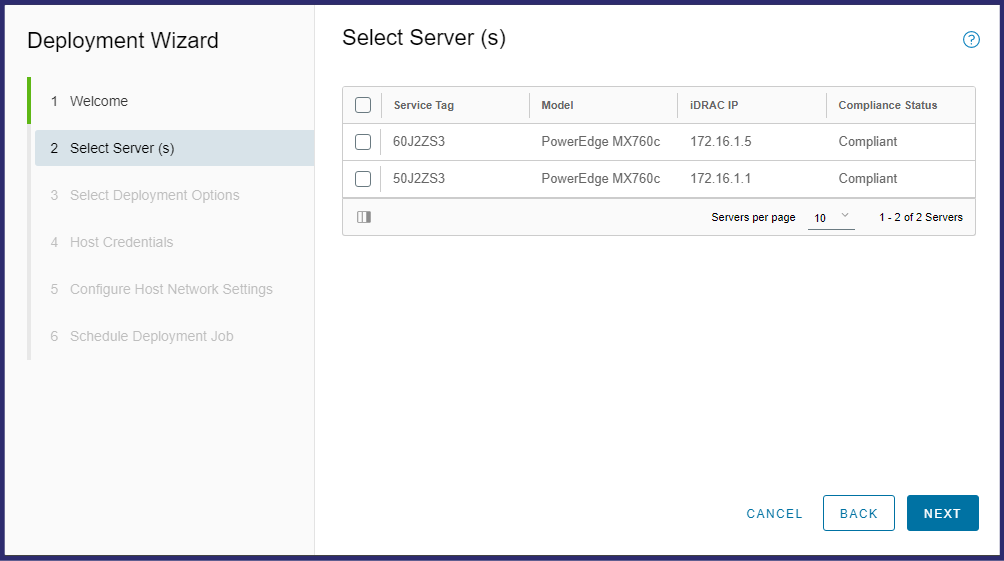

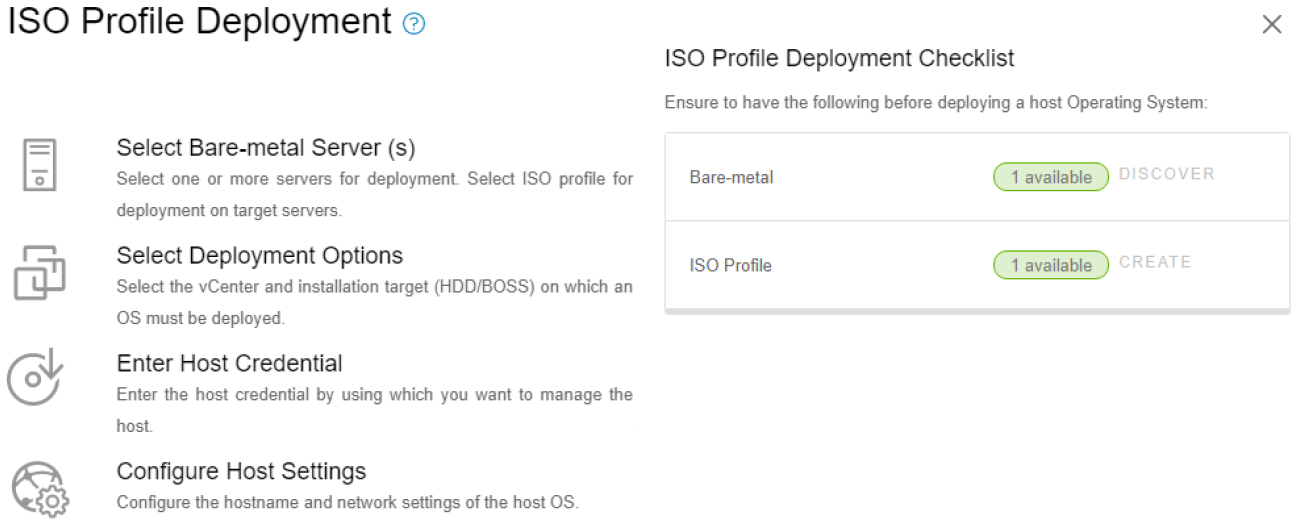

- OMEVV operates within a single GUI. The user stays within the OMEVV plugin area to complete tasks such as selecting target servers, creating an ISO profile, and creating a deployment job. To create a deployment job, the user stays in a single-pane Deployment Wizard, which functions as a guided tutorial (see Figure 9). In contrast, vSphere Auto Deploy requires the user to move among a variety of different screens and locations.

- Even if an existing OS is present on the server boot volume, OMEVV can perform an installation without intervention by leveraging the Lifecycle Controller. In contrast, when PXE is set as the secondary boot method per VMware guidance, reinstalling with vSphere Auto Deploy requires the user to manually invoke the boot menu and select the PXE boot option.

- OMEVV assigns static IPs before installation; vSphere Auto Deploy does this after installation. In a real-world setting, the vSphere Auto Deploy approach wastes time. Admins must wait, turning the install time into active administration time where the admin is unable to work on other tasks or jobs because another step is waiting for them at the end.

- OMEVV offers additional features beyond deployment that vSphere Auto Deploy does not (see the section "About the OpenManage Enterprise Integration for VMware vCenter"). Once you have added servers using OMEVV, they benefit from OME. For example, OME offers development of firmware setting and support for call-home service, detailed reports, and other plugins.

Some disadvantages of vSphere Auto Deploy

- vSphere Auto Deploy requires PXE boot, which has networking considerations that users must accommodate.

- vSphere Auto Deploy requires a TFTP server, which is a potential disadvantage because TFTP’s lack of authentication and encryption makes these servers less secure and more prone to attacks.

- In vSphere Auto Deploy, users can only import or extract host profiles and cannot build them from scratch. This limitation means users must have a template server, which adds steps.

- In vSphere Auto Deploy, Server Initial Config is more specific, whereas in OMEVV Server Initial Config only ensures that defaults are enabled. It is possible to work with Dell so that servers come pre-configured and ready to go for OME/OMEVV on the day they arrive; the admin needs only to power them on, discover them in OME, and run a deployment job.

- To use rules that target specific servers in vSphere Auto Deploy, the user must know certain information about the servers ahead of time, such as IP addresses, serial numbers, or drive and driver types for targeted installation. OMEVV does not require this.

Conclusion

Deploying servers can be a time-consuming task, but it doesn’t have to be. In a VMware-based Dell PowerEdge environment, we found that using Dell OpenManage Enterprise Integration for VMware vCenter to configure and deploy bare-metal server hosts greatly reduced the administrative burden in terms of time and complexity compared to using VMware vSphere Auto Deploy. By letting our technician deploy hosts in up to 74 percent less administrative time and with up to 34 percent fewer steps, Dell OMEVV is the clear winner in efficiency and simplicity, all from a single console with the added benefit of introducing customers to the host of other OME features.

To streamline your deployment of new ESXi servers and optimize your administrator’s time, choose Dell OpenManage Enterprise Integration for VMware vCenter.

1. Dell, “OpenManage Enterprise Integration for VMware vCenter,” accessed April 14, 2023, https://www.dell.com/support/kbdoc/000176981/openmanage-integration-for-vmware-vcenter.

2. Principled Technologies, “Implement cluster-aware firmware updates to save time and effort,” accessed April 18, 2023, https://www.principledtechnologies.com/clients/reports/Dell/OpenManage-Integration-for-VMware-vCenter-1122.pdf.

This project was commissioned by Dell Technologies.

Related Documents

OpenManage Enterprise Integration for VMware Virtual Center Overview

Mon, 29 Apr 2024 19:17:32 -0000

Summary

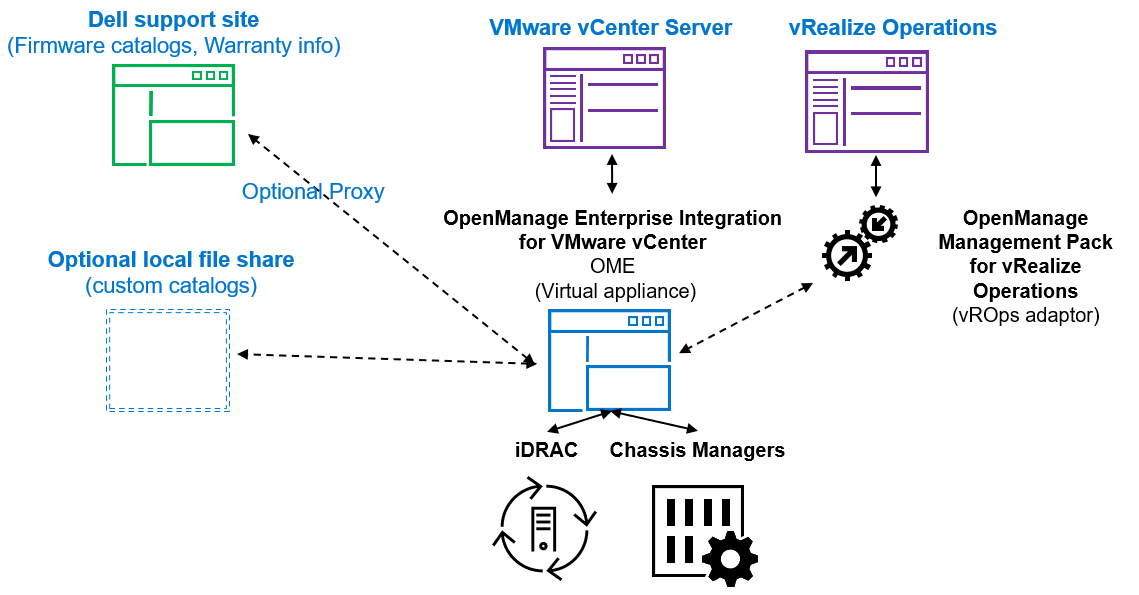

OpenManage Enterprise Integration for VMware vCenter (OMEVV) offers extensive functionality to manage Dell PowerEdge server hardware and firmware from within VMware vCenter. Delivered as a simple virtual appliance, OpenManage Enterprise, with its integration for VMware vCenter plugin architecture, has no dependence on local software agent installations on the managed hosts. This tech note highlights the key features of the plugin which provides deep level details for inventory, monitoring, firmware updating, and deployment of Dell servers, all from within the vCenter console GUI.

IT administrators face many challenges managing physical servers in VMware environments. This process can be complex and time-consuming. VMware vCenter provides a scalable platform that forms the foundation for VMware software management of these environments. The addition of OpenManage Enterprise Integration for VMware vCenter allows IT administrators to manage both their virtual and physical infrastructure from within vCenter, thus dramatically simplifying overall management. Additional PowerEdge menu options are added in vCenter, alongside Dell server data, to monitor and manage physical servers. These options also include semi-automated updates of server firmware and bare-metal deployment of ESXi hypervisor on Dell PowerEdge servers, including modular systems.

OpenManage integration architecture

OpenManage Enterprise Integration for VMware vCenter is a plugin to the OpenManage Enterprise virtual appliance for server management. The OpenManage Enterprise virtual appliance is a virtual machine image that contains Dell’s server management software and can be deployed easily. It can be installed on any ESXi, Microsoft Hyper-V, or Red Hat Linux KVM host.

Figure 1. High level architecture (vRealize Aria, previously known as vROps or vRealize Operations, integration is expected to be released 2nd half 2023)

The OpenManage integration provides native integration into the vCenter Server console interface. It helps make the vCenter console the single pane of glass to manage both the virtual and physical environments. The integration goes beyond a simple “link and launch” to existing Dell system management tools. Instead, it brings server management tasks and server data natively into the vCenter console. An API interface is also supported for customers who want to automate or integrate with additional tools. VMware administrators do not need to learn to use additional tools for many of the PowerEdge management tasks because these are integrated into the menus that they are already familiar with within vCenter.

Managing Dell hosts

OpenManage Integration provides deep level details for inventory, monitoring, and alerting of Dell hosts (that is, physical servers) within vCenter and recommends or performs vCenter actions based on Dell hardware events. From the OpenManage Enterprise Plugin, administrators can view details of managed servers.

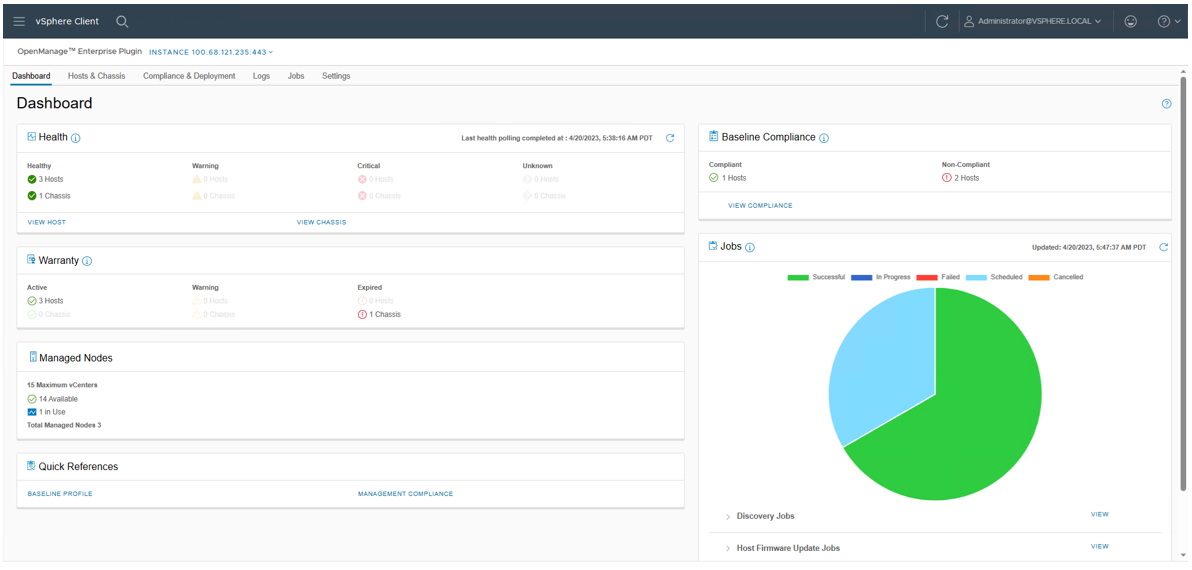

The dashboard view provides the health status of the monitored clusters and physical servers alongside host information, including warranty status. It also provides appliance information, such as the number of vCenters monitored, baseline compliance status, and OMEVV job status.

Figure 2. OMEVV Dashboard

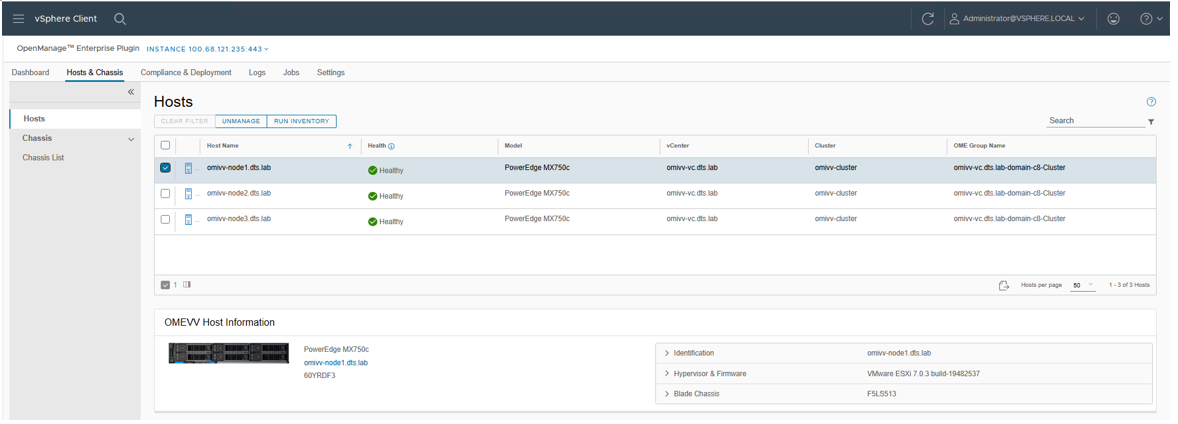

At the Hosts & Chassis level, the view provides the health status of the physical server. It also displays server details including power status, iDRAC IP, model name, service tag, asset tag, warranty data, last inventory scan, ESXi Hypervisor version, and core firmware versions.

Figure 3. OMEVV list of managed hosts

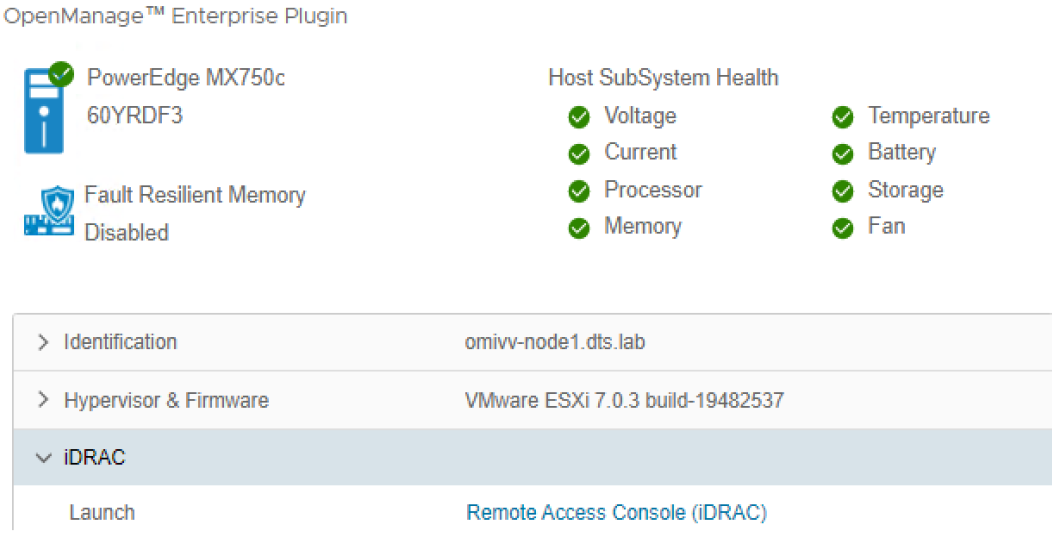

The vSphere inventory view provides additional details. At the host level, the OMEVV host information view provides deeper server and component details, along with data about local storage. It also includes server information, such as comprehensive firmware version reporting, power usage data, iDRAC IP address, Service Console IP, warranty type with expiration information, and recent system event log entries. The System Event Log (SEL) provides details such as iDRAC login events, firmware update jobs, and server reboots. Host subsystem health is displayed in the host summary area; detailed component health is available in OpenManage Enterprise.

Figure 4. OMEVV server and component health

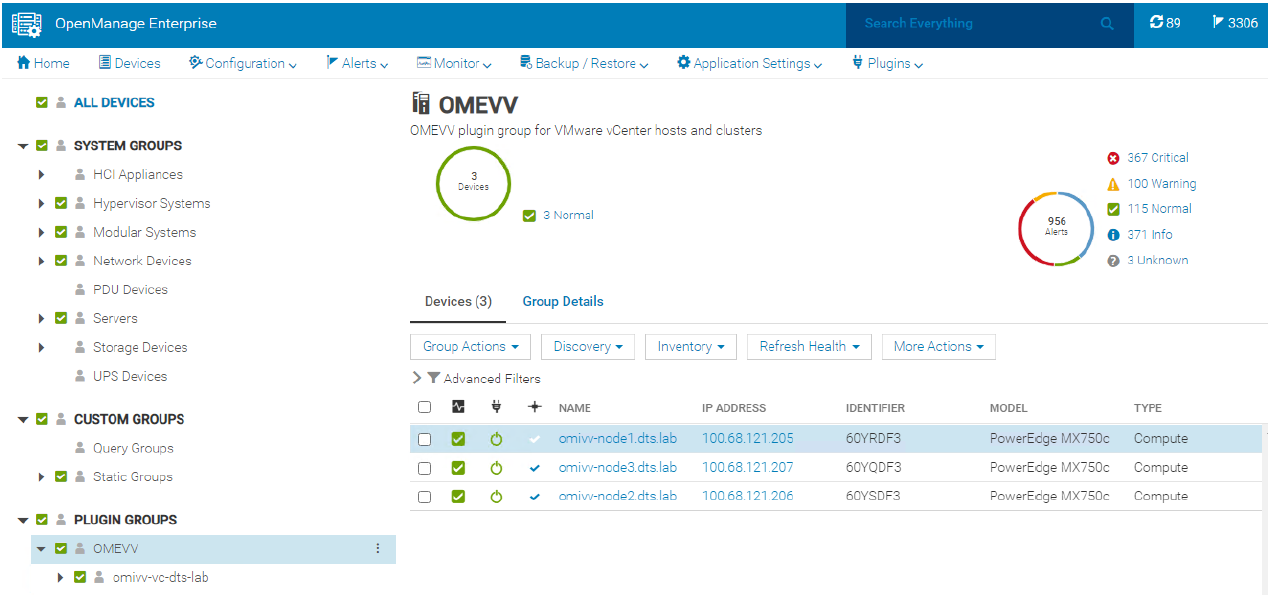

There are a few prerequisites to meet for a Dell server to be managed by OMEVV, such as licensing requirements and minimum firmware versions. The OMEVV management compliance wizard ensures that the hosts have met these requirements. After it is discovered and selected as a managed host, a server will appear in the OpenManage Enterprise plugin group for OMEVV and in the list of managed hosts in the OMEVV plugin (see Figure 5).

For detailed steps about how to use the configuration wizard, see the OpenManage Integration User Guide. Although VxRail monitoring is supported by the core OME console, and the power manager plugin will manage VxRail power and thermal data, OMEVV does not support VxRail because VxRail has its own life cycle management solution. For more information about supported server models and iDRAC versions, see the OMEVV support matrix and the OpenManage Enterprise support matrix.

Figure 5. OMEVV managed server group in OME

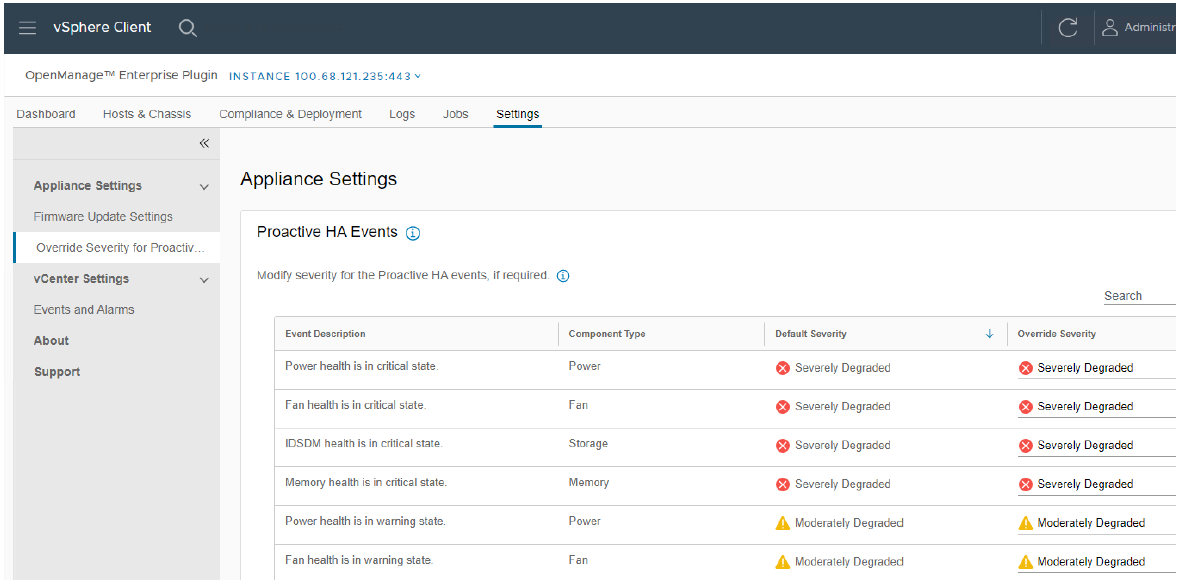

Proactive automated actions to hardware alerts

The OpenManage Integration contains a predefined list of Dell hardware events, each with a recommended action within vCenter. Critical hardware alarms, such as loss of redundant power, can be enabled to put the affected host into VMware maintenance mode. If VMware DRS is configured, the VMs are evacuated by vMotion to another VMware host in the cluster. This capability is called VMware Proactive High Availability (PHA) and is a vCenter feature that works with OMEVV. (Note: By default, all Dell alarms are disabled.) Customers can override the default severity that Dell assigns to these events to tailor the response to their environment.
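The interaction between the disabled-by-default state, the vendor-assigned severity, and a customer override can be pictured with a small model. This is a conceptual Python sketch only: the alarm name, the severity labels, and the action strings are hypothetical and do not reflect the actual OMEVV or vCenter data model.

```python
from dataclasses import dataclass


@dataclass
class HardwareAlarm:
    name: str
    default_severity: str  # severity assigned by the vendor
    enabled: bool = False  # the text notes that Dell alarms start disabled


def effective_severity(alarm: HardwareAlarm, overrides: dict) -> str:
    """Use the administrator's override if one exists, else the default."""
    return overrides.get(alarm.name, alarm.default_severity)


def recommended_action(alarm: HardwareAlarm, overrides: dict) -> str:
    """Decide what happens when this alarm fires (simplified)."""
    if not alarm.enabled:
        return "ignore"
    if effective_severity(alarm, overrides) == "critical":
        return "enter maintenance mode"
    return "notify only"


# Hypothetical alarm name and override table, for illustration only.
psu_alarm = HardwareAlarm("power-supply-redundancy-lost", "critical", enabled=True)
print(recommended_action(psu_alarm, overrides={}))
print(recommended_action(psu_alarm, overrides={"power-supply-redundancy-lost": "warning"}))
```

In this model, lowering the severity through an override changes the outcome from host evacuation to a notification, which is the kind of tailoring the paragraph describes.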

Figure 6. Example server event alarms severity

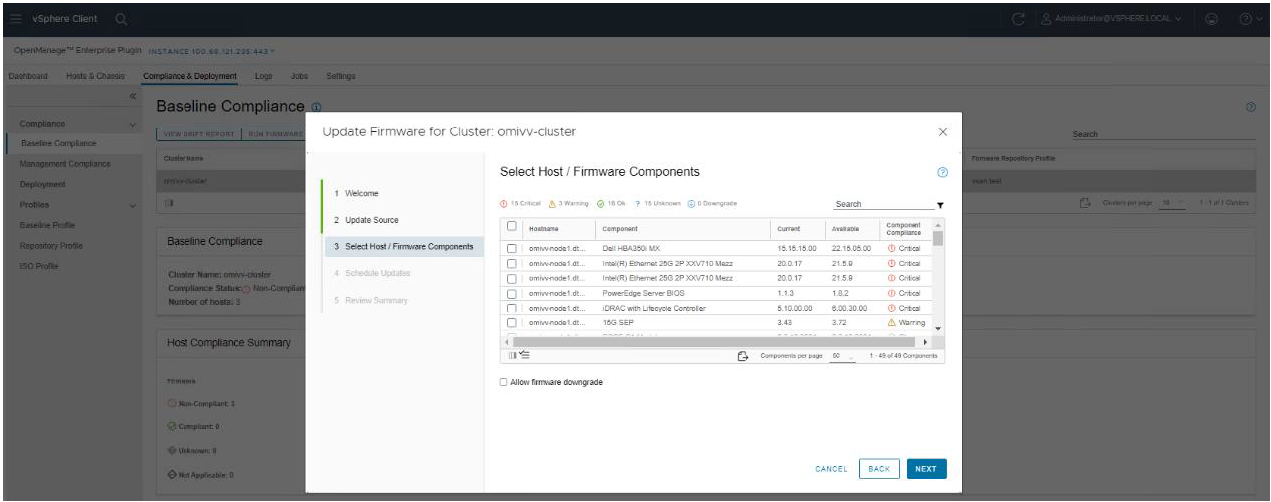

Updating Dell server BIOS and firmware

Within the vCenter console, users can view BIOS and firmware versions, compare them to desired versions, and perform updates at the host or cluster level. This feature supports Dell 13G, 14G, 15G, 16G, and future generation servers with either iDRAC Express or iDRAC Enterprise. OMEVV offers cluster-aware firmware updates, in which updates run sequentially, one host at a time, across the entire cluster. OMEVV puts each target host into maintenance mode and uses DRS to live-migrate virtual machines so that workloads keep running. The firmware update feature can run in parallel on up to 15 VMware clusters. This functionality is also supported by registering OMEVV as a Hardware Support Manager (HSM) for VMware vSphere Lifecycle Manager (vLCM). vLCM is a VMware-supplied tool that coordinates the OMEVV firmware updates with ESXi software updates, including drivers and hypervisor patches, offering administrators an easier way to update the entire cluster.
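The scheduling pattern described here (hosts updated one at a time within a cluster, with several clusters processed in parallel) can be sketched as a small simulation. The Python below is a conceptual illustration, not OMEVV code: the function names and log messages are hypothetical, and only the limit of 15 parallel clusters comes from the text.

```python
from concurrent.futures import ThreadPoolExecutor

MAX_PARALLEL_CLUSTERS = 15  # limit stated in the text


def update_cluster(cluster_name, hosts, log):
    """Update one cluster's hosts strictly one at a time."""
    for host in hosts:
        log.append((cluster_name, host, "enter maintenance mode (VMs migrate away)"))
        log.append((cluster_name, host, "apply firmware update"))
        log.append((cluster_name, host, "exit maintenance mode"))
    return cluster_name


def update_all(clusters):
    """Run per-cluster rolling updates, at most 15 clusters at once."""
    log = []
    with ThreadPoolExecutor(max_workers=MAX_PARALLEL_CLUSTERS) as pool:
        futures = [
            pool.submit(update_cluster, name, hosts, log)
            for name, hosts in clusters.items()
        ]
        for future in futures:
            future.result()  # re-raise any error from a worker
    return log


# Hypothetical cluster and host names.
demo_log = update_all({"cluster-a": ["esx-a1", "esx-a2"], "cluster-b": ["esx-b1"]})
for entry in demo_log:
    print(entry)
```

Entries from different clusters may interleave in the log, but within any one cluster a host always finishes all three stages before the next host starts, so only one host per cluster is out of service at a time.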

The integrated firmware update process is wizard-based, allowing the selection of the new firmware level(s), targeting all or selected component(s), and scheduling the update. A baseline profile contains the location of the catalog/repository detailing required firmware versions and the target host(s) to be associated with the profile. If the host does not have internet access to the Dell support site, you can use Dell Repository Manager to create a local repository for use with OMEVV within the firewall or in air-gapped environments.

Figure 7. Firmware compliance / available upgrades

Dell publishes:

- Default firmware catalogs containing the latest released firmware. When using this, customers should check compatibility with the installed version of ESXi.

- Firmware catalogs for the Dell customized ESXi image non-vSAN (ISO file) to streamline deployments.

- Firmware catalogs specific for vSAN that support the VMware compatibility matrix. The vSAN firmware catalog has the specific firmware versions for supported vSAN components, such as HBAs when used with the corresponding Dell customized ESXi image. When OMEVV discovers a host running vSAN, OMEVV prevents the use of the default Dell firmware catalog for updates.

Together these three elements provide an easy path to the desired cluster state.

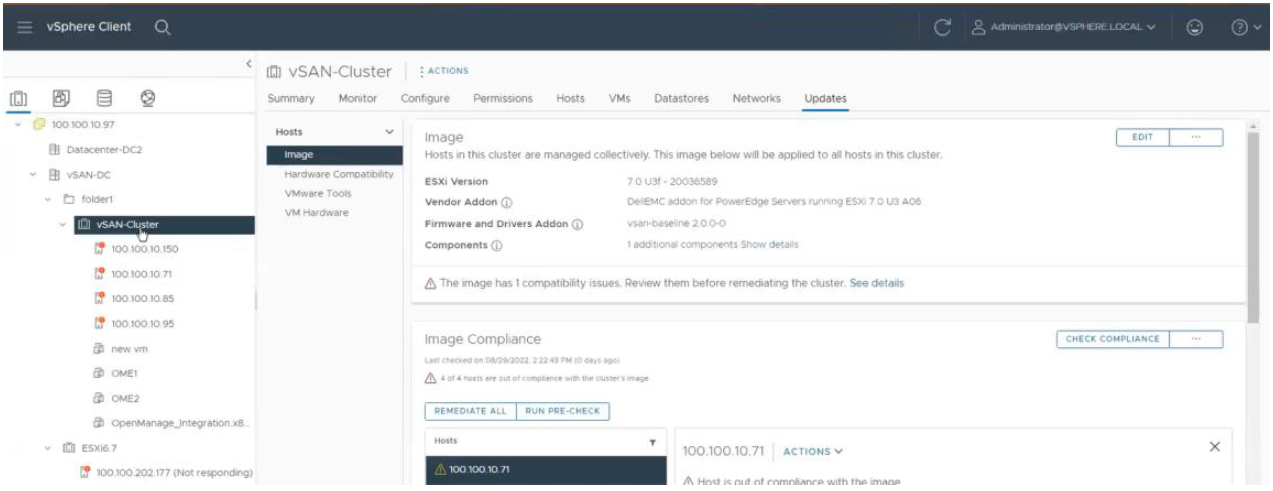

Figure 8. vLCM using OMEVV integration to patch Dell firmware as part of a VMware host update

Deploying the ESXi Hypervisor on new bare metal servers

Another key feature of the OpenManage Integration is deployment of ESXi on Dell servers without using PXE. It includes the initial discovery, the optional deployment of the ESXi hypervisor with an optional vSphere Host Profile, and registration of the host with a selected vCenter. It leverages the iDRAC9 Enterprise hardware supported by 14G, 15G, and 16G Dell servers.

The deployment feature separates the deployment preparation steps from the actual hypervisor deployment. After one or more bare-metal servers have been discovered and appear in the list as compliant, they are ready for hypervisor deployment. The deployment wizard collects details of the target servers, the ISO OS image file, the vCenter destination container, and the optional VMware ESXi host profile, which encapsulates a deeper configuration template for the ESXi install. The wizard also collects deployment information such as the vCenter instance settings, host name, host IP address, new password, and NIC for management tasks, and applies common data across all target hosts. A deployment job can be run immediately or scheduled.
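One way to picture the split between common data and per-host data is as a small data structure. The Python sketch below models a subset of the fields the paragraph lists; the class names, field names, and sample values are hypothetical and are not the OMEVV API or wizard schema.

```python
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class HostSettings:
    """Values that differ for each target server."""
    service_tag: str
    host_name: str
    ip_address: str
    management_nic: str


@dataclass
class DeploymentJob:
    """Values collected once and applied to every target host."""
    iso_profile: str
    vcenter: str
    destination_container: str
    host_profile: Optional[str] = None  # optional, per the text
    run_at: Optional[str] = None        # None means run immediately
    hosts: list = field(default_factory=list)

    def per_host_plans(self) -> list:
        """Merge the common settings into each host's own settings."""
        common = {
            "iso_profile": self.iso_profile,
            "vcenter": self.vcenter,
            "destination_container": self.destination_container,
            "host_profile": self.host_profile,
        }
        return [{**common, **asdict(host)} for host in self.hosts]


# Sample values are placeholders (addresses use the documentation range).
job = DeploymentJob(
    iso_profile="esxi-iso-profile",
    vcenter="vcenter.example.com",
    destination_container="Cluster-01",
    hosts=[
        HostSettings("TAG0001", "esx01", "192.0.2.11", "vmnic0"),
        HostSettings("TAG0002", "esx02", "192.0.2.12", "vmnic0"),
    ],
)
plans = job.per_host_plans()
for plan in plans:
    print(plan["host_name"], plan["ip_address"], plan["iso_profile"])
```

Entering the shared values once and expanding them per host is consistent with the earlier finding that the step count stayed constant as hosts were added.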

Figure 9. Bare metal server deployment wizard

Dell chassis discovery and monitoring

OMEVV allows administrators to discover and monitor chassis details including hyperlinks to OME-M, related hosts, inventory, firmware, and warranty.

Figure 10. MX chassis management information

Conclusion

The integration of OpenManage Enterprise with VMware vCenter provides a comprehensive, highly automated, end-to-end combined physical and virtual system management platform. OMEVV replaces the legacy standalone OMIVV, with only the new OMEVV supporting vSphere 8 and the latest server hardware. It enables host health monitoring, firmware updates, and bare-metal deployment from within vCenter. It removes the complexities associated with manual processes and helps to avoid shuffling between multiple tools. This integration helps customers reduce cost through a centralized, scalable, and customizable approach that is designed to significantly simplify the management of Dell PowerEdge servers and modular chassis in a VMware environment.

References

- OMEVV manuals, including User Guide, Support Matrix, and API Guide Documentation

- API interactive explorer Developer Hub

- OpenManage Enterprise Support Site

- OpenManage Enterprise 3.10 Support Matrix

- Temporary OMEVV trial licenses can be downloaded here

- A downloadable catalog of supported and certified Dell firmware for VMware:

Improve workload resilience & streamline management of modern apps

Wed, 06 Sep 2023 00:21:03 -0000

The combination of the latest hardware and software platforms can give DevOps and infrastructure admin teams better reliability and more provisioning options for containers—with simple and efficient management.

Introduction

Developer and operations teams that adopt DevOps philosophies and methodologies often rely on container technologies for the flexibility, efficiency, and consistency containers provide. Compared to running multiple VMs, which can be more resource-intensive, containers require less overhead and offer greater portability.[i] When teams need to spin up their workloads, containers are a convenient way for infrastructure admins to provide those resources and a speedy way for developers to test applications. These teams also need orchestration and management software to manage those containerized workloads. Kubernetes® is one such orchestration platform. It helps admins fully manage, automate, deploy, and scale containerized applications and workloads across an environment.[ii]

VMware® developed vSphere® with Tanzu to integrate Kubernetes containers with vSphere and to provide self-service environments for DevOps applications. With vSphere 8.0, the latest version of the platform, VMware has further enhanced vSphere with Tanzu.

Using an environment that included the latest 16th Generation Dell™ PowerEdge™ servers, we explored three new vSphere with Tanzu features that VMware released with vSphere 8.0:

- the ability to span clusters with Workload Availability Zones to increase resiliency and availability

- the introduction of the ClusterClass definition in the Cluster API, which helps streamline Kubernetes cluster creation

- the ability to create custom node images using vSphere Tanzu Kubernetes Grid (TKG) Image Builder.

We found that these features could let DevOps teams save time while also increasing availability, helping pave the way for more efficient application development processes.

Understanding containers and their use in DevOps

Containers are packages of software that contain all the elements to run in a computing environment. Those elements include applications and services, libraries, and configuration files, among others. Containers do not typically contain operating system images but rather share elements of operating systems on the device—in our test cases, VMs running on PowerEdge servers. Using containers can benefit developers and DevOps applications because they are more lightweight and portable than single-function VMs.

Additionally, containers offer quick, reliable, and consistent deployments across different environments because they run as resource-isolated processes. They can run in a private data center, in the public cloud, or on a developer’s personal laptop.

An organizational advantage of containers is that they can help teams focus on their tasks: Developers can focus on application development and code issues, and operations teams can focus on the physical and virtual infrastructure. Some organizations may choose to combine developers and operations teams in a single DevOps group that manages the entire application development lifecycle, from planning to deployment and operations. In other organizations, separate development and operational teams may choose to adopt DevOps methodologies to streamline processes. For our test purposes, we assumed that a DevOps team is responsible for provisioning and managing virtual infrastructure for developers but not necessarily using that infrastructure themselves.

16th Generation Dell PowerEdge servers

16th Generation PowerEdge servers, the latest line of servers from Dell, come in multiple form factors for workload acceleration. They also include the Dell PowerEdge RAID Controller 12 (PERC 12 Series), which offers expanded support and capabilities compared to previous versions, including support for SAS4, SATA, and NVMe® drive types.

Dell PowerEdge servers, including those from the 16th Generation, offer resiliency in the form of Fault Resistant Memory (FRM).[iii] FRM creates a fault-resilient area of memory that protects the hypervisor against uncorrectable memory errors, which in turn helps safeguard the system from unplanned downtime. On the virtual side, VMware vSAN™ 8.0 allows DevOps and infrastructure teams to pool storage to create smaller fault domains.

To learn more about the latest 16th Generation Dell PowerEdge servers, visit https://www.dell.com/en-us/shop/dell-poweredge-servers/sc/servers.

To see more of our recent work with the latest Dell PowerEdge servers, visit https://www.principledtechnologies.com/portfolio-marketing/Dell/2023.

A quick look at vSphere with Tanzu

VMware created the Tanzu Kubernetes Grid (TKG) framework to simplify the creation of a Kubernetes environment. VMware includes it as a central component in many of their Tanzu offerings.[iv] vSphere with Tanzu takes TKG one step further by integrating the Tanzu Kubernetes environments with vSphere clusters. The addition of vSphere Namespaces (collections of CPU, memory, and storage quotas for running TKG clusters) allows vSphere admins to allocate resources to each Namespace to help balance developer requests with resource realities.[v]

vSphere admins can use vSphere with Tanzu to create environments in which developers can quickly and easily request and access the containers they need to develop and maintain workloads. At the same time, vSphere with Tanzu allows admins to monitor and manage resource consumption using familiar VMware tools. (To learn more about how Dell and VMware solutions can enable a self-service DevOps environment, read our report “Give DevOps teams self-service resource pools within your private infrastructure with Dell Technologies APEX cloud and storage solutions” at https://facts.pt/MO2uvKh.)

New features with VMware vSphere 8.0

As we previously noted, VMware vSphere 8.0 brings new and improved features to vSphere with Tanzu. These include the ability to deploy vSphere with Tanzu Supervisor in a zonal configuration, the inclusion of ClusterClass specification in the TKG API, and tools that let administrators customize OS images for TKG cluster worker nodes. In this section, we describe these features and how they function; later, we cover how we tested them and what our results might mean to your organization.

Deploying vSphere with Tanzu Supervisor in a zonal configuration provides high availability

In previous versions of vSphere, administrators could deploy vSphere with Tanzu Supervisor to only a single vSphere host cluster. vSphere 8.0 introduces the ability to deploy the Supervisor in a configuration spanning three Workload Availability Zones, which map directly to three respective clusters of physical hosts. This zonal deployment of the Supervisor provides high availability at the vSphere cluster layer and keeps Tanzu Kubernetes workloads running even if one cluster or availability zone is temporarily unavailable. Figure 1 shows how we set up our environment to test the functionality of Workload Availability Zones.

Figure 1: The zonal setup of our testbed. Source: Principled Technologies.

The vSphere with Tanzu Supervisor is a vSphere-managed Kubernetes control plane that operates on the VMware ESXi™ hypervisor layer and functionally replaces a standalone TKG management cluster. vSphere administrators can configure and deploy the Supervisor via the Workload Management tool in VMware vCenter Server®. Once an administrator has deployed the Supervisor cluster and associated it with a vSphere Namespace, the cluster acts as a control and management plane for the Namespace and all the TKG workload clusters it contains.

The Namespace provides role-based access control (RBAC) to the virtual infrastructure and defines the hardware resources (CPU, memory, and storage) available to the Supervisor for provisioning workload clusters. It also allows infrastructure administrators to specify the VM classes, storage policies, and Tanzu Kubernetes Releases (TKrs) available to users with access to the Namespace. Users can then provision their workload clusters as necessary to host Kubernetes applications and services in a way that the hypervisor and vSphere administrators can see.
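The quota behavior described above can be pictured with a small model. The following Python sketch is a simplified illustration only, not how vSphere enforces Namespace limits: the `Resources` type, the `fits_within` helper, and every number in it are hypothetical and chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    """A bundle of the three resource types a Namespace limits (hypothetical model)."""
    cpu_cores: int
    memory_gb: int
    storage_gb: int


def fits_within(limit: Resources, in_use: Resources, request: Resources) -> bool:
    """Return True if the request fits in what remains of every limit."""
    return (
        in_use.cpu_cores + request.cpu_cores <= limit.cpu_cores
        and in_use.memory_gb + request.memory_gb <= limit.memory_gb
        and in_use.storage_gb + request.storage_gb <= limit.storage_gb
    )


# Illustrative values only; none of these come from our testbed.
namespace_limit = Resources(cpu_cores=64, memory_gb=256, storage_gb=2000)
already_used = Resources(cpu_cores=40, memory_gb=128, storage_gb=500)
small_cluster = Resources(cpu_cores=16, memory_gb=64, storage_gb=300)
large_cluster = Resources(cpu_cores=32, memory_gb=64, storage_gb=300)

small_ok = fits_within(namespace_limit, already_used, small_cluster)
large_ok = fits_within(namespace_limit, already_used, large_cluster)
print(small_ok)  # True: 56 cores, 192 GB, and 800 GB all fit
print(large_ok)  # False: 72 cores would exceed the 64-core limit
```

In this model, a request is rejected as soon as any single resource would exceed its limit, which mirrors the idea of balancing developer requests against what the Namespace has been allocated.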

Based on our testing, having the Supervisor span three Workload Availability Zones increased the resiliency of our Tanzu Kubernetes workload clusters. We tested by deploying a web server application to a workload cluster spanning all three availability zones. Even after we powered off the physical hosts that made up one of the three Workload Availability Zones, the application remained functional and accessible. After we powered the hosts back on, the Supervisor repaired itself and brought all missing control plane and worker nodes back online automatically, without administrator intervention.

Defining clusters with the ClusterClass specification in the TKG API is easier

Another significant improvement to vSphere with Tanzu in vSphere 8.0 is the introduction of the ClusterClass resource to the TKG API. vSphere with Tanzu in vSphere 8.0 uses TKG 2, which implements the ClusterClass abstraction from the Kubernetes Cluster API and provides a modular, cloud-agnostic format for configuring and provisioning clusters and cluster lifecycle management.

Previous versions of vSphere with Tanzu used the TKG 1.x API. This API used a VMware proprietary cluster definition called tanzukubernetescluster (TKC) that was specific to vSphere. With the introduction of ClusterClass to the v1beta1 version of the open-source Kubernetes Cluster API and its implementation in the TKG 2.x API, cluster provisioning and management in vSphere with Tanzu are now more directly aligned with the broader multi-cloud Kubernetes ecosystem and provide a more portable and modular way to define clusters regardless of the specific cloud environment. The ClusterClass specification also allows users to define which Workload Availability Zones any specific workload cluster will span and is less complicated than the previous TKC cluster specification.
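To make the shape of such a definition concrete, the following Python sketch builds a Cluster API v1beta1 `Cluster` manifest with one worker node pool per Workload Availability Zone. It is a minimal illustration, not one of the definitions we tested. The cluster name, Namespace, zone names, VM class, storage class, and version string are hypothetical placeholders, and we assume the class names `tanzukubernetescluster` and `node-pool` and the `vmClass` and `storageClass` variables match the default ClusterClass in your environment; verify them before use.

```python
import json


def build_zonal_cluster(name, namespace, zones, k8s_version,
                        vm_class="example-vm-class",
                        storage_class="example-storage-policy"):
    """Return a Cluster API v1beta1 Cluster manifest as a plain dict.

    One worker node pool is created per zone, pinned with failureDomain.
    All default values are hypothetical placeholders.
    """
    node_pools = [
        {
            "class": "node-pool",          # assumed worker class name
            "name": f"node-pool-{index + 1}",
            "replicas": 1,
            "failureDomain": zone,         # pins this pool to one zone
        }
        for index, zone in enumerate(zones)
    ]
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",
        "kind": "Cluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "topology": {
                "class": "tanzukubernetescluster",  # assumed ClusterClass name
                "version": k8s_version,
                "controlPlane": {"replicas": 3},
                "workers": {"machineDeployments": node_pools},
                "variables": [
                    {"name": "vmClass", "value": vm_class},
                    {"name": "storageClass", "value": storage_class},
                ],
            }
        },
    }


manifest = build_zonal_cluster(
    name="example-cluster",
    namespace="example-namespace",
    zones=["zone-1", "zone-2", "zone-3"],
    k8s_version="v1.24.9",  # placeholder; real TKr version strings are longer
)
print(json.dumps(manifest, indent=2))
```

Because JSON is also valid YAML, the printed output could in principle be saved to a file and applied with `kubectl apply -f`, once the placeholder values are replaced with ones that exist in the target Namespace.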

Administrators can customize OS images for TKG cluster worker nodes by using new tools

vSphere 8.0 also provides tools for administrators to build their own OS images for TKG cluster worker nodes. vSphere with Tanzu includes a VMware-curated set of worker node images for specific combinations of Kubernetes releases and Linux OSes, but now administrators can create custom OS images and make them available to DevOps users via a local content library for use in provisioning workload clusters. This allows administrators to create custom OS images outside of the options distributed with TKG, including worker nodes based on Red Hat® Enterprise Linux® 8, which TKG supports but does not currently include in its default set of images. To suit the needs of their DevOps users, administrators can also use this feature to preinstall binaries and packages that the OS does not include by default.

What can the combination of 16th Generation Dell PowerEdge servers and VMware vSphere 8.0 deliver for containerized environments?

A multi-node Dell PowerEdge server cluster running VMware vSphere 8.0 could improve workload resiliency and streamline management and security of modern apps. The following sections delve into our testing and explore how the new vSphere 8.0 features could benefit developers and infrastructure teams.

About VMware vSphere 8.0

vSphere is an enterprise compute virtualization program that aims to bring “the benefits of cloud to on-premises workloads” by combining “industry-leading cloud infrastructure technology with data processing unit (DPU)- and GPU-based acceleration to boost workload performance.”[vi]

This latest version introduces the vSphere Distributed Services Engine, which enables organizations to distribute infrastructure services across compute resources available to the VMware ESXi host and offload networking functions to the DPU.[vii]

In addition to the improvements for vSphere with Tanzu and the introduction of vSphere Distributed Services Engine, the latest version of the vSphere platform offers new features for vSphere Lifecycle Manager, artificial intelligence and machine learning hardware and software, and other facets of data center operations.

To learn more about vSphere 8.0, visit https://core.vmware.com/vmware-vsphere-8.

How we tested

About the Dell PowerEdge R6625 servers we used

The PowerEdge R6625 rack server is a high-density, two-socket 1U server. According to Dell, the company designed the server for “data speed” and “HPC workloads or running multiple VDI instances,”[viii] and to serve “as the backbone of your data center.”[ix] Two AMD EPYC™ 4th Generation 9004 Series processors power these servers, delivering up to 128 cores per processor. For storage, customers can get up to 153.6 TB in the front bays from 10 2.5-inch SAS, SATA, or NVMe HDD or SSD drives, and up to 30.72 TB in the rear bays from two 2.5-inch SAS or SATA HDD or SSD drives. For memory, servers have 24 DDR5 DIMM slots to support up to 6 TB of RDIMM at speeds up to 4800 MT/s. Customers can also choose from many power and cooling options. The server and processor offer the following security features:[x]

- AMD Secure Encrypted Virtualization (SEV)

- AMD Secure Memory Encryption (SME)

- Cryptographically signed firmware

- Data at Rest Encryption (SEDs with local or external key management)

- Secure Boot

- Secured Component Verification (hardware integrity check)

- Secure Erase

- Silicon Root of Trust

- System Lockdown (requires iDRAC9 Enterprise or Datacenter)

- TPM 2.0 FIPS, CC-TCG certified, TPM 2.0 China NationZ
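The storage and memory maximums quoted before the feature list imply a per-drive and per-DIMM capacity. The short Python calculation below works these out as a rough sanity check; it assumes every bay or slot holds an identical device and that memory capacity is expressed in binary units (1 TB = 1024 GB), neither of which the spec sheet states explicitly.

```python
# Maximums quoted in the text above.
FRONT_BAY_TB, FRONT_DRIVES = 153.6, 10
REAR_BAY_TB, REAR_DRIVES = 30.72, 2
MAX_MEMORY_TB, DIMM_SLOTS = 6, 24

# Assumption: all drives in a bay group have the same capacity.
front_per_drive_tb = round(FRONT_BAY_TB / FRONT_DRIVES, 2)
rear_per_drive_tb = round(REAR_BAY_TB / REAR_DRIVES, 2)

# Assumption: memory uses binary units (1 TB = 1024 GB).
per_dimm_gb = MAX_MEMORY_TB * 1024 / DIMM_SLOTS

print(f"Front bays: {front_per_drive_tb} TB per drive")
print(f"Rear bays:  {rear_per_drive_tb} TB per drive")
print(f"Memory:     {per_dimm_gb:.0f} GB per DIMM")
```

Under these assumptions, both bay groups reach their maximum with the same drive capacity, and the memory maximum corresponds to the same module size in all 24 slots.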

Table 1 shows the technical specifications of the six 16th Generation PowerEdge R6625 servers we tested. Three of the servers had one configuration, and the other three had a different one. We clustered the similarly configured servers together.

Table 1: Specifications of the 16th Generation Dell PowerEdge R6625 servers we tested. Source: Principled Technologies.

| | Cluster 1 | Cluster 2 |
| --- | --- | --- |
| Hardware | 3x Dell PowerEdge R6625 | 3x Dell PowerEdge R6625 |
| Processor | 2x AMD EPYC 9554 64-core processor, 128 cores, 3.1 GHz | 2x AMD EPYC 9554 64-core processor, 128 cores, 3.1 GHz |
| Disks (vSAN) | 4x 3.2TB Dell Ent NVMe v2 AGN MU U.2 2x 600GB Seagate ST600MM0069 | 4x 6.4TB Dell Ent NVMe v2 AGN MU U.2 |
| Disks (OS) | 2x 600GB Seagate ST600MM0069 | 2x servers: 2x 600GB Seagate ST600MM0069; 1x server: 2x 600GB Toshiba AL15SEB060NY |
| Total memory in system (GB) | 128 | 128 |
| Operating system name and version/build number | VMware ESXi, 8.0.1, 21495797 | VMware ESXi, 8.0.1, 21495797 |

In addition to the 16th Generation Dell PowerEdge R6625 servers, we included previous-generation PowerEdge R7525 servers in our testbed (see Table 2). Our use of the older servers in testing reflects actual use cases where organizations might include them for less-critical applications or as failover options.

Table 2: Specifications of the Dell PowerEdge R7525 servers we tested. Source: Principled Technologies.

| | Cluster 3 |
| --- | --- |
| Hardware | 3x Dell PowerEdge R7525 |
| Processor | 2x AMD EPYC 7513 32-core processor, 64 cores, 2.6 GHz |
| Disks (vSAN) | 4x 3.2TB Dell Ent NVMe v2 AGN MU U.2 |
| Disks (OS) | 2x servers: 2x 240GB Micron MTFDDAV240TDU; 1x server: 2x 240GB Intel SSDSCKKB240G8R |
| Total memory in system (GB) | 512 |
| Operating system name and version/build number | VMware ESXi, 8.0.1, 21495797 |

For more information on the servers we used in testing, see the science behind this report.

To learn more about the Dell PowerEdge R6625, visit https://www.dell.com/en-us/shop/cty/pdp/spd/poweredge-r6625/pe_r6625_tm_vi_vp_sb.

Setting up the VMware vCenter environment

We started with six Dell PowerEdge R6625 and three Dell PowerEdge R7525 servers and installed the VMware vSphere 8.0.1 release of the Dell-customized VMware ESXi image on each server. To support our environment, we deployed the latest version of VMware vCenter on a separate server. We then created three separate vSAN-enabled vSphere clusters, as we defined in Tables 1 and 2, and deployed vSphere with Tanzu with a zonal Supervisor cluster spanning the three vSphere clusters, each set as a vSphere Zone. Figure 2 shows the details of our testbed.

Figure 2: Our testbed in detail. Source: Principled Technologies.

Testing Workload Availability Zones

To demonstrate the utility of a zonal Supervisor deployment spanning three Workload Availability Zones, we deployed a simple “hello world” NGINX web server application to a workload cluster that spanned all three zones. Our deployment defined three replicas of the application, which the Supervisor distributed across the three zones automatically. Then, to simulate a system failure—such as might occur with a power outage—we powered off the physical hosts of one vSphere cluster mapped as an availability zone.

Even after this loss of power on the cluster, the workload continued to function. The missing Supervisor and worker nodes and their services automatically came back online after we powered on the hosts and they reconnected to the vCenter Server.
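The reasoning behind this result can be shown with a toy model. The Python sketch below spreads three replicas across three zones and then removes one zone. It is a simplified illustration with hypothetical zone names; the round-robin placement is our assumption for the example and does not represent the Supervisor's actual scheduling logic.

```python
from collections import Counter


def spread_replicas(replica_count, zones):
    """Assign replicas to zones round-robin; returns one zone name per replica."""
    return [zones[i % len(zones)] for i in range(replica_count)]


def surviving_replicas(placement, failed_zones):
    """Return the placements that are not in a failed zone."""
    return [zone for zone in placement if zone not in failed_zones]


zones = ["zone-1", "zone-2", "zone-3"]        # hypothetical zone names
placement = spread_replicas(3, zones)          # three replicas, as in our test
remaining = surviving_replicas(placement, {"zone-2"})

print(Counter(placement))
print(f"{len(remaining)} of {len(placement)} replicas still serving")
```

With one replica per zone, losing any single zone leaves two replicas available, which is why the application can keep serving requests while the failed zone recovers.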

For clusters hosting any kind of key workload, whether user-facing or internal, availability is critical. If a cluster goes down completely—whether due to natural disaster, human error, or malicious intent—the resulting downtime can have severe consequences, from hurting productivity to losing revenue. As an example for our use case, downtime for containerized microservices that are part of an ecommerce app could lead to miscalculations in inventory or the inability to process an order, potentially causing customer dissatisfaction and lost revenue. Workload Availability Zones offer a resiliency that can ease the minds of both development and infrastructure teams by ensuring that containerized applications and services have the uptime to meet usage demands.

Testing ClusterClass

We used the v1beta1 Cluster API implementation of ClusterClass to deploy workload clusters using TKG 2.0 on a multi-zone vSphere with Tanzu Supervisor. The Cluster API, implemented in the vSphere with Tanzu environment as part of TKG, supports provisioning and operating Kubernetes clusters across different types of infrastructure, including vSphere, Amazon Web Services, and Microsoft Azure, among others. We used YAML configuration files in our test environment to define and test two sample ClusterClass cluster definitions representing different options available to users when provisioning a workload cluster in vSphere with Tanzu: one spanning all three Workload Availability Zones, and one that uses the Calico Container Network Interface (CNI) rather than the default Antrea CNI.

The implementation of the ClusterClass object in the TKG 2 API replaces the previous tanzukubernetescluster resource used in TKG 1.x and brings Tanzu cluster definitions into a more modular and cloud-agnostic format that aligns with user expectations and helps with portability across diverse cloud environments. Although we did not test the full extent of the feature, we found that it worked well in our testing.

Testing node image customization on the workload cluster

Using the VMware Tanzu Image Builder, we created a custom Ubuntu 20.04 image for use with Kubernetes v1.24.9 and vSphere with Tanzu. We added a simple utility package (curl) that the base Ubuntu installation does not include by default. To confirm that vSphere used the custom OS image on the provisioned cluster’s nodes as we expected, we connected to one of the worker nodes via SSH. When we did so, we saw that curl was installed, which confirmed that the feature had worked as intended.
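A check like the one we performed by hand can also be scripted. The Python sketch below is a minimal example, not part of our test procedure: it reports which expected binaries are missing from the PATH of the machine it runs on. It assumes a Python 3 interpreter is available on the worker node, and the `missing_binaries` helper is a hypothetical name introduced for this illustration.

```python
import shutil


def missing_binaries(expected):
    """Return the names in `expected` that are not found on this machine's PATH."""
    return [name for name in expected if shutil.which(name) is None]


# Binaries the custom image is expected to include; curl matches our test.
missing = missing_binaries(["curl"])
if missing:
    print(f"missing: {missing}")
else:
    print("all expected binaries present")
```

Extending the list passed to `missing_binaries` would let a team verify every package it preinstalls in a custom image with a single run.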

This feature allows teams to build OS images to meet the specific needs of their applications or services. They can then add the custom-built OS images as a custom TKr in vSphere with Tanzu to provision workload clusters.

DevOps teams can create custom machine images for the management and workload cluster nodes to use as VM templates. Each custom machine image packages a base operating system, a Kubernetes version, and any additional customizations into an image that runs on vSphere. However, custom images must use the operating systems that vSphere with Tanzu supports; currently, it supports Ubuntu 20.04, Ubuntu 18.04, Red Hat Enterprise Linux 8, Photon OS 3, and Windows 2019.[xi]

This kind of customization could save time for DevOps teams and help maintain Tanzu environmental consistency. It also allows users to predefine binaries included on an OS image rather than install them afterward.

Conclusion

The combination of 16th Generation Dell PowerEdge servers and VMware vSphere 8.0 provides a resilient and efficient solution for organizations running containerized applications. Upgrading to the hardware and software combination provides helpful features that increase workload availability and streamline management. We found Workload Availability Zones, ClusterClass, and node image customization features to function well and as we expected, thus aiding both development and infrastructure teams in a DevOps context. Deploying vSphere 8.0 on Dell PowerEdge servers enables organizations to take advantage of the benefits of containerization and modernize their IT infrastructure.

This project was commissioned by Dell Technologies.

August 2023

Principled Technologies is a registered trademark of Principled Technologies, Inc.

All other product names are the trademarks of their respective owners.

[i] Rosbach, Felix, “Why we use Containers and Kubernetes - an Overview,” accessed July 10, 2023, https://insights.comforte.com/why-we-use-containers-and-kubernetes-an-overview.

[ii] Rosbach, Felix, “Why we use Containers and Kubernetes - an Overview.”

[iii] Dell Technologies, “Dell Fault Resilient Memory,” accessed June 28, 2023, https://dl.dell.com/content/manual19947903-dell-fault-resilient-memory.pdf?language=en-us.

[iv] VMware, “VMware Tanzu Kubernetes Grid Documentation,” accessed July 7, 2023, https://docs.vmware.com/en/VMware-Tanzu-Kubernetes-Grid/index.html.

[v] VMware, “What is a vSphere Namespace?,” accessed July 7, 2023, https://docs.vmware.com/en/VMware-vSphere/8.0/vsphere-with-tanzu-concepts-planning/GUID-638682AE-5EC9-47AA-A71C-0BECF9DC27A1.html.

[vi] VMware, “VMware vSphere,” accessed June 29, 2023, https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/vsphere/vmw-vsphere-datasheet.pdf.

[vii] VMware, “Introducing VMware vSphere Distributed Services Engine and Networking Acceleration by Using DPUs,” accessed June 29, 2023, https://docs.vmware.com/en/VMware-vSphere/8.0/vsphere-esxi-installation/GUID-EC3CE886-63A9-4FF0-B79F-111BCB61038F.html.

[viii] Dell Technologies, “PowerEdge R6625 Rack Server,” accessed June 28, 2023, https://www.dell.com/en-us/shop/cty/pdp/spd/poweredge-r6625/pe_r6625_tm_vi_vp_sb.

[ix] Dell Technologies, “PowerEdge R6625: Breakthrough performance,” accessed June 28, 2023, https://www.delltechnologies.com/asset/en-us/products/servers/technical-support/poweredge-r6625-spec-sheet.pdf.

[x] Dell Technologies, “PowerEdge R6625: Breakthrough performance.”

[xi] VMware, “Tanzu Kubernetes Releases and Custom Node Images,” accessed July 10, 2023, https://docs.vmware.com/en/VMware-Tanzu-Kubernetes-Grid/2/about-tkg/tkr.html.

Author: Principled Technologies