Quantifying Performance of Dell EMC PowerEdge R7525 Servers with NVIDIA A100 GPUs for Deep Learning Inference

Tue, 17 Nov 2020 21:10:22 -0000


The Dell EMC PowerEdge R7525 server delivers exceptional MLPerf Inference v0.7 results, which indicate that:

- Dell Technologies holds the #1 spot in performance per GPU with the NVIDIA A100-PCIe GPU on the DLRM-99 Server scenario

- Dell Technologies holds the #1 spot in performance per GPU with the NVIDIA A100-PCIe GPU on the DLRM-99.9 Server scenario

- Dell Technologies holds the #1 spot in performance per GPU with the NVIDIA A100-PCIe GPU on the ResNet-50 Server scenario

Summary

In this blog, we provide the performance numbers of our recently released Dell EMC PowerEdge R7525 server with two NVIDIA A100 GPUs across all the MLPerf Inference v0.7 benchmarks. Our results indicate that the PowerEdge R7525 server is an excellent choice for inference workloads. It delivers strong performance across the tasks in the MLPerf Inference v0.7 benchmark: image classification, object detection, medical image segmentation, speech to text, language processing, and recommendation.

The PowerEdge R7525 server is a two-socket, 2U rack server that is designed to run workloads using flexible I/O and network configurations. The PowerEdge R7525 server features the 2nd Gen AMD EPYC processor, supports up to 32 DIMMs, has PCI Express (PCIe) Gen 4.0-enabled expansion slots, and offers a choice of network interface technologies.

The following figure shows the front view of the PowerEdge R7525 server:

Figure 1. Dell EMC PowerEdge R7525 server

The PowerEdge R7525 server is designed to handle demanding workloads and AI applications, such as AI training for different kinds of models and inference for different deployment scenarios. The PowerEdge R7525 server supports various accelerators such as NVIDIA T4, NVIDIA V100S, NVIDIA RTX, and NVIDIA A100 GPUs. The following sections compare the performance of NVIDIA A100 GPUs with NVIDIA T4 and NVIDIA RTX GPUs using MLPerf Inference v0.7 as a benchmark.

The following table provides details of the PowerEdge R7525 server configuration and software environment for MLPerf Inference v0.7:

| Component | Description |
| --- | --- |
| Processor | AMD EPYC 7502 32-Core Processor |
| Memory | 512 GB (32 GB 3200 MT/s * 16) |
| Local disk | 2x 1.8 TB SSD (No RAID) |
| Operating system | CentOS Linux release 8.1 |
| GPU | NVIDIA A100-PCIe-40G, T4-16G, and RTX8000 |
| CUDA Driver | 450.51.05 |
| CUDA Toolkit | 11.0 |
| Other CUDA-related libraries | TensorRT 7.2, CUDA 11.0, cuDNN 8.0.2, cuBLAS 11.2.0, libjemalloc2, cub 1.8.0, tensorrt-laboratory mlperf branch |
| Other software stack | Docker 19.03.12, Python 3.6.8, GCC 5.5.0, ONNX 1.3.0, TensorFlow 1.13.1, PyTorch 1.1.0, torchvision 0.3.0, PyCUDA 2019.1, SacreBLEU 1.3.3, simplejson, OpenCV 4.1.1 |
| System profiles | Performance |

For more information about how to run the benchmark, see Running the MLPerf Inference v0.7 Benchmark on Dell EMC Systems.

MLPerf Inference v0.7 performance results

The MLPerf inference benchmark measures how fast a system can perform machine learning (ML) inference using a trained model in various deployment scenarios. The following results represent the Offline and Server scenarios of the MLPerf Inference benchmark. For more information about different scenarios, models, datasets, accuracy targets, and latency constraints in MLPerf Inference v0.7, see Deep Learning Performance with MLPerf Inference v0.7 Benchmark.

In the MLPerf inference evaluation framework, the LoadGen load generator sends inference queries to the system under test, in our case, the PowerEdge R7525 server with various GPU configurations. The system under test uses a backend (for example, TensorRT, TensorFlow, or PyTorch) to perform inferencing and sends the results back to LoadGen.

MLPerf has identified four different scenarios that enable representative testing of a wide variety of inference platforms and use cases. In this blog, we discuss the Offline and Server scenario performance. The main differences between these scenarios are based on how the queries are sent and received:

- Offline: One query with all samples is sent to the system under test. The system under test can send the results back once or multiple times in any order. The performance metric is samples per second.

- Server: Queries are sent to the system under test following a Poisson distribution (to model real-world random events). One query has one sample. The performance metric is queries per second (QPS) within the latency bound.

Note: The performance metrics for both the Offline and Server scenarios represent the throughput of the system.
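To make the Server scenario's arrival pattern concrete, the following is a minimal sketch of how Poisson-distributed query arrivals can be simulated. It is not LoadGen's actual implementation; the function name `poisson_arrival_times`, the target rate of 100 queries per second, and the query count are assumptions chosen for illustration only.

```python
import random


def poisson_arrival_times(target_qps, num_queries, seed=0):
    """Return cumulative send times (in seconds) for a Poisson arrival process.

    Hypothetical helper for illustration; not part of MLPerf LoadGen.
    """
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    times = []
    now = 0.0
    for _ in range(num_queries):
        # In a Poisson process, the gaps between arrivals are exponentially
        # distributed with mean 1 / target_qps.
        now += rng.expovariate(target_qps)
        times.append(now)
    return times


# Illustrative values only (not taken from the benchmark results).
arrivals = poisson_arrival_times(target_qps=100.0, num_queries=10_000)
observed_qps = len(arrivals) / arrivals[-1]
print(f"observed arrival rate: {observed_qps:.1f} queries/s")
```

Each simulated query would carry one sample, and the gaps between queries vary randomly even though the average rate stays close to the target.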

In all the benchmarks, two NVIDIA A100 GPUs outperform eight NVIDIA T4 GPUs and three NVIDIA RTX8000 GPUs for the following models:

- ResNet-50 image classification model

- SSD-ResNet34 object detection model

- RNN-T speech recognition model

- BERT language processing model

- DLRM recommender model

- 3D U-Net medical image segmentation model

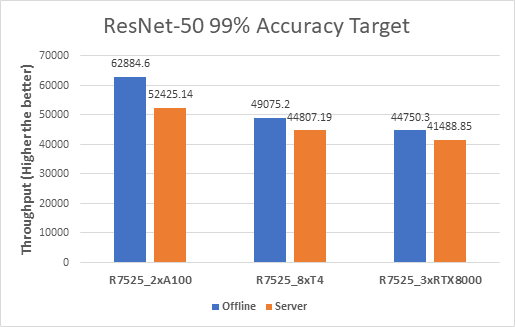

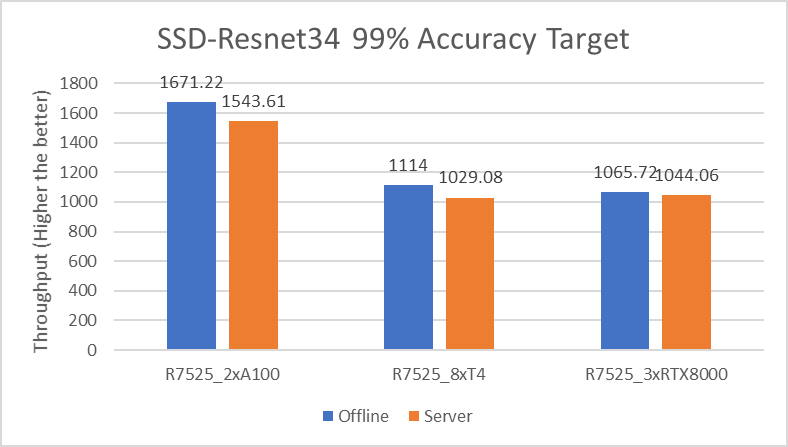

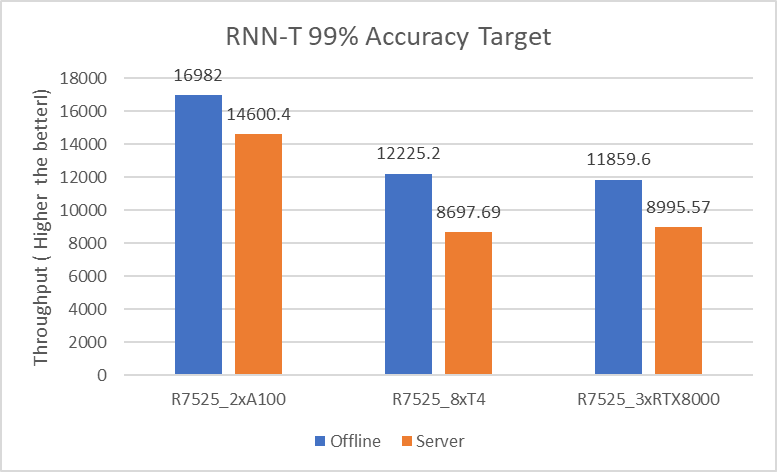

The following graphs show PowerEdge R7525 server performance with two NVIDIA A100 GPUs, eight NVIDIA T4 GPUs, and three NVIDIA RTX8000 GPUs with 99% accuracy target benchmarks and 99.9% accuracy targets for applicable benchmarks:

- 99% accuracy (default accuracy) target benchmarks: ResNet-50, SSD-Resnet34, and RNN-T

- 99% and 99.9% accuracy (high accuracy) target benchmarks: DLRM, BERT, and 3D-Unet

99% accuracy target benchmarks

ResNet-50

The following figure shows results for the ResNet-50 model:

Figure 2. ResNet-50 Offline and Server inference performance

From the graph, we can derive the per-GPU values. Because throughput scales linearly with the number of GPUs, we divide the system throughput (for all the GPUs combined) by the number of GPUs to get the per-GPU results.
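The per-GPU derivation described above can be sketched as follows. The helper name `per_gpu_throughput` and the throughput value are placeholders for illustration; the actual throughput values appear only in the figure and are not reproduced here.

```python
def per_gpu_throughput(system_throughput, gpu_count):
    """Divide system throughput by GPU count, assuming linear scaling."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be a positive integer")
    return system_throughput / gpu_count


# Placeholder value, NOT a measured result from this blog.
example_system_throughput = 60_000.0  # samples per second for the whole system
example_per_gpu = per_gpu_throughput(example_system_throughput, gpu_count=2)
print(f"per-GPU throughput: {example_per_gpu:.1f} samples/s")
```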

SSD-Resnet34

The following figure shows the results for the SSD-Resnet34 model:

Figure 3. SSD-Resnet34 Offline and Server inference performance

RNN-T

The following figure shows the results for the RNN-T model:

Figure 4. RNN-T Offline and Server inference performance

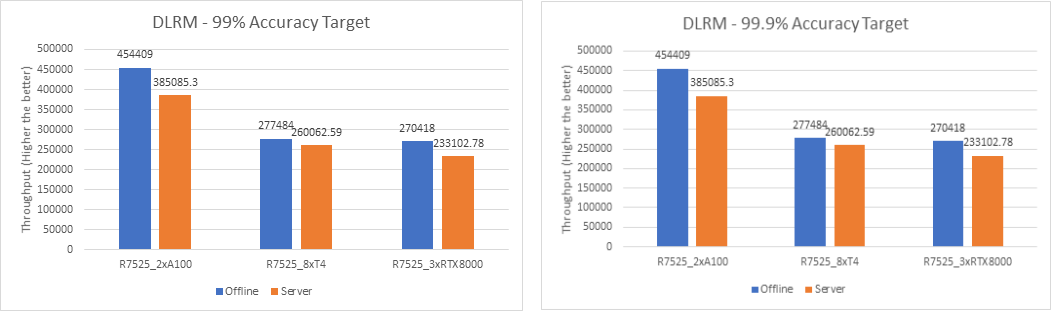

99.9% accuracy target benchmarks

DLRM

The following figures show the results for the DLRM model with 99% and 99.9% accuracy:

Figure 5. DLRM Offline and Server Scenario inference performance – 99% and 99.9% accuracy

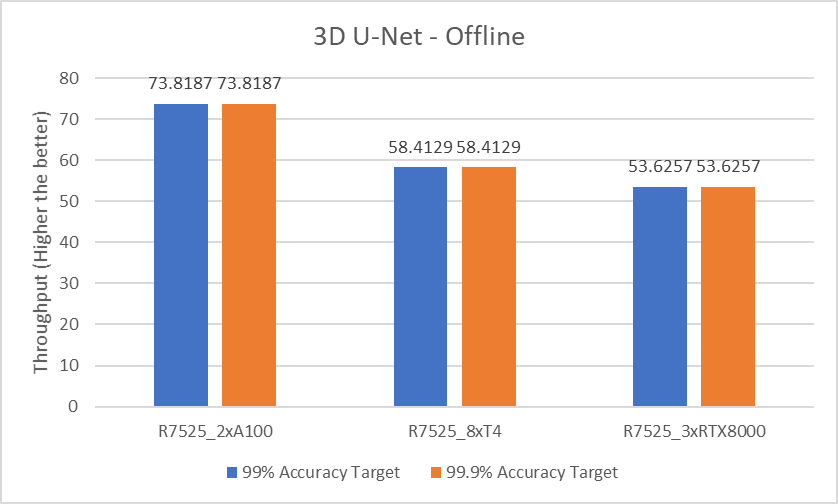

For the DLRM recommender and 3D U-Net medical image segmentation (see Figure 7) models, the 99% and 99.9% accuracy targets yield the same throughput: the configuration that satisfies the 99.9% accuracy constraint runs at the same throughput as the 99% configuration.

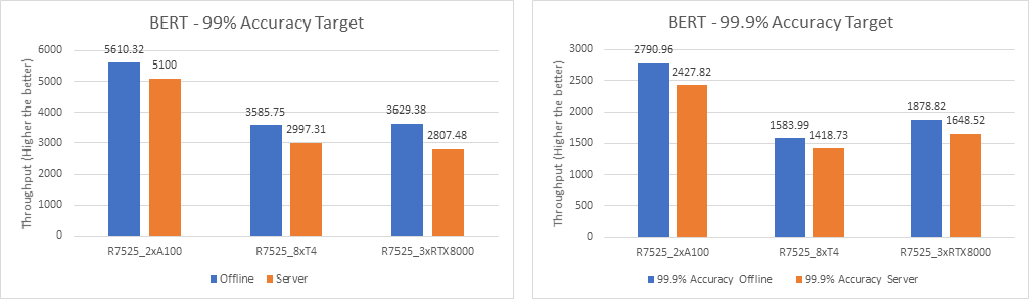

BERT

The following figures show the results for the BERT model with 99% and 99.9% accuracy:

Figure 6. BERT Offline and Server inference performance – 99% and 99.9% accuracy

For the BERT language processing model, two NVIDIA A100 GPUs outperform eight NVIDIA T4 GPUs and three NVIDIA RTX8000 GPUs. However, the performance of three NVIDIA RTX8000 GPUs is a little better than that of eight NVIDIA T4 GPUs.

3D U-Net

For the 3D U-Net medical image segmentation model, only the Offline scenario benchmark is available.

The following figure shows the results for the 3D U-Net model Offline scenario:

Figure 7. 3D U-Net Offline inference performance

Because 3D U-Net has only an Offline scenario benchmark, the preceding graph shows Offline results only.

The following table compares the throughput between two NVIDIA A100 GPUs, eight NVIDIA T4 GPUs, and three NVIDIA RTX8000 GPUs with 99% accuracy target benchmarks and 99.9% accuracy targets:

| Model | Scenario | Accuracy | 2 x A100 GPUs vs 8 x T4 GPUs | 2 x A100 GPUs vs 3 x RTX8000 GPUs |
| --- | --- | --- | --- | --- |
| ResNet-50 | Offline | 99% | 5.21x | 2.10x |
| ResNet-50 | Server | 99% | 4.68x | 1.89x |
| SSD-Resnet34 | Offline | 99% | 6.00x | 2.35x |
| SSD-Resnet34 | Server | 99% | 5.99x | 2.21x |
| RNN-T | Offline | 99% | 5.55x | 2.14x |
| RNN-T | Server | 99% | 6.71x | 2.43x |
| DLRM | Offline | 99% | 6.55x | 2.52x |
| DLRM | Server | 99% | 5.92x | 2.47x |
| DLRM | Offline | 99.9% | 6.55x | 2.52x |
| DLRM | Server | 99.9% | 5.92x | 2.47x |
| BERT | Offline | 99% | 6.26x | 2.31x |
| BERT | Server | 99% | 6.80x | 2.72x |
| BERT | Offline | 99.9% | 7.04x | 2.22x |
| BERT | Server | 99.9% | 6.84x | 2.20x |
| 3D U-Net | Offline | 99% | 5.05x | 2.06x |
| 3D U-Net | Offline | 99.9% | 5.05x | 2.06x |
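The ratios in the preceding table compare whole systems with different GPU counts. As a rough sketch, they can be converted to per-GPU ratios under the linear-scaling assumption stated earlier in this blog. The helper name `per_gpu_ratio` is hypothetical, and the per-GPU figures it prints are derived arithmetic, not separately measured results.

```python
def per_gpu_ratio(system_ratio, a100_count, other_count):
    """Convert a system-level throughput ratio into a per-GPU ratio.

    Assumes throughput scales linearly with GPU count on both systems.
    """
    return system_ratio * other_count / a100_count


# System-level ratios taken from the ResNet-50 Offline row of the table.
vs_t4 = per_gpu_ratio(5.21, a100_count=2, other_count=8)
vs_rtx8000 = per_gpu_ratio(2.10, a100_count=2, other_count=3)

print(f"One A100 vs one T4 (ResNet-50 Offline): {vs_t4:.2f}x")
print(f"One A100 vs one RTX8000 (ResNet-50 Offline): {vs_rtx8000:.2f}x")
```

The same conversion applies to any other row by substituting that row's system-level ratios.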

Conclusion

With support for NVIDIA A100, NVIDIA T4, or NVIDIA RTX8000 GPUs, the Dell EMC PowerEdge R7525 server is an exceptional choice for various workloads that involve deep learning inference. In particular, the higher throughput that we observed with NVIDIA A100 GPUs translates to performance gains and faster business value for inference applications.

The Dell EMC PowerEdge R7525 server with two NVIDIA A100 GPUs delivers strong performance for various inference workloads, whether in a batch inference setting such as the Offline scenario or an online inference setting such as the Server scenario.

Next steps

In future blogs, we will discuss sizing the system (server and GPU configurations) correctly based on the type of workload (area and task).

Related Blog Posts

Unveiling the Power of the PowerEdge XE9680 Server on the GPT-J Model from MLPerf™ Inference

Tue, 16 Jan 2024 18:30:32 -0000


Abstract

For the first time, the latest release of the MLPerf™ inference v3.1 benchmark includes the GPT-J model to represent large language model (LLM) performance on different systems. As a key player in the MLPerf consortium since version 0.7, Dell Technologies is back with exciting updates about the recent submission for the GPT-J model in MLPerf Inference v3.1. In this blog, we break down what these new numbers mean and present the improvements that Dell Technologies achieved with the Dell PowerEdge XE9680 server.

MLPerf inference v3.1

MLPerf inference is a standardized test for machine learning (ML) systems, allowing users to compare performance across different types of computer hardware. The test helps determine how well models, such as GPT-J, perform on various machines. Previous blogs provide a detailed MLPerf inference introduction. For in-depth details, see Introduction to MLPerf inference v1.0 Performance with Dell Servers. For step-by-step instructions for running the benchmark, see Running the MLPerf inference v1.0 Benchmark on Dell Systems. Inference version v3.1 is the seventh inference submission in which Dell Technologies has participated. The submission shows the latest system performance for different deep learning (DL) tasks and models.

Dell PowerEdge XE9680 server

The PowerEdge XE9680 server is Dell’s latest two-socket, 6U air-cooled rack server that is designed for training and inference for the most demanding ML and DL large models.

Figure 1. Dell PowerEdge XE9680 server

Key system features include:

- Two 4th Gen Intel Xeon Scalable Processors

- Up to 32 DDR5 DIMM slots

- Eight NVIDIA HGX H100 SXM 80 GB GPUs

- Up to 10 PCIe Gen5 slots to support the latest Gen5 PCIe devices and networking, enabling flexible networking design

- Up to eight U.2 SAS4/SATA SSDs (with fPERC12)/ NVMe drives (PSB direct) or up to 16 E3.S NVMe drives (PSB direct)

- A design to train and inference the most demanding ML and DL large models and run compute-intensive HPC workloads

The following figure shows a single NVIDIA H100 SXM GPU:

Figure 2. NVIDIA H100 SXM GPU

GPT-J model for inference

Language models take tokens as input and predict the probability of the next token or tokens. This method is widely used for essay generation, code development, language translation, summarization, and even understanding genetic sequences. The GPT-J model in MLPerf inference v3.1 has 6 billion parameters and performs text summarization tasks on the CNN-DailyMail dataset. The model has 28 transformer layers and a sequence length of 2048 tokens.

Performance updates

The official MLPerf inference v3.1 results for all Dell systems are published on https://mlcommons.org/benchmarks/inference-datacenter/. The PowerEdge XE9680 system ID is 3.1-0069.

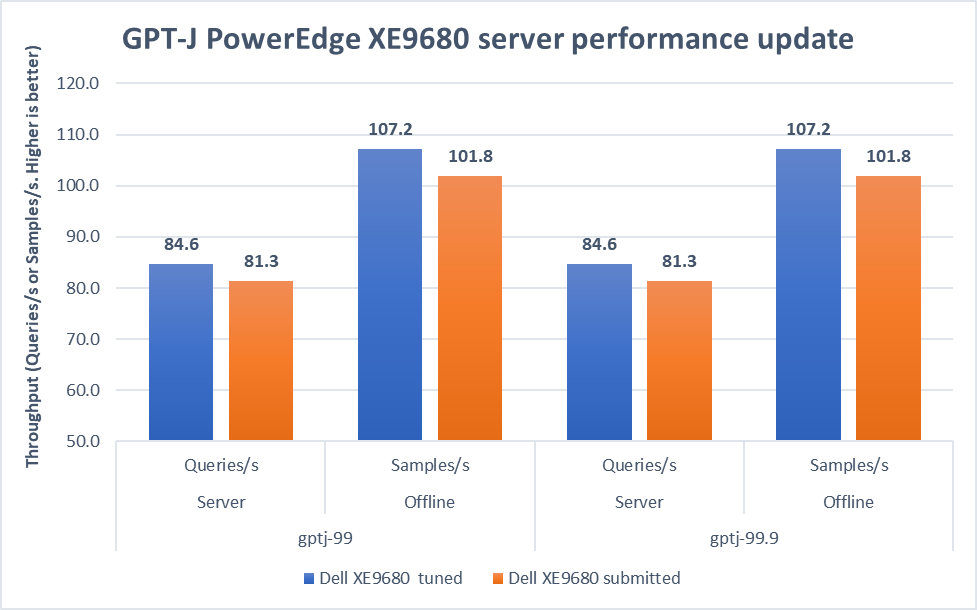

After submitting the GPT-J model, we applied the latest firmware updates to the PowerEdge XE9680 server. The following figure shows that performance improved as a result:

Figure 3. Improvement of the PowerEdge XE9680 server on GPT-J Datacenter 99 and 99.9, Server and Offline scenarios [1]

In both the 99 and 99.9 Server scenarios, the performance increased from 81.3 to an impressive 84.6. This 4.1 percent improvement showcases the server's capability under randomly arriving queries within the MLPerf-defined latency restriction. In the Offline scenarios, the performance saw a notable 5.3 percent boost from 101.8 to 107.2. These results mean that the server is even more efficient and capable of handling batch-based LLM workloads.
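The percentages quoted above follow from the before and after values in the text. A small sketch of the arithmetic, with the helper name `percent_improvement` chosen for illustration:

```python
def percent_improvement(before, after):
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


# Before and after values as quoted in the text for the PowerEdge XE9680 server.
server_gain = percent_improvement(81.3, 84.6)
offline_gain = percent_improvement(101.8, 107.2)

print(f"Server scenarios:  {server_gain:.1f} percent")
print(f"Offline scenarios: {offline_gain:.1f} percent")
```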

Note: For PowerEdge XE9680 server configuration details, see https://github.com/mlcommons/inference_results_v3.1/blob/main/closed/Dell/systems/XE9680_H100_SXM_80GBx8_TRT.json

Conclusion

This blog focuses on the updates of the GPT-J model in the v3.1 submission, continuing the journey of Dell’s experience with MLPerf inference. We highlighted the improvements made to the PowerEdge XE9680 server, showing Dell's commitment to pushing the limits of ML benchmarks. As technology evolves, Dell Technologies remains a leader, constantly innovating and delivering standout results.

[1] Unverified MLPerf® v3.1 Inference Closed GPT-J. Result not verified by MLCommons Association.

The MLPerf name and logo are registered and unregistered trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use is strictly prohibited. See www.mlcommons.org for more information.

Comparison of Top Accelerators from Dell Technologies’ MLPerf™ Inference v3.0 Submission

Fri, 21 Apr 2023 21:43:39 -0000


Abstract

Dell Technologies recently submitted results to MLPerf™ Inference v3.0 in the closed division. This blog highlights the NVIDIA H100 PCIe GPU and compares the results to the NVIDIA A100 PCIe GPU with the PCIe form factor held constant.

Introduction

The MLPerf Inference v3.0 submission falls under the benchmarking pillar of the MLCommons™ consortium, whose objective is to make fair comparisons across server configurations. Submissions that are made to the closed division allow an equitable comparison of the systems.

This blog highlights the closed division submissions that Dell Technologies made with the NVIDIA A100 GPU using the PCIe (Peripheral Component Interconnect Express) form factor. PCIe is an interface standard for connecting various high-speed components in hardware such as a computer or a server. Servers include a certain number of PCIe slots in which to insert GPUs or other add-in cards. Note that the slots come in different physical configurations that indicate the number of lanes available for data to travel to and from the PCIe card. The NVIDIA H100 GPU is the latest NVIDIA GPU, with NVIDIA AI Enterprise included; it is a dual-slot, air-cooled PCIe generation 5.0 GPU. This GPU has a memory bandwidth of roughly 2,000 gigabytes per second and supports up to seven Multi-Instance GPU (MIG) instances at 10 gigabytes each. The NVIDIA A100 80 GB GPU is a dual-slot PCIe generation 4.0 GPU with a memory bandwidth of roughly 2,000 gigabytes per second.

NVIDIA H100 PCIe GPU and NVIDIA A100 PCIe GPU comparison

In addition to making a submission with the NVIDIA A100 GPU, Dell Technologies made a submission with the NVIDIA H100 GPU. To make a fair comparison, the systems were identical and the PCIe form factor was held constant.

| Platform | Dell PowerEdge R750xa (4x A100-PCIe-80GB, TensorRT) | Dell PowerEdge R750xa (4x H100-PCIe-80GB, TensorRT) |
| --- | --- | --- |
| Round | V3.0 | V3.0 |
| MLPerf System ID | R750xa_A100_PCIe_80GBx4_TRT | R750xa_H100_PCIe_80GBx4_TRT |
| Operating system | CentOS 8.2 | CentOS 8.2 |
| CPU | Intel Xeon Gold 6338 CPU @ 2.00 GHz | Intel Xeon Gold 6338 CPU @ 2.00 GHz |
| Memory | 1 TB | 1 TB |
| GPU | NVIDIA A100-PCIe-80GB | NVIDIA H100-PCIe-80GB |
| GPU form factor | PCIe | PCIe |
| GPU memory configuration | HBM2e | HBM2e |
| GPU count | 4 | 4 |
| Software stack | TensorRT 8.6, CUDA 12.0, cuDNN 8.8.0, Driver 525.85.12, DALI 1.17.0 | TensorRT 8.6, CUDA 12.0, cuDNN 8.8.0, Driver 525.60.13, DALI 1.17.0 |

Table 1: Software stack of submissions made on NVIDIA A100 PCIe and NVIDIA H100 PCIe GPUs for MLPerf Inference v3.0 on the Dell PowerEdge R750xa server

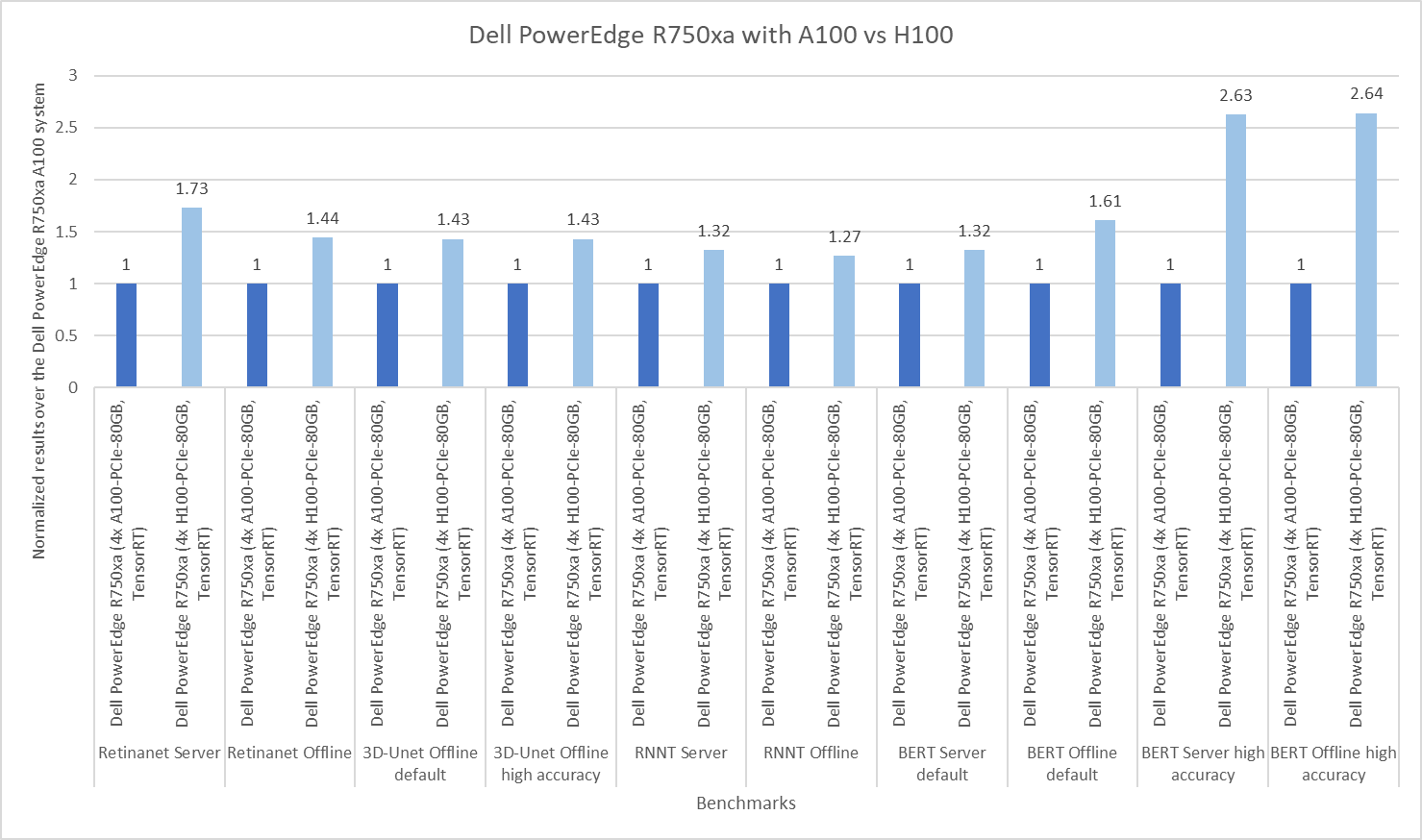

In the following figure, the per-card numbers are normalized to the NVIDIA A100 GPU results to show a readable comparison of the GPUs on the same system. Across object detection, medical image segmentation, speech to text, and natural language processing, the latest NVIDIA H100 GPU outperforms its predecessor in all categories. Note the outstanding performance of the Dell PowerEdge R750xa server with NVIDIA H100 GPUs on the BERT benchmark in the high accuracy mode. With the advancements in generative artificial intelligence, the Dell PowerEdge R750xa server is a versatile, reliable, and high-performing platform.

Figure 1: Normalized per GPU comparison of NVIDIA A100 and NVIDIA H100 GPUs on the Dell PowerEdge R750xa server
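As a rough illustration of the normalization used for this figure, each per-GPU result can be divided by the A100 result for the same benchmark so that the A100 baseline becomes 1.0. The function name `normalize_to_baseline` and the throughput numbers are placeholders for illustration, not values from the submission.

```python
def normalize_to_baseline(results, baseline_key):
    """Scale every result so that the baseline entry equals 1.0."""
    baseline = results[baseline_key]
    return {name: value / baseline for name, value in results.items()}


# Placeholder per-GPU throughputs, NOT measured results from this blog.
example_per_gpu = {"A100": 100.0, "H100": 150.0}
normalized = normalize_to_baseline(example_per_gpu, baseline_key="A100")

for gpu, ratio in normalized.items():
    print(f"{gpu}: {ratio:.2f}x of A100")
```

Normalizing this way lets benchmarks with very different absolute throughputs share one axis.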

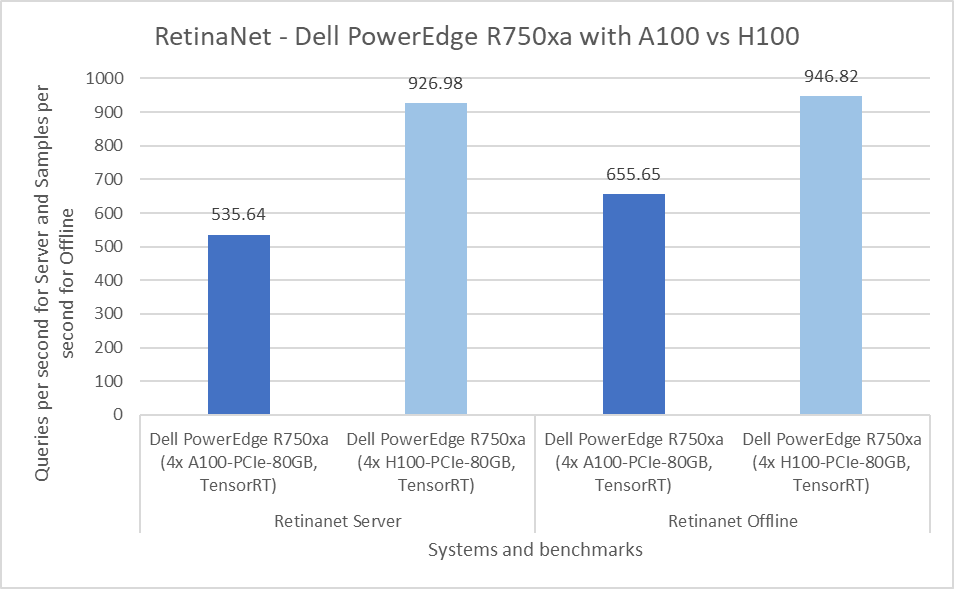

The following figures show absolute numbers for a comparison of the NVIDIA H100 and NVIDIA A100 GPUs.

Figure 2: Per GPU comparison of NVIDIA A100 and NVIDIA H100 GPUs for RetinaNet on the PowerEdge R750xa server

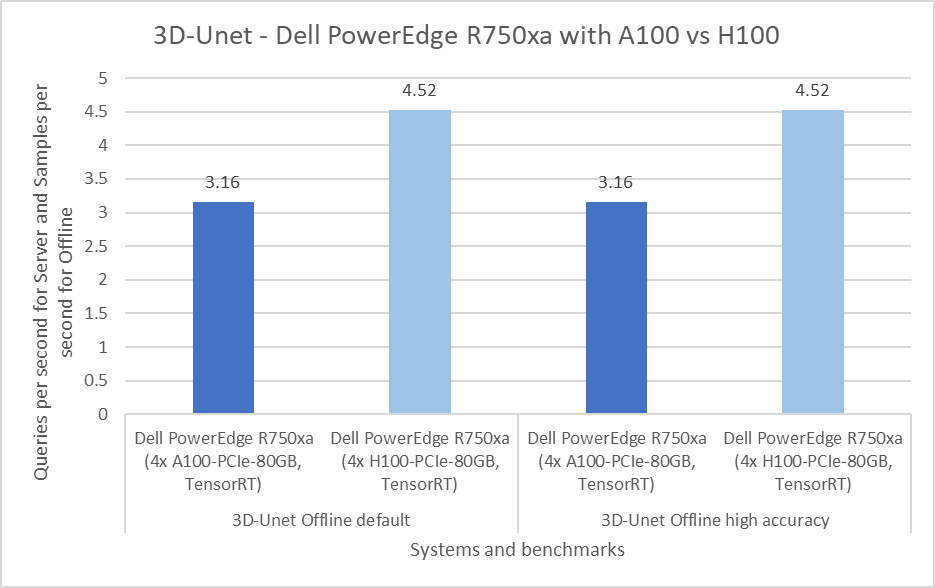

Figure 3: Per GPU comparison of NVIDIA A100 and NVIDIA H100 GPUs for 3D-Unet on the PowerEdge R750xa server

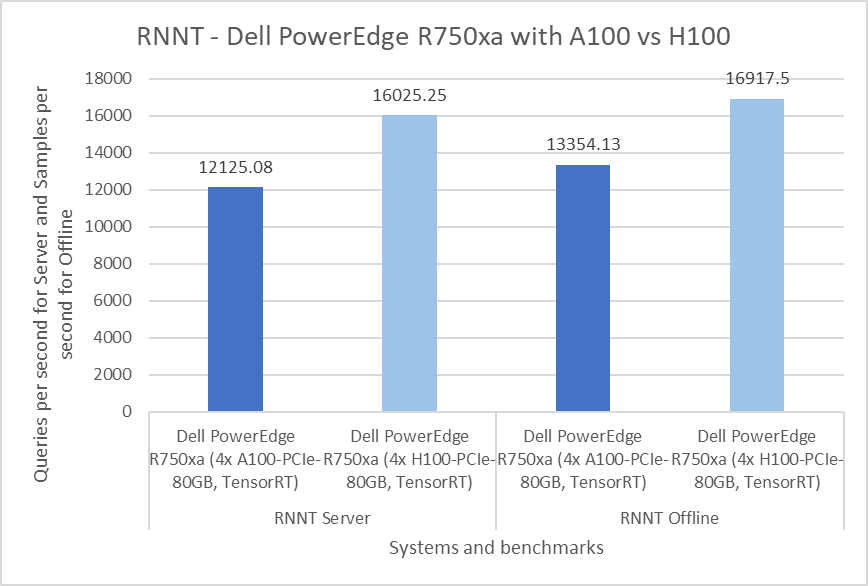

Figure 4: Per GPU comparison of NVIDIA A100 and NVIDIA H100 GPUs for RNNT on the PowerEdge R750xa server

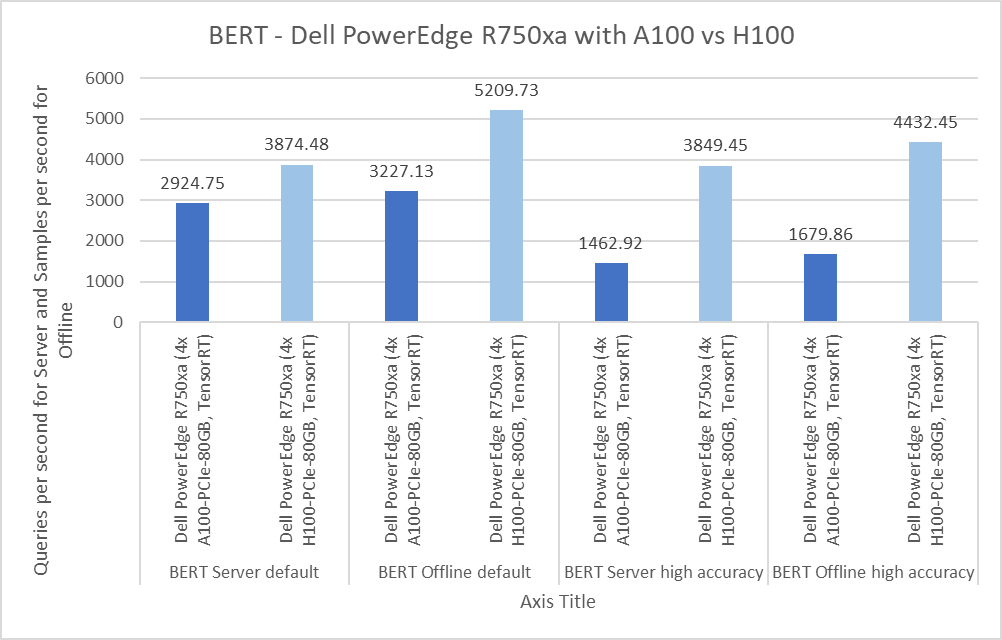

Figure 5: Per GPU comparison of NVIDIA A100 and NVIDIA H100 GPUs for BERT on the PowerEdge R750xa server

These results can be found on the MLCommons website.

Submissions made with the NVIDIA A100 PCIe GPU

In this round of submissions, Dell Technologies submitted results on the PowerEdge R750xa server packaged with four NVIDIA A100 80 GB PCIe GPUs. In previous rounds, the PowerEdge R750xa server showed outstanding performance across all the benchmarks. For a deeper dive into a previous round's submission, check out our blog from MLPerf Inference v2.0. In the previous round, MLPerf Inference v2.1, Dell Technologies submitted results on an identical system. Across the two rounds of submissions, the main difference is the upgraded software stack, as described in the following table:

| Platform | Dell PowerEdge R750xa (4x A100-PCIe-80GB, TensorRT) | Dell PowerEdge R750xa (4x A100-PCIe-80GB, TensorRT) |
| --- | --- | --- |
| Round | V3.0 | V2.1 |
| MLPerf System ID | R750xa_A100_PCIe_80GBx4_TRT | R750xa_A100_PCIe_80GBx4_TRT |
| Operating system | CentOS 8.2 | CentOS 8.2 |
| CPU | Intel Xeon Gold 6338 CPU @ 2.00 GHz | Intel Xeon Gold 6338 CPU @ 2.00 GHz |
| Memory | 512 GB | 512 GB |
| GPU | NVIDIA A100-PCIe-80GB | NVIDIA A100-PCIe-80GB |
| GPU form factor | PCIe | PCIe |
| GPU memory configuration | HBM2e | HBM2e |
| GPU count | 4 | 4 |
| Software stack | TensorRT 8.6, CUDA 12.0, cuDNN 8.8.0, Driver 525.85.12, DALI 1.17.0 | TensorRT 8.4.2, CUDA 11.6, cuDNN 8.4.1, Driver 510.39.01, DALI 0.31.0 |

Table 2: Software stack for submissions made on the NVIDIA A100 PCIe GPU in MLPerf Inference v3.0 and v2.1

Comparison of PowerEdge R750xa NVIDIA A100 results from Inference v3.0 and v2.1

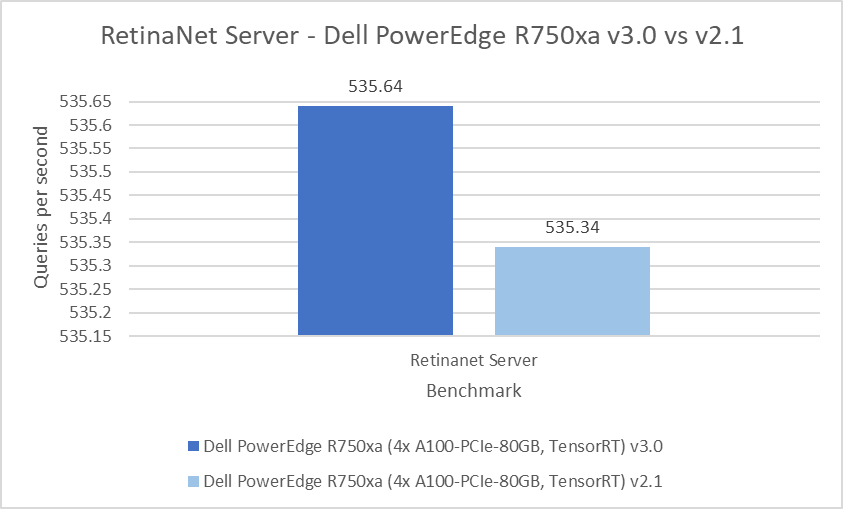

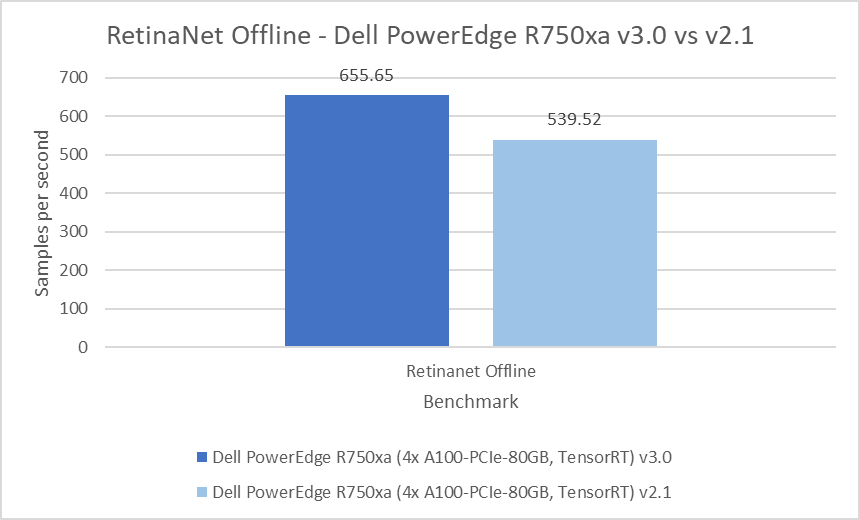

Object detection

The RetinaNet benchmark falls under the object detection category and uses the OpenImages dataset. The results from Inference v3.0 show a less than 0.05 percent difference in the Server scenario and a 21.53 percent difference in the Offline scenario. A potential reason for this result might be NVIDIA’s optimizations, as outlined in their technical blog.

Figure 6: RetinaNet Server and Offline results on the PowerEdge R750xa server from Inference v3.0 and Inference v2.1

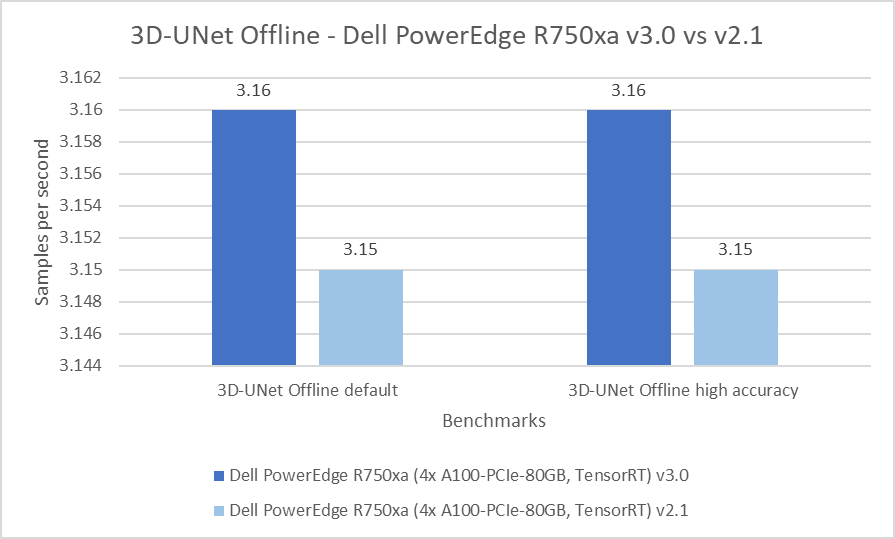

Medical image segmentation

The 3D-Unet benchmark performs the KiTS 2019 kidney tumor segmentation task. Across the two rounds of submission, the PowerEdge R750xa server performed consistently well with a 0.3 percent difference in both the default and high accuracy modes.

Figure 7: 3D-UNet Offline results on the PowerEdge R750xa server from Inference v3.0 and v2.1

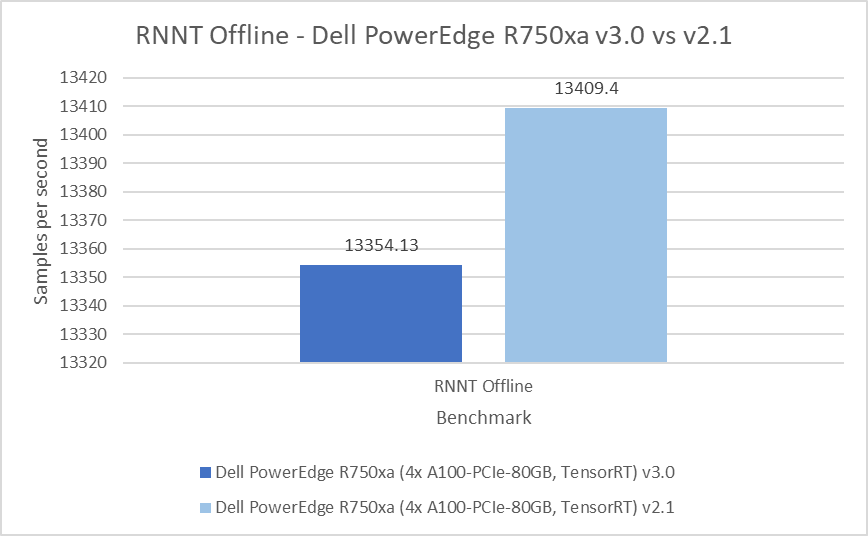

Speech to text

The Recurrent Neural Network Transducer (RNNT) model falls under the speech recognition category. This benchmark accepts raw audio samples and produces the corresponding character transcription. The results are within 2.25 percent of each other in the Server scenario and within 0.41 percent in the Offline scenario.

Figure 8: RNNT Server and Offline results on the Dell PowerEdge R750xa server from Inference v3.0 and v2.1

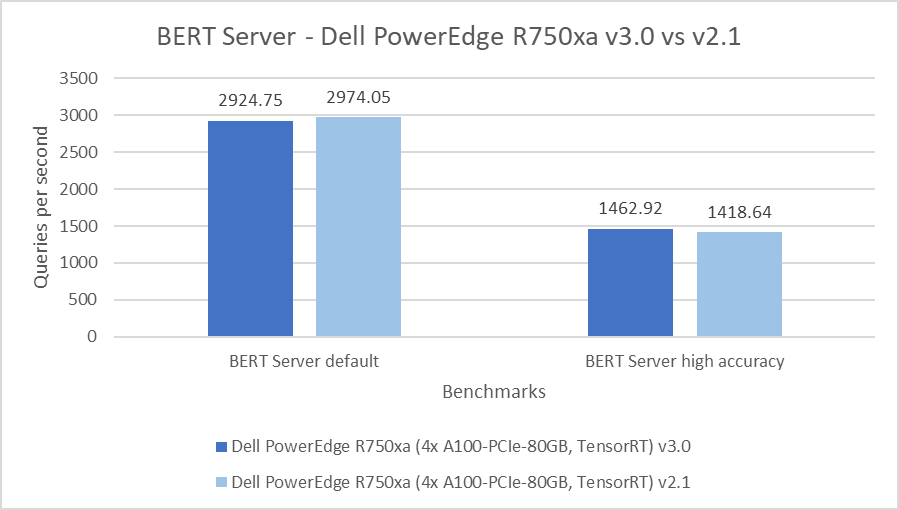

Natural language processing

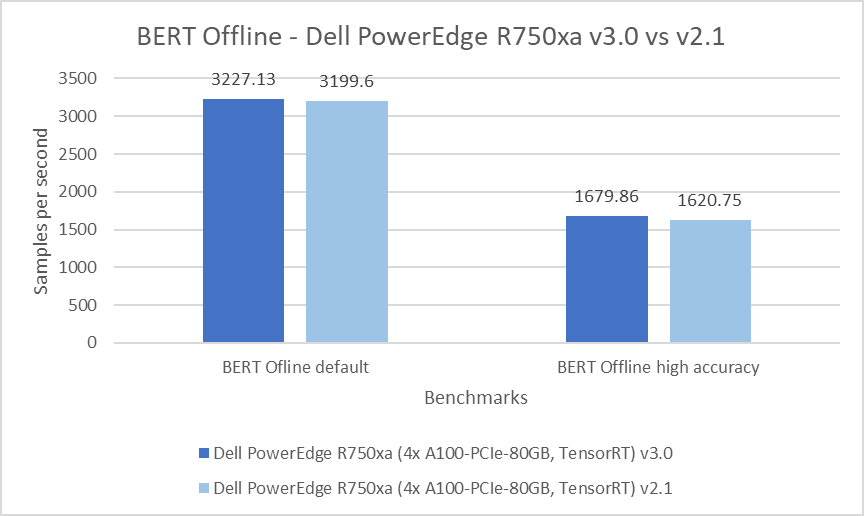

Bidirectional Encoder Representations from Transformers (BERT) is a state-of-the-art language representation model for natural language processing applications. This benchmark performs the SQuAD question answering task. The BERT benchmark consists of default and high accuracy modes for the Offline and Server scenarios. For the Server scenarios, the results are within a 1.69 percent range in the default mode and a 3.12 percent range in the high accuracy mode. The Offline scenarios behave similarly: the results are within a 0.86 percent range in the default mode and a 3.65 percent range in the high accuracy mode.

Figure 9: BERT Server and Offline results on the PowerEdge R750xa server from Inference v3.0 and v2.1

Conclusion

Across the various rounds of submissions to the MLPerf Inference benchmark suite, the PowerEdge R750xa server has been a consistent top performer for machine learning tasks ranging from object detection and medical image segmentation to speech to text and natural language processing. The PowerEdge R750xa server continues to be an excellent server choice for machine learning inference workloads. Customers can take advantage of the diverse results submitted on the Dell PowerEdge R750xa server with the NVIDIA H100 GPU to make an informed decision for their specific solution needs.