PowerFlex Summer 2021 Updates Deliver on Execution, Compliance, and Confidence

Tue, 04 Jul 2023 09:48:51 -0000

Execute Flawlessly – Comply Effortlessly – Be Confident

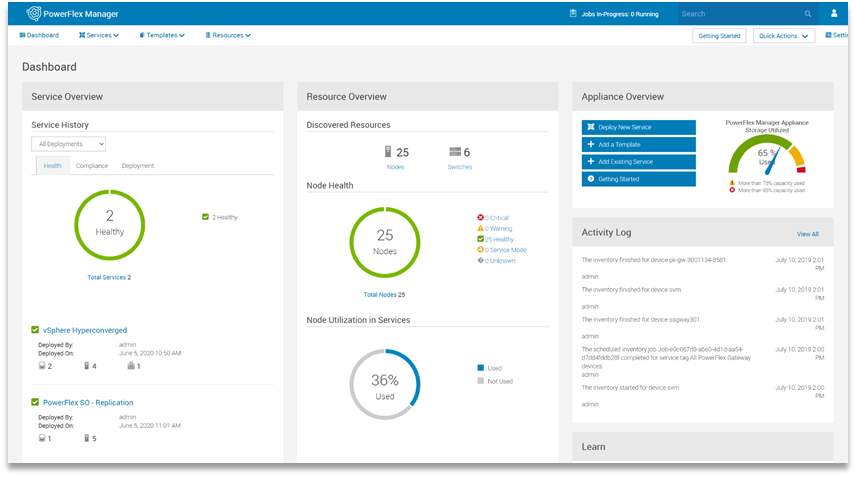

The summer 2021 release of Dell EMC PowerFlex Software-defined Infrastructure extends the PowerFlex family’s transformational superpowers, providing businesses with the agility to thrive in ever-changing economic and technological landscapes. The release of PowerFlex 3.6 and PowerFlex Manager 3.7 enables customers to supercharge their mission-critical workloads with enhanced automation and platform options. It safeguards workload execution with expanded continuity and compliance offerings. And businesses running PowerFlex can be confident in predictable outcomes at scale with new infrastructure insights, network resiliency enhancements, and integrated upgrade guidance.

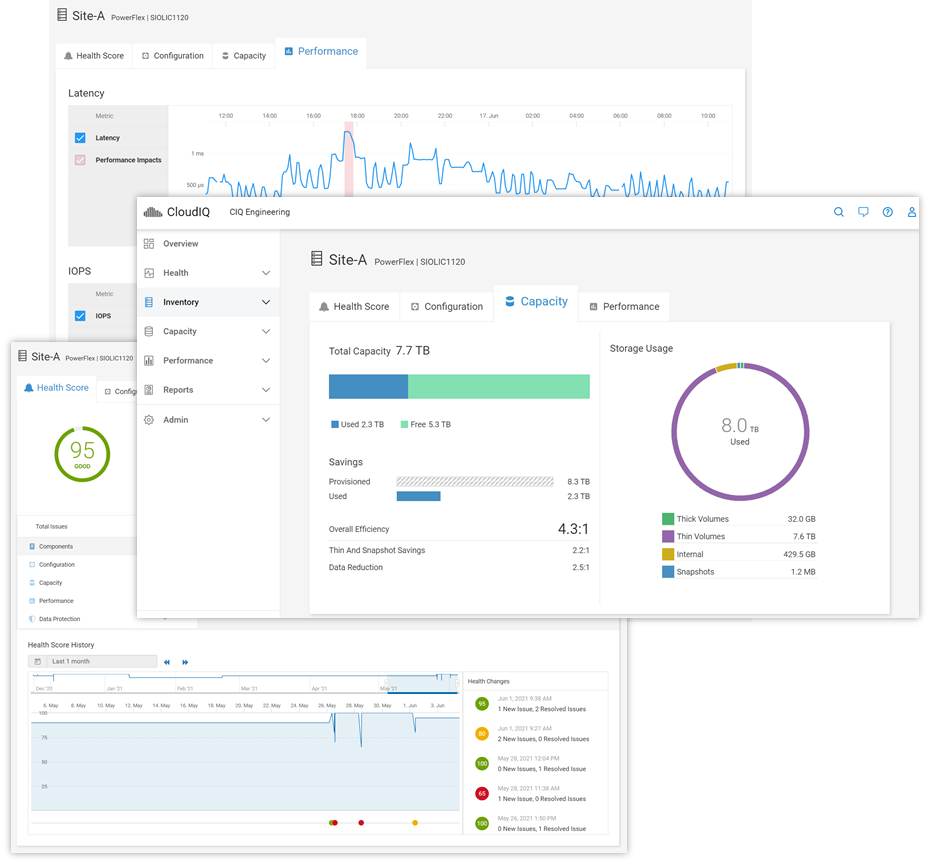

Keep an eye on the important stuff

A highlight of this release is PowerFlex integration with Dell EMC CloudIQ, a cloud-based application that intelligently and proactively monitors the health of Dell EMC storage, data protection, HCI, and other systems. Users get a single UI for multi-system, multi-site PowerFlex monitoring that includes system health, configuration/inventory, capacity usage, and performance. The PowerFlex system must first be connected to Dell EMC Secure Remote Services (SRS), after which CloudIQ is automatically enabled. Health scores are based on health check algorithms that use capacity, performance, configuration, components, and data protection criteria whose values are informed by PowerFlex alert data. Users can opt in to get health notifications via email or mobile phone, and the history of generated and cleared health issues is maintained for two years. After ingesting a couple of weeks of data, CloudIQ machine learning begins looking for and noting IOPS and bandwidth anomalies. It also watches for and signals latency performance impacts.

For information on adding your PowerFlex system(s) to CloudIQ see the Knowledge Base article. And to get a hands-on look at PowerFlex in CloudIQ, check out the online Simulator (log in with your support account) and see technical white papers and demo recordings on www.delltechnologies.com/cloudiq.

Be safe with your data out there

PowerFlex native asynchronous replication was introduced last year with version 3.5. Now, in PowerFlex 3.6, we have made it even more flexible and improved compliance targets. We cut the minimum RPO in half and now support RPOs as low as 15 seconds. We also added tooling to improve control over Replication Consistency Groups (RCGs) – sets of PowerFlex volumes being replicated together. RCGs can now be active or inactive, where inactive RCGs hold their configuration but use no additional system resources. The ability to terminate an RCG and leave it in an inactive state also improves the recovery process if a user runs out of journal capacity.
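To make the RPO figure concrete, here is a minimal sketch of what meeting an RPO means: the newest consistent copy at the target must be no older than the configured objective. The function name, the timestamps, and the use of the 15-second floor as a validation rule are illustrative assumptions and not part of any PowerFlex API.

```python
from datetime import datetime, timedelta

MIN_RPO_SECONDS = 15  # minimum RPO stated for PowerFlex 3.6


def rpo_met(last_consistent_copy: datetime, now: datetime, rpo_seconds: int) -> bool:
    """Return True if the newest consistent copy at the target is within the RPO."""
    if rpo_seconds < MIN_RPO_SECONDS:
        raise ValueError(f"RPO must be at least {MIN_RPO_SECONDS} seconds")
    return (now - last_consistent_copy) <= timedelta(seconds=rpo_seconds)


# Hypothetical timestamps, for illustration only.
now = datetime(2021, 7, 1, 12, 0, 0)
print(rpo_met(now - timedelta(seconds=12), now, rpo_seconds=15))  # True
print(rpo_met(now - timedelta(seconds=40), now, rpo_seconds=15))  # False
```

A check like this is only a mental model; the actual compliance tracking is done by the replication engine itself.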

With this release, PowerFlex supports replication in VMware HCI environments. In this scenario, PowerFlex Manager 3.7 (and above) orchestrates resizing the Storage Virtual Machines (SVMs) and the addition of the Storage Data Replicators (SDRs) into the system. Because the orchestration is done by PowerFlex Manager, the option to replicate between PowerFlex HCI deployments running VMware is limited to appliance and rack deployments.

Systems running 3.5.x can be active replication peers with systems running 3.6, and the source and destination systems can be on different code versions long term. For further information about PowerFlex replication architecture, limitations and design considerations, see the Dell EMC PowerFlex: Introduction to Replication white paper.

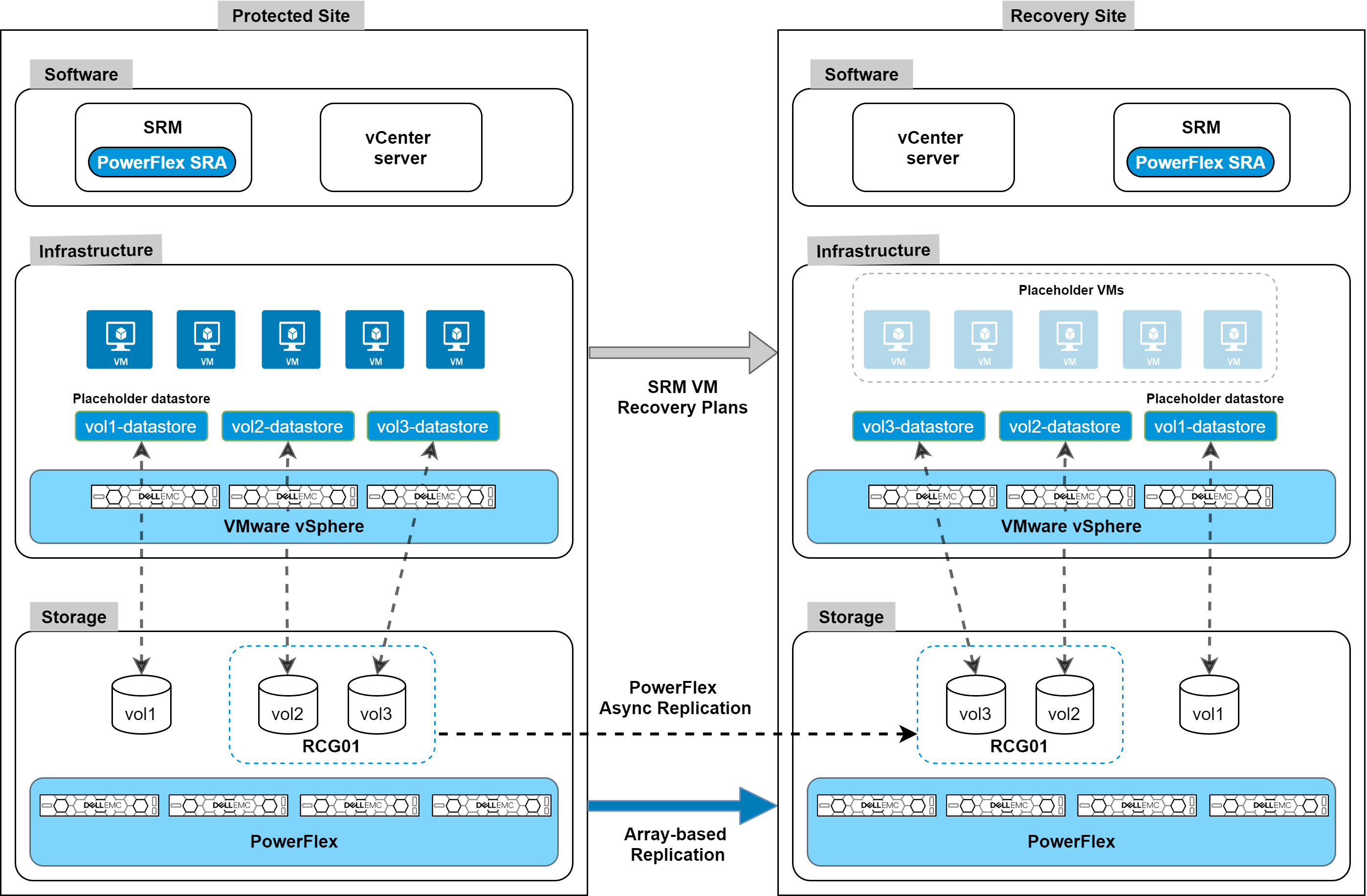

Along with these internal replication improvements, we are introducing integration with VMware Site Recovery Manager (SRM) – disaster recovery management and automation software for virtual machines and their workloads. The PowerFlex Storage Replication Adapter (SRA) enables PowerFlex as the native replication engine for protecting VMs on vSphere datastores. The PowerFlex SRA is compatible with SRM 8.2 and 8.3, the Photon OS-based SRM appliances. And while we are introducing the SRA with the current releases, it is also compatible with PowerFlex systems running 3.5.1.x and above. Users can create recovery plans to fail over VMs to another site, fail back to the original site, or use PowerFlex’s non-disruptive replication failover testing to run failover tests in SRM.

The SRA and installation instructions are available for download from the VMware website. For detailed information about the SRA implementation and usage examples, see the whitepaper on Disaster Recovery for Virtualized Workloads Dell EMC PowerFlex using VMware Site Recovery Manager.

The following figure shows an architecture overview of PowerFlex SRA and VMware SRM:

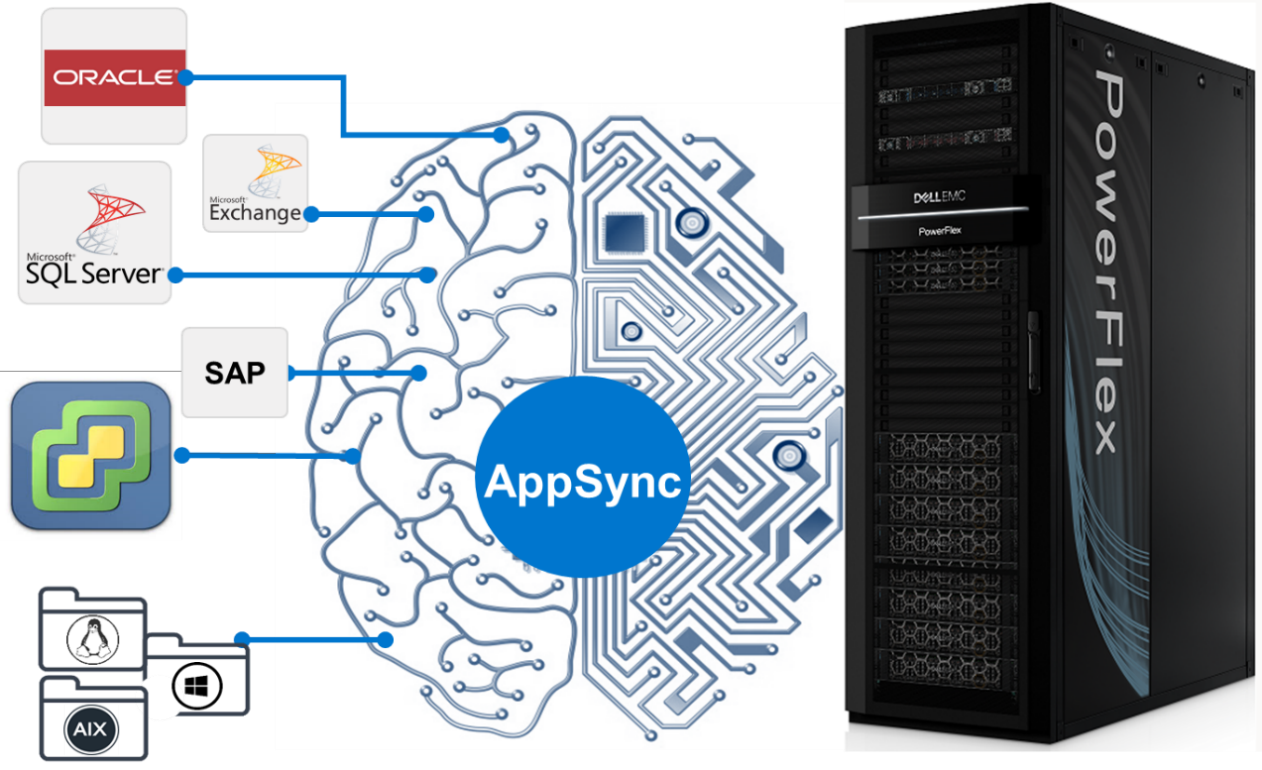

PowerFlex native replication, and the integration with VMware Site Recovery Manager, provide robust, crash-consistent data protection for disaster recovery and business continuity. But we are also introducing integration with Dell EMC AppSync for application-consistent copy lifecycle management. For customers using the wide range of supported databases and filesystems, AppSync v4.3 adds support for PowerFlex, seamlessly bringing PowerFlex’s superpowers into AppSync’s simplified copy data management. AppSync has deep integrations with Oracle, Microsoft SQL Server, Microsoft Exchange, and SAP HANA, and it enables VM-consistent copies of data stores and individual VM recovery for VMware environments. But it can also support other enterprise applications – like EPIC Cache, DB2, MySQL, etc. – through file system copies.

AppSync with PowerFlex integration will be available mid-July 2021. For information and examples, see the Dell EMC PowerFlex and AppSync integration video.

One more note on security. PowerFlex rack and appliance are now FIPS 140-2 compliant for data at rest and key management. Hardware-based data-at-rest encryption is achieved using supported self-encrypting drives (SEDs), with the encryption engine running on the SEDs to deliver better performance and security. The SED-based encryption claim is based on FIPS 140-2 Level 2 certification. Dell EMC CloudLink, the KMIP and FIPS 140-2 Level 1 (CloudLink Agent and CloudLink Server) compliant key manager, is used to manage the SED encryption keys.

Automate (and containerize) all the things

PowerFlex software-defined infrastructure is eminently suited to cloud-native use cases and automatable workflows. There has been a lot of recent progress in PowerFlex’s support for these ecosystems. The Container Storage Interface (CSI) driver for PowerFlex continues to evolve, with support for accessing multiple PowerFlex clusters, ephemeral inline volumes, and, importantly, containerized PowerFlex Storage Data Client (SDC) deployment and management. The containerized SDC allows CSI to inject the PowerFlex volume driver into the kernel of container-optimized operating systems that lack package managers. This provides PowerFlex CSI support for Red Hat CoreOS and Fedora CoreOS. It also enables integration of PowerFlex with Red Hat OpenShift 4.6 and later. The forthcoming CSI version 1.5 adds support for volume consistency groups and custom file system format options, so users can set specific disk format command parameters when provisioning a volume. Star and watch the GitHub Repository for the PowerFlex CSI Driver for updates.

In addition to this, Dell Technologies has been developing a set of Container Storage Modules (CSM) that complement the CSI drivers. PowerFlex is at the forefront of that effort, and there are several modules available for tech preview, with general availability coming later this year.

- Observability CSM: Provides exportable telemetry metrics for I/O performance & storage usage, for consumption in tools like Grafana and Prometheus. Bridges the observability gap between Kubernetes and PowerFlex storage admins.

- Authorization CSM: Provides a set of RBAC tools for PowerFlex and Kubernetes. This is an out-of-band tool proxying admin credentials and enabling the management of storage consumers and their limits (e.g., tenant segmentation, storage quota limits, isolation, auditing, etc.).

- Resiliency CSM: Provides stateful application fault protection & detection, resiliency for node failure and network disruptions. Reschedules failed pods on new resources and asks the CSI driver to un-map and re-map the persistent storage volumes to the online nodes.

Users can automate volume and snapshot lifecycle management with the PowerFlex Ansible Modules. They can also use the modules to gather facts about their PowerFlex systems and manage various storage pool and SDC details. The Ansible modules are available on GitHub and Ansible Galaxy. They work with Ansible 2.9 or later and require the PowerFlex Python SDK (which may also be used by itself to facilitate authentication to and interaction with a PowerFlex cluster). Again, watch the repositories for additional modules and expansions in the near future.

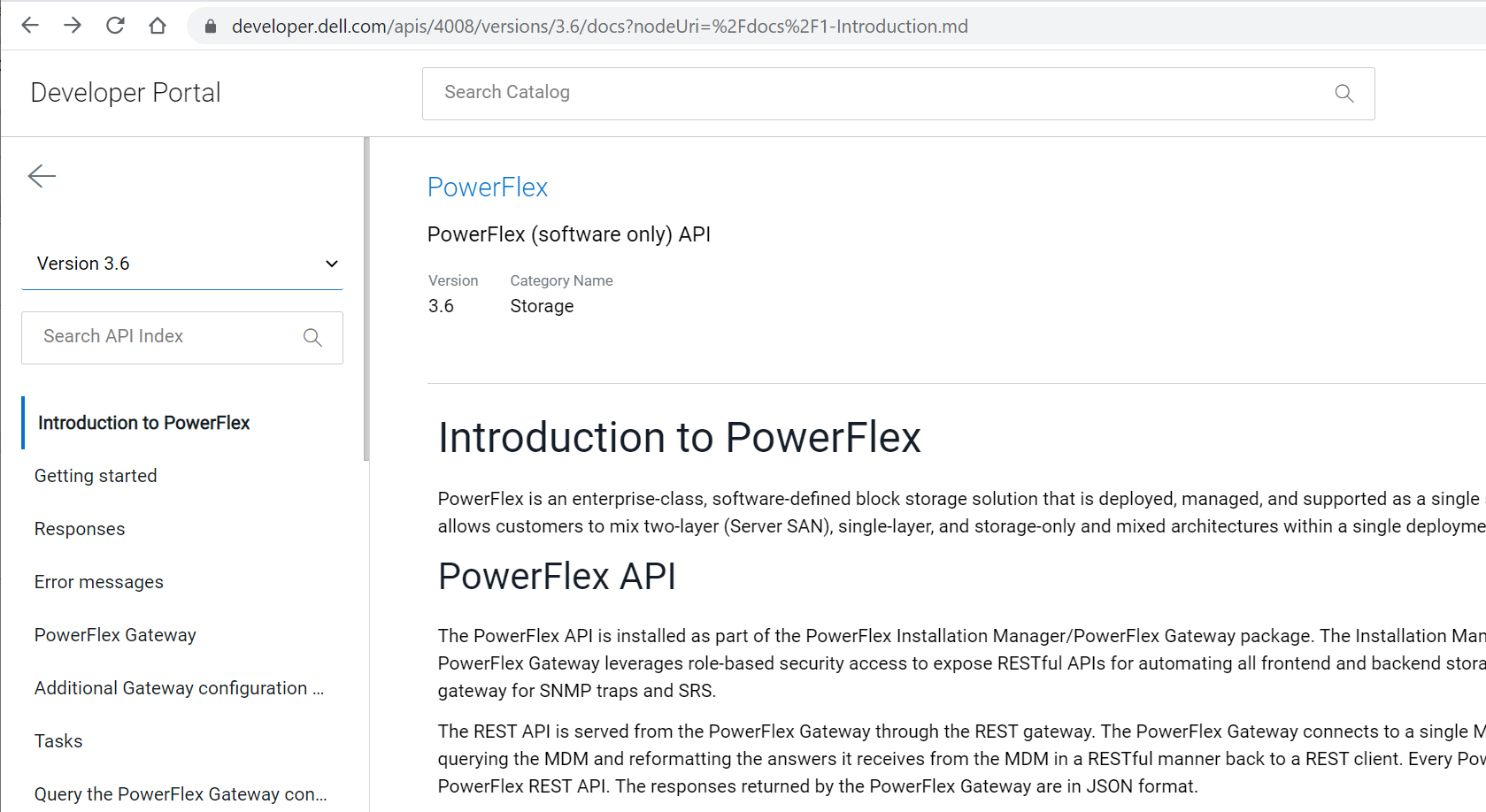

All these automation tools leverage and rely upon the PowerFlex REST API. And Dell Technologies has introduced a new Developer Portal, where the APIs for many products can be explored. The PowerFlex API, along with explanations and usage examples, can be found at https://developer.dell.com/apis/4008/versions/3.6/docs.
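As a rough sketch of how a client might prepare calls to the REST API, the following builds (but does not send) two requests using only the Python standard library. It assumes the commonly described Gateway flow in which a login call with HTTP Basic credentials returns a token that then replaces the password on later calls. The gateway address, credentials, and the `/api/login` and `/api/types/Volume/instances` paths are assumptions to verify against the Developer Portal documentation for your version.

```python
import base64
import urllib.request


def build_request(gateway: str, path: str, username: str, secret: str) -> urllib.request.Request:
    """Build a GET request that carries an HTTP Basic Authorization header."""
    credentials = base64.b64encode(f"{username}:{secret}".encode()).decode()
    request = urllib.request.Request(f"https://{gateway}{path}", method="GET")
    request.add_header("Authorization", f"Basic {credentials}")
    return request


# Hypothetical gateway address and credentials, for illustration only.
login = build_request("powerflex-gw.example.com", "/api/login", "admin", "password")
print(login.get_method(), login.full_url)

# Assumed flow: the token returned by the login call is used in place of
# the password when calling other endpoints, such as listing volumes.
token = "<token returned by the login call>"
volumes = build_request("powerflex-gw.example.com", "/api/types/Volume/instances", "admin", token)
print(volumes.get_method(), volumes.full_url)
```

Sending the request (for example with `urllib.request.urlopen`) and handling TLS certificates are left out on purpose, since both depend on the environment.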

Always keep on improving

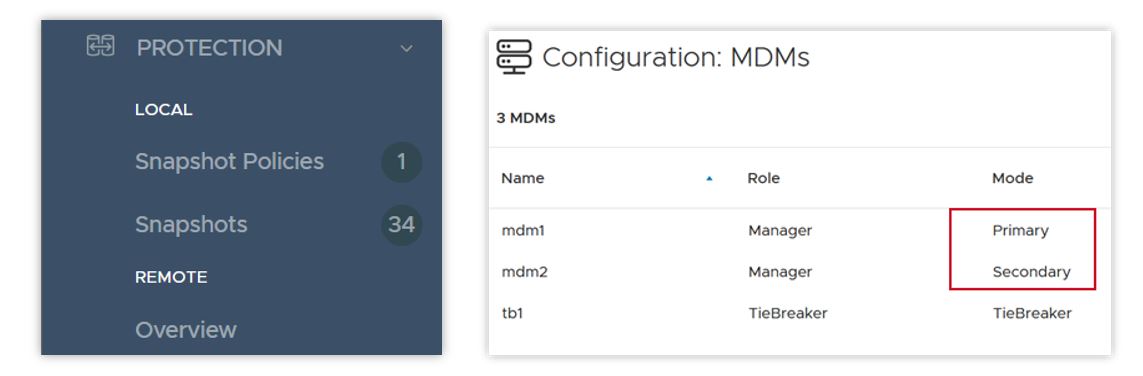

With every release, PowerFlex and PowerFlex Manager get faster, more secure, and more easily manageable. In PowerFlex 3.6 there are a number of UI enhancements, including simplification of menus, better capacity reporting around data reduction, a new dedicated area for snapshots and snapshot policy management, and – following on Dell Technologies’ drive towards more inclusive language – a change in the labels for the MDM cluster roles. “Master” and “Slave” roles are now “Primary” and “Secondary”.

PowerFlex 3.6 introduces support for Oracle Linux Virtualization (KVM based), which adds a supported hypervisor layer to the previous support for Oracle Enterprise Linux. This advances the numerous Oracle database deployments on PowerFlex, providing improved Oracle supportability while still offering the great cost-effectiveness PowerFlex offers for running Oracle. For detailed information on installing and configuring, please refer to the Oracle Linux KVM on PowerFlex white paper.

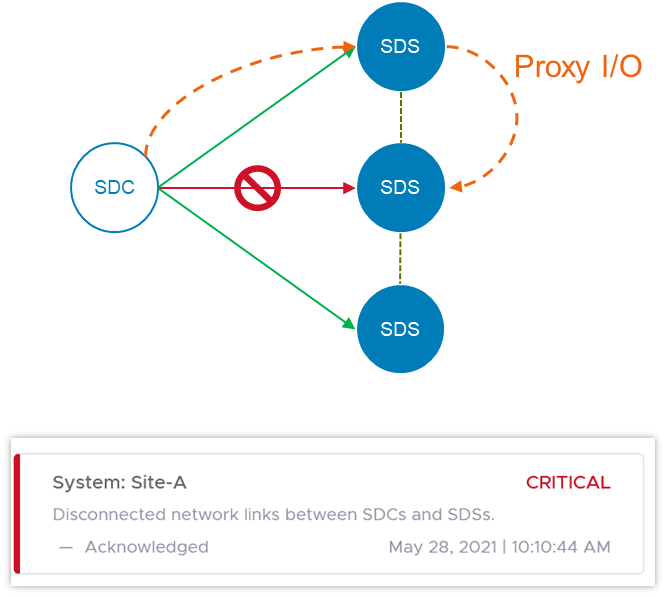

In the software-defined storage layer itself, version 3.6 doubles the number of Storage Data Clients (the consumers of PowerFlex volumes) per system to 2048. This doubles the number of hosts that can map volumes from PowerFlex storage pools. The software is also smarter when it comes to detecting and handling network error cases. In some disaggregated, or two-layer, systems where the SDCs live on a separate network than the storage cluster itself, a network path impairment between an SDC and a single Storage Data Server (SDS) node can cause I/O failures – even when there isn’t a general network failure in the cluster. In version 3.6 if such a disruption occurs, the SDC can use another SDS in the system to proxy the I/O to its original destination. Users are alerted until the problem is cleared, but I/O continues uninterrupted.
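The proxying idea can be pictured with a small routing sketch: if the direct path from an SDC to the target SDS is impaired, pick another SDS that the SDC can still reach and that can itself reach the target. The node names, the `links` structure, and the selection rule are hypothetical and only illustrate the concept; they do not describe the actual PowerFlex implementation.

```python
from typing import Dict, List, Optional, Set


def choose_route(sdc: str, target_sds: str, links: Dict[str, Set[str]]) -> Optional[List[str]]:
    """Return the hops for an I/O from sdc to target_sds, or None if no path exists.

    links maps each node to the set of nodes it can currently reach.
    """
    reachable = links.get(sdc, set())
    if target_sds in reachable:
        return [sdc, target_sds]  # direct path is healthy
    # Direct path impaired: look for another SDS that can reach the target.
    for proxy in sorted(reachable):
        if target_sds in links.get(proxy, set()):
            return [sdc, proxy, target_sds]
    return None  # no usable path


links = {
    "sdc-1": {"sds-b", "sds-c"},  # the path from sdc-1 to sds-a is impaired
    "sds-b": {"sds-a", "sds-c"},
    "sds-c": {"sds-a", "sds-b"},
}
print(choose_route("sdc-1", "sds-a", links))  # ['sdc-1', 'sds-b', 'sds-a']
```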

Because of the highly distributed architecture of PowerFlex, ports or sockets experiencing frequent disconnects (flapping), can cause overall system performance issues. 3.6 detects this and proactively disqualifies the path, preventing general disruption across the system.
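One common way to detect flapping is to count disconnects inside a sliding time window and disqualify the path once a threshold is exceeded. The sketch below shows that general technique; the class name, the threshold of three disconnects, and the 60-second window are illustrative assumptions, not the values or logic PowerFlex uses.

```python
from collections import deque


class PathMonitor:
    """Disqualify a path that disconnects too often within a time window."""

    def __init__(self, max_disconnects: int = 3, window_seconds: float = 60.0):
        # Both thresholds are illustrative assumptions.
        self.max_disconnects = max_disconnects
        self.window_seconds = window_seconds
        self.events = deque()
        self.disqualified = False

    def record_disconnect(self, timestamp: float) -> bool:
        """Record a disconnect; return True if the path is now disqualified."""
        self.events.append(timestamp)
        # Drop events that have aged out of the window.
        while self.events and timestamp - self.events[0] > self.window_seconds:
            self.events.popleft()
        if len(self.events) > self.max_disconnects:
            self.disqualified = True
        return self.disqualified


monitor = PathMonitor()
for t in (0.0, 10.0, 20.0, 30.0):
    flapping = monitor.record_disconnect(t)
print(flapping)  # True: four disconnects within 60 seconds
```

In this sketch a disqualified path stays disqualified until someone resets it, which mirrors the idea of taking a misbehaving path out of service rather than letting it rejoin repeatedly.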

In version 3.5, we introduced Protected Maintenance Mode (PMM), a super-safe way to put a node into maintenance while nevertheless avoiding a lengthy data-rehydration process at the end. Now, PMM makes use of the highly parallel many-to-many rebalancing algorithm, as a node goes into maintenance. Depending on the amount of data stored on the node, this can still be a long process, and other things can change in the system as it’s happening. PowerFlex 3.6 adds an auto-abort feature, in which the system continually scans for hardware or capacity issues that would prevent the node from completely entering PMM. If any flags are raised, the system will abort the process and notify the user. More information on maintenance modes, and the new PMM auto-abort feature, can be found in this whitepaper.

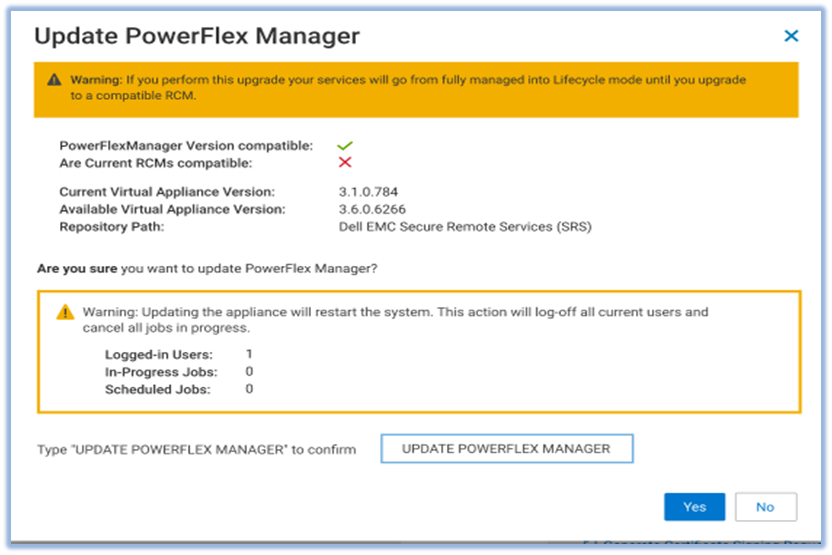

PowerFlex Manager 3.7 has gotten much more powerful as well. Foremost among the improvements is a new Compatibility Management feature. This new feature helps customers automatically identify the recommended upgrade paths for both the PowerFlex Manager appliance itself and the system RCM/IC upgrade. Prior to this release, whenever a customer or Dell Professional Services wished to do an upgrade, it took a lot of effort and time to manually investigate the documentation and compatibility matrixes to understand all of the upgrade rules – what are the allowed upgrade paths, which PowerFlex Manager version works with which RCM/IC versions, etc.

The new Compatibility Management tools eliminate the work and assist users by automatically identifying recommended upgrade paths. To determine which paths are valid and which are not, PowerFlex Manager uses information that is provided in a compatibility matrix file. The compatibility matrix file maps all the known valid and invalid paths for all previous releases of the software. It breaks the possible upgrade paths down as:

- Recommended: tested or implied as tested

- Supported: allowed, but not necessarily tested

- Not Allowed: unsupported update path
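The three categories above can be modeled as a simple lookup keyed by the current and target versions. This is only a sketch of the idea: the version labels and matrix entries are hypothetical, the real compatibility matrix file format is not shown here, and treating unknown paths as not allowed is an assumption.

```python
from enum import Enum
from typing import List


class PathStatus(Enum):
    RECOMMENDED = "Recommended"  # tested or implied as tested
    SUPPORTED = "Supported"      # allowed, but not necessarily tested
    NOT_ALLOWED = "Not Allowed"  # unsupported update path


# Hypothetical matrix entries keyed by (current version, target version).
MATRIX = {
    ("X.2", "X.3"): PathStatus.RECOMMENDED,
    ("X.1", "X.3"): PathStatus.SUPPORTED,
    ("X.0", "X.3"): PathStatus.NOT_ALLOWED,
    ("X.1", "X.2"): PathStatus.RECOMMENDED,
}


def classify(current: str, target: str) -> PathStatus:
    """Look up an upgrade path; unknown paths are treated as not allowed."""
    return MATRIX.get((current, target), PathStatus.NOT_ALLOWED)


def recommended_targets(current: str) -> List[str]:
    """List the targets whose path from `current` is marked Recommended."""
    return sorted(
        target
        for (source, target), status in MATRIX.items()
        if source == current and status is PathStatus.RECOMMENDED
    )


print(classify("X.1", "X.3").value)  # Supported
print(recommended_targets("X.1"))    # ['X.2']
```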

PowerFlex Manager 3.7 also introduces support for vSphere 7.0 U2. Upgrading to this version requires a manual vCenter upgrade. But then PowerFlex Manager will take over and manage the ESXi clusters. PowerFlex Manager 3.7 supports VMware ESXi 7.0 Update 2 installation, upgrade, and expansion operations for both hyperconverged and compute-only services. Users can deploy new services, add existing services running VMware ESXi 7.0 U2, or expand existing services. PowerFlex Manager also supports upgrades of VMware ESXi clusters in hyperconverged or compute-only services. You can upgrade VMware ESXi clusters from version 6.5, 6.7, or 7.0 to VMware ESXi 7.0 Update 2.

When you deploy a new ESXi 7.0U2 service, PowerFlex Manager automatically deploys two service volumes and maps these volumes to two heartbeat datastores on shared storage. PowerFlex Manager also deploys three vSphere Cluster Services (vCLS) VMs for the cluster.

PowerFlex Manager introduces several other enhancements in this release. It now supports 32k volumes per Service, aligned with PowerFlex core software volume scalability. It has enhanced security for SMB/NFS. A user-specific account is now required to gain access to the SMB share. PowerFlex Manager also updates the NFS share configuration when a user upgrades or restores the virtual appliance. PowerFlex Manager has disabled support for the SMBv1 protocol. PowerFlex Manager now uses SMBv2 or SMBv3 to enhance security.

It has also expanded its management capabilities over the PowerFlex Presentation Server and Gateway services. Prior to this release, PowerFlex Manager could deploy a Presentation Server (which hosts the WebUI) but not upgrade it. Now, PowerFlex Manager 3.7 can both discover existing instances and upgrade Presentation Servers. Similarly, it has gained the ability to upgrade the OS for the Gateway (which hosts the REST API). Prior to this release, PowerFlex Manager could only upgrade the Gateway RPM package without upgrading and patching the OS of the Gateway. Now PowerFlex Manager 3.7 can do both.

But it’s not all about software

This release adds support for a broader array of NVIDIA GPUs. Next-gen NVIDIA acceleration cards are now available for customers looking to run specialized, high-performance computing and analytics applications: Quadro RTX 6000, Quadro RTX 8000, A40, and A100. And we also introduce a small form factor GPU that can be used in the 1U R640-based PowerFlex Nodes – the NVIDIA A100. The past year demonstrated the importance of supporting remote workers with virtual desktops, and PowerFlex supports GPU implementations on Citrix and VMware VDI.

We now support the Dell PowerSwitch S5296F-ON for the PowerFlex appliance. The S5296F-ON has 96x 10/25G SFP28 ports and 8x 100G QSFP28 ports. It can support high node counts in a single cabinet, if the high oversubscription ratio is acceptable. We also introduce support for the Cisco Nexus 93180YC-FX, for use as either an access or an aggregation switch, and the Cisco Nexus 9364C-GX, for use as either a leaf or a spine switch, with 64x 100G ports.
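The oversubscription ratio mentioned above is simple arithmetic: total downlink bandwidth divided by total uplink bandwidth. The sketch below works it out for the stated port counts under the assumption that all 96 SFP28 ports run at 25G as node-facing downlinks and all 8 QSFP28 ports serve as 100G uplinks. Real designs may reserve some ports for inter-switch links, which changes the result.

```python
def oversubscription_ratio(downlink_ports: int, downlink_gbps: int,
                           uplink_ports: int, uplink_gbps: int) -> float:
    """Return total downlink bandwidth divided by total uplink bandwidth."""
    downlink_total = downlink_ports * downlink_gbps  # 96 * 25 = 2400 Gb/s
    uplink_total = uplink_ports * uplink_gbps        # 8 * 100 = 800 Gb/s
    return downlink_total / uplink_total


ratio = oversubscription_ratio(96, 25, 8, 100)
print(f"{ratio:.0f}:1")  # 3:1
```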

Virtualized network infrastructure continues to grow in capability and deployment share. NSX-T™ is VMware's software-defined-network infrastructure that addresses cross-cloud needs for VM-centric network services and security. The PowerFlex appliance now joins the PowerFlex rack, in supporting NSX-T Ready configurations. “NSX-T Ready” means that the hardware configuration meets NSX-T requirements. The customer will provide NSX-T software and deploy with assistance from VMware or Dell Professional Services. The enabling components are:

- A 4-node PowerFlex management cluster, available to host the NSX-T controller VMs

- Appliance-specific NSX-T edge nodes (need 2 to 8 for running the NSX-T edge services)

- High-level NSX-T topologies and considerations available in the PowerFlex Appliance Network Planning Guide

- PowerFlex Manager will run the NSX-T edge nodes in Lifecycle Mode

As with the PowerFlex rack, appliance NSX-T edge nodes are “service appliances” that are dedicated to run network services, while the newly available HA appliance management nodes run the NSX-T management VMs. PowerFlex Manager can assist in deploying the edge nodes and will lifecycle the hardware aspects.

Wrap it up

Thanks for taking time to read about what’s new with Dell EMC PowerFlex software-defined infrastructure. We haven’t even been able to cover all the great new things being introduced this summer. Supercharge your mission-critical workloads flawlessly with enhanced automation, effortlessly enable business continuity and compliance, and confidently manage your data center operations at scale. To continue exploring, visit us on the Dell Technologies website for PowerFlex.

Related Blog Posts

A Case for Repatriating High-value Workloads with PowerFlex Software-Defined Storage

Tue, 20 Oct 2020 12:16:04 -0000

Kent Stevens, Product Management, PowerFlex

Brian Dean, Senior Principal Engineer, TME, PowerFlex

Michael Richtberg, Chief Strategy Architect, PowerFlex

We observe customers repatriating key applications from the Cloud, help you think about where to run your key applications, and explain how PowerFlex’s unique architecture meets the demands of these workloads in running and transforming your business.

For critical software applications you depend upon to power core business and operational processes, moving to “The Cloud” might seem the easiest way to gain the agility to transform the surrounding business processes. Yet we see many of our customers making the move back home, back “On-Prem” for these performance-sensitive critical workloads – or resisting the urge to move to The Cloud in the first place. PowerFlex is proving to deliver agility and ease of operations for the IT infrastructure for high-value, large-scale workloads and data-center consolidation, along with a predictable cost profile – as a Cloud-like environment enabling you to reach your business objectives safely within your own data center or at co-lo facilities.

IDC recently found that 80% of their customers had repatriation activities, and 50% of public-cloud based applications were targeted to move to hosted-private cloud or on-premises locations within two years(1). IDC notes that the main drivers for repatriation are security, performance, cost, and control. Findings reported by 451 Research(2) show cost and performance as the top disadvantages when comparing on-premises storage to cloud storage services. We’ve further observed that core business-critical applications are a significant part of these migration activities.

You may have heard the term “data gravity,” which relates to the difficulty of moving data to and from the cloud, but that may be only part of the problem. “Application gravity” is likely a bigger problem for performance-sensitive workloads that struggle to achieve the required business results because of the scale and performance limitations of cloud storage services.

Transformation is the savior of your business – but a problem for your key business applications

Business transformation impacts the data-processing infrastructure in important ways: Applications that were stable and seldom touched are now the subject of massive changes on an ongoing basis. Revamped and intelligent business processes require new pieces of data, increasing the storage requirements and those smarts (the newly automated or augmented decision-making) require constant tuning and adjustments. This is not what you want for applications that power your most important business workflows that generate your profitability. You need maximum control and full purview over this environment to avoid unexpected disruptions. It’s a well-known dilemma that you must change the tires while the car is driving down the road – and today’s transformation projects can take this to the extreme.

The infrastructure used to host such high-profile applications – computing, storage and networking – must be operated at scale yet still be ready to grow and evolve. It must be resilient, remain available when hardware fails, and be able to transform without interruption to the business.

Does the public cloud deliver the results you expected?

Do your applications require certain minimum amounts of throughput? Are there latency thresholds you consider critical? Do you require large data capacities and the ability to scale as demands grow? Do you require certain levels of availability? You may assume all these requirements come with a “storage” product offered by the public cloud platforms, but most fall short of meeting these needs. Some require over-provisioning to get better performance. High availability options may be lacking. The highest performing options have capacity scale limitations and can be prohibitively expensive. If you assume that what you’ve been using on-prem is also available from a hyperscaler, you may be surprised to find substantial gaps that require expensive application rearchitecting to become “cloud native,” which can turn into a budget buster. These public cloud attributes can lead to “application gravity” gaps.

While the agility of it is tempting, the unexpected costliness of moving everything to the public cloud has turned back more than one company. When evaluating the economics and business justification for Cloud solutions, many costs associated with full-scale operations, spikes in demand or extended services can be hard to estimate, and can turn out to be large and unpredictable.

The full price of cloud adoption must account for the required levels of resiliency, management infrastructure, storage and analytics for operational data, security solutions, and scaling up the resources to realistic production levels. Recognizing all the necessary services and scale may undermine what might have initially appeared to be a solid cost justification. Once the budget is established, active effort and attention must be devoted to monitoring and oversight. Adapting to unexpected operational events, such as bursting or autoscaling for temporary spikes in workload or traffic, can bring unforeseen leaps in the monthly bill. Such situations can be especially hard to predict and plan for – and very difficult to control.

You want the speed, convenience and elasticity of running in the cloud - but how do you ensure that agility while staying within the necessary bounds of cost and oversight? Truly transformative infrastructure allows businesses to consolidate compute and storage for disparate workloads onto a single unified infrastructure to simplify their environment, increase agility, improve resiliency and lower operational costs. And your potential payoff is big with far easier scaling, more efficient hardware utilization, and less time spent figuring out how to get things right or tracking down issues that complicate disparate system architectures.

Software-Defined is the Future

IDC predicts that by 2024, software-defined infrastructure solutions will account for 30% of storage solutions(3). At the heart of the PowerFlex family, and the enabler of its flexibility, scale, and performance, is PowerFlex software-defined storage. The ease and reliability of deployment and operation are provided by PowerFlex Manager, an IT operations and lifecycle management tool for full visibility and control over the PowerFlex infrastructure solutions.

PowerFlex’s unmatched combination of flexibility, elasticity, and simplicity with predictable high performance - at any scale - makes it ideally suited to be the common infrastructure for any company. Utilizing software defined storage (SDS) and hosting multiple heterogeneous computing environments, PowerFlex enables growth, consolidation, and change with cloud-like elasticity – without barriers that could impede your business.

The resulting unique architecture of the PowerFlex family easily meets the large-scale, always-on requirements of our customers’ core enterprise applications. The power and resiliency of the PowerFlex infrastructure platforms handle everything from high-performance enterprise databases, to web-scale transaction processing, to demanding business solutions in various industries including healthcare, utilities and energy. And this includes the new big-data and analytical workloads that are quickly augmenting the core applications as the business processes are being transformed.

PowerFlex: A Unique Platform for Operating and Transforming Critical Applications

PowerFlex provides the flexibility to utilize your choice of tools and solutions to drive your transformation and consolidation, while controlling the costs of the relentless expansion in data processing. PowerFlex provides the modularity to adapt and grow efficiently while providing the manageability to simplify your operations and reduce costs. It provides the scalable infrastructure on-premises to allow you to focus on your business operations. PowerFlex on-demand options by the end of 2020 enable an elastic OPEX consumption model as well.

As your business needs change, PowerFlex provides a non-disruptive path of adaptability. As you need more compute, storage or application workloads, PowerFlex modularly expands without complex data migration services. As your application infrastructure needs change from virtualization to containers and bare metal, PowerFlex can mix and match these in any combination necessary without needing physical changes or cluster segmentation. PowerFlex provides future-proof capabilities that keep up with your demands with six nines of availability and linear scalability.
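For a sense of scale, "six nines" (99.9999%) availability can be converted into allowable downtime per year with one line of arithmetic. The sketch below assumes a 365-day year and treats the availability figure as a simple fraction of total time.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000 seconds, ignoring leap years


def annual_downtime_seconds(availability_percent: float) -> float:
    """Return the downtime per year implied by an availability percentage."""
    return SECONDS_PER_YEAR * (1 - availability_percent / 100)


print(round(annual_downtime_seconds(99.9999), 1))  # 31.5
```

In other words, six nines leaves room for roughly half a minute of downtime per year.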

With the dynamic new pace of growth and change, PowerFlex can ensure you stay in charge while enabling the agility to adapt efficiently. PowerFlex enables you to leverage the advantages of oversight and cost-effectiveness of the on-premises environment with the ability to meet transformation head-on.

For more information, see PowerFlex on Dell EMC.com, or reach out to Contact Us.

footnotes:

1 IDC Cloud Repatriation Accelerates in a Multi-Cloud World, July 2018

2 451 Research, 2020 Voice of the Enterprise

3 IDC FutureScape: Worldwide Enterprise Infrastructure 2020 Predictions, October 2019

Q1 2024 Update for Terraform Integrations with Dell Infrastructure

Tue, 02 Apr 2024 14:45:56 -0000

This post covers all the new Terraform resources and data sources that have been released in the last two quarters: Q4’23 and Q1’24. You can check out previous releases of Terraform providers here: Q1-2023, Q2-2023, and Q3-2023. I also covered the first release of the PowerScale provider here.

Here is a summary of the Dell Terraform Provider versions released over the last two quarters:

- v1.1 and v1.2 of the provider for PowerScale

- v1.3 and v1.4 of the provider for PowerFlex

- v1.3 and v1.4 of the provider for PowerStore

- v1.2 of the Provider for OME

- v1.1 and v1.2 of the Provider for Redfish

PowerScale Provider v1.1 and v1.2

PowerScale received the largest number of new Terraform capabilities in the last few months. New resources and corresponding data sources have been added under the following workflow categories:

- Data Management

- User and Access Management

- Cluster Management

Data management

Following is the summary for the different resource-datasource pairs introduced to automate operations related to Data management on PowerScale:

Snapshots: CRUD operations for Snapshots

Here's an example of how to create a snapshot resource within a PowerScale storage environment using Terraform:

    resource "powerscale_snapshot" "example_snapshot" {
      name = "example-snapshot"
      filesystem = powerscale_filesystem.example_fs.id
      description = "Example snapshot description"
      // Add any additional configurations as needed
    }

- name: Specifies the name of the snapshot to be created.

- filesystem: References the PowerScale filesystem for which the snapshot will be created.

- description: Provides a description for the snapshot.

Here's an example of how to retrieve information about existing snapshots within a PowerScale environment using Terraform:

    data "powerscale_snapshot" "existing_snapshot" {
      name = "existing-snapshot"
    }

    output "snapshot_id" {
      value = data.powerscale_snapshot.existing_snapshot.id
    }

- name: Specifies the name of the existing snapshot to query.

Snapshot schedules: CRUD operations for Snapshot schedules

Following is an example of how to define a snapshot schedule resource:

    resource "powerscale_snapshot_schedule" "example_schedule" {
      name = "example-schedule"
      filesystem = powerscale_filesystem.example_fs.id
      snapshot_type = "weekly"
      retention_policy = "4 weeks"
      snapshot_start_time = "23:00"
      // Add any additional configurations as needed
    }

- name: Specifies the name of the snapshot schedule.

- filesystem: References the PowerScale filesystem for which the snapshot schedule will be applied.

- snapshot_type: Specifies the type of snapshot schedule, such as "daily", "weekly", and so on.

- retention_policy: Defines the retention policy for the snapshots created by the schedule.

- snapshot_start_time: Specifies the time at which the snapshot creation process should begin.

Data Source Example:

The following example shows how to retrieve information about existing snapshot schedules within a PowerScale environment using Terraform. The powerscale_snapshot_schedule data source fetches information about the specified snapshot schedule. An output is defined to display the ID of the retrieved snapshot schedule:

data "powerscale_snapshot_schedule" "existing_schedule" {

name = "existing-schedule"

}

output "schedule_id" {

value = data.powerscale_snapshot_schedule.existing_schedule.id

}

- name: Specifies the name of the existing snapshot schedule to query.

File Pool Policies: CRUD operations for File Pool Policies

File pool policies in PowerScale help establish policy-based workflows, such as placement and tiering of files that match certain criteria. Following is an example of how the new file pool policy resource can be configured:

resource "powerscale_filepool_policy" "example_filepool_policy" {

name = "filePoolPolicySample"

is_default_policy = false

file_matching_pattern = {

or_criteria = [

{

and_criteria = [

{

operator = ">"

type = "size"

units = "B"

value = "1073741824"

},

{

operator = ">"

type = "birth_time"

use_relative_time = true

value = "20"

},

{

operator = ">"

type = "metadata_changed_time"

use_relative_time = false

value = "1704742200"

},

{

operator = "<"

type = "accessed_time"

use_relative_time = true

value = "20"

}

]

},

{

and_criteria = [

{

operator = "<"

type = "changed_time"

use_relative_time = false

value = "1704820500"

},

{

attribute_exists = false

field = "test"

type = "custom_attribute"

value = ""

},

{

operator = "!="

type = "file_type"

value = "directory"

},

{

begins_with = false

case_sensitive = true

operator = "!="

type = "path"

value = "test"

},

{

case_sensitive = true

operator = "!="

type = "name"

value = "test"

}

]

}

]

}

# A list of actions to be taken for matching files. (Update Supported)

actions = [

{

data_access_pattern_action = "concurrency"

action_type = "set_data_access_pattern"

},

{

data_storage_policy_action = {

ssd_strategy = "metadata"

storagepool = "anywhere"

}

action_type = "apply_data_storage_policy"

},

{

snapshot_storage_policy_action = {

ssd_strategy = "metadata"

storagepool = "anywhere"

}

action_type = "apply_snapshot_storage_policy"

},

{

requested_protection_action = "default"

action_type = "set_requested_protection"

},

{

enable_coalescer_action = true

action_type = "enable_coalescer"

},

{

enable_packing_action = true

action_type = "enable_packing"

},

{

action_type = "set_cloudpool_policy"

cloudpool_policy_action = {

archive_snapshot_files = true

cache = {

expiration = 86400

read_ahead = "partial"

type = "cached"

}

compression = true

data_retention = 604800

encryption = true

full_backup_retention = 145152000

incremental_backup_retention = 145152000

pool = "cloudPool_policy"

writeback_frequency = 32400

}

}

]

description = "filePoolPolicySample description"

apply_order = 1

}

You can import existing file pool policies using the file pool policy ID:

terraform import powerscale_filepool_policy.example_filepool_policy <policyID>

or by simply referencing the default policy:

terraform import powerscale_filepool_policy.example_filepool_policy is_default_policy=true
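Several numeric values in the file pool policy resource above are easier to read once expanded. The following locals block is only a sketch of the arithmetic: the local names are hypothetical, and treating the cloud pool cache, retention, and writeback values as seconds is an assumption that should be verified against the provider documentation.

```hcl
locals {
  # 1 GiB expressed in bytes, matching the size criterion (units = "B").
  one_gib_bytes = pow(1024, 3) # 1073741824

  # Assumption: the durations below are expressed in seconds.
  one_day_seconds    = 24 * 60 * 60              # 86400, the cache expiration value
  one_week_seconds   = 7 * local.one_day_seconds # 604800, the data_retention value
  nine_hours_seconds = 9 * 60 * 60               # 32400, the writeback_frequency value
}
```

Note that the resource example passes the size threshold as the string "1073741824" rather than as a number.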

The data source can be used to get a handle to a particular file pool policy:

data "powerscale_filepool_policy" "example_filepool_policy" {

filter {

# Optional list of names to filter upon

names = ["filePoolPolicySample", "Default policy"]

}

}

Or, to get the complete list of policies, including the default policy:

data "powerscale_filepool_policy" "all" {

}

You can then dereference into the data structure as needed.
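As a minimal sketch of that dereferencing, the output below exposes the entire object returned by the data source so that its structure can be inspected with terraform output. The output name is a hypothetical choice, and the attribute names inside the object are not shown here because they should be taken from the provider documentation.

```hcl
# Repeated from above so that the snippet stands alone: no filter, so every policy is returned.
data "powerscale_filepool_policy" "all" {
}

# Hypothetical output name; prints everything the data source returned.
output "all_filepool_policies" {
  value = data.powerscale_filepool_policy.all
}
```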

User and Access management

Following is a summary of the different resource-datasource pairs introduced to automate operations related to User and Access management on PowerScale:

LDAP Providers: CRUD operations

To create and manage LDAP providers, you can use the new resource as follows:

resource "powerscale_ldap_provider" "example_ldap_provider" {

# Required params for creating and updating.

name = "ldap_provider_test"

# root of the tree in which to search identities.

base_dn = "dc=tthe,dc=testLdap,dc=com"

# Specifies the server URIs. Begin URIs with ldap:// or ldaps://

server_uris = ["ldap://10.225.108.54"]

}

You can import existing LDAP providers using the provider name:

terraform import powerscale_ldap_provider.example_ldap_provider <ldapProviderName>

You can also get a handle to a provider through the corresponding data source, filtering on a variety of criteria:

data "powerscale_ldap_provider" "example_ldap_provider" {

filter {

names = ["ldap_provider_name"]

# If specified as "effective" or not specified, all fields are returned. If specified as "user", only fields with non-default values are shown. If specified as "default", the original values are returned.

scope = "effective"

}

}

ACL Settings: CRUD operations

PowerScale OneFS provides powerful ACL capabilities, including a single namespace for multi-protocol access and its own internal ACL representation for access control. The internal ACL is presented as protocol-specific views of permissions, so that NFS exports display POSIX mode bits for NFSv3 and ACLs for NFSv4 and SMB. A new resource now manages the global ACL settings for a given cluster:

resource "powerscale_aclsettings" "example_acl_settings" {

# Optional fields both for creating and updating

# Please check the acceptable inputs for each setting in the documentation

# access = "windows"

# calcmode = "approx"

# calcmode_group = "group_aces"

# calcmode_owner = "owner_aces"

# calcmode_traverse = "ignore"

# chmod = "merge"

# chmod_007 = "default"

# chmod_inheritable = "no"

# chown = "owner_group_and_acl"

# create_over_smb = "allow"

# dos_attr = "deny_smb"

# group_owner_inheritance = "creator"

# rwx = "retain"

# synthetic_denies = "remove"

# utimes = "only_owner"

}

Import is supported, and there is a corresponding data source for the resource as well.

Smart Quotas: CRUD operations

Following is an example that shows how to define a quota resource:

resource "powerscale_quota" "example_quota" {

name = "example-quota"

filesystem = powerscale_filesystem.example_fs.id

size = "10GB"

soft_limit = "8GB"

hard_limit = "12GB"

grace_period = "7d"

// Add any additional configurations as needed

}

- name: Specifies the name of the quota.

- filesystem: References the PowerScale filesystem to associate with the quota.

- size: Sets the size of the quota.

- soft_limit: Defines the soft limit for the quota.

- hard_limit: Defines the hard limit for the quota.

- grace_period: Specifies the grace period for the quota.

Data Source Example:

The following code snippet illustrates how to retrieve information about existing smart quotas within a PowerScale environment using Terraform. The powerscale_quota data source fetches information about the specified quota. An output is defined to display the ID of the retrieved quota:

data "powerscale_quota" "existing_quota" {

name = "existing-quota"

}

output "quota_id" {

value = data.powerscale_quota.existing_quota.id

}

- name: Specifies the name of the existing smart quota to query.

Cluster management

Groupnet: CRUD operations

Following is an example that shows how to define a GroupNet resource:

resource "powerscale_groupnet" "example_groupnet" {

name = "example-groupnet"

subnet = powerscale_subnet.example_subnet.id

gateway = "192.168.1.1"

netmask = "255.255.255.0"

vlan_id = 100

// Add any additional configurations as needed

}

- name: Specifies the name of the GroupNet.

- subnet: References the PowerScale subnet to associate with the GroupNet.

- gateway: Specifies the gateway for the GroupNet.

- netmask: Defines the netmask for the GroupNet.

- vlan_id: Specifies the VLAN ID for the GroupNet.

Data Source Example:

The following code snippet illustrates how to retrieve information about existing GroupNets within a PowerScale environment using Terraform. The powerscale_groupnet data source fetches information about the specified GroupNet. An output is defined to display the ID of the retrieved GroupNet:

data "powerscale_groupnet" "existing_groupnet" {

name = "existing-groupnet"

}

output "groupnet_id" {

value = data.powerscale_groupnet.existing_groupnet.id

}

- name: Specifies the name of the existing GroupNet to query.

Subnet: CRUD operations

Resource Example:

The following code snippet shows how to provision a new subnet:

resource "powerscale_subnet" "example_subnet" {

name = "example-subnet"

ip_range = "192.168.1.0/24"

network_mask = 24

gateway = "192.168.1.1"

dns_servers = ["8.8.8.8", "8.8.4.4"]

// Add any additional configurations as needed

}

- name: Specifies the name of the subnet to be created.

- ip_range: Defines the IP range for the subnet.

- network_mask: Specifies the network mask for the subnet.

- gateway: Specifies the gateway for the subnet.

- dns_servers: Lists the DNS servers associated with the subnet.
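The address values in these networking examples are related to one another, and Terraform's built-in CIDR functions can derive them from a single range instead of repeating literals. This is a sketch only: the local names are hypothetical, and whether the provider arguments accept these derived values should be confirmed in the provider documentation.

```hcl
locals {
  subnet_cidr = "192.168.1.0/24"

  # Prefix length parsed from the CIDR string: 24
  prefix_length = tonumber(split("/", local.subnet_cidr)[1])

  # Dotted-decimal mask for the same range: "255.255.255.0"
  netmask = cidrnetmask(local.subnet_cidr)

  # First host address in the range, used as the gateway: "192.168.1.1"
  gateway = cidrhost(local.subnet_cidr, 1)
}
```

Deriving the mask and gateway this way keeps them consistent if the range is ever changed.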

Data Source Example:

The powerscale_subnet data source fetches information about the specified subnet. The following code snippet illustrates how to retrieve information about existing subnets within a PowerScale environment. An output block is defined to display the ID of the retrieved subnet:

data "powerscale_subnet" "existing_subnet" {

name = "existing-subnet"

}

output "subnet_id" {

value = data.powerscale_subnet.existing_subnet.id

}

- name: Specifies the name of the existing subnet to query. The result is stored in the data object called existing_subnet.

Network pool

Following is an example demonstrating how to define a network pool resource:

resource "powerscale_networkpool" "example_network_pool" {

name = "example-network-pool"

subnet = powerscale_subnet.example_subnet.id

gateway = "192.168.1.1"

netmask = "255.255.255.0"

start_addr = "192.168.1.100"

end_addr = "192.168.1.200"

// Add any additional configurations as needed

}

- name: Specifies the name of the network pool.

- subnet: References the PowerScale subnet to associate with the network pool.

- gateway: Specifies the gateway for the network pool.

- netmask: Defines the netmask for the network pool.

- start_addr and end_addr: Specify the starting and ending IP addresses for the network pool range.

Data Source Example:

The following code snippet illustrates how to retrieve information about existing network pools. The powerscale_networkpool data source fetches information about the specified network pool. An output is defined to display the ID of the retrieved network pool:

data "powerscale_networkpool" "existing_network_pool" {

name = "existing-network-pool"

}

output "network_pool_id" {

value = data.powerscale_networkpool.existing_network_pool.id

}

- name: Specifies the name of the existing network pool to query.

SmartPool settings

Here's an example that shows how to configure SmartPool settings within a PowerScale storage environment using Terraform:

resource "powerscale_smartpool_settings" "example_smartpool_settings" {

name = "example-smartpool-settings"

default_policy = "balanced"

compression = true

deduplication = true

auto_tiering = true

auto_tiering_policy = "performance"

auto_tiering_frequency = "weekly"

// Add any additional configurations as needed

}

- name: Specifies the name of the SmartPool settings.

- default_policy: Sets the default policy for SmartPool.

- compression: Enables or disables compression.

- deduplication: Enables or disables deduplication.

- auto_tiering: Enables or disables auto-tiering.

- auto_tiering_policy: Sets the policy for auto-tiering.

- auto_tiering_frequency: Sets the frequency for auto-tiering.

Data Source Example:

The following example shows how to retrieve information about existing SmartPool settings within a PowerScale environment using Terraform. The powerscale_smartpool_settings data source fetches information about the specified SmartPool settings. An output is defined to display the ID of the retrieved SmartPool settings:

data "powerscale_smartpool_settings" "existing_smartpool_settings" {

name = "existing-smartpool-settings"

}

output "smartpool_settings_id" {

value = data.powerscale_smartpool_settings.existing_smartpool_settings.id

}

- name: Specifies the name of the existing SmartPool settings to query.

New resources

New resources and datasources are also available for the following entities:

- NTP Server

- NTP Settings

- Cluster Email Settings

In addition to the previously mentioned resource-datasource pairs for PowerScale Networking, an option to enable or disable “Source based networking” has been added to the Network settings resource. The corresponding datasources can retrieve this setting on a PowerScale cluster.

PowerFlex Provider v1.3 and v1.4

The following new resources and corresponding datasources have been added to PowerFlex:

Fault Sets: CRUD and Import operations

The following is an example that shows how to define a Fault Set resource within a PowerFlex storage environment using Terraform:

resource "powerflex_fault_set" "example_fault_set" {

name = "example-fault-set"

protection_domain_id = powerflex_protection_domain.example_pd.id

fault_set_type = "RAID-1"

// Add any additional configurations as needed

}

- name: Specifies the name of the Fault Set.

- protection_domain_id: References the PowerFlex Protection Domain to associate with the Fault Set.

- fault_set_type: Defines the type of Fault Set, such as "RAID-1".

If you would like to bring an existing fault set resource into Terraform state management, you can import it using the fault set id:

terraform import powerflex_fault_set.fs_import_by_id "<id>"
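On Terraform 1.5 and later, the same import can also be declared in configuration with an import block rather than run from the CLI. The sketch below assumes that a resource block with the address powerflex_fault_set.fs_import_by_id already exists in the configuration; the ID is left as a placeholder.

```hcl
# Declarative equivalent of the terraform import command above (Terraform 1.5+).
import {
  to = powerflex_fault_set.fs_import_by_id
  id = "<id>"
}
```

Running terraform plan then shows the pending import before terraform apply records it in state.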

Data Source Example:

The following code snippet illustrates how to retrieve information about existing Fault Sets within a PowerFlex environment using Terraform. The powerflex_fault_set data source fetches information about the specified Fault Set. An output is defined to display the ID of the retrieved Fault Set:

data "powerflex_fault_set" "existing_fault_set" {

name = "existing-fault-set"

}

output "fault_set_id" {

value = data.powerflex_fault_set.existing_fault_set.id

}

- name: Specifies the name of the existing Fault Set to query.

Snapshot policies: CRUD operations

- Snapshot policy resource – create, update, and delete.

- Snapshot policy data source – to get information of an existing policy.

Two new data sources

- powerflex_node: retrieves complete information about a PowerFlex node, including firmware, hardware, and node health details.

- powerflex_template: retrieves a PowerFlex template, a large object whose information is organized into multiple groups.

OME Provider v1.2

Following are the new resources to support Firmware baselining and compliance that have been added to the Dell OME Provider:

- Firmware Catalog

- Firmware Baselines

Firmware Catalog

Here is an example of how the catalog resource can be used to create or update catalogs:

# Resource to manage a new firmware catalog

resource "ome_firmware_catalog" "firmware_catalog_example" {

# Name of the catalog required

name = "example_catalog_1"

# Catalog Update Type required.

# Sets catalog updates to Manual or Automatic (on a schedule).

# Defaults to manual.

catalog_update_type = "Automatic"

# Share type required.

# Sets the different types of shares (DELL_ONLINE, NFS, CIFS, HTTP, HTTPS)

# Defaults to DELL_ONLINE

share_type = "HTTPS"

# Catalog file path, required for share types (NFS, CIFS, HTTP, HTTPS)

# Start directory path without leading '/' and use alphanumeric characters.

catalog_file_path = "catalogs/example_catalog_1.xml"

# Share Address required for share types (NFS, CIFS, HTTP, HTTPS)

# Must be a valid ipv4 (x.x.x.x), ipv6(xxxx:xxxx:xxxx:xxxx:xxxx:xxxx:xxxx:xxxx), or fqdn(example.com)

# and must include the protocol prefix, for example https://

share_address = "https://1.2.2.1"

# Catalog refresh schedule, Required for catalog_update_type Automatic.

# Sets the frequency of the catalog refresh.

# Will be ignored if catalog_update_type is set to manual.

catalog_refresh_schedule = {

# Sets to (Weekly or Daily)

cadence = "Weekly"

# Sets the day of the week (Monday, Tuesday, Wednesday, Thursday, Friday, Saturday, Sunday)

day_of_the_week = "Wednesday"

# Sets the hour of the day (1-12)

time_of_day = "6"

# Sets (AM or PM)

am_pm = "PM"

}

# Domain optional value for the share (CIFS), for other share types this will be ignored

domain = "example"

# Share user required value for the share (CIFS), optional value for the share (HTTPS)

share_user = "example-user"

# Share password required value for the share (CIFS), optional value for the share (HTTPS)

share_password = "example-pass"

}

Existing catalogs can be imported into the Terraform state with the import command:

# terraform import ome_firmware_catalog.cat_1 <id>

terraform import ome_firmware_catalog.cat_1 1

After running the import command, populate the name field in the config file to start managing this resource.

Firmware Baseline

Here is an example that shows how a baseline can be compared to an array of individual devices or device groups:

# Resource to manage a new firmware baseline

resource "ome_firmware_baseline" "firmware_baseline" {

// Required Fields

# Name of the catalog

catalog_name = "tfacc_catalog_dell_online_1"

# Name of the Baseline

name = "baselinetest"

// Only one of the following fields (device_names, group_names, device_service_tags) is required

# List of the Device names to associate with the firmware baseline.

device_names = ["10.2.2.1"]

# List of the Group names to associate with the firmware baseline.

# group_names = ["HCI Appliances","Hyper-V Servers"]

# List of the Device service tags to associate with the firmware baseline.

# device_service_tags = ["HRPB0M3"]

// Optional Fields

// This must always be set to true. The size of the DUP files used is 64 bits.

#is_64_bit = true

// Filters applicable updates where no reboot is required during create baseline for firmware updates. This field is set to false by default.

#filter_no_reboot_required = true

# Description of the firmware baseline

description = "test baseline"

}

Although the resource supports terraform import, in most cases a new baseline can be created using a Firmware catalog entry.

Following is a list of new data sources and supported operations in Terraform Provider for Dell OME:

- Firmware Repository

- Firmware Baseline Compliance Report

- Firmware Catalog

- Device Compliance Report

Redfish Provider v1.1 and v1.2

Several new resources have been added to the Redfish provider to access and set different iDRAC attribute sets. Following are the details:

Certificate Resource

This resource imports an SSL certificate into iDRAC based on the input parameter Type. After the certificate is imported, iDRAC restarts automatically. By default, iDRAC comes with a self-signed certificate for its web server. To replace it with your own server certificate (signed by a trusted CA), two kinds of SSL certificates are supported: (1) Server certificate and (2) Custom certificate. Following are the steps to generate these certificates:

- Server Certificate:

  - Generate the CSR from iDRAC.

  - Create the certificate using the CSR and sign it with a trusted CA.

  - The certificate should be signed with a hashing algorithm equivalent to SHA-256.

- Custom Certificate:

  - Create the custom certificate externally so that it can be imported into iDRAC.

  - Convert the external custom certificate into PKCS#12 format and encode it in Base64. The conversion requires a passphrase, which should be provided in the 'passphrase' attribute.
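The Base64 step for a custom certificate can be done in Terraform itself with the built-in filebase64 function. In this sketch the file name custom_cert.p12, the variable name, and the local name are all hypothetical, and the certificate resource itself is omitted because its argument names are not listed here.

```hcl
# Hypothetical variable holding the passphrase used when creating the PKCS#12 file.
variable "p12_passphrase" {
  type      = string
  sensitive = true
}

locals {
  # Reads the PKCS#12 bundle and returns its contents Base64-encoded.
  custom_cert_b64 = filebase64("${path.module}/custom_cert.p12")
}
```

The local value and the variable can then be passed to the resource's certificate input and its 'passphrase' attribute.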

Boot Order Resource

This Terraform resource is used to configure Boot Order and enable/disable Boot Options of the iDRAC Server. We can read the existing configurations or modify them using this resource.

Boot Source Override Resource

This Terraform resource is used to configure Boot sources of the iDRAC Server. If boot_source_override_enabled is set to once or continuous, the value is reset to disabled after the boot_source_override_target actions have completed successfully. Changes to these options do not alter the persistent BIOS boot order configuration.

Manager Reset

This Terraform resource is used to reset the manager, which for Dell servers is the iDRAC.

Lifecycle Controller Attributes Resource

This Terraform resource is used to get and set the attributes of the iDRAC Lifecycle Controller.

System Attributes Resource

This Terraform resource is used to configure System Attributes of the iDRAC Server. We can read the existing configurations or modify them using this resource. Import is also supported for this resource to include existing System Attributes in Terraform state.

iDRAC Firmware Update Resource

This Terraform resource is used to update the firmware of the iDRAC Server based on a catalog entry.

Resources

Here are the link sets for key resources for each of the Dell Terraform providers:

- Provider for PowerScale

- Provider for PowerFlex

- Provider for PowerStore

- Provider for Redfish

Author: Parasar Kodati, Engineering Technologist, Dell ISG