OneFS File Locking and Concurrent Access

Mon, 14 Mar 2022 23:03:37 -0000

There has been a bevy of recent questions around how OneFS allows various clients attached to different nodes of a cluster to simultaneously read from and write to the same file. So it seemed like a good time for a quick refresher on some of the concepts and mechanics behind OneFS concurrency and distributed locking.

File locking is the mechanism that allows multiple users or processes to access data concurrently and safely. For reading data, this is a fairly straightforward process involving shared locks. With writes, however, things become more complex and require exclusive locking, because data must be kept consistent.

OneFS has a fully distributed lock manager that marshals locks on data across all the nodes in a storage cluster. This lock manager is highly extensible and allows for multiple lock types, supporting both file system locks and cluster-coherent protocol-level locks, such as SMB share mode locks or NFS advisory-mode locks. OneFS also supports delegated locks, such as SMB oplocks and NFSv4 delegations.

Every node in a cluster can act as coordinator for locking resources, and a coordinator is assigned to lockable resources based upon a hashing algorithm. This selection algorithm is designed so that the coordinator almost always ends up on a different node than the initiator of the request. When a lock is requested for a file, it can either be a shared lock or an exclusive lock. A shared lock is primarily used for reads and allows multiple users to share the lock simultaneously. An exclusive lock, on the other hand, allows only one user access to the resource at any given moment, and is typically used for writes. Exclusive lock types include:

Mark Lock: An exclusive lock resource used to synchronize the marking and sweeping processes for the Collect job engine job.

Snapshot Lock: As the name suggests, the exclusive snapshot lock that synchronizes the process of creating and deleting snapshots.

Write Lock: An exclusive lock that’s used to quiesce writes for particular operations, including snapshot creates, non-empty directory renames, and marks.
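
To make the hash-based coordinator assignment described above more concrete, here is a minimal Python sketch. It is not the OneFS algorithm, whose details are not given in this article: the function name pick_coordinator, the choice of SHA-256, and the four-node numbering are all assumptions made for illustration. It shows that a deterministic hash lets every node compute the same coordinator for a resource, and that with a roughly uniform hash the coordinator usually lands on a node other than the initiator.

```python
import hashlib
from typing import List


def pick_coordinator(resource_id: int, nodes: List[int]) -> int:
    """Map a resource ID (for example, a LIN) to one node, deterministically."""
    # Hash the resource ID so assignments spread evenly across the nodes.
    digest = hashlib.sha256(str(resource_id).encode("ascii")).digest()
    index = int.from_bytes(digest[:8], "big") % len(nodes)
    return nodes[index]


nodes = [1, 2, 3, 4]   # hypothetical four-node cluster
initiator = 1          # hypothetical node on which lock requests originate

# Every node computes the same coordinator for the same resource.
same = pick_coordinator(0x1001, nodes) == pick_coordinator(0x1001, nodes)

# Fraction of resources whose coordinator is NOT the initiating node.
sample = range(10_000)
remote = sum(pick_coordinator(lin, nodes) != initiator for lin in sample)
remote_fraction = remote / len(sample)
print(f"coordinator is remote for {remote_fraction:.1%} of resources")
```

With N nodes and a uniform hash, about (N-1)/N of resources get a remote coordinator, so the proportion grows as the cluster scales out.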

The OneFS locking infrastructure has its own terminology, and includes the following definitions:

Domain: Refers to the specific lock attributes (recursion, deadlock detection, memory use limits, and so on) and context for a particular lock application. Each domain has one definition of owner, resource, and lock types, and only locks within the same domain can conflict.

Lock Type: Determines the contention among lockers. A shared or read lock does not contend with other types of shared or read locks, while an exclusive or write lock contends with all other types. Lock types include:

- Advisory

- Anti-virus

- Data

- Delete

- LIN

- Mark

- Oplocks

- Quota

- Read

- Share Mode

- SMB byte-range

- Snapshot

- Write

Locker: Identifies the entity that acquires a lock.

Owner: A locker that has successfully acquired a particular lock. A locker may own multiple locks of the same or different type as a result of recursive locking.

Resource: Identifies a particular lock. Lock acquisition only contends on the same resource. The resource ID is typically a LIN to associate locks with files.

Waiter: Has requested a lock but has not yet been granted or acquired it.

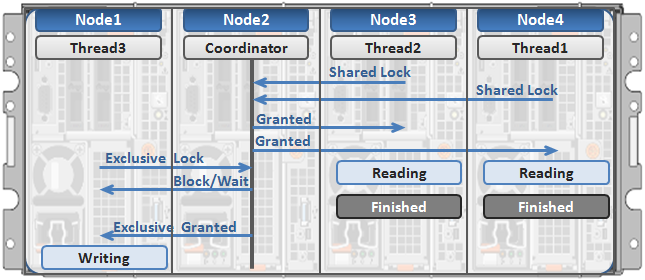

Here’s an example of how threads from different nodes could request a lock from the coordinator:

- Node 2 is selected as the lock coordinator of these resources.

- Thread 1 from Node 4 and thread 2 from Node 3 request a shared lock on a file from Node 2 at the same time.

- Node 2 checks if an exclusive lock exists for the requested file.

- If no exclusive locks exist, Node 2 grants thread 1 from Node 4 and thread 2 from Node 3 shared locks on the requested file.

- Node 3 and Node 4 are now performing a read on the requested file.

- Thread 3 from Node 1 requests an exclusive lock for the same file as being read by Node 3 and Node 4.

- Node 2 checks with Node 3 and Node 4 if the shared locks can be reclaimed.

- Node 3 and Node 4 are still reading so Node 2 asks thread 3 from Node 1 to wait for a brief instant.

- Thread 3 from Node 1 blocks until the exclusive lock is granted by Node 2 and then completes the write operation.
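
The sequence above can be sketched as a small, single-process Python model. This is an illustrative toy, not OneFS code: the LockCoordinator class, its method names, and the locker labels are all hypothetical, and real coordinators exchange messages between nodes rather than calling methods. The sketch only captures the contention rule (shared locks coexist, an exclusive lock needs sole access) and the waiter queue.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List, Optional, Set, Tuple


@dataclass
class ResourceState:
    shared_owners: Set[str] = field(default_factory=set)
    exclusive_owner: Optional[str] = None
    waiters: Deque[Tuple[str, bool]] = field(default_factory=deque)


class LockCoordinator:
    """Toy coordinator that tracks owners and waiters per resource."""

    def __init__(self) -> None:
        self.resources: Dict[int, ResourceState] = {}

    @staticmethod
    def _compatible(state: ResourceState, exclusive: bool) -> bool:
        # Nothing can be granted while an exclusive lock is held, and an
        # exclusive request cannot be granted while shared locks are held.
        if state.exclusive_owner is not None:
            return False
        return not (exclusive and state.shared_owners)

    @staticmethod
    def _grant(state: ResourceState, locker: str, exclusive: bool) -> None:
        if exclusive:
            state.exclusive_owner = locker
        else:
            state.shared_owners.add(locker)

    def request(self, resource: int, locker: str, exclusive: bool) -> bool:
        """Return True if granted immediately, False if the locker must wait."""
        state = self.resources.setdefault(resource, ResourceState())
        # Queue behind earlier waiters so a pending request is not starved.
        if not state.waiters and self._compatible(state, exclusive):
            self._grant(state, locker, exclusive)
            return True
        state.waiters.append((locker, exclusive))
        return False

    def release(self, resource: int, locker: str) -> List[str]:
        """Release a lock and return the waiters that were granted as a result."""
        state = self.resources[resource]
        if state.exclusive_owner == locker:
            state.exclusive_owner = None
        else:
            state.shared_owners.discard(locker)
        granted = []
        while state.waiters and self._compatible(state, state.waiters[0][1]):
            waiter, exclusive = state.waiters.popleft()
            self._grant(state, waiter, exclusive)
            granted.append(waiter)
        return granted


coordinator = LockCoordinator()   # plays the role of Node 2
lin = 0x1001                      # hypothetical resource ID
r1 = coordinator.request(lin, "node4/thread1", exclusive=False)
r2 = coordinator.request(lin, "node3/thread2", exclusive=False)
r3 = coordinator.request(lin, "node1/thread3", exclusive=True)
g1 = coordinator.release(lin, "node4/thread1")
g2 = coordinator.release(lin, "node3/thread2")
print(r1, r2, r3, g1, g2)
```

Here both readers are granted shared locks at once, the writer becomes a waiter, and it is only granted the exclusive lock once the second reader releases.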

Author: Nick Trimbee

Related Blog Posts

OneFS Log Gather Transmission

Wed, 17 Apr 2024 15:45:51 -0000

The OneFS isi_gather_info utility is the ubiquitous method for collecting and uploading a PowerScale cluster’s context and configuration to assist with the identification and resolution of bugs and issues. As such, it performs the following roles:

- Executes many commands, scripts, and utilities on a cluster, and saves their results

- Collates, or gathers, all these files into a single ‘gzipped’ package

- Optionally transmits this log gather package back to Dell using a choice of several transport methods

By default, a log gather tarfile is written to the /ifs/data/Isilon_Support/pkg/ directory. It can also be uploaded to Dell by the following means:

| Upload mechanism | Description | TCP port | OneFS release support |
| --- | --- | --- | --- |
| SupportAssist / ESRS | Uses Dell Secure Remote Support (SRS) for gather upload. | 443/8443 | Any |
| FTP | Uses FTP to upload the completed gather. | 21 | Any |
| FTPS | Uses SSL/TLS-encrypted FTPS to upload the gather. | 22 | Default in OneFS 9.5 and later |
| HTTP | Uses HTTP to upload the gather. | 80/443 | Any |

As indicated in this table, OneFS 9.5 and later releases now leverage FTPS as the default option for FTP upload, thereby protecting the upload of cluster configuration and logs with an encrypted transmission session.

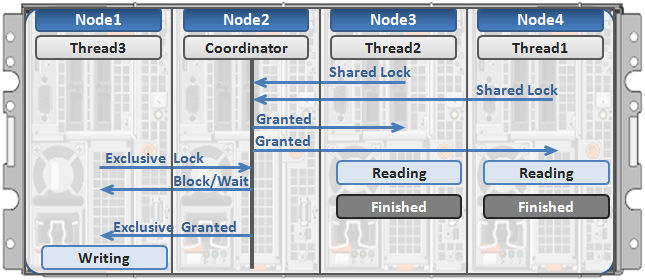

Under the hood, the log gather process comprises an eight-phase workflow, with transmission forming the penultimate ‘Upload’ phase.

The details of each phase are as follows:

| Phase | Description |
| --- | --- |
| 1. Setup | Reads from the arguments passed in, and from any config files on disk, and sets up the config dictionary, which will be used throughout the rest of the codebase. Most of the code for this step is contained in isilon/lib/python/gather/igi_config/configuration.py. This is also the step in which the program is most likely to exit, if some config arguments end up being invalid. |
| 2. Run local | Executes all the cluster commands, which are run on the same node that is starting the gather. All these commands run in parallel (up to the current parallelism value). This is typically the second longest running phase. |
| 3. Run nodes | Executes the node commands across all of the cluster’s nodes. This runs on each node, and while these commands run in parallel (up to the current parallelism value), they do not run in parallel with the ‘Run local’ step. |
| 4. Collect | Ensures that all of the results end up on the overlord node (the node that started the gather). If the gather is using /ifs, it is very fast; if it is not using /ifs, it needs to SCP all the node results to a single node. |
| 5. Generate Extra Files | Generates nodes_info.xml and package_info.xml. These two files are present in every gather, and provide important metadata about the cluster. |
| 6. Packing | Packs (tars and gzips) all the results. This is typically the longest running phase, often by an order of magnitude. |
| 7. Upload | Transports the tarfile package to its specified destination using SupportAssist, ESRS, FTPS, FTP, HTTP, and so on. Depending on the geographic location, this phase might also be lengthy. |
| 8. Cleanup | Cleans up any intermediary files that were created on the cluster. This phase will run even if the gather fails, or is interrupted. |
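
The property that the Cleanup phase runs even when an earlier phase fails can be illustrated with a short Python sketch. This is not the isi_gather_info source: the run_gather function, the actions dictionary, and the simulated upload failure are hypothetical, and only the phase names are taken from the table above.

```python
from typing import Callable, Dict, List

# Phase names from the table above, in execution order (Cleanup is handled
# separately so that it always runs).
PHASES = [
    "Setup", "Run local", "Run nodes", "Collect",
    "Generate Extra Files", "Packing", "Upload",
]


def run_gather(actions: Dict[str, Callable[[], None]], log: List[str]) -> None:
    """Run each phase in order; always finish with Cleanup."""
    try:
        for name in PHASES:
            log.append(f"start {name}")
            # Phases without a registered action are treated as no-ops.
            actions.get(name, lambda: None)()
    finally:
        # Runs whether the phases completed, failed, or were interrupted.
        log.append("Cleanup")


def failing_upload() -> None:
    raise ConnectionError("simulated upload failure")


log: List[str] = []
failed = False
try:
    run_gather({"Upload": failing_upload}, log)
except ConnectionError:
    failed = True

print(log[-2:], failed)
```

Using try/finally keeps the cleanup logic in one place, while still letting the original error propagate to the caller.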

Because the isi_gather_info tool is primarily intended for troubleshooting clusters with issues, it runs as root (or compadmin in compliance mode) and must be able to execute under degraded conditions, such as without GMP, during an upgrade, or under a cluster split. Given these atypical requirements, isi_gather_info is built as a standalone utility, rather than using the platform API for data collection.

While FTPS is the new default and recommended transport, the legacy plaintext FTP upload method is still available in OneFS 9.5 and later. Dell’s log server, ftp.isilon.com, supports both encrypted FTPS and plaintext FTP, so FTP log uploads from older releases are not affected.

This OneFS 9.5 FTPS security enhancement encompasses three primary areas where an FTPS option is now supported:

- Directly executing the /usr/bin/isi_gather_info utility

- Running a gather using the isi diagnostics gather CLI command set

- Creating a diagnostics gather through the OneFS WebUI

For the isi_gather_info utility, two new options are included in OneFS 9.5 and later releases:

| New option for isi_gather_info | Description | Default value |
| --- | --- | --- |
| --ftp-insecure | Enables the gather to use unencrypted FTP transfer. | False |
| --ftp-ssl-cert | Enables the user to specify the location of a special SSL certificate file. | Empty string. Not typically required. |

Similarly, there are two corresponding options in OneFS 9.5 and later for the isi diagnostics CLI command:

| New option for isi diagnostics | Description | Default value |
| --- | --- | --- |
| --ftp-upload-insecure | Enables the gather to use unencrypted FTP transfer. | No |
| --ftp-upload-ssl-cert | Enables the user to specify the location of a special SSL certificate file. | Empty string. Not typically required. |

Based on these options, the following table provides some command syntax usage examples, for both FTPS and FTP uploads:

| FTP upload type | Description | Example isi_gather_info syntax | Example isi diagnostics syntax |
| --- | --- | --- | --- |
| Secure upload (default) | Upload cluster logs to the Dell log server (ftp.isilon.com) using encrypted FTP (FTPS). | # isi_gather_info or # isi_gather_info --ftp | # isi diagnostics gather start or # isi diagnostics gather start --ftp-upload-insecure=no |
| Secure upload | Upload cluster logs to an alternative server using encrypted FTPS. | # isi_gather_info --ftp-host <FQDN> --ftp-ssl-cert <SSL_CERT_PATH> | # isi diagnostics gather start --ftp-upload-host=<FQDN> --ftp-upload-ssl-cert=<SSL_CERT_PATH> |
| Unencrypted upload | Upload cluster logs to the Dell log server (ftp.isilon.com) using plaintext FTP. | # isi_gather_info --ftp-insecure | # isi diagnostics gather start --ftp-upload-insecure=yes |
| Unencrypted upload | Upload cluster logs to an alternative server using plaintext FTP. | # isi_gather_info --ftp-insecure --ftp-host <FQDN> | # isi diagnostics gather start --ftp-upload-host=<FQDN> --ftp-upload-insecure=yes |
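
For anyone scripting these invocations, the isi_gather_info variants in the table can be assembled with a small helper. The sketch below is only an illustration: the build_gather_command function, the example host name, and the certificate path are hypothetical, and it uses only the --ftp-insecure, --ftp-host, and --ftp-ssl-cert options shown above. It builds the argument list without running anything.

```python
from typing import List, Optional


def build_gather_command(
    host: Optional[str] = None,
    ssl_cert: Optional[str] = None,
    insecure: bool = False,
) -> List[str]:
    """Build an isi_gather_info argument list for an FTPS or FTP upload."""
    cmd = ["isi_gather_info"]
    if insecure:
        # Plaintext FTP must be requested explicitly; FTPS is the default.
        cmd.append("--ftp-insecure")
    if host:
        cmd += ["--ftp-host", host]
    if ssl_cert:
        cmd += ["--ftp-ssl-cert", ssl_cert]
    return cmd


# Hypothetical alternative log server and certificate location.
default_cmd = build_gather_command()
secure_cmd = build_gather_command(host="logs.example.com",
                                  ssl_cert="/ifs/certs/ftps.pem")
plain_cmd = build_gather_command(host="logs.example.com", insecure=True)

for cmd in (default_cmd, secure_cmd, plain_cmd):
    print(" ".join(cmd))
```

Returning a list rather than a single string makes the result suitable for passing to subprocess.run without shell quoting concerns.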

Note that OneFS 9.5 and later releases provide a warning if the cluster admin elects to continue using non-secure FTP for the isi_gather_info tool. Specifically, if the --ftp-insecure option is configured, the following message is displayed, informing the user that plaintext FTP upload is being used, and that the connection and data stream will not be encrypted:

# isi_gather_info --ftp-insecure

You are performing plain text FTP logs upload.

This feature is deprecated and will be removed

in a future release. Please consider the possibility

of using FTPS for logs upload. For further information,

please contact PowerScale support

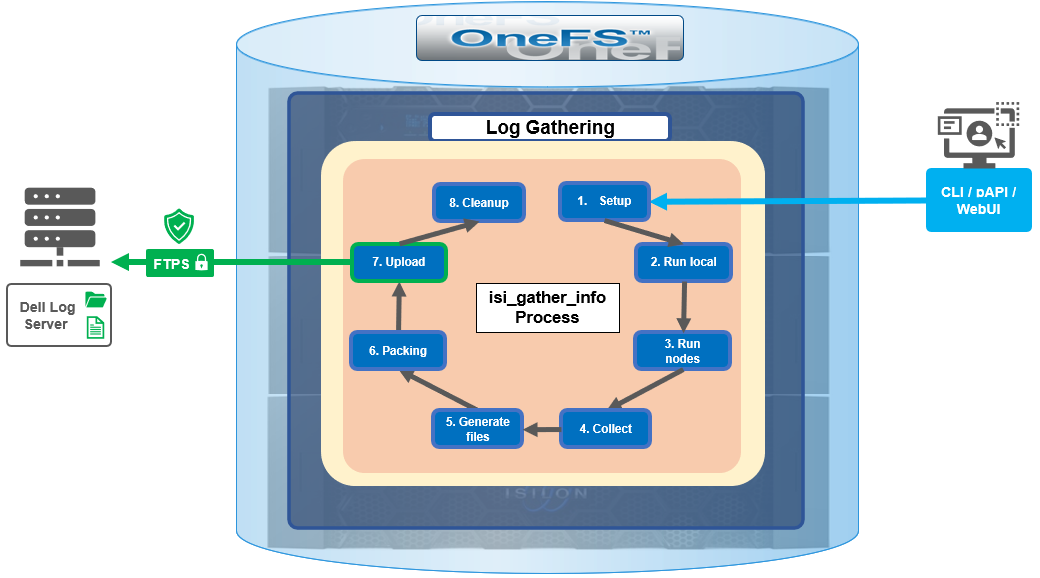

In addition to the command line, log gathers can also be configured using the OneFS WebUI by navigating to Cluster management > Diagnostics > Gather settings.

The Edit gather settings page in OneFS 9.5 and later has been updated to reflect FTPS as the default transport method, plus the addition of radio buttons and text boxes to accommodate the new configuration options.

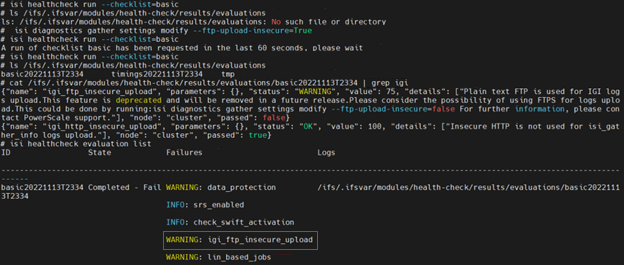

If plaintext FTP upload is configured, the healthcheck command will display a warning that plaintext upload is in use and is no longer a recommended option.

For reference, the OneFS 9.5 and later isi_gather_info CLI command syntax includes the following options:

| Option | Description |
| --- | --- |
| --upload <boolean> | Enable gather upload. |
| --esrs <boolean> | Use ESRS for gather upload. |
| --noesrs | Do not attempt to upload using ESRS. |
| --supportassist | Attempt SupportAssist upload. |
| --nosupportassist | Do not attempt to upload using SupportAssist. |
| --gather-mode (incremental \| full) | Type of gather: incremental or full. |
| --http-insecure <boolean> | Enable insecure HTTP upload on completed gather. |
| --http-host <string> | HTTP host to use for HTTP upload. |
| --http-path <string> | Path on HTTP server to use for HTTP upload. |
| --http-proxy <string> | Proxy server to use for HTTP upload. |
| --http-proxy-port <integer> | Proxy server port to use for HTTP upload. |
| --ftp <boolean> | Enable FTP upload on completed gather. |
| --noftp | Do not attempt FTP upload. |
| --set-ftp-password | Interactively specify alternate password for FTP. |
| --ftp-host <string> | FTP host to use for FTP upload. |
| --ftp-path <string> | Path on FTP server to use for FTP upload. |
| --ftp-port <string> | Specifies alternate FTP port for upload. |
| --ftp-proxy <string> | Proxy server to use for FTP upload. |
| --ftp-proxy-port <integer> | Proxy server port to use for FTP upload. |
| --ftp-mode <value> | Mode of FTP file transfer. Valid values are both, active, and passive. |
| --ftp-user <string> | FTP user to use for FTP upload. |
| --ftp-pass <string> | Specify alternative password for FTP. |
| --ftp-ssl-cert <string> | Specifies the SSL certificate to use in FTPS connection. |
| --ftp-upload-insecure <boolean> | Whether to attempt a plaintext FTP upload. |
| --ftp-upload-pass <string> | Password to use for FTP upload. |
| --set-ftp-upload-pass | Specify the FTP upload password interactively. |

When a logfile gather arrives at Dell, it is automatically unpacked by a support process and analyzed using the logviewer tool.

Author: Nick Trimbee

PowerScale OneFS 9.8

Tue, 09 Apr 2024 14:00:00 -0000

It’s launch season here at Dell Technologies, and PowerScale is already scaling up spring with the innovative OneFS 9.8 release which shipped today, 9th April 2024. This new 9.8 release has something for everyone, introducing PowerScale innovations in cloud, performance, serviceability, and ease of use.

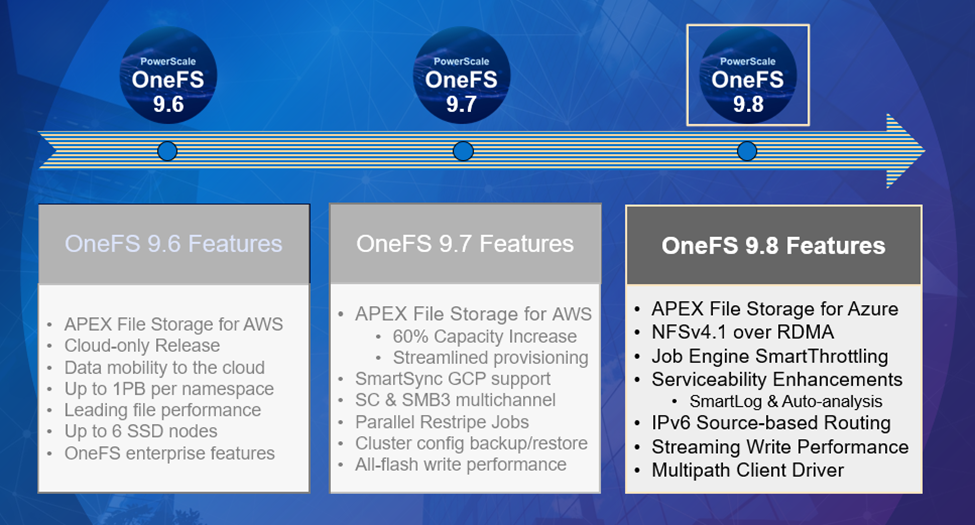

Figure 1. OneFS 9.8 release features

APEX File Storage for Azure

After the debut of APEX File Storage for AWS last year, OneFS 9.8 amplifies PowerScale’s presence in the public cloud by introducing APEX File Storage for Azure.

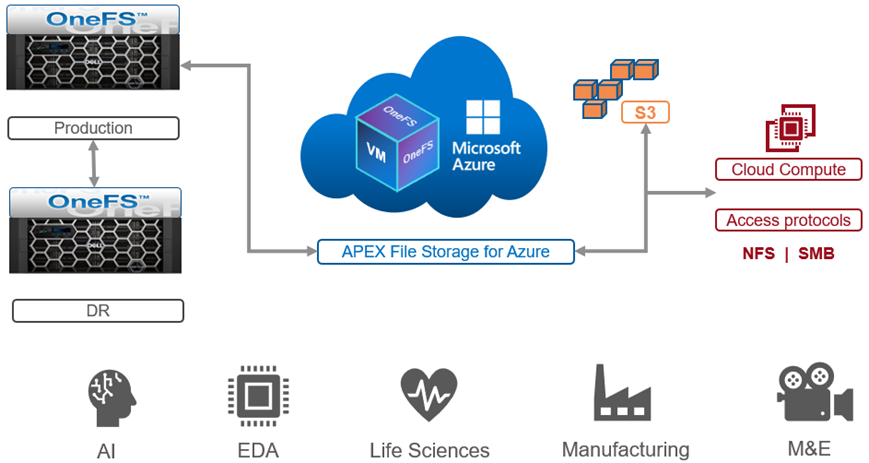

Figure 2. OneFS 9.8 APEX File Storage for Azure

APEX File Storage for Azure in OneFS 9.8 provides the same OneFS software platform in the cloud as on-premises, and is customer-managed for full control. It delivers linear capacity and performance scaling from four to eighteen SSD nodes and up to 3PB per cluster, making it a solid fit for AI, ML, and analytics applications, as well as traditional file shares, home directories, and vertical workloads like M&E, healthcare, life sciences, and financial services.

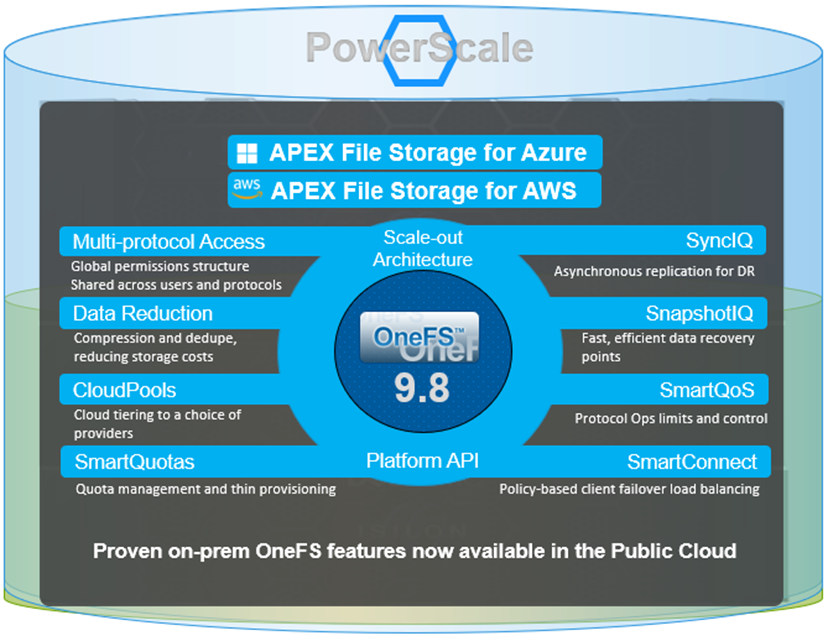

Figure 3. Dell PowerScale scale-out architecture

PowerScale’s scale-out architecture can be deployed on customer-managed AWS and Azure infrastructure, providing the capacity and performance needed to run a variety of unstructured workflows in the public cloud.

Once in the cloud, existing PowerScale investments can be further leveraged by accessing and orchestrating your data through the platform's multi-protocol access and APIs.

This includes the common OneFS control plane (CLI, WebUI, and platform API) and the same enterprise features, such as Multi-protocol, SnapshotIQ, SmartQuotas, Identity management, and so on.

Simplicity and efficiency

OneFS 9.8 SmartThrottling is an automated impact control mechanism for the job engine, allowing the cluster to automatically throttle job resource consumption if it exceeds pre-defined thresholds in order to prioritize client workloads.

OneFS 9.8 also delivers automatic on-cluster core file analysis, and SmartLog provides an efficient, granular log file gathering and transmission framework. Both of these new features help reduce the time and effort needed to resolve cluster issues.

Performance

OneFS 9.8 also adds support for NFSv4.1 over Remote Direct Memory Access (RDMA) for applications and clients. This allows for substantially higher throughput, especially for single-connection and read-intensive workloads such as machine learning and generative AI model training, while also reducing both cluster and client CPU utilization. It also provides the foundation for interoperability with NVIDIA’s GPUDirect.

NFSv4.1 over RDMA in OneFS 9.8 leverages the RoCEv2 network protocol. OneFS CLI and WebUI configuration options include global enablement and IP pool configuration, filtering, and verification of RoCEv2-capable network interfaces. NFS over RDMA is available on all PowerScale platforms containing Mellanox ConnectX network adapters on the front end, with a choice of 25, 40, or 100 Gigabit Ethernet connectivity. The OneFS user interface makes it easy to identify which of a cluster’s NICs support RDMA.

Under the hood, OneFS 9.8 introduces efficiencies such as lock sharding and parallel thread handling, delivering a substantial performance boost for streaming write-heavy workloads such as generative AI inferencing and model training. Performance scales linearly as compute is increased, keeping GPUs busy and allowing PowerScale to easily support AI and ML workflows both small and large. OneFS 9.8 also includes infrastructure support for future node hardware platform generations.

Multipath Client Driver

The addition of a new Multipath Client Driver helps expand PowerScale’s role in Dell Technologies’ strategic collaboration with NVIDIA, delivering the first and only end-to-end large scale AI system. This is based on the PowerScale F710 platform in conjunction with PowerEdge XE9680 GPU servers and NVIDIA’s Spectrum-X Ethernet switching platform to optimize performance and throughput at scale.

In summary, OneFS 9.8 brings the following new features to the Dell PowerScale ecosystem:

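| Feature | Info |
| --- | --- |
| Cloud | APEX File Storage for Azure, scaling from four to eighteen SSD nodes and up to 3PB per cluster |
| Simplicity | SmartThrottling automated impact control for the job engine |
| Performance | NFSv4.1 over RDMA; lock sharding and parallel thread handling for streaming writes; infrastructure support for future node hardware platform generations |
| Serviceability | Automatic on-cluster core file analysis; SmartLog log file gathering and transmission |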

We’ll be taking a deeper look at this new functionality in blog articles over the course of the next few weeks.

Meanwhile, the new OneFS 9.8 code is available on the Dell Online Support site as both an upgrade and a reimage file, allowing installation and upgrade of this new release.

Author: Nick Trimbee