Natural Language Processing

Tue, 02 Mar 2021 16:10:04 -0000

“Hey Google, do I look good today?”

“You’re more stunning than a new router fresh out of the box.”

“Aww, thank you!”

“You’re welcome.”

Oh, the joys of natural language processing! That exchange is one of many short conversations some of us have with our smart home or personal assistant devices.

The AI subfield of Natural Language Processing (NLP) trains computers to understand human language so that they can communicate with us in that same language. NLP grew out of the interdisciplinary study of theoretical computer science, linguistics, and artificial intelligence (AI), focused on natural human language and human-machine interaction. Linguistics provides the rules of language, such as semantics, syntax, vocabulary, grammar, and phrases, while computer science and machine/deep learning turn those linguistic rules into the NLP algorithms themselves.

Common examples of NLP in use today include:

- Email spam detection or document classification

- Website chatbots

- Automated voice response systems (IVR/AVR) on support calls

- Sentiment analysis in support and marketing: analyzing written text on the Internet, in support tickets, on social media platforms, and elsewhere to determine whether the content expresses positive or negative sentiment about a product or service

- Real-time translation from one language to another, such as in Google Translate

- Search made simple, such as with Google Search

- On-demand spell checking, such as in Microsoft Word

- Next-word prediction in messaging applications, such as on mobile phones

- Drug trials, where text is scanned to determine overlap in intellectual property during drug development

- Personal assistants such as Siri, Alexa, Cortana, and Google Assistant

Taking personal assistants as an example, NLP in action looks like the following:

- You ask Siri: “What’s the weather today?”

- Siri collects your question in audio format and converts it to text, which is processed for understanding.

- Based on that understanding, a response is created, converted to audio, and then delivered to you.

Algorithmically, NLP starts with understanding the syntax of the text to extract grammatical sense from the arrangement of words. This is the easier task, because most languages have clearly defined grammatical rules that can be used to train the algorithms. Once the syntax is understood, the algorithm works to infer meaning, nuance, and semantics, which is harder because language is not a precise science: the same thing can be said in many different ways, within one language and across languages, and still mean the same thing.

Tools and frameworks

Tools and frameworks that support NLP applications like those mentioned earlier must be able to derive high-quality information from analyzed text through text mining. The components of text mining enable NLP to carry out the following operations:

- Noise removal—Extraction of useful data

- Tokenization—Identification and key segmentation of the useful data

- Normalization—Translation of text into equivalent numerical values appropriate for a computer to understand

- Pattern classification—Discovery of relevant patterns in the segmented data and classification of the data pieces accordingly
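The four operations above can be illustrated with a small, self-contained Python sketch, using email spam detection from the earlier list as the task. This is an illustrative sketch under our own assumptions, not how any particular NLP framework works: the stop-word list, the bag-of-words counts standing in for the normalization step, and the hand-picked keyword weights used for classification are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical stop-word list; real frameworks ship much larger ones.
STOP_WORDS = {"the", "a", "an", "is", "to", "my", "and", "of", "for", "now"}

def remove_noise(text: str) -> str:
    """Noise removal: strip HTML tags and URLs, then lowercase."""
    text = re.sub(r"<[^>]+>", " ", text)
    text = re.sub(r"https?://\S+", " ", text)
    return text.lower()

def tokenize(text: str) -> list[str]:
    """Tokenization: split into alphabetic words and drop stop words."""
    return [t for t in re.findall(r"[a-z]+", text) if t not in STOP_WORDS]

def vectorize(tokens: list[str], vocabulary: list[str]) -> list[int]:
    """Normalization into numbers: bag-of-words counts over a fixed vocabulary."""
    counts = Counter(tokens)
    return [counts[word] for word in vocabulary]

def classify(vector: list[int], weights: list[float], threshold: float = 1.0) -> str:
    """Pattern classification: a weighted keyword score compared to a threshold."""
    score = sum(v * w for v, w in zip(vector, weights))
    return "spam" if score >= threshold else "not spam"

VOCABULARY = ["free", "winner", "prize", "meeting", "invoice"]
WEIGHTS = [0.6, 0.8, 0.7, -0.5, -0.3]  # hypothetical, hand-picked weights

def pipeline(raw: str) -> str:
    """Run all four stages in order on one raw message."""
    tokens = tokenize(remove_noise(raw))
    return classify(vectorize(tokens, VOCABULARY), WEIGHTS)

print(pipeline("<b>WINNER!</b> Claim your FREE prize now: http://example.com"))
print(pipeline("Agenda for the meeting and the invoice are attached"))
```

A real system would learn the weights from labeled examples rather than setting them by hand; the point here is only how the four stages hand data to one another.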

Common NLP frameworks with the capabilities that are described above are listed below. The intricacies of these frameworks are outside the scope of this blog; go to the following sites to learn more.

Conclusion

We know where NLP came from and some of its applications today, but where is it going, and is it ready for wider adoption? Most existing AI algorithms are suited to narrow implementations, where they carry out a very specific task. Such algorithms are considered Artificial Narrow Intelligence rather than Artificial General Intelligence, which would be expert at many things. Most AI has yet to fully grasp context, including time, space, and causality, the way humans do, and NLP is no exception.

For example, an Internet search can return irrelevant results that do not answer our question, because NLP is excellent at finding similarities in content across large amounts of data but does not truly understand what we are asking. Then there is the nuance of spoken language mentioned earlier, and the variation in language rules across languages and even across domains. These factors make it difficult to train for complete accuracy. Possible remedies include larger data sets, more training infrastructure, and perhaps model-based training instead of neural networks, although each comes with its own challenges.

At Dell, we have successfully deployed NLP in our tech support center applications: agents write quick descriptions of a customer’s issue, and the application returns predictions for the next best troubleshooting step. 3,000 agents use the tool to serve more than 10,000 customers per day.

We apply NLP techniques to the input text to convert it into a format the AI model can use, and we have employed K-nearest neighbor (KNN) classification and logistic regression for predictions. Microservice APIs pass the resulting information to agents. To address the challenges of free text as input, we worked with our subject matter experts from the tech support space to identify Dell-specific lingo, which we used to build a library of synonyms that maps different terms with the same meaning to one another. This greatly helped us clean up the data, prepare training data, and group similar words for context.
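To illustrate the synonym-library and KNN approach described above, here is a minimal sketch. Everything in it is hypothetical: the synonym entries, the example tickets, the troubleshooting-step labels, and the choice of Jaccard word-set similarity as the distance measure are our own assumptions for illustration, not Dell's production data or model.

```python
import re
from collections import Counter

# Hypothetical synonym library: maps support-agent lingo to a canonical term.
SYNONYMS = {
    "bsod": "bluescreen",
    "blue": "bluescreen",
    "dead": "no_power",
    "wont": "no_power",
    "wifi": "wireless",
    "wlan": "wireless",
}

def normalize(description: str) -> set[str]:
    """Lowercase, tokenize, and map each word through the synonym library."""
    words = re.findall(r"[a-z0-9]+", description.lower().replace("'", ""))
    return {SYNONYMS.get(word, word) for word in words}

def jaccard(a: set[str], b: set[str]) -> float:
    """Similarity between two normalized descriptions (1.0 means identical word sets)."""
    return len(a & b) / len(a | b) if a | b else 0.0

# Hypothetical history of (agent description, next troubleshooting step that worked).
HISTORY = [
    ("laptop shows bsod on boot", "run memory diagnostics"),
    ("blue screen after driver update", "roll back driver"),
    ("system dead no lights", "check power adapter"),
    ("wont power on after storm", "check power adapter"),
    ("wifi drops every few minutes", "update wireless driver"),
    ("wlan not detected", "update wireless driver"),
]

def predict_next_step(description: str, k: int = 3) -> str:
    """K-nearest neighbors: majority vote among the k most similar past tickets."""
    query = normalize(description)
    ranked = sorted(HISTORY, key=lambda item: jaccard(query, normalize(item[0])), reverse=True)
    votes = Counter(step for _, step in ranked[:k])
    return votes.most_common(1)[0][0]

print(predict_next_step("customer says laptop is dead with no lights"))
```

The synonym map is what lets differently worded descriptions ("dead", "won't power on") land near each other; logistic regression, which the team also used, would replace the majority vote with a learned scoring function.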

In a high-turnover role like support agent, we were able to make new agents successful sooner by easing their onboarding. Because the support application provides the right information quickly, agents spend less time browsing large amounts of irrelevant information, which can lead to disgruntled customers and frustrated agents. We saw a 10% reduction in the time it took to service customers. The solution also made it possible to feed newly discovered issues to our engineering teams when agents reported or searched for technical issues we were not already familiar with. This also worked in the other direction, with engineering supporting agents.

Our research teams at Dell are actively contributing our findings on neural machine translation to the open-source community. One of our current projects is AI voice synthesis, where NLP works so well that you can’t tell a computer is speaking!

For more information about MLPerf benchmark results for natural language processing (BERT) on Dell PowerEdge platforms, visit the linked blog posts, and then reach out to Dell’s Emerging Tech Team for help with NLP projects in your organization.

Related Blog Posts

Edge Intelligence Trends in the Retail Industry

Tue, 07 Feb 2023 22:58:02 -0000

Retail and Edge Computing

In today's digital landscape, the integration of retail and edge computing is becoming increasingly important. Retail encompasses the sale of goods and services both online and in physical stores, while edge computing processes and analyzes data close to where it is collected, rather than in a centralized location. Using edge computing in retail environments can improve customer experiences, optimize store operations, and support new technologies such as self-checkout and augmented reality systems. It enables real-time data analysis, which allows for a more personalized and seamless shopping experience for customers, as well as cost reduction and increased revenue for retailers. In this post, we will delve into the advantages of edge computing and how they align with modern AI trends in the retail industry. The post also serves as a reference for Dell's positioning and solutions that help retail organizations use AI effectively to meet business needs.

Leading Retail Edge AI Trends in 2023

Focus on AI Use Cases with High ROI

Edge AI holds vast potential across a variety of industries, with a high ROI in the form of automation of repetitive tasks, increased efficiency, and revenue growth. Edge AI can be used to analyze customer data and predict future purchasing patterns, leading to optimized product offerings and improved sales. Personalization through tailored marketing campaigns and product recommendations can boost customer loyalty and repeat business. Edge AI can track inventory levels in real time from point-of-sale (POS) and online shopping transactions, identify discrepancies, and prevent stockouts, leading to cost savings and increased revenue. It can also be used to detect and prevent theft in retail environments, increasing profitability. The technology can be applied in smart shelves and robotics for inventory management and shelf stocking, resulting in increased efficiency and reduced labor costs.
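The inventory-tracking idea above reduces to a simple reconciliation: expected stock is starting stock plus receipts minus POS and online sales, and any gap against what shelf sensors or cameras actually count is a discrepancy. The sketch below is a minimal illustration under our own assumptions; the SKU names, quantities, discrepancy tolerance, and reorder point are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class SkuState:
    sku: str
    starting_stock: int
    received: int
    sold_pos: int      # units sold at in-store point-of-sale terminals
    sold_online: int   # units sold through online orders
    counted: int       # units observed on the shelf by cameras or smart shelves

    @property
    def expected(self) -> int:
        return self.starting_stock + self.received - self.sold_pos - self.sold_online

def check(state: SkuState, tolerance: int = 2, reorder_point: int = 5) -> list[str]:
    """Return alerts for one SKU: stock discrepancy and low-stock (stockout) risk."""
    alerts = []
    gap = state.expected - state.counted
    if abs(gap) > tolerance:
        alerts.append(f"{state.sku}: discrepancy of {gap} units "
                      f"(expected {state.expected}, counted {state.counted})")
    if state.counted <= reorder_point:
        alerts.append(f"{state.sku}: low stock ({state.counted} left), reorder to prevent a stockout")
    return alerts

# Hypothetical snapshot of three SKUs.
inventory = [
    SkuState("milk-1l", starting_stock=40, received=20, sold_pos=30, sold_online=6, counted=24),
    SkuState("razor-4pk", starting_stock=30, received=0, sold_pos=10, sold_online=2, counted=11),
    SkuState("bread", starting_stock=25, received=0, sold_pos=18, sold_online=3, counted=4),
]

for item in inventory:
    for alert in check(item):
        print(alert)
```

In this toy snapshot the razor SKU is short by 7 units (a possible theft or mis-scan signal) and bread is nearly out, while milk reconciles exactly.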

Growth in Human and Machine Collaboration

Edge AI boosts human-machine collaboration in retail by automating tasks and powering virtual assistants, predictive analytics, sensors, and robots. These AI tools free up store staff for tasks requiring human skills, offer personalized information, optimize product offerings, track inventory, detect theft, and provide real-time data analysis and recommendations to improve the customer experience.

New AI Use Cases for Safety

Edge AI can improve safety in retail environments in several ways. Edge AI-powered cameras and sensors can provide real-time surveillance, helping to detect potential safety hazards such as spills or broken equipment. Edge AI can also be used for crowd management, analyzing foot-traffic data to identify patterns and optimize store layouts. In emergencies, Edge AI can detect potential incidents such as fires or gas leaks and send real-time alerts to emergency responders. Edge AI can track inventory levels in real time and monitor people's movements, detecting falls or accidents and alerting store staff or emergency responders. Edge AI can also be used for temperature monitoring in areas such as refrigerated storage to maintain safe temperatures and prevent food-borne illnesses. Edge AI can provide real-time alerting to ensure quick and effective responses to safety incidents.
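For the refrigerated-storage case, a common pattern is to alert only when readings stay out of range for several consecutive samples, which filters out brief spikes such as a door being opened. This is a minimal sketch under our own assumptions: the 0 °C to 5 °C safe range and the three-reading window are illustrative values, not a food-safety recommendation or a Dell product setting.

```python
from collections import deque

class TemperatureMonitor:
    """Raise an alert when `window` consecutive readings fall outside the safe range."""

    def __init__(self, low_c: float = 0.0, high_c: float = 5.0, window: int = 3):
        # Hypothetical safe range and window size.
        self.low_c = low_c
        self.high_c = high_c
        self.recent = deque(maxlen=window)  # keeps only the last `window` out-of-range flags

    def add_reading(self, celsius: float) -> bool:
        """Record one sensor reading; return True if an alert should be sent now."""
        out_of_range = not (self.low_c <= celsius <= self.high_c)
        self.recent.append(out_of_range)
        return len(self.recent) == self.recent.maxlen and all(self.recent)

monitor = TemperatureMonitor()
readings = [3.1, 3.4, 7.2, 3.3, 6.0, 6.8, 7.5, 8.1]  # hypothetical sensor samples, one per minute
for minute, reading in enumerate(readings):
    if monitor.add_reading(reading):
        print(f"minute {minute}: ALERT, {reading} °C after 3 consecutive out-of-range readings")
```

The single spike at 7.2 °C does not trigger an alert; the sustained rise at the end does. Running this logic on an edge device means the alert does not wait on a round trip to a central data center.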

IT Focus on Cybersecurity at the Edge

The retail industry is rapidly adopting Edge AI technologies to improve the customer experience and enhance operational efficiency. However, this increased reliance on Edge AI also creates new cybersecurity risks, as cyber criminals seek to exploit vulnerabilities in these systems. To address these challenges, retailers are increasing their investment in cybersecurity for Edge AI technologies. Some of the key trends in Edge AI cybersecurity for retail include the implementation of advanced security protocols, such as encryption and multi-factor authentication, to protect sensitive customer data. Another trend is the use of artificial intelligence to detect and prevent cyber threats in real-time, by analyzing data from Edge AI devices and network traffic. As Edge AI continues to become more widespread in the retail industry, the need for robust cybersecurity measures will only continue to grow, making it essential for retailers to stay ahead of the latest trends and best practices.

Connecting Digital Twins to the Edge

Edge AI can be utilized to create digital twins for various aspects of the retail environment, leading to improvements in store operations and customer experience. Retailers can create digital twins of store layouts to optimize product placement and simulate customer movement. Digital twins can also be created for inventory systems, smart shelves, and automated systems to optimize stock levels, predict demand patterns, and improve task efficiency and accuracy. Predictive maintenance can be performed by creating digital twins of equipment and infrastructure to avoid downtime. Edge AI also enables real-time monitoring of retail environments by creating digital twins and analyzing data, such as foot traffic and temperature, to inform better decision-making. Virtual reality applications can also be enhanced by creating digital twins of the store, providing virtual try-ons and product demonstrations to customers.

Importance of Edge Computing

Edge computing is becoming a crucial technology as the amount of data generated from devices and sensors continues to grow. By decentralizing and distributing computing, edge computing offers several advantages, including real-time processing with lower latency, improved efficiency, enhanced security, cost-effectiveness, and increased scalability.

Benefits

Low Latency: Edge computing enables near real-time processing for applications like self-driving cars, industrial automation, and IoT devices.

Improved Efficiency: By processing data near the source, edge computing reduces data transmission, improving system efficiency and reducing data storage costs.

Improved Security: Edge computing protects sensitive data by processing it near the source and analyzing it before transmitting it to a centralized location, reducing the risk of data breaches.

Cost-effectiveness: Edge computing reduces costs by reducing the need for powerful servers and large data centers, and by reducing data transmission and storage costs.

Increased Scalability: Edge computing allows decentralized, distributed computing, making it easier to scale systems as needed without infrastructure constraints.

Dell Solutions for Retail AI Edge Computing

Dell Technologies collaborates with many business partners to offer market-leading software integrated with its latest PowerEdge XR4000 infrastructure for Retail AI Edge Computing. These comprehensive solutions are curated and validated through Dell Validated Designs to help retailers realize their AI objectives and applications. In this post, we will delve into three solutions in the following areas: managing and scaling AI at the edge, retail loss prevention, and retail analytics.

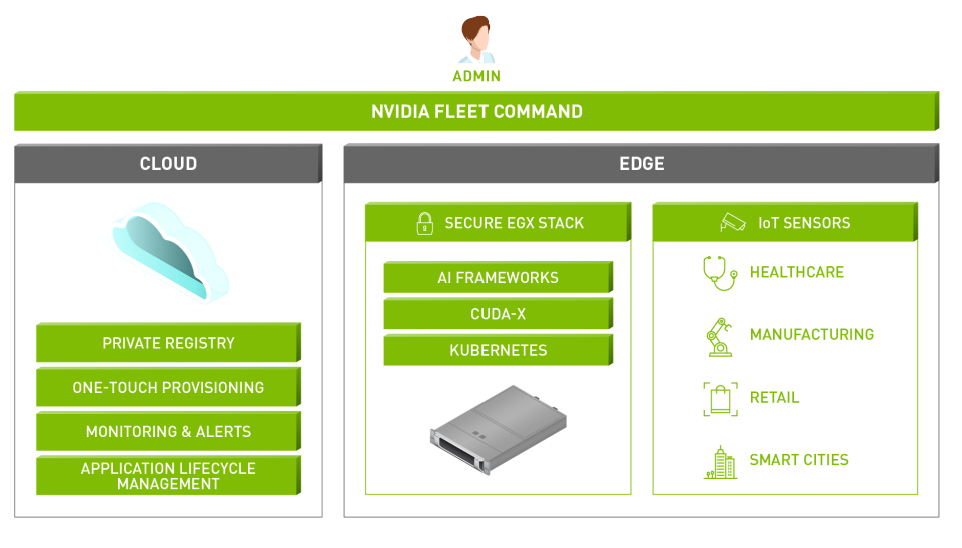

Nvidia Fleet Command – Manage Configurations and Scale AI at the Edge

Edge AI deployment introduces new opportunities with real-time insights at the decision point, reducing latency and costs compared with transferring data to a data center or the cloud. However, bringing Edge AI to retail pipelines can be challenging because of resource limitations. Fleet Command is a hybrid cloud platform for managing large-scale AI, including edge devices, through a single web-based control plane. With Fleet Command and Dell EMC PowerEdge servers, IT administrators can remotely control AI deployments securely and efficiently, streamlining deployment and ensuring resilient AI across the network.

Adaptive Compute

Dell Technologies' systems management enables fast response to business opportunities through intelligent systems that collaborate and act independently to align outcomes with business goals, freeing IT to focus on innovation. Fleet Command simplifies AI management through centralization and one-touch provisioning, reducing the learning curve and accelerating the path to AI.

Autonomous Management

Dell Technologies' systems management enables quick response to business opportunities with smart systems that act independently to align with business goals, freeing IT to focus on innovation. Fleet Command streamlines AI management with centralized, straightforward management and one-touch provisioning.

Proactive Resilience

Dell EMC PowerEdge servers prioritize security throughout the infrastructure and IT setup, detecting potential risks. Fleet Command adds protection with integrated security features that secure application and sensor data, including self-healing capabilities that minimize downtime and reduce maintenance expenses.

Figure 1. Fleet Command Platform UI

RetailAI Protect by Malong Technologies – Retail Loss Prevention

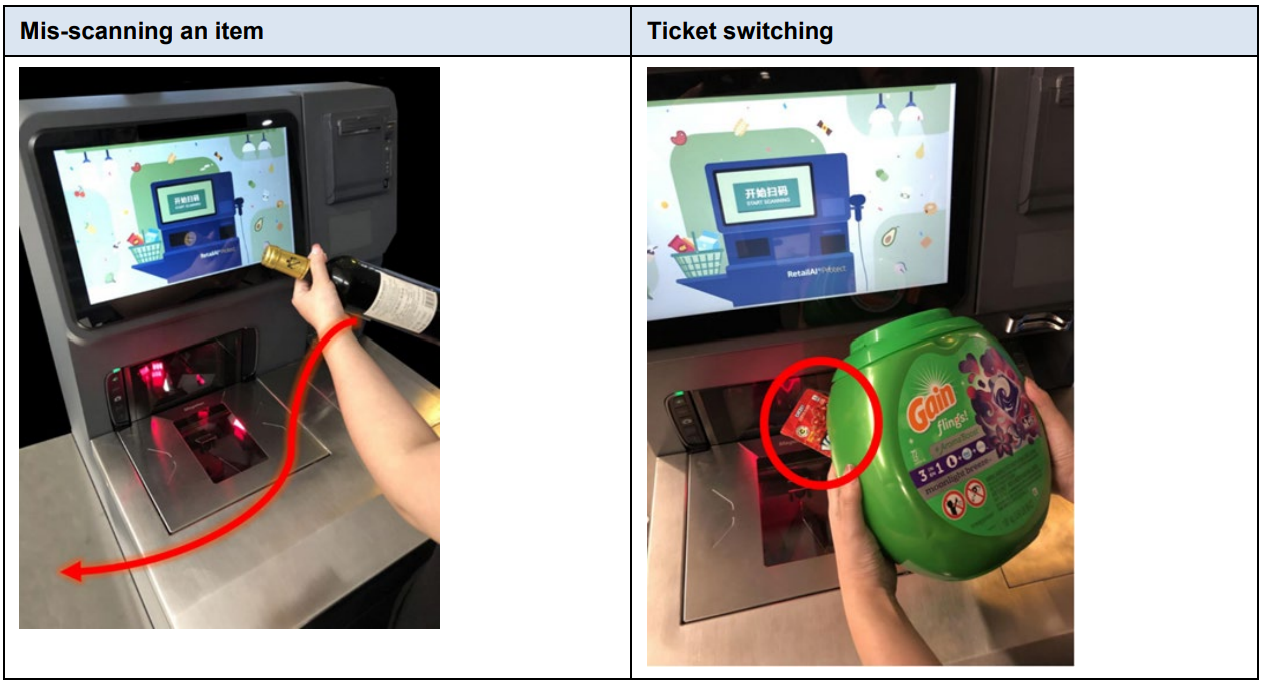

The Retail Loss Prevention solution runs on in-store Dell EMC PowerEdge servers and uses advanced product recognition technology from Malong RetailAI® Protect. The goal of the Retail Loss Prevention architecture is to prevent fraud while maintaining a seamless customer experience. It is an AI-powered system that can detect mis-scans and ticket switching in near real time, across a wide range of stock keeping units (SKUs). The solution components were chosen for their compatibility with, and ability to enhance, existing POS scanners. Malong RetailAI Protect addresses two types of retail loss: ticket switching and mis-scans. An overhead fixed-dome camera records an item with a suspect UPC barcode or an item that wasn't scanned. The video is processed on a GPU and fed into the Malong RetailAI model, which predicts the item's UPC. If the item wasn't scanned, the Malong RetailAI Protect system alerts the self-checkout (SCO) system after a set time interval. If the scanned UPC doesn't match the predicted UPC, the system immediately raises an alert so that a retail associate can take appropriate action.
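The two alert paths described above can be summarized as simple decision logic. The sketch below is our own illustration, not Malong's API: the `predicted_upc` field stands in for the output of the recognition model, the `ItemEvent` type and `evaluate` function are hypothetical, and the 5-second grace period for unscanned items is a made-up setting.

```python
from dataclasses import dataclass
from typing import Optional

UNSCANNED_GRACE_SECONDS = 5.0  # hypothetical "set time interval" before alerting

@dataclass
class ItemEvent:
    predicted_upc: str            # UPC the vision model predicts for the item on camera
    scanned_upc: Optional[str]    # UPC read by the POS scanner, or None if not scanned
    seconds_since_seen: float     # time since the camera first saw the item

def evaluate(event: ItemEvent) -> Optional[str]:
    """Return an alert type, or None if the transaction looks normal."""
    if event.scanned_upc is None:
        # Mis-scan path: wait out the grace period in case the customer is still scanning.
        if event.seconds_since_seen >= UNSCANNED_GRACE_SECONDS:
            return "mis-scan: alert self-checkout system"
        return None
    if event.scanned_upc != event.predicted_upc:
        # Ticket-switch path: barcode does not match what the camera saw, so alert at once.
        return "ticket switch: alert retail associate"
    return None

print(evaluate(ItemEvent("012345678905", None, 2.0)))            # still within grace period
print(evaluate(ItemEvent("012345678905", None, 6.5)))            # unscanned too long
print(evaluate(ItemEvent("012345678905", "098765432109", 0.5)))  # barcode mismatch
print(evaluate(ItemEvent("012345678905", "012345678905", 0.5)))  # correct scan
```

The asymmetry mirrors the description: a missing scan may just be slow, so it waits; a mismatched barcode is already conclusive, so it alerts immediately.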

Figure 2. Examples of mis-scanning and ticket switching

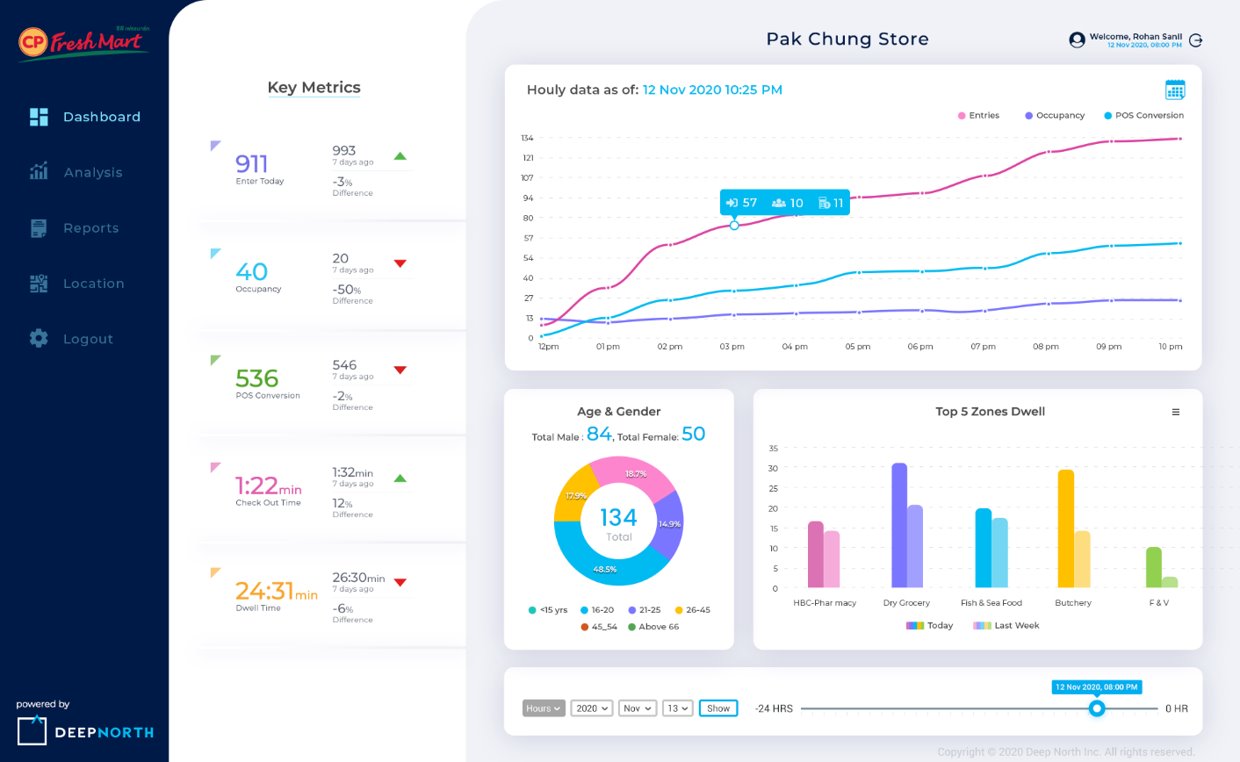

Deep North Video Analytics – Retail Analytics

Deep North Video Analytics is a leading-edge platform that performs real-time and batch processing of images captured by cameras, which are typically mounted on the ceiling of a retail environment. The Deep North platform ingests the images and feeds them directly into the memory of GPU cards installed in a Dell PowerEdge or Dell VxRail node. Each camera stream is analyzed frame by frame, and the Deep North inferencing algorithms produce specific metadata. This metadata is then sent to the Deep North Analytics Cloud and converted into a dashboard that provides valuable information to the store owner.
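To make the metadata-to-dashboard step concrete, here is a minimal sketch of how per-frame detections might be rolled up into dashboard metrics. The metadata fields (`t`, `camera_id`, `zone`, `track_id`) and the visitor-count and dwell-time metrics are hypothetical; Deep North's actual metadata schema is not described in this post.

```python
from collections import defaultdict

# Hypothetical per-frame metadata from the inference step: one record per detected person.
frames = [
    {"t": 0.0, "camera_id": "cam-1", "zone": "entrance", "track_id": 1},
    {"t": 0.5, "camera_id": "cam-1", "zone": "entrance", "track_id": 2},
    {"t": 1.0, "camera_id": "cam-2", "zone": "produce", "track_id": 1},
    {"t": 4.0, "camera_id": "cam-2", "zone": "produce", "track_id": 1},
    {"t": 4.5, "camera_id": "cam-2", "zone": "produce", "track_id": 2},
]

def summarize(records):
    """Aggregate detections into unique visitors and average dwell time (seconds) per zone."""
    seen = defaultdict(dict)  # zone -> track_id -> (first_t, last_t)
    for r in records:
        first, last = seen[r["zone"]].get(r["track_id"], (r["t"], r["t"]))
        seen[r["zone"]][r["track_id"]] = (min(first, r["t"]), max(last, r["t"]))
    dashboard = {}
    for zone, tracks in seen.items():
        dwell = [last - first for first, last in tracks.values()]
        dashboard[zone] = {"visitors": len(tracks), "avg_dwell_s": sum(dwell) / len(dwell)}
    return dashboard

print(summarize(frames))
```

Sending only compact metadata like this, rather than raw video, is what keeps the cloud side of such an architecture lightweight.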

Figure 3. Visualizations in a Deep North Analytics Dashboard

Dell is your partner in your AI Edge for Retail Journey

As AI continues to evolve, keeping up with the design, development, deployment, and management of AI solutions can be a challenge for organizations lacking AI expertise. That's where Dell Technologies comes in, as your trusted partner in the AI journey. With a decade of experience as a leader in advanced computing, Dell offers industry-leading products, solutions, and expertise to empower your organization. Our specialized team of AI, HPC, and Data Analytics experts are dedicated to helping you stay ahead of the curve, with a focus on Edge AI for Retail. Our experts can assist you in leveraging Edge AI to drive business outcomes, improve customer experiences, and increase operational efficiency. Trust Dell to help you stay at the forefront of AI innovation.

Customer Solution Center

The Customer Solution Center is a dedicated resource designed to provide customers with a comprehensive range of information, recommendations, and demonstrations of technologies and platforms that support AI. Our experienced staff are well-versed in the challenges faced by customers and offer valuable insight and guidance to help organizations leverage AI to its full potential.

AI and HPC Innovation Lab

The AI and HPC Innovation Lab is a state-of-the-art infrastructure staffed by an exceptional team of computer scientists, engineers, and Ph.D.-level experts. These specialists work in close collaboration with customers and the wider AI and HPC community to advance the field through early access to emerging technologies, performance optimization of clusters, benchmarking of applications, best practice development, and publication of industry thought leadership. By engaging with the Lab, organizations have direct access to Dell's leading experts, enabling them to tailor a customized solution for their unique AI or HPC needs.

Conclusion

Edge computing has become a crucial technology in the retail industry, allowing for real-time data processing and analysis close to the information source. This leads to improved customer experiences, optimized store operations, and new technology implementation, such as self-checkout and augmented reality systems. As data generation continues to grow, edge computing offers low latency, improved efficiency, enhanced security, cost-effectiveness, and increased scalability. Edge AI is at the forefront of retail trends, offering high ROI in the form of intelligent automation and improved efficiency, human-machine collaboration, new AI use cases for safety, a focus on cybersecurity at the edge, and the creation of digital twins for improved intelligent decision making.

Accelerate Your Journey to AI Success with MLOps and AutoML

Tue, 07 Feb 2023 22:05:15 -0000

Artificial Intelligence (AI) and Machine Learning (ML) help organizations make intelligent, data-driven business decisions and are critical components that help businesses thrive in a digitally transforming world. While total annual corporate AI investment has increased substantially since 2019, many organizations still face barriers to adopting AI successfully. Organizations move along the AI and analytics maturity curve at different rates. Automation methodologies such as Machine Learning Operations (MLOps) and Automated Machine Learning (AutoML) can serve as the backbone for tools and processes that allow organizations to experiment with and deploy models with the speed and scale of a highly efficient, AI-first enterprise. MLOps and AutoML address distinct and important components of AI/ML workstreams. This blog introduces how software platforms like cnvrg.io and H2O Driverless AI make it easy for organizations to adopt these automation methodologies in their AI environment.

This blog is intended to serve as a reference for Dell’s position on MLOps solutions that help organizations scale their AI and ML practices. MLOps and AutoML provide a powerful combination that brings business value out of AI projects more quickly, in a secure and scalable way. Dell Validated Designs provide the reference architectures that combine the software and Dell hardware to bring these solutions to life.

Importance of automation methodologies

Deploying models to a production environment is an important part of getting the most business value from an AI/ML project. Getting a project into production involves numerous tasks, from exploratory data analysis to model training and tuning, and successfully deployed models require additional tasks and procedures, such as runtime model management, model observability and retraining, and inference reliability and cost optimization. The lifecycle of an AI/ML project involves the disciplines of data engineering, data science, and DevOps engineering, with differing skill sets across these teams. Given all of these steps for just a single AI/ML project, it is easy to see the challenges organizations face when they want to rapidly grow the number of projects across business units. Organizations that prioritize ROI, consistency, reusability, traceability, reliability, and automation in their AI/ML projects, through the procedures and tools described in this blog, are set up to scale AI and meet their business's demand for it.

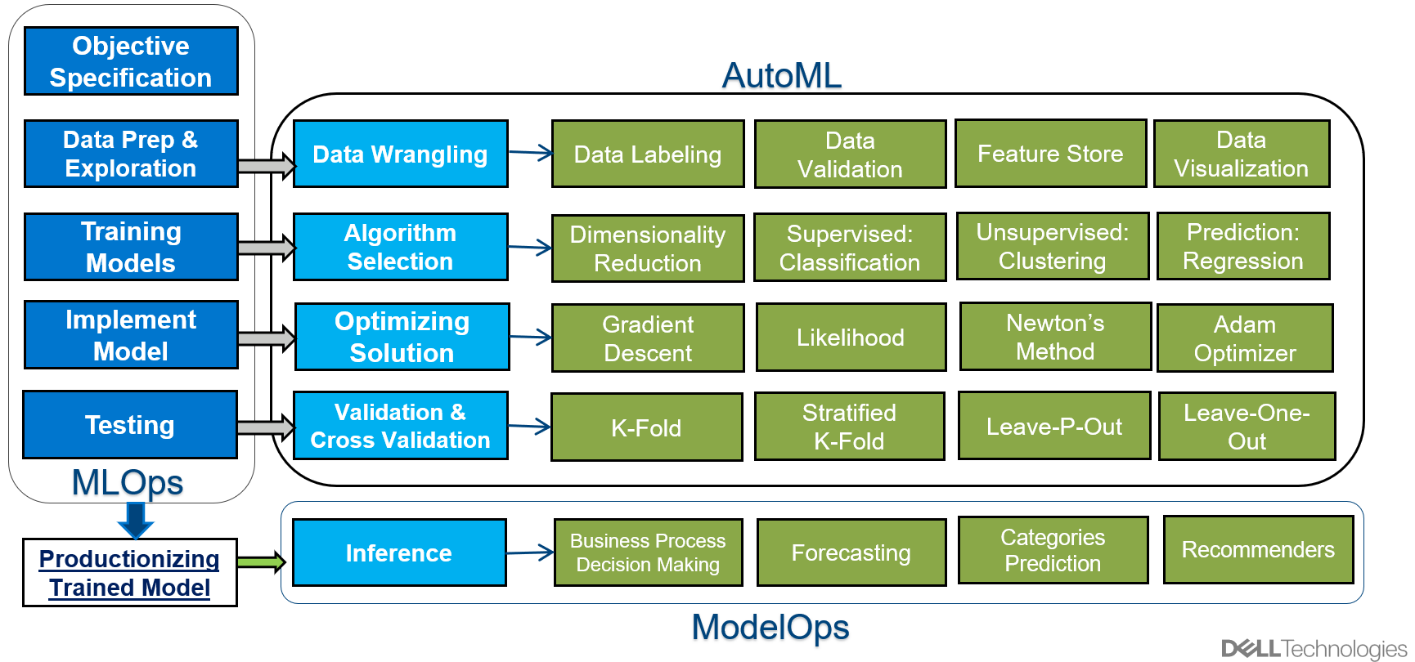

Components of an AI/ML project

A typical AI/ML project has many distinct tasks, which can flow in a cascading yet circular manner. While tasks may depend on the completion of previous tasks, the continuous-learning nature of ML projects creates an iterative feedback loop throughout the project.

The following list describes steps that a typical AI/ML project will run through.

- Objective Specification

- Exploratory Data Analysis (EDA)

- Model Training

- Model Implementation

- Model Optimization and Cross Validation

- Testing

- Model Deployment

- Inference

Figure 1. Distinct Tasks in an AI/ML Project

Each task serves an important role in the project, and the tasks can be grouped at a high level into defining the problem statement, the data and modeling work, and productionizing the final model for inference. Because these groups of tasks have different objectives, different automation methodologies have been developed to streamline and scale projects. Automated Machine Learning (AutoML) was developed to make the data and modeling work as efficient as possible; it is a set of proven and optimized solutions in data modeling. The practice of ModelOps was developed to make model deployment faster and more scalable. While AutoML and ModelOps automate specific tasks within a project, MLOps serves as an umbrella automation methodology that contains guiding principles for all AI/ML projects.

MLOps

The key to navigating the challenges of inconsistent data, labor constraints, model complexity, and model deployment, so that organizations can operate efficiently and maximize the business value of AI, is the adoption of MLOps. At a high level, MLOps is the practice of applying the software engineering principles of Continuous Integration and Continuous Delivery (CI/CD) to machine learning projects. It is a set of practices that provides a framework for consistency and reusability, leading to quicker model deployments and scalability.

MLOps tools are the software based applications that help organizations put the MLOps principles into practice in an automated fashion.

The complexities stemming from ever-changing business environments, which affect underlying data, inference needs, and so on, mean that MLOps in AI/ML projects needs quicker iterations than typical DevOps software projects.
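As a rough illustration of applying CI/CD practices to ML, a pipeline can run an automated quality gate before a newly trained model replaces the one in production, much as a software build must pass its tests before it ships. This is a minimal sketch under our own assumptions: the `ModelReport` fields, the metric thresholds, and the promotion rule are hypothetical and not taken from any specific MLOps tool.

```python
from dataclasses import dataclass

@dataclass
class ModelReport:
    version: str
    accuracy: float          # accuracy on a held-out validation set
    p95_latency_ms: float    # 95th-percentile inference latency

def promotion_gate(candidate: ModelReport, production: ModelReport,
                   min_gain: float = 0.01, max_latency_ms: float = 50.0) -> tuple[bool, str]:
    """Decide whether a candidate model may be deployed in place of the production model."""
    if candidate.p95_latency_ms > max_latency_ms:
        return False, (f"{candidate.version} rejected: latency {candidate.p95_latency_ms} ms "
                       f"exceeds {max_latency_ms} ms")
    if candidate.accuracy < production.accuracy + min_gain:
        return False, f"{candidate.version} rejected: accuracy gain below {min_gain:.2f}"
    return True, f"{candidate.version} promoted to replace {production.version}"

# Hypothetical metrics from an automated evaluation stage.
prod = ModelReport("v7", accuracy=0.910, p95_latency_ms=32.0)
for cand in (ModelReport("v8", 0.915, 30.0), ModelReport("v9", 0.930, 61.0),
             ModelReport("v10", 0.928, 35.0)):
    ok, reason = promotion_gate(cand, prod)
    print(ok, reason)
```

Because the gate is code, it runs the same way on every retraining cycle, which supports the consistency, reusability, and traceability goals described above.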

AutoML

At the heart of an AI/ML project is the quest for business insights, and AutoML allows the tasks that lead to these insights to be done in a scalable and efficient manner. AutoML is the process of automating exploratory data analysis (EDA), algorithm selection, and model training and optimization.

AutoML tools are low-code or no-code platforms that begin with data ingestion. Summary statistics, data visualizations, outlier detection, feature interaction, and other tasks associated with EDA are then completed automatically. For model training, AutoML tools can detect which types of algorithms are appropriate for the data and business question and then test each model. AutoML also iterates over hundreds of versions of the models, tweaking the parameters to find the optimal settings. After cross-validation and further testing of the model, a model package is created that includes the data transformations and scoring pipelines for easy deployment into a production environment.
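The core AutoML loop described above, trying several candidate algorithms and settings and keeping the one with the best cross-validated score, can be sketched in plain Python. This is a deliberately tiny illustration under our own assumptions: the toy dataset, the two candidate model families (k-nearest neighbors with several values of k, and a nearest-centroid classifier), and 3-fold cross-validation are hypothetical choices. Real AutoML tools search far larger spaces and also automate EDA and feature engineering.

```python
from statistics import mean

# Hypothetical toy dataset: (feature_1, feature_2) -> label
DATA = [((1.0, 1.2), 0), ((0.8, 0.9), 0), ((1.1, 0.7), 0), ((0.9, 1.1), 0), ((1.3, 1.0), 0),
        ((3.0, 3.1), 1), ((2.8, 3.3), 1), ((3.2, 2.9), 1), ((2.9, 2.7), 1), ((3.1, 3.2), 1),
        ((2.0, 2.1), 1), ((1.8, 1.9), 0)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def knn_model(k):
    """Return a fit function for k-nearest neighbors with majority voting."""
    def fit(train):
        def predict(x):
            nearest = sorted(train, key=lambda row: sq_dist(row[0], x))[:k]
            labels = [label for _, label in nearest]
            return max(set(labels), key=labels.count)
        return predict
    return fit

def nearest_centroid(train):
    """Fit function: predict the label whose training-set centroid is closest."""
    centroids = {}
    for label in {lbl for _, lbl in train}:
        points = [x for x, lbl in train if lbl == label]
        centroids[label] = tuple(mean(dim) for dim in zip(*points))
    return lambda x: min(centroids, key=lambda lbl: sq_dist(centroids[lbl], x))

def cross_val_accuracy(fit, data, folds=3):
    """k-fold cross-validation: train on all folds but one, score on the held-out fold."""
    scores = []
    for i in range(folds):
        test = data[i::folds]
        train = [row for j, row in enumerate(data) if j % folds != i]
        predict = fit(train)
        scores.append(mean(1.0 if predict(x) == y else 0.0 for x, y in test))
    return mean(scores)

CANDIDATES = {"knn_k1": knn_model(1), "knn_k3": knn_model(3), "knn_k5": knn_model(5),
              "nearest_centroid": nearest_centroid}

leaderboard = sorted(((cross_val_accuracy(fit, DATA), name) for name, fit in CANDIDATES.items()),
                     reverse=True)
for score, name in leaderboard:
    print(f"{name}: {score:.3f}")
print("selected:", leaderboard[0][1])
```

Every candidate is scored the same way on held-out folds, so the leaderboard compares like with like; that uniform evaluation is what lets AutoML tools try many models without hand-tuning each one.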

ModelOps

Once a trained model is ready for deployment in a production environment, a whole new set of tasks and processes begins. ModelOps is the set of practices and processes that support fast and scalable deployment of models to production. Model performance degrades over time, for reasons such as changes in underlying trends in the data or the introduction of new data, so models need to be monitored closely and updated to maintain peak business value throughout their lifecycle.

Model monitoring and management are key components of ModelOps, but there are many other aspects to consider as part of a ModelOps strategy. Managing infrastructure for proper resource allocation (for example, how and when to include accelerators), automatic model retraining in near real time, integration with advanced network security solutions, versioning, and migration are other elements to consider when scaling an AI environment.
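The monitoring-and-retraining loop described above can be sketched as a rolling accuracy check on labeled feedback from production. This is a minimal sketch under our own assumptions: the `DriftMonitor` class, its default window of 50 predictions and 0.85 accuracy floor, and the smaller values used in the example are hypothetical, and the retraining trigger is only a flag rather than a call into any specific ModelOps tool.

```python
from collections import deque

class DriftMonitor:
    """Track rolling accuracy of a deployed model and flag when it degrades."""

    def __init__(self, window: int = 50, min_accuracy: float = 0.85):
        self.outcomes = deque(maxlen=window)  # 1 if a prediction was correct, else 0
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual) -> None:
        self.outcomes.append(1 if prediction == actual else 0)

    @property
    def rolling_accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 1.0

    def needs_retraining(self) -> bool:
        # Only judge once the window is full, so a few early errors don't trigger retraining.
        return (len(self.outcomes) == self.outcomes.maxlen
                and self.rolling_accuracy < self.min_accuracy)

monitor = DriftMonitor(window=10, min_accuracy=0.8)
# Hypothetical feedback: the model is right at first, then the data shifts and errors increase.
feedback = [("a", "a")] * 10 + [("a", "b")] * 4
for step, (pred, actual) in enumerate(feedback):
    monitor.record(pred, actual)
    if monitor.needs_retraining():
        print(f"step {step}: rolling accuracy {monitor.rolling_accuracy:.1f}, schedule retraining")
        break
```

A fixed-size window makes the check respond to recent behavior rather than the model's lifetime average, which is what allows degradation from shifting data to surface quickly.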

Dell solution for automation methodologies

Dell offers solutions that bring together infrastructure and software partnerships to capitalize on the benefits of AutoML, MLOps, and ModelOps. Through jointly engineered and tested solutions with Dell Validated Designs, organizations can provide their AI/ML teams with predictable and configurable ML environments that align with their operational AI goals.

Dell has partnered with cnvrg.io and H2O to provide the software platforms to pair with the compute, storage, and networking infrastructure from Dell to complete the AI/ML solutions.

MLOps – cnvrg.io

cnvrg.io is a machine learning platform built by data scientists that makes implementation of MLOps and the process of taking models from experimentation to deployment efficient and scalable. cnvrg.io provides the platform to manage all aspects of the ML life cycle, from data pipelines, to experimentation, to model deployment. It is a Kubernetes-based application that allows users to work in any compute environment, whether it be in the cloud or on-premises and have access to any programming language.

The management layer of cnvrg.io is powered by a control plane that leverages Kubernetes to manage the containers and pods needed to orchestrate a project's tasks. Users can view the state, health, and resource statistics of the environment and of each task on the cnvrg.io dashboard.

cnvrg.io makes it easy to access the algorithms and data components, whether they are pre-trained models or models built from scratch, with Git interaction through the AI Library. Data pre-processing logic or any customized models can be stored and implemented for tasks across any project by using the drag-and-drop interface for end-to-end management called cnvrg.io Pipelines.

The orchestration and scheduling features use a Kubernetes-based meta-scheduler, which makes jobs portable across environments and can scale resources up or down on demand. cnvrg.io facilitates job scheduling across clusters in the cloud and on-premises to navigate resource contention and bottlenecks. The ability to intelligently deploy and manage compute resources, from CPUs and GPUs to other specialized AI accelerators, and to assign them to the tasks where they can best be used, is important to achieving operational goals in AI.

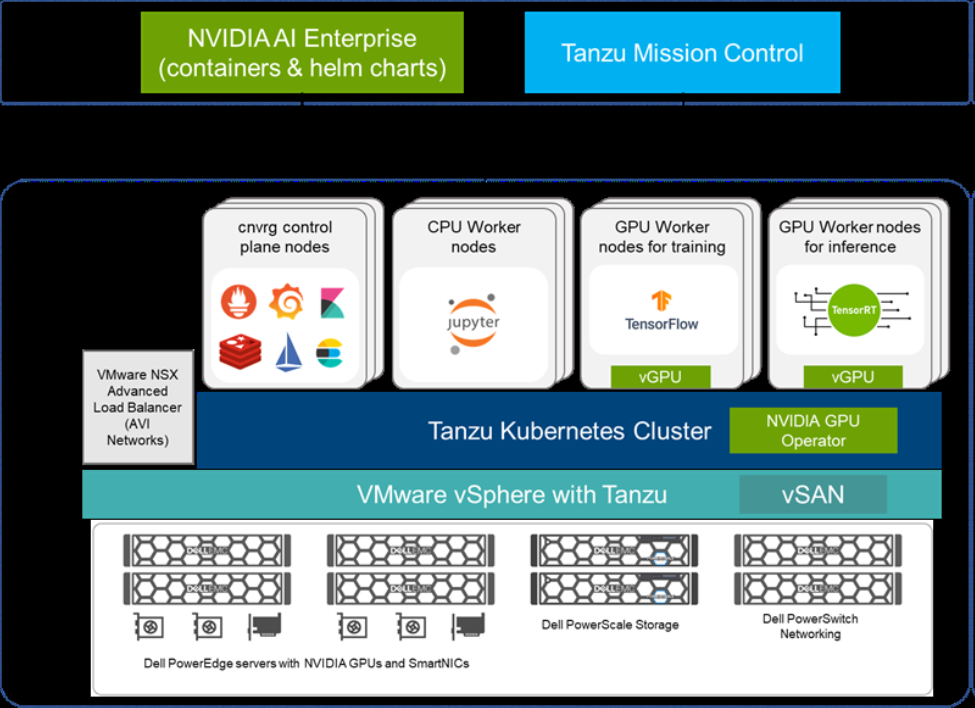

cnvrg.io solution architecture

The cnvrg.io software can be installed directly in your data center, or it can be accessed through the cnvrg.io Metacloud offering. Both versions allow users to configure the organization’s own infrastructure into compute templates. For on-premises installations, cnvrg.io can be deployed on various Kubernetes infrastructures, including bare metal, but the Dell Validated Design for AI uses VMware and NVIDIA to provide a powerful combination of composability and performance.

Dell PowerEdge servers, which can be equipped with NVIDIA GPUs, provide the compute resources required to run anything from machine learning algorithms in packages like scikit-learn to deep learning algorithms in frameworks like TensorFlow and PyTorch. For storage, Dell PowerScale all-flash, scale-out NAS storage delivers the concurrent performance needed to support data-heavy neural networks. VMware vSphere with Tanzu provides the Tanzu Kubernetes clusters, which are managed by Tanzu Mission Control. The servers running VMware vSAN provide a storage repository for the VMs and pods. PowerSwitch network switches, in a 25 GbE-based or 100 GbE-based design, allow for neural network training jobs larger than can run on a single node. Finally, NVIDIA AI Enterprise provides software support for GPUs, such as fractionalizing GPU resources with the Multi-Instance GPU (MIG) capability.

Dell provides recommendations for sizing of the different worker node configurations, such as the number of CPUs/GPUs and amount of memory, that users can deploy for the various types of algorithms different AI/ML projects may use.

Figure 2. Dell/cnvrg.io Solution Architecture

For more information, see the Design Guide—Optimize Machine Learning Through MLOps with Dell Technologies cnvrg.io.

AutoML – H2O.ai Driverless AI

Dell has partnered with H2O and its flagship product, Driverless AI, to give organizations a comprehensive AutoML platform that empowers both data scientists and non-technical folks to unlock insights efficiently and effectively. Driverless AI has several features that help optimize the model development portion of an AI/ML workflow, from data ingestion to model selection, as organizations look to deliver faster, higher-quality insights to business stakeholders. It is a true no-code solution with a drag-and-drop interface that opens the door for citizen data scientists.

Starting with data ingestion, Driverless AI can connect to datasets in various formats and file systems, no matter where the data resides, from on-premises storage to a cloud provider. Once the data is ingested, Driverless AI runs exploratory data analysis (EDA), providing data visualization, outlier detection, and summary statistics. The tool also automatically suggests data transformations based on the shape of your data and performs a comprehensive feature engineering process that searches for high-value predictors of the target variable. A summary of the automatically created features is displayed in an easy-to-digest dashboard.
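To make the EDA and feature-screening steps concrete, here is a minimal, standard-library sketch of summary statistics, interquartile-range (IQR) outlier detection, and ranking candidate predictors by absolute Pearson correlation with the target. These are generic techniques swapped in for illustration; they are not Driverless AI's internal algorithms, and the function names and sample data are hypothetical.

```python
import math
import statistics
from typing import Dict, List, Tuple


def summarize(values: List[float]) -> Dict[str, float]:
    """Basic summary statistics for one numeric column (needs at least 2 values)."""
    return {
        "count": len(values),
        "mean": statistics.fmean(values),
        "stdev": statistics.stdev(values),
        "min": min(values),
        "max": max(values),
    }


def iqr_outliers(values: List[float], k: float = 1.5) -> List[float]:
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation; returns 0.0 when either column is constant."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def rank_features(features: Dict[str, List[float]], target: List[float]) -> List[Tuple[str, float]]:
    """Rank candidate predictors by |correlation| with the target, strongest first."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical toy data.
    print(iqr_outliers([10, 11, 12, 11, 10, 12, 11, 95]))
    target = [1, 2, 3, 4, 5, 6, 7, 8]
    features = {
        "linear": [2, 4, 6, 8, 10, 12, 14, 16],
        "noise": [5, 3, 5, 3, 5, 3, 5, 3],
        "constant": [1] * 8,
    }
    print(rank_features(features, target))
```

Correlation only captures linear relationships with a single column, which is why real AutoML feature engineering also generates and scores transformed and combined features rather than stopping at a screen like this.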

For model development, Driverless AI automatically trains multiple built-in models, with numerous iterations of hyperparameter tuning. The tool applies a genetic algorithm to arrive at a ‘survival of the fittest’ final ensemble model. Users can also set priorities for accuracy, time, and interpretability. If the user needs a model that will be presented to a less technical business audience, for example, the tool focuses on algorithms with more explainable features rather than black-box models that may achieve better accuracy at the cost of longer training time. While Driverless AI can be run as a no-code solution, the bring-your-own-recipe feature lets more seasoned data scientists bring custom data transformations and algorithms into the tool as part of the experimentation process.
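A genetic algorithm keeps the best-scoring candidates in each generation and breeds new candidates from them. The toy sketch below shows that "survival of the fittest" loop over two hypothetical hyperparameters. It is a generic genetic algorithm, not Driverless AI's implementation, and it omits the ensembling step: `toy_fitness` is a made-up stand-in for validation accuracy, and `interpretability_weight` is a hypothetical knob that loosely mirrors the accuracy/interpretability trade-off described above by penalizing deeper models.

```python
import random
from typing import Dict, List, Tuple

Genome = Dict[str, int]

# Hypothetical search space: integer ranges for two made-up hyperparameters.
SEARCH_SPACE: Dict[str, Tuple[int, int]] = {"max_depth": (1, 12), "n_trees": (10, 500)}


def toy_fitness(g: Genome, interpretability_weight: float) -> float:
    """Made-up stand-in for validation accuracy, minus a complexity penalty."""
    accuracy = 1.0 - ((g["max_depth"] - 6) / 12) ** 2 - ((g["n_trees"] - 300) / 500) ** 2
    complexity = g["max_depth"] / 12  # deeper models treated as less explainable
    return accuracy - interpretability_weight * complexity


def random_genome(rng: random.Random) -> Genome:
    return {k: rng.randint(lo, hi) for k, (lo, hi) in SEARCH_SPACE.items()}


def crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    # Uniform crossover: each gene comes from one parent at random.
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in SEARCH_SPACE}


def mutate(g: Genome, rng: random.Random, rate: float = 0.2) -> Genome:
    # Occasionally resample a gene so the search can escape local optima.
    child = dict(g)
    for k, (lo, hi) in SEARCH_SPACE.items():
        if rng.random() < rate:
            child[k] = rng.randint(lo, hi)
    return child


def evolve(generations: int = 30, pop_size: int = 20, elite: int = 4,
           interpretability_weight: float = 0.0,
           seed: int = 0) -> Tuple[Genome, float, List[float]]:
    """Run the GA; returns the best genome, its score, and the best score per generation."""
    rng = random.Random(seed)

    def score(g: Genome) -> float:
        return toy_fitness(g, interpretability_weight)

    population = [random_genome(rng) for _ in range(pop_size)]
    history: List[float] = []
    for _ in range(generations):
        population.sort(key=score, reverse=True)
        history.append(score(population[0]))
        survivors = population[:elite]  # "survival of the fittest" (elitism)
        children = []
        while len(children) < pop_size - elite:
            a, b = rng.sample(survivors, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = survivors + children
    best = max(population, key=score)
    return best, score(best), history


if __name__ == "__main__":
    print(evolve(seed=1)[:2])
    print(evolve(seed=1, interpretability_weight=2.0)[:2])
```

Because the top candidates always survive unchanged, the best score can never decrease from one generation to the next; mutation is what keeps the population from collapsing onto a single early winner.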

After a champion model is crowned, the final output of Driverless AI includes a scoring pipeline that makes it easy to deploy the model to a production environment for inference. The scoring pipeline can be exported as a Python pipeline or as a MOJO (Model Object, Optimized) and includes components such as data transformations, scripts, and the runtime.
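The key property of a scoring pipeline is that inference reuses exactly the transformations fitted during training. The sketch below shows that idea with a hypothetical `ScoringPipeline` class that bundles standardization parameters with a logistic model and round-trips through JSON. It is not the Driverless AI Python scoring pipeline or the MOJO format; the class, its fields, and the example values are assumptions for illustration only.

```python
import json
import math
from typing import Dict


class ScoringPipeline:
    """Hypothetical bundle of fitted transformations plus a logistic model."""

    def __init__(self, means: Dict[str, float], stds: Dict[str, float],
                 weights: Dict[str, float], bias: float) -> None:
        self.means, self.stds, self.weights, self.bias = means, stds, weights, bias

    def transform(self, row: Dict[str, float]) -> Dict[str, float]:
        # Apply the same standardization that was learned at training time.
        return {k: (row[k] - self.means[k]) / self.stds[k] for k in self.weights}

    def predict_proba(self, row: Dict[str, float]) -> float:
        # Logistic model applied to the transformed features.
        z = self.bias + sum(self.weights[k] * v for k, v in self.transform(row).items())
        return 1.0 / (1.0 + math.exp(-z))

    def to_json(self) -> str:
        # Serialize transformation parameters and model weights together.
        return json.dumps({"means": self.means, "stds": self.stds,
                           "weights": self.weights, "bias": self.bias})

    @classmethod
    def from_json(cls, payload: str) -> "ScoringPipeline":
        d = json.loads(payload)
        return cls(d["means"], d["stds"], d["weights"], d["bias"])


if __name__ == "__main__":
    pipe = ScoringPipeline({"age": 40.0}, {"age": 10.0}, {"age": 1.0}, 0.0)
    restored = ScoringPipeline.from_json(pipe.to_json())  # e.g. loaded in production
    print(restored.predict_proba({"age": 50.0}))
```

Shipping the transformation parameters alongside the weights avoids training/serving skew, where production code re-implements preprocessing slightly differently from the training environment.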

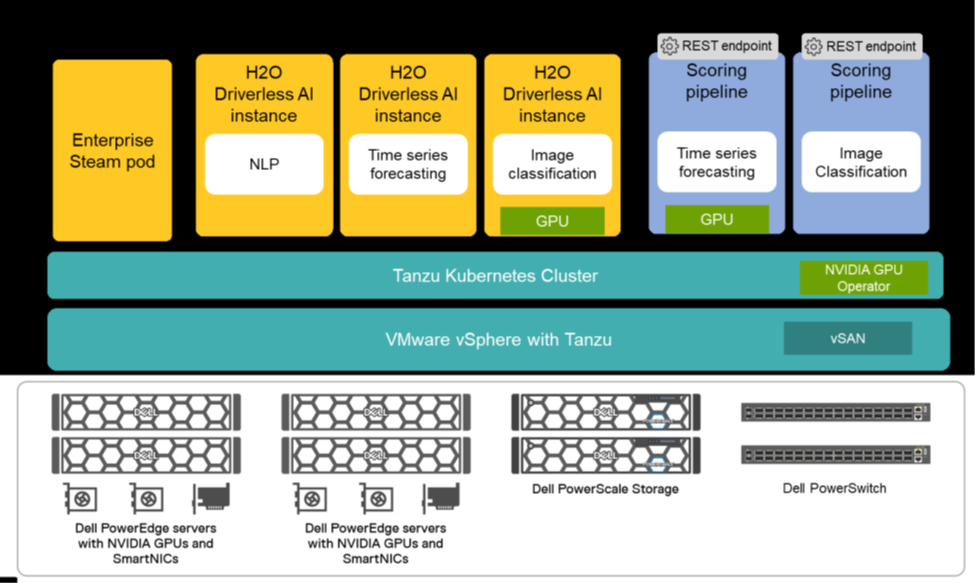

Driverless AI solution architecture

The H2O Driverless AI platform can be deployed either in Kubernetes as pods or as a stand-alone container. The Dell Validated Design for Driverless AI highlights this flexibility: VMware vSphere with Tanzu provides the Kubernetes layer and works with H2O Enterprise Steam to provide resource control and monitoring, access control, and security out of the box.

Dell PowerEdge servers, with optional NVIDIA GPUs, and NVIDIA AI Enterprise make it easy to build containers for different sets of users. For use cases that are heavy on EDA or that employ traditional machine learning algorithms, CPU-only Driverless AI containers may be appropriate, while GPU-enabled containers are best suited for training the deep learning models usually associated with natural language processing and computer vision. Dell PowerScale storage and Dell PowerSwitch network switches provide concurrency at scale to train the data-intensive algorithms found within Driverless AI.

Dell provides deployment sizing recommendations tailored to an organization’s requirements and capabilities. For organizations starting their AI journey, a deployment with 5 Driverless AI instances, 40 CPU cores, 3.2 TB of memory, and 5 TB of storage is recommended for workloads and projects that perform classic machine learning or statistical modeling. For a mainstream deployment with more users and heavier workloads that benefit from GPU acceleration, 10 Driverless AI instances with 100 CPU cores, 5 NVIDIA A100 GPUs, 8 TB of memory, and 10 TB of storage are recommended. Finally, for organizations that want to deploy AI models at scale, a high-performance deployment with 20 Driverless AI instances, 200 CPU cores, 10 A100 GPUs, 16 TB of memory, and 20 TB of storage provides the infrastructure for a full-service AI environment.
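Dividing each recommended tier by its number of Driverless AI instances gives a rough per-instance budget. The arithmetic below assumes resources are spread evenly across instances and that 1 TB = 1,000 GB; neither assumption is stated in the recommendations, and the `SizingTier` class and tier labels are hypothetical names for the three deployments described above.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SizingTier:
    """One recommended Driverless AI deployment tier, with the figures from the text."""
    name: str
    instances: int
    cpu_cores: int
    a100_gpus: int
    memory_tb: float
    storage_tb: float

    def per_instance(self) -> Dict[str, float]:
        """Even split across instances (an assumption), with 1 TB = 1,000 GB."""
        n = self.instances
        return {
            "cpu_cores": self.cpu_cores / n,
            "a100_gpus": self.a100_gpus / n,
            "memory_gb": self.memory_tb * 1000 / n,
            "storage_tb": self.storage_tb / n,
        }


TIERS: List[SizingTier] = [
    SizingTier("starter", instances=5, cpu_cores=40, a100_gpus=0, memory_tb=3.2, storage_tb=5),
    SizingTier("mainstream", instances=10, cpu_cores=100, a100_gpus=5, memory_tb=8, storage_tb=10),
    SizingTier("high performance", instances=20, cpu_cores=200, a100_gpus=10, memory_tb=16, storage_tb=20),
]

if __name__ == "__main__":
    for tier in TIERS:
        print(tier.name, tier.per_instance())
```

Under those assumptions, the starter tier works out to 8 cores, 640 GB of memory, and 1 TB of storage per instance, and both GPU tiers to 10 cores, half an A100, 800 GB of memory, and 1 TB of storage per instance. The recommendations themselves do not say how GPUs are assigned to individual instances.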

Figure 3. Dell/H2O Driverless AI Solution Architecture

For more information, see Automate Machine Learning with H2O Driverless AI.

Dell is your partner in your AI Journey

AI is constantly evolving, and many organizations do not have the AI expertise to keep up with designing, developing, deploying, and managing solution stacks at a competitive pace. Dell Technologies is your trusted partner and offers solutions that empower your organization in its AI journey. For more than a decade, Dell has been a proven leader in the advanced computing space, with industry-leading products, solutions, and expertise. We have a specialized team of AI, High Performance Computing (HPC), and Data Analytics experts dedicated to helping you keep pace on your AI journey.

AI Professional Services

Regardless of your AI needs, you can rest assured that your deployments will be backed by Dell’s world-class technology services. Our expert consulting services for AI help you plan, implement, and optimize AI solutions, and more than 35,000 services experts can meet you wherever you are on your AI journey.

- Consulting Services

- Deployment Services

- Support Services

- Payment Solutions

- Managed Services

- Residency Services

Customer Solution Center

The Customer Solution Center is a team of experienced professionals equipped to provide expert advice, recommendations, and demonstrations of the cutting-edge technologies and platforms essential for successful AI implementation. Our staff maintains a thorough understanding of the diverse needs and challenges of our customers and offers valuable insights garnered from extensive engagement with a broad range of clients. By leveraging our extensive knowledge and expertise, you gain a competitive advantage in your pursuit of AI solutions.

AI and HPC Innovation Lab

The AI and HPC Innovation Lab is a premier infrastructure facility staffed by a highly skilled team of computer scientists, engineers, and Ph.D.-level experts. This team actively engages with customers and members of the AI and HPC community, fostering partnerships and collaborations to drive innovation. With early access to cutting-edge technologies, the Lab is equipped to integrate and optimize clusters, benchmark applications, establish best practices, and publish insightful white papers. By working directly with Dell's subject matter experts, customers can expect tailored solutions for their specific AI and HPC requirements.

Conclusion

MLOps and AutoML play a critical role in fostering the successful integration of AI/ML into organizations. MLOps provides a standardized framework for ensuring consistency, reusability, and scalability in AI/ML initiatives, while AutoML streamlines the data and modeling process. This synergistic approach enables organizations to make data-driven decisions and derive maximum business value from their AI/ML endeavors. Dell Validated Designs offer a blueprint for implementing MLOps, thereby bringing these concepts to fruition. The dynamic nature of AI/ML projects necessitates rapid iterations and automation to tackle challenges such as data inconsistency and resource limitations. MLOps and AutoML serve as crucial enablers in driving digital transformation and establishing an AI-centric enterprise.