More GPUs, CPUs and performance - oh my!

Mon, 14 Jun 2021 14:30:46 -0000

Continuous hardware and software changes deployed with VxRail’s Continuously Validated State

A wonderful aspect of software-defined-anything, particularly when built on world class PowerEdge servers, is speed of innovation. With a software-defined platform like VxRail, new technologies and improvements are continuously added to provide benefits and gains today, and not a year or so in the future. With the release of VxRail 7.0.200, we are at it again! This release brings support for VMware vSphere and vSAN 7.0 Update 2, and for new hardware: 3rd Gen AMD EPYC processors (Milan), and more powerful hardware from NVIDIA with their A100 and A40 GPUs.

VMware, as always, does a great job of detailing the many enhanced or new features in a release, from high-level What’s New corporate or personal blog posts to in-depth videos by Duncan Epping. However, there are a few changes that I want to highlight:

Get thee to 25GbE: A trilogy of reasons - Storage, load-balancing, and pricing.

vSAN is a distributed storage system. To that end, anything that improves the network or networking efficiency improves storage performance and application performance -- but there is more to networking than big, low-latency pipes. RDMA has been a part of vSphere since the 6.5 release, but it is only with 7.0 Update 2 that it is leveraged by vSAN. John Nicholson explains the nuts and bolts of vSAN RDMA in this blog post, but only touches on the performance gains. From our performance testing on VxRail, I can share the gains we have seen: up to 5% reduction in CPU utilization, up to 25% lower latency, and up to 18% higher IOPS, along with increases in read and write throughput. It should be noted that even with medium block IO, vSAN is more than capable of saturating a 10GbE port. RDMA pushes performance beyond that, and we’ve yet to see what Intel 3rd Generation Xeon processors will bring. The only fly in the ointment for vSAN RDMA is the current small list of approved network cards – no doubt more will be added soon.
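To put the "saturating a 10GbE port" remark in perspective, here is a rough back-of-the-envelope sketch. It is not a measurement from our testing: it assumes raw line rate only, ignores protocol overhead and vSAN replication traffic, and the block sizes chosen to stand in for "medium block IO" are my own assumption.

```python
# Back-of-the-envelope: at what IOPS does a given block size fill a network port?
# Assumptions (not from the testing above): raw line rate only, no protocol
# overhead, no replication traffic; block sizes are illustrative choices.

def iops_to_saturate(link_gbps: float, block_kib: int) -> float:
    """Return the IOPS at which block_kib-sized transfers consume the full line rate."""
    bytes_per_second = link_gbps * 1e9 / 8  # network rates are decimal gigabits
    return bytes_per_second / (block_kib * 1024)


if __name__ == "__main__":
    for gbps in (10, 25):
        for block_kib in (4, 32, 64):
            iops = iops_to_saturate(gbps, block_kib)
            print(f"{gbps}GbE, {block_kib}KiB blocks: ~{iops:,.0f} IOPS fills the port")
```

Under these simplifying assumptions, roughly 38,000 IOPS at 32KiB is enough to fill a 10GbE port, and a 25GbE port offers 2.5 times that headroom.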

vSAN is not the only feature that enjoys large low-latency pipes. Niels Hagoort describes the changes in vSphere 7.0 Update 2 that have made vMotion faster, thus making Balancing Workloads Invisible and the lives of virtualization administrators everywhere a lot better. Aside: Can I say how awesome it is to see VMware continuing to enhance a foundational feature that they first introduced in 2003, a feature that for many was that lightbulb Aha! moment that started their virtualization journey?

One last nudge: pricing. The cost delta between 10GbE and 25GbE network hardware is minimal, so for greenfield deployments the choice is easy; you may not need it today, but workloads and demands continue to grow. For brownfield, where the existing network is not due for replacement, the choice is still easy. 25GbE NICs and switch ports can negotiate down to 10GbE, making a phased migration (VxRail nodes now, switches in the future) possible. The inverse is also possible: upgrade the network to 25GbE switches while still connecting your existing VxRail 10GbE SFP+ NIC ports.

Is 25GbE in your infrastructure upgrade plans yet? If not, maybe it should be.

A duo of AMD goodness

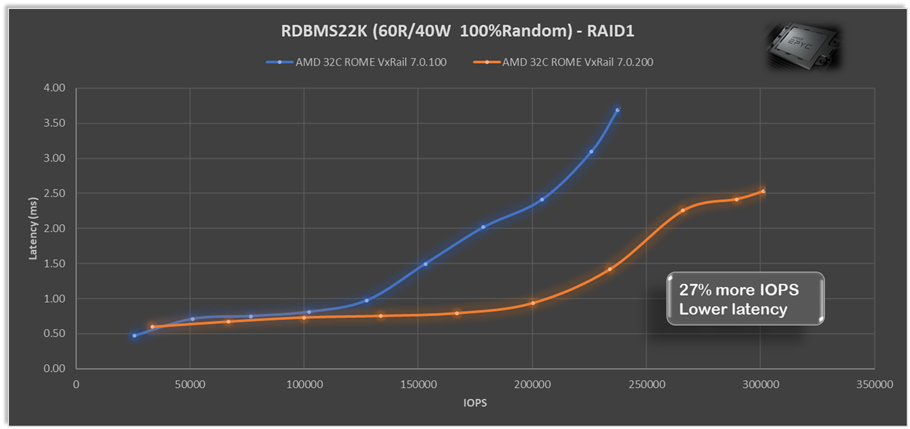

Last year we released two AMD-based VxRail platforms, the E665/F and the P675F/N, so I’m delighted to see CPU scheduler optimizations for AMD EPYC processors, as described in Aditya Sahu’s blog post. What is even better is the 29-page performance study Aditya links to; the depth of detail provided on how ESXi CPU scheduling works, and didn’t work, with AMD EPYC processors is truly educational. The extensive performance testing VMware continuously runs and the results they share (spoiler: they achieved very significant gains) are also a worthwhile read. In our testing we’ve seen that with these AMD scheduler optimizations alone, VxRail 7.0.200 can provide up to 27% more IOPS and up to 27% lower latency for both RAID1 and RAID5 with relational database (RDBMS 22K 60R/40W 100% random) workloads.

VxRail begins shipping the 3rd generation AMD EPYC processors – also known as Milan – in VxRail E665 and P675 nodes later this month. These are not a replacement for the current 2nd Gen EPYC processors we offer, but rather the addition of higher performing 24-core, 32-core, and 64-core choices to the VxRail lineup, delivering up to 33% more IOPS and 16% lower latency across a range of workloads and block sizes. Check out this VMware blog post for the performance gains they showcase with the VMmark benchmarking tool.

HCI Mesh – only recently introduced, yet already getting better

When VMware released HCI Mesh just last October, it enabled stranded storage on one VxRail cluster to be consumed by another VxRail cluster. With the release of VxRail 7.0.200, this has been expanded to make it applicable to more customers by enabling any vSphere cluster to also be a consumer of that excess storage capacity – these remote clusters do not require a vSAN license and consume the storage in the same manner they would any other datastore. This opens up some interesting multi-cluster use cases, for example:

In solutions where a software application’s licensing requires each core/socket in the vSphere cluster to be licensed, this licensing cost can easily dwarf other costs. Now this application can be deployed on a small compute-only cluster, while consuming storage from the larger VxRail cluster. Or where the density of storage per socket didn’t make VxRail viable, it can now be achieved with a smaller VxRail cluster, plus a separate compute-only cluster. If only all the goodness that is VxRail was available in a compute-only cluster – now that would be something dynamic…

A GPU for every workload

GPUs, once the domain of PC gamers, are now a data center staple with their parallel processing capabilities accelerating a variety of workloads. The versatile VxRail V Series has multiple NVIDIA GPUs to choose from and we’ve added two more with the addition of the NVIDIA A40 and A100. The A40 is for sophisticated visual computing workloads – think large complex CAD models, while the A100 is optimized for deep learning inference workloads for high-end data science.

Evolution of hardware in a software-defined world

PowerEdge took a big step forward with their recent release built on 3rd Gen Intel Xeon Scalable processors. Software-defined principles enable VxRail to not only quickly leverage this big step forward, but also to quickly leverage all the small steps in hardware changes throughout a generation. Building on the latest PowerEdge servers, we Reimagine HCI with VxRail with the next generation VxRail E660/F, P670F or V670F. Plus, what’s great about VxRail is that you can seamlessly integrate this latest technology into your existing infrastructure environment. This is an exciting release, but equally exciting are all the incremental changes that VxRail software-defined infrastructure will get along the way with PowerEdge and VMware.

VxRail, flexibility is at its core.

Availability

- VxRail systems with Intel 3rd Generation Xeon processors will be globally available in July 2021.

- VxRail systems with AMD 3rd Generation EPYC processors will be globally available in June 2021.

- VxRail HCI System Software updates will be globally available in July 2021.

- VxRail dynamic nodes will be globally available in August 2021.

- VxRail self-deployment options will begin availability in North America through an early access program in August 2021.

Additional resources

- Blog: Reimagine HCI with VxRail

- Attend our launch webinar to learn more.

- Press release: Dell Technologies Reimagines Dell EMC VxRail to Offer Greater Performance and Storage Flexibility

Related Blog Posts

New VxRail Node Lets You Start Small with Greater Flexibility in Scaling and Additional Resiliency

Mon, 29 Aug 2022 19:00:25 -0000

When deploying infrastructure, it is important to know two things: current resource needs and that those resource needs will grow. What we don’t always know is in what way the demands for resources will grow. Resource growth is rarely equal across all resources. Storage demands will grow more rapidly than compute, or vice-versa. At the end of the day, we can only make an educated guess, and time will tell if we guessed right. We can, however, make intelligent choices that increase the flexibility of our growth options and give us the ability to scale resources independently. Enter the single processor Dell VxRail P670F.

The availability of the P670F with only a single processor provides more growth flexibility for our customers who have smaller clusters. By choosing a less compute dense single processor node, the same compute workload will require more nodes. There are two benefits to this:

- More efficient storage: Having more nodes in the cluster opens the door to using the more capacity-efficient erasure coding vSAN storage option. Erasure coding, also known as parity RAID (such as RAID 5 and RAID 6), has a capacity overhead of as little as 33% (RAID 5), compared to the 100% overhead that mirroring requires. In that case, erasure coding delivers 50% more usable storage capacity from the same amount of raw capacity. While this increase in storage does come with a write performance penalty, VxRail with vSAN has shown that the gap between erasure coding and mirroring has narrowed significantly, and erasure coding still provides significant storage performance capabilities.

- Reduced cluster overhead: Clusters are designed around N+1, where ‘N’ represents sufficient resources to run the preferred workload, and ‘+1’ are spare and unused resources held in reserve should a failure occur in the nodes that make up the N. As the number of nodes in N increases, the percentage of overall resources that are kept in reserve to provide the +1 for planned and unplanned downtime drops.
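The arithmetic behind both benefits can be sketched in a few lines. This is an illustrative calculation, not a sizing tool: the data-plus-redundancy layouts used for each scheme (RAID 1 as 1+1, RAID 5 as 3+1, RAID 6 as 4+2) are my assumption of the usual vSAN layouts, and the function names are made up for this example.

```python
# Sketch: capacity overhead per protection scheme, and the N+1 reserve share.
# Assumption: layouts are expressed as (data components, redundancy components).
SCHEMES = {"RAID 1": (1, 1), "RAID 5": (3, 1), "RAID 6": (4, 2)}


def overhead_pct(data: int, redundancy: int) -> float:
    """Extra raw capacity consumed, as a percentage of the data stored."""
    return 100 * redundancy / data


def usable_fraction(data: int, redundancy: int) -> float:
    """Share of raw capacity that ends up holding data."""
    return data / (data + redundancy)


def reserve_pct(n_workload_nodes: int) -> float:
    """Share of an N+1 cluster's resources held in reserve by the spare node."""
    return 100 / (n_workload_nodes + 1)


if __name__ == "__main__":
    for name, (data, redundancy) in SCHEMES.items():
        print(f"{name}: {overhead_pct(data, redundancy):.1f}% overhead, "
              f"{usable_fraction(data, redundancy):.1%} of raw capacity usable")
    for n in (3, 7, 15):
        print(f"N={n}: {reserve_pct(n):.1f}% of cluster resources held in reserve")
```

With these layouts, RAID 5 leaves 75% of raw capacity usable versus 50% for mirroring, which is where the "50% more usable capacity" figure comes from. Likewise, growing N from 3 to 7 nodes halves the reserve share from 25% to 12.5%.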

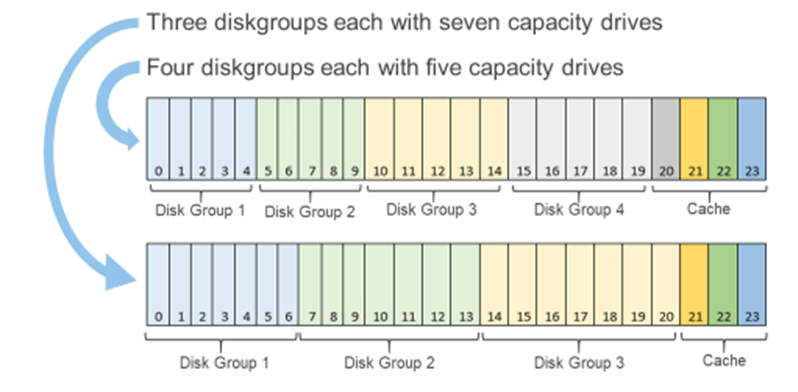

Figure 1: Single processor P670F disk group options

You may be wondering, “How does all of this deliver flexibility in the options for scaling?”

You can scale out the cluster by adding a node. Adding a node is the standard option and can be the right choice if you want to increase both compute and storage resources. However, if you want to grow storage, adding capacity drives will deliver that additional storage capacity. The single processor P670F has disk slots for up to 21 capacity drives with three cache drives, which can be populated one at a time, providing over 160TB of raw storage. (This is also a good time to review virtual machine storage policies: does that application really need mirrored storage?) The single processor P670F does not have a single socket motherboard. Instead, it has the same dual socket motherboard as the existing P670F—very much a platform designed for expanding CPU and memory in the future.

If you are starting small, even really small, as in a 2-node cluster (don’t worry, you can still scale out to 64 nodes), the single processor P670F has additional features that may be of interest to you. Our customers frequently deploy 2-node clusters outside of their core data center at the edge or at remote locations that can be difficult to access. In these situations, the additional data resiliency that is provided by Nested Fault Domains in vSAN is attractive. Providing this additional resiliency on 2-node clusters requires at least three disk groups in each node, for which the single processor P670F is perfectly suited. For more information, see the blog post by VMware’s Teodora Hristov about Nested fault domain for 2 Node cluster deployments. She also posts related information and blog posts on Twitter.

It is impressive how a single change in configuration options can add so much more configuration flexibility, enabling you to optimize your VxRail nodes specifically to your use cases and needs. These configuration options impact your systems today and as you scale into the future.

Author Information

Author: David Glynn, Sr. Principal Engineer, VxRail Technical Marketing

Twitter: @d_glynn

I feel the need – the need for speed (and endurance): Intel Optane edition

Wed, 13 Oct 2021 17:37:52 -0000

It has only been three short months since we launched VxRail on 15th Generation PowerEdge, but we're already expanding the selection of configuration offerings. So far we've added 18 additional processors to power your workloads, including some high-frequency and low-core-count options (delightful news for those with applications that are licensed per core); an additional NVIDIA GPU, the A30; a slew of additional drives; and double the RAM capacity, now 8TB. I've probably missed something, as it can be hard to keep up with all the innovations taking place within this race car that is VxRail!

In my last blog, I hinted at one of those drive additions: faster cache drives. Today I'm excited to announce that you can now order, and turbocharge your VxRail with, the 400GB or 800GB Intel P5800X – Intel’s second generation Optane NVMe drive. Before we delve into some of the performance numbers, let’s discuss what it is about the Optane drives that makes them so special. More specifically, what is it about them that enables them to deliver so much more performance, in addition to significantly higher endurance rates?

To grossly over-simplify it, and my apologies in advance to the Intel engineers who poured their lives into this, when writing to NAND flash an erase cycle needs to be performed before a write can be made. These erase cycles are time-consuming operations and the main reason why random write IO capability on NAND flash is often a fraction of the read capability. Additionally, garbage collection runs continuously in the background to ensure that there is space available for incoming writes. Optane, on the other hand, does bit-level write-in-place operations, so it doesn’t require an erase cycle or garbage collection, and it doesn’t incur a write performance penalty. Hence, random write IO capability almost matches the random read IO capability. So just how much better is endurance with this new Optane drive? Endurance can be measured in Drive Writes Per Day (DWPD), which measures how many times the drive's entire capacity could be overwritten each day of its warranty life. For the 1.6TB NVMe P5600 this is 3 DWPD, or 55 MB per second, every second for five years – just shy of 9PB of writes, not bad. However, the 800GB Optane P5800X is rated at 100 DWPD: it will endure 146PB over its five-year warranty life, or almost 1 GB per second (926 MB/s), every second, for five years. Not quite indestructible, but that is a lot of writes, so much so that you don’t need extra capacity for wear leveling and a smaller capacity drive will suffice.
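For those who like to check the math, the endurance figures above follow directly from capacity, DWPD rating, and warranty length. The sketch below reproduces them, assuming decimal units (1TB = 1,000,000MB) and 365-day years; the function name is just for illustration.

```python
# Sketch: convert a DWPD rating into a sustained write rate and lifetime writes.
# Assumptions: decimal units (1 TB = 1e6 MB, 1 PB = 1000 TB) and 365-day years.
SECONDS_PER_DAY = 86_400
WARRANTY_YEARS = 5


def endurance(capacity_tb: float, dwpd: float, years: int = WARRANTY_YEARS):
    """Return (sustained MB/s, total PB written) for a drive written at its DWPD rating."""
    tb_per_day = capacity_tb * dwpd
    mb_per_second = tb_per_day * 1e6 / SECONDS_PER_DAY
    total_pb = tb_per_day * 365 * years / 1000
    return mb_per_second, total_pb


if __name__ == "__main__":
    drives = (("P5600 1.6TB", 1.6, 3), ("P5800X 800GB", 0.8, 100))
    for name, capacity_tb, dwpd in drives:
        mb_s, pb = endurance(capacity_tb, dwpd)
        print(f"{name}: {dwpd} DWPD = {mb_s:.0f} MB/s sustained, {pb:.2f} PB over {WARRANTY_YEARS} years")
```

The P5600 works out to 8.76PB (the "just shy of 9PB" above) and the P5800X to 146PB, a difference of well over an order of magnitude from a drive with half the capacity.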

You might wonder why you should care about endurance, as Dell EMC will replace the drive under warranty anyway. There are three reasons. First, when a cache drive fails, its disk group is taken offline, so not only have you lost performance and capacity, your environment is taking on the additional burden of a rebuild operation to re-protect your data. Secondly, more and more systems are being deployed outside of the core data center. Replacing a drive in your data center is straightforward, and you might even have spares onsite, but what about outside of your core data center? What is your plan for replacing a drive at a remote office, or a thousand miles away? What if that remote location is not an office but an oil rig one hundred miles offshore, or a cruise ship halfway around the world where the cost of getting a replacement drive there is not trivial? In these remote locations, onsite spares are commonplace, but the exceptions are what lead me to the third reason, Murphy's Law. IT and IT staffing might be an afterthought at these remote locations. Getting a failed drive swapped out at a remote location which lacks true IT staffing may not get the priority it deserves, and then there is that ever present risk of user error... “Oh, you meant the other drive?!? Sorry...”

Cache in its many forms plays an important role in the datacenter. Cache enables switches and storage to deliver higher levels of performance. On VxRail, our cache drives fall into two categories, SAS and NVMe, with NVMe delivering up to 35% higher IOPS and 14% lower latency. Among our NVMe cache drives we have two from Intel: the 1.6TB P5600 and the Optane P5800X, in 400GB and 800GB capacities. The links for each will bring you to the drive specification, including performance details. But how does performance at the drive level impact performance at the solution level? At the end of the day, the solution level is what your application consumes, after cache mirroring, network hops, and the vSAN stack. Intel is a great partner to work with; when we checked with them about publishing solution-level performance data comparing the two drives side by side, they were all for it.

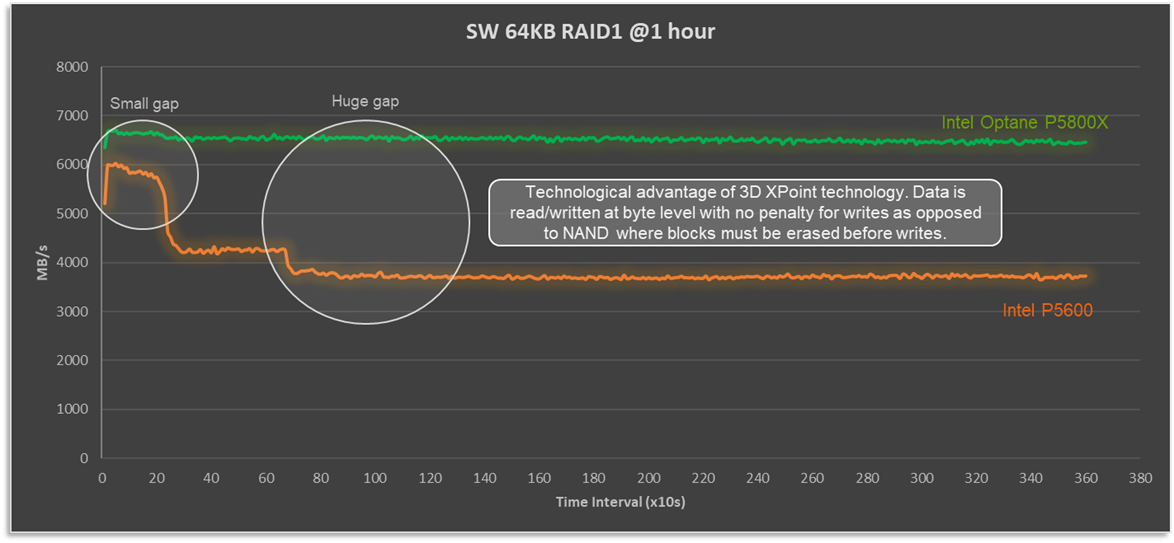

In my over-simplified explanation above, I described how the write cycle for Optane drives is significantly different, as an erase operation does not need to be done first. So how does that play out in a full solution stack? Figure 1 compares the P5600 and P5800X in a four-node VxRail P670F cluster running a 100% sequential write 64KB workload. It is not a test that reflects any real-world workload, but one that really stresses the vSAN cache layer, highlights the consistent write performance that 3D XPoint technology delivers, and shows how Optane is able to de-stage cache when it fills up without compromising performance.

Figure 1: Optane cache drives deliver consistent and predictable write performance

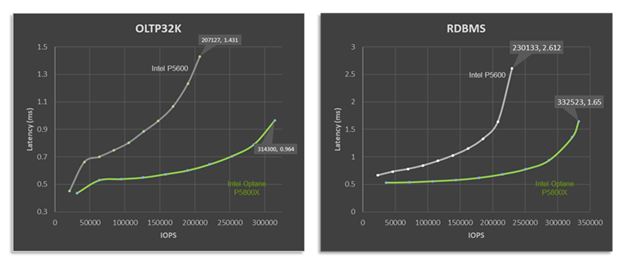

When we look at performance, there are two numbers to keep in mind: IOPS and latency. The target is to have high IOPS with low and predictable latency, at a real-world IO size and read:write ratio. To that end, let’s look at how VxRail performance differs with the P5600 and P5800X under OLTP32K (70R30W) and RDBMS (60R40W) benchmark workloads, as shown in Figure 2.

Figure 2: Optane cache drives deliver higher performance and lower latency across a variety of workload types.

It doesn't take an expert to see that with the P5800X this four-node VxRail P670F cluster's peak performance is significantly higher than when it is equipped with the P5600 as a cache drive. For RDBMS workloads, it delivers up to 44% higher IOPS with a 37% reduction in latency. But peak performance isn't everything. Many workloads, particularly databases, place a higher importance on latency requirements. What if our workload, database or otherwise, requires 1ms response times? Maybe this is the Service Level Agreement (SLA) that the infrastructure team has with the application team. In such a situation, based on the data shown, and for an OLTP 70:30 workload with a 32K block size, the VxRail cluster would deliver over twice the performance at the same latency SLA, going from 147,746 to 314,300 IOPS.

In the datacenter, as in life, we are often faced with "Good, fast, or cheap. Choose two." When you compare the price tag of the P5600 and P5800X side by side, the Optane drive has a significant premium for its good and fast. However, keep in mind that you are not buying an individual drive, you are buying a full stack solution of several pieces of hardware and software, where the cost of the premium pales in comparison to the increased endurance and performance. Whether you are looking to turbo charge your VxRail like a racecar, or make it as robust as a tank, Intel Optane SSD drives will get you both.

Author Information

David Glynn, Technical Marketing Engineer, VxRail at Dell Technologies

Twitter: @d_glynn

LinkedIn: David Glynn

Additional Resources

Intel SSD D7P5600 Series 1.6TB 2.5in PCIe 4.0 x4 3D3 TLC Product Specifications

Intel Optane SSD DC P5800X Series 800GB 2.5in PCIe x4 3D XPoint Product Specifications