Introducing VxRail Concepts

Tue, 04 Oct 2022 14:12:18 -0000

Believe it or not, VxRail has been around for six years! It may not sound like a particularly long time because there are products in the Dell storage portfolio that got their start a couple of decades ago. In any case, the early rapid growth of VxRail created a sizable customer base that has matured and developed into seasoned VxRail users. The figures I last saw cited just over 17,000 customers with some 237,000 nodes deployed. As a new member of the VxRail product group, I can see that our VxRail content has matured and developed with our seasoned customers to an extent that there’s an assumed common knowledge about VxRail. This is amazing for the existing VxRail community, but it would also be nice for people new to VxRail, like me, who are in a different phase of their VxRail journey, to learn about it from the ground up. After a few months on the job, I saw a great opportunity to do just that—create content that gets back to the basics of VxRail and focuses on the fundamentals of VxRail with vSAN and VxRail Dynamic Nodes for traditional SAN architecture.

While I was new to the VxRail technical marketing team at the start of the year, I’d previously spent many years as a Dell Support specialist, primarily working with our PowerEdge servers and Compellent storage offerings. It has definitely been a rewarding experience so far learning about HCI and VxRail, though sometimes it was like trying to drink from a firehose. While I have many resources to lean on, most other folks don’t have the same benefit. That’s why I’m building a blog series that covers the basics of VxRail so you can quickly get a leg up on your VxRail journey. With that, you’ll have a solid foundation for consuming more advanced VxRail content. Here’s what I have so far in my queue:

- Understanding the importance of cluster integrity—The biggest pain point, I find, is the amount of time that infrastructure administrators spend to ensure application uptime and compliance, and looking out for the next system update. Understanding the continuously validated state of VxRail is a game changer.

- Cluster updates—Knowing what needs to be updated is critical, but the actual process of doing a system update to a cluster isn’t a walk in the park. The VxRail cluster update experience is a significant factor in your operational investment.

- Maintaining cluster integrity—VxRail has some very helpful tools to ensure that the cluster is running in an optimal state. These tools can make your administration activity much easier.

- Serviceability—There will be times when things go wrong; that’s inevitable. I want to bring focus onto how VxRail can make this part of the experience as streamlined as possible. Current clusters feature phone-home capabilities that provide a massive benefit to the support experience.

- Extensibility—While this might be a bit on the advanced side, it’s good to know that VxRail is designed to be managed efficiently at scale. Whether your concern is deployment complexity or scaling up the management experience, this will be a good read.

Over the course of the next few weeks, I’ll be posting blogs about these topics. Stay tuned! For those new to VxRail, I hope I can provide a great start to your VxRail journey. Feel more than welcome to reach out and connect with me on LinkedIn. If you have suggestions for other topics that you’d like covered, I’d be more than happy to hear them.

Related Blog Posts

VxRail Edge Automation Unleashed - Simplifying Satellite Node Management with Ansible

Thu, 30 Nov 2023 17:43:03 -0000

In the previous blog, Infrastructure as Code with VxRail Made Easier with Ansible Modules for Dell VxRail, I introduced the modules that enable the automation of VxRail operations through code-driven processes using Ansible and the VxRail API. This approach not only streamlines IT infrastructure management but also aligns with Infrastructure as Code (IaC) principles, benefiting both technical experts and business leaders.

The corresponding demo is available on YouTube:

The previous blog laid the foundation for this continued journey, in which we explore more advanced Ansible automation techniques with a focus on satellite node management in the VxRail ecosystem. I highly recommend checking out that blog before diving deeper into the topics discussed here, as it will make the concepts covered in this demo much easier to absorb.

What are VxRail satellite nodes?

VxRail satellite nodes are individual nodes designed specifically for deployment in edge environments and are managed through a centralized primary VxRail cluster. Satellite nodes do not leverage vSAN to provide storage resources and are an ideal solution for those workloads where the SLA and compute demands do not justify even the smallest of VxRail 2-node vSAN clusters.

Satellite nodes enable customers to achieve uniform and centralized operations within the data center and at the edge, with VxRail management throughout. This includes comprehensive, automated lifecycle management for VxRail satellite nodes that covers both hardware and software and significantly reduces the need for manual intervention.

To learn more about satellite nodes, please check the following blogs from my colleagues:

- David’s introduction: Satellite nodes: Because sometimes even a 2-node cluster is too much

- Stephen’s update on enhancements: Enhancing Satellite Node Management at Scale

Automating VxRail satellite node operations using Ansible

You can leverage the Ansible Modules for Dell VxRail to automate various VxRail operations, including more advanced use cases such as satellite node management. This is possible today using the samples provided in the official repository on GitHub.

Have a look at the following demo, which uses version 2.2.0 of these modules, the latest available at the time of recording. In the demo, I discuss and demonstrate how you can perform the following operations from Ansible:

- Collecting information about the number of satellite nodes added to the primary VxRail cluster

- Adding a new satellite node to the primary VxRail cluster

- Performing lifecycle management operations – staging the upgrade bundle and executing the upgrade on managed satellite nodes

- Removing a satellite node from the primary cluster

The examples used in the demo are slightly modified versions of the following samples from the modules' documentation on GitHub. If you’d like to replicate them in your environment, start from the corresponding samples listed here and adjust them as needed:

- Retrieving system information: systeminfo.yml

- Adding a new satellite node: add_satellite_node.yml

- Performing LCM operations: upgrade_host_folder.yml (both staging and upgrading as explained in the demo)

- Removing a satellite node: remove_satellite_node.yml

In the demo, you can also observe an interesting feature of the Ansible Modules for Dell VxRail that is shown in action but not explained explicitly. You might be aware that some VxRail API functions are available in multiple versions. Typically, a new version is introduced when new features become available in the VxRail HCI System Software, while previous versions are retained for backward compatibility. One example is “GET /vX/system”, which is used to retrieve the number of satellite nodes; this property was introduced in version 4. If you don’t specify the version, the modules automatically select the latest supported version, simplifying the end-user experience.
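The version fallback described above can be pictured with a small sketch. This is not how the modules are actually implemented: the function names and the list of supported versions are assumptions made purely for illustration. Only the `/vX/system` path pattern and the fact that the satellite node count arrived in version 4 come from the text.

```python
from typing import Optional, Sequence


def pick_api_version(supported: Sequence[int], requested: Optional[int] = None) -> int:
    """Return the requested version, or the latest supported one when none is given."""
    if not supported:
        raise ValueError("no supported API versions")
    if requested is None:
        # No version given: fall back to the newest one available.
        return max(supported)
    if requested not in supported:
        raise ValueError(f"API version v{requested} is not supported")
    return requested


def system_path(supported: Sequence[int], requested: Optional[int] = None) -> str:
    """Build the versioned path for the system endpoint."""
    return f"/v{pick_api_version(supported, requested)}/system"


# Hypothetical list of versions exposed by a given VxRail release.
SUPPORTED_SYSTEM_VERSIONS = [1, 2, 3, 4]

print(system_path(SUPPORTED_SYSTEM_VERSIONS))     # no version specified
print(system_path(SUPPORTED_SYSTEM_VERSIONS, 2))  # explicit older version
```

Pinning a version explicitly remains useful when a playbook must behave identically across clusters running different software releases.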

How can you get more hands-on experience with automating VxRail operations programmatically?

The demo above, which covers satellite node management using Ansible, was configured in the VxRail API hands-on lab, which is available in the Dell Technologies Demo Center. With the help of the Demo Center team, we built this lab as a self-education tool for learning the VxRail API and how it can be used to automate VxRail operations using various methods: the built-in, interactive, web-based documentation, the VxRail API PowerShell Modules, the Ansible Modules for Dell VxRail, and Postman.

The hands-on lab provides a safe VxRail API sandbox, where you can easily start experimenting by following the exercises from the lab guide or trying some other use cases on your own without any concerns about making configuration changes to the VxRail system.

The lab was refreshed for the Dell Technologies World 2023 conference to leverage VxRail HCI System Software 8.0.x and the latest version of the Ansible Modules. If you’re a Dell partner, you should have access directly. If you’re a customer who’d like to get access, please contact your account SE from Dell or your Dell partner. The lab is available in the catalog as: “HOL-0310-01 - Scalable Virtualization, Compute, and Storage with the VxRail REST API”.

Conclusion

In the fast-evolving landscape of IT infrastructure, the ability to automate operations efficiently is not just a convenience but a necessity. With the Ansible Modules for Dell VxRail, we've explored how this necessity can be met, using satellite node management as the example use case. We encourage you to embrace the full potential of VxRail automation using the VxRail API with Ansible or other tools. If this is new to you, you can gain experience by experimenting with the hands-on lab available in the Demo Center catalog.

Resources

- Previous blog: Infrastructure as Code with VxRail Made Easier with Ansible Modules for Dell VxRail

- The “master” blog containing a curated list of publicly-available educational resources about the VxRail API: VxRail API - Updated List of Useful Public Resources

- Ansible Modules for Dell VxRail on GitHub, which is the central code repository for the modules. It also contains complete product documentation and examples.

- Dell Technologies Demo Center, which includes the VxRail API hands-on lab.

Author: Karol Boguniewicz, Senior Principal Engineering Technologist, VxRail Technical Marketing

Twitter/X: @cl0udguide

LinkedIn: https://www.linkedin.com/in/boguniewicz/

Learn More About the Latest Major VxRail Software Release: VxRail 7.0.480

Tue, 24 Oct 2023 15:51:48 -0000

Happy Autumn, VxRail customers! As the morning air gets chillier and the sun rises later, this blog on our latest software release – VxRail 7.0.480 – paired with your Pumpkin Spice Latte will give you the boost you need to kick start your day. It may not be as tasty as freshly made cider donuts, but this software release has significant additions to the VxRail lifecycle management experience that can surely excite everyone.

VxRail 7.0.480 provides support for VMware ESXi 7.0 Update 3o and VMware vCenter 7.0 Update 3o. All existing platforms that support VxRail 7.0, except those based on Dell PowerEdge 13th Generation platforms, can upgrade to VxRail 7.0.480. This includes the VxRail systems based on PowerEdge 16th Generation platforms that were released in August.

Read on for a deep dive into the VxRail Lifecycle Management (LCM) features and enhancements in this latest VxRail release. For a more comprehensive rundown of the features and enhancements in VxRail 7.0.480, see the release notes.

Improving update planning activities for unconnected clusters or clusters with limited connectivity

VxRail 7.0.450, released earlier this year, provided significant improvements to update planning activities in a major effort to streamline administrative work and increase cluster update success rates. Enhancements to the cluster pre-update health check and the introduction of the update advisor report were designed to drive even more simplicity to your update planning activities. By having VxRail Manager automatically run the update advisor report, inclusive of the pre-update health check, every 24 hours against the latest information, you will always have an up-to-date report to determine your cluster’s readiness to upgrade to the latest VxRail software version.

If you are not familiar with the LCM capabilities added in VxRail 7.0.450, you can review this blog for more information.

VxRail 7.0.450 offered a seamless path for clusters that are connected to the Dell cloud to take advantage of these new capabilities. Internet-connected clusters can automatically download LCM pre-checks and the installer metadata files, which provide the manifest information about the latest VxRail software version, from the Dell cloud. The ability to periodically scan the Dell cloud for the latest files ensures the update advisor report is always up to date to support your decision-making.

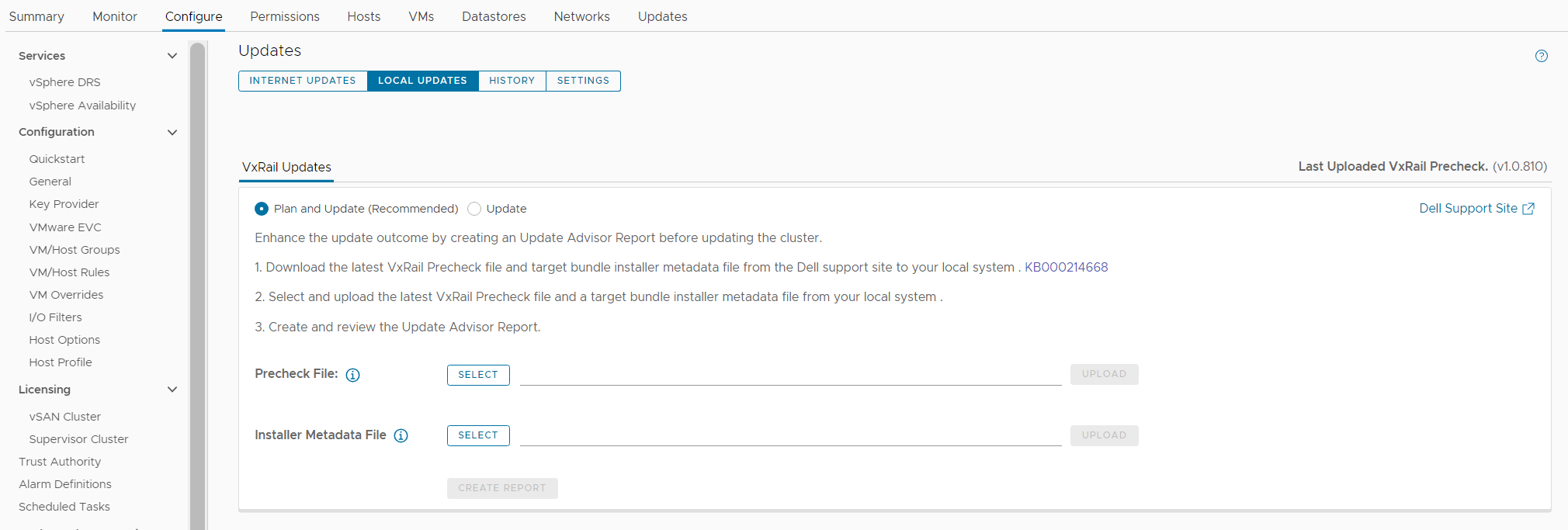

While unconnected clusters could use these features, the user experience in VxRail 7.0.450 made it more cumbersome for users to upload the latest LCM pre-checks and installer metadata files. VxRail 7.0.480 aims to improve the user experience for those who have clusters deployed in dark or remote sites that have limited network connectivity.

Starting in VxRail 7.0.480, users of unconnected clusters will have an easier experience uploading the latest LCM pre-checks file onto VxRail Manager. The VxRail Manager UI has been enhanced, so you no longer have to upload via CLI.

Because some clusters are deployed in areas where network bandwidth is at a premium, the VxRail Manager UI has also been updated so that you only need to upload the installer metadata file to generate the update advisor report. In VxRail 7.0.450, users had to upload the full LCM bundle for the update advisor report. Going from a full LCM bundle of more than 10GB to a 50KB installer metadata file is a tremendous improvement for bandwidth-constrained clusters, eliminating a barrier to relying on the update advisor report as a standard cluster management practice. With VxRail 7.0.480, whether you have connected or unconnected clusters, these update planning features are easy to use and will help increase your cluster update success rates.
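To put the payload difference into perspective, here is a rough back-of-the-envelope calculation. The 10GB and 50KB figures come from the paragraph above; the 10 Mbps link speed is an assumption chosen only for illustration, and the calculation ignores protocol overhead.

```python
def transfer_seconds(size_bytes: float, link_mbps: float) -> float:
    """Ideal transfer time in seconds, ignoring protocol overhead."""
    if link_mbps <= 0:
        raise ValueError("link speed must be positive")
    bits = size_bytes * 8
    return bits / (link_mbps * 1_000_000)


FULL_BUNDLE_BYTES = 10 * 10**9  # "greater than 10GB", taken here as 10 GB
METADATA_BYTES = 50 * 10**3     # 50 KB installer metadata file
LINK_MBPS = 10                  # assumed link speed for a remote site

ratio = FULL_BUNDLE_BYTES / METADATA_BYTES
bundle_hours = transfer_seconds(FULL_BUNDLE_BYTES, LINK_MBPS) / 3600
metadata_seconds = transfer_seconds(METADATA_BYTES, LINK_MBPS)

print(f"size ratio: {ratio:,.0f}x")
print(f"full bundle: {bundle_hours:.1f} h, metadata: {metadata_seconds:.2f} s")
```

Under these assumptions the full bundle is 200,000 times larger and takes a little over two hours to transfer, while the metadata file arrives in a fraction of a second.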

The Local Updates tab has been modified to support these new capabilities. There are now two sub-tabs underneath it:

- The Update sub-tab represents the existing cluster update workflow where you would upload the full LCM bundle to generate the update advisor report and initiate the cluster update operation.

- The Plan and Update sub-tab is the recommended path which incorporates the enhancements in VxRail 7.0.480. Here you can upload the latest LCM pre-checks file and the installer metadata file that you found and downloaded from the Dell Support website. Uploading the LCM pre-checks file is optional to create a new report because there may not always be an updated file to apply. However, you do need to upload an installer metadata file to generate a new report from here. Once uploaded, VxRail Manager will generate an update advisor report against that installer metadata file every 24 hours.

Figure 1. New look to the Local Updates tab

Easier record-keeping for compliance drift and update advisor reports

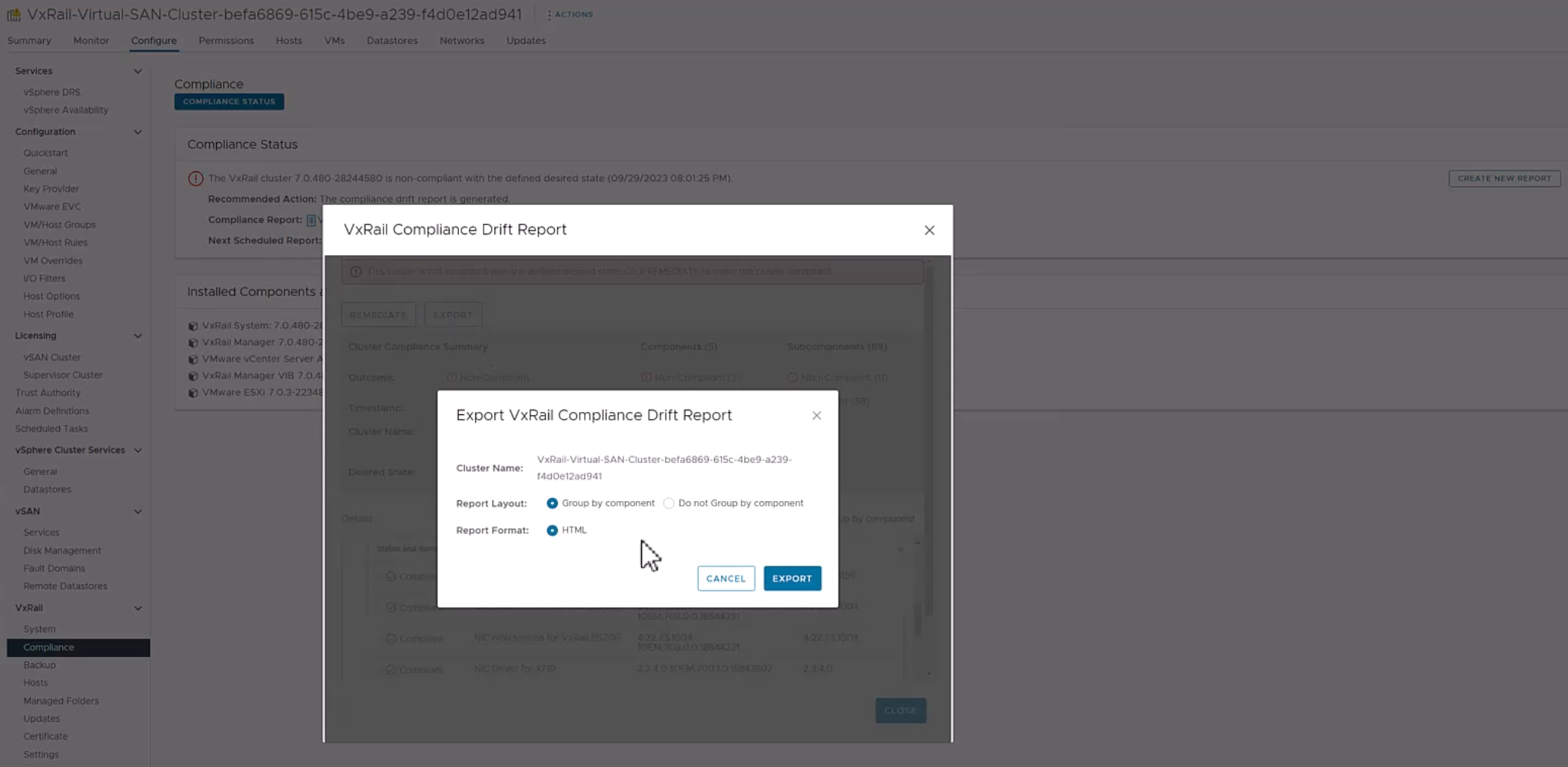

VxRail 7.0.480 adds new functionality that lets you export compliance drift reports from the VxRail Manager UI, and it introduces a History tab for accessing past update advisor reports.

Some of you use the contents of the compliance drift report to build out a larger infrastructure status report for information sharing across your organizations. Making the report exportable simplifies that report-building process. When exporting the report, there is an option to group the information by host if you prefer.

Note that the compliance check functionality has moved from the Compliance tab under the Updates page to a separate page, which you can navigate to by selecting Compliance from under the VxRail section.

Figure 2. Exporting the compliance drift report

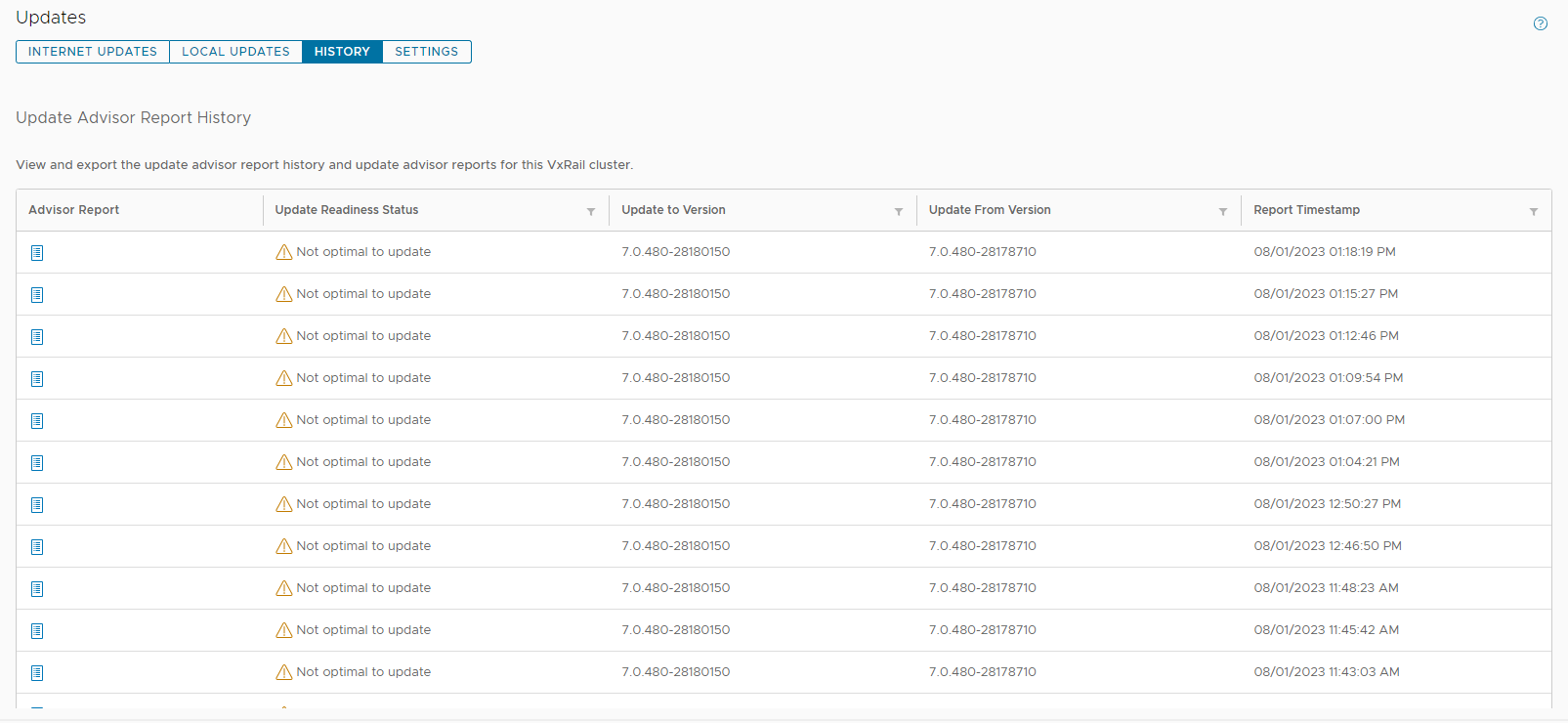

The removal of the Compliance tab comes with the introduction of the History tab on the Updates page in VxRail 7.0.480. Because VxRail Manager automatically generates a new update advisor report every 24 hours and you have the option to generate one on demand, the update advisor report is often overwritten. To avoid the need to constantly export reports as a form of record-keeping, the new History tab stores the last 30 update advisor reports. The reports are listed in a table format where you can see which target version the report was run against and when it was run. To view the full report, you can click on the icon in the left-hand column.
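The "last 30 reports" behavior is a classic bounded history. The sketch below shows one way to model it; the class and field names are hypothetical and say nothing about how VxRail Manager stores reports internally.

```python
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AdvisorReport:
    target_version: str    # version the report was run against
    generated_at: datetime


class ReportHistory:
    """Keep only the most recent reports; list them newest first."""

    def __init__(self, limit: int = 30) -> None:
        self._reports = deque(maxlen=limit)

    def add(self, report: AdvisorReport) -> None:
        # deque(maxlen=...) silently drops the oldest entry once full.
        self._reports.append(report)

    def listing(self) -> list:
        return sorted(self._reports, key=lambda r: r.generated_at, reverse=True)


history = ReportHistory()
start = datetime(2023, 10, 1)
for day in range(40):  # 40 daily reports, one every 24 hours
    history.add(AdvisorReport("7.0.480", start + timedelta(days=day)))

rows = history.listing()
print(len(rows), rows[0].generated_at.date(), rows[-1].generated_at.date())
```

After 40 daily reports, only the 30 most recent remain, so the ten oldest have aged out without any manual cleanup.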

Figure 3. New History tab to store the last 30 update advisor reports

Addressing cluster update challenges for larger-sized clusters

For those of you who have larger-sized clusters, cluster updates pose challenges that may prevent you from upgrading more frequently. For example, the maintenance window required to complete a full cluster update may not fit within your normal business operations, so any cluster update activity will impact service availability. As a result, cluster updates are kept to a minimum and nodes inevitably are not rebooted for long periods of time. While the cluster pre-update health check is an effective tool to determine cluster readiness for an upgrade, some issues may be lurking that only a node reboot can uncover. That’s why some of you script your own node reboot sequence that acts as a test run for a cluster upgrade. The script reboots each node one at a time to ensure service levels of your workloads are maintained. If any nodes fail to reboot, you can investigate those nodes.

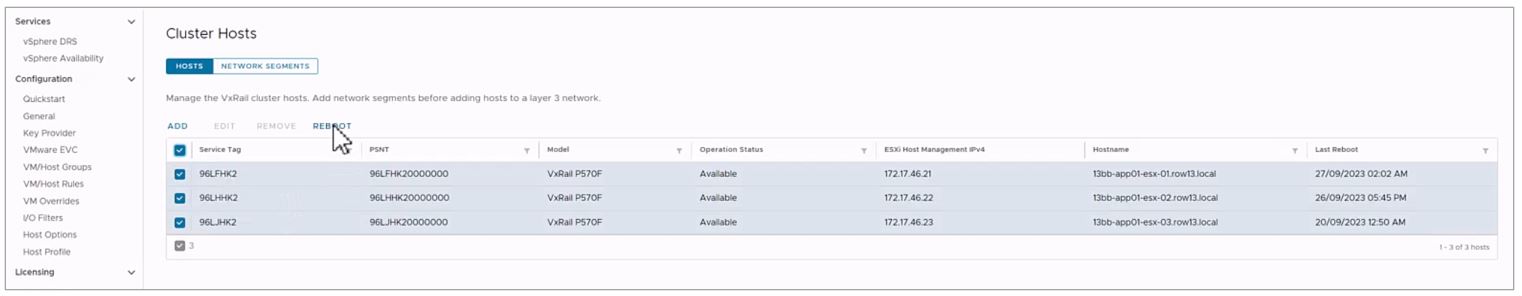

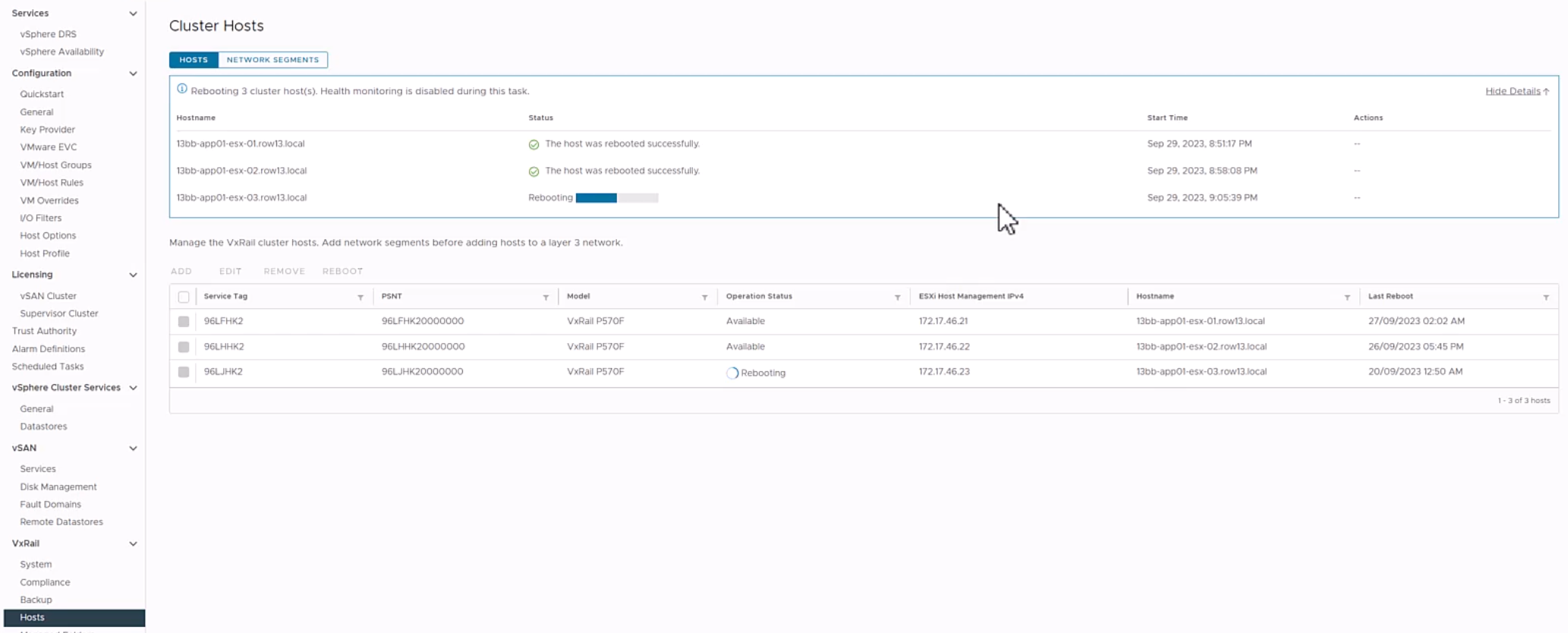

VxRail 7.0.480 introduces the node reboot sequence in the VxRail Manager UI so that you no longer have to maintain your own scripts. The new feature includes cluster-level and node-level prechecks to ensure it is safe to perform this activity. If a node fails to reboot, you have the option to retry the reboot or skip it. Making this activity easy may also encourage more customers to do this additional pre-check before upgrading their clusters.

Figure 5. Monitoring the node reboot sequence on the dashboard
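For readers who have never written such a script, the one-node-at-a-time logic with precheck, retry, and skip can be sketched as follows. This is an illustrative outline only: the `precheck` and `reboot` callables are placeholders for whatever your environment provides, the status strings are made up, and the cluster-level precheck is left out for brevity. It is not the VxRail Manager implementation.

```python
from typing import Callable, Dict, Iterable


def rolling_reboot(
    nodes: Iterable[str],
    precheck: Callable[[str], bool],
    reboot: Callable[[str], bool],
    max_retries: int = 1,
) -> Dict[str, str]:
    """Reboot nodes one at a time and record the outcome for each."""
    results: Dict[str, str] = {}
    for node in nodes:
        if not precheck(node):
            # Never reboot a node that fails its node-level precheck.
            results[node] = "precheck_failed"
            continue
        for _attempt in range(1 + max_retries):
            if reboot(node):
                results[node] = "rebooted"
                break
        else:
            # All attempts failed: skip this node and flag it for follow-up.
            results[node] = "skipped"
    return results


# Stand-in callables so the sketch runs without any real hosts.
attempts: Dict[str, int] = {}


def fake_precheck(node: str) -> bool:
    return node != "node-03"


def fake_reboot(node: str) -> bool:
    attempts[node] = attempts.get(node, 0) + 1
    # node-02 succeeds on its second attempt; node-04 never comes back.
    if node == "node-02":
        return attempts[node] >= 2
    return node != "node-04"


outcome = rolling_reboot(
    ["node-01", "node-02", "node-03", "node-04"], fake_precheck, fake_reboot
)
print(outcome)
```

Because each node is handled before the next one starts, a failure never takes more than one node out of service at a time.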

VxRail 7.0.480 also provides the capability to split your cluster update into multiple parts. Doing so allows you to separate your cluster upgrade into smaller maintenance windows and work around your business operation needs. Though this capability could reduce the impact of a cluster upgrade on your organization, VMware does recommend that you complete the full upgrade within one week, given that some Day 2 operations are disabled while the cluster is partially upgraded. VxRail enables this capability only through the VxRail API. When a cluster is in a partially upgraded state, features in the Updates tab are disabled and a banner appears alerting you of the cluster state. Cluster expansion and node removal operations are also unavailable in this scenario.
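When planning a split upgrade, it can help to check up front that all maintenance windows fall within the recommended week. The sketch below assumes the update is split by groups of nodes and that you schedule one window per group; the node names, batch size, and dates are made up, and no VxRail API call is shown.

```python
from datetime import date, timedelta
from typing import List, Sequence


def split_into_batches(nodes: Sequence[str], batch_size: int) -> List[List[str]]:
    """Split the node list into consecutive batches of at most batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [list(nodes[i:i + batch_size]) for i in range(0, len(nodes), batch_size)]


def fits_recommended_window(windows: Sequence[date], max_days: int = 7) -> bool:
    """True when the first and last maintenance windows are within max_days."""
    return (max(windows) - min(windows)) <= timedelta(days=max_days)


nodes = [f"node-{n:02d}" for n in range(1, 13)]  # a 12-node cluster
batches = split_into_batches(nodes, batch_size=4)

# One maintenance window per batch, every other night (assumed schedule).
first_night = date(2023, 11, 6)
windows = [first_night + timedelta(days=2 * i) for i in range(len(batches))]

for when, batch in zip(windows, batches):
    print(when, batch)
print("within one week:", fits_recommended_window(windows))
```

A schedule that fails this check is a signal to use larger batches or tighter windows, so the cluster spends less time in the partially upgraded state.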

Conclusion

The new lifecycle management capabilities added to VxRail 7.0.480 are part of the continual evolution of the VxRail LCM experience. They also show how much we value your feedback on improving the product and our dedication to bringing your suggestions to fruition. The LCM capabilities added to this software release enable more effective cluster update planning, which should result in higher cluster update success rates and greater efficiency in your IT operations. Though this blog focuses on the improvements in lifecycle management, please refer to the release notes for VxRail 7.0.480 for a complete list of features and enhancements added to this release. For more information about VxRail in general, visit the Dell Technologies website.

Author: Daniel Chiu