Have You Checked Your Snapshots Lately?

Mon, 28 Mar 2022 21:44:38 -0000


While this question may sound like a line from a scary movie, it is a serious one. Have you checked your snapshots lately?

In many regions of the world, seasonal time changes occur to maximize daylight hours and ensure darkness falls later in the day. This time shift is commonly known as Daylight Time, Daylight Saving Time, or Summer Time. Regions that observe this practice typically change their clocks by 30 minutes or 1 hour, depending on the region and time of year. At the time of this publication, multiple regions of the world have recently experienced a time change, while others will change their clocks shortly afterward.

Some storage systems use Coordinated Universal Time (UTC) internally for logging purposes and to run scheduled jobs. Users typically create a schedule to run a task based on their local time, but the storage system then converts this time and runs the job based on its internal UTC time. When a regional time change occurs, scheduled tasks that run on a UTC schedule “shift” when compared to wall clock time. Something that used to run at one time locally may seem to run at another, but only because the wall clock time in the region has changed. While this shift may not be an issue for most customers, for some the change is noticeable. Some have found that jobs such as snapshot creations and deletions now overlap with other scheduled tasks such as backups, or that snapshots now miss their intended time, such as the beginning or end of the business workday.

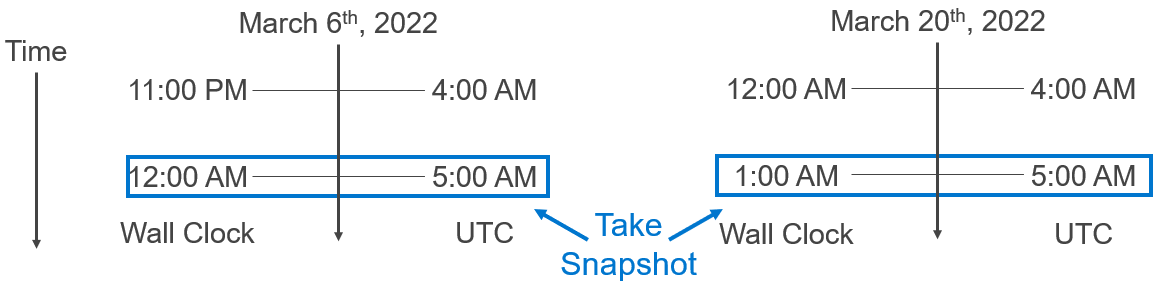

To show what I mean, let’s use the Eastern US time zone as an example. Let’s say a user has created a rule to take a snapshot daily at 12:00 AM (midnight) local time. When Daylight Saving Time is not in effect, 12:00 AM US EST is equivalent to 5:00 AM UTC, and the snapshot schedule is configured to run at 5:00 AM UTC daily within the system. On Sunday, March 13, 2022 at 2:00 AM, the regions of the United States that observe time changes moved their clocks forward 1 hour. The 2:00 AM hour instantaneously became 3:00 AM and an hour of time was seemingly lost.

As the figure below shows, a scheduled job that is configured to run at 5:00 AM UTC daily was taking snapshots at 12:00 AM local time but now runs at 1:00 AM local time, due to the UTC schedule of the storage system and the time change. A similar shift also occurs when the time change occurs again later in the year.

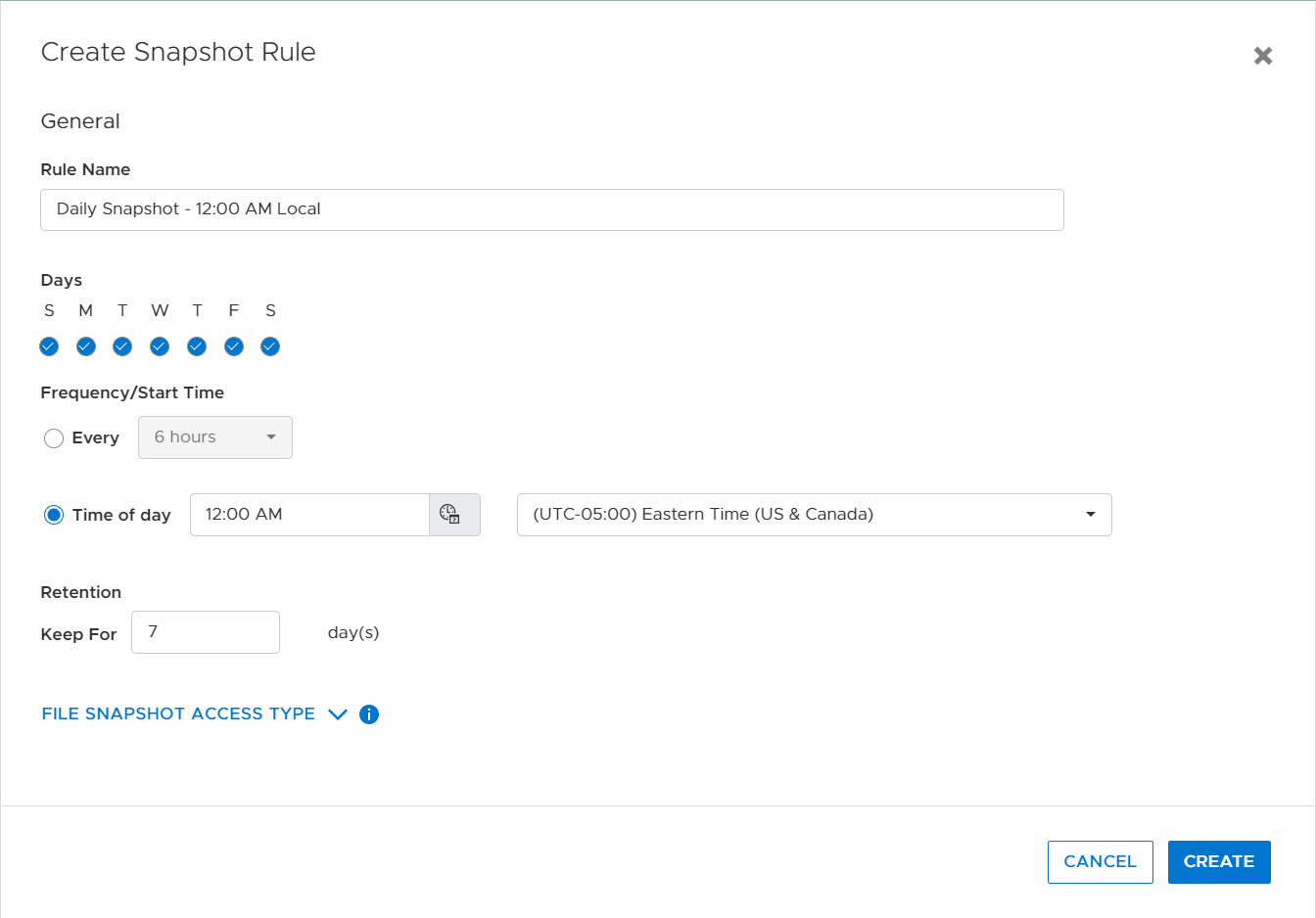
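The shift can be reproduced with a few lines of Python. This is only an illustrative sketch, not how any storage system implements scheduling: the helper name `local_run_time` and the use of the `America/New_York` zone to represent US Eastern time are assumptions made for the example.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

# Assumption for this sketch: US Eastern time is represented by this IANA zone.
EASTERN = ZoneInfo("America/New_York")


def local_run_time(year, month, day, utc_hour=5):
    """Return the Eastern wall-clock time at which a job fixed at utc_hour UTC fires."""
    fired_utc = datetime(year, month, day, utc_hour, 0, tzinfo=timezone.utc)
    return fired_utc.astimezone(EASTERN)


# The day before the change and the day after it.
before = local_run_time(2022, 3, 12)
after = local_run_time(2022, 3, 14)

print(before.strftime("%Y-%m-%d %H:%M %Z"))  # 2022-03-12 00:00 EST
print(after.strftime("%Y-%m-%d %H:%M %Z"))   # 2022-03-14 01:00 EDT
```

The UTC trigger never moves. Only the local offset changes from UTC-5 to UTC-4, so the same job lands one hour later on the wall clock.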

Within PowerStore, protection policies, snapshot rules, and replication rules are used to apply data protection to a resource. A snapshot rule is created to tell the system when to create a snapshot on a resource. The snapshot rule is then added to a protection policy, and the protection policy is assigned to a resource. When creating a snapshot rule, the user can either choose a fixed interval, such as every several hours, or provide a specific time of day to create a snapshot.

For systems running PowerStoreOS 2.0 or later, when specifying the exact time to create a snapshot, the user also selects a time zone. The time zone drop-down list defaults to the user’s local time zone, but it can be adjusted if the system is physically located in a different time zone. Providing a specific time together with a time zone ensures that seasonal time changes do not impact the creation time of a snapshot.
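To see why storing the time zone with the rule helps, here is a small Python sketch of the general idea. It is not PowerStore’s implementation; the function name `utc_trigger` and the zone name are assumptions for illustration. When the schedule is kept as “local time plus zone”, the UTC instant is recomputed for each day, so the wall-clock time stays fixed.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo


def utc_trigger(year, month, day, local_time, tz_name):
    """Return the UTC instant that matches a wall-clock time in the given zone."""
    local = datetime(year, month, day, local_time.hour, local_time.minute,
                     tzinfo=ZoneInfo(tz_name))
    return local.astimezone(timezone.utc)


# Hypothetical rule: a snapshot at midnight, US Eastern time.
rule_time = time(0, 0)
rule_zone = "America/New_York"

winter = utc_trigger(2022, 3, 12, rule_time, rule_zone)
summer = utc_trigger(2022, 3, 14, rule_time, rule_zone)

print(winter.strftime("%H:%M UTC"))  # 05:00 UTC
print(summer.strftime("%H:%M UTC"))  # 04:00 UTC
```

The snapshot stays at midnight local time, and the UTC trigger moves from 05:00 to 04:00 instead. A rule set inside the hour that is skipped or repeated during a time change would need an explicit policy, which this sketch does not cover.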

For systems that were configured on a release earlier than 2.0 and later upgraded, it is a good idea to review the snapshot rules and ensure that those configured for a particular time of day are set correctly.

So, I ask again: Have you checked your snapshots lately?

Resources

Technical Documentation

- To learn more about the different features that PowerStore provides, see the PowerStore Info Hub.

- For additional information about PowerStore snapshots, see the PowerStore: Snapshots and Thin Clones white paper.

Demos and Hands-on Labs

- To see how PowerStore’s features work and integrate with different applications, see the PowerStore Demos YouTube playlist.

- To gain firsthand experience with PowerStore, see our many Hands-On Labs.

Author: Ryan Poulin

Related Blog Posts

What’s New in PowerStoreOS 3.6?

Thu, 05 Oct 2023 14:22:36 -0000


Dell PowerStoreOS 3.6 is the latest software release on the Dell PowerStore platform.

This release contains a diversified feature set in categories such as hardware, data protection, NVMe/TCP, file, and serviceability. The following list provides a brief overview of the major features in those categories:

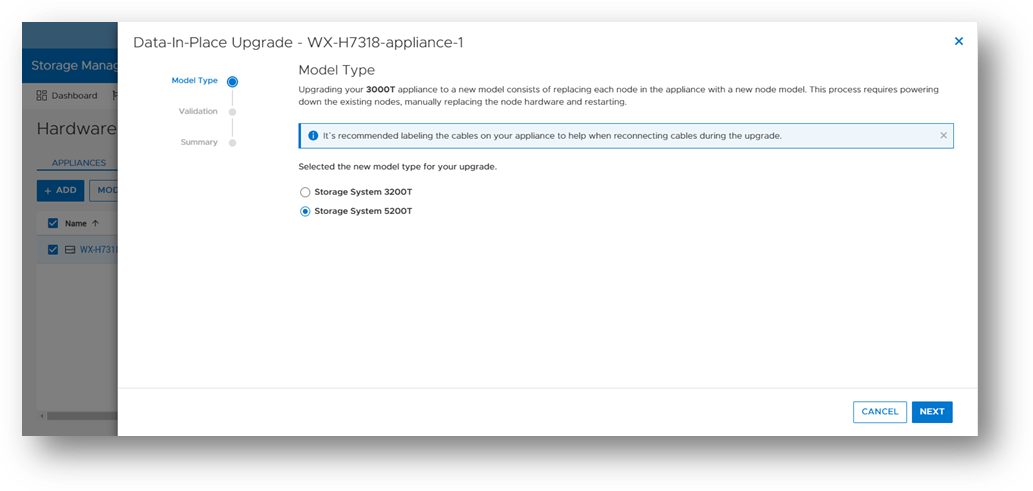

- Hardware: PowerStoreOS 3.6 introduces the highly anticipated Data-In-Place (DIP) upgrade feature, which allows users to perform a hardware refresh while remaining online, with no downtime or host migration.

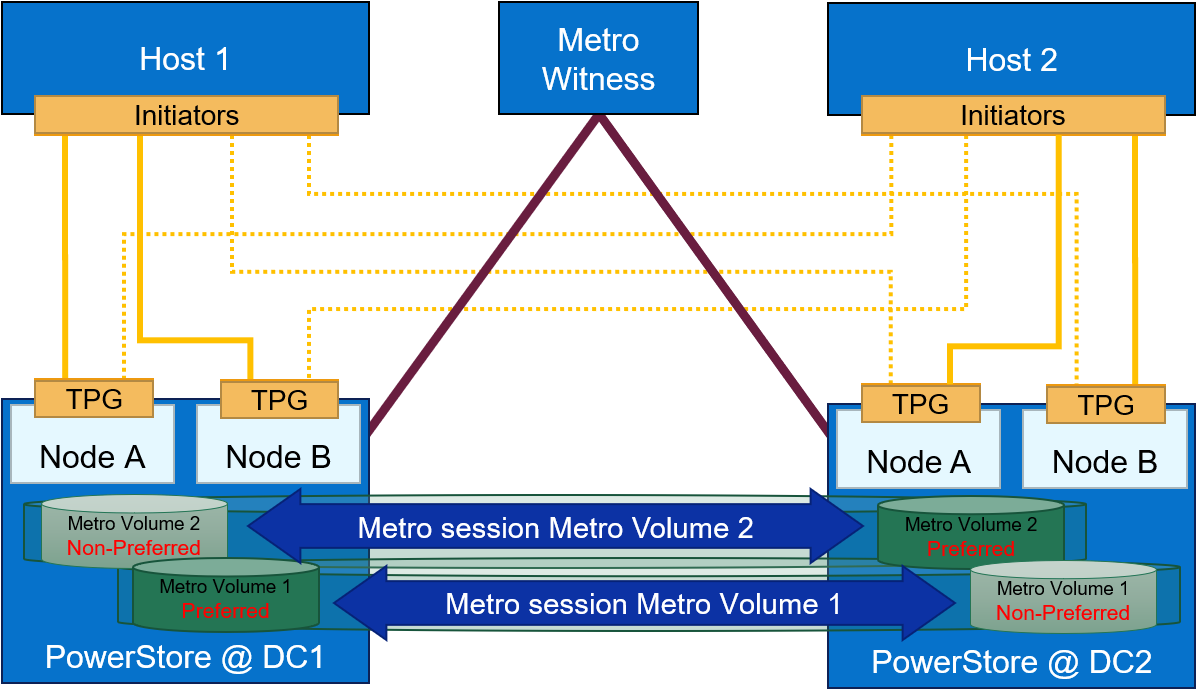

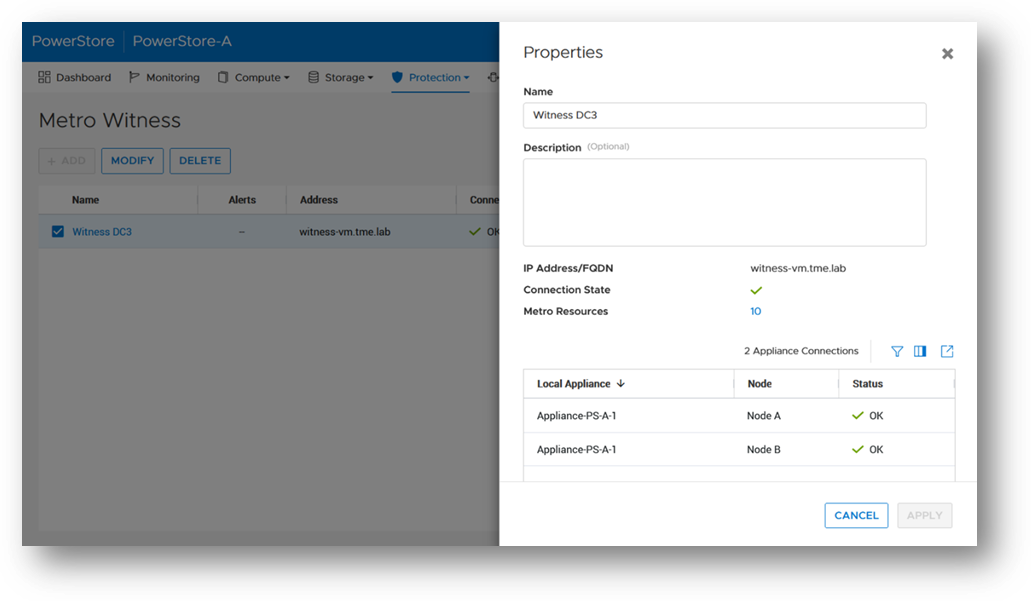

- Data Protection: PowerStoreOS 3.6 now includes support for Metro Witness Server, which allows users to configure a fully active-active configuration for metro volumes across two PowerStore clusters—with more intelligent failure handling, resiliency, and availability during an unplanned outage.

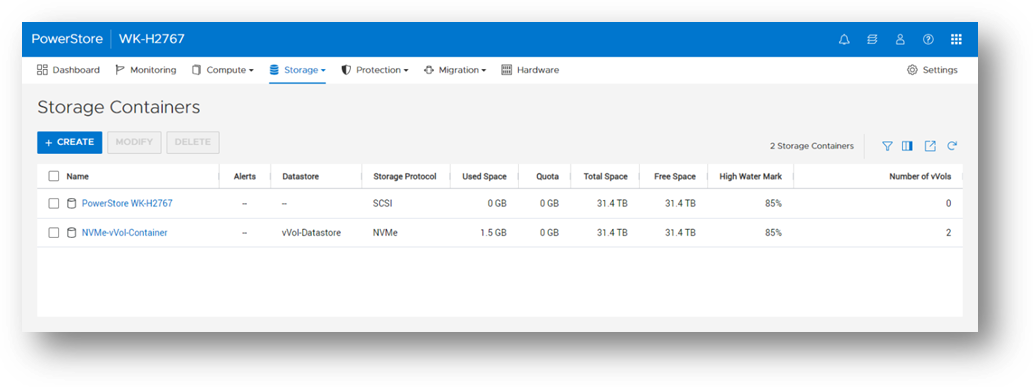

- NVMe/TCP enhancements: Users now have the option to use NVMe storage containers to support host access through the NVMe/TCP protocol for Virtual Volumes (vVols).

- File: Administrators can perform disaster recovery tests within a network bubble, while using an identical configuration as their production NAS server environment.

- Serviceability: To build on the existing remote syslog implementation, PowerStore alerts can now be forwarded to one or more remote syslog servers in PowerStoreOS 3.6.

The following sections also provide information about the Non-Disruptive Upgrade (NDU) paths to the PowerStoreOS 3.6 release.

Hardware

Data-In-Place (DIP) upgrades

Data-In-Place upgrades allow users to convert their PowerStore Appliance from a PowerStore x000T model to a PowerStore x200T model. This is a non-disruptive process because only a single node is upgraded at a time, while the other node continues to service host I/O. Data-In-Place upgrades are performed easily through PowerStore Manager’s Hardware tab.

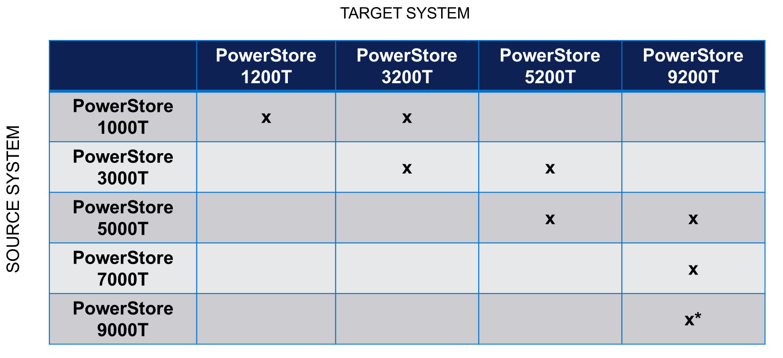

The following table outlines the supported Data-In-Place upgrade paths from the source to target models. For PowerStore 9000T models, only block-optimized upgrades are supported to the PowerStore 9200T model. When upgrading a PowerStore 3000T to a PowerStore 5200T model, additional NVRAM drives are required. When upgrading from a PowerStore 5000T model to a PowerStore 9200T model, a power supply upgrade may also be required.

Note: *Denotes only block-optimized upgrade is supported

Data Protection

Metro Witness server support

Metro Volume support was introduced in PowerStoreOS 3.0. Until now, Metro Volumes required manual intervention to fail over if the preferred site went down. PowerStoreOS 3.6 introduces the Metro Witness server feature. The Metro Witness server runs software that automatically keeps the non-preferred site online and servicing I/O if the preferred site goes offline.

The Metro Witness software is distributed as an RPM package for the SLES and RHEL Linux distributions. The RPM can be deployed on a bare-metal server or a virtual machine. The Metro Witness server and software can easily be set up in minutes!

NVMe/TCP enhancements

NVMe/TCP for Virtual Volumes (vVols)

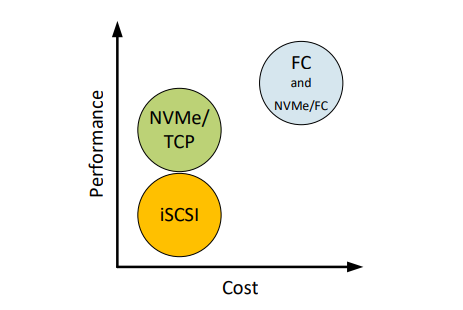

NVMe is a transfer protocol that is specifically designed for connecting solid-state drives (SSDs) to PCIe buses. NVMe over Fabrics (NVMe-oF) is an extension of the NVMe protocol to both TCP and Fibre Channel (FC) transports. PowerStore currently supports both TCP and FC as NVMe-oF transports.

With the VMware vSphere 8.0U1 release, VMware introduced NVMe/TCP support for vVols. As demand for NVMe/TCP support grows, PowerStoreOS 3.6 expands its existing NVMe/TCP support to vVols as well! With this feature, PowerStore will be the industry’s first array to support NVMe/TCP for vVols[1].

From a performance perspective, NVMe/TCP is comparable to FC. From a cost perspective, NVMe/TCP infrastructure is cheaper than FC and can leverage existing network infrastructure. NVMe/TCP has a higher performance benefit than iSCSI and has lower hardware costs than FC. With the addition of NVMe/TCP support for vVols in PowerStoreOS 3.6, we combine performance, cost, and storage/compute granularity for system administrators.

File

Disaster Recovery (DR) tests within a network bubble

Many organizations are required to run disaster recovery (DR) tests using the exact same configuration as production. This includes identical IP addresses and fully qualified domain names. Running these types of tests reduces risk, increases reproducibility, and minimizes the chance of any surprises during an actual disaster recovery event.

These DR tests are carried out in an isolated environment, which is completely siloed from the production environment. Using network segmentation for proper isolation ensures that there is no impact on production or replication. This allows users to meet the requirement of using identical IP addresses and FQDNs during their DR tests.

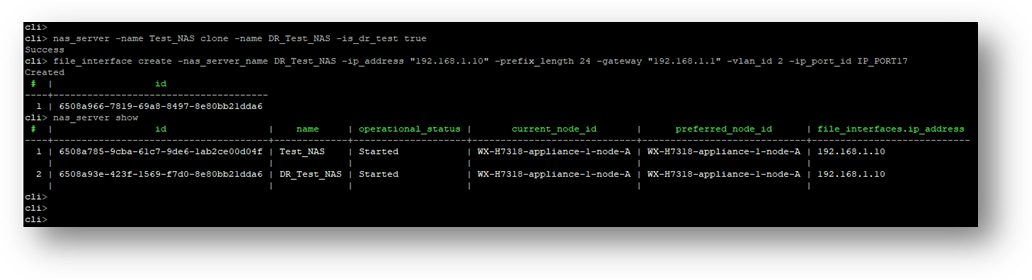

In PowerStoreOS 3.6, the appliance offers the file capability to create a Disaster Recovery Test (DRT) NAS server with a DR test interface. These DRT NAS servers permit a user to create a NAS server with an identical configuration as production, including the ability to duplicate IP addresses.

Note: DRT NAS servers and interfaces can only be configured using the CLI or REST API.

Serviceability

Remote Syslog support for PowerStore alerts

PowerStoreOS 2.0.x introduced support for remote syslog for auditing. These audit types included:

- Config

- System

- Service

- Authentication / Authorization / Logout

PowerStoreOS 3.6 has added support for forwarding of system alerts as well. This equips system administrators with more versatility to monitor their PowerStore appliances from a centralized location.

Upgrade Path

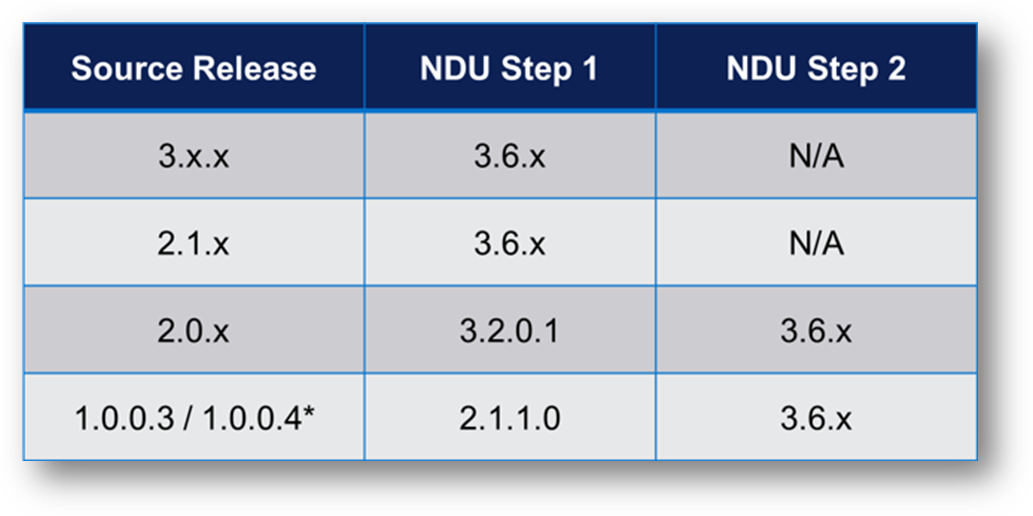

The following table outlines the NDU paths to upgrade to the PowerStoreOS 3.6 release. Depending on your source release, it may be a one or two step upgrade.

Note: *Denotes source release is not supported on PowerStore 500T models

Conclusion

The PowerStoreOS 3.6 release offers numerous unique feature enhancements that deepen the platform. It’s no surprise that PowerStore is deployed in over 90% of Fortune 500 vertical sectors[2]. With PowerStore continuing to deliver on hardware, data protection, NVMe/TCP, file, and serviceability in this release, it’s no secret that the product is extremely adaptable and versatile in modern IT environments.

Resources

For additional information about the features described in this blog, plus other information about the PowerStoreOS 3.6 release, see the following white papers and solution documents:

- Dell PowerStore: Introduction to the Platform

- Dell PowerStore Manager Overview

- Dell PowerStore: File Capabilities

- Dell PowerStore: Replication Technologies

- Dell PowerStore: Virtualization Integration

- Dell PowerStore: Metro Volume

- Dell PowerStore: VMware vSphere Best Practices

- Dell PowerStore: VMware Site Recovery Manager Best Practices

- Dell PowerStore: VMware vSphere with Tanzu and TKG Clusters

- NVMe Transport Performance Comparison

Other Resources

- What’s New in PowerStoreOS 3.5?

- PowerStore Simple Support Matrix

- PowerStore: Info Hub – Product Documentation & Videos

- Dell Technologies PowerStore Info Hub

Author: Louie Sasa

[1] PowerStore is the industry's first array to support NVMe/TCP for vVols. Based on Dell internal analysis, September 2023.

[2] As of January 2023, based on internal analysis of vertical industry categories from 2022 Fortune 500 rankings.

Dell PowerStore: vVol Replication with PowerCLI

Wed, 14 Jun 2023 14:57:44 -0000


Overview

In PowerStoreOS 2.0, we introduced asynchronous replication of vVol-based VMs. In addition to using VMware SRM to manage and control the replication of vVol-based VMs, you can also use VMware PowerCLI to replicate vVols. This blog shows you how.

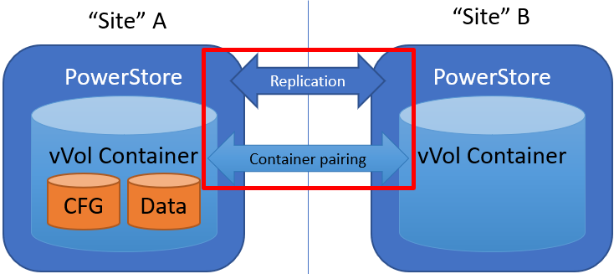

To protect vVol-based VMs, the replication leverages vSphere storage policies for datastores. Placing VMs in a vVol storage container with a vSphere storage policy creates a replication group. The solution uses VASA 3.0 storage provider configurations in vSphere to control the replication of all individual configuration-, swap-, and data vVols in a vSphere replication group on PowerStore. All vVols in a vSphere replication group are managed in a single PowerStore replication session.

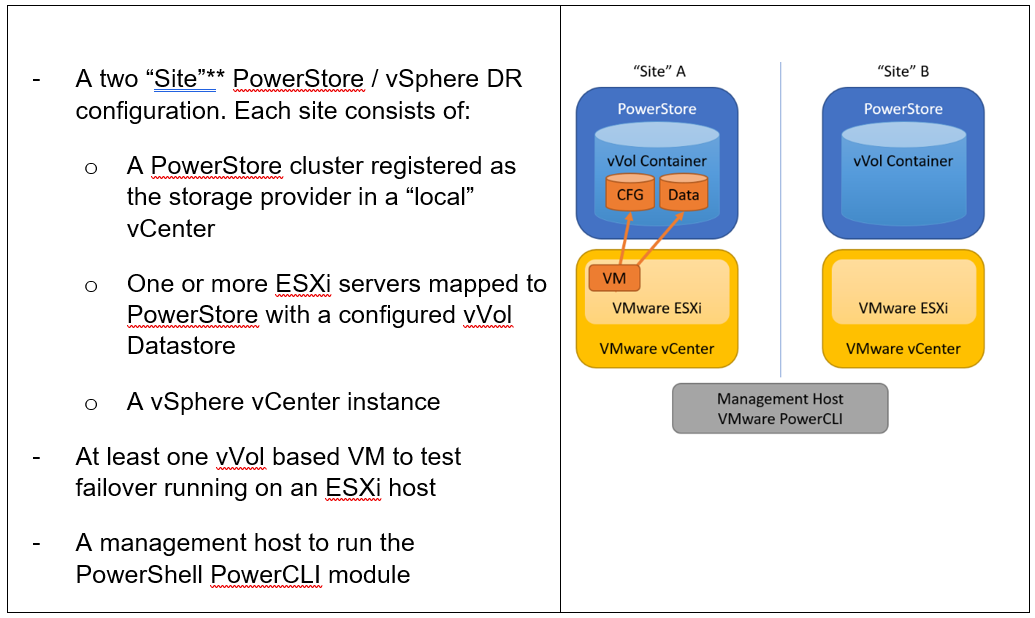

Requirements for PowerStore asynchronous vVol replication with PowerCLI:

**As in VMware SRM, I’m using the term “site” to differentiate between primary and DR installation. However, depending on the use case, all systems could also be located at a single location.

Let’s start with some terminology used in this blog.

| Term | Definition |
| --- | --- |
| PowerStore cluster | A configured PowerStore system that consists of one to four PowerStore appliances. |
| PowerStore appliance | A single PowerStore entity that comes with two nodes (node A and node B). |
| PowerStore Remote Systems (pair) | Definition of a relationship of two PowerStore clusters, used for replication. |
| PowerStore Replication Rule | A replication configuration used in policies to run asynchronous replication. The rule provides information about the remote systems pair and the targeted recovery point objective time (RPO). |
| PowerStore Replication Session | One or more storage objects configured with a protection policy that includes a replication rule. The replication session controls and manages the replication based on the replication rule configuration. |
| VMware vSphere VM Storage Policy | A policy to configure the required characteristics for a VM storage object in vSphere. For vVol replication with PowerStore, the storage policy leverages the PowerStore replication rule to set up and manage the PowerStore replication session. A vVol-based VM consists of a config vVol, swap vVol, and one or more data vVols. |
| VMware vSphere Replication Group | In vSphere, the replication is controlled in a replication group. For vVol replication, a replication group includes one or more vVols. The granularity for failover operations in vSphere is the replication group. A replication group uses a single PowerStore replication session for all vVols in that replication group. |
| VMware Site Recovery Manager (SRM) | A tool that automates failover from a production site to a DR site. |

Preparing for replication

For preparation, similar to VMware SRM, there are some steps required for replicated vVol-based VMs:

Note: When frequently switching between vSphere and PowerStore, an item may not be available as expected. In this case, a manual synchronization of the storage provider in vCenter might be required to make the item immediately available. Otherwise, you must wait for the next automatic synchronization.

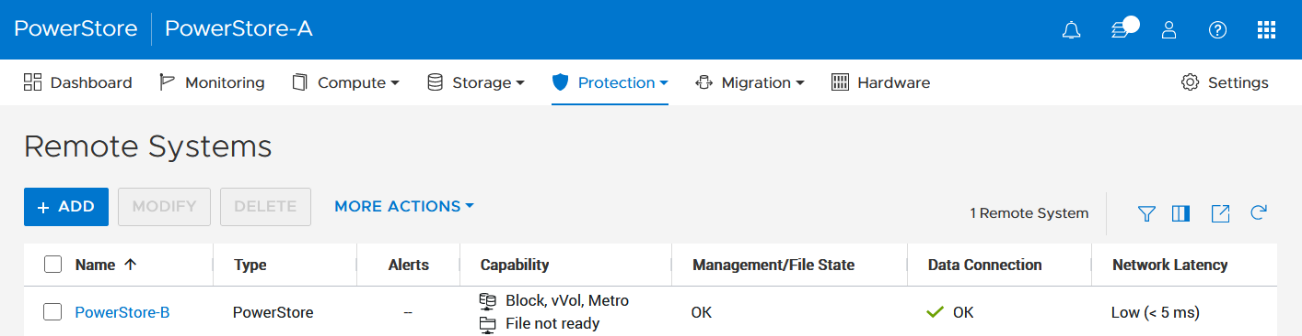

1. Using the PowerStore UI, set up a remote system relationship between participating PowerStore clusters. It’s only necessary to perform this configuration on one PowerStore system. When a remote system relationship is established, it can be used by both PowerStore systems.

Select Protection > Remote Systems > Add remote system.

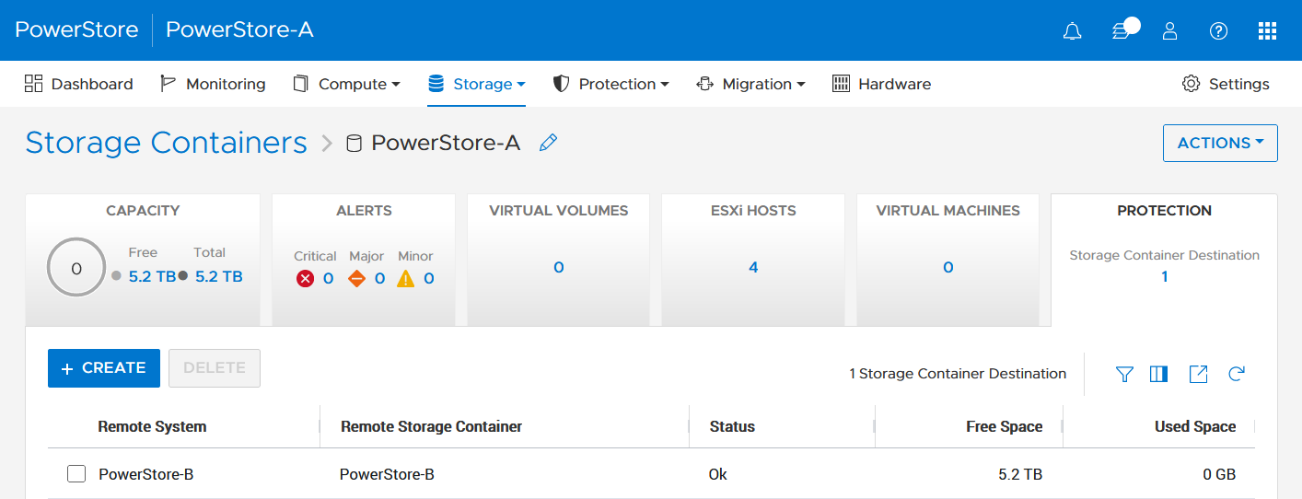

When there is only a single storage container on each PowerStore in a remote system relationship, PowerStoreOS also creates the container protection pairing required for vVol replication.

To check the configuration or create storage container protection pairing when more storage containers are configured, select Storage > Storage Containers > [Storage Container Name] > Protection.

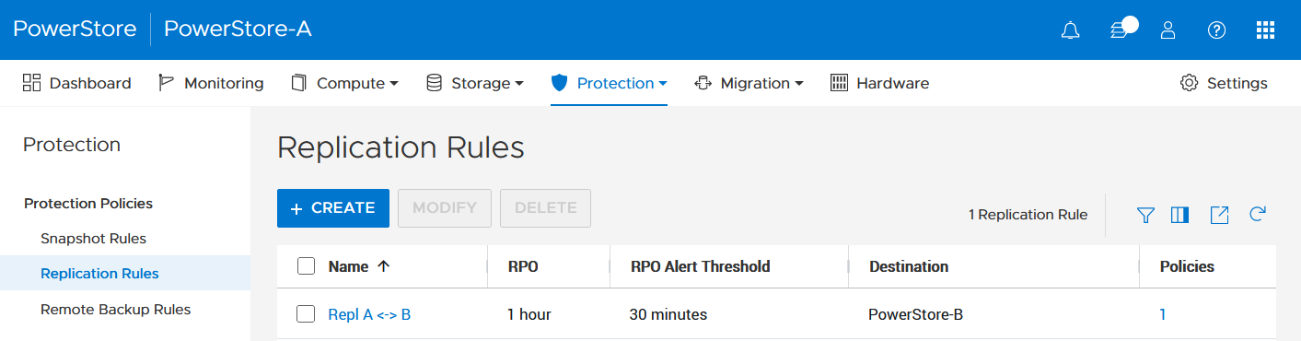

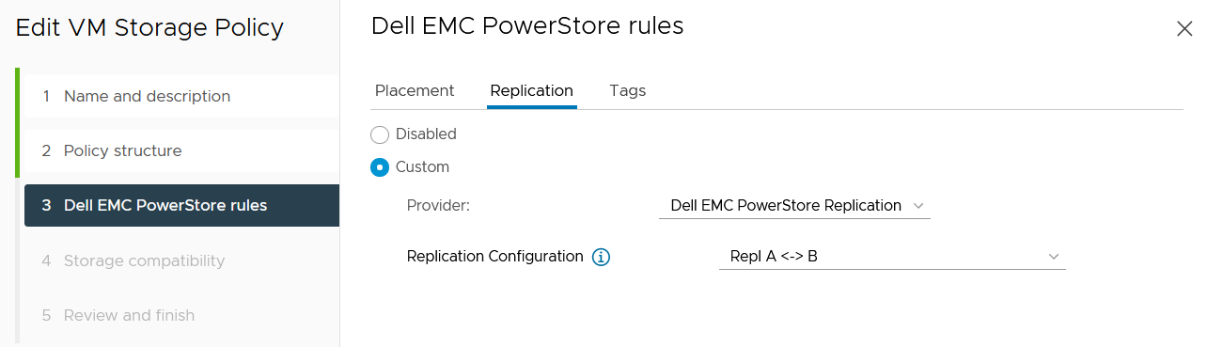

2. The VMware Storage Policy (which is created in a later step) requires existing replication rules on both PowerStore systems, ideally with the same characteristics. For this example, the replication rule replicates from PowerStore-A to PowerStore-B with an RPO of one hour, and 30 minutes for the RPO Alert Threshold.

Select Protection > Protection Policies > Replication Rules.

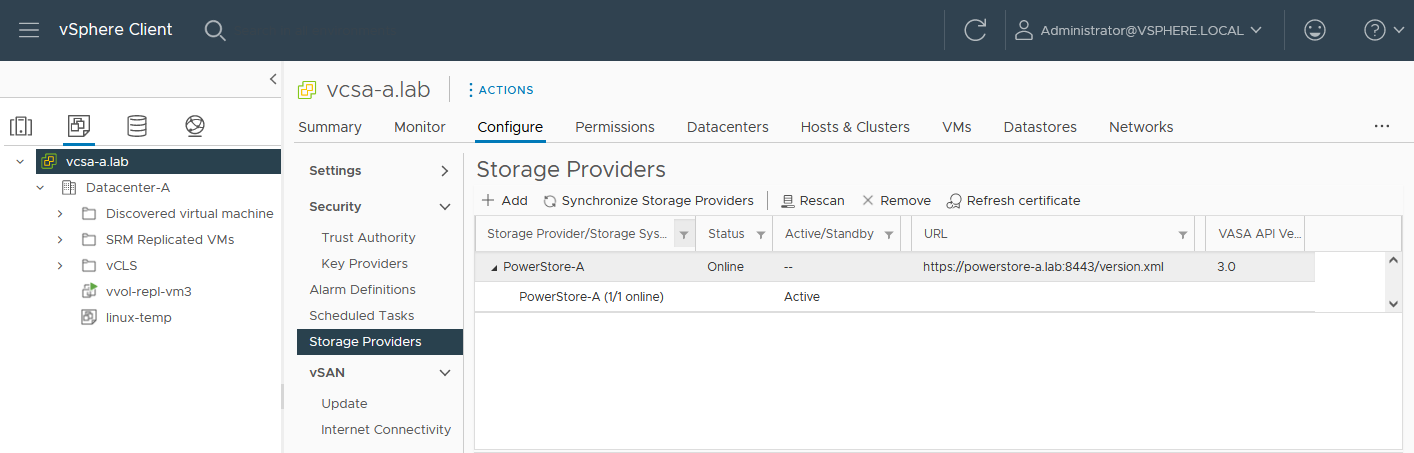

3. As mentioned in the Overview, VASA 3.0 is used for the communication between PowerStore and vSphere. If not configured already, register each local PowerStore as the storage provider in its corresponding vCenter instance.

In the vSphere Client, select [vCenter server] > Configuration > Storage Providers.

Use https://<PowerStore>:8443/version.xml as the URL with the PowerStore user and password to register the PowerStore cluster.

Alternatively, use PowerStore for a bidirectional registration. When vCenter is registered in PowerStore, PowerStoreOS gets more insight about running VMs for that vCenter. However, in the current release, PowerStoreOS can only handle a single vCenter connection for VM lookups. When PowerStore is used by more than one vCenter, it’s still possible to register a PowerStore in a second vCenter as the storage provider, as mentioned before.

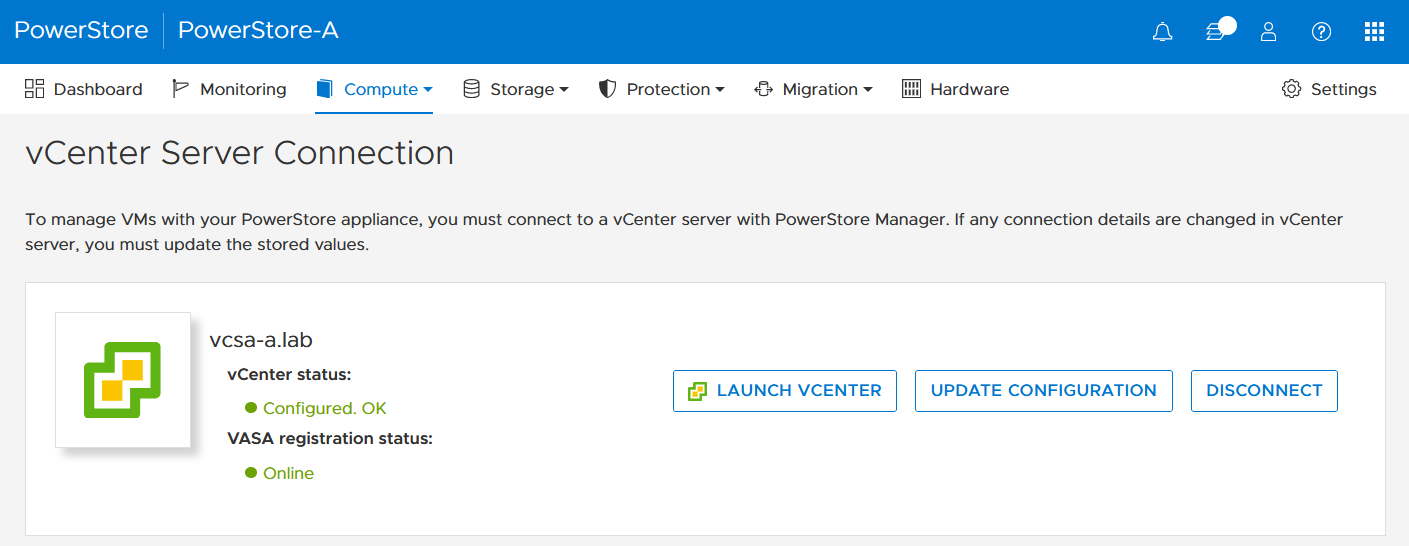

In the PowerStore UI, select Compute > vCenter Server Connection.

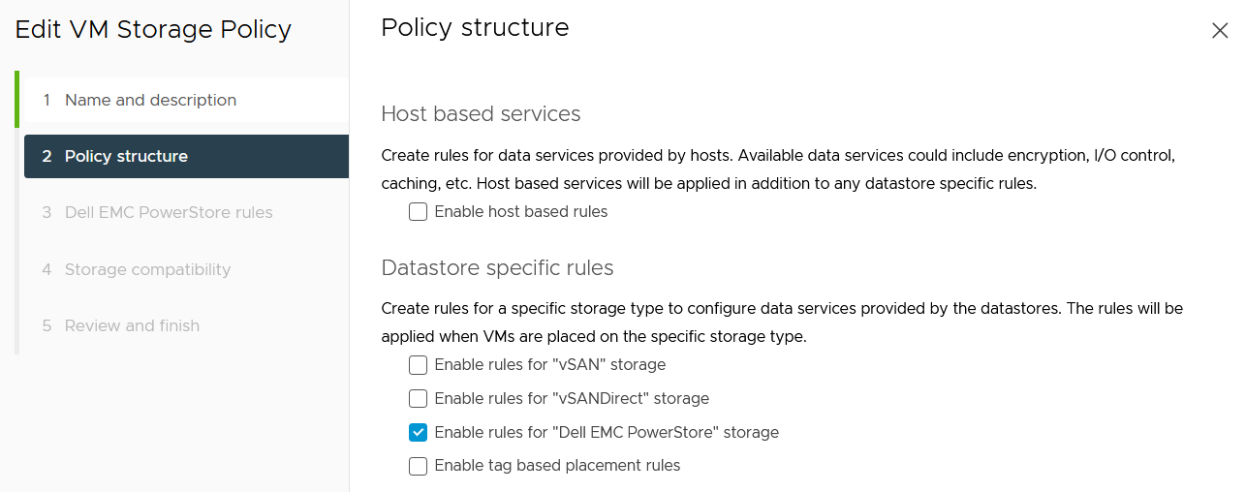

4. Set up a VMware storage policy with a PowerStore replication rule on both vCenters.

The example script in the section Using PowerCLI and on myScripts4u@github requires the same storage policy name on both vCenters.

In the vSphere Client, select Policies and Profiles > VM Storage Policies > Create.

Enable “Dell EMC PowerStore” storage for datastore specific rules:

then choose the PowerStore replication rule:

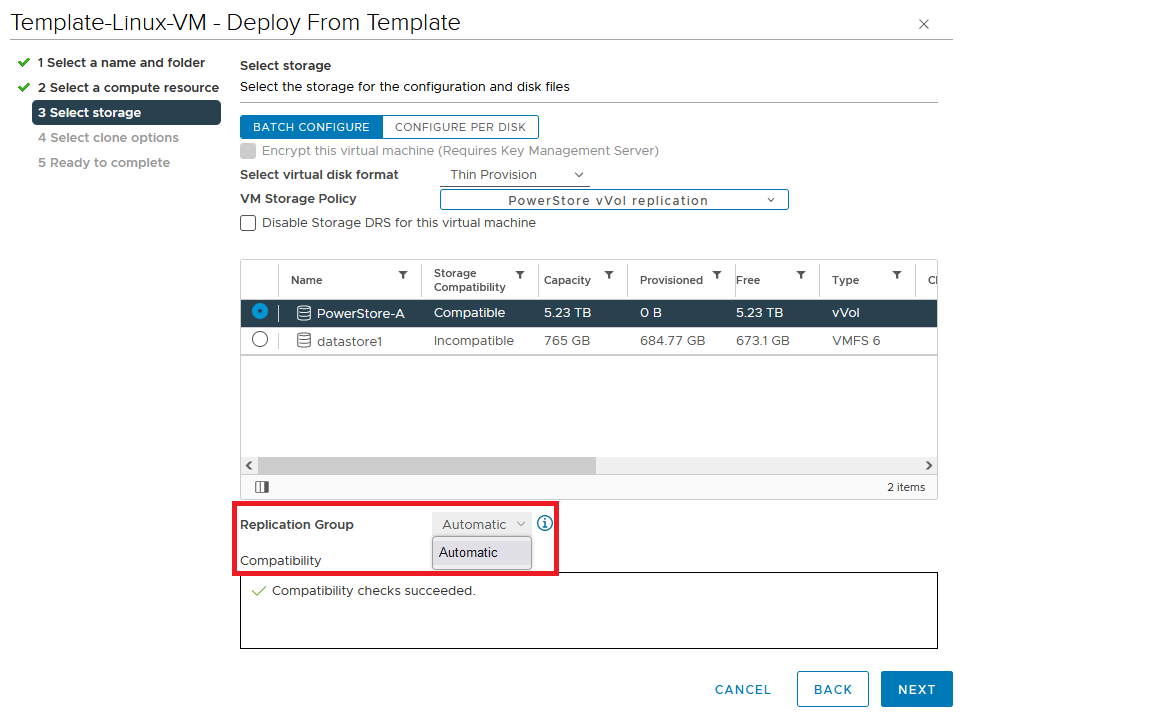

5. Create a VM on a vVol storage container and assign the storage protection policy with replication.

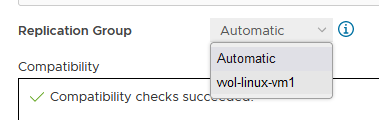

When a storage policy with replication is set up for a VM, you must specify a replication group. Selecting “automatic” creates a replication group with the name of the VM. Multiple VMs can be protected in one replication group.

When deploying another VM on the same vVol datastore, the name of the other replicated VM appears in the list for the Replication Group.

All vVol replication operations work at replication group granularity. For instance, it’s not possible to fail over only a single VM of a replication group.

That’s it for the preparation! Let’s continue with PowerCLI.

Using PowerCLI

Disclaimer: The PowerShell snippets shown below are developed only for educational purposes and provided only as examples. Dell Technologies and the blog author do not guarantee that this code works in your environment or can provide support in running the snippets.

To get the required PowerCLI modules, start PowerShell or PowerShell ISE and use Install-Module to install VMware.PowerCLI:

PS C:\> Install-Module -Name VMware.PowerCLI

The following example uses the replication group “vvol-repl-vm1”, which includes the virtual machines “vvol-repl-vm1” and “vvol-repl-vm2”. Because a replication group name might not be consistent with a VM to failover, the script uses the virtual machine name “vvol-repl-vm2” to get the replication group for failover.
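The lookup from a VM name to its replication group, and from there to every VM that fails over with it, can be pictured with a small model. The Python sketch below is independent of PowerCLI and purely illustrative: the dictionary and the helper `group_for_vm` are hypothetical, and only the group and VM names come from the example above.

```python
# Hypothetical in-memory model: replication group name -> member VMs.
replication_groups = {
    "vvol-repl-vm1": ["vvol-repl-vm1", "vvol-repl-vm2"],
}


def group_for_vm(vm_name, groups):
    """Return (group name, all member VMs) for the group that contains vm_name."""
    for group_name, members in groups.items():
        if vm_name in members:
            return group_name, list(members)
    raise LookupError(f"{vm_name} is not in any replication group")


# Failing over "vvol-repl-vm2" means failing over its whole group.
group, affected = group_for_vm("vvol-repl-vm2", replication_groups)
print(group)     # vvol-repl-vm1
print(affected)  # ['vvol-repl-vm1', 'vvol-repl-vm2']
```

In the PowerCLI script, the same two questions are answered by querying the replication group for the VM and then listing the VMs in that group.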

Failover

This section shows an example failover of a vVol-based VM “vvol-vm2” from a source vCenter to a target vCenter.

1. Load modules, allow PowerCLI to connect to multiple vCenter instances, and set variables for the VM, vCenters, and vCenter credentials. The last two commands in this step establish the connection to both vCenters.

Import-Module VMware.VimAutomation.Core

Import-Module VMware.VimAutomation.Common

Import-Module VMware.VimAutomation.Storage

Set-PowerCLIConfiguration -DefaultVIServerMode 'Multiple' -Scope ([VMware.VimAutomation.ViCore.Types.V1.ConfigurationScope]::Session) -Confirm:$false | Out-Null

$virtualmachine = "vvol-vm2" # Enter VM name of a vVol VM which should failover

$vcUser = 'administrator@vsphere.local' # Change this to your VC username

$vcPass = 'xxxxxxxxxx' # VC password

$siteA = "vcsa-a.lab" # first vCenter

$siteB = "vcsa-b.lab" # second vCenter

Connect-VIServer -User "$vcUser" -Password "$vcPass" -Server $siteA -WarningAction SilentlyContinue

Connect-VIServer -User "$vcUser" -Password "$vcPass" -Server $siteB -WarningAction SilentlyContinue

2. Get replication group ($rg), replication group pair ($rgPair), and storage policy ($stoPol) for the VM. Because a replication group may have additional VMs, all VMs in the replication group are stored in $rgVMs.

$vm = get-vm $virtualmachine

# find source vCenter – this allows the script to failover (Site-A -> Site-B) and failback (Site-B -> Site-A)

$srcvCenter=$vm.Uid.Split(":")[0].Split("@")[1]

if ( $srcvCenter -like $siteA ) {

$siteSRC=$siteA

$siteDST=$siteB

} else {

$siteSRC=$siteB

$siteDST=$siteA

}

$rg = get-spbmreplicationgroup -server $siteSRC -VM $vm

$rgPair = Get-SpbmReplicationPair -Source $rg

$rgVMs=(Get-SpbmReplicationGroup -server $siteSRC -Name $rg| get-vm)

$stoPol = ( $vm | Get-SpbmEntityConfiguration).StoragePolicy.Name

3. Try a graceful shutdown of the VMs in $rgVMs and wait ten seconds. If a VM is still powered on after three further ten-second checks, power it off.

$rgVMs | ForEach-Object {

if ( (get-vm $_).PowerState -eq "PoweredOn")

{

stop-vmguest -VM $_ -confirm:$false -ErrorAction silentlycontinue | Out-Null

start-sleep -Seconds 10

$cnt=1

while ((get-vm $_).PowerState -eq "PoweredOn" -AND $cnt -le 3 ) {

Start-Sleep -Seconds 10

$cnt++

}

if ((get-vm $_).PowerState -eq "PoweredOn") {

stop-vm $_ -Confirm:$false | Out-Null

}

}

}

4. It’s now possible to prepare and execute the failover. During failover, a final synchronization before the failover ensures that all changes are replicated to the destination PowerStore. At the end, $vmxfile contains the vmx files that are required to register the VMs at the destination. When the failover is completed, the vVols at the failover destination are available for further steps.

$syncRg = Sync-SpbmReplicationGroup -PointInTimeReplicaName "prePrepSync" -ReplicationGroup $rgpair.target

$prepareFailover = start-spbmreplicationpreparefailover $rgPair.Source -Confirm:$false -RunAsync

Wait-Task $prepareFailover

$startFailover = Start-SpbmReplicationFailover $rgPair.Target -Confirm:$false -RunAsync

$vmxfile = Wait-Task $startFailover

5. To clean up on the failover source vCenter, we remove the registrations of the failed-over VMs. On the failover target, we search for a host ($vmhostDST) and register, start, and set the vSphere policy on the VMs. The array $newDstVMs will contain VM information at the destination for the final step.

$rgvms | ForEach-Object {

$_ | Remove-VM -ErrorAction SilentlyContinue -Confirm:$false

}

$vmhostDST = get-vmhost -Server $siteDST | select -First 1

$newDstVMs= @()

$vmxfile | ForEach-Object {

$newVM = New-VM -VMFilePath $_ -VMHost $vmhostDST

$newDstVMs += $newVM

}

$newDstVms | forEach-object {

$vmtask = start-vm $_ -ErrorAction SilentlyContinue -Confirm:$false -RunAsync

wait-task $vmtask -ErrorAction SilentlyContinue | out-null

$_ | Get-VMQuestion | Set-VMQuestion -Option 'button.uuid.movedTheVM' -Confirm:$false

$hdds = Get-HardDisk -VM $_ -Server $siteDST

Set-SpbmEntityConfiguration -Configuration $_ -StoragePolicy $stopol -ReplicationGroup $rgPair.Target | out-null

Set-SpbmEntityConfiguration -Configuration $hdds -StoragePolicy $stopol -ReplicationGroup $rgPair.Target | out-null

}

6. The final step enables protection from the new source.

start-spbmreplicationreverse $rgPair.Target | Out-Null

$newDstVMs | foreach-object {

Get-SpbmEntityConfiguration -HardDisk (Get-HardDisk -VM $_ -Server $siteDST) -VM $_ | format-table -AutoSize

}

Additional operations

Other operations for the VMs are a test failover and an unplanned failover on the destination. The test failover uses the last synchronized vVols on the destination system and allows us to register and run the VMs there. The vVols on the replication destination where the test is running are not changed. All changes are stored in a writeable snapshot, which is deleted when the test failover is stopped.

Test failover

For a test failover, follow Step 1 through Step 3 from the failover example and continue with the test failover. Again, $vmxfile contains VMX information for registering the test VMs at the replication destination.

$sync=Sync-SpbmReplicationGroup -PointInTimeReplicaName "test" -ReplicationGroup $rgpair.target

$prepareFailover = start-spbmreplicationpreparefailover $rgPair.Source -Confirm:$false -RunAsync

$startFailover = Start-SpbmReplicationTestFailover $rgPair.Target -Confirm:$false -RunAsync

$vmxfile = Wait-Task $startFailover

It’s now possible to register the test VMs. To avoid IP network conflicts, disable the NICs, as shown here.

$newDstVMs= @()

$vmhostDST = get-vmhost -Server $siteDST | select -First 1

$vmxfile | ForEach-Object {

write-host $_

$newVM = New-VM -VMFilePath $_ -VMHost $vmhostDST

$newDstVMs += $newVM

}

$newDstVms | forEach-object {

get-vm -name $_.name -server $siteSRC | Start-VM -Confirm:$false -RunAsync | out-null # Start VM on Source

$vmtask = start-vm $_ -server $siteDST -ErrorAction SilentlyContinue -Confirm:$false -RunAsync

wait-task $vmtask -ErrorAction SilentlyContinue | out-null

$_ | Get-VMQuestion | Set-VMQuestion -Option 'button.uuid.movedTheVM' -Confirm:$false

while ((get-vm -name $_.name -server $siteDST).PowerState -eq "PoweredOff" ) {

Start-Sleep -Seconds 5

}

$_ | get-networkadapter | Set-NetworkAdapter -server $siteDST -connected:$false -StartConnected:$false -Confirm:$false

}

After stopping and deleting the test VMs at the replication destination, use Stop-SpbmReplicationTestFailover to stop the failover test. In a new PowerShell or PowerCLI session, perform Steps 1 and 2 from the failover section to prepare the environment, then continue with the following commands.

$newDstVMs | foreach-object {

stop-vm -Confirm:$false $_

remove-vm -Confirm:$false $_

}

Stop-SpbmReplicationTestFailover $rgpair.target

Unplanned failover

For an unplanned failover, the cmdlet Start-SpbmReplicationFailover provides the option -unplanned which can be executed against a replication group on the replication destination for immediate failover in case of a DR. Because each infrastructure and DR scenario is different, I only show the way to run the unplanned failover of a single replication group in case of a DR situation.

To run an unplanned failover, the script requires the replication target group in $RgTarget. The group pair information is only available when connected to both vCenters. To get a mapping of replication groups, use Step 1 from the Failover section and execute the Get-SpbmReplicationPair cmdlet:

PS> Get-SpbmReplicationPair | Format-Table -autosize

Source Group Target Group

------------ ------------

vm1          c6c66ee6-e69b-4d3d-b5f2-7d0658a82292

The following part shows how to execute an unplanned failover for a known replication group. The example connects to the DR vCenter and uses the replication group id as an identifier for the unplanned failover. After the failover is executed, the script registers the VMX in vCenter to bring the VMs online.

Import-Module VMware.VimAutomation.Core

Import-Module VMware.VimAutomation.Common

Import-Module VMware.VimAutomation.Storage

$vcUser = 'administrator@vsphere.local' # Change this to your VC username

$vcPass = 'xxxxxxxxxx' # VC password

$siteDR = "vcsa-b.lab" # DR vCenter

$RgTarget = "c6c66ee6-e69b-4d3d-b5f2-7d0658a82292" # Replication Group Target – required from replication Source before running the unplanned failover

# to get the information, run Get-SpbmReplicationPair | Format-Table -autosize when connected to both vCenters

Connect-VIServer -User "$vcUser" -Password "$vcPass" -Server $siteDR -WarningAction SilentlyContinue

# initiate the failover and preserve vmxfiles in $vmxfile

$vmxfile = Start-SpbmReplicationFailover -server $siteDR -Unplanned -ReplicationGroup $RgTarget

$newDstVMs= @()

$vmhostDST = get-vmhost -Server $siteDR | select -First 1

$vmxfile | ForEach-Object {

write-host $_

$newVM = New-VM -VMFilePath $_ -VMHost $vmhostDST

$newDstVMs += $newVM

}

$newDstVms | forEach-object {

$vmtask = start-vm $_ -server $siteDR -ErrorAction SilentlyContinue -Confirm:$false -RunAsync

wait-task $vmtask -ErrorAction SilentlyContinue | out-null

$_ | Get-VMQuestion | Set-VMQuestion -Option 'button.uuid.movedTheVM' -Confirm:$false

}

To recover from an unplanned failover after both vCenters are back up, perform the following required steps:

- Add a storage policy with the previous target recovery group to VMs and associated HDDs.

- Shut down (just in case) and remove the VMs on the previous source.

- Start reprotection of VMs and associated HDDs.

- Use Start-SpbmReplicationReverse to reestablish the protection of the VMs.

Conclusion

Even though Dell PowerStore and VMware vSphere do not provide native vVol failover handling, this example shows that vVol failover operations are doable with some script work. This blog should give you a quick introduction to a script-based vVol failover mechanism, perhaps for a proof of concept in your environment. Note that the script would need to be extended, for example with additional error handling, before running in a production environment.

Resources

- GitHub - myscripts4u/PowerStore-vVol-PowerCLI: Dell PowerStore vVol failover with PowerCLI

- Dell PowerStore: Replication Technologies

- Dell PowerStore: VMware Site Recovery Manager Best Practices

- VMware PowerCLI Installation Guide

Author: Robert Weilhammer, Principal Engineering Technologist

https://www.xing.com/profile/Robert_Weilhammer