Edge AI Integration in Retail: Revolutionizing Operational Efficiency

Mon, 12 Feb 2024 11:43:11 -0000

|Read Time: 0 minutes

Edge AI plays a significant role in the digital transformation of retail warehouses and stores, offering benefits in terms of efficiency, responsiveness, and enhanced customer experience in the following areas:

- Real-time analytics—Edge AI enables real-time analytics for monitoring and optimizing warehouse management systems (WMS). This includes tracking inventory levels, predicting demand, and identifying potential issues in the supply chain. In the store, real-time analytics can be applied to monitor customer behavior, track product popularity, and adjust pricing or promotions dynamically based on the current context using AI algorithms that analyze this data and provide personalized recommendations.

- Inventory management—Edge AI can improve inventory management by implementing real-time tracking systems. This helps in reducing stockouts, preventing overstock situations, and improving the overall supply chain efficiency. On the store shelves, edge devices equipped with AI can monitor product levels, automate reordering processes, and provide insights into shelf stocking and arrangement.

- Optimized supply chain—Edge AI assists in optimizing the supply chain by analyzing data at the source. This includes predicting delivery times, identifying inefficiencies, and dynamically adjusting logistics routes for both warehouses and stores.

- Autonomous systems—Edge AI facilitates the deployment of autonomous systems, such as autonomous robots, conveyor belts, robotic arms, automated guided vehicles (AGVs), and collaborative robotics (cobots). Autonomous systems in the store can include checkout processes, inventory monitoring, and even in-store assistance.

- Predictive maintenance—In both warehouses and stores, Edge AI can enable predictive maintenance of equipment. By analyzing data from sensors on machinery, it can predict when equipment is likely to fail, reducing downtime and maintenance costs.

- Offline capabilities—Edge AI systems can operate offline, ensuring that critical functions can continue even when there is a loss of internet connectivity. This is especially important in retail environments where uninterrupted operations are crucial.

The Operational Complexity Behind the Edge-AI Transformation

The scale and complexity of Edge-AI transformation in retail are influenced by factors such as the number of edge devices, data volume, AI model complexity, real-time processing requirements, integration challenges, security considerations, scalability, and maintenance needs.

The Scalability and Maintenance Challenge

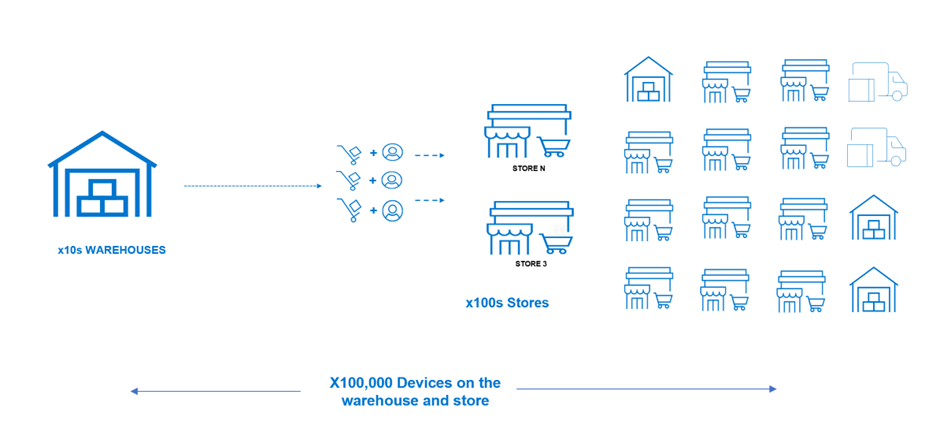

A mid-size retail organization is composed of tens of warehouses and hundreds of stores spread across different locations. In addition to that, it needs to support dozens of external suppliers that also need to become an integral part of the supply chain system. To enable Edge-AI retail, it will need to introduce many new sensors, devices, and systems that will enable it to automate a large part of its daily operation. This will result in hundreds of thousands of devices across the stores and warehouses.

Figure 1. The Edge-AI device scale challenge

The scale of the transformation depends on the number of edge devices deployed in retail environments. These devices could include smart cameras, sensors, RFID readers, and other internet of things (IoT) devices. The ability to scale the Edge-Ai solution as the retail operation grows is an essential factor. Scalability considerations involve not only the number of devices but also the adaptability of the overall architecture to accommodate increased data volume and computational requirements.

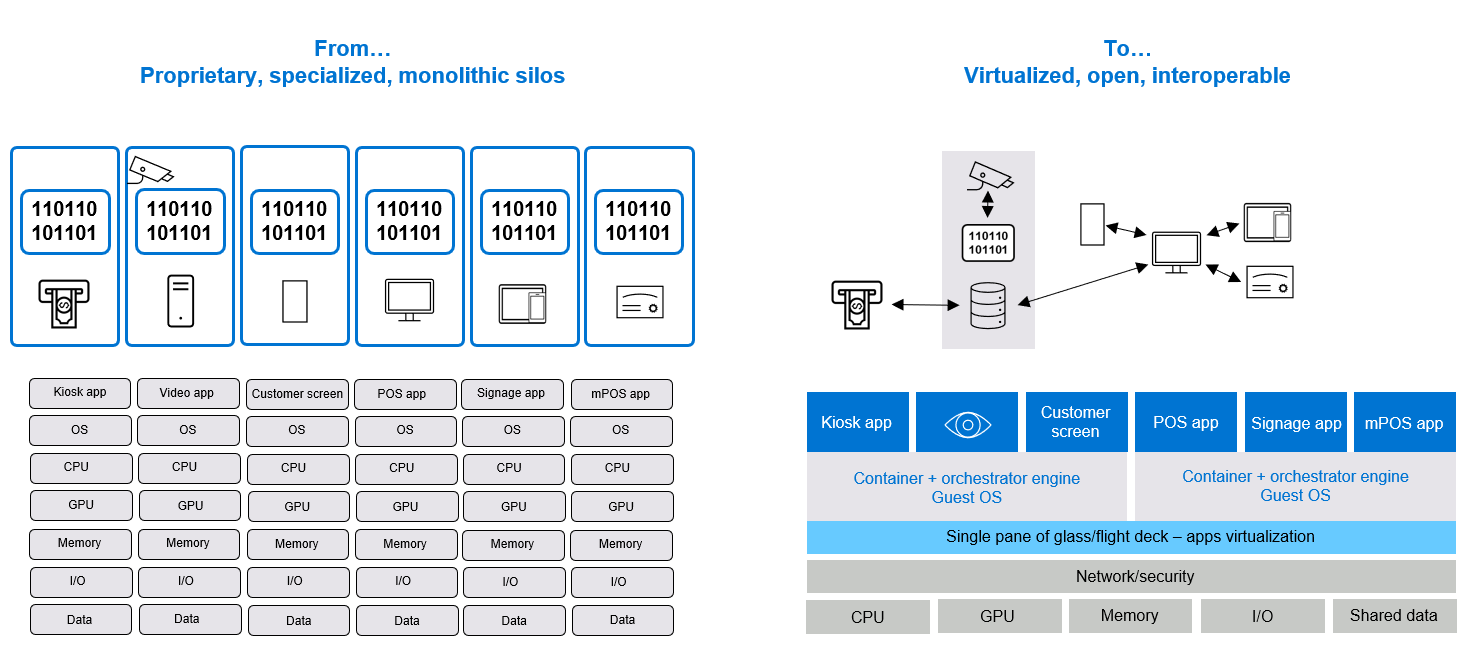

Breaking Silos Through Cloud Native and Cloud Transformation

Each device comes with its proprietary stack, making the overall management and maintenance of such a diverse and highly fragmented environment extremely challenging. To address that, Edge-Ai transformation also includes the transformation to a more common cloud-native and cloud-based infrastructure. This level of modernization is quite massive and costly and cannot happen in one go.

Figure 2. Cloud native and cloud transformation break the device management silos challenges

This brings the need to handle the integration with existing systems (brownfield) to enable smoother transformation. This often involves integration with existing retail systems, such as point-of-sale systems, inventory management software, and customer relationship management tools.

NativeEdge and Centerity Solution to Simplify Retail Edge-AI Transformation

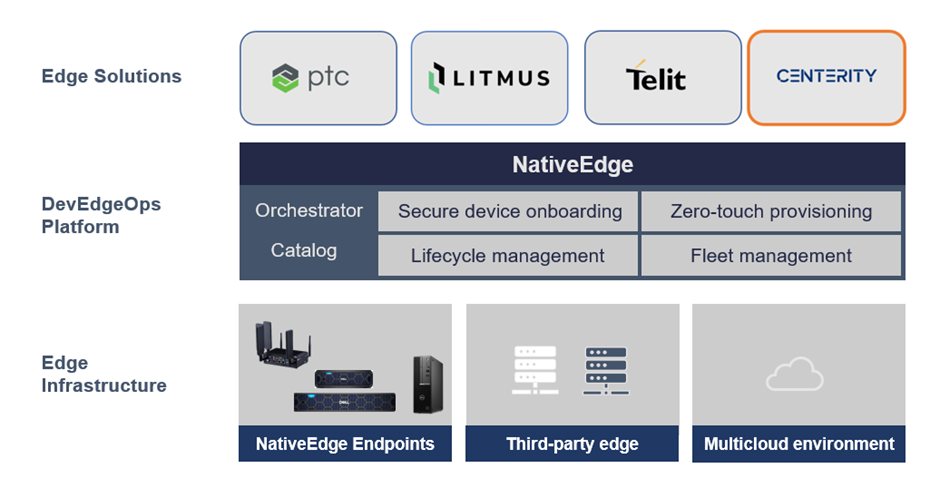

Dell NativeEdge serves as a generic platform for deploying and managing edge devices and applications at the edge of the network. One notable addition in the latest version of NativeEdge is the ability to deliver an end-to-end solution on top of the platform that includes PTC, Litmus, Telit, Centerity, and so on. This capability allows users to get a consistent and simple management from Bare-Metal provisioning to a fully automated full-blown solution.

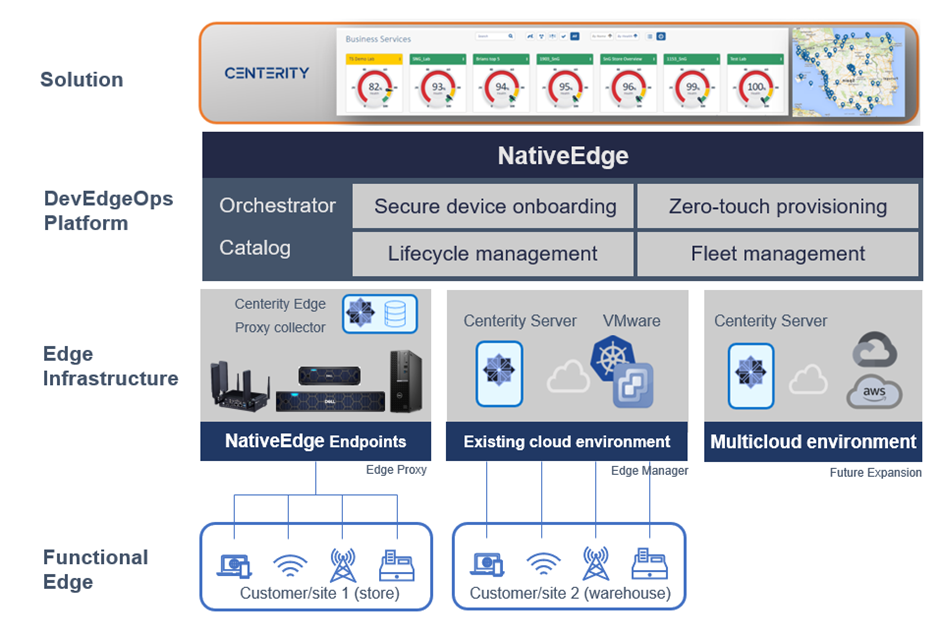

Figure 3. Using NativeEdge and Centerity as part of the open edge solution stack

In this blog, we demonstrate the benefits behind the integration of NativeEdge and Centerity that simplify the retail Edge-AI transformation challenges.

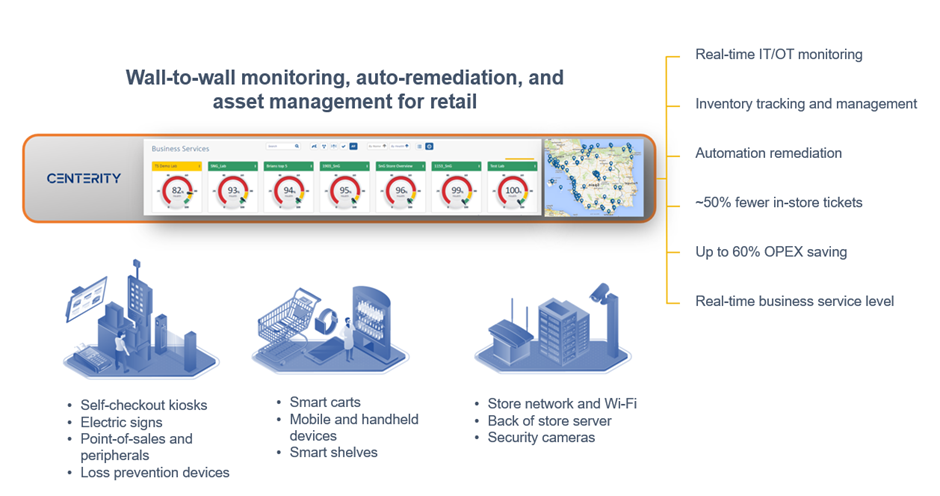

Introduction to Centerity

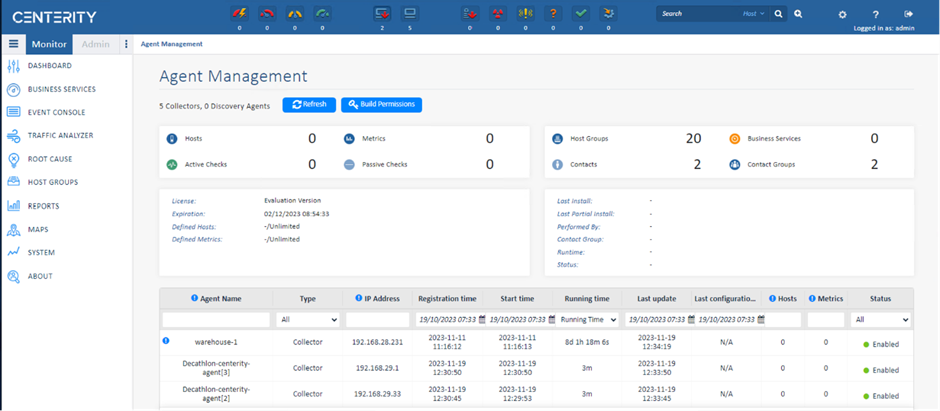

Centerity CSM² is a purpose-built monitoring, auto-remediation, and asset management platform for enterprise retailers that provides proactive wall-to-wall observability of the in-store technology stack. The key part in the Centrity architecture is the Centerity Manager is responsible for collecting all the data from the edge devices into a common dashboard.

Figure 4. Centerity retail management and monitoring

Using NativeEdge and Centerity to Automate the Entire Retail Operation

The following are the architecture choices made to address the Edge-AI transformation challenges with Dell NativeEdge as the edge platform and Centerity as the asset management and monitoring for both the retail warehouse and store. In this case, we have two sites, one representing a warehouse where we connect to the customer’s existing environment running on VMware infrastructure, and a retail store running in a different location.

Note: The Centrify Proxy (customer site-1 in the following figure) is used to aggregate multiple remote devices through a single network connection.

Figure 5. Using NativeEdge and Centerity to fully automate and manage and retail warehouse and store

Since the store is often limited by infrastructure capacity, we will use a gateway to aggregate the data from all the devices. For this purpose, we will use a NativeEdge Endpoint as a gateway and install the Centerity monitoring agent on it. The monitoring agent will act as a proxy that on one hand connects to the individual devices in the store and, on the other hand, sends this information back to the Centerity Manager to aggregate all this information into one control plane. In this case, the warehouse runs on a private cloud based on VMware and represents a central data center. Since we have more capacity on this environment, we will collect the data directly from the device to the manager without the need for a proxy agent. The architecture is also set to enable future expansion to public clouds such as AWS and GCP.

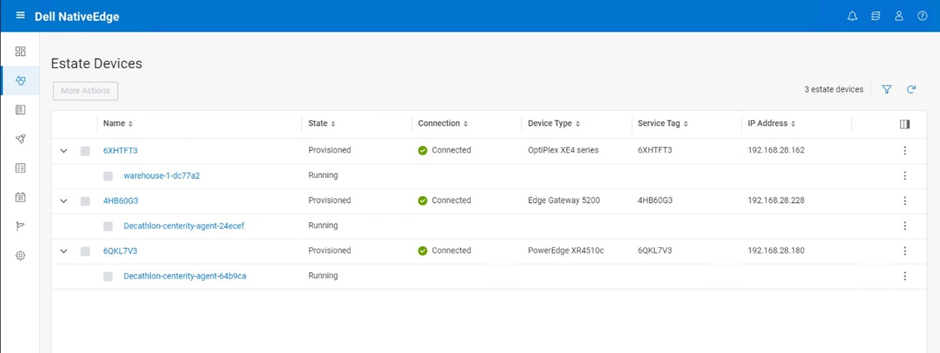

Step 1: Use NativeEdge for zero-touch secure on-boarding of the edge infrastructure

Secure device onboarding—In this step, we will onboard three different edge compute classes (PowerEdge, OptiPlex, and Gateway) to represent a warehouse facility with diverse set of devices. NativeEdge will treat each of these devices as a separate ECE instance and, thus, provide a consistent management layer to all the devices, regardless of their compute class.

Figure 6. Zero-touch provisioning of edge infrastructure from BareMetal to cloud

Step 2: Deploy Centerity solution on top of NativeEdge infrastructure

This phase is broken down into two parts; The first is provisioning the Centerity Manager which is the main component and then provision the edge proxy on the target store and warehouse.

Step 2.1: Deploy and manage the Centerity Manager on VMware (Site 2)

To do that:

- Choose the on-prem Centerity server catalog item from the NativeEdge solution tab. Full Centerity server installation starts on VMware private cloud (external infra, not NativeEdge Endpoint).

- Use the deployment output to fetch the newly created Centerity server endpoint, credentials, and so on.

Step 2.2: Deploy and manage the Centerity Edge proxy (agent) on NativeEdge Endpoints

To install Centerity Edge proxy collector on each warehouse:

- Choose the Centerity Collector or Edge proxy catalog item.

- Select the target environment and deploy the proxy on all the selected sites. The installation happens in parallel installation on all sites.

- Fill the relevant deployment inputs and install deployment.

- Native Edge starting the fulfillment phase with all operations.

- Install and configure Centos VM per each warehouse, install edge proxy agent/ collector, and connect it to server.

- Execute day-2 operations, such as updating one of the warehouses using security update check, custom workflow.

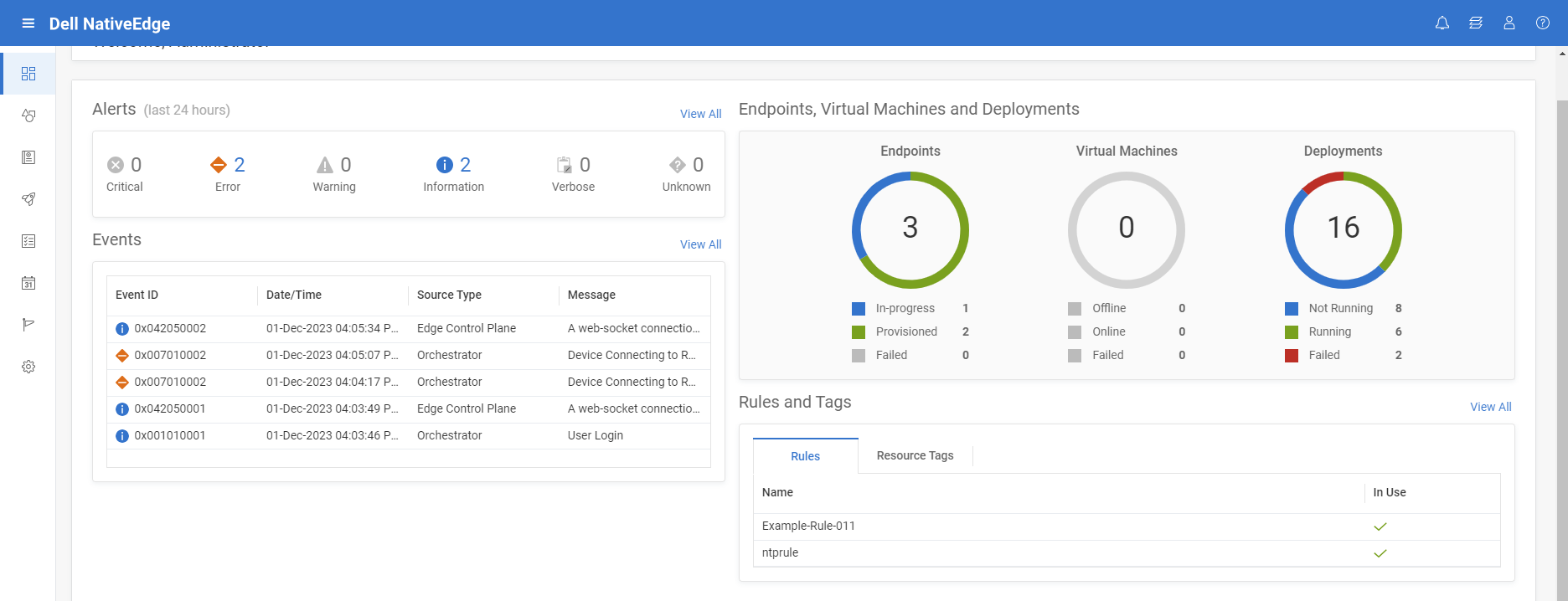

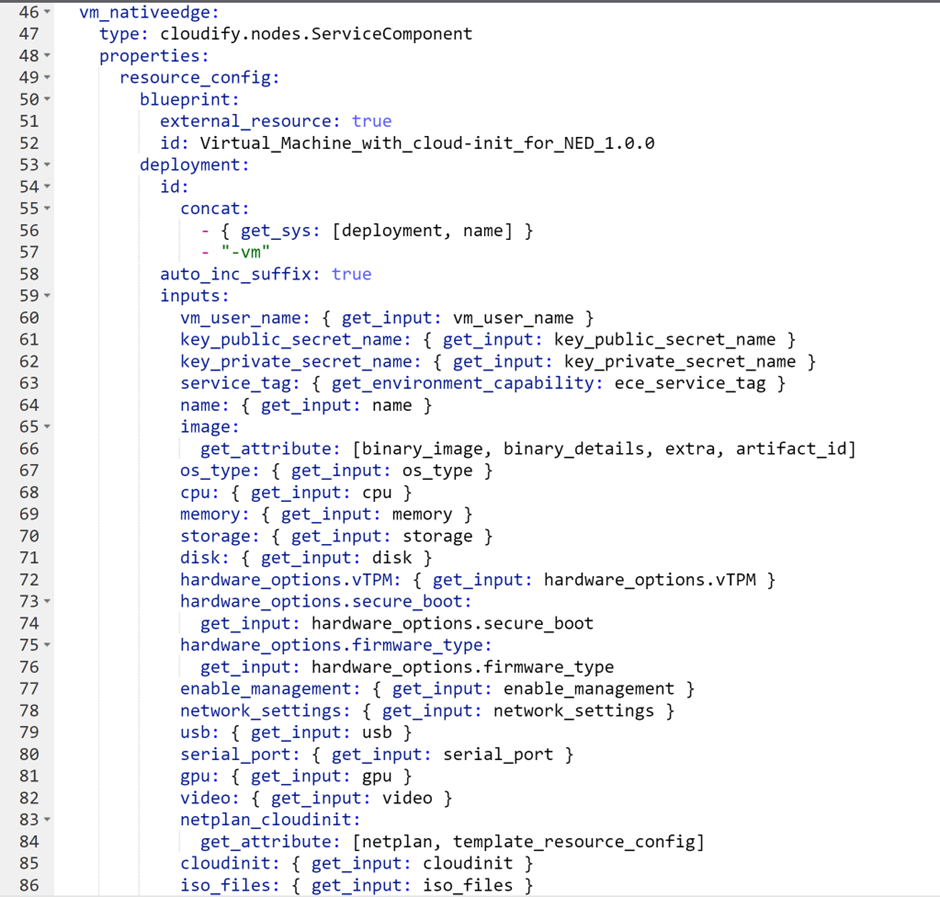

The following blueprint automates the deployment of the Centerity agent on a NativeEdge Endpoint. It launches a virtual machine (VM) on the remote device which is configured to connect to the Centerity Manager. It also optimizes the VM to support AI workload by enabling GPU passthrough.

Figure 7. Create an AI optimized VM on the target device

NativeEdge can execute the above blueprint simultaneously on all the devices. The following figure shows the result of executing this blueprint on three devices.

Figure 8. Deploy the Edge Proxy on all the stores in one bulk

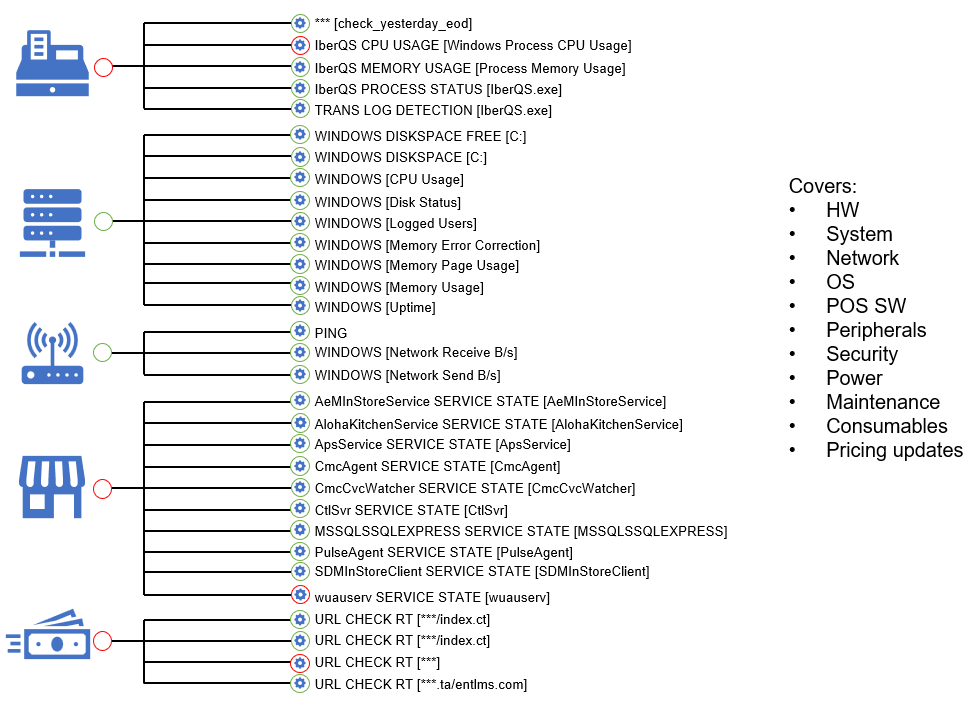

Step 3: Connect the retail and logistic devices to Centerity

In this step, we will configure and set up the devices and connect them to the Centerity monitoring service. Note that this step is done directly on the centerity management console and not through NativeEdge console.

In this case, we chose the following endpoints within the logistic center or warehouse.

- Tablet type – Dell Windows11

- Mobile terminal type – Zebra TC52

- API based devices – SES (Digital signage)

- Printer – Bixolon (Log based)

- Agentless based devices – Security camera

Figure 9. Centerity Management connected to the edge device managed by NativeEdge

Step 4: Managing and monitoring the retail warehouse and store

In this step, we will manage the retail warehouse and store through the monitoring of the devices that we connected to the system in the previous step. This will include the following set of operations:

- Device monitoring

- Inventory tracking (if applicable)

- Failures alerts

- Auto remediation (if applicable)

- Operational and business SLA dashboards

- Reports

- Generating events for proactive operational support

- Updating and keeping up the system software for compliance

- Breaking or fixing the workflow

Figure 10. Monitoring and managing retail devices

Conclusion

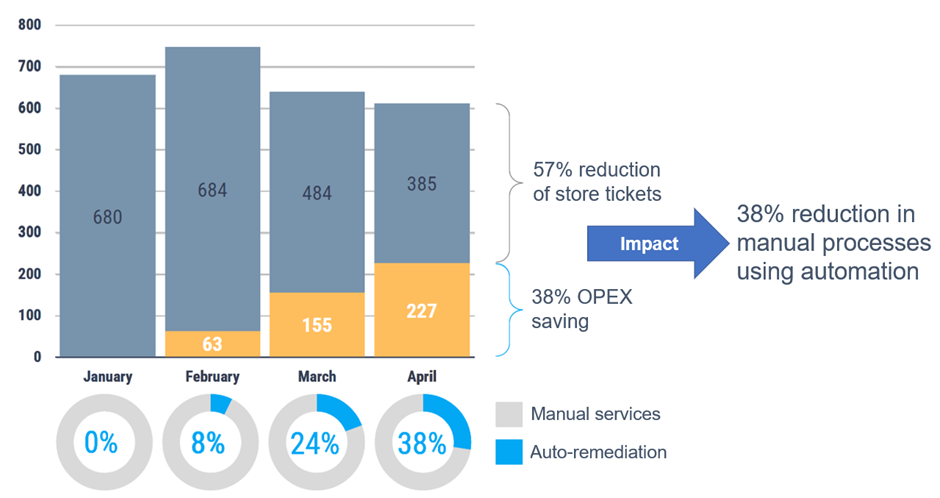

Dell NativeEdge provides a fully-automated secure device onboarding from Bare Metal to the cloud. As a DevEdgeOps platform, NativeEdge also provides the ability to validate and continuously manage the provisioning and configuration of those devices in a secure way. This minimizes the risk of failure and security breaches due to misconfiguration or human errorThose potential vulnerabilities can be detected earlier in the pre-deployment development process. The introduction of NativeEdge Orchestrator enables customers to have a consistent and simple management of built-in solutions across their entire fleet of new and existing devices. The separation between the device management and solution is key to enabling consistent operational management between different solution vendors as well as cloud infrastructure. In addition to that, the ability to integrate with the retail existing infrastructure (VMware in this specific example) as well as cloud-native infrastructure simultaneously ensures smoother transformation to a modern Edge-AI-enabled infrastructure.

The specific integration between NativeEdge and Centerity in this specific use case enables customers to deliver a full-blown retail management which integrates with both their legacy and new AI enabled devices. According to recent studies, this level of end-to-end monitoring and automation can reduce the maintenance overhead and potential downtime by 57 percent.

Figure 11. Moving to a fully automated and monitored retail warehouse and store brings a significant TCO saving

It is also worth noting that the open solution framework provided by NativeEdge allows partners such as Centerity to use Dell NativeEdge as a generic edge infrastructure framework, addressing fundamental aspects of device fleet management. Vendors can then focus on delivering the unique value of their solution, be it predictive maintenance or real-time monitoring, as demonstrated by the Centerity use case in this blog.

References

Related Blog Posts

Will AI Replace Software Developers?

Thu, 02 May 2024 09:38:01 -0000

|Read Time: 0 minutes

Over the past year, I have been actively involved in generative artificial intelligence (Gen AI) projects aimed at assisting developers in generating high-quality code. Our team has also adopted Copilot as part of our development environment. These tools offer a wide range of capabilities that can significantly reduce development time. From automatically generating commit comments and code descriptions to suggesting the next logical code block, they have become indispensable in our workflow.

According to a recent study by McKinsey, quantify the level of productivity gain in the following areas:

Figure 1. Software engineering: speeding developer work as a coding assistant (McKinsey)

This study shows that “The direct impact of AI on the productivity of software engineering could range from 20 to 45 percent of current annual spending on the function. This value would arise primarily from reducing time spent on certain activities, such as generating initial code drafts, code correction and refactoring, root-cause analysis, and generating new system designs. By accelerating the coding process, Generative AI could push the skill sets and capabilities needed in software engineering toward code and architecture design. One study found that software developers using Microsoft’s GitHub Copilot completed tasks 56 percent faster than those not using the tool. An internal McKinsey empirical study of software engineering teams found those who were trained to use generative AI tools rapidly reduced the time needed to generate and refactor code and engineers also reported a better work experience, citing improvements in happiness, flow, and fulfilment.”

What Makes the Code Assistant (Copilot) the Killer App for Gen AI?

The remarkable progress of AI-based code generation owes its success to the unique characteristics of programming languages. Unlike natural language text, code adheres to a structured syntax with well-defined rules. This structure enables AI models to excel in analyzing and generating code.

Several factors contribute to the swift evolution of AI-driven code generation:

- Structured nature of code–Code follows a strict format, making it amenable to automated analysis. The consistent structure allows AI algorithms to learn patterns and generate syntactically correct code.

- Validation tools–Compilers and other development tools play a crucial role. They validate code for correctness, ensuring that generated code adheres to language specifications. This continuous feedback loop enables AI systems to improve without human intervention.

- Repeatable work identification–AI excels at identifying repetitive tasks. In software development, there are numerous areas where routine work occurs, such as boilerplate code, data transformations, and error handling. AI can efficiently recognize and automate these repetitive patterns.

From Coding Assistant to Fully-Autonomous AI Software Engineer

The Cognition & Development Lab at Washington University in St. Louis investigates how infants and young children think, reason, and learn about the world around them. Their research focuses on the development of early social-cognitive capacities. They are the makers of Devin, the world’s first AI software engineer.

Devin possesses remarkable capabilities in software development in the following areas:

- Complex engineering tasks–With advances in long-term reasoning and planning, Devin can plan and execute complex engineering tasks that involve thousands of decisions. Devin recalls relevant context at every step, learns over time, and even corrects mistakes.

- Coding and debugging–Devin can write code, debug, and address bugs in codebases. It autonomously finds and fixes issues, making it a valuable teammate for developers.

- End-to-end app development–Devin builds and deploys apps from scratch. For example, it can create an interactive website, incrementally adding features requested by the user and deploying the app.

- AI model training and fine-tuning–Devin sets up fine-tuning for large language models, demonstrating its ability to train and improve its own AI models.

- Collaboration and communication–Devin actively collaborates with users. It reports progress in real-time, accepts feedback, and engages in design choices as needed.

- Real-world challenges–Devin tackles real-world GitHub issues found in open-source projects. It can also contribute to mature production repositories and address feature requests. Devin even takes on real jobs on platforms like Upwork, writing and debugging code for computer vision models.

The Devin project is a clear indication of how fast we move from simple coding assistants to more complete engineering capabilities.

Will AI Replace Software Developers?

When I asked this question recently during a Copilot training session that our team took, the answer was “No”, or to be more precise “Not yet”. The common thinking is that it provides a productivity enhancement tool that will save developers from spending time on tedious tasks such as documentation, testing, and so on. This could have been true yesterday, but as seen with project Devin, it already goes beyond simple assistance to full development engineering. We can rely on the experience from past transformations to learn a bit more about where this is all heading.

Learning from Cloud Transformation: Parallels with Gen AI Transformation

The advent of cloud computing, pioneered by AWS approximately 15 years ago, revolutionized the entire IT landscape. It introduced the concept of fully automated, API-driven data centers, significantly reducing the need for traditional system administrators and IT operations personnel. However, beyond the mere shrinking of the IT job market, the following parallel events unfolded:

- Traditional IT jobs shrank significantly–Small to medium-sized companies can now operate their IT infrastructure without dedicated IT operators. The cloud’s self-service capabilities have made routine maintenance and management more accessible.

- Emergence of new job titles: DevOps, SRO, and more–As organizations embrace cloud technologies, new roles emerge. DevOps engineers, site reliability operators (SROs), and other specialized positions became essential for optimizing cloud-based systems.

- The rise of SaaS startups–Cloud computing lowered the barriers of entry for delivering enterprise-grade solutions. Startups capitalized on this by becoming more agile and growing faster than established incumbents.

- Big tech companies’ accelerated growth–Tech giants like Google, Facebook, and Microsoft swiftly adopted cloud infrastructure. The self-service nature of APIs and SaaS offerings allowed them to scale rapidly, resulting in record growth rates.

Impact on Jobs and Budgets

While traditional IT jobs declined, the transformation also yielded positive outcomes:

- Increased efficiency and quality–Companies produced more products of higher quality at a fraction of the cost. The cloud’s scalability and automation played a pivotal role in achieving this.

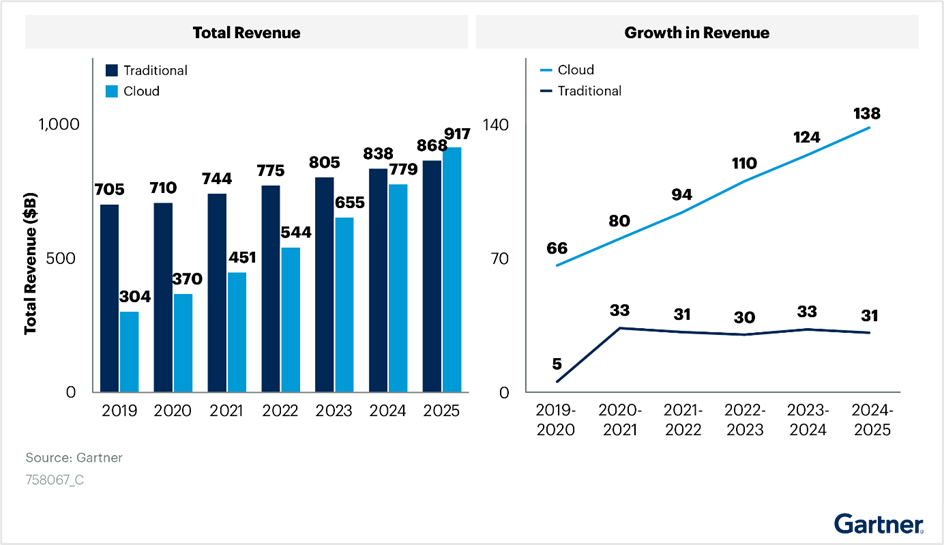

- Budget shift from traditional IT to cloud–Gartner’s IT spending reports reveal a clear shift in budget allocation. Cloud investments have grown steadily, even amidst the disruption caused by the introduction of cloud infrastructure, see the following figure:

Figure 2. Cloud transformation’s impact on IT budget allocation

Looking Ahead: AI Transformation

As we transition to the era of AI, we can anticipate similar trends:

- Decline in traditional jobs–Just as cloud computing transformed the job landscape, AI adoption may lead to the decline of certain traditional roles.

- Creation of new jobs–Simultaneously, AI will create novel opportunities. Roles related to AI development, machine learning, and data science will flourish.

Short Term Opportunity

Organizations will allocate more resources to AI initiatives. The transition to AI is not merely an evolutionary step; it is a strategic imperative.

According to a research conducted by ISG on behalf of Glean, Generative AI projects consumed an average of 1.5 percent of IT budgets in 2023. These budgets are expected to rise to 2.7 percent in 2024 and further increase to 4.3 percent in 2025. Organizations recognize the potential of AI to enhance operational efficiency and bridge IT talent gaps. Gartner predicts that Generative AI impacts will be more pronounced in 2025. Despite this, worldwide IT spending is projected to grow by 8 percent in 2024. Organizations continue to invest in AI and automation to drive efficiency. The White House budget proposes allocating $75 billion for IT spending at civilian agencies in 2025. This substantial investment aims to deliver simple, seamless, and secure government services through technology.

The impact of AI extends far beyond the confines of the IT job market. It permeates nearly every facet of our professional landscape. As with any significant transformation, AI presents both risks and opportunities. Those who swiftly embrace it are more likely to seize the advantages.

So, what steps can software developers take to capitalize on this opportunity?

Tips for Software Developers in the Age of AI

In the immediate term, developers can enhance their effectiveness when working with AI assistants by acquiring a combination of the following technical skills:

- Learn AI basics–I would recommend starting the learning with AI Terms 101. I also recommend following the leading AI podcasts. I found this useful to keep myself up to date in this space and learn some useful tips and updates from industry experts.

- Use coding assistant tools (Copilot)–Coding assistant tools are definitely the low-hanging fruit and probably the simplest step to get into the AI development world. There is a growing list of tools that are available and can be integrated seamlessly into your existing development IDE. The following provides a useful reference to The Top 11 AI Coding Assistants to Use in 2024.

- Learn machine learning (ML) and deep learning concepts–Understanding the fundamentals of ML and deep learning is crucial. Familiarize yourself with neural networks, training models, and optimization techniques.

- Data science and analytics–Developers should grasp data preprocessing, feature engineering, and model evaluation. Proficiency in tools like Pandas, NumPy, and scikit-learn is beneficial.

- Frameworks and tools–Learn about popular AI frameworks such as TensorFlow, and PyTorch. These tools facilitate model building and deployment.

More skilled developers will need to learn how to create their own “AI engineers” which they will train and fine tune to assist them with user interface (UI), backend, and testing development tasks. They could even run a team of “AI engineers” to write an entire project.

Will AI Reduce the Demand for Software Engineers?

Not necessarily. In the case of cloud transformation, developers with AI expertise will likely be in high demand. Those who will not be able to adapt to this new world are likely to stay behind and face the risk of losing their job.

It would be fair to assume that the scope of work, post-AI transformation, will grow and will not stay stagnant. As an example, we will likely see products adding more “self-driving” capabilities, where they could run more complete tasks without the need for human feedback or enable close to human interaction with the product.

Under this assumption, the scope of new AI projects and products is going to grow, and that growth should balance the declining demand for traditional software engineering jobs.

Conclusion

As a history enthusiast, I often find parallels in the past that can serve as a guide to our future. The industrial era witnessed disruptive technological advancements that reshaped job markets. Some professions became obsolete, while new ones emerged. As a society, we adapted quickly, discovering new growth avenues. However, the emergence of AI presents unique challenges. Unlike previous disruptions, AI simultaneously impacts a wide range of job markets and progresses at an unparalleled pace. The implications are indeed profound.

Recent research by Nexford University on How Will Artificial Intelligence Affect Jobs 2024-2030 reveals some startling predictions. According to a report by the investment bank Goldman Sachs, AI could potentially replace the equivalent of 300 million full-time jobs. It could automate a quarter of the work tasks in the US and Europe, leading to new job creation and a productivity surge. The report also suggests that AI could increase the total annual value of goods and services produced globally by 7 percent. It predicts that two-thirds of jobs in the US and Europe are susceptible to some degree of AI automation, and around a quarter of all jobs could be entirely performed by AI.

The concerns raised by Yuval Noa Harari, a historian and professor at the Department of History of the Hebrew University of Jerusalem, resonate with many. The rapid evolution of AI may indeed lead to significant unemployment.

However, when it comes to software engineers, we can assert with confidence that regardless of how automated our processes become, there will always be a fundamental need for human expertise. These skilled professionals perform critical tasks such as maintenance, updates, improvements, error corrections, and the setup of complex software and hardware systems. These systems often require coordination among multiple specialists for optimal functionality.

In addition to these responsibilities, computer system analysts play a pivotal role. They review system capabilities, manage workflows, schedule improvements, and drive automation. This profession has seen a surge in demand in recent years and is likely to remain in high demand.

In conclusion, AI represents both risk and opportunity. While it automates routine tasks, it also paves the way for innovation. Our response will ultimately determine its impact.

References

- Economic potential of generative AI | McKinsey

- Introducing Devin, the first AI software engineer (cognition-labs.com)

- IT Spending & Budgets: Trends & Forecasts 2024

- Organizations continue to invest in AI and automation to drive efficiency

- This substantial investment aims to deliver simple, seamless, and secure government services through technology

- AI Terms 101: An A to Z AI Terminology Guide for Beginners

- 11 AI Podcasts That Will Shape Your Perspective (geekflare.com)\

- How Will Artificial Intelligence Affect Jobs 2024-2030 | Nexford University

- The Top 11 AI Coding Assistants to Use in 2024 | DataCamp

- Yuval Harari On The Future of Jobs & Technology, Intelligence vs Consciousness, & Future Threats to Humanity - Jacob Morgan (thefutureorganization.com)

How can Agile Transformation Lead to a One-Team Culture?

Thu, 22 Feb 2024 09:47:46 -0000

|Read Time: 0 minutes

Many blogs cover the Agile process itself; however, this blog is not one of them. Instead, I want to share the lessons learned from working in a highly distributed development team across eleven countries. Our teams ranged from small startups post-acquisition to multiple teams from Dell, and we had an ambitious goal to deliver a complex product in one year! This journey started when Dell’s Project Frontier leaped to the next stage of development and became NativeEdge.

This blog focuses on how Agile transformation enables us to transform into a one-team culture. The journey is ongoing as we get closer to declaring success. The Agile transformation process is a constant iterative process of learning and optimizing along the way, of failing and recovering fast, and above all, of committed leadership and teamwork.

Having said that, I thought that we reached an important milestone, at one year, in this journey that makes it worthwhile sharing.

Why Agile?

Agile methodologies were originally developed in the manufacturing industry with the introduction of Lean methodology by Toyota. Lean is a customer-centric methodology that focuses on delivering value to the customer by optimizing the flow of work and minimizing waste. The evolution of these principles into the software industry is known as Agile development, which focuses on rapid delivery of high-quality software. Scrum is a part of the Agile process framework and is used to rapidly adjust to changes and produce products that meet organizational needs.

Lean Manufacturing Versus Agile Software Delivery

The fact that a software product doesn’t look like a physical device doesn’t make the production and delivery process as different as many tend to think. The increasing prevalence of embedded software in physical products further blurs the line between these two worlds.

Software product delivery follows similar principles to the Lean manufacturing process of any physical product, as shown in the following table:

| Lean manufacturing | Agile software development |

| Supply chain | Features backlog |

| Manufacturing pipeline | CI/CD pipeline |

| Stations | Pods, cells, squads, domains |

| Assembly line | Build process |

| Goods | Product release |

Agile addresses the need of organizations to react quickly to market demands and transform into a digital organization. It encompasses two main principles:

- Project management–Large projects are better broken into smaller increments with minimal dependencies to enable parallel development rather than one large project that is serialized through dependencies. The latter would be a waterfall process where one milestone/dependency missed can cause a reset of the entire program.

- Team structure–The organizational structure should be broken into self-organizing teams that align with the product architecture structure. These teams are often referred to as squads, pods, or cells. Each team needs to have the capability to deliver its specific component in the architecture, as opposed to a tier-based approach where teams are organized based on skills, such as the product management team, UI team, or backend team, and so on.

What Could Lead to an Unsuccessful Agile Transformation?

Many detailed analyses show why Agile transformation fails. However, I would like to suggest a simpler explanation. Despite the similarities between manufacturing and software delivery, as outlined in the previous section, many software companies don’t operate with a manufacturing mindset.

Software companies that operate with a manufacturing mindset are companies where their leadership measures their development efficiency just as they measure other business KPIs, such as sales growth. They understand that their development efficiency directly impacts their business productivity. This is obvious in manufacturing, but for some reason, it has become less obvious in software. When you measure your development efficiency at the top leadership level and even board level, all the rest of the agile transformation issues that are reported in the failure analysis, such as resistance to change, become just symptoms of that root cause. It is, therefore, no surprise that companies like Spotify have been successful in this regard. Spotify has even published a lot of its learning and use cases, as well as open-source projects such as Backstage, which helped them differentiate themselves from other media streaming companies, just as Toyota did when they introduced Lean.

Lessons from a Recent Agile Transformation Journey

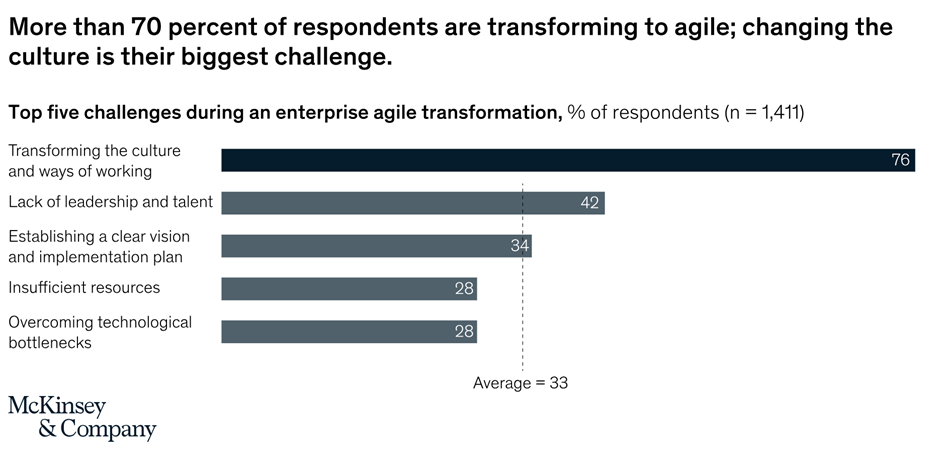

Changing a culture is the biggest challenge in any Agile transformation project. As many researchers have noted, Agile transformation requires a big cultural transformation including team structure. Therefore, it is no surprise that this came up as the biggest challenge in the Doing vs being: Practical lessons on building an agile culture article by McKinsey & Company.

Figure 1. Exhibit 1 from McKinsey & Company article: Doing vs being: Practical lessons on building an Agile culture

Our challenge was probably at the top of the scale in that regard, as our team was built out of a combination of people from all around the world. Our challenge was to create a one-team agile culture that would enable us to deliver a new and complex product in one year.

Getting to this one-team culture is tough, because it works in many ways against human nature, which is often competitive.

One thing that helped us go through this process was the fact that we all felt frustration and pain when things didn’t work. We also had a lot to lose if we failed. At this point, we realized that our only way out of this would be to adopt Agile processes and team structures. The pain that we all felt was a great source of motivation that drove everyone to get out of their comfort zone and be much more open to adopting the changes that were needed to follow a truly Agile culture.

This wasn’t a linear process by any means and involved many iterations and frustrating moments until it became what it is today. For the sake of this blog, I will spare you from that part and focus on the key lessons that we took to implement our specific Agile transformation journey.

Key Lessons for a Successful Agile Transformation

Don’t Re-invent the Wheel

There are many lessons and processes that were already defined on how to implement Agile methodologies. Many of the lessons were built on the success of other companies. So, as a lesson learned, it’s always better to build on a mature baseline and use it as a basis for customization rather than trying to come up with your own method. In our case, we chose to use the Scrum@Scale as our base methodology.

Define Your Custom Agile Process That Is Tailored to Your Organization’s Reality

As one can expect, out-of-the-box methodologies don’t consider your specific organizational reality and challenges. It is therefore very common to customize generic processes to fit your own needs. We chose to write our own guidebook, which summarizes our version of the agile roles and processes. I found that the process of writing our ‘Agile guidebook’ was more important than the book itself. It created a common vocabulary, cleared out differences, and enabled team collaboration, which later led to a stronger buy-in from the entire team.

Test Your Processes Using Real-World Simulation

Defining Agile processes can sometimes feel like an academic exercise. To ensure that we weren’t falling into this trap, we took specific use cases from our daily routine and tested them against the process that we had just defined. We measured how much those processes got clearer or better than the existing ones, and only if we all felt that we had reached a consensus did we make it official.

Restructure the Team Into Self-Organizing Teams

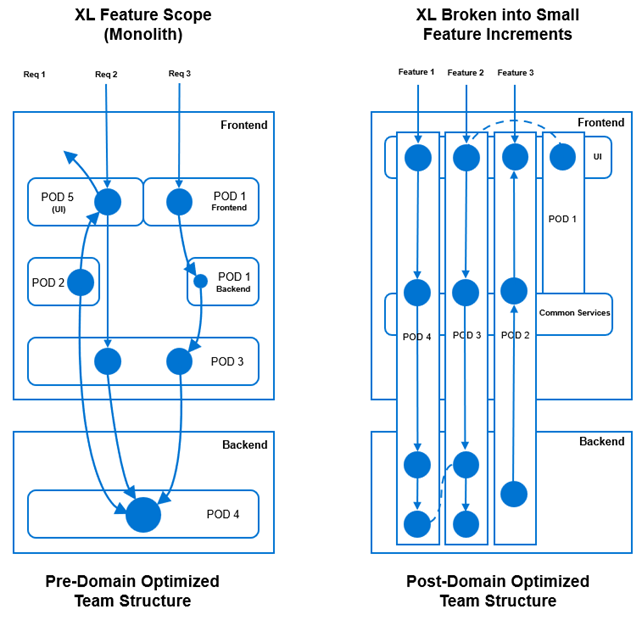

This task is easier said than done. It represents the most challenging aspect, as it necessitates restructuring teams to align with the skills required in each domain. Additionally, we had to ensure that each domain maintained the appropriate capacity, in line with business priorities. Flexibility was crucial, allowing us to adapt teams as priorities shifted.

In this context, it was essential that those involved in defining this structure remained unbiased and earned the trust of the entire team when proposing such changes. As part of our Agile process, we also employed simulations to validate the model’s effectiveness. By minimizing dependencies between teams for each feature development, we transformed the team structure. Initially, features required significant coordination and dependency across teams. However, we evolved to a point where features could be broken down without inter-team dependencies, as illustrated in the following figure:

Figure 2. Organizing teams into self-organizing domains teams. Breaking large features into smaller increments (2-4 sprints each) likely fits better into the domain structure than large features

Invest in Improving the Developer Experience (DevX)

Agile processes require an agile development environment. One of the constant challenges that I’ve experienced in this regard is that many organizations fail to put the right investment and leadership attention into this area. If that is the case, you wouldn’t gain the speed and agility that you were hoping to get through the entire Agile transformation. In manufacturing terms, that's like investing in robots to automate the manufacturing pipeline but leaving humans to pass the work between them. A number of these humans could never keep up with the rest of the supply chain. This actually gets worse as the supply (feature development) gets faster. Your development speed is largely determined by how far your development processes are automated. To get to that level of automation, you need to constantly invest in the development platform. The challenge is that in most cases, the ratio between developers and DevOps can sometimes be 20:1, and that turns DevOps quickly into the next bottleneck. Platform engineering can be a solution. In a nutshell, in the shift-left model much of the ongoing responsibility for handling the feature development and testing automation to the development team and puts the main effort of the "DevOps" team to focus mostly on delivering and evolving a self-service development platform that enables the developers to do this work without having to become a DevOps expert themselves.

Keep the ‘Eye on the Ball’ With Clear KPIs

Teams can easily get distracted by daily pressures, causing focus to drift. Keeping discipline on those Agile processes is where a lot of teams fail, as they tend to take shortcuts when the delivery pressure grows. KPIs allow us to keep track of things and ensure that we’re not drifting over time, keeping our ‘eye on the ball’ even when such a distraction happens. There are many KPIs that can measure team effectiveness. The key is to pick the three that are the most important at each stage, such as stability of the release, peer review time, average time to resolve a failure, and test coverage percentage.

Don’t Try It at Home Without a Good Coach

As leaders, we often tend to be impatient and opinionated towards the ‘elephant memory’ of our colleagues. Trying to let the team figure out this sort of transformation all by themselves is a clear recipe for failure. Failure in such a process can make things much worse. On the other hand, having a highly experienced coach with good knowledge of the organization and with the right preparation was a vital facilitator in our case. We needed two iterations to come closer together. The first one was used mostly to get the ‘steam out’, which allowed us to work more effectively on all the rest of these points during the second iteration.

Conclusion

As I close my first year at Dell Technologies and reflect on all the things that I’ve learned, especially for someone who’s been in startups all of his career, I never expected that we could accomplish this level of transformation in less than a year. I hope that the lessons from this journey are useful and hopefully save some of the pain that we had to go through to get there. Obviously, none of this could have been accomplished without the openness and inclusive culture of the entire team in general and leadership specifically within Dell’s NativeEdge team. Thank you!

References

- 8 Reasons Why Agile Projects Fail | Agile Alliance

- Why Many Agile Transformations Fail | Accenture

- Squads, pods, cells? Making sense of Agile teams | TechTarget

- Practical lessons on building an agile culture | McKinsey

The journey to an agile organization | McKinsey - The Toyota Way - Wikipedia

- 11 Agile Metrics For Highly Effective Teams - AGILE KEN

- The Scrum@Scale Guide Online | Scrum@Scale Framework (scrumatscale.com)

- What Is Platform Engineering, and What Does It Do? (gartner.com)

- Talent Assessment & Development Advisors (tada-advisors.com)