Do I Need Layer 2 Switching?

Tue, 13 Sep 2022 23:21:29 -0000


Not really, at least not anymore.

The world of computer networking is rather interesting. For the longest time, network architecture has been built around a combination of Layer 2 (Spanning Tree, VLANs, Port-Channels, and so forth) and Layer 3 (IP, OSPF, BGP, and so on) features. Each of these categories comes with its own set of advantages and disadvantages.

For example, when deploying Layer 2 features such as Spanning Tree or VLANs, scalability concerns come to mind. With Spanning Tree, bandwidth utilization can run at fifty percent because links are blocked as part of the loop avoidance algorithm. With VLANs, the number of IDs is limited to 4,094. Having said that, Layer 2 is simple to deploy and is better understood.
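The two limits above can be checked with a little arithmetic. The sketch below assumes the standard 12-bit VLAN ID field from IEEE 802.1Q, with IDs 0 and 4095 reserved, and it assumes the fifty percent figure refers to the common case of two redundant uplinks with one blocked. The function names are illustrative only.

```python
# IEEE 802.1Q carries the VLAN ID in a 12-bit field.
VLAN_ID_BITS = 12
# ID 0 (priority-tagged frames) and ID 4095 are reserved by the standard.
RESERVED_VLAN_IDS = {0, 4095}


def usable_vlan_ids(bits=VLAN_ID_BITS, reserved=RESERVED_VLAN_IDS):
    """Number of VLAN IDs left once the reserved values are excluded."""
    return 2 ** bits - len(reserved)


def forwarding_fraction(total_links, blocked_links):
    """Share of redundant links that still forward traffic.

    Assumes equal-speed links; blocked links carry no user traffic.
    """
    if total_links <= 0 or not 0 <= blocked_links <= total_links:
        raise ValueError("blocked_links must be between 0 and total_links")
    return (total_links - blocked_links) / total_links


print(usable_vlan_ids())          # 4094
print(forwarding_fraction(2, 1))  # 0.5
```

With more than two redundant links and a single forwarding path, the usable share would be lower still under the same assumptions.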

As far as Layer 3 is concerned, IP is considered more complex than Layer 2, and routing protocols such as OSPF or BGP can be complex to configure and operate. Removing these inherent complexities has always been key to the overall adoption of Layer 3 deployments.

Dell SmartFabric OS10 is widely deployed across all sectors and infrastructure environments. Among these environments, particularly large enterprise and cloud deployments, there is a unique deployment model where Layer 3 is extended all the way to the host. This feature is referred to as “Routing to the Host.”

Let’s eliminate Layer 2 switching

In a typical network, an end-host sends its data packet to a switch or a router so the packet can be either switched or routed to its destination. The end-host needs … but what if I told you that we can eliminate the need for a switch and connect straight.

Routing to the Host

Routing to the host is an evolution in simplicity and is often deployed as a cloud-based model. It fundamentally shifts the idea of how data communication works from the end-host, bringing the benefits of a Layer 3 environment with none of Layer 2’s limitations. Routing to the host has the following characteristics:

- No VLAN needed, therefore no ID limitations and no need for a Layer 3 device to terminate these VLANs to allow inter-VLAN communication.

- Direct route advertisement allowing complete flexibility in movement by end-hosts.

- No configuration is needed. This feature is enabled by default on Dell SmartFabric OS10.

- No network loops.

- No device redundancy feature, such as multi-chassis link aggregation (MC-LAG), is needed to provide redundant connectivity to the end-host.

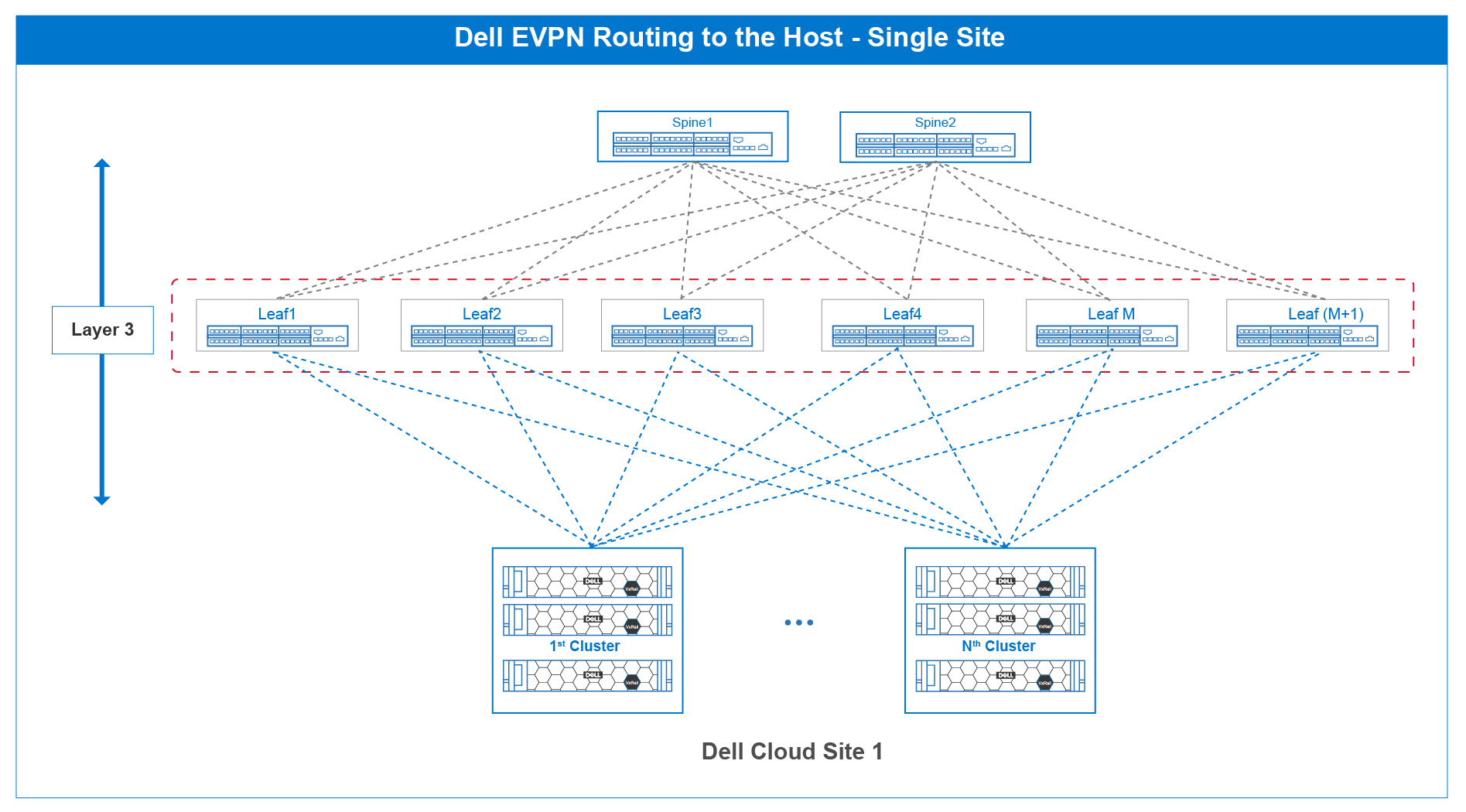

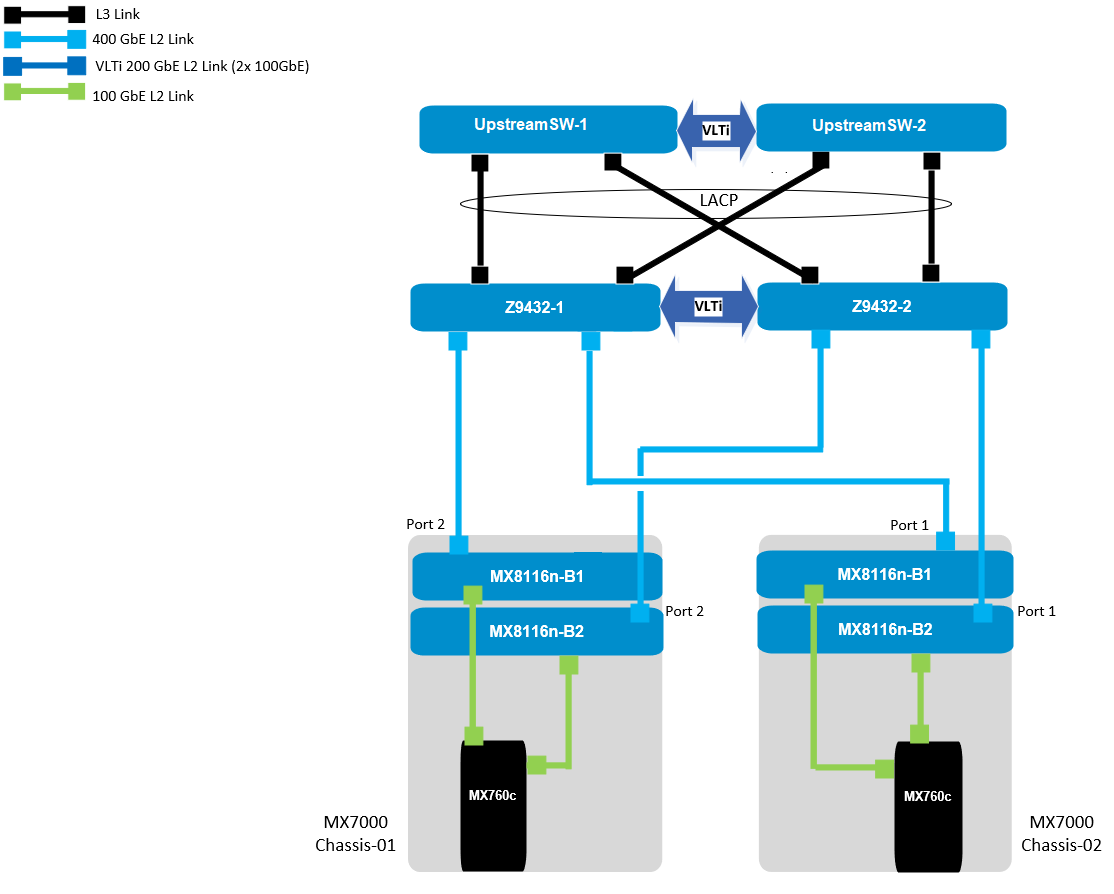

Figure 1 shows the typical routing to the host single-site, cloud-based deployment model, where Layer 3 is deployed end-to-end, including the end-host.

Figure 1 – Routing to the host single-site cloud reference architecture

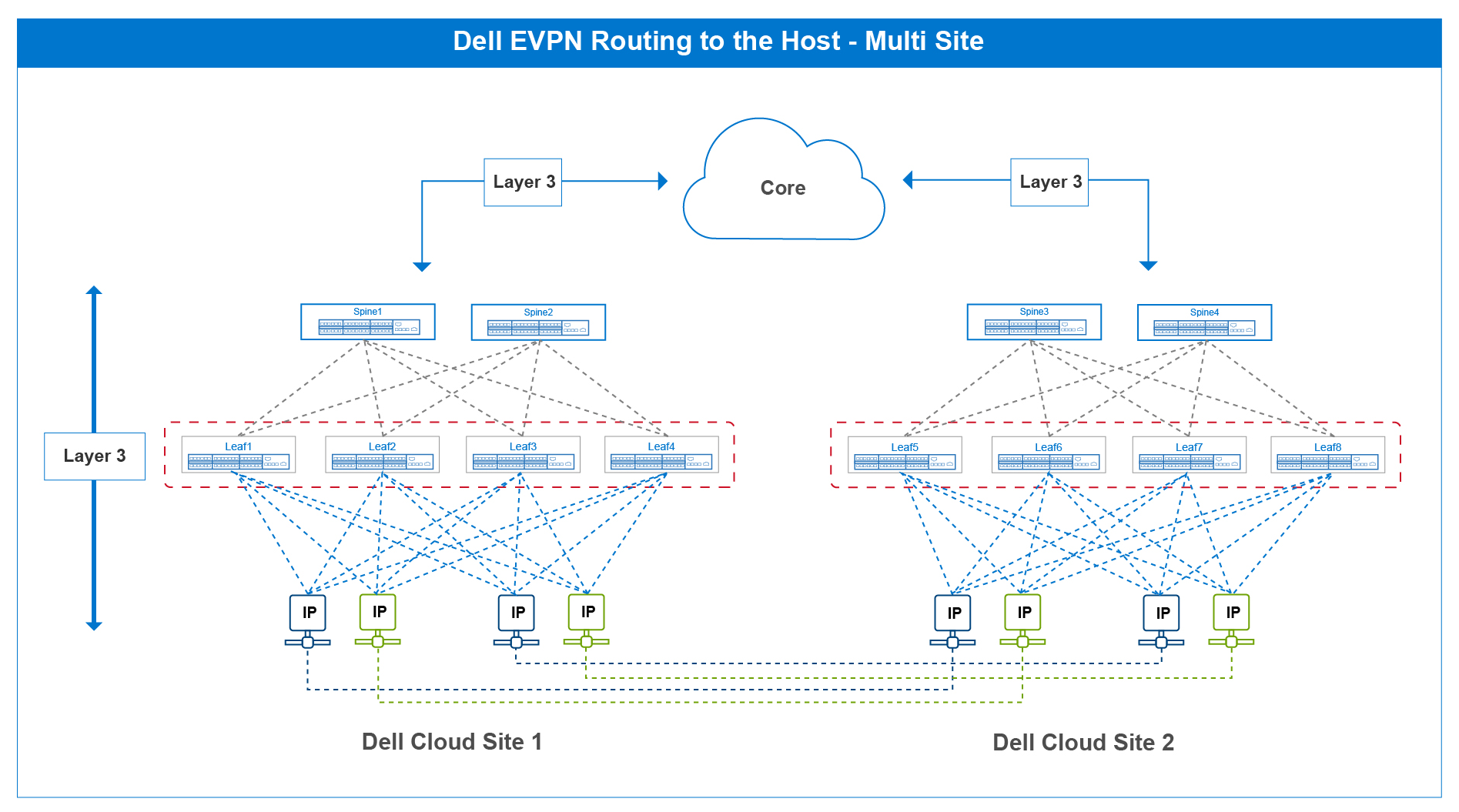

Figure 2 shows two fabrics with routing to the host deployed on each fabric. It is here that this freedom of movement can be better understood. In a typical network design where routing to the host is not deployed, the end-host needs a default gateway to send its data packets to; in this case, it is the upstream leaf connection. This is often a 1:1 correlation, which creates a dependency.

To move a connection from the left side to the right side and vice versa, companion configurations would be needed on the leaf switches, creating unnecessary configuration churn.

With routing to the host, end-hosts are simply IP addresses moving between sites. There is no configuration tie-down to any upstream device. The routing protocols ensure these IP addresses are routed properly to their destinations.
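One way to picture this is as longest-prefix-match routing with per-host routes. The sketch below is a toy model, not how SmartFabric OS10 is implemented: the `RouteTable` class, the addresses, and the leaf names are all hypothetical. It shows a host's /32 route being re-advertised from a different leaf after a move, with no change to any other entry.

```python
import ipaddress


class RouteTable:
    """Toy routing table that resolves addresses by longest prefix match."""

    def __init__(self):
        self._routes = {}  # ip_network -> next hop name

    def advertise(self, prefix, next_hop):
        """Install or update a route for the given prefix."""
        self._routes[ipaddress.ip_network(prefix)] = next_hop

    def withdraw(self, prefix):
        """Remove a route if it is present."""
        self._routes.pop(ipaddress.ip_network(prefix), None)

    def lookup(self, address):
        """Return the next hop of the most specific matching route."""
        addr = ipaddress.ip_address(address)
        matches = [net for net in self._routes if addr in net]
        if not matches:
            return None
        best = max(matches, key=lambda net: net.prefixlen)
        return self._routes[best]


table = RouteTable()
# Hypothetical aggregate route covering the left-hand fabric.
table.advertise("10.1.0.0/16", "fabric-left")
# The host advertises its own address as a /32 through its current leaf.
table.advertise("10.1.1.10/32", "leaf-left-1")
before_move = table.lookup("10.1.1.10")

# The host moves to the other fabric and re-advertises the same /32.
table.advertise("10.1.1.10/32", "leaf-right-2")
after_move = table.lookup("10.1.1.10")

print(before_move, "->", after_move)  # leaf-left-1 -> leaf-right-2
```

Because the host route is more specific than any aggregate, traffic follows the host wherever it is advertised from, which is the property the paragraph above relies on.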

Figure 2 – Routing to the host multi-site cloud reference architecture

Routing to the host is new when compared to other Layer 3 routing features such as OSPF or BGP. However, in the short time since it was implemented, it has become a unique value proposition to consider when planning an infrastructure deployment.

It is going to be interesting to see how the industry reacts and Dell Technologies is excited to see how many deployments incorporate this interesting SmartFabric OS10 feature.

Related Blog Posts

Dell Technologies PowerEdge MX 100 GbE solution with external Fabric Switching Engine

Mon, 26 Jun 2023 20:31:38 -0000


The Dell PowerEdge MX platform is advancing its position as the leading high-performance data center infrastructure by introducing a 100 GbE networking solution. This evolved networking architecture not only provides the benefit of 100 GbE speed but also increases the number of MX7000 chassis within a Scalable Fabric. The 100 GbE networking solution brings a new type of architecture, starting with an external Fabric Switching Engine (FSE).

PowerEdge MX 100 GbE solution design example

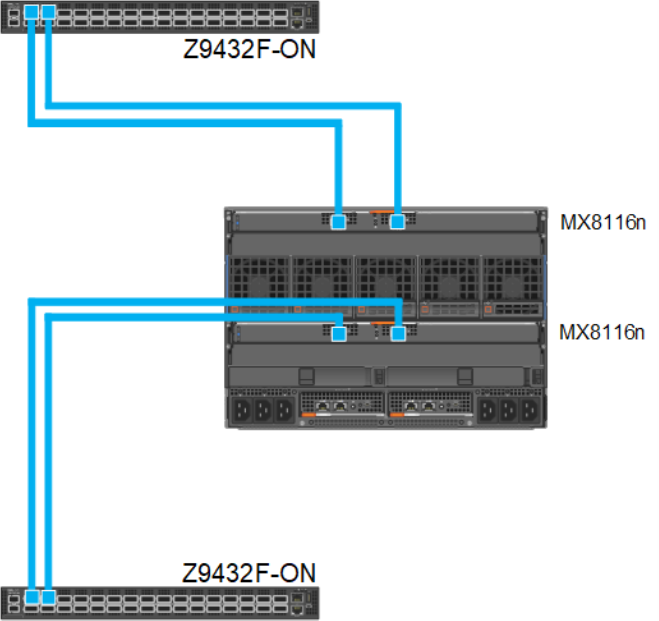

The diagram shows only one connection on each MX8116n for simplicity. See the port-mapping section in the Dell PowerEdge Networking Deployment Guide, listed under References.

Figure 1. 100 GbE solution example topology

Components for 100 GbE networking solution

The key hardware components for 100 GbE operation within the MX Platform are briefly described below.

Dell Networking MX8116n Fabric Expander Module

The MX8116n FEM includes two QSFP56-DD interfaces, with each interface providing up to 4x 100 Gbps connections to the chassis, 8x 100 GbE internal server-facing ports for 100 GbE NICs, and 16x 25 GbE internal server-facing ports for 25 GbE NICs.

The MX7000 chassis supports up to four MX8116n FEMs in Fabric A and Fabric B.

Figure 2. MX8116n FEM

The following MX8116n FEM components are labeled in the preceding figure:

- Express service tag

- Power and indicator LEDs

- Module insertion and removal latch

- Two QSFP56-DD fabric expander ports

Dell PowerEdge MX760c compute sled

- The MX760c is ideal for dense virtualization environments and can serve as a foundation for collaborative workloads.

- Businesses can install up to eight MX760c sleds in a single MX7000 chassis and combine them with compute sleds from different generations.

- Single or dual CPU (up to 56 cores per processor/socket with four x UPI @ 24 GT/s) and 32x DIMM slots DDR5 with eight memory channels.

- 8x E3.S NVMe (Gen5 x4) or 6 x 2.5" SAS/SATA SSDs or 6 x NVMe (Gen4) SSDs and iDRAC9 with lifecycle controller.

Note: The 100 GbE Dual Port Mezzanine card is also available on the MX750c.

Figure 3. Dell PowerEdge MX760c sled with eight E3.S SSD drives

Dell PowerSwitch Z9432F-ON external Fabric Switching Engine

The Z9432F-ON provides state-of-the-art, high-density 100/400 GbE ports and a broad range of functionality to meet the growing demands of modern data center environments. Its key characteristics are:

- A compact 1RU design with an industry-leading density of 32 ports of 400 GbE in QSFP56-DD, 128 ports of 100 GbE, or up to 144 ports of 10/25/50 GbE (through breakout).

- Up to 25.6 Tbps of non-blocking (full duplex) switching fabric, delivering line-rate performance under full load.

- L2 multipath support using Virtual Link Trunking (VLT) and Routed VLT.

- Scalable L2 and L3 Ethernet switching with QoS and a full complement of standards-based IPv4 and IPv6 features, including OSPF and BGP routing support.
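The headline figures above are consistent with one another, as the short calculation below suggests. It assumes that each 400 GbE port breaks out into four 100 GbE ports and that the full-duplex figure counts both directions of every port. The 144-port 10/25/50 GbE figure is not derived here because the source does not say how it is reached.

```python
PORTS_400G = 32          # 400 GbE QSFP56-DD ports, from the description above
PORT_SPEED_GBPS = 400
BREAKOUT_100G = 4        # assumed: one 400 GbE port -> 4 x 100 GbE

# Capacity in one direction, then doubled for full duplex.
one_way_gbps = PORTS_400G * PORT_SPEED_GBPS
full_duplex_tbps = one_way_gbps * 2 / 1000

# Number of 100 GbE ports when every 400 GbE port is broken out.
ports_100g = PORTS_400G * BREAKOUT_100G

print(one_way_gbps)      # 12800
print(full_duplex_tbps)  # 25.6
print(ports_100g)        # 128
```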

Figure 4. Dell PowerSwitch Z9432F-ON

Note: Mixed dual port 100 GbE and quad port 25 GbE mezzanine cards connecting to the same MX8116n are not a supported configuration.

100 GbE deployment options

There are four deployment options for the 100 GbE solution, and every option requires servers with a dual port 100 GbE mezzanine card. You can install the mezzanine card in mezzanine slot A, slot B, or both. When you use the Broadcom 575 KR dual port 100 GbE mezzanine card, set the Z9432F-ON port-group to unrestricted mode and configure the port mode as 100g-4x.

PowerSwitch CLI example:

port-group 1/1/1

profile unrestricted

port 1/1/1 mode Eth 100g-4x

port 1/1/2 mode Eth 100g-4x
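When the same settings have to be repeated for several port-groups, the lines can be generated rather than typed. The helper below is a hypothetical sketch that only reproduces the command forms shown in the CLI example; `render_port_group` is not a Dell tool, and which ports belong to which port-group on a given switch is an assumption that must be checked against the deployment guide.

```python
def render_port_group(port_group, ports, profile="unrestricted", mode="100g-4x"):
    """Return the CLI lines for one port-group, in the order shown above.

    port_group: port-group identifier, for example "1/1/1"
    ports: ports that belong to that port-group (caller-supplied assumption)
    """
    lines = [f"port-group {port_group}", f"profile {profile}"]
    lines += [f"port {port} mode Eth {mode}" for port in ports]
    return lines


# Reproduces the example above for port-group 1/1/1.
example = render_port_group("1/1/1", ["1/1/1", "1/1/2"])
print("\n".join(example))
```

The output should be reviewed before it is pasted into a switch, since the helper does no validation of port names or modes.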

Note: In a 100 GbE solution deployment, a maximum of 14 chassis is supported in a single fabric, and a maximum of 7 chassis is supported in a dual fabric, when using the same pair of FSEs.

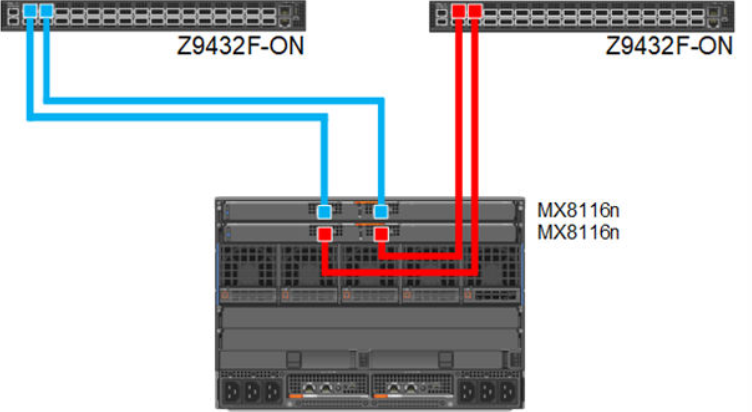

Single fabric

In a single fabric deployment, two MX8116n FEMs can be installed in either Fabric A or Fabric B, and the 100 GbE mezzanine card is installed in the corresponding mezzanine slot (slot A or slot B) of the sled.

Figure 5. 100 GbE Single Fabric

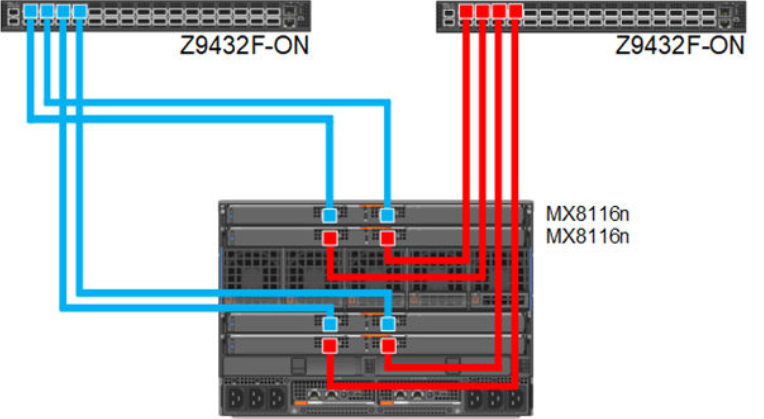

Dual fabric combined fabrics

In this option, four MX8116n FEMs (2x in Fabric A and 2x in Fabric B) can be installed and combined to connect to the Z9432F-ON external FSE.

Figure 6. 100 GbE Dual Fabric combined Fabrics
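The chassis limits noted earlier (14 in a single fabric, 7 in a dual fabric on the same FSE pair) can be combined with the FEM counts for these two options to compare scale. The sketch below uses only numbers stated in this post, including the limit of eight sleds per MX7000 chassis; the `FabricOption` type and field names are illustrative.

```python
from dataclasses import dataclass

SLEDS_PER_CHASSIS = 8  # up to eight MX760c sleds per MX7000 chassis


@dataclass(frozen=True)
class FabricOption:
    name: str
    fems_per_chassis: int  # MX8116n modules installed per chassis
    max_chassis: int       # limit on one pair of FSEs

    def total_fems(self):
        return self.fems_per_chassis * self.max_chassis

    def total_sleds(self):
        return SLEDS_PER_CHASSIS * self.max_chassis


single = FabricOption("single fabric", fems_per_chassis=2, max_chassis=14)
dual = FabricOption("dual fabric, combined", fems_per_chassis=4, max_chassis=7)

for option in (single, dual):
    print(option.name, option.total_fems(), option.total_sleds())
# single fabric 28 112
# dual fabric, combined 28 56
```

Both options come to 28 FEMs at their maximum, which suggests the chassis limit is set by how many FEMs one FSE pair can serve. That is an inference from the arithmetic, not a statement from the deployment guide.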

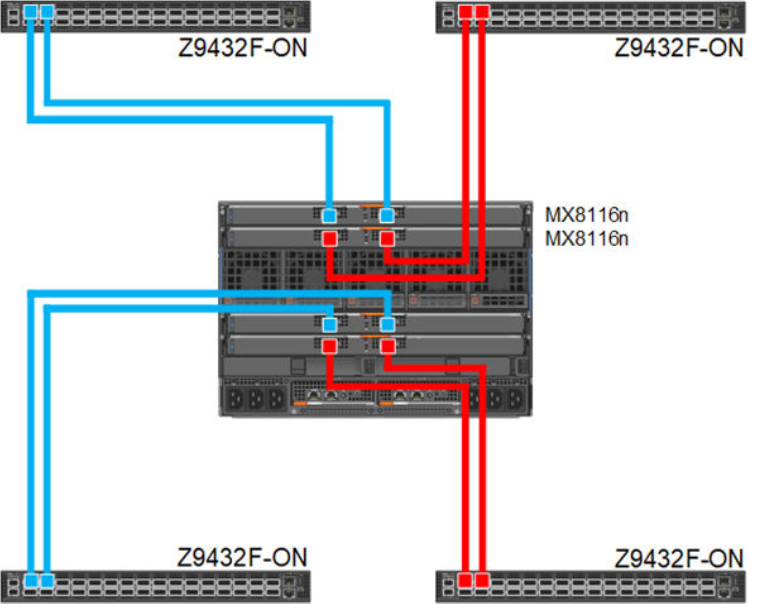

Dual fabric separate fabrics

In this option, four MX8116n FEMs (2x in Fabric A and 2x in Fabric B) can be installed and connected to two different networks. In this case, the MX760c server module has two mezzanine cards, with each card connected to a separate network.

Figure 7. 100 GbE Dual Fabric separate Fabrics

Dual fabric, single MX8116n in each fabric, separate fabrics

In this option, two MX8116n FEMs (1x in Fabric A and 1x in Fabric B) can be installed and connected to two different networks. In this case, the MX760c server module has two mezzanine cards, with each card connected to a separate network.

Figure 8. 100 GbE Dual Fabric single FEM in separate Fabrics

References

Dell PowerEdge Networking Deployment Guide

A chapter about 100 GbE solution with external Fabric Switching Engine

What are the Dell SmartFabric™ Products?

Mon, 26 Jun 2023 14:09:10 -0000


This blog aims to clarify the range of Dell Technologies’ SmartFabric branded products available today. We are always innovating here at Dell, so look out for more to come!

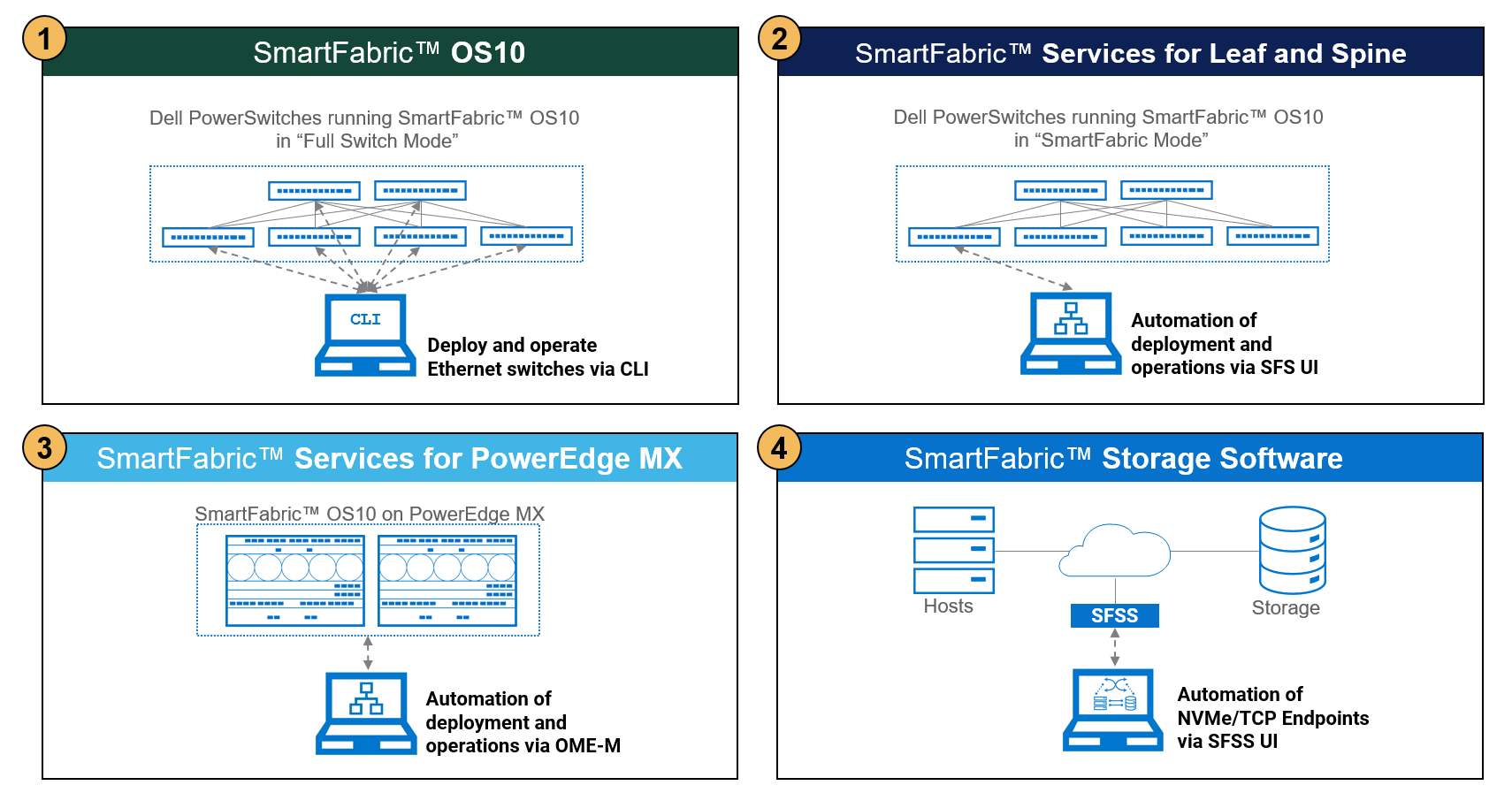

Here are four solutions that use the SmartFabric brand:

The following table provides links to further documentation, products that are interoperable with the solution, and how an administrator can access the product.

| # | Product | What does it do? | Related products | User Interface |
|---|---|---|---|---|
| 1 | SmartFabric OS10 | Operates Dell PowerSwitch switches as the network operating system. OS10 can run in “Full switch mode” or “SmartFabric mode.” | Dell PowerSwitch switches listed in the SmartFabric OS10 Hardware Compatibility List. | |
| 2 | SmartFabric Services (SFS) for Leaf and Spine | Automates configuration of Dell PowerSwitch switches that run SmartFabric OS10 in SmartFabric mode. Applies to SFS on Spine/Leaf and Top of Rack (ToR) switches. | Dell PowerSwitch switches. Visit the Networking Support & Interoperability Matrix, and then select this icon: | |
| 3 | SmartFabric Services (SFS) for PowerEdge MX | Automates configuration of SmartFabric OS10 operating system on the Ethernet switches (IOMs) in the PowerEdge MX Platform. | Dell PowerEdge MX | |
| 4 | SmartFabric Storage Software (SFSS) | Provides zoning and autodiscovery of hosts and storage in NVMe over TCP SANs. | Server and Storage Operating Systems. Visit the Networking Support & Interoperability Matrix, and then select this icon: | |

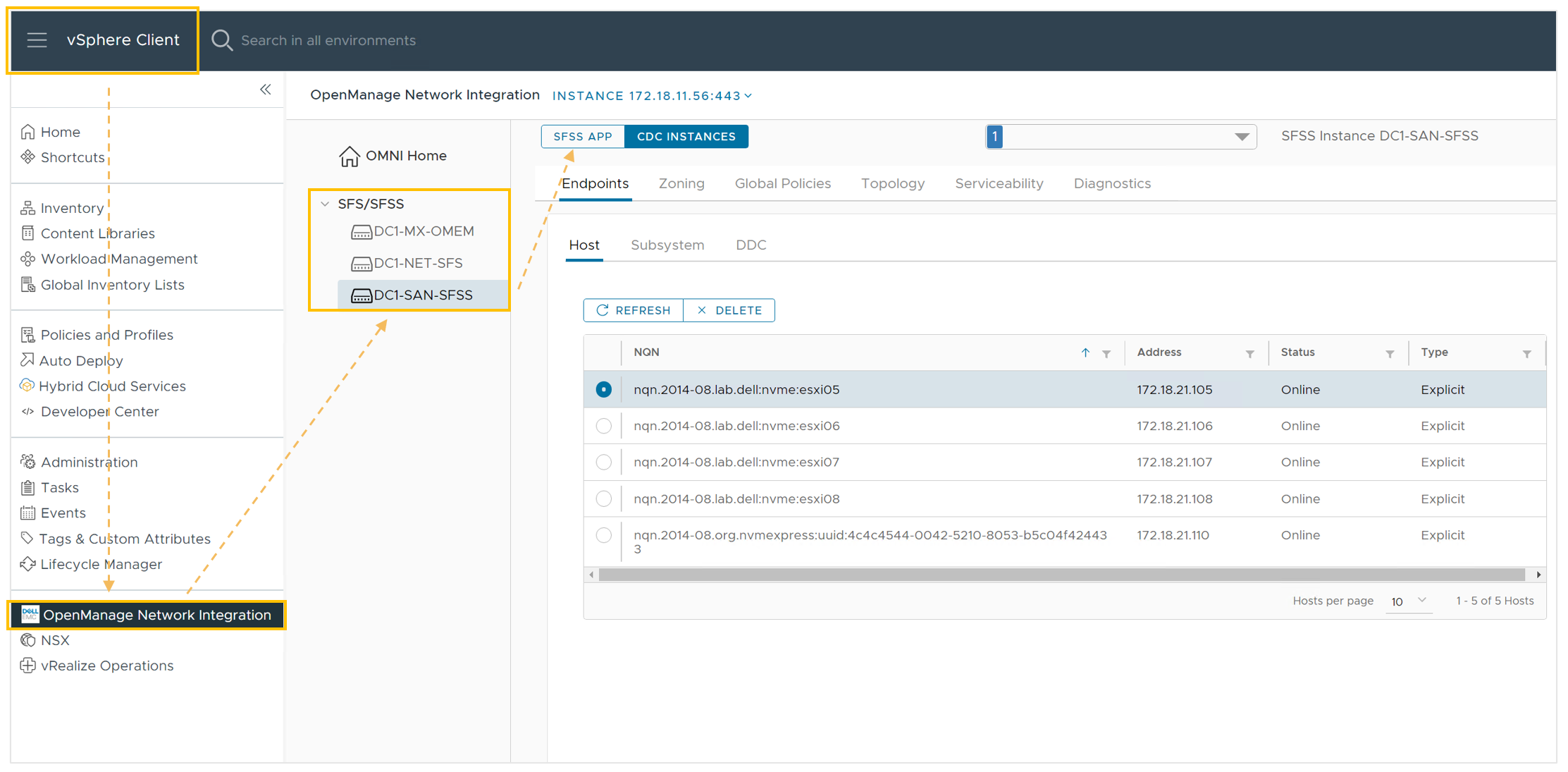

OpenManage Network Integration (OMNI)

OMNI is a vCenter plug-in that brings the user interface of the SmartFabric product into your vCenter UI, making vCenter a single pane of glass.

Here is an example of the OMNI vCenter plug-in facilitating access to a SmartFabric Services instance, and two SmartFabric Storage Software instances:

If you are interested in exploring any of the SmartFabric products further, browse the links above or reach out to the Dell Technologies sales team.