Dell NativeEdge Speeds Edge Deployments with FIDO Device Onboard (FDO)

Tue, 26 Sep 2023 19:15:00 -0000


Edge computing is generally defined as “a distributed computing paradigm that brings computation and data storage closer to the sources of data.1” The goal of this approach is to improve response times and save bandwidth.

Beyond this definition, edge computing is critical for enterprises to drive innovation and business outcomes. Existing approaches to the edge have led to technology silos, unscalable operations, poor infrastructure utilization, and inflexible legacy ecosystems. The massive proliferation of diverse edge devices has also increased exposure to cyberattacks. Dell has addressed these challenges with the new NativeEdge solution, a key feature of which is the ability to deploy edge devices swiftly and securely. At the root of this capability is FIDO Device Onboard (FDO), an open standard defined by technology leaders within the FIDO Alliance to automatically and securely onboard devices within edge deployments as diverse as retail, manufacturing, and energy. The FDO implementation used by Dell is based on the open-source implementation that has been contributed to the Linux Foundation Edge project by Intel.

The integration of FIDO Device Onboard (FDO) with the Dell NativeEdge solution helps organizations deploy and manage infrastructure at the edge by using zero-trust principles and a streamlined supply chain to secure the edge environment at scale. “Intel developed and contributed the base technology that became FDO. Our work with Dell and the FIDO Alliance is a great example of the power of collaboration to address the continuously evolving threat landscape faced by our edge customers,” said Sunita Shenoy, Senior Director, Edge Technology Product Management at Intel.

“Edge computing is transforming industries and we are delighted that FDO is a key component in Dell's innovative NativeEdge platform,” said Andrew Shikiar, executive director and CMO of the FIDO Alliance. See the press release here: FIDO Device Onboard (FDO) Certification Program is Launched to Enable Faster, More Secure, Deployments of Edge Nodes and IoT Devices

In this blog, we look at the edge challenges and three key elements that seek to address them: first, Dell’s NativeEdge solution (described here); second, the FIDO Device Onboard (FDO) standard; and third, the Linux Foundation Edge open-source software implementation of FDO (described here).

Business Challenges at the Edge

Recent years have seen a significant shift towards the edge, as more companies deploy devices that increase the demand for more data and analytics. By deploying devices to the edge, companies can reduce latency, improve the speed of data processing, and enhance security. Further, deploying devices at the edge can also help reduce bandwidth consumption and minimize the costs that are associated with transmitting large amounts of data to the cloud. The deployment of devices at the edge has therefore become a crucial component of modern technology infrastructure, enabling businesses to improve their operational efficiency and deliver better customer experiences.

The Dell NativeEdge Solution

The NativeEdge operations software platform enables organizations to securely deploy and manage infrastructure at the edge. NativeEdge supports a wide range of NativeEdge Endpoints. It uses zero-trust principles, combined with a holistic factory integration approach and application orchestration, to create a secure edge environment. It can start small with a single device and scale out as needed, and it can be deployed centrally or globally, regardless of network connectivity challenges, absence of technical staff, or facility environment.

Driving Improved Return on Investment at the Edge

In an internal Dell analysis2 consisting of return-on-investment modeling together with nearly a hundred Dell customer interviews, and a third-party environmental consultant review for methodology validation, Dell examined the potential economic impact of running NativeEdge across 25 facilities of a composite manufacturing company.

The study found that after three years, the company could expect to see the following benefits:

- Up to 132-percent return on investment for Dell NativeEdge platform costs

- An average time saving of 20 minutes per month for every edge infrastructure asset managed with NativeEdge

- Savings on transportation costs by decreasing the need for site-support dispatches, helping to reduce travel time, and eliminating up to 14 metric tons of carbon dioxide emissions
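To put the per-asset time saving in perspective, the arithmetic can be worked through in a short Python sketch. The 20-minute and three-year figures come from the study above; the 500-asset estate, the benefit and cost inputs, and the conventional ROI formula are assumptions for illustration only, since the study's exact method is not given here.

```python
# Figures quoted from the study: 20 minutes saved per asset per month, over 3 years.
MINUTES_SAVED_PER_ASSET_PER_MONTH = 20
STUDY_MONTHS = 36


def hours_saved(asset_count: int, months: int = STUDY_MONTHS) -> float:
    """Total staff hours saved across `asset_count` managed assets."""
    return asset_count * months * MINUTES_SAVED_PER_ASSET_PER_MONTH / 60


def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Conventional ROI (an assumed definition): net benefit as a percentage of cost."""
    return (total_benefit - total_cost) / total_cost * 100


print(hours_saved(1))                     # 12.0 hours per asset over three years
print(hours_saved(500))                   # 6000.0 hours for a hypothetical 500-asset estate
print(round(roi_percent(232.0, 100.0)))   # 132, using made-up benefit and cost inputs
```

Under these assumptions, a 132-percent ROI means that every unit of platform cost returns the original unit plus 1.32 units of net benefit.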

Key Elements of Dell NativeEdge

As these figures show, NativeEdge is designed to address the major aspects of managing an edge system. The first two of these aspects are closely linked: zero-touch provisioning (also known as onboarding) and zero-trust security, a key tenet of which is “Never trust, always verify.”

Automating the Onboarding Process with FIDO Device Onboard (FDO)

Traditionally, the installation of edge devices has been a cumbersome and time-consuming process. Edge installers, who could be individuals such as retail store managers or factory plant managers, may lack the expertise to manage complex edge devices and operating system installations. This highlights the importance of ensuring that edge devices are user-friendly and straightforward to deploy, as mistakes in manual onboarding can lead to security issues as well as service outages.

With NativeEdge, anyone can easily set up a NativeEdge Endpoint by simply plugging in a network cable, powering on the device, and stepping away. By leveraging the FIDO Alliance’s open standard known as FIDO Device Onboard Specification 1.1, Dell assures a streamlined installation process that is as easy as possible. The FIDO Alliance is a standards organization with over 250 members that was formed in 2012 with the goal of “simpler, stronger authentication.”

Leaders in technology from the FIDO Alliance (including Intel, Amazon, Google, Qualcomm, and Arm) created FDO. It is an open specification that defines an approach which combines 'plug and play'-like simplicity with the highest levels of security. It fully aligns with the zero-trust security framework in that neither the edge device nor the platform onto which it is being onboarded is trusted before onboarding takes place. FDO extends zero trust from the installation point back to the manufacturer.

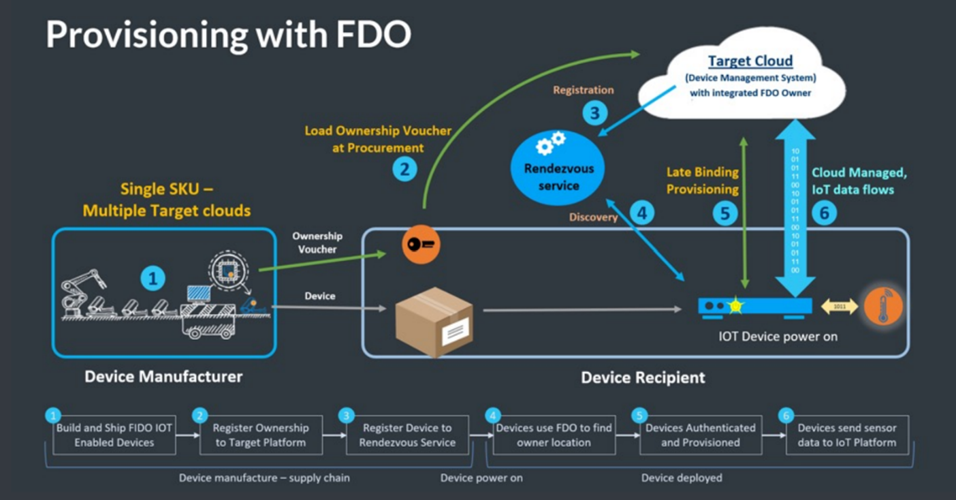

How FIDO Device Onboard (FDO) Works

The following steps are aligned with the numbers in the figure:

- At the manufacturing stage of the device (or later if preferred), the FDO software client is installed on the device. A trusted key (sometimes called an IDevID or LDevID) is also created inside the device to uniquely identify it. This key may be built into the silicon processor (or an associated Trusted Platform Module, known as a TPM) or protected within the file system. Other FDO credentials are also placed in the device. A digital proof of ownership, known as the Ownership Voucher (represented as the orange/black key shape in the figure), is created outside the device. This self-protected digital document can be transmitted as a text file. The Ownership Voucher allows the owner of the device to identify themselves during the onboarding process.

- The device passes its way through the supply chain (for example, from distributor to VAR). The Ownership Voucher file follows a parallel path.

- Once the target cloud or platform is selected by the device owner, the Ownership Voucher is sent to that cloud/platform. In turn, the Ownership Voucher is registered with the Rendezvous Server (RV). The RV acts in a comparable way to a Domain Name System (DNS) service.

- When the time for device onboarding comes, the device is connected to the network and powered on. After the device boots up, it uses the Rendezvous Server (RV) to find its target cloud/platform. Both on-premises and cloud-based RVs can be programmed into the device.

- Based on the information provided by the RV, the device contacts the cloud/platform. The device uses its trusted key to uniquely identify itself to the cloud/platform, and in return the cloud/platform identifies itself as the device owner using the Ownership Voucher. Next, the device and owner perform a cryptographic trick called a key exchange to create a secured, encrypted tunnel between them.

- The cloud/platform can now download credentials and software agents over this encrypted tunnel (or whatever else is needed for correct device operation and management). FDO allows any kind of credential to be downloaded, so that solution owners do not have to change their existing solution when they adopt FDO.

Finally, having finished the FDO process, the device contacts its management platform, which is the platform that manages it for the rest of its lifecycle. FDO then lies dormant, although it can be re-awakened if needed, such as if the device is sold or repurposed.
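The rendezvous lookup and key exchange described above can be sketched in a few lines of Python. This is a minimal illustration, not the FDO protocol itself. The `RendezvousServer` class, the device GUID, the owner URL, and the toy Diffie-Hellman parameters are all assumptions made for the example. The Ownership Voucher and device-key checks that FDO performs before the key exchange are left out.

```python
import hashlib
import secrets

# Toy Diffie-Hellman parameters, for illustration only. A real deployment
# uses the key-exchange groups or curves selected by the FDO specification.
PRIME = 2**127 - 1
GENERATOR = 3


class RendezvousServer:
    """Maps a device GUID to the address of its owner platform (DNS-like)."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}

    def register(self, guid: str, owner_address: str) -> None:
        self._owners[guid] = owner_address

    def lookup(self, guid: str) -> str:
        return self._owners[guid]


def dh_keypair() -> tuple[int, int]:
    """Return a (private, public) pair for the toy group above."""
    private = secrets.randbelow(PRIME - 2) + 1
    return private, pow(GENERATOR, private, PRIME)


def session_key(own_private: int, peer_public: int) -> bytes:
    """Derive a symmetric key from the shared Diffie-Hellman secret."""
    shared = pow(peer_public, own_private, PRIME)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


# The owner platform registers the device with the rendezvous server.
rv = RendezvousServer()
rv.register("device-guid-1234", "https://owner.example.com")

# On first boot, the device asks the rendezvous server where its owner is.
owner_address = rv.lookup("device-guid-1234")

# Device and owner each create a key pair and exchange only the public halves.
device_priv, device_pub = dh_keypair()
owner_priv, owner_pub = dh_keypair()

# Both sides arrive at the same key without ever sending it over the network.
print(session_key(device_priv, owner_pub) == session_key(owner_priv, device_pub))  # True
```

The point of the sketch is the division of labor: the rendezvous server only answers "where is my owner?", while the secret that protects the later credential download is derived independently on each side.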

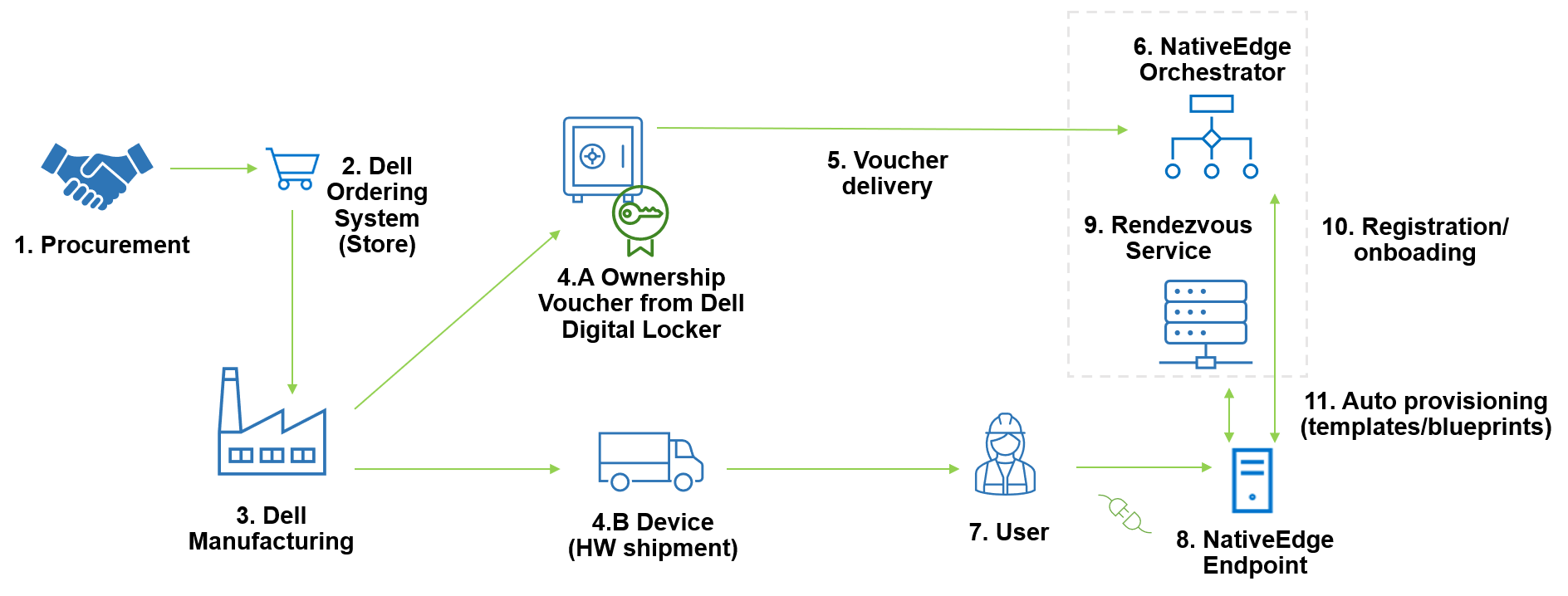

Dell NativeEdge FDO End-to-End Integration

Dell has integrated FDO into many elements of its NativeEdge solution, from its secure manufacturing facilities to the Dell Digital Locker used to store Ownership Vouchers to the NativeEdge Orchestrator. A full and detailed description of how FDO has been dovetailed into NativeEdge is available here.

The following diagram shows the FDO process applied within the NativeEdge environment.

The numbered steps in the diagram are explained in detail below:

- In the procurement process, the user selects the device configuration and places an order in the Dell store.

- The Dell store receives the order and sends information to the Dell manufacturing facility.

- The Dell manufacturing facility builds the device and creates the Ownership Voucher.

- The following sub-steps occur simultaneously:

- The Dell manufacturing facility transfers the Ownership Voucher to the end user. This credential is passed through the supply chain, allowing the device owner to verify the device, and also giving the device a mechanism to verify its owner.

- The Dell manufacturing facility ships the NativeEdge Endpoint device to the user.

- The Ownership Voucher is delivered to the Edge Orchestrator that will control the device.

- The Edge Orchestrator now holds the device Ownership Voucher.

- Staff without IT skills unbox the device, cable it to the network, and power it on.

- Once connected to the network, the device contacts the Rendezvous Service configured in the device.

- The Rendezvous Service provides information to the device about which orchestrator it belongs to. The Rendezvous Server (which may be part of the NativeEdge Orchestrator or a separate system) is a service that acts as a rendezvous point between a newly powered-on device and the owner onboarding service.

- Once the device connects to the NativeEdge Orchestrator that holds its Ownership Voucher, it starts the Secure Component Verification (SCV) process, and if it passes, it starts the registration and onboarding. This secure onboarding process includes device and ownership identification as well as component validation. SCV is part of Dell Supply Chain Security (described here).

- Once the onboarding is finished, the device is automatically provisioned with the deployment of pre-defined templates and blueprints that have been assigned to the device.
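The gating logic in the last steps (voucher held, component verification passed, then provisioning) can be modeled as a small state check. The following Python sketch is a simplified illustration under stated assumptions. The `Device` and `Orchestrator` classes, the serial numbers, and the blueprint names are hypothetical and do not reflect the NativeEdge Orchestrator API.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    serial: str
    scv_passed: bool  # outcome of Secure Component Verification (SCV)
    state: str = "shipped"
    applied: list[str] = field(default_factory=list)


@dataclass
class Orchestrator:
    # Serial numbers for which this orchestrator holds an Ownership Voucher.
    vouchers: set[str] = field(default_factory=set)
    # Templates and blueprints pre-assigned to each device (hypothetical names).
    blueprints: dict[str, list[str]] = field(default_factory=dict)

    def onboard(self, device: Device) -> str:
        """Onboard a device only if it is owned here and passes verification."""
        if device.serial not in self.vouchers:
            device.state = "rejected: no ownership voucher"
        elif not device.scv_passed:
            device.state = "rejected: component verification failed"
        else:
            device.applied = list(self.blueprints.get(device.serial, []))
            device.state = "provisioned"
        return device.state


orch = Orchestrator(
    vouchers={"SN-001", "SN-003"},
    blueprints={"SN-001": ["base-os", "retail-app"]},
)
print(orch.onboard(Device("SN-001", scv_passed=True)))   # provisioned
print(orch.onboard(Device("SN-002", scv_passed=True)))   # rejected: no ownership voucher
print(orch.onboard(Device("SN-003", scv_passed=False)))  # rejected: component verification failed
```

Each check happens before any template is applied, which mirrors the order in the steps above: ownership first, component validation second, provisioning last.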

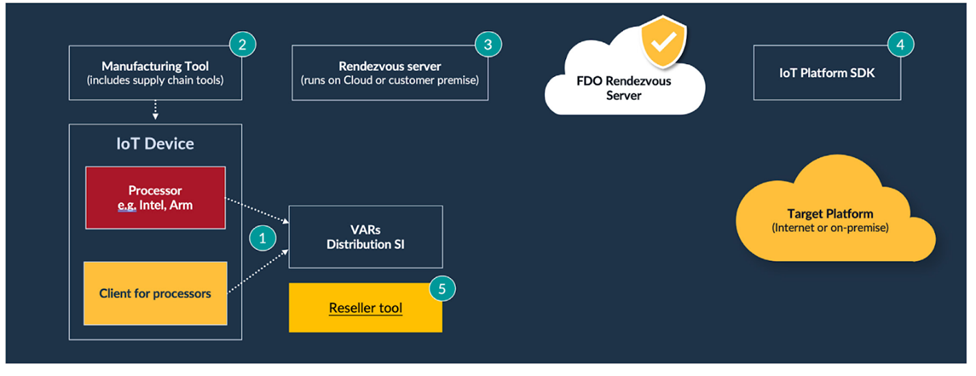

Implementing FDO with the Linux Foundation Edge Open-Source Implementation

Software implementations of FDO consist of several functional elements, which are highlighted in the following generic FDO tool diagram.

The numbered steps in the diagram are described in further detail as follows:

- The FDO client is placed on the device.

- The Manufacturing Tool installs the device credentials and creates the Ownership Voucher.

- The Rendezvous Server can be run in the cloud or on-premises.

- The FDO Platform Software Development Kit (SDK) is integrated into the target cloud or on-premises platform.

- A Reseller tool can be used by the supply chain ecosystem to extend the Ownership Voucher’s cryptographic key.

- Additionally, tools provide initial network access for the device (not shown).

Companies have a range of options when implementing the FDO software. They can develop the software themselves directly from the specification, use one of the commercially available implementations of FDO (for example, Red Hat), or they can use the Linux Foundation Edge implementation (described here).

The FDO software within the Linux Foundation Edge has been developed and contributed by Intel, one of the authors of the FDO specification. The code is a mixture of C and Java (depending on which part of the FDO system is being implemented). It offers client software for both Intel and other processors including Arm.

NativeEdge - Delivering on the Edge Promise

With NativeEdge, Dell set a simple but critical goal: allow customers to deploy edge solutions quickly and securely and then manage them effectively throughout their lifetime. As with all simple goals, the challenge is in developing a solution that fully delivers on the promise. With NativeEdge, Dell has taken full advantage of FIDO Device Onboard (FDO) together with the Linux Foundation Edge FDO project code to build on an open onboarding standard that fully supports Dell’s mission to simplify deployment and management at the edge while delivering the highest levels of security. NativeEdge is now available for customers to deploy at scale.

1 https://en.wikipedia.org/wiki/Edge_computing

2 Based on internal analysis, May 2023. The internal analysis consisted of internal modeling, customer interviews, and third-party environmental consultant review for methodology validation.

Related Blog Posts

Dell NativeEdge Platform Empowers Secure Application Delivery

Tue, 08 Aug 2023 14:31:00 -0000


Introduction

With an ever-evolving digital landscape and most edge use cases built around brownfield applications, IT operations have become a challenging matter for many organizations, particularly when bringing workloads to the enterprise edge.

These edge operational challenges include:

- Security of data and assets—Many of these assets have no user or identity awareness.

- Proliferation of solution silos—Many solutions have a bespoke implementation.

- Supporting distant locations—Many of these locations have no skilled IT staff.

- Latency requirements—Many of these locations have limited bandwidth or are even completely disconnected.

- Fragmented technology landscape—Many of these solutions have been implemented over years of technology evolution.

- Environmental constraints—Many of these solutions require extended temperature, vibration, and shock resilience and have use-case specific regulatory requirements.

Edge lives outside data centers in the real world where we live. It is located where data is captured close to devices or endpoints, to generate immediate and actionable insights.

We are experiencing a perfect storm of innovation driven by an explosion of data (IoT, telemetry, video, and streaming data), technology capabilities (multicloud, AI/ML, heterogeneous computing, software-defined, and 5G), and the resulting business challenges (security, compliance, productivity, and customer experience).

Security that is required at these locations needs a different approach:

- Security breaches can have a major effect on human well-being as they often impact essential infrastructure and services, such as power grids, housing, retail, transportation, schools, and hospitals.

- These failures can have a direct impact on everyday business operations and equipment, such as point of sale, automated optical inspection (AOI), overall equipment effectiveness (OEE), energy efficiency, telco base station monitoring, and patient care.

- Edge infrastructure requires the highest level of data security. Network devices are often located at dark sites without Internet access and require the highest level of data confidentiality, such as patient records which are bound to compliance and regulatory constraints.

Dell is committed to assisting customers with the simplification of edge operations as the demand for secure and efficient application delivery has become paramount. The Dell NativeEdge platform leverages the power of edge computing to revolutionize application delivery in a secure environment.

NativeEdge provides a unique set of assets in an edge operations software platform which allows IT operations to deliver application orchestration, multicloud connectivity, zero-touch onboarding, a zero-trust security approach, and infrastructure management.

Application Orchestration

NativeEdge provides a standardized framework for defining and deploying applications. This simplifies the management and scalability of complex edge environments while ensuring consistency and reliability in application orchestration.

Zero-Touch Provisioning

NativeEdge zero-touch provisioning is a feature that allows for the automatic and seamless deployment of NativeEdge Endpoints (OptiPlex, Gateways, and PowerEdge) without manual intervention. It enables quick and effortless setup by leveraging settings preconfigured at order and manufacturing time, eliminating the need for on-site configuration and reducing deployment time and effort.

Multicloud

NativeEdge multicloud capabilities allow NativeEdge Endpoints to connect and integrate with multiple cloud platforms. It enables organizations to leverage various cloud services and resources, such as storage, computing power, and analytics, across different cloud providers, which enhances flexibility and scalability in edge computing deployments.

Infrastructure Management

NativeEdge infrastructure management capabilities provide a comprehensive set of tools and features that enable centralized control and monitoring of NativeEdge Endpoints. It includes functions such as remote device management, software updates, configuration management, and performance monitoring—all of which enhance efficiency and simplify the management of edge computing infrastructure.

Zero Trust

Zero trust, as described in National Institute of Standards and Technology Special Publication (NIST SP) 800-207, is a security framework that challenges the traditional perimeter-based approach. It assumes that no user or device should be inherently trusted, requiring continuous verification and authentication of every access request. It aims to improve cybersecurity by minimizing risks and enforcing strict access controls regardless of location or network. A zero-trust solution starts with the seven pillars of security as defined by the Department of Defense (DoD): device trust, user trust, transport and session trust, data trust, software trust, and the two layers that provide visibility and analytics and automation and orchestration. Across the seven pillars, the DoD defines 45 capabilities and 152 zero-trust activities in total.
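The idea that every access request is verified on its own merits, rather than trusted because of where it comes from, can be illustrated with a short Python sketch. The `AccessRequest` fields and the `authorize` function are hypothetical and heavily simplified. They are not taken from NIST SP 800-207, the DoD model, or NativeEdge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user_authenticated: bool   # user trust
    device_attested: bool      # device trust
    session_encrypted: bool    # transport and session trust
    resource: str


def authorize(request: AccessRequest, allowed_resources: set[str]) -> bool:
    """Evaluate each request independently; nothing is trusted by default."""
    checks = (
        request.user_authenticated,
        request.device_attested,
        request.session_encrypted,
        request.resource in allowed_resources,
    )
    return all(checks)


allowed = {"telemetry", "app-logs"}
print(authorize(AccessRequest(True, True, True, "telemetry"), allowed))   # True
print(authorize(AccessRequest(True, False, True, "telemetry"), allowed))  # False: device not attested
```

There is no branch that grants access based on network location, and a single failed check is enough to deny the request.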

Conclusion

NativeEdge is a powerful and secure edge computing application delivery solution that combines features like zero-touch provisioning, multicloud capabilities, and robust infrastructure management. It provides seamless edge, core, and cloud deployment, integration with multiple cloud platforms, and centralized control, which brings scale to edge operations.


Curious to know more about NativeEdge capabilities? See Edge Security Essentials: Edge Security and How Dell NativeEdge Can Help, or visit Dell.com/NativeEdge and Dell Technologies Solutions Info Hub for NativeEdge.

Will AI Replace Software Developers?

Wed, 01 May 2024 10:41:32 -0000


Over the past year, I have been actively involved in generative artificial intelligence (Gen AI) projects aimed at assisting developers in generating high-quality code. Our team has also adopted Copilot as part of our development environment. These tools offer a wide range of capabilities that can significantly reduce development time. From automatically generating commit comments and code descriptions to suggesting the next logical code block, they have become indispensable in our workflow.

A recent study by McKinsey quantifies the level of productivity gain in the following areas:

Figure 1. Software engineering: speeding developer work as a coding assistant (McKinsey)

This study shows that “The direct impact of AI on the productivity of software engineering could range from 20 to 45 percent of current annual spending on the function. This value would arise primarily from reducing time spent on certain activities, such as generating initial code drafts, code correction and refactoring, root-cause analysis, and generating new system designs. By accelerating the coding process, Generative AI could push the skill sets and capabilities needed in software engineering toward code and architecture design. One study found that software developers using Microsoft’s GitHub Copilot completed tasks 56 percent faster than those not using the tool. An internal McKinsey empirical study of software engineering teams found those who were trained to use generative AI tools rapidly reduced the time needed to generate and refactor code and engineers also reported a better work experience, citing improvements in happiness, flow, and fulfilment.”

What Makes the Code Assistant (Copilot) the Killer App for Gen AI?

The remarkable progress of AI-based code generation owes its success to the unique characteristics of programming languages. Unlike natural language text, code adheres to a structured syntax with well-defined rules. This structure enables AI models to excel in analyzing and generating code.

Several factors contribute to the swift evolution of AI-driven code generation:

- Structured nature of code–Code follows a strict format, making it amenable to automated analysis. The consistent structure allows AI algorithms to learn patterns and generate syntactically correct code.

- Validation tools–Compilers and other development tools play a crucial role. They validate code for correctness, ensuring that generated code adheres to language specifications. This continuous feedback loop enables AI systems to improve without human intervention.

- Repeatable work identification–AI excels at identifying repetitive tasks. In software development, there are numerous areas where routine work occurs, such as boilerplate code, data transformations, and error handling. AI can efficiently recognize and automate these repetitive patterns.
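The validation feedback loop described above can be sketched as follows. The sketch uses Python's own parser as the validation tool and a stub function in place of a real code-generation model. The `validate`, `generate_until_valid`, and `stub_generator` names are assumptions for illustration. A real assistant would also run compilers, linters, and tests rather than a syntax check alone.

```python
import ast
from typing import Callable, Optional


def validate(source: str) -> Optional[str]:
    """Return None if `source` parses as Python, else a short error message."""
    try:
        ast.parse(source)
    except SyntaxError as err:
        return f"line {err.lineno}: {err.msg}"
    return None


def generate_until_valid(
    generate: Callable[[Optional[str]], str], max_attempts: int = 3
) -> Optional[str]:
    """Ask the generator for code, feeding validation errors back on each retry."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(feedback)
        feedback = validate(candidate)
        if feedback is None:
            return candidate
    return None  # no valid candidate within the attempt budget


def stub_generator(feedback: Optional[str]) -> str:
    # Stand-in for a model call: the first draft is broken, the retry is fixed.
    if feedback is None:
        return "def add(a, b) return a + b"
    return "def add(a, b):\n    return a + b"


print(generate_until_valid(stub_generator))
```

Because the validator is automated, the loop needs no human in it, which is the property the bullet on validation tools points to.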

From Coding Assistant to Fully-Autonomous AI Software Engineer

Cognition Labs (cognition-labs.com) describes itself as an applied AI lab focused on reasoning. It is the maker of Devin, which the company presents as the first AI software engineer.

Devin possesses remarkable capabilities in software development in the following areas:

- Complex engineering tasks–With advances in long-term reasoning and planning, Devin can plan and execute complex engineering tasks that involve thousands of decisions. Devin recalls relevant context at every step, learns over time, and even corrects mistakes.

- Coding and debugging–Devin can write code, debug, and address bugs in codebases. It autonomously finds and fixes issues, making it a valuable teammate for developers.

- End-to-end app development–Devin builds and deploys apps from scratch. For example, it can create an interactive website, incrementally adding features requested by the user and deploying the app.

- AI model training and fine-tuning–Devin sets up fine-tuning for large language models, demonstrating its ability to train and improve its own AI models.

- Collaboration and communication–Devin actively collaborates with users. It reports progress in real-time, accepts feedback, and engages in design choices as needed.

- Real-world challenges–Devin tackles real-world GitHub issues found in open-source projects. It can also contribute to mature production repositories and address feature requests. Devin even takes on real jobs on platforms like Upwork, writing and debugging code for computer vision models.

The Devin project is a clear indication of how fast we move from simple coding assistants to more complete engineering capabilities.

Will AI Replace Software Developers?

When I asked this question recently during a Copilot training session that our team took, the answer was “No”, or to be more precise “Not yet”. The common thinking is that it provides a productivity enhancement tool that will save developers from spending time on tedious tasks such as documentation, testing, and so on. This could have been true yesterday, but as seen with project Devin, it already goes beyond simple assistance to full development engineering. We can rely on the experience from past transformations to learn a bit more about where this is all heading.

Learning from Cloud Transformation: Parallels with Gen AI Transformation

The advent of cloud computing, pioneered by AWS approximately 15 years ago, revolutionized the entire IT landscape. It introduced the concept of fully automated, API-driven data centers, significantly reducing the need for traditional system administrators and IT operations personnel. However, beyond the mere shrinking of the IT job market, the following parallel events unfolded:

- Traditional IT jobs shrank significantly–Small to medium-sized companies can now operate their IT infrastructure without dedicated IT operators. The cloud’s self-service capabilities have made routine maintenance and management more accessible.

- Emergence of new job titles: DevOps, SRE, and more–As organizations embraced cloud technologies, new roles emerged. DevOps engineers, site reliability engineers (SREs), and other specialized positions became essential for optimizing cloud-based systems.

- The rise of SaaS startups–Cloud computing lowered the barriers of entry for delivering enterprise-grade solutions. Startups capitalized on this by becoming more agile and growing faster than established incumbents.

- Big tech companies’ accelerated growth–Tech giants like Google, Facebook, and Microsoft swiftly adopted cloud infrastructure. The self-service nature of APIs and SaaS offerings allowed them to scale rapidly, resulting in record growth rates.

Impact on Jobs and Budgets

While traditional IT jobs declined, the transformation also yielded positive outcomes:

- Increased efficiency and quality–Companies produced more products of higher quality at a fraction of the cost. The cloud’s scalability and automation played a pivotal role in achieving this.

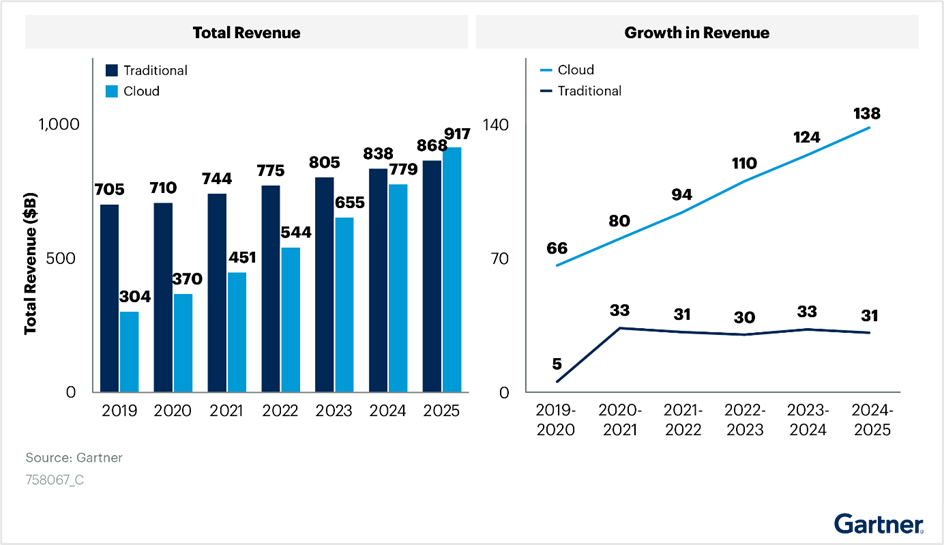

- Budget shift from traditional IT to cloud–Gartner’s IT spending reports reveal a clear shift in budget allocation. Cloud investments have grown steadily, even amidst the disruption caused by the introduction of cloud infrastructure (see the following figure).

Figure 2. Cloud transformation’s impact on IT budget allocation

Looking Ahead: AI Transformation

As we transition to the era of AI, we can anticipate similar trends:

- Decline in traditional jobs–Just as cloud computing transformed the job landscape, AI adoption may lead to the decline of certain traditional roles.

- Creation of new jobs–Simultaneously, AI will create novel opportunities. Roles related to AI development, machine learning, and data science will flourish.

Short Term Opportunity

Organizations will allocate more resources to AI initiatives. The transition to AI is not merely an evolutionary step; it is a strategic imperative.

According to research conducted by ISG on behalf of Glean, Generative AI projects consumed an average of 1.5 percent of IT budgets in 2023. These budgets are expected to rise to 2.7 percent in 2024 and further increase to 4.3 percent in 2025. Organizations recognize the potential of AI to enhance operational efficiency and bridge IT talent gaps. Gartner predicts that Generative AI impacts will be more pronounced in 2025. Despite this, worldwide IT spending is projected to grow by 8 percent in 2024. Organizations continue to invest in AI and automation to drive efficiency. The White House budget proposes allocating $75 billion for IT spending at civilian agencies in 2025. This substantial investment aims to deliver simple, seamless, and secure government services through technology.
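The pace of this shift is easier to see as year-over-year relative growth. The short Python calculation below uses only the three ISG percentages quoted above. The `relative_growth` helper is an illustrative name, and the result describes growth in budget share, not in absolute spending.

```python
# Share of IT budgets spent on Generative AI projects, in percent (ISG figures).
genai_share = {2023: 1.5, 2024: 2.7, 2025: 4.3}


def relative_growth(earlier: float, later: float) -> float:
    """Percentage increase from `earlier` to `later`."""
    return (later - earlier) / earlier * 100


print(round(relative_growth(genai_share[2023], genai_share[2024])))  # 80
print(round(relative_growth(genai_share[2024], genai_share[2025])))  # 59
```

In other words, the Generative AI share of the IT budget is expected to nearly triple between 2023 and 2025.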

The impact of AI extends far beyond the confines of the IT job market. It permeates nearly every facet of our professional landscape. As with any significant transformation, AI presents both risks and opportunities. Those who swiftly embrace it are more likely to seize the advantages.

So, what steps can software developers take to capitalize on this opportunity?

Tips for Software Developers in the Age of AI

In the immediate term, developers can enhance their effectiveness when working with AI assistants by acquiring a combination of the following technical skills:

- Learn AI basics–I recommend starting with AI Terms 101 and following the leading AI podcasts. I have found both useful for keeping up to date in this space and for picking up tips and updates from industry experts.

- Use coding assistant tools (Copilot)–Coding assistant tools are the low-hanging fruit and probably the simplest step into the AI development world. There is a growing list of tools that can be integrated seamlessly into your existing development IDE. A useful reference is The Top 11 AI Coding Assistants to Use in 2024.

- Learn machine learning (ML) and deep learning concepts–Understanding the fundamentals of ML and deep learning is crucial. Familiarize yourself with neural networks, training models, and optimization techniques.

- Data science and analytics–Developers should grasp data preprocessing, feature engineering, and model evaluation. Proficiency in tools like Pandas, NumPy, and scikit-learn is beneficial.

- Frameworks and tools–Learn about popular AI frameworks such as TensorFlow and PyTorch. These tools facilitate model building and deployment.

More skilled developers will need to learn how to create their own “AI engineers” which they will train and fine tune to assist them with user interface (UI), backend, and testing development tasks. They could even run a team of “AI engineers” to write an entire project.

Will AI Reduce the Demand for Software Engineers?

Not necessarily. As in the case of cloud transformation, developers with AI expertise will likely be in high demand. Those who are unable to adapt to this new world are likely to fall behind and risk losing their jobs.

It would be fair to assume that the scope of work, post-AI transformation, will grow and will not stay stagnant. As an example, we will likely see products adding more “self-driving” capabilities, where they could run more complete tasks without the need for human feedback or enable close to human interaction with the product.

Under this assumption, the scope of new AI projects and products is going to grow, and that growth should balance the declining demand for traditional software engineering jobs.

Conclusion

As a history enthusiast, I often find parallels in the past that can serve as a guide to our future. The industrial era witnessed disruptive technological advancements that reshaped job markets. Some professions became obsolete, while new ones emerged. As a society, we adapted quickly, discovering new growth avenues. However, the emergence of AI presents unique challenges. Unlike previous disruptions, AI simultaneously impacts a wide range of job markets and progresses at an unparalleled pace. The implications are indeed profound.

Recent research by Nexford University on How Will Artificial Intelligence Affect Jobs 2024-2030 reveals some startling predictions. According to a report by the investment bank Goldman Sachs, AI could potentially replace the equivalent of 300 million full-time jobs. It could automate a quarter of the work tasks in the US and Europe, leading to new job creation and a productivity surge. The report also suggests that AI could increase the total annual value of goods and services produced globally by 7 percent. It predicts that two-thirds of jobs in the US and Europe are susceptible to some degree of AI automation, and around a quarter of all jobs could be entirely performed by AI.

The concerns raised by Yuval Noah Harari, a historian and professor at the Department of History of the Hebrew University of Jerusalem, resonate with many. The rapid evolution of AI may indeed lead to significant unemployment.

However, when it comes to software engineers, we can assert with confidence that regardless of how automated our processes become, there will always be a fundamental need for human expertise. These skilled professionals perform critical tasks such as maintenance, updates, improvements, error corrections, and the setup of complex software and hardware systems. These systems often require coordination among multiple specialists for optimal functionality.

In addition to these responsibilities, computer system analysts play a pivotal role. They review system capabilities, manage workflows, schedule improvements, and drive automation. This profession has seen a surge in demand in recent years and is likely to remain in high demand.

In conclusion, AI represents both risk and opportunity. While it automates routine tasks, it also paves the way for innovation. Our response will ultimately determine its impact.

References

- Economic potential of generative AI | McKinsey

- Introducing Devin, the first AI software engineer (cognition-labs.com)

- IT Spending & Budgets: Trends & Forecasts 2024

- Organizations continue to invest in AI and automation to drive efficiency

- This substantial investment aims to deliver simple, seamless, and secure government services through technology

- AI Terms 101: An A to Z AI Terminology Guide for Beginners

- 11 AI Podcasts That Will Shape Your Perspective (geekflare.com)

- How Will Artificial Intelligence Affect Jobs 2024-2030 | Nexford University

- The Top 11 AI Coding Assistants to Use in 2024 | DataCamp

- Yuval Harari On The Future of Jobs & Technology, Intelligence vs Consciousness, & Future Threats to Humanity - Jacob Morgan (thefutureorganization.com)