Dell EMC Solutions for Azure Stack HCI Furthers Customer Value

Wed, 16 Jun 2021 13:35:49 -0000

As customers retire Microsoft Windows Server 2008 and move to software-defined infrastructure on Windows Server 2019, the core tenets of hyperconverged infrastructure (HCI) and hybrid cloud enablement remain key goals. Many customers, however, are unsure how best to leverage their investments in Windows Server to modernize their datacenters and take advantage of software-defined infrastructure.

At Dell Technologies, we hold leadership positions in converged, hyperconverged, and cloud infrastructures across several platforms, and we are a founding launch partner for Microsoft’s Azure Stack HCI solution. Building on three decades of partnership with Microsoft, we bring the insights and expertise to help our customers with their IT transformation using the software-defined features of Windows Server 2019, the foundational platform for Azure Stack HCI.

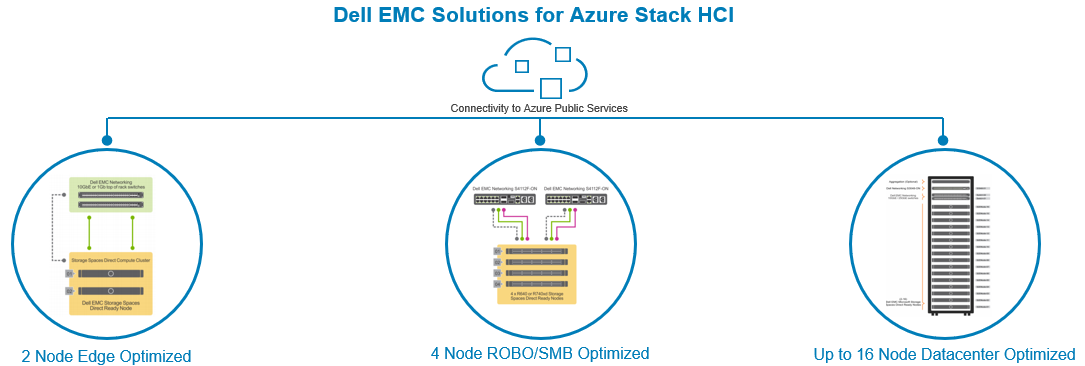

Built on globally available and supported Storage Spaces Direct (S2D) Ready Nodes, Dell EMC offers a wide range of Azure Stack HCI Solutions that provide an excellent value proposition for customers who have standardized on Microsoft Hyper-V and are looking to modernize IT infrastructure while utilizing their existing investments and expertise in Windows Server.

As we head to Microsoft’s largest customer event – Microsoft Ignite 2019 – we are delighted to share some new enhancements and offerings to our Azure Stack HCI solution portfolio.

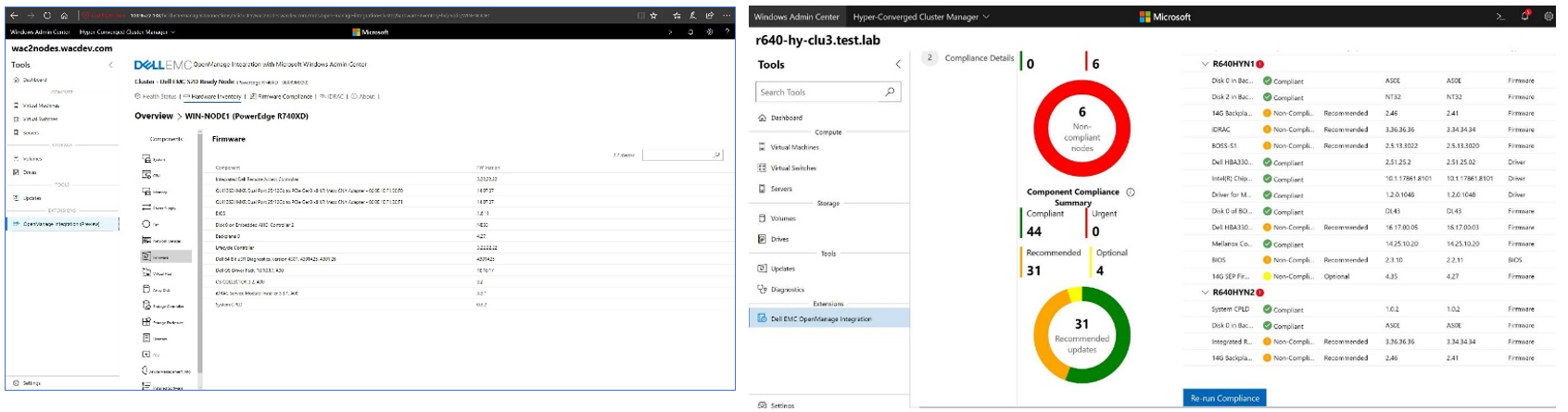

Simplifying Azure Stack HCI Management via Windows Admin Center (WAC)

With a goal of simplifying Azure Stack HCI management, we have integrated monitoring of S2D Ready Nodes into the Windows Admin Center (WAC) console. The Dell EMC OpenManage Extension for WAC allows our customers to manage Azure Stack HCI clusters from a single pane of glass. The current integration provides health monitoring, hardware inventory, and firmware compliance reporting of S2D Ready Nodes, the core building block of our Azure Stack HCI solution. By using this extension, infrastructure administrators can monitor all their clusters in real time and check whether the nodes are compliant with Dell EMC recommended firmware and driver versions. Customers who want to leverage the Azure public cloud to either extend or protect their on-prem applications can do so within the WAC console, using services such as Azure Backup, Azure Site Recovery, and Azure Monitor.

Here is what Greg Altman, IT Infrastructure Manager at Swiff-Train and one of our early customers, had to say about our OpenManage integration with WAC:

"The Dell EMC OpenManage Integration with Microsoft Windows Admin Center gives us full visibility to Dell EMC Solutions for Microsoft Azure Stack HCI, enabling us to more easily respond to situations before they become critical. With the new OpenManage integration, we can also manage Microsoft Azure Stack HCI from anywhere, even simultaneously managing our clusters located in different cities."

New HCI Node optimized for Edge and ROBO Use Cases

Customers looking to modernize infrastructure at edge, remote, or small office locations now have the option of using the new Dell EMC R440 S2D Ready Node, which is available in both hybrid and all-flash configurations. A 2-node Azure Stack HCI cluster is a great fit for use cases that call for limited hardware infrastructure yet still need superior performance, availability, and ease of remote management.

The dual-socket R440 S2D Ready Node is shallower (27.26 in. deep) than a typical rack server and comes in configurations of up to 8 or 10 2.5” drives, providing up to 76.6TB of all-flash capacity in a single 1U node.

The table below summarizes our S2D Ready Node portfolio.

| | R440 S2D RN | R640 S2D RN | R740xd S2D RN | R740xd2 S2D RN |
|---|---|---|---|---|
| Best For | Edge/ROBO and space (depth) constrained locations | Density optimized node for applications needing balance of high-performance storage and compute | Capacity and performance optimized node for applications needing balance of compute and storage | Capacity optimized node for data intensive applications and use cases such as backup and archive |
| Storage Configurations | Hybrid & All-Flash | Hybrid, All-Flash, All-NVMe including Intel Optane DC Persistent Memory | Hybrid, All-Flash, and All-NVMe | Hybrid with SSDs and 3.5” HDDs |

For detailed node specifications, please refer to our website.

Stepping up the Performance Capabilities

With applications and growing data analysis needs increasingly driving requirements for lower latency and higher capacity, it’s imperative that the underlying infrastructure does not create performance bottlenecks. The latest refresh of our solution includes several updates to scale infrastructure performance:

- All S2D Ready Nodes now support Intel 2nd Generation Xeon Scalable Processors that provide improved compute performance and security features.

- Support for Intel Optane SSDs and Intel Optane DC persistent memory (on the R640 S2D Ready Node) enables a lower latency storage and persistent memory tier to accelerate application performance. The R640 S2D Ready Node can be configured with 1.5TB of Optane DC persistent memory working in App Direct Mode to provide a cache tier for the NVMe storage local to the node.

- The new all-NVMe option on R640 S2D Ready Node provides a compact 1U node for applications that are sensitive to both compute and storage performance.

- Faster Networking Options: For applications needing high-bandwidth, low-latency network access, the R640 and R740xd S2D Ready Nodes can now be configured with Mellanox CX5 100Gb Ethernet adapters. In addition, we have qualified the PowerSwitch S5232 100Gb switch to provide a solution fully validated by Dell EMC.

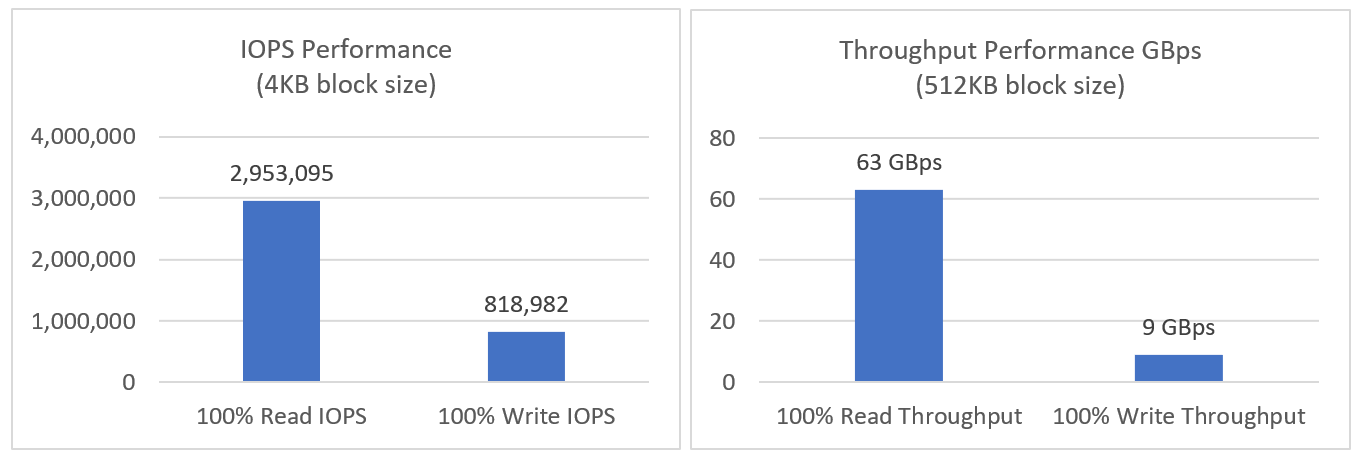

As we drove new hardware enhancements to our Azure Stack HCI portfolio, we also put a representative configuration to the test to see what performance customers can expect. With just a four-node Azure Stack HCI cluster of R640 S2D Ready Nodes configured with all-NVMe drives and 100Gb Ethernet, we observed:

- 2.95M IOPS with an average read latency of 242μs in a VM Fleet test configured for 4K block size and 100% reads

- 0.8M IOPS with an average write latency of 4121μs in a VM Fleet test configured for 4K block size and 100% writes

- Up to 63GB/s of 100% sequential read throughput and 9GB/s of 100% sequential write throughput with 512KB block size
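As a rough sanity check on how these figures relate to one another, throughput is IOPS multiplied by block size, and Little's Law estimates the average number of I/Os in flight as IOPS multiplied by average latency. The sketch below applies both to the 4K results reported above. The function names are ours, purely for illustration, and the calculation assumes that "4K" means 4,096 bytes and that the reported averages describe a steady state across the whole cluster.

```python
def throughput_gb_per_s(iops: float, block_bytes: int) -> float:
    """Throughput in GB/s (decimal, 10**9 bytes) implied by an IOPS rate."""
    return iops * block_bytes / 1e9


def outstanding_ios(iops: float, latency_us: float) -> float:
    """Little's Law: average I/Os in flight = completion rate x time per I/O."""
    return iops * latency_us / 1e6


BLOCK_4K = 4096  # assumption: the 4K block size is 4,096 bytes

# Figures taken from the VM Fleet results listed above.
read_gbps = throughput_gb_per_s(2.95e6, BLOCK_4K)
write_gbps = throughput_gb_per_s(0.8e6, BLOCK_4K)
read_in_flight = outstanding_ios(2.95e6, 242)
write_in_flight = outstanding_ios(0.8e6, 4121)

print(f"4K read:  {read_gbps:.2f} GB/s, ~{read_in_flight:.0f} I/Os in flight")
print(f"4K write: {write_gbps:.2f} GB/s, ~{write_in_flight:.0f} I/Os in flight")
```

By this estimate the 4K read test moves roughly 12 GB/s, well below the 63GB/s seen with 512KB sequential reads, which suggests the small-block tests are bounded by I/O rate rather than by bandwidth.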

Yes, you read that right. Not only is the solution compact and easy to manage, it also delivers tremendous performance.

Read our detailed blog for more information on our lab performance test results.

Overall, we are very excited to bring so many new capabilities to our customers. We invite you to come meet us at Microsoft Ignite 2019 at Booth 1547, talk to Dell EMC experts and see live demos. Besides the show floor, Dell EMC experts will also be available at Hyatt Regency Hotel, Level 3, Discovery 43 Suite for detailed conversations. Register here for time with our experts.

Meanwhile, visit our website for more details, or contact our team directly at s2d_readynode@dell.com if you have any questions.

Related Blog Posts

Test Drive Azure Stack HCI in the Dell Demo Center!

Thu, 13 Apr 2023 21:28:09 -0000

A picture is worth a thousand words, but the value of a good hands-on lab is immeasurable!

Our newly minted interactive demo and hands-on lab are published in the Dell Technologies Demo Center:

- The interactive demo (ITD-0910) offers an immersive look at all Azure Stack HCI management and governance features in Dell OpenManage Integration with Microsoft Windows Admin Center.

- If you're seeking a deep-dive into the Dell Integrated System for Microsoft Azure Stack HCI initial deployment experience, you may prefer the PowerShell-heavy hands-on lab (HOL-0313-01).

In this blog, we'll begin with a brief introduction to these test drives. Then, we'll share our list of other virtual labs that will prove invaluable on your journey to becoming an Azure Stack HCI champion. Fasten your seatbelt and get ready to take your skills to the next level!

The interactive demo can be accessed directly by all customers and partners. When first navigating to the Demo Center site, remember to click the Sign In drop-down menu in the upper right corner of the page.

At the present time, you will not see the hands-on lab appear in the Demo Center catalog. You will need to contact your Dell Technologies account team to gain access to HOL-0313-01.

Interactive Demo ITD-0910

Taking this demo is like competing in a Formula 1 or NASCAR race. It is fast-paced and remains within the secure confines of the track's guardrails. Each module in the demo guides you down a well-defined path that leads to a desired business outcome. Here is a summary of the benefits our OpenManage Integration extension delivers:

- Uses automation to streamline operational efficiency and flexibility

- Provides a consistent hybrid management experience by using a single Dell HCI Configuration Profile policy definition

- Reduces operational expense by intelligently right-sizing infrastructure to match your workload profile

- Ensures stability and security of infrastructure with real-time monitoring and lifecycle management

- Protects your IT estate from costly changes to configuration settings made inadvertently or maliciously

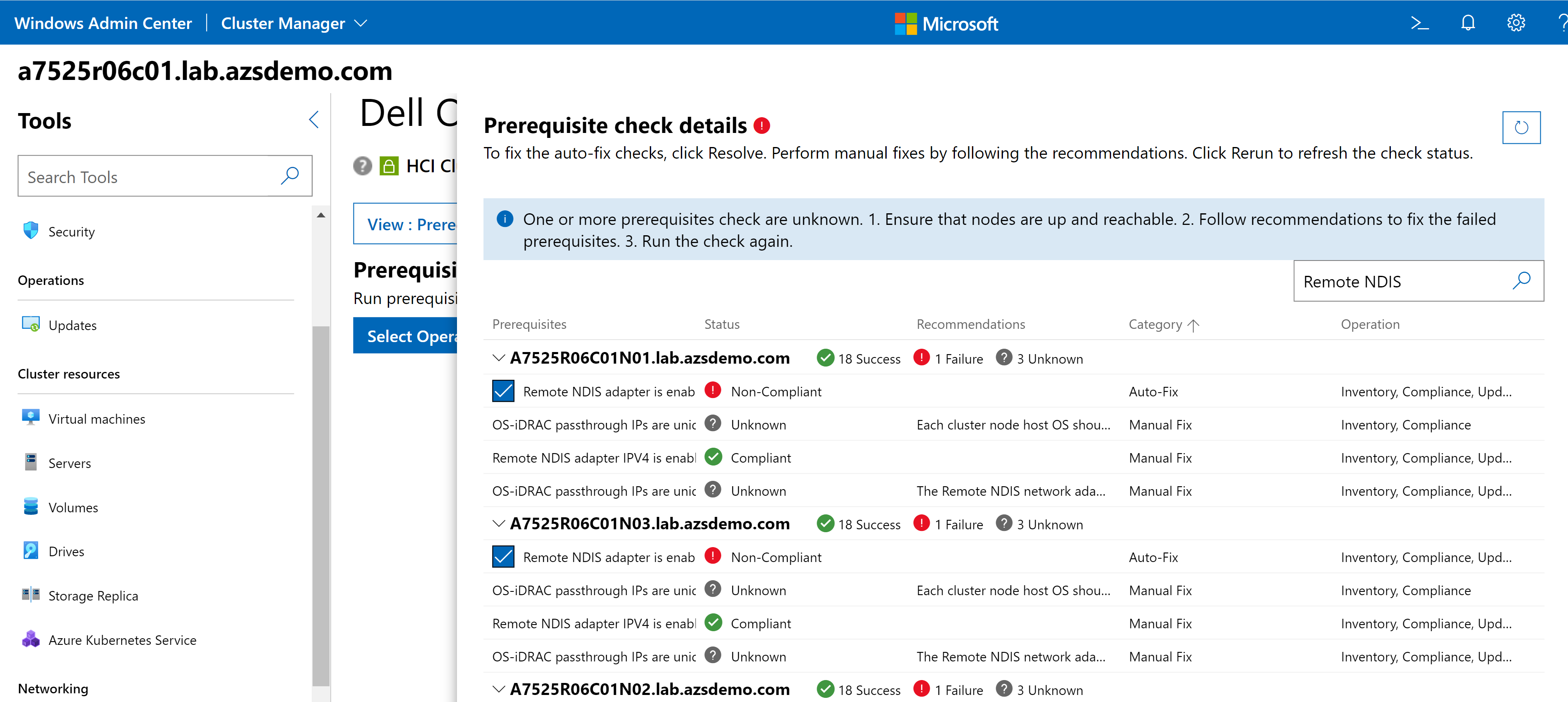

Whenever new features are released for our extension, you'll be able to familiarize yourself with them here first. In the latest release (v3.0), we completely revamped the user interface for improved usability and navigation. We also added server and cluster-level checks to ensure that all prerequisites are met for seamless enablement of management and monitoring operations. The following figure illustrates the results of a prerequisite check. In the interactive demo, you learn more about these failures and how to use the OpenManage Integration extension to fix them.

When we first start driving, we need our parents and teachers to provide turn-by-turn directions. If you're exploring the extension for the first time, you'll want to keep the guides enabled to aid your understanding.

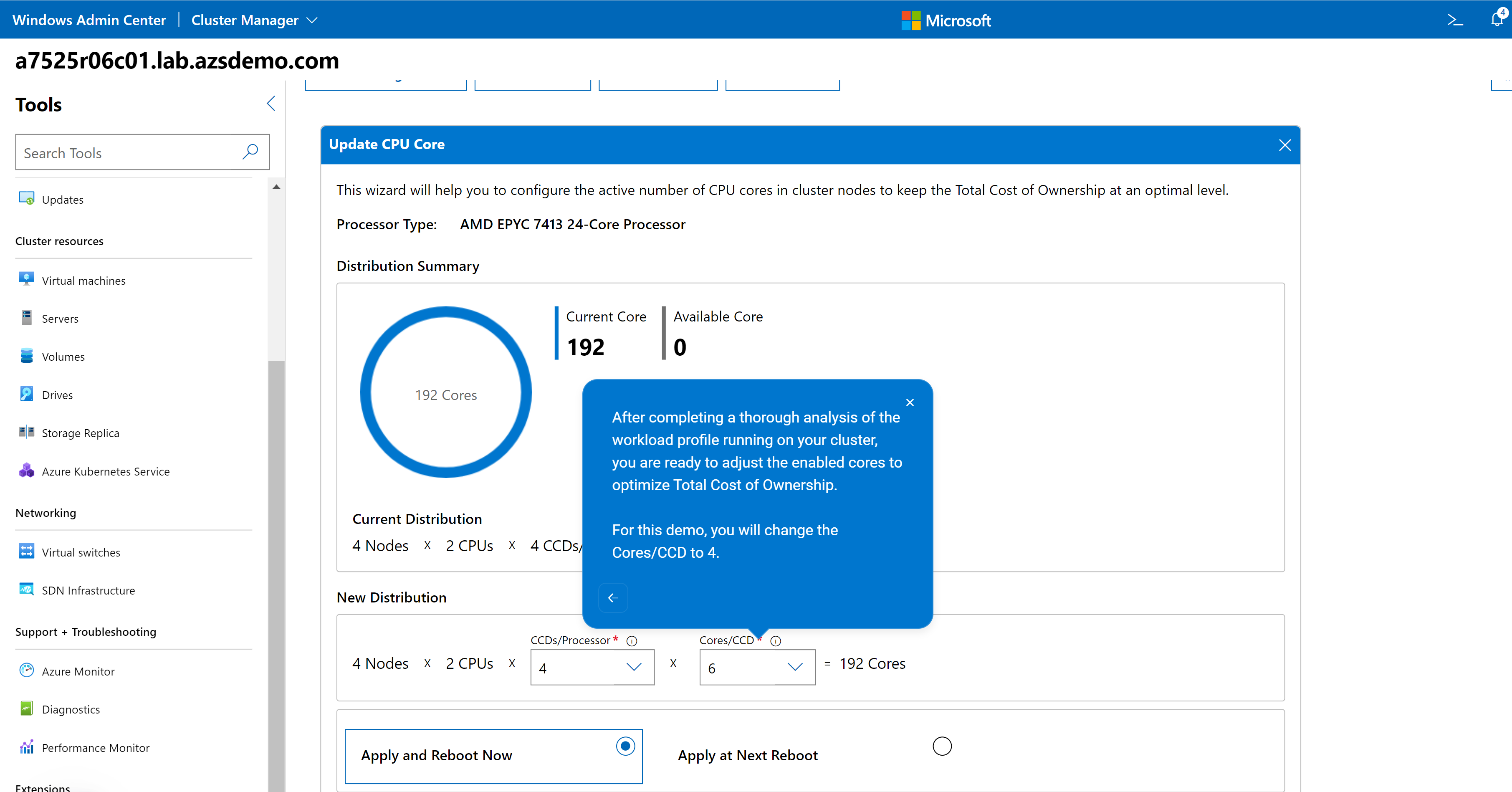

For example, consider the CPU Core Management feature. This feature allows you to right-size your Azure Stack HCI cluster by enabling or disabling CPU cores to meet the requirements of your workload profile. It can also help save on subscription costs because Azure Stack HCI hosts are billed by CPU core per month. The guide in the following figure reminds you that a thorough analysis of your workload characteristics is essential prior to reducing the enabled CPU cores on this cluster.

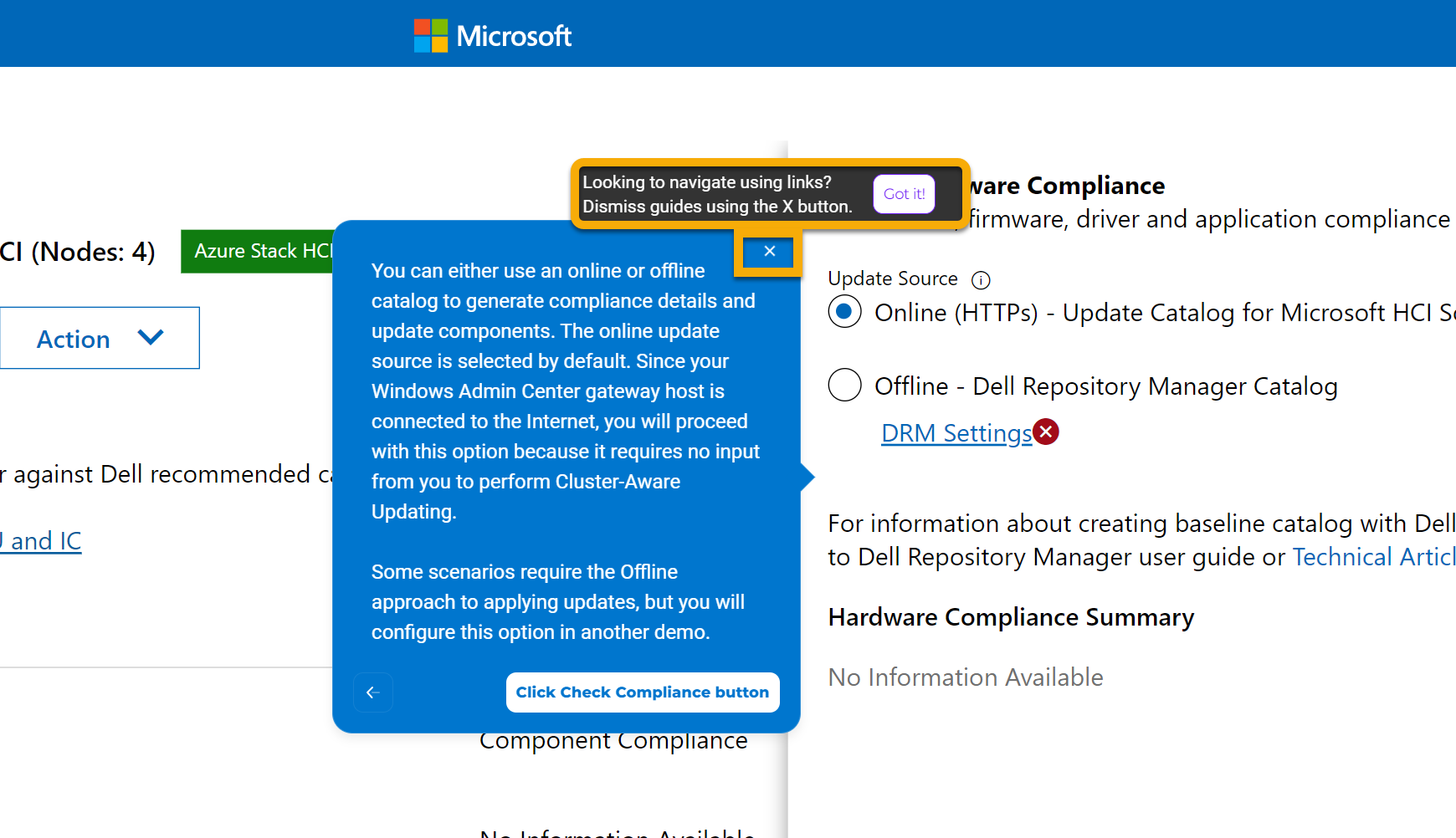
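To make the billing point concrete, here is a minimal sketch of the arithmetic behind per-core billing. The function names and the price are hypothetical placeholders introduced for illustration; this is not actual Azure Stack HCI pricing, and it assumes that every enabled core on every node is billed at the same monthly rate.

```python
def monthly_core_cost(nodes: int, enabled_cores_per_node: int,
                      price_per_core: float) -> float:
    """Monthly cost when every enabled core in the cluster is billed."""
    if nodes < 0 or enabled_cores_per_node < 0 or price_per_core < 0:
        raise ValueError("inputs must be non-negative")
    return nodes * enabled_cores_per_node * price_per_core


def monthly_savings(nodes: int, cores_before: int, cores_after: int,
                    price_per_core: float) -> float:
    """Cost difference after reducing the enabled cores on each node."""
    if cores_after > cores_before:
        raise ValueError("cores_after must not exceed cores_before")
    before = monthly_core_cost(nodes, cores_before, price_per_core)
    after = monthly_core_cost(nodes, cores_after, price_per_core)
    return before - after


# Hypothetical placeholder price per core per month, NOT a real list price.
PLACEHOLDER_PRICE = 10.0

# Example: a 4-node cluster going from 32 to 24 enabled cores per node.
savings = monthly_savings(nodes=4, cores_before=32, cores_after=24,
                          price_per_core=PLACEHOLDER_PRICE)
print(f"Estimated monthly savings: {savings:.2f}")
```

The saving scales linearly with the number of cores disabled, which is why the workload analysis matters: any core you turn off to save cost is also compute capacity the cluster no longer has.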

After you've familiarized yourself with the talk track, you can leave your parents and teachers at home and drive through the demos without the detailed explanations. You can navigate using links alone by clicking the X in the upper right-hand corner of any guide. You might choose to proceed down this road to test your knowledge. As a Dell Technologies partner, you might want to create the illusion of performing a demo from a live environment to impress prospective clients.

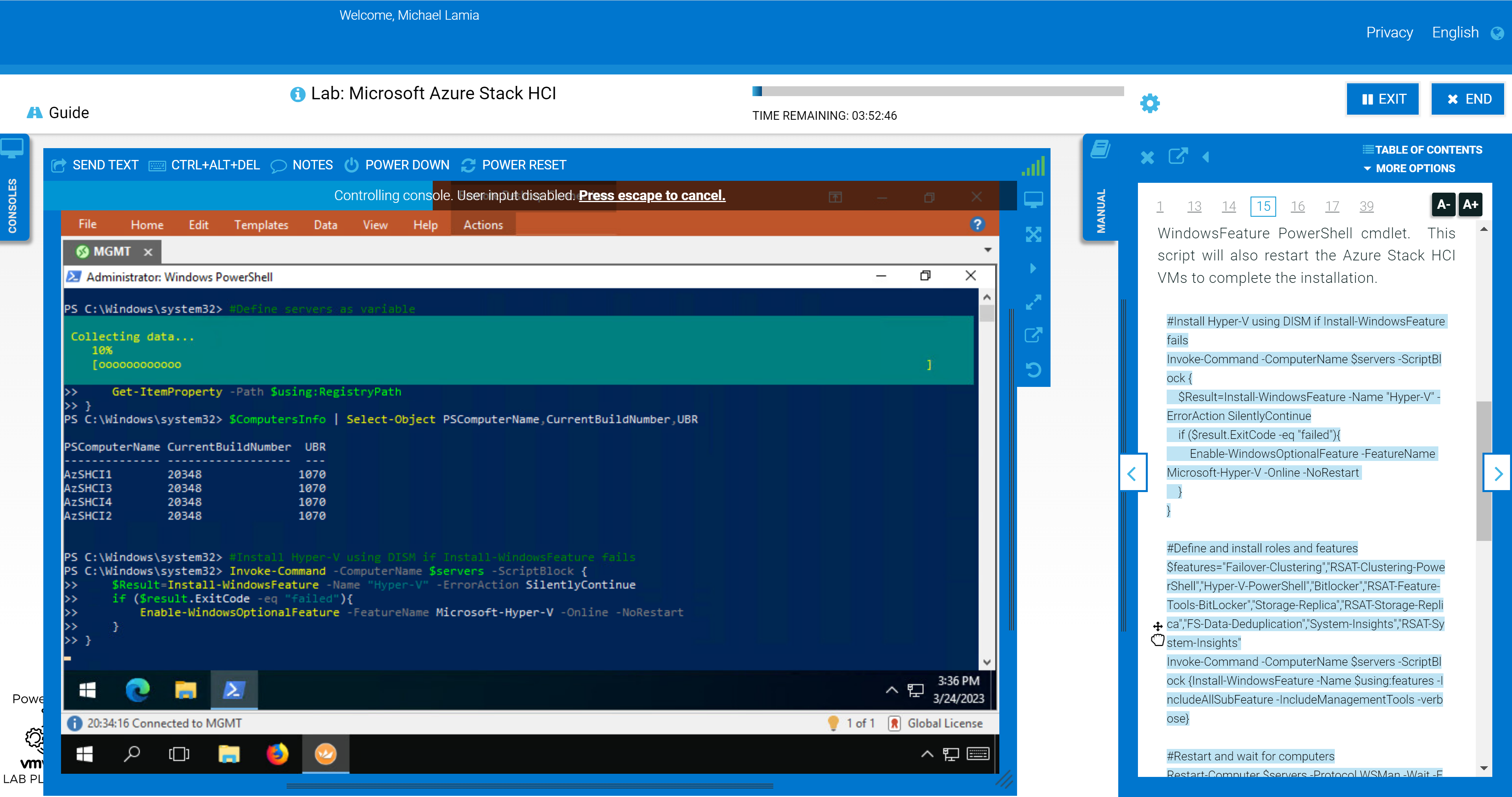

Hands-on Lab HOL-0313-01

The Microsoft Azure Stack HCI Deployment hands-on lab in the Demo Center will appeal to the more mechanically inclined. It pops open the hood so you can get your hands dirty with all the PowerShell automation in our End-to-End Deployment Guide for Day 1 deployments. It is accompanied by an in-depth student manual to point you in the right direction, but there is a bit more freedom to go off-road compared with the interactive demo. Keep in mind that this is a virtual environment, so certain tasks that require the physical hardware may be limited.

This figure illustrates how you can drag and drop the PowerShell code into the console, so you aren't wasting time typing everything yourself:

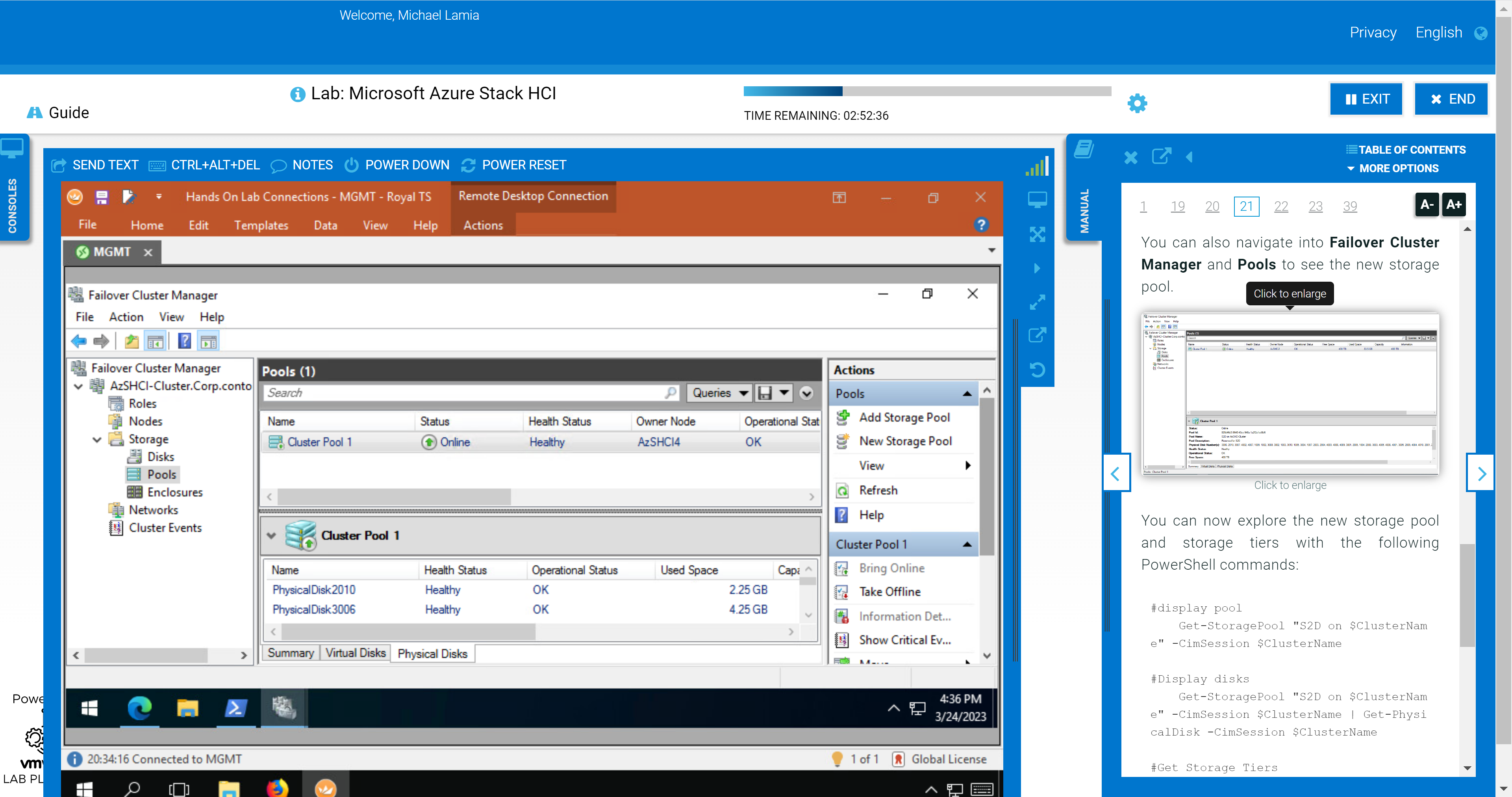

We still show the GUI some love in the later portions of the lab. Failover Cluster Manager and Windows Admin Center make an appearance after you've used PowerShell to configure the hosts, create the cluster, configure a cluster witness, and enable Storage Spaces Direct (S2D). You'll be able to use the graphical tools to confirm that the work you did at the command line produced the expected outcome.

The following figure shows the step where you use Failover Cluster Manager to inspect the newly created storage pool after it's created with PowerShell.

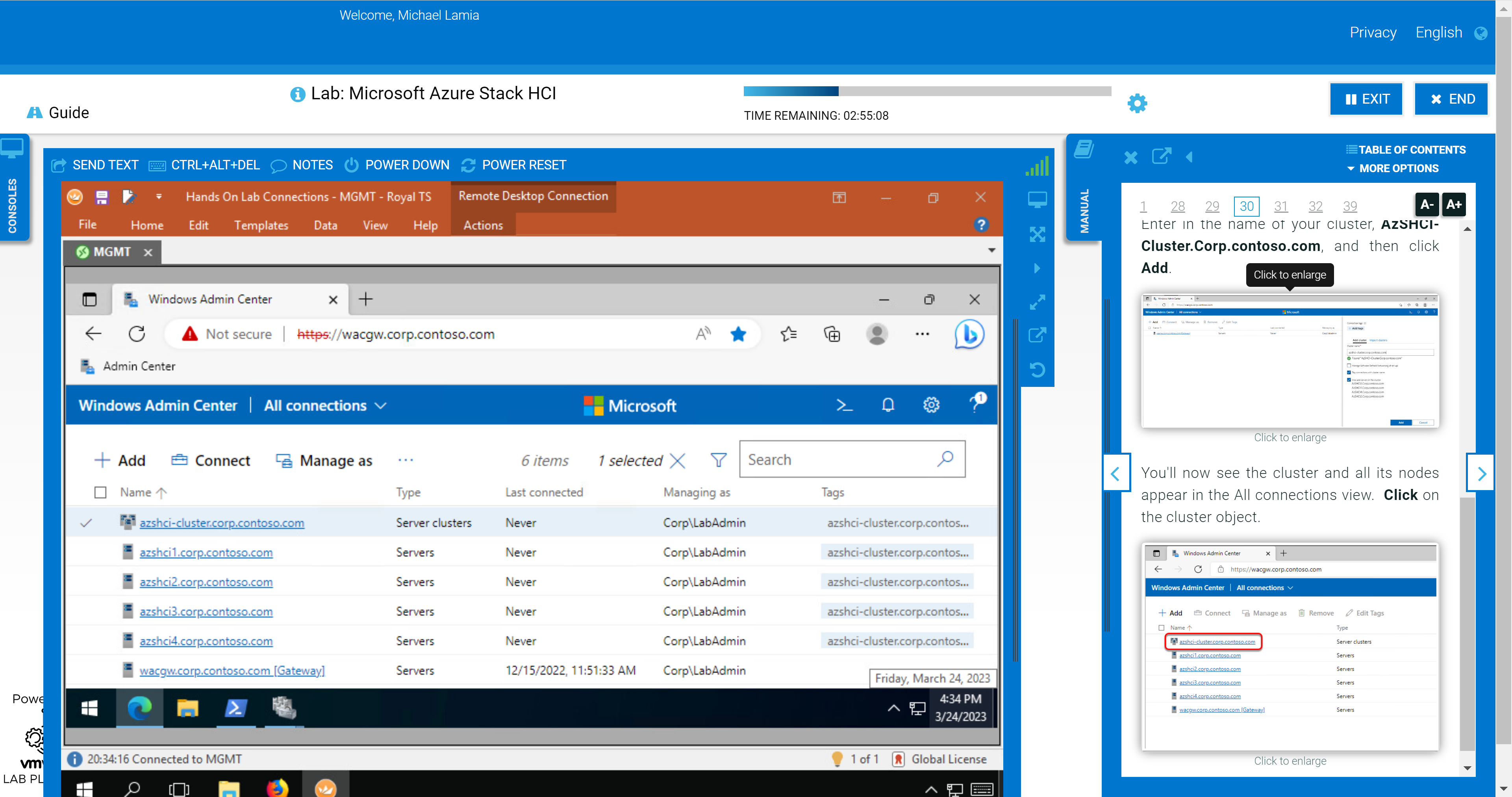

You'll also explore some of the management and monitoring capabilities in Windows Admin Center after adding your new cluster as a connection. This section of the HOL stops short of exploring the OpenManage Integration extension, though. We provide a link in the student manual to the interactive demo. If you’re not a fan of the layout of the lab shown in the following figure, you can rearrange the panes to fit your preferences. For example, you can open the manual in a separate window and allow your virtual desktop to consume all your screen real estate.

Other Opportunities to Get Hands-On

Maybe the interactive demo and hands-on lab don't meet your needs. Maybe you're looking to kick the tires on Azure Stack HCI without any training wheels. In that case, there are other options available to you. We have compiled a great list of resources that address a variety of use cases:

- MSLab – Using this GitHub project, you can run entire virtual Azure Stack HCI environments directly on your laptop if it meets moderate hardware requirements. There are endless Azure hybrid scenarios available to try (Azure Kubernetes Service hybrid, Azure Virtual Desktop, Azure Arc portfolio, and so on), and new ones are added almost immediately after new features are released.

- Dell GEOS Azure Stack HCI Hands-on Lab Guides – The Dell Global Engineering Outreach Specialists have crafted extensive guides to accompany the MSLab scenarios.

- Dell Technologies & Microsoft | Hybrid Jumpstart – The goal of this jumpstart is to help you grow your knowledge, skills, and experience around several core hybrid cloud solutions from the Dell Technologies and Microsoft hybrid portfolio. This has many highly prescriptive hands-on modules and resembles more of a Pluralsight or Microsoft Learn course.

- Azure Arc Jumpstart – If you want to skip the infrastructure deployment steps and get right into the key features of the Azure Arc portfolio, then this project is for you. All you need is an Azure subscription and a single resource group to get started immediately.

- Dell Technologies Customer Solution Center – Speak with your Dell Technologies account team to schedule a personalized engagement with a Customer Solution Center. Our dedicated subject matter experts can help you with extensive Proofs of Concept with your target workloads.

If you're looking for educational materials on Azure Stack HCI, like white papers, blogs, and videos, visit our Info Hub and main product page.

Be sure to follow me on Twitter @Evolving_Techie and LinkedIn.

Author: Mike Lamia

Dell Hybrid Management: Azure Policies for HCI Compliance and Remediation

Mon, 30 May 2022 17:05:47 -0000

Companies that take an “Azure hybrid first” strategy are making a wise and future-proof decision by consolidating the advantages of both worlds—public and private—into a single entity.

Sounds like the perfect plan, but a key consideration for these environments to work together seamlessly is true hybrid configuration consistency.

A major challenge in the past was maintaining the same configuration rules concurrently in Azure and on-premises. This required different tools and a lot of costly manual intervention (subject to human error), which usually introduced risks caused by configuration drift.

But those days are over.

We are happy to introduce Dell HCI Configuration Profile (HCP) Policies for Azure, a revolutionary and crucial differentiator for Azure hybrid configuration compliance.

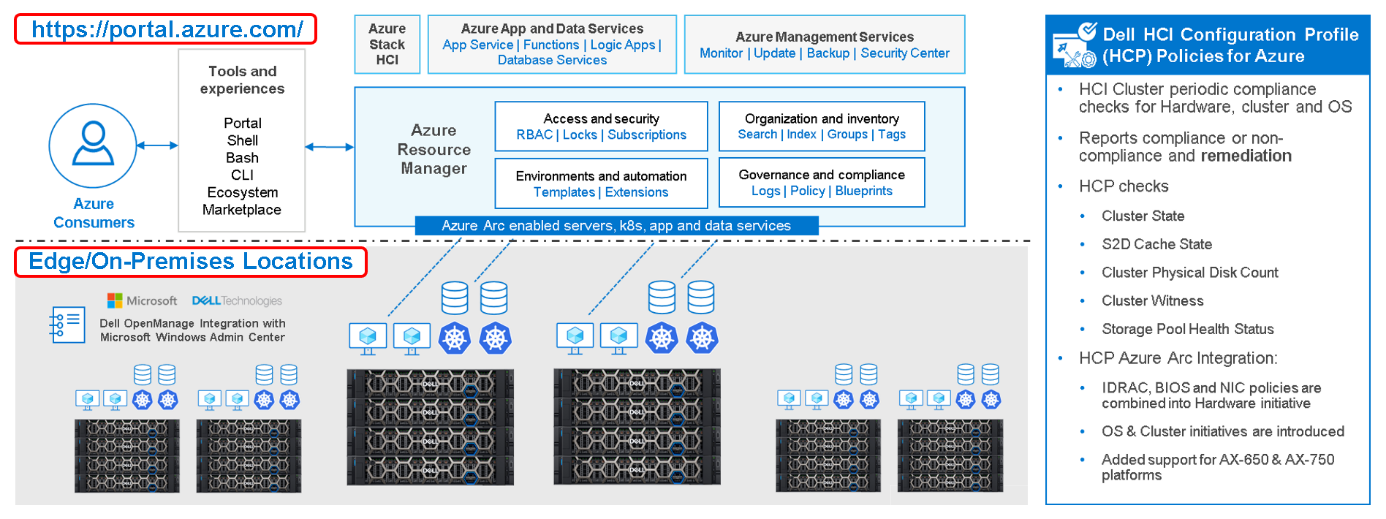

Figure 1: Dell Hybrid Management with Windows Admin Center (local) and Azure/Azure Arc (public)

So, what is it? How does it work? What value does it provide?

Dell HCP Policies for Azure is our latest development for Dell OpenManage Integration with Windows Admin Center (OMIMSWAC). With it, we can now integrate Dell HCP policy definitions into Azure Policy. Dell HCP is the specification that captures the best practices and recommended configurations for Azure Stack HCI and Windows-based HCI solutions from Dell to achieve better resiliency and performance with Dell HCI solutions.

The HCP Policies feature functions at the cluster level and is supported for clusters that are running Azure Stack HCI OS (21H2) and pre-enabled for Windows Server 2022 clusters.

IT admins can manage Azure Stack HCI environments through two different approaches:

- At-scale through the Azure portal using the Azure Arc portfolio of technologies

- Locally on-premises using Windows Admin Center

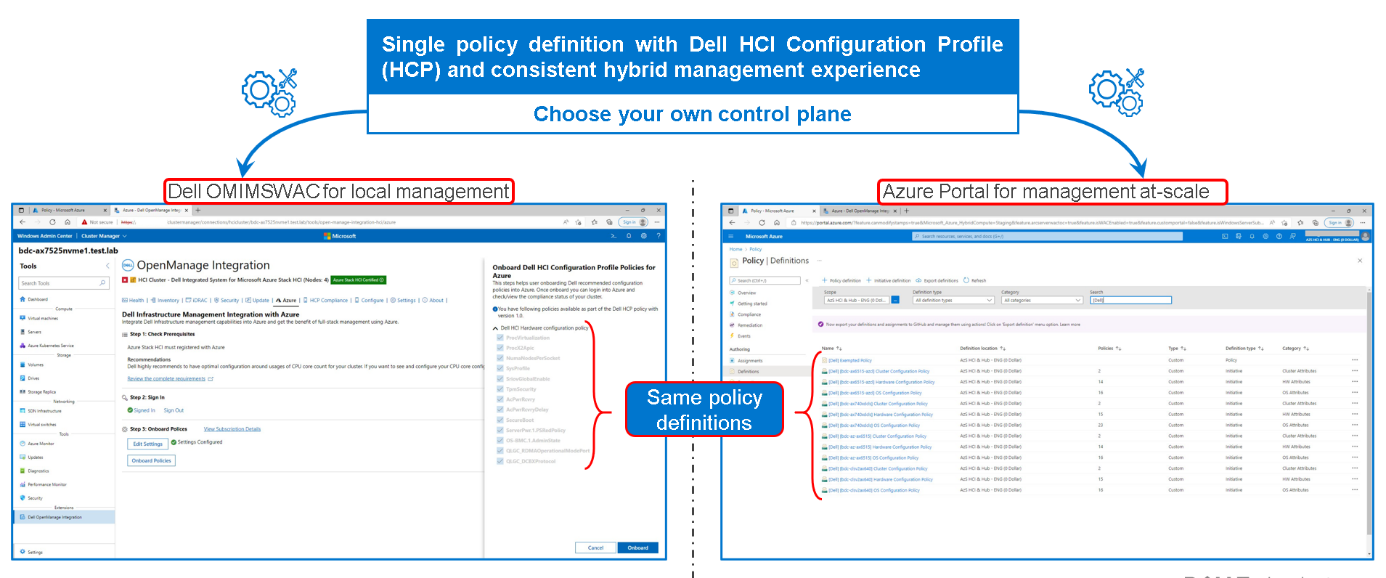

Figure 2: Dell HCP Policies for Azure - onboarding Dell HCI Configuration Profile

By using a single Dell HCP policy definition, both options provide a seamless and consistent management experience.

Running Check Compliance automatically compares the recommended rules packaged together in the Dell HCP policy definitions with the settings on the running integrated system. These rules include configurations that address the hardware, cluster symmetry, cluster operations, and security.

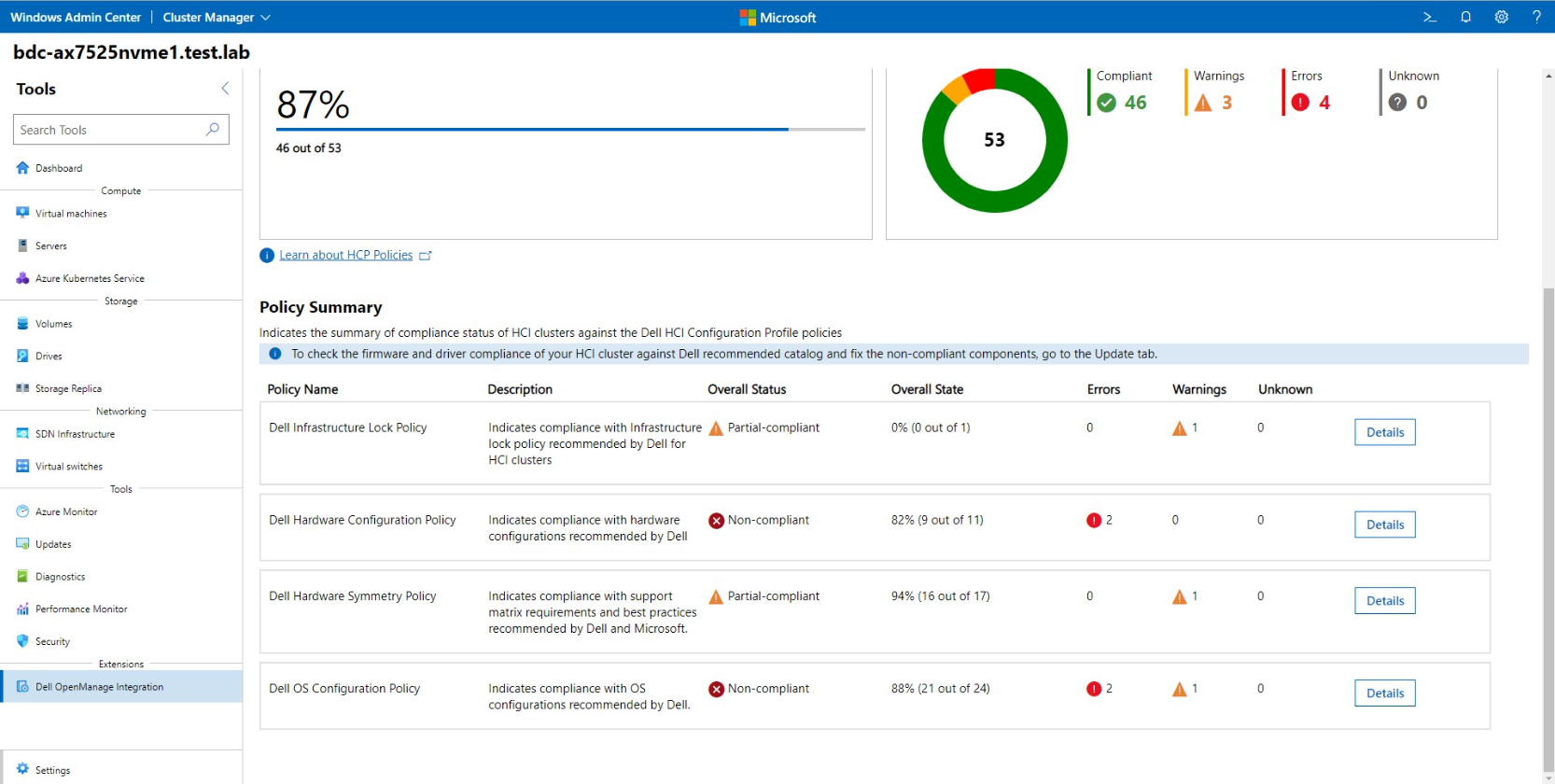

Figure 3: Dell HCP Policies for Azure - HCP policy compliance

Dell HCP Policy Summary provides the compliance status of four policy categories:

- Dell Infrastructure Lock Policy - Indicates enhanced security compliance to protect against unintentional changes to infrastructure

- Dell Hardware Configuration Policy - Indicates compliance with Dell recommended BIOS, iDRAC, firmware, and driver settings that improve cluster resiliency and performance

- Dell Hardware Symmetry Policy - Indicates compliance with integrated-system validated components on the support matrix and best practices recommended by Dell and Microsoft

- Dell OS Configuration Policy - Indicates compliance with Dell recommended operating system and cluster configurations

Figure 4: Dell HCP Policies for Azure - HCP Policy Summary
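Conceptually, a compliance check of this kind compares each recommended value with the value found on the running system and then rolls the results up by policy category. The sketch below illustrates that idea only. The `Rule` type, the setting names, and the values are all hypothetical and invented for this example; they are not the actual Dell HCP policy definitions or the extension's API.

```python
from typing import Dict, List, NamedTuple


class Rule(NamedTuple):
    """One recommended setting (hypothetical data model)."""
    category: str
    setting: str
    recommended: str


def check_compliance(rules: List[Rule],
                     actual: Dict[str, str]) -> Dict[str, List[str]]:
    """Return {category: [non-compliant settings]}; an empty list means compliant."""
    findings: Dict[str, List[str]] = {}
    for rule in rules:
        failures = findings.setdefault(rule.category, [])
        # A missing setting counts as non-compliant.
        if actual.get(rule.setting) != rule.recommended:
            failures.append(rule.setting)
    return findings


# Category names come from the summary above; settings are made up.
rules = [
    Rule("Dell Infrastructure Lock Policy", "infrastructure_lock", "enabled"),
    Rule("Dell Hardware Configuration Policy", "bios_profile", "performance"),
    Rule("Dell Hardware Symmetry Policy", "nic_models_match", "true"),
    Rule("Dell OS Configuration Policy", "page_file", "recommended"),
]

# Hypothetical settings read from a running cluster.
actual = {
    "infrastructure_lock": "enabled",
    "bios_profile": "balanced",  # drifted from the recommendation
    "nic_models_match": "true",
    # "page_file" is missing entirely
}

findings = check_compliance(rules, actual)
for category, failures in findings.items():
    status = "Compliant" if not failures else "Non-compliant: " + ", ".join(failures)
    print(f"{category}: {status}")
```

Treating a missing setting as non-compliant is a deliberate choice in this sketch: a value that cannot be read is safer to flag than to assume correct.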

To re-align non-compliant policies with the best practices validated by Dell Engineering, our Dell HCP policy remediation integration with WAC (unique at the moment) helps to fix any compliance errors. Simply click “Fix Compliance.”

Figure 5: Dell HCP Policies for Azure - HCP policy remediation

Some fixes may require manual intervention; others can be corrected in a fully automated manner using the Cluster-Aware Updating framework.

Conclusion

The “Azure hybrid first” strategy is real today. You can use Dell HCP Policies for Azure, which provides a single-policy definition with Dell HCI Configuration Profile and a consistent hybrid management experience, whether you use Dell OMIMSWAC for local management or Azure Portal for management at-scale.

With Dell HCP Policies for Azure, policy compliance and remediation are fully covered for Azure and Azure Stack HCI hybrid environments.

You can see Dell HCP Policies for Azure in action at the interactive Dell Demo Center.

Thanks for reading!

Author: Ignacio Borrero, Dell Senior Principal Engineer CI & HCI, Technical Marketing

Twitter: @virtualpeli