Day 1 – Deploying an APEX Private Cloud Subscription

Fri, 04 Nov 2022 19:03:21 -0000


Ordering and deploying new physical infrastructure for a business-critical application is often challenging.

This series of blogs reveals the Dell differences that simplify the complex task of infrastructure deployment[1], specifically the processes of fulfillment, configuration, and workload creation. These steps are typically referred to as Day 0, Day 1, and Day 2, respectively. Each blog in this series will show how an APEX subscription can remove complexity and achieve quicker time to value and operational efficiency. (In this blog series, we assume that the application being built requires the compute resources of a 4-node general purpose APEX Private Cloud Dell-integrated rack, ordered through the APEX console with typical network and power requirements.)

Before we dive in, let’s review briefly what has happened so far during the fulfillment stage after an order for a subscription is submitted. To get to this point, the APEX backend business coordination team has been orchestrating the entire fulfillment process, including people, parts, and procedures. The Dell Project Manager and Planning & Optimization Manager have been in frequent contact with the customer, assisting them with configuration and site review. Dell team members support the customer through the Enterprise Project Services portal: a planning and approval tool that allows customer visibility throughout the deployment process, from setting up the host network, to verifying and validating the new hardware. During planning, the Dell Customer Success Manager meets the customer and becomes the customer’s main point of contact for Day 2 operations and afterward.

Delivery day begins when Dell’s preferred shipping partner carefully escorts the rack from the customer loading area to the tile where it needs to be installed inside the customer’s data center. While the rack is being shipped and installed, the Dell Project Manager assigns and coordinates with an on-site professional services technician.

Day 1

Day 1 starts when the professional services technician arrives at the customer site, ready to configure the rack with the agreed-upon options. The technician first inspects the rack inside and out, making sure that the wiring is secure and that there are no electrical or physical hazards. The technician then guides the customer or the customer’s electrician to plug the PDUs into data center power and to power up the rack. The technician also plugs the customer-provided network uplink cables into the APEX switches. When power and networking are connected, the technician verifies that all systems are in compliance and validated for the next steps.

The technician then configures the APEX switches and works with the customer to get the switches communicating on the customer’s core network, according to the specifications agreed upon during the planning meetings. Each APEX Private Cloud rack is pre-wired for 24 compute nodes, regardless of the number of nodes in a subscription. This forward-thinking feature is yet another Dell difference that simplifies rapid expansion. (When the need for an expansion arises, the customer can contact their CSM directly to expedite the order process. Both Dell-integrated and customer-provided rack options come with on-site configuration by a professional services technician.)

After the technician performs network health checks, the technician initiates the cluster build. Upon verification and validation of the APEX compute nodes, the technician installs the latest VxRail Manager and vCenter on each, to tie all nodes together into a robust, highly available cluster.

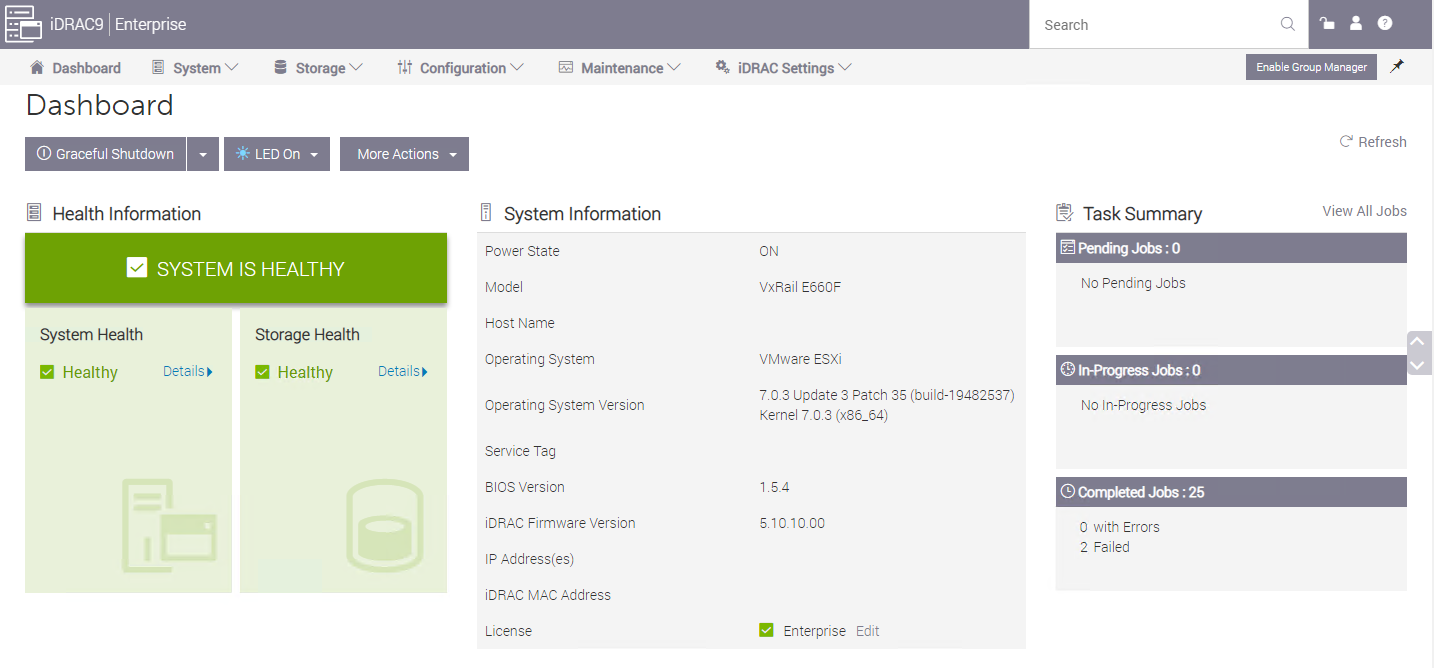

With APEX VxRail compute nodes, customers get a broad range of benefits from the only hardware platform that is co-engineered with VMware. VxRail is a highly trusted platform with thousands of tested and validated firmware and hypervisor installations around the globe. Each node hosts an instance of the integrated Dell Remote Access Controller (iDRAC) with the latest security and update features. Built-in automations include hot node addition and removal, capacity expansions, and graceful cluster shutdown.

An APEX subscription also includes Dell’s Secure Connect Gateway appliance, which proactively monitors compute nodes and switches. If an anomaly is detected, the appliance gathers logs and issues a support ticket, reducing the time it takes to resolve problems if they arise.

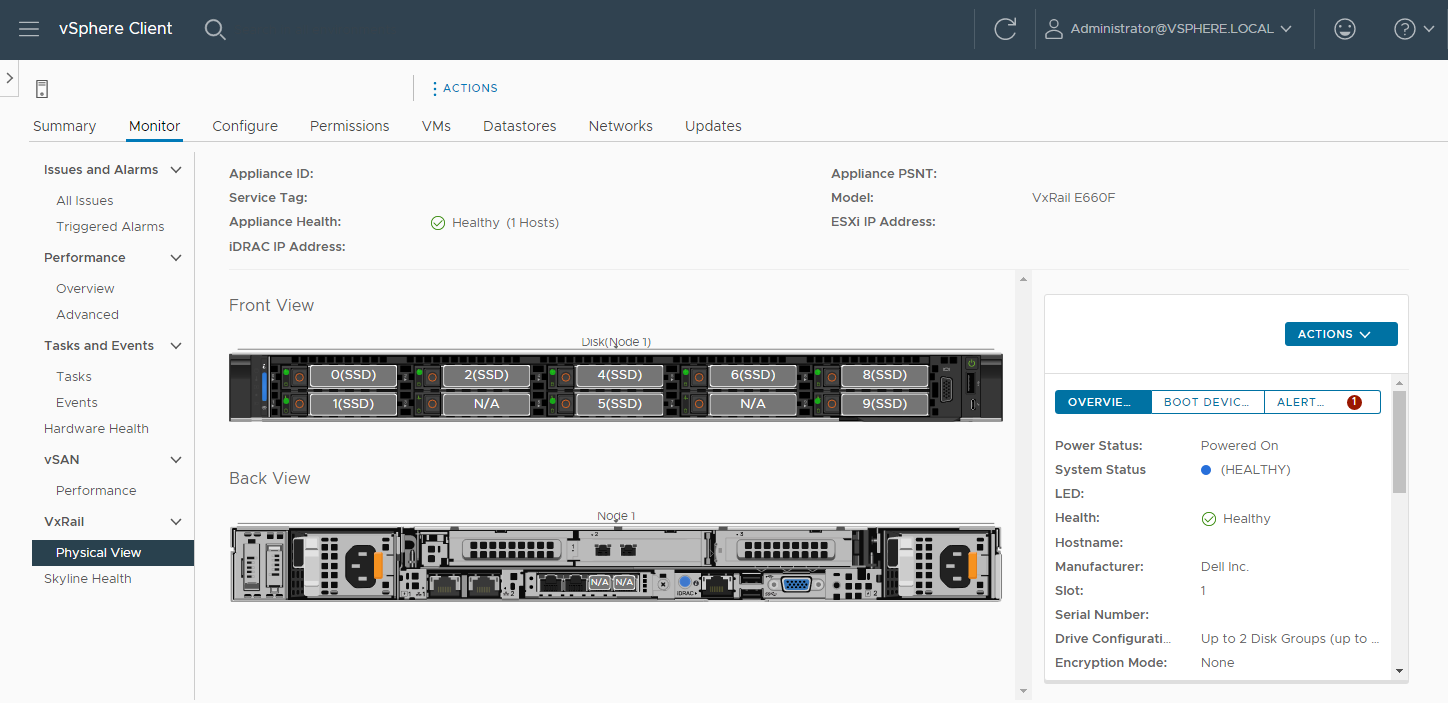

VMware vCenter on VxRail, included with each APEX Private Cloud subscription, comes equipped with Dell integrations such as firmware, driver, and power alerts, and an intuitive physical view to help resolve any hardware issues simply and quickly. Dell is the customer’s single point of contact for help, with our streamlined ProDeploy Plus service and ProSupport Plus with Mission Critical Support both included in the customer’s APEX Private Cloud subscription.

After the latest versions of VxRail Manager and vCenter are installed, the technician brings up the vCenter interface at an IP address chosen in accordance with the customer’s network requests. If additional help is needed after the technician has left, customers can ask support to review and help guide updates twice a year at no additional cost.

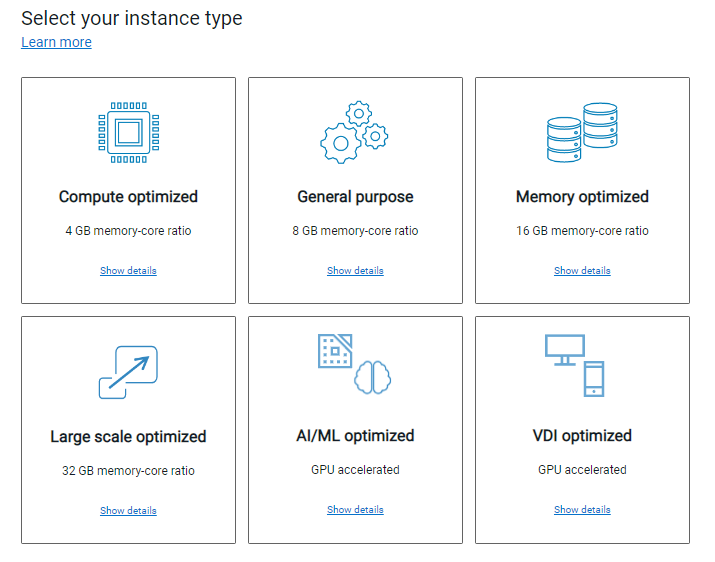

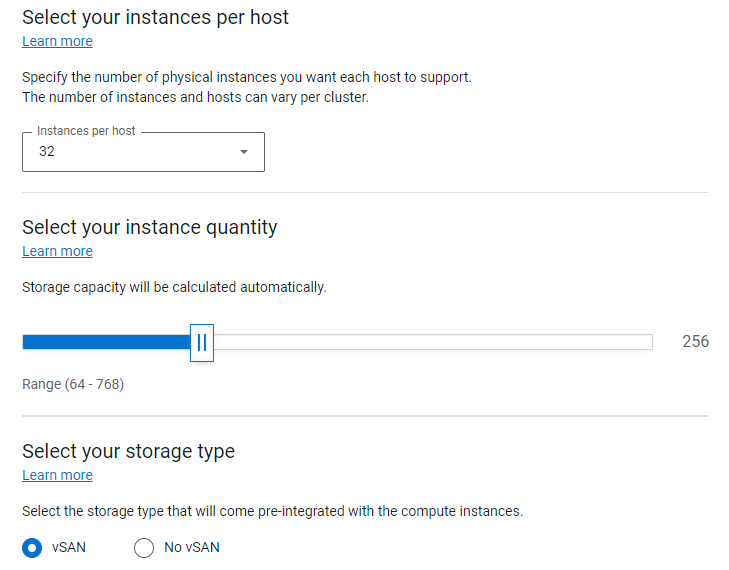

While the underlying hardware is essential and a major differentiator when comparing Dell to the rest of the market, the spirit behind APEX is to provide the best possible outcome for the customer by removing the complexity when deploying a rack-scale solution. To achieve this goal, the APEX Console simplifies the planning process with a wide variety of subscription choices with preconfigured compute, memory, and GPU accelerators. This means that the customer can easily select the number and type of instances they need, or use the Cloud Sizer for assistance to match their workload needs to the available subscription options. The customer can use the APEX Console to contact support directly, manage console users, and assign roles and permissions to those with console access to facilitate the entire lifecycle of their subscription.

Licensing

After vCenter is up and running, the technician installs enterprise licenses for both vCenter and vSAN. APEX is flexible enough that the customer can also bring their own licenses for a potential discount on their subscription. If this is the case, during the planning phase and prior to the subscription order, VMware will review the licenses to eliminate any lapses during the APEX subscription term.

All APEX Private Cloud subscriptions include 60-day full-feature trial licenses for VMware Tanzu and NSX Advanced Load Balancer. After licenses are installed and all software stacks are running successfully, the Dell technician securely hands the usernames and passwords to the customer and requests that they change the passwords.

Additional Services

The technician is also available to configure additional services such as a stretched cluster within the rack, deduplication and compression, and in-flight or at-rest encryption. The technician can also help stretch a cluster across racks and configure fault domains. Although these additional services and their costs need to be declared and agreed to during the planning phase, they are well within the capabilities of Dell professional services.

When all the customer-requested services are up and running, the technician updates the EPS portal to conclude their tasks and to offer any notes and feedback on the process.

At this point the customer’s subscription is activated! Customers can now move into Day 2 operations and start using new resources for various business workloads.

Resources

Author: David O’Dell, Senior Principal Tech Marketing Engineer - APEX

[1] Deployment time is measured between order acceptance and activation. The 28-day deployment applies to single rack deployments of select APEX Cloud services pre-configured solutions and does not include customizations to the standard configuration.

Related Blog Posts

VxRail’s Latest Hardware Evolution

Thu, 04 Jan 2024 17:22:21 -0000


December is a time of celebration and anticipation, a month in which we may reflect on the events of the year and look ahead to what is yet to come. Charles Dickens’ “A Christmas Carol” – and its many stage and movie remakes – is one of those literary classics that helps showcase this season’s magic at its finest. It is even said that there is a special kind of magic—one full of excitement, innovation, and productivity—that finds a way to (hyper)converge the past, present, and future for data center administrators all around the world who have been good all year!

No, your wondering eyes do not deceive you. Appearing today are VxRail’s next generation platforms—the VE-660 and VP-760—in all-new, all-NVMe configurations! While Santa’s elves have spent the year building their backlog of toys and planning supply-chain delivery logistics that rival SLA standards of the world’s largest e-tailers, the VxRail team has been hard at work innovating our VxRail family portfolio to ensure that your workloads can run faster than ever before. So, let’s grab a glass of eggnog and invite the holiday spirits along for a tour of VxRail past, present, and future to better understand our latest portfolio addition.

Figure 1. VxRail VE-660

Figure 2. VxRail VP-760

Spirit of VxRail Past

This was especially true earlier this summer when we launched the VE-660 and VP-760 VxRail platforms based on 16th Generation Dell PowerEdge servers. These next-gen successors to the VxRail E-Series and P-Series platforms not only contained the latest hardware innovations, but also represented a systemic change in the overall VxRail offering.

First, the mainline E- and P-series platforms were respectively re-christened as the VE-660 and VP-760. This was done primarily to invite easier comparison points to the underlying PowerEdge servers on which they’re based, the R660 and R760. Second, we tracked how the use of accelerators in the data center had evolved over the years and made the strategic decision to fold the capabilities of the V-Series platform into the P-Series by way of specific riser configurations. Now, customers can glean all the benefits of a high-performance 2U system with the choice of either storage-optimized (up to 28 total drive bays) or accelerator-optimized (up to 2x double-wide or 6x single-wide GPUs) chassis configurations, whichever best aligns with the specifics of their workload needs. And third, VxRail platforms dropped the storage type suffix from the model name. Hybrid and all-flash (and as of today, all-NVMe; more on this later) storage variants are now offered as part of the riser configuration selection options of these baseline platforms, where applicable.

These changes are representative of how the breadth and depth of customer needs have grown tremendously over the years. By taking these steps to streamline the VxRail portfolio, we charted an evolutionary path forward that continues our commitment to offer greater customer choice and flexibility.

Spirit of VxRail Present

These themes of greater choice and flexibility are amplified by the architectural improvements underpinning these new VxRail platforms. Primary among them is the introduction of Intel® 4th Generation Xeon® Scalable processors. Intel’s latest generation of processors does more than bump VxRail core density per socket to 56 (112 max per node). These processors also come with built-in Advanced Matrix Extensions (AMX) accelerators that support AI and HPC workloads without the need for any additional drivers or hardware. For a deeper dive into the Intel® AMX capability set, the Spirit of VxRail Present invites you to read this blog: VxRail and Intel® AMX, Bringing AI Everywhere, authored by Una O’Herlihy.

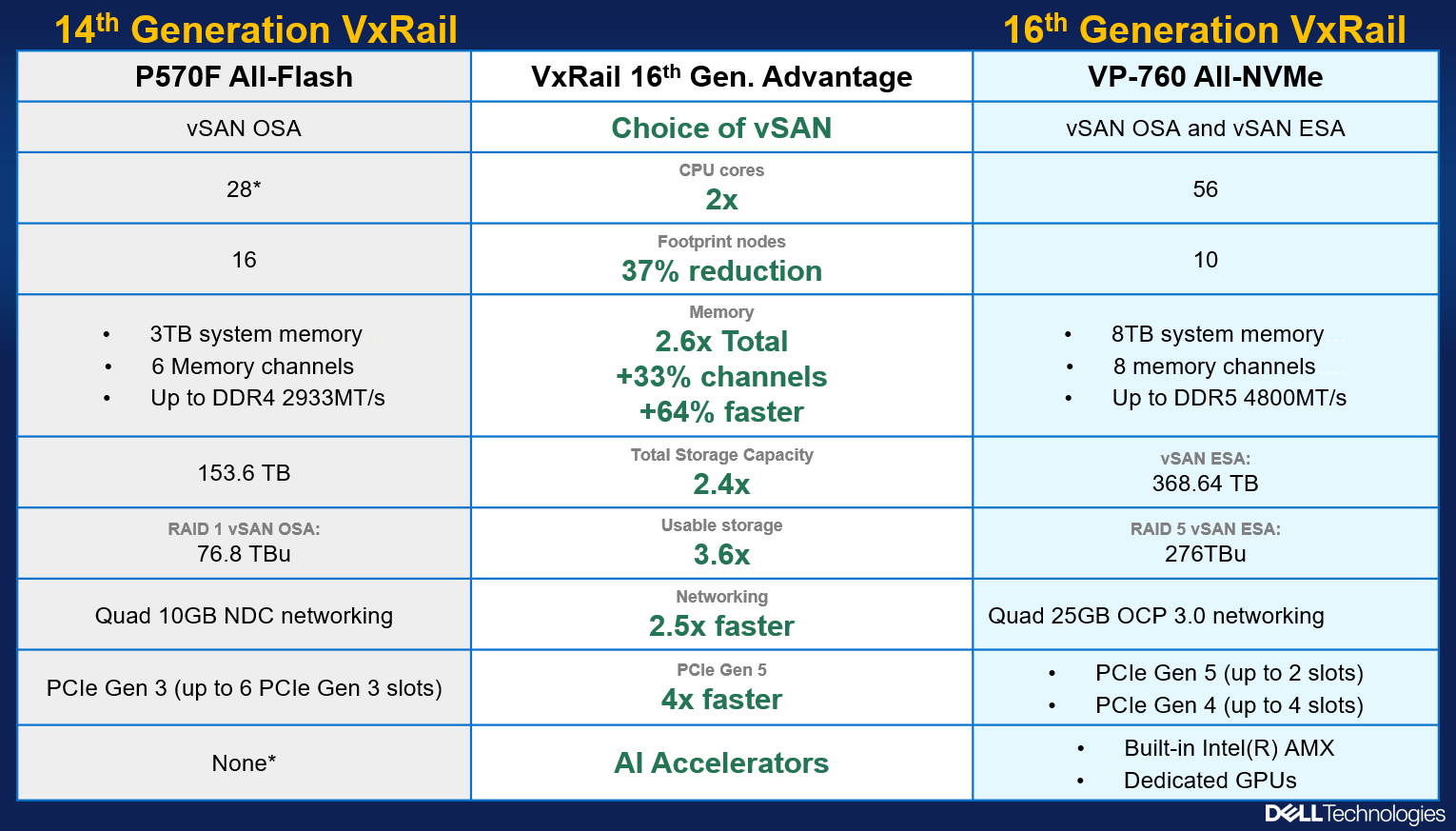

Intel’s latest processors also usher in support for DDR5 memory and PCIe Gen 5, two other architectural pillars that underpin significant jumps in performance. The following table offers a high-level overview and comparison of these pillars and a useful at-a-glance primer for those considering a technology refresh from earlier generation VxRail:

Table 1. VxRail 14th Generation to 16th Generation comparison

| | VxRail VE-660 & VP-760 | VxRail E560, P570 & V570 |
| --- | --- | --- |
| Intel Chipset | 4th Generation Xeon | 2nd Generation Xeon |
| Cores | 8 - 56 | 4 - 28 |
| TDP | 125W - 350W | 85W - 205W |
| Max DRAM Memory | 4TB per socket | 1.5TB per socket |
| Memory Channels | 8 (DDR5) | 6 (DDR4) |
| Memory Bandwidth | Up to 4800 MT/s | Up to 2933 MT/s |
| PCIe Generation | PCIe Gen 5 | PCIe Gen 3 |
| PCIe Lanes | 80 | 48 |
| PCIe Throughput | 32 GT/s | 8 GT/s |

As the operational needs of a business change day-by-day, finding the right balance between workload density and load balance can often feel like an infinite war for resources. The adoption of DDR5 memory across the latest generation of VxRail platforms offers additional flexibility in the way system resources can be divvied up by virtue of two key benefits: greater memory density and faster bandwidth. The VE-660 and VP-760 wield eight memory channels per processor, with the ability to slot up to two 4800MT/s DIMMs per channel for a maximum memory capacity of 8TB per node. Compared to a VxRail P570, the density and speed improvements are staggering: 33% more memory channels per processor, 2.6x increase in per system total memory, and up to a 64% increase in memory speed! With faster and greater density compute and memory available for workloads, each node in a VxRail cluster can handle more VMs, and if there is ever a case of task bottlenecking, there are plenty of resources still available for optimal load balancing.
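The percentages quoted above can be reproduced from the figures in Table 1. The following is a minimal sketch of that arithmetic; the two-sockets-per-node value is an assumption (it is implied by the 8TB-per-node figure and is assumed here for the P570 as well).

```python
# Figures taken from Table 1; two sockets per node is an assumption.
SOCKETS_PER_NODE = 2

gen14 = {"channels": 6, "speed_mts": 2933, "max_tb_per_socket": 1.5}
gen16 = {"channels": 8, "speed_mts": 4800, "max_tb_per_socket": 4.0}


def pct_increase(old: float, new: float) -> float:
    """Percentage increase from old to new."""
    return (new - old) / old * 100


channel_gain = pct_increase(gen14["channels"], gen16["channels"])
speed_gain = pct_increase(gen14["speed_mts"], gen16["speed_mts"])
node_memory_ratio = (gen16["max_tb_per_socket"] * SOCKETS_PER_NODE) / (
    gen14["max_tb_per_socket"] * SOCKETS_PER_NODE
)

print(f"Memory channels: +{channel_gain:.0f}%")
print(f"Memory speed:    +{speed_gain:.0f}%")
print(f"Node memory:     {node_memory_ratio:.2f}x")
```

Under these assumptions the per-node memory ratio works out to about 2.67x, which the text quotes as 2.6x.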

When we consider the presence of PCIe Gen 5, we see an even greater increase in the overall performance envelope. PowerEdge’s Next-Generation Tech Note does a great job of contextualizing the capabilities of PCIe Gen 5. The main takeaway for VxRail, however, is that it doubles the per-lane transfer rate of PCIe Gen 4 (16 GT/s to 32 GT/s) and quadruples that of PCIe Gen 3 (8 GT/s to 32 GT/s). Combined with the jump in available PCIe lanes from Gen 3 to Gen 5 (48 lanes to a luxurious 80 lanes, roughly 66% more), this significantly reduces performance bottlenecks, resulting in faster storage transfer rates and more bandwidth for accelerators to process AI and ML workloads.
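To put the lane counts and per-lane rates from Table 1 side by side, here is a rough sketch that multiplies lanes by the raw per-lane transfer rate. It ignores encoding and protocol overhead, so the results are raw signalling rates rather than usable bandwidth.

```python
# Lane counts and per-lane rates as listed in Table 1.
gen3 = {"lanes": 48, "gt_per_s": 8}
gen5 = {"lanes": 80, "gt_per_s": 32}


def aggregate_gt_per_s(platform: dict) -> int:
    """Raw aggregate transfer rate: lanes x per-lane rate (no overhead)."""
    return platform["lanes"] * platform["gt_per_s"]


lane_gain_pct = (gen5["lanes"] - gen3["lanes"]) / gen3["lanes"] * 100
per_lane_ratio = gen5["gt_per_s"] / gen3["gt_per_s"]
aggregate_ratio = aggregate_gt_per_s(gen5) / aggregate_gt_per_s(gen3)

print(f"Lanes:          +{lane_gain_pct:.0f}%")
print(f"Per-lane rate:  {per_lane_ratio:.0f}x")
print(f"Aggregate rate: {aggregate_ratio:.2f}x")
```

Separating the lane count from the per-lane rate shows that most of the generational gain comes from the faster lanes, with the extra lanes multiplying it further.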

PCIe Gen 5 is also backwards compatible with previous generation peripherals, enabling a certain degree of flexibility with respect to VxRail’s component extensibility and longevity in the data center. Yesterday’s technologies can still be used, but the VE-660 and VP-760 can adapt to growing workload demands by taking full advantage of the latest peripherals as they are released. They are even equipped with an additional PCIe slot over their E- & P-Series predecessors, providing extra dimensions of configuration. These boons in flexibility ensure any investment into this generation of VxRail enjoys longer relevance as your infrastructure backbone.

Spirit of VxRail Future

Even with all these architectural improvements defining the VP-760 and VE-660, we knew we could find ways of improving the capability set. So, we made our list of desired features (and checked it twice!) and determined that the best way to augment these next-generation hardware enhancements would be with the introduction of all-NVMe storage options.

The Spirit of VxRail Past wishes to remind us that VxRail with all-NVMe storage is not new—NVMe first made its way to the VxRail lineup with the P580N and E560N almost four years ago and has been a mainstay facet of the VxRail with vSAN architecture ever since. However, what is most compelling about all-NVMe versions of the VE-660 and VP-760—what the Spirit of VxRail Future wishes to strongly communicate—is that NVMe opens the door to two very compelling benefits: additional flexibility of choice with respect to vSAN architecture and an associated increase in overall storage capacity with the addition of read intensive NVMe drives in sizes of up to 15.36TB.

The following figure outlines all of the generational advantages customers can benefit from when transitioning from existing 14th Generation VxRail environments to VP-760 all-NVMe platforms.

Figure 4. The VxRail 16th Generation all-NVMe advantage


In addition, VxRail on 16th Generation hardware can now support deployments with either vSAN Original Storage Architecture (OSA) or vSAN Express Storage Architecture (ESA). David Glynn provided a great summary of the core value vSAN ESA brings to the table for VxRail in his blog written nearly a year ago. With today’s launch, the VP-760 and VE-660 can now take advantage of vSAN ESA’s single-tier storage architecture that enables RAID-5 resiliency and capacity with RAID-1 performance. Customers who choose to deploy with vSAN OSA can also see the benefit of these new read intensive NVMe drives, with a total storage per node of up to 122.88TB in the VE-660 and 322.56TB in the VP-760. For those who deploy with vSAN ESA, maximum achievable storage is 153.6TB on the VE-660 and up to 368.64TB on the VP-760.
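The capacity maximums above are all whole multiples of the 15.36TB drive size. As a sketch, assuming every capacity drive is a 15.36TB drive (the drive counts are inferred here, not stated in the text), the implied number of drives per node can be derived as follows:

```python
DRIVE_TB = 15.36  # largest read-intensive NVMe drive mentioned in the text

# Maximum per-node capacities quoted in the text, in TB.
max_capacity_tb = {
    ("VE-660", "OSA"): 122.88,
    ("VP-760", "OSA"): 322.56,
    ("VE-660", "ESA"): 153.6,
    ("VP-760", "ESA"): 368.64,
}

# Implied number of capacity drives, assuming every drive is 15.36TB.
implied_drives = {
    key: round(capacity / DRIVE_TB) for key, capacity in max_capacity_tb.items()
}

for (model, arch), drives in implied_drives.items():
    print(f"{model} vSAN {arch}: {drives} x {DRIVE_TB}TB")
```

The lower drive counts for vSAN OSA would be consistent with some bays being set aside for cache drives, which OSA's two-tier design requires, though the text does not describe the exact drive layout.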

The Spirit of VxRail Future has seen the value of all-NVMe and is content knowing that VxRail will continue to underpin VMware mission-critical workloads for years to come.

Resources

Author: Mike Athanasiou, Sr. Engineering Technologist

A Closer Look at New Features Brought with VxRail 7.0.480

Sat, 17 Feb 2024 23:57:31 -0000


The landscape of VxRail software is ever-evolving. As software releases become available, so too do new features and functions. These new features and functions create a more robust ecosystem, focusing on simplifying regular tasks that appear mundane but are critical to maintaining a secure, up-to-date, and healthy IT environment. VxRail 7.0.480 brought several new and enhanced capabilities to administrators, continuing to build on the streamlined infrastructure management experience that VxRail offers. Many of these improvements are part of the LCM experience. Let’s take a moment to discuss some of these new software improvements and what they can do for infrastructure staff. These include expanded storage of update advisor reports from one report to thirty reports, the ability to export compliance reports to preservable files, automated node reboots for clusters, and extended ADC bundle and installer metadata upload functionality for improved prechecking and update advisor reporting.

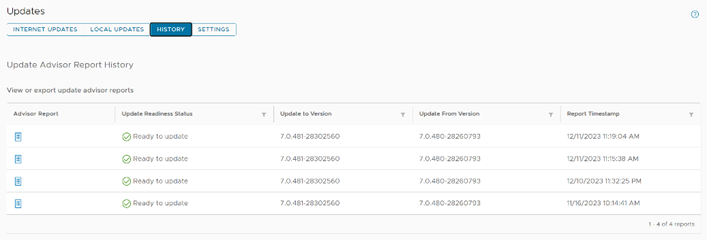

Extended update advisor report availability

Administrative teams have likely seen various update advisor reports. These reports have been part of the VxRail LCM experience for the past few releases and present a look at the cluster as it is at the moment. That said, storing multiple reports helps provide a documented history of the cluster. VxRail 7.0.480 has taken these singular reports and extended their storage to hold up to thirty reports, granting administrators the information and reporting to review up to the last thirty updates.

Figure 1. A view of the pane showing multiple update advisor reports available for review

Imagine that you have a large cluster. Different nodes could need different remediating actions. The ability to maintain multiple reports would enable administrators to address issues raised in a report while also creating a documentation trail for when corrective actions take multiple administrative cycles spanning extended lengths of time, possibly exceeding a day.
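The retention behavior described here, keeping the most recent thirty reports and dropping older ones, can be illustrated with a bounded queue. This is only a conceptual sketch: `AdvisorReport` and `ReportHistory` are hypothetical names, and nothing here reflects how VxRail Manager actually stores its reports.

```python
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_REPORTS = 30  # retention limit described in the text


@dataclass(frozen=True)
class AdvisorReport:
    """Hypothetical stand-in for one update advisor report."""
    generated_at: datetime
    summary: str


class ReportHistory:
    """Keeps only the most recent MAX_REPORTS reports."""

    def __init__(self, limit: int = MAX_REPORTS) -> None:
        self._reports = deque(maxlen=limit)

    def add(self, report: AdvisorReport) -> None:
        # When the deque is full, appending drops the oldest report.
        self._reports.append(report)

    def newest_first(self) -> list:
        return list(reversed(self._reports))


history = ReportHistory()
start = datetime(2024, 1, 1)
for day in range(1, 36):  # 35 reports, five more than the limit
    history.add(AdvisorReport(start + timedelta(days=day), f"report {day}"))

reports = history.newest_first()
print(len(reports))          # 30
print(reports[0].summary)    # report 35
print(reports[-1].summary)   # report 6
```

A fixed-size history like this gives administrators a rolling audit trail without unbounded growth.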

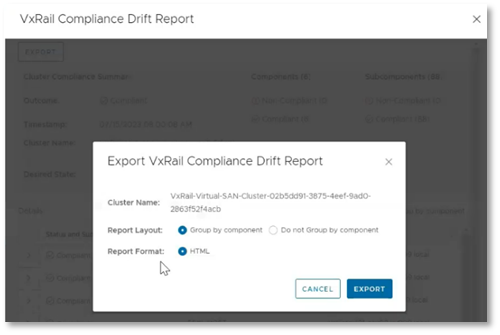

Export of compliance drift reports

Compliance drift reports are another reporting element of the LCM process, helping administrators to ensure that clusters conform with a Continuously Validated State (CVS) on a daily basis. This frees up administrators to attend to business-specific tasks, while ensuring that the more mundane work of gathering software versions for review is automated. This is a critical task that helps prevent time-intensive infrastructure issues that IT teams need to dedicate resources to correcting. Additionally, these reports ensure that LCM updates are successful by identifying any components that may have drifted from what is defined by the current Continuously Validated State.

Figure 2. The option to export a drift report to a local HTML file

These compliance drift reports, shown in Figure 2, can now be exported, aiding administrators in creating and maintaining a documented history of their clusters' adherence to Continuously Validated States. Each report can be grouped by components and is saved to an HTML file, preserving the original view that VxRail administrators have come to know.
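Conceptually, a drift report compares what is installed against what the validated state expects and records the differences. The sketch below illustrates that idea and renders the result as HTML. The component names, version strings, and function names are all hypothetical, and this is not the format VxRail itself produces.

```python
from html import escape

# Hypothetical data: component names and versions are illustrative only.
validated_state = {"ESXi": "7.0.3", "vSAN": "7.0.3", "BIOS": "1.9.2"}
installed = {"ESXi": "7.0.3", "vSAN": "7.0.2", "BIOS": "1.9.2"}


def find_drift(expected: dict, actual: dict) -> dict:
    """Return {component: (expected, actual)} for every mismatch."""
    return {
        name: (version, actual.get(name, "missing"))
        for name, version in expected.items()
        if actual.get(name) != version
    }


def render_html(drift: dict) -> str:
    """Render the drift as a small standalone HTML table."""
    rows = "\n".join(
        f"<tr><td>{escape(name)}</td><td>{escape(exp)}</td><td>{escape(act)}</td></tr>"
        for name, (exp, act) in sorted(drift.items())
    )
    return (
        "<html><body><table>\n"
        "<tr><th>Component</th><th>Expected</th><th>Installed</th></tr>\n"
        f"{rows}\n</table></body></html>"
    )


drift = find_drift(validated_state, installed)
report_html = render_html(drift)
print(report_html)
```

Writing `report_html` to a file (for example with `pathlib.Path.write_text`) would give a preservable record similar in spirit to the exported report.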

Sequential node reboot

Our next new feature automates the sequential reboot of nodes within a cluster, a task that many customers perform manually. The automatic node reboot function is found within the Hosts submenu in the Configure tab. As shown in the following demonstration, administrators simply select the nodes they want to reboot, click the reboot button, and then complete the wizard. The wizard offers the option to begin the reboots immediately or schedule them for a later time. Once this selection is made, the wizard runs a precheck, and the reboot cycles can begin. While larger clusters gain the most from this feature, clusters of any size benefit from automating infrastructure tasks. Node reboots can help further improve update cycle success rates by clearing issues such as high memory utilization or restarting any potentially hung processes.

As an example, let’s consider memory utilization again. If there were an issue with the balloon driver making memory available, the update precheck would detect it, and rebooting the node would restart the service and force the memory to be made available once again. We’ve also observed cases where larger clusters are updated less often than smaller clusters because they need longer maintenance windows. This can lead to longer times between reboots for larger clusters. The sequential reboot feature makes restarting larger clusters easier through automation and orchestration, so restarts need minimal administrator activity. This can clear a variety of issues that could halt an upgrade.
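The general pattern behind a sequential reboot (precheck, then evacuate, restart, and verify one node at a time) can be sketched as follows. The four callables are hypothetical hooks supplied by the caller; they are not VxRail APIs, and the real feature is driven through the wizard described above.

```python
from typing import Callable, Iterable


def sequential_reboot(
    nodes: Iterable[str],
    precheck: Callable[[str], bool],
    evacuate: Callable[[str], None],
    reboot: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> list:
    """Reboot nodes one at a time; stop at the first unhealthy node.

    All four callables are hypothetical hooks, not VxRail APIs.
    Returns the nodes that were rebooted successfully.
    """
    nodes = list(nodes)
    # Run the precheck for every node before touching any of them.
    failed = [n for n in nodes if not precheck(n)]
    if failed:
        raise RuntimeError(f"precheck failed for: {', '.join(failed)}")

    done = []
    for node in nodes:
        evacuate(node)   # move workloads off the node
        reboot(node)     # restart the host
        if not is_healthy(node):
            # Never take a second node down while one is unhealthy.
            break
        done.append(node)
    return done


# Dry run with stub hooks that only record what would happen.
log = []
rebooted = sequential_reboot(
    ["node-1", "node-2", "node-3"],
    precheck=lambda n: True,
    evacuate=lambda n: log.append(f"evacuate {n}"),
    reboot=lambda n: log.append(f"reboot {n}"),
    is_healthy=lambda n: n != "node-2",
)
print(rebooted)  # ['node-1']
print(log)
```

Stopping at the first unhealthy node is a deliberate design choice in this sketch: it keeps at most one node out of service at any time.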

That said, manually rebooting each node within a cluster can require a significant time investment. Imagine for a moment that we have a 20-node cluster. If it took just 10 minutes per node to migrate workloads away from a node, restart the host, bring it back online physically and relaunch software services, and finally bring workloads back, cycling through all 20 nodes would still take more than three hours (200 minutes) of an administrator's undivided attention. In reality, this reboot cycle would likely take longer. Automating these steps lets clusters benefit from regular reboots while freeing IT staff to focus on other critical business tasks.
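The estimate above is simple multiplication, sketched here so that other cluster sizes or per-node times can be plugged in. The function name is illustrative.

```python
def manual_reboot_minutes(node_count: int, minutes_per_node: int) -> int:
    """Total hands-on time when nodes are cycled one after another."""
    return node_count * minutes_per_node


total = manual_reboot_minutes(node_count=20, minutes_per_node=10)
hours, minutes = divmod(total, 60)
print(f"{total} minutes = {hours} h {minutes} min")  # 200 minutes = 3 h 20 min
```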

ADC bundle and installer metadata upload

VxRail 7.0.480 brings the ability to use the adaptive data collector (ADC) bundle and installer metadata, shown being uploaded in the following demonstration, to update the LCM precheck and update advisor functions that VxRail Manager provides. This is helpful because the precheck is updated regularly with new checks, leading to a more robust precheck and more successful LCM update cycles. For example, one of the more recent precheck developments involves an additional check on memory utilization. The LCM precheck examines CPU and memory utilization of the vCenter Server appliance. If either CPU or memory utilization exceeds an 80% threshold, a warning appears in the precheck report. If the check occurs as part of an upgrade cycle, the warning appears in the update progress dashboard. The update advisor metadata file includes all the version information related to the target VxRail release version. This allows the update advisor to create reports showing the current, expected, and target software versions for each LCM cycle. These packages are pulled by VxRail Manager automatically over the network for clusters using a Secure Connect Gateway connection and are also available to offline dark sites using the Local Updates tab.
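The utilization check described above amounts to a threshold comparison. The following is an illustrative sketch only: the function name and message wording are assumptions, and the real precheck runs inside VxRail Manager.

```python
THRESHOLD_PCT = 80.0  # warning threshold stated in the text


def utilization_warnings(cpu_pct: float, memory_pct: float) -> list:
    """Return a warning for each metric that exceeds the threshold."""
    warnings = []
    for name, value in (("CPU", cpu_pct), ("memory", memory_pct)):
        if value > THRESHOLD_PCT:
            warnings.append(
                f"vCenter Server {name} utilization {value:.0f}% "
                f"exceeds {THRESHOLD_PCT:.0f}%"
            )
    return warnings


print(utilization_warnings(cpu_pct=45.0, memory_pct=91.0))
print(utilization_warnings(cpu_pct=80.0, memory_pct=80.0))  # exactly 80% does not exceed
```

Whether a reading of exactly 80% should warn is a choice made in this sketch, since the text only says "exceeds".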

Conclusion

The VxRail engineering team routinely delivers new features and functions to our customers. In this blog, we reviewed the enhancements for expanded update advisor report storage, the ability to export drift reports to local HTML files, automated cluster node reboot cycles, and the enhanced LCM precheck and update advisor with the ADC bundle and installer metadata file uploads. As we move forward, we continue to enhance LCM operations and minimize the time required to manage VxRail. As such, VxRail is a fantastic choice to run your virtualized workloads and will continue to become a more robust and administration-friendly platform.

Author: Dylan Jackson, Engineering Technologist