Simplify Cloud Application Deployments with Red Hat OpenShift

Fri, 13 Sep 2024 14:17:36 -0000

Introduction

If you’ve read the other entries in this series (see links below), then you’ll know that improved container management is a core feature of Red Hat OpenShift. If you haven’t read those articles, I’d recommend taking a moment to review them. There is an OpenShift deployment option we haven’t discussed yet, which is serverless design. We’ll discuss what it means for an OpenShift deployment to be serverless in addition to the improvements developers benefit from. We’ll also cover how the Dell APEX Cloud Platform for Red Hat OpenShift helps users worry less about the infrastructure running business applications.

What is Serverless Infrastructure?

Despite what the name may lead you to believe, serverless doesn’t mean that servers and other components, like networking and storage, are unnecessary to host applications. These components are still present in serverless implementations of OpenShift. Instead, serverless means that these layers are abstracted away so that developers can focus all their attention on business-centric software development.

Serverless deployments are an implementation of event-driven architecture. Event-driven architectures, at a high level, use software events to trigger activity and communication between various services. An example of a software event could be something as simple as placing an item in a shopping cart when you’re browsing an online store. OpenShift Serverless supports more than just responding to HTTP activity. Other sources, like file uploads for long-term storage and timers for recurring jobs, are additional examples of event triggers that can dynamically start and stop processes for efficient scaling. The communication of these events allows software to spin up and scale various software services as needed to create a more responsive and flexible software environment.
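To make the event-driven idea concrete, here is a minimal sketch of an in-process event router. It is not the OpenShift Serverless API; the EventBus class, the event type names, and the handler functions are all hypothetical and exist only to show how different event sources (an HTTP-style cart action, a file upload, a timer) can each trigger only the code that cares about them.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = Dict[str, str]
Handler = Callable[[Event], str]


class EventBus:
    """Toy in-process event router (hypothetical, not an OpenShift API)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        # Register a handler for one event type.
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> List[str]:
        # Only handlers subscribed to this event's type are invoked.
        return [h(event) for h in self._handlers.get(event["type"], [])]


def add_to_cart(event: Event) -> str:
    return f"added {event['item']} to cart"


def archive_upload(event: Event) -> str:
    return f"archived {event['filename']}"


def run_recurring_job(event: Event) -> str:
    return "recurring job finished"


bus = EventBus()
bus.subscribe("cart.item_added", add_to_cart)   # HTTP-style activity
bus.subscribe("storage.file_uploaded", archive_upload)  # file upload
bus.subscribe("timer.tick", run_recurring_job)  # recurring timer

if __name__ == "__main__":
    print(bus.publish({"type": "cart.item_added", "item": "keyboard"}))
    print(bus.publish({"type": "timer.tick"}))
```

In a real serverless platform the handlers would run as separate, independently scaled workloads instead of functions in one process, but the routing principle is the same.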

Let’s compare serverless with monolithic and microservice architectures. Monolithic architecture is the oldest backend architecture of the three. A monolith is a single software process that essentially does everything. It’s a simple architecture that can be easy to deploy but can be challenging to scale up. More hardware resources can be dedicated, but as each additional monolith is instantiated, the overhead increases quickly. This overhead includes multiple operating systems, increased network traffic, a load balancer, and more. This architecture can also have reliability problems, as each monolith is a single point of failure. If the process crashes, the entire application goes offline. This can also be a cascading issue if the monoliths are replicas of each other.

A microservices architecture breaks down an application into multiple different microservices that interact with each other. A microservice-based web application might have one process, like an API gateway, that passes various user requests to other processes. This could include things like registrations, logins, searches, and more. The API gateway could hand account-related activity to an authentication service and product-related activity to a product service. These services, as well as any other needed services, could then tie into a database. A microservice architecture can be a bit more complex to build because multiple services need to coordinate and communicate with each other, but it is also more resilient than a monolith. For example, if the product management service crashed, users could still log in and perform other activities that don’t rely on the crashed service. Microservices also scale much more easily than monoliths. For example, additional compute resources can be added on demand.
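The gateway-and-services layout described above can be sketched in a few lines. This is only an illustration under stated assumptions: the route table, the service functions, and the simulated crash are hypothetical, and real services would be separate processes reached over the network, not local function calls. The point is that a failure in the product service does not prevent logins.

```python
from typing import Callable, Dict


def auth_service(path: str) -> str:
    # Stands in for a separate authentication microservice.
    return f"auth handled {path}"


def product_service(path: str) -> str:
    # Simulate the product service having crashed.
    raise RuntimeError("product service crashed")


# Hypothetical route table: which service owns which request path.
ROUTES: Dict[str, Callable[[str], str]] = {
    "/login": auth_service,
    "/register": auth_service,
    "/search": product_service,
}


def gateway(path: str) -> str:
    """Forward a request to its service and contain any failure."""
    service = ROUTES.get(path)
    if service is None:
        return "404 not found"
    try:
        return service(path)
    except RuntimeError as err:
        # Only this route is affected; other services keep working.
        return f"503 service unavailable: {err}"


if __name__ == "__main__":
    print(gateway("/login"))
    print(gateway("/search"))
```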

How Serverless Environments Can Improve Applications

Serverless architecture takes the segmentation that microservices introduced and brings it down to individual functions. This means that user registration, login, searching a product list, adding an item to a cart, uploading a file, anything really, is decoupled from everything else, allowing each component to scale as needed. Compared to the other two architectures, serverless is the most scalable.

If you read more about serverless architectures, you may run into the term Functions-as-a-Service. Functions-as-a-Service refers to executing individual software functions, like logins or searches, as individual processes that may run anywhere in an OpenShift cluster. This allows for creating very simple and independent code. This code segmentation also makes debugging much more straightforward. If the software code needs to be adjusted to address a bug, developers can target and isolate the faulty function in a very specific way that microservice architectures and monoliths can’t offer. If the bug were a more severe issue that causes crashing, then this level of segmentation results in only one function being unavailable while the vast majority of the application remains available.

Using a serverless model for business applications allows developers to create applications that easily scale up and down at the function level to maximize resource efficiency. The benefits of serverless aren’t limited to efficiency, though. Serverless applications offer an unparalleled level of resiliency because application bugs are segmented at the function level. If a service in a serverless deployment crashes, only a small part of the overall application becomes unavailable. If an issue does arise, developers have much smaller code volumes to examine, compared to microservice or monolithic architectures.
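As a rough illustration of function-level scaling, the sketch below computes how many replicas each function would need for a given load, dropping to zero when a function is idle. This is a simplification and not the actual OpenShift Serverless autoscaling logic; the function names, request counts, per-replica target, and replica cap are all made-up values.

```python
import math
from typing import Dict


def desired_replicas(in_flight: int, target_per_replica: int, max_replicas: int) -> int:
    """Replicas needed so that no replica exceeds its target load."""
    if in_flight <= 0:
        return 0  # idle functions scale to zero and consume nothing
    return min(max_replicas, math.ceil(in_flight / target_per_replica))


# Hypothetical in-flight request counts per function.
load: Dict[str, int] = {"login": 250, "search": 40, "upload": 0}

# Each function is sized independently of the others.
plan = {name: desired_replicas(n, target_per_replica=100, max_replicas=10)
        for name, n in load.items()}

if __name__ == "__main__":
    print(plan)
```

Because each function is sized on its own, a burst of logins adds capacity only for the login function while the idle upload function stays at zero.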

AI applications also greatly benefit from a serverless deployment and are a popular use case for OpenShift. Red Hat developed an operator called OpenShift AI, which provides additional tools to develop, train, host, and monitor AI applications. OpenShift AI is fully compatible with the Dell APEX Cloud Platform and can be deployed after the cluster completes its day one deployment process. The operator is dependent on the OpenShift software version. Updates to OpenShift can trigger automated updates for operators, which reduces IT effort and simplifies the experience.

What Dell APEX Cloud Platform Adds to a Serverless Environment

The Dell APEX Cloud Platform enhancements won’t directly affect how applications operate. However, some of the key benefits of going with a serverless deployment are stability and resiliency. The APEX Cloud Platform absolutely does tie in here. Serverless deployments have all the same complications and challenges as any other Kubernetes deployment. I described some of the challenges in the last entry of this series. To quickly recap, some of these challenges can include hardware component planning, network requirements, storage capabilities, and more. If a cluster needs to be expanded, DIY solutions may encounter logistics, acquisition, and component documentation challenges.

These are problems APEX Cloud Platform resolves by offering hardware platforms tested for compatibility and by packaging cluster upgrades. This ties back to Continuously Validated States. Continuously Validated States are collections of firmware, drivers, and operating system software that are assembled and tested for thousands of hours by Dell engineers to ensure that the interoperability between the various layers is stable. APEX Cloud Platform clusters move between various Continuously Validated States throughout their lifecycles via update bundles distributed by Dell servers. This helps maximize the availability of the hardware powering your business workloads.

The approach to APEX Cloud Platform lifecycle management also echoes the goal of worrying less about infrastructure. Operations teams spend far fewer cycles building out, testing, and deploying updates to APEX Cloud Platform clusters. Instead, preconfigured, tested, and validated update packages can be pulled from Dell datacenters and deployed to clusters at the click of a button.

There is another feature present in APEX Cloud Platform nodes that is similar to the reactive and automated nature of serverless deployments. This feature is the ability to create support cases with Dell ProSupport teams automatically as a response to alarm events. For example, if a drive or memory module were to fail, the hardware platform starts the support process independently. This allows operations teams to benefit from automation and simplification similar in principle to what is present in OpenShift. Furthermore, DevOps models remain a popular operations model for IT. A business following a DevOps model could keep their engineers focused on application and workload needs instead of spending work hours handling hardware tasks.

Conclusion

OpenShift serverless application deployments are an incredibly scalable choice that maximizes resource efficiency while radically reducing management complexity. An event-based Functions-as-a-Service design allows software functions to spin up and execute anywhere in the cluster as needed and then scale back down when appropriate. It’s a deployment choice that reacts to business needs automatically in real time. OpenShift serverless deployments are a natural fit with APEX Cloud Platform for Red Hat OpenShift hardware because both share a common design goal: enabling IT teams to focus on business software. OpenShift does this with a range of container management enhancements, while APEX Cloud Platform hardware complements this by automating many of the hardware layer maintenance actions, like support ticket creation and simplified cluster upgrades backed by Continuously Validated States.

Not all workloads are suited to containerization. Sometimes there is a greater need for isolation than what container deployments offer. OpenShift meets this need with support for virtualization and with an optional hypervisor operator. I’ll wrap up this series in the next and final entry where I’ll cover virtualization on OpenShift.

Additional Resources

What are Red Hat OpenShift Containers? (2 of 4)

Run Containers and Virtual Machines on the Same Cluster with Red Hat OpenShift (4 of 4)

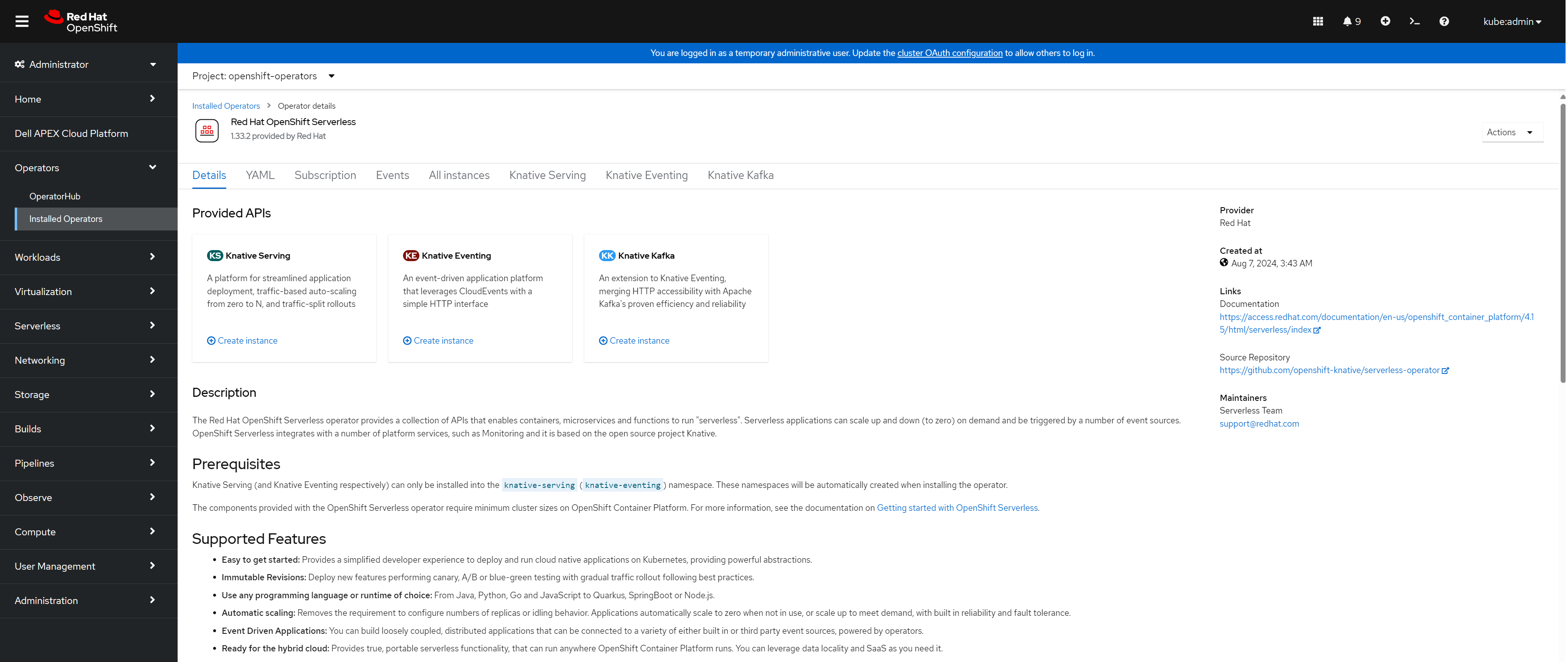

Exploring Serverless with OpenShift

What is Red Hat OpenShift Serverless?

APEX Cloud Platform for Red Hat OpenShift

Author: Dylan Jackson, Engineering Technologist