Scaling Edge Telemetric Near-Time Data Processing with Dell Streaming Solution

Tue, 20 Aug 2024 00:06:43 -0000

Introduction

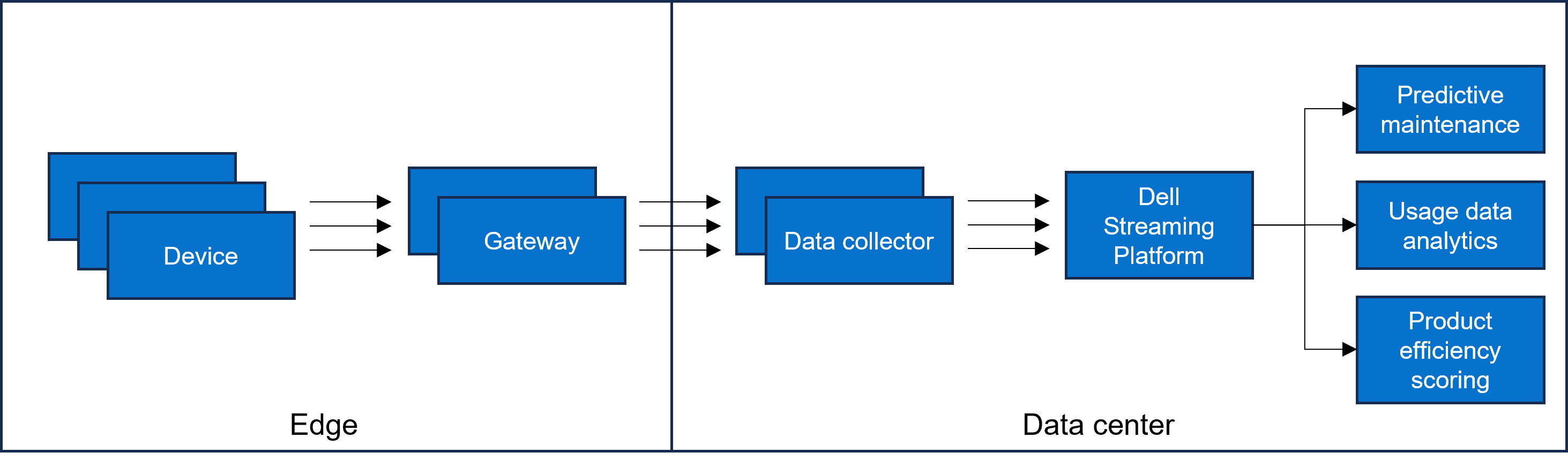

In today’s fast-paced digital world, Dell Technologies enterprise storage, servers, networks, and other IT infrastructure devices play a crucial role in keeping operations running smoothly. These devices are configured to send telemetry and alert files, and millions of edge-connected devices together generate billions of messages daily. This telemetric data is essential for remote issue analysis, diagnostics, and preventive maintenance, helping Dell Technologies meet its support Service Level Agreements (SLAs) and resolve issues faster. The data also provides valuable insights into customer usage and feature value analysis.

Background information

Choosing the right technology is the key to success. Any delay in processing this data impacts billing costs to customers and affects the revenue of Dell Technologies. The IoT use case of Dell Technologies is uniquely challenging because processing time must be maintained at this scale without sacrificing accuracy. Event processing built on the publish-subscribe model of Kafka serves as the central nervous system of the IoT solution.

Figure 1: Data flow

Key design factors

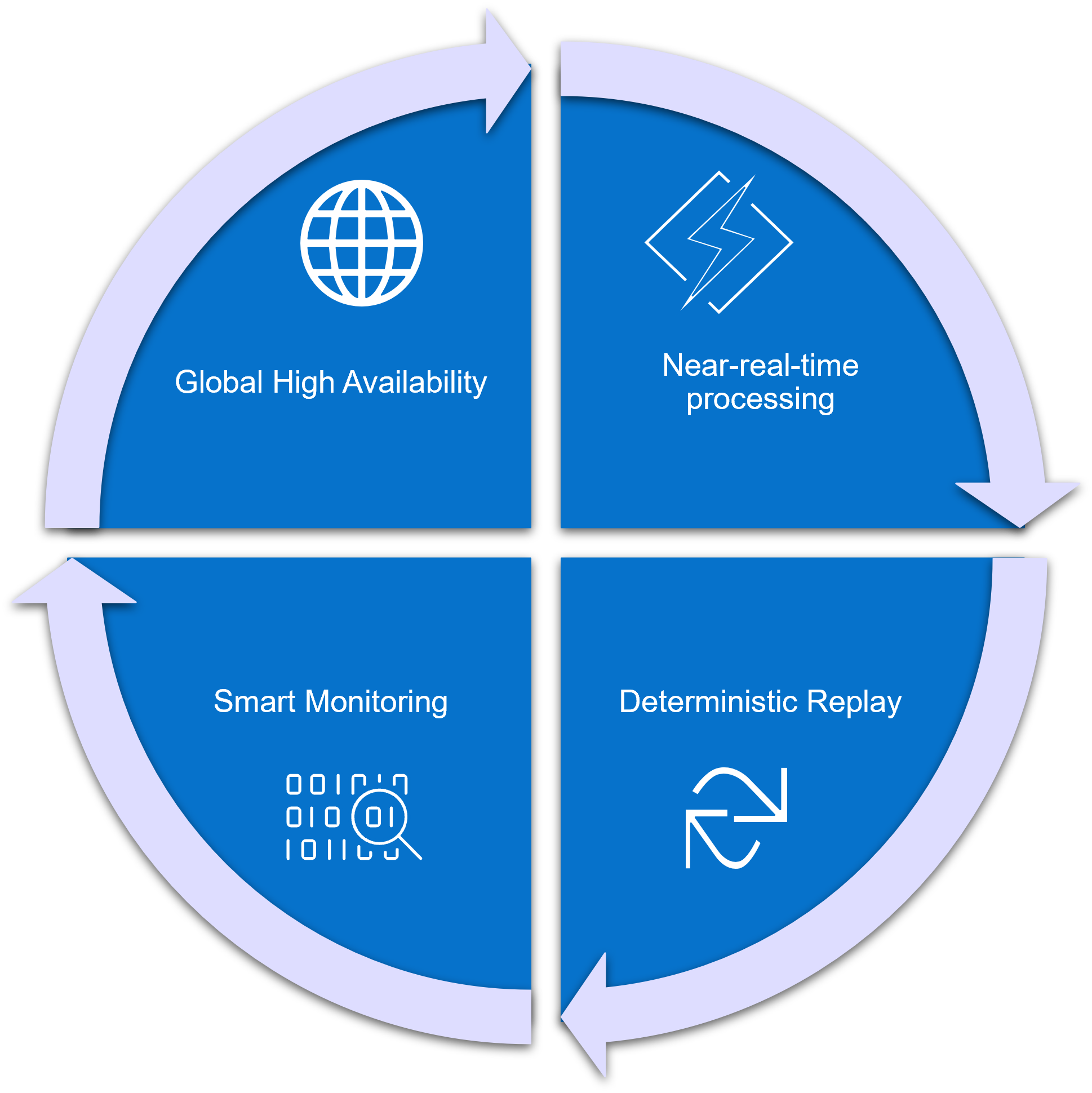

Figure 2: Features to solve the telemetric system need

The Dell Technologies Kafka platform is uniquely positioned to solve the needs of the telemetric system by providing:

- Global high availability

- Near-real-time processing

- Deterministic replay

- Smart monitoring, offering a single pane of glass view into the system health.

Global high availability

Platform availability with no data loss is vital. A multiregion active/active deployment model of the Dell Streaming Solution ensures high availability across different zones, reducing both the Recovery Point Objective (RPO) and the Recovery Time Objective (RTO). For messages that cannot tolerate delay during a node, infrastructure, or region failure, synchronous replication across regions is configured. Publisher applications are configured with acknowledgment set to all (acks=all), so a publish request receives a success response only after the message has been replicated and committed to several Kafka nodes.
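The effect of the acknowledgment-to-all setting (acks=all in Kafka producer configuration) can be illustrated with a small calculation. The sketch below assumes the topic also sets the broker-side min.insync.replicas option; the replication factor of 3 and the minimum of 2 in-sync replicas are hypothetical values, not the actual Dell configuration.

```python
# Illustrative producer settings; the values are assumptions, not the article's configuration.
producer_config = {
    "acks": "all",               # wait for all in-sync replicas to acknowledge
    "enable.idempotence": True,  # avoid duplicates when a send is retried
}


def tolerated_broker_failures(replication_factor: int, min_insync_replicas: int) -> int:
    """Number of replica brokers that can be down while acks=all writes still succeed.

    With acks=all, a write is accepted only while at least
    min_insync_replicas replicas are in sync.
    """
    if not 1 <= min_insync_replicas <= replication_factor:
        raise ValueError("min_insync_replicas must be between 1 and replication_factor")
    return replication_factor - min_insync_replicas


# Hypothetical topic: 3 replicas, at least 2 must be in sync.
failures_ok = tolerated_broker_failures(replication_factor=3, min_insync_replicas=2)
print(failures_ok)  # 1
```

Raising min.insync.replicas strengthens the durability guarantee but lowers the number of failures the topic can absorb before publishers start receiving errors, so the two values are usually chosen together.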

Near-real-time processing

Near-real-time processing with low latency and high throughput, without compromising on persistence, is another key objective of the telemetric solution. It uses the Kafka platform as a transient store where applications publish and subscribe to data topics. While some default parameters for Kafka brokers and client applications are already optimized for low latency, network latency often adds significantly to end-to-end latency. Tuning network throughput by increasing the number of network threads and replica fetcher threads (the broker settings num.network.threads and num.replica.fetchers) reduces overall latency. Latency and throughput vary with the workload and must be benchmarked to determine the optimal number of threads for each use case.

In typical scenarios, client applications run on a VM or cloud platform and could potentially go down. If a consumer application fails, Kafka triggers a rebalance so that the remaining consumers in the group take over its work. The rebalance pauses message consumption, which is undesirable if the failed consumer application recovers quickly on its own. Allowing a grace period for failed applications lets healthy consumers continue processing without interruption. Adjusting the session.timeout.ms and heartbeat.interval.ms settings provides an appropriate balance for managing these scenarios.
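As a rough illustration of how these two settings relate, the sketch below checks a candidate pair of values against the guidance in the Kafka consumer documentation: heartbeat.interval.ms must be lower than session.timeout.ms and is typically set to no more than one third of it. The helper name and the example values are hypothetical.

```python
def check_consumer_timeouts(session_timeout_ms: int, heartbeat_interval_ms: int) -> list[str]:
    """Return a list of warnings for a session/heartbeat timeout pair (empty if none)."""
    problems = []
    if heartbeat_interval_ms >= session_timeout_ms:
        problems.append("heartbeat.interval.ms must be lower than session.timeout.ms")
    elif heartbeat_interval_ms * 3 > session_timeout_ms:
        problems.append("heartbeat.interval.ms is typically at most 1/3 of session.timeout.ms")
    return problems


# Hypothetical grace period: a consumer may be silent for 45 s before a rebalance starts.
print(check_consumer_timeouts(session_timeout_ms=45_000, heartbeat_interval_ms=15_000))  # []
print(check_consumer_timeouts(session_timeout_ms=10_000, heartbeat_interval_ms=6_000))
```

A longer session timeout gives a briefly failed consumer more time to come back before a rebalance, at the cost of slower detection of consumers that are really gone.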

Other parameters tuned to optimize speed include max.poll.records and max.poll.interval.ms. max.poll.records defines the maximum number of messages returned in a single poll, while max.poll.interval.ms is the maximum time allowed between polls, which bounds how long the consumer can take to process those messages before it is considered failed.
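The relationship between the two settings comes down to simple arithmetic: the poll interval divided by the number of records per poll gives the average time available per message. The sketch below shows that calculation using the Kafka client defaults (500 records, 300000 ms); the helper names, the 50% headroom factor, and the 1000 ms processing time are assumptions for illustration.

```python
def per_record_budget_ms(max_poll_interval_ms: int, max_poll_records: int) -> float:
    """Average processing time available per message before the poll deadline is missed."""
    return max_poll_interval_ms / max_poll_records


def safe_max_poll_records(max_poll_interval_ms: int, avg_processing_ms: float,
                          headroom: float = 0.5) -> int:
    """Largest max.poll.records that uses only `headroom` of the poll interval."""
    return int((max_poll_interval_ms * headroom) // avg_processing_ms)


# Kafka consumer defaults: max.poll.records=500, max.poll.interval.ms=300000.
print(per_record_budget_ms(300_000, 500))                      # 600.0
# Hypothetical workload: each message takes about 1000 ms to process.
print(safe_max_poll_records(300_000, avg_processing_ms=1000))  # 150
```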

On the Kafka publisher side, adjusting linger.ms and batch.size improves throughput. linger.ms is how long the publisher waits for more messages to accumulate before sending a batch, and batch.size is the upper limit, in bytes, on the size of a batch.
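To make the trade-off concrete, here is a deliberately simplified model of publisher batching, assuming a batch is sent as soon as either the linger time has elapsed or the next message would push it past the size limit. It is not the Kafka client's actual implementation (which also accounts for in-flight requests, partitions, and compression); the function name and the sample messages are hypothetical.

```python
def simulate_batches(messages, linger_ms, batch_size):
    """Group (arrival_ms, size_bytes) messages into batches under a simplified model.

    A batch is closed when the next message arrives at least linger_ms after the
    batch was opened, or when adding it would exceed batch_size bytes.
    """
    batches, current = [], []
    opened_at, current_bytes = 0, 0
    for arrival_ms, size in messages:
        if current and (arrival_ms - opened_at >= linger_ms
                        or current_bytes + size > batch_size):
            batches.append(current)
            current, current_bytes = [], 0
        if not current:
            opened_at = arrival_ms  # a new batch opens with this message
        current.append((arrival_ms, size))
        current_bytes += size
    if current:
        batches.append(current)
    return batches


msgs = [(0, 100), (1, 100), (2, 100), (10, 100)]  # (arrival time in ms, size in bytes)
print(len(simulate_batches(msgs, linger_ms=0, batch_size=16_384)))  # 4
print(len(simulate_batches(msgs, linger_ms=5, batch_size=16_384)))  # 2
print(len(simulate_batches(msgs, linger_ms=5, batch_size=200)))     # 3
```

In this model, a small linger time trades a few milliseconds of added latency for fewer, larger requests, which is why the two settings are tuned together.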

Deterministic replay

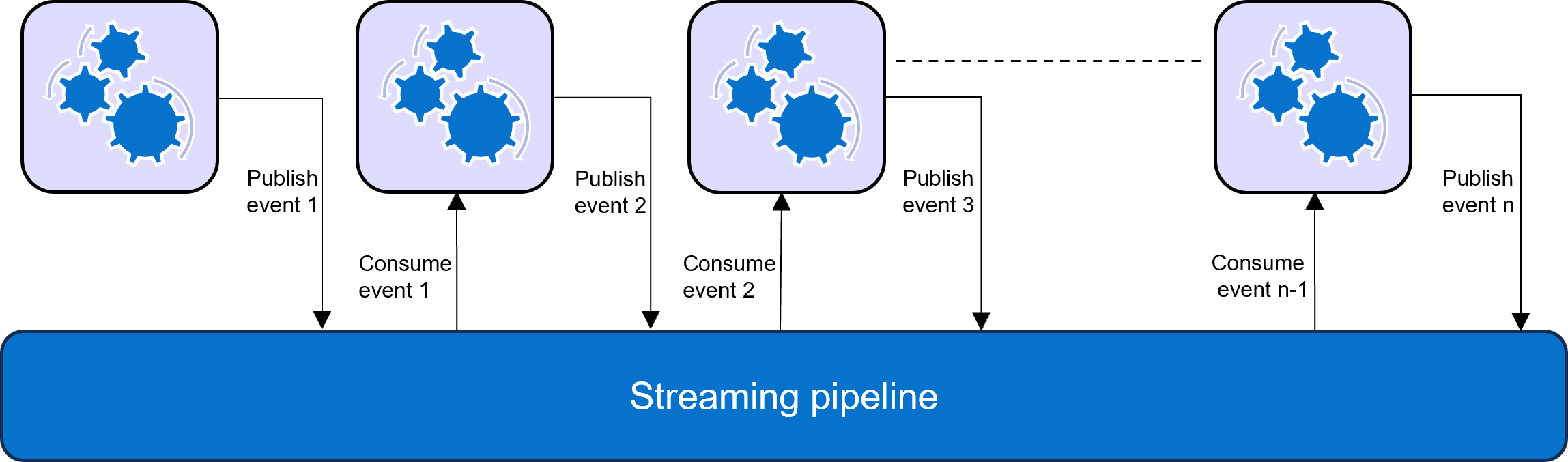

Figure 3: Event processing workflow in a Streaming pipeline

Kafka, as an event streaming platform, is core to building an event pipeline in which a consumer application consumes a message, processes it, and publishes another event, which is then handled by the next event processor. If a failure occurs at any stage of the pipeline, processing can be replayed from a recovery point. No randomness is involved, because the event processor automatically resumes from the last successfully processed event.
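The replay behavior can be sketched with an in-memory stand-in for a Kafka topic. This is a minimal illustration, not the pipeline's actual code: the `Stage` class, the event names, and the flaky handler are all hypothetical, and the sketch assumes the output and the committed offset are updated together (in a real deployment this would rely on Kafka offset commits and, for exactly-once results, transactions).

```python
class Stage:
    """One pipeline stage reading an ordered, immutable event log."""

    def __init__(self, log):
        self.log = log
        self.committed = 0   # offset of the next event to process
        self.output = []     # events published for the next stage

    def run(self, handler):
        """Process events from the committed offset; stop at the first failure."""
        while self.committed < len(self.log):
            result = handler(self.log[self.committed])  # may raise
            self.output.append(result)
            self.committed += 1  # commit only after successful processing


failures_left = {"e2": 1}  # hypothetical: processing "e2" fails once, then succeeds


def flaky_handler(event):
    if failures_left.get(event, 0) > 0:
        failures_left[event] -= 1
        raise RuntimeError(f"transient failure on {event}")
    return event.upper()


stage = Stage(["e0", "e1", "e2", "e3"])
try:
    stage.run(flaky_handler)
except RuntimeError:
    pass
offset_after_failure = stage.committed  # recovery point: the failed event's offset
stage.run(flaky_handler)                # replay resumes from the recovery point
print(offset_after_failure, stage.output)
```

Because the log is ordered and the offset advances only after success, rerunning the stage yields the same output sequence no matter when the failure happened.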

Smart monitoring

Monitoring is essential for a stable platform. It collects data from the servers, processes, and platforms to provide a picture of availability, performance, and resource efficiency. Tools like Prometheus, Grafana, Alertmanager, and Node Exporter are used to build monitoring, observability, and alerting around the system. This setup provides a single holistic dashboard view with drill-down capabilities on each component, enabling prediction of future states and resolution of issues before they become critical. Kafka node metrics, resource utilization, process status, process utilization, message traffic, network saturation, connection latency, error rate, and other data are collected to give visibility both across components and into each one.

Alert rules are defined to detect anomalies and send notifications so that action can be taken before a failure occurs. Integration with Dynatrace adds multilevel tracing of message flows.
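As a rough sketch of what such a rule does, the example below fires only when a metric stays above a threshold for several consecutive samples, which avoids alerting on a single spike. The function name, the 5% error-rate threshold, and the sample values are hypothetical; the actual rules in this platform are not described in the article.

```python
def should_alert(samples, threshold, for_samples):
    """True when the most recent `for_samples` samples all exceed `threshold`."""
    if for_samples <= 0 or len(samples) < for_samples:
        return False
    return all(value > threshold for value in samples[-for_samples:])


# Hypothetical error-rate samples, one per scrape interval.
sustained = [0.01, 0.20, 0.30, 0.40]
spike = [0.01, 0.02, 0.40, 0.01]
print(should_alert(sustained, threshold=0.05, for_samples=3))  # True
print(should_alert(spike, threshold=0.05, for_samples=3))      # False
```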

Conclusion

Processing edge telemetric near-real-time data at scale with Kafka enables Dell Technologies to provide modernized service and support to its clients in 180 countries through continuous monitoring and a data-driven approach. This helps develop insights to identify priority actions and maintain better health of the IT environments of the clients.