Managing your on-premises infrastructure with HashiCorp Terraform – Part 5

Fri, 27 Sep 2024 15:00:00 -0000


Terraform Dell PowerMax

This is the fifth blog post in a series to introduce infrastructure admins and engineers to Terraform and to explain how to use it to manage on-prem physical infrastructure in a data center. It is highly recommended that you quickly review the previous posts before proceeding with this one.

- Terraform on-premises infrastructure

- Terraform Dell PowerStore

- Terraform resource dependencies

- Terraform variables

- Terraform Dell PowerMax

We are going to apply what we have learned so far throughout this series. In this post, we will focus on another storage platform, Dell PowerMax. To interact with PowerMax, as we did with PowerStore, we need a Terraform provider. You can find the Terraform provider for PowerMax in the Terraform registry. It provides all the necessary resources for provisioning and masking of volumes as well as for managing snapshots. You can check the details in the documentation page.

Understanding PowerMax provisioning

The provisioning process in PowerMax is more sophisticated than in PowerStore, due to the nature of the platform. Provisioning storage on this platform requires you to group together three different entities:

- Storage Group (logical grouping of volumes)

- Port Group (logical grouping of ports)

- Host Group (logical grouping of hosts)

These three entities are combined into a single “Masking View”. A masking view essentially tells the storage array which volumes must be mapped to which hosts, and through which ports. In this example, we will create a volume and present it to a single host.

Provider and variables

At a minimum, our Terraform plan is going to include a “main.tf” with all the resources and “variables.tf” with the connection details for the provider. The following is my “variables.tf”.

variable "username" {

type = string

description = "Stores the username of Unisphere."

default = "smc"

}

variable "password" {

type = string

description = "Stores the password of Unisphere."

default = "C00lp@sswd"

}

variable "endpoint" {

type = string

description = "Stores the endpoint of Unisphere."

default = "https://10.10.10.1:8443"

}

variable "serial_number" {

type = string

description = "Stores the serial number of the PowerMax array."

default = "000123456789"

}

variable "pmax_version" {

type = string

description = "Stores the REST API version of Unisphere."

default = "100"

}

I have included the description field for each of the five variables to better explain what they are, but the description parameter is optional. Notice how the “endpoint” needs to have the root of the URL to reach the Unisphere REST API. This goes from the “https” to the port that Unisphere is listening on, which by default is “8443”.

Also, notice the “pmax_version” variable. The default value should be the version of the REST API you want to use but without the “.” that separates the major from the minor version. In this example, I am accessing a PowerMax that is running version “10.0”, so the parameter is “100”.

Note: PowerMax will typically run multiple versions of the REST API simultaneously. This is very helpful because it allows you to keep running old scripts even if you upgrade the code of the array.
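Keeping a real password as a default value in “variables.tf” is convenient in a lab but risky anywhere else. The following is a minimal sketch of an alternative declaration, assuming Terraform 0.14 or later, where the “sensitive” argument is available. With no default, Terraform prompts for the value or reads it from the “TF_VAR_password” environment variable.

```hcl
# Sketch: same variable as above, but with no default stored in the file.
variable "password" {
  type        = string
  description = "Stores the password of Unisphere."
  sensitive   = true # redacts the value in plan and apply output
}
```

Note that “sensitive” only hides the value in the CLI output; the value can still end up in the state file, so the state needs to be protected as well.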

The following code is the top of my “main.tf”, which includes the provider section. I am not specifying the version of the provider. If we do not request a specific version, Terraform automatically picks up the latest one. As we saw in the first blog, the information in this block is used by Terraform to download the necessary provider when we run “terraform init”.

terraform {

required_providers {

powermax = {

source = "dell/powermax"

}

}

}

provider "powermax" {

username = var.username

password = var.password

endpoint = var.endpoint

serial_number = var.serial_number

pmax_version = var.pmax_version

insecure = true

}
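If you would rather pin the provider than always take the latest release, a version constraint can be added to the “required_providers” block. This is a sketch and not part of the original plan; the constraint “~> 1.0” is an assumption, chosen here to allow any 1.x release of the provider.

```hcl
terraform {
  required_providers {
    powermax = {
      source  = "dell/powermax"
      version = "~> 1.0" # assumed constraint: any 1.x release
    }
  }
}
```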

Resource creation

The “main.tf” continues with the first two resources. We are creating a storage group called “terraform_sg” and, in it, a 20 GB volume called “terraform_vol1”. Notice how we are inserting an “implicit dependency” in the volume block by referencing the name of the storage group. This tells Terraform that the storage group needs to be created before the volume. As we saw in the first post in this series, Terraform recommends the use of implicit dependencies wherever possible. If you want to create multiple volumes, you could use the “count” parameter.

resource "powermax_storagegroup" "terraform_sg" {

name = "terraform_sg"

srp_id = "SRP_1"

}

resource "powermax_volume" "terraform_vol1" {

vol_name = "terraform_vol1"

size = 20

cap_unit = "GB"

sg_name = powermax_storagegroup.terraform_sg.name

mobility_id_enabled = false

}
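As an illustration of the “count” parameter mentioned above, the following sketch creates three volumes instead of one. The resource label “terraform_vols” and the naming scheme are assumptions for this example; the attributes are the same ones used in the block above.

```hcl
# Sketch: replaces the single-volume block with three numbered volumes.
resource "powermax_volume" "terraform_vols" {
  count               = 3
  vol_name            = "terraform_vol${count.index + 1}" # count.index starts at 0
  size                = 20
  cap_unit            = "GB"
  sg_name             = powermax_storagegroup.terraform_sg.name
  mobility_id_enabled = false
}
```

With this variant, any other block that refers to the volume, such as a “depends_on” list, would refer to “powermax_volume.terraform_vols” instead.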

The next two blocks in our plan include the host and the hostgroup. The host resource is created with information such as the initiator WWNs and the host name. According to the documentation, the hostgroup resource requires two mandatory attributes: “name” and “host_ids”. As you see below, we are using an implicit dependency on the name of the host that was created in the “powermax_host” resource block. Notice how the value of “host_ids” is enclosed in square brackets. This means it is a list, so we could specify multiple hosts separated by commas.

resource "powermax_host" "tfhost01" {

name = "tfhost01"

initiator = [

"100000109b56aaaa",

"100000109b56bbbb"]

host_flags = {}

}

resource "powermax_hostgroup" "terraform_cluster" {

name = "terraform_cluster"

host_flags = {}

host_ids = [powermax_host.tfhost01.name]

}
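To illustrate the list syntax, here is a sketch of the same hostgroup with two hosts. The second host, “tfhost02”, and its WWNs are hypothetical and only serve as an example. The hostgroup block shown here would replace the one above.

```hcl
# Hypothetical second host; replace the WWNs with real initiators.
resource "powermax_host" "tfhost02" {
  name       = "tfhost02"
  initiator  = ["100000109b56cccc", "100000109b56dddd"]
  host_flags = {}
}

# Same hostgroup as above, now with two members in the list.
resource "powermax_hostgroup" "terraform_cluster" {
  name       = "terraform_cluster"
  host_flags = {}
  host_ids   = [powermax_host.tfhost01.name, powermax_host.tfhost02.name]
}
```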

Now it is the portgroup’s turn. This includes, at a minimum, a name, a protocol, and a list of ports. Each port is identified by the director and the port itself. Unlike the previous two sections, we do not need to create the ports. They already exist, so it is a single Terraform block with no dependencies.

resource "powermax_portgroup" "terraform_pg" {

name = "terraform_pg"

protocol = "SCSI_FC"

ports = [

{

director_id = "FA-1D"

port_id = "26"

},

{

director_id = "FA-2D"

port_id = "11"

}

]

}

The final step and final bit of code in our “main.tf” is the masking view. This is the construct that ties everything together: the storage group, the host group, and the port group. Notice how all three are expressed as implicit dependencies. However, if you try to run it like that, there is a good chance that it will fail. Why? If you look carefully at the storage group and volume blocks, we first create the storage group and then create the volume inside that storage group. So, the volume depends on the storage group and not the other way around.

If we do not define any additional dependencies, the masking view creation can start as soon as the storage group is created, without waiting for the volume to be created. In that case, an error is thrown to let us know that we cannot create a masking view with an empty storage group. So, for all this to work every time, we need to add an “explicit dependency” with the “depends_on” attribute. This instructs Terraform to wait until the volume is created before the masking view creation can proceed.

resource "powermax_maskingview" "terraform_mv" {

name = "terraform_mv"

storage_group_id = powermax_storagegroup.terraform_sg.id

port_group_id = powermax_portgroup.terraform_pg.id

host_group_id = powermax_hostgroup.terraform_cluster.id

depends_on = [powermax_volume.terraform_vol1]

}

Init and plan

As discussed in the first post in this series, once we have our plan, i.e. the “main.tf” and the “variables.tf”, we can run “terraform init”. Notice how it uses the latest PowerMax provider, which is v1.0.2 at the time of this writing. In this run, the provider had already been installed, so Terraform reuses it instead of downloading it again.

root@alb-terraform:~/powermax# terraform init

Initializing the backend...

Initializing provider plugins...

- Reusing previous version of dell/powermax from the dependency lock file

- Using previously-installed dell/powermax v1.0.2

Terraform has been successfully initialized!

You may now begin working with Terraform. Try running "terraform plan" to see

any changes that are required for your infrastructure. All Terraform commands

should now work.

If you ever set or change modules or backend configuration for Terraform,

rerun this command to reinitialize your working directory. If you forget, other

commands will detect it and remind you to do so if necessary.

Now we can proceed with “terraform plan”. As we can see below, six resources will be created. The output has been truncated for better visibility.

root@alb-terraform:~/powermax# terraform plan

Terraform used the selected providers to generate the following execution plan. Resource actions are indicated with the following symbols:

+ create

Terraform will perform the following actions:

# powermax_host.tfhost01 will be created

+ resource "powermax_host" "tfhost01" {

...

}

# powermax_hostgroup.terraform_cluster will be created

+ resource "powermax_hostgroup" "terraform_cluster" {

...

}

# powermax_maskingview.terraform_mv will be created

+ resource "powermax_maskingview" "terraform_mv" {

...

}

# powermax_portgroup.terraform_pg will be created

+ resource "powermax_portgroup" "terraform_pg" {

...

}

# powermax_storagegroup.terraform_sg will be created

+ resource "powermax_storagegroup" "terraform_sg" {

...

}

# powermax_volume.terraform_vol1 will be created

+ resource "powermax_volume" "terraform_vol1" {

...

}

Plan: 6 to add, 0 to change, 0 to destroy.

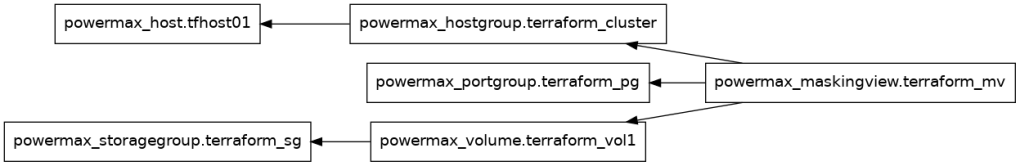

Let us use the graphing tool to understand the dependencies between the resources, as Terraform sees them. We are using the “graphviz” package to generate an actual image.

root@alb-terraform:~/powermax# terraform graph | dot -Tpng >powermax-plan.png

root@alb-terraform:~/powermax# ll *.png

-rw-r--r-- 1 root root 18861 Jun 4 02:56 powermax-plan.png

This is what the generated image looks like. Notice how the masking view depends on the volume, not on the storage group.

Figure 1

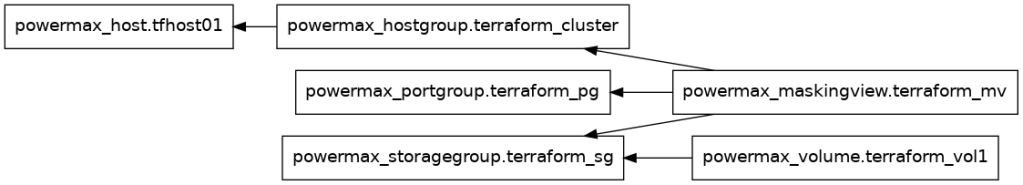

For comparison, I have gone back to the “main.tf” and commented out the “depends_on” attribute, and then I generated the graph again. Notice how the masking view and the volume resources are at the same level, so they are likely to run concurrently. As we discussed, this leads to the masking view failing because the storage group is empty.

Figure 2

Apply and destroy

We can now apply the plan. Here also, we are only showing the relevant pieces of the output for clarity. Notice how the creation of the storage group took 6 seconds and the volume creation did not kick off until the storage group was complete.

root@alb-terraform:~/powermax# terraform apply

...

Plan: 6 to add, 0 to change, 0 to destroy.

Do you want to perform these actions?

Terraform will perform the actions described above.

Only 'yes' will be accepted to approve.

Enter a value: yes

powermax_portgroup.terraform_pg: Creating...

powermax_storagegroup.terraform_sg: Creating...

powermax_host.tfhost01: Creating...

powermax_portgroup.terraform_pg: Creation complete after 3s [id=terraform_pg]

powermax_host.tfhost01: Creation complete after 4s [id=tfhost01]

powermax_hostgroup.terraform_cluster: Creating...

powermax_hostgroup.terraform_cluster: Creation complete after 0s [id=terraform_cluster]

powermax_storagegroup.terraform_sg: Creation complete after 6s [id=terraform_sg]

powermax_volume.terraform_vol1: Creating...

powermax_volume.terraform_vol1: Creation complete after 2s [id=0002E]

powermax_maskingview.terraform_mv: Creating...

powermax_maskingview.terraform_mv: Creation complete after 0s [id=terraform_mv]

Apply complete! Resources: 6 added, 0 changed, 0 destroyed.

Finally, we can run “terraform destroy” and we will see how the same operations are performed in reverse to guarantee success.

root@alb-terraform:~/powermax# terraform destroy

powermax_portgroup.terraform_pg: Refreshing state... [id=terraform_pg]

powermax_storagegroup.terraform_sg: Refreshing state... [id=terraform_sg]

powermax_host.tfhost01: Refreshing state... [id=tfhost01]

powermax_hostgroup.terraform_cluster: Refreshing state... [id=terraform_cluster]

powermax_volume.terraform_vol1: Refreshing state... [id=0002E]

powermax_maskingview.terraform_mv: Refreshing state... [id=terraform_mv]

...

Plan: 0 to add, 0 to change, 6 to destroy.

Do you really want to destroy all resources?

Terraform will destroy all your managed infrastructure, as shown above.

There is no undo. Only 'yes' will be accepted to confirm.

Enter a value: yes

powermax_maskingview.terraform_mv: Destroying... [id=terraform_mv]

powermax_maskingview.terraform_mv: Destruction complete after 0s

powermax_hostgroup.terraform_cluster: Destroying... [id=terraform_cluster]

powermax_portgroup.terraform_pg: Destroying... [id=terraform_pg]

powermax_volume.terraform_vol1: Destroying... [id=0002E]

powermax_hostgroup.terraform_cluster: Destruction complete after 1s

powermax_host.tfhost01: Destroying... [id=tfhost01]

powermax_host.tfhost01: Destruction complete after 0s

powermax_portgroup.terraform_pg: Destruction complete after 1s

powermax_volume.terraform_vol1: Destruction complete after 2s

powermax_storagegroup.terraform_sg: Destroying... [id=terraform_sg]

powermax_storagegroup.terraform_sg: Destruction complete after 0s

Destroy complete! Resources: 6 destroyed.

As we discussed in the previous blog, you could also declare additional variables for attributes like names and volume sizes to make the plan more flexible. At that point, you could also pass the value of those variables dynamically with the “-var” or “-var-file” parameter.
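As a sketch of that idea, the volume size could be moved into a variable. The variable name “volume_size” is an assumption for this example; the volume block is otherwise the same as the one used earlier in this post.

```hcl
# Hypothetical variable holding the volume size.
variable "volume_size" {
  type        = number
  description = "Size of the volume in GB."
  default     = 20
}

resource "powermax_volume" "terraform_vol1" {
  vol_name            = "terraform_vol1"
  size                = var.volume_size # replaces the hard-coded 20
  cap_unit            = "GB"
  sg_name             = powermax_storagegroup.terraform_sg.name
  mobility_id_enabled = false
}
```

The default could then be overridden at run time, for example with “terraform apply -var="volume_size=50"”.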

Resources

- Part 1: Managing your on-premises infrastructure with HashiCorp Terraform

- Part 2: Managing your on-premises infrastructure with HashiCorp Terraform

- Part 3: Managing your on-premises infrastructure with HashiCorp Terraform

- Part 4: Managing your on-premises infrastructure with HashiCorp Terraform

Author: Alberto Ramos, Sr. Principal Engineer

https://developer.dell.com